A Complete Guide to the DADA2 Pipeline for 16S rRNA Analysis: From Raw Reads to ASV Tables


Abstract

This comprehensive tutorial provides researchers, scientists, and drug development professionals with a complete workflow for analyzing 16S rRNA amplicon sequencing data using the DADA2 pipeline in R. We cover foundational concepts of Amplicon Sequence Variants (ASVs) versus OTUs, deliver a detailed, step-by-step methodological guide from quality control through taxonomy assignment, address common troubleshooting and optimization scenarios for challenging data, and conclude with validation strategies and comparisons to alternative pipelines. The guide synthesizes current best practices to ensure accurate, reproducible microbial community profiling for biomedical and clinical research applications.

Understanding DADA2 and ASVs: A Paradigm Shift in 16S rRNA Analysis

Core Conceptual Comparison

Amplicon sequencing of microbial communities, particularly targeting the 16S rRNA gene, relies on bioinformatic methods to group sequences into biologically meaningful units. The shift from Operational Taxonomic Units (OTUs) to Amplicon Sequence Variants (ASVs) represents a fundamental change in resolution and reproducibility.

Table 1: Conceptual & Methodological Comparison of OTUs vs. ASVs

| Feature | Traditional OTU Clustering (e.g., 97% similarity) | Amplicon Sequence Variants (ASVs) |
|---|---|---|
| Definition | Clusters of sequences defined by an arbitrary similarity threshold (e.g., 97%). | Exact biological sequences inferred from reads, distinguishing single-nucleotide differences. |
| Resolution | Roughly species- or genus-level; collapses intra-species variation. | Single-nucleotide, potentially enabling strain-level discrimination. |
| Method Basis | Heuristic, greedy clustering (e.g., UPARSE, VSEARCH). | Error-correcting denoising (e.g., DADA2, which fits a parametric error model; Deblur and UNOISE3, which rely on fixed error profiles or abundance heuristics). |
| Reproducibility | Low for de novo OTUs; clusters can vary between runs and parameter settings. | High; the same sequence yields the same ASV across studies. |
| Reference Database Dependence | Optional for de novo clustering; required for closed-reference. | Not required for inference; used post hoc for taxonomy. |
| Handling of Sequencing Errors | Errors within the threshold are absorbed into clusters; errors beyond it can form spurious OTUs that inflate diversity. | Errors are explicitly modeled and removed. |
| Downstream Analysis | Relative abundance of clusters. | Relative abundance of exact sequences. |

Table 2: Quantitative Performance Comparison (Typical Outcomes)

| Metric | OTU Clustering (97%) | ASV Inference (DADA2) |
|---|---|---|
| Number of Features | Fewer, due to clustering. | More, due to higher resolution. |
| Spurious Feature Count | Higher (includes PCR/sequencing errors). | Substantially lower (errors removed). |
| Cross-Study Recurrence | Low (<10% for de novo OTUs). | High (often >60% for common taxa). |
| Computational Demand | Moderate. | Moderate to High (model training). |
| Perceived Alpha Diversity | Inflated. | More accurate. |

Protocols and Application Notes

Protocol 1: Traditional De Novo OTU Clustering Workflow (Using VSEARCH)

This protocol is provided for historical and comparative context within the thesis on the DADA2 pipeline.

Materials & Reagents:

- Demultiplexed Paired-End FASTQ Files: Raw sequencing data.

- QIIME 2 (2024.5 or later) or USEARCH/VSEARCH: Clustering software.

- Silva 138 or Greengenes2 2022 Database: For taxonomy assignment.

- Computational Resources: 8+ CPU cores, 16GB+ RAM.

Procedure:

- Primer Removal & Quality Filtering: Use `cutadapt` to remove primers. Filter reads based on quality scores (e.g., maxEE=2.0, minLen=150).
- Merge Paired Reads: Use `vsearch --fastq_mergepairs` to align and merge read pairs.
- Dereplication: Combine identical sequences (`vsearch --derep_fulllength`).
- Chimera Detection (Reference-based): Remove chimeras using a reference database (`vsearch --uchime_ref`).
- De Novo Clustering at 97%: Cluster sequences into OTUs (`vsearch --cluster_size` with `--id 0.97`).
- OTU Table Construction: Map filtered reads back to OTU representatives (`vsearch --usearch_global`).
- Taxonomic Assignment: Use a classifier (e.g., `qiime feature-classifier classify-sklearn`) against a reference database.

Protocol 2: ASV Inference Workflow (DADA2 Pipeline)

This is the core protocol for the thesis, providing a step-by-step guide for 16S rRNA data.

Materials & Reagents:

- Demultiplexed Paired-End FASTQ Files: Raw Illumina MiSeq/HiSeq data.

- R (v4.3.0+) with DADA2 (v1.30+): Core analysis environment.

- Silva 138.1 NR99 or GTDB R220 Database: For taxonomy assignment.

- High-Performance Computing Node (Recommended): 16+ CPU cores, 32GB RAM for large datasets.

Procedure:

- Prepare Environment: Install R, Bioconductor, and the DADA2 package. Load the required packages (`dada2`, `ShortRead`, `ggplot2`).
- Inspect Read Quality Profiles: Use `plotQualityProfile()` to visualize quality trends across read positions, then decide on truncation parameters.
- Filter and Trim: Filter and truncate reads based on quality scores (`filterAndTrim()`).
- Learn Error Rates: Let DADA2 learn the error profile specific to your dataset (`learnErrors()`).
- Dereplication: Combine identical reads (`derepFastq()`; `dada()` also dereplicates when given file names directly).
- Core Sample Inference (ASV Calling): Apply the DADA algorithm to each sample (`dada()`).
- Merge Paired Reads: Merge forward and reverse reads into full-length sequences (`mergePairs()`).
- Construct Sequence Table: Build an ASV table, analogous to an OTU table (`makeSequenceTable()`).
- Remove Chimeras: Identify and remove bimeric sequences de novo (`removeBimeraDenovo()`).
- Assign Taxonomy: Use the naive Bayesian classifier with a reference training set (`assignTaxonomy()`).
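The procedure above can be condensed into a single R function. This is a minimal sketch modelled on the DADA2 tutorial workflow, not a definitive implementation: the function name, the `_R1_001.fastq.gz`/`_R2_001.fastq.gz` naming patterns, the `filtered/` output folder and the default truncation lengths are assumptions to adapt to your own data. All calls are namespace-qualified (`dada2::`), so the function can be defined before the package is loaded.

```r
# Minimal paired-end DADA2 sketch (assumes dada2 >= 1.30 is installed).
# Hypothetical defaults: file-name patterns and trunc_len must be adapted.
run_dada2_pipeline <- function(path, ref_db, trunc_len = c(240, 160),
                               fwd_pattern = "_R1_001.fastq.gz",
                               rev_pattern = "_R2_001.fastq.gz",
                               multithread = TRUE) {
  # Sorted forward/reverse file lists so that pairs line up by position
  fnFs <- sort(list.files(path, pattern = fwd_pattern, full.names = TRUE))
  fnRs <- sort(list.files(path, pattern = rev_pattern, full.names = TRUE))
  # Sample name = file name with the forward-read suffix removed
  sample_names <- sub(fwd_pattern, "", basename(fnFs), fixed = TRUE)

  # Filtered files are written to a "filtered" subdirectory
  filtFs <- file.path(path, "filtered", paste0(sample_names, "_F_filt.fastq.gz"))
  filtRs <- file.path(path, "filtered", paste0(sample_names, "_R_filt.fastq.gz"))
  names(filtFs) <- sample_names
  names(filtRs) <- sample_names

  # Quality filtering and truncation
  filter_summary <- dada2::filterAndTrim(fnFs, filtFs, fnRs, filtRs,
                                         truncLen = trunc_len, maxN = 0,
                                         maxEE = c(2, 2), truncQ = 2,
                                         rm.phix = TRUE, compress = TRUE,
                                         multithread = multithread)

  # Error models, learned separately for forward and reverse reads
  errF <- dada2::learnErrors(filtFs, multithread = multithread)
  errR <- dada2::learnErrors(filtRs, multithread = multithread)

  # Sample inference; file inputs are dereplicated by dada() itself
  dadaFs <- dada2::dada(filtFs, err = errF, multithread = multithread)
  dadaRs <- dada2::dada(filtRs, err = errR, multithread = multithread)

  # Merge pairs, build the samples x ASVs table, remove bimeras
  mergers <- dada2::mergePairs(dadaFs, filtFs, dadaRs, filtRs)
  seqtab <- dada2::makeSequenceTable(mergers)
  seqtab_nochim <- dada2::removeBimeraDenovo(seqtab, method = "consensus",
                                             multithread = multithread)

  # Naive Bayesian classification against a DADA2-formatted training set
  taxa <- dada2::assignTaxonomy(seqtab_nochim, ref_db, multithread = multithread)

  list(filter_summary = filter_summary, seqtab = seqtab_nochim, taxa = taxa)
}
```

A call would pass the FASTQ directory and the path to a downloaded training set (for example a SILVA 138.1 file formatted for DADA2). Keeping each step visible, rather than hiding it behind more helpers, makes it easy to stop after `filterAndTrim()` or `learnErrors()` and inspect the intermediate output.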

Visualizations

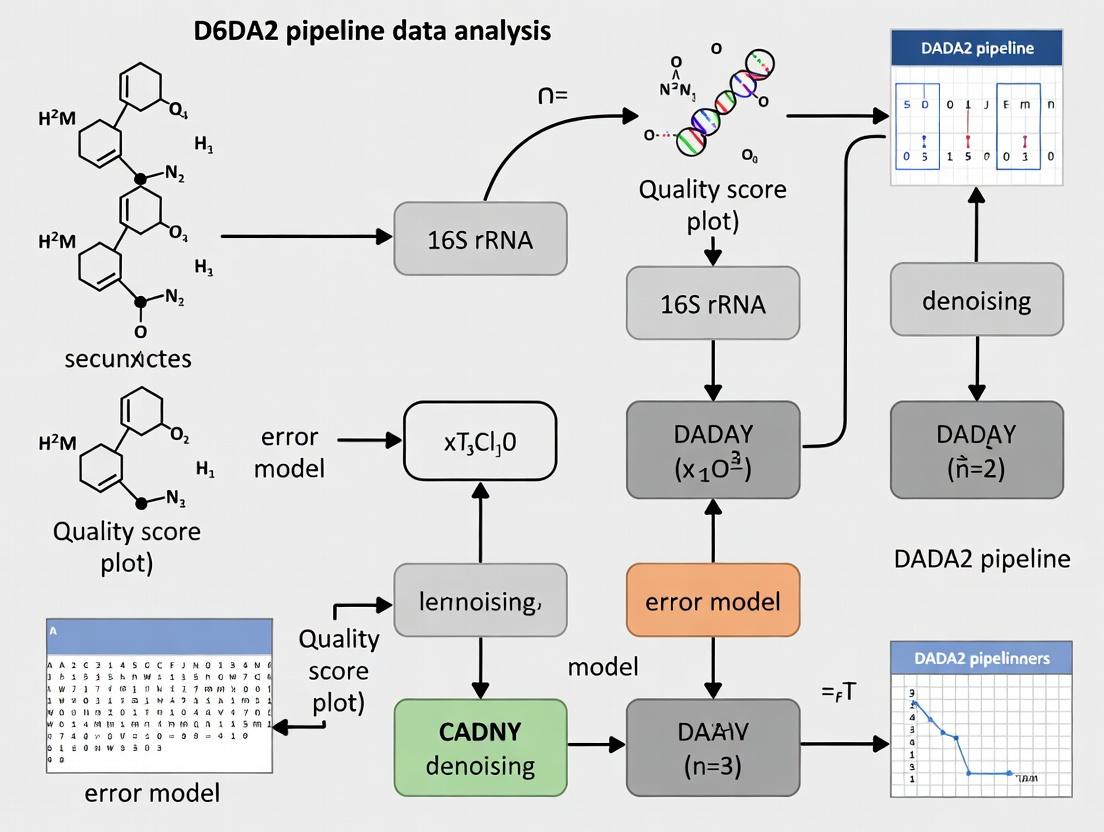

Title: DADA2 ASV Inference Workflow (8 Steps)

Title: OTU vs ASV Method Comparison (4 Key Aspects)

The Scientist's Toolkit: Key Reagent & Software Solutions

Table 3: Essential Research Reagents & Materials for 16S rRNA Amplicon Studies

| Item | Function/Description | Example Product/Version |
|---|---|---|
| 16S rRNA Gene Primers (V3-V4) | Amplify hypervariable regions for bacterial/archaeal profiling. | 341F (5’-CCTAYGGGRBGCASCAG-3’) / 806R (5’-GGACTACNNGGGTATCTAAT-3’) |
| High-Fidelity PCR Enzyme | Minimize amplification errors introduced during library prep. | KAPA HiFi HotStart ReadyMix, Q5 High-Fidelity DNA Polymerase |
| Size-Selective Magnetic Beads | Clean up PCR products and select the correct amplicon size. | AMPure XP Beads, SPRIselect Beads |
| Dual-Indexed Adapter Kit | Allows multiplexing of hundreds of samples in one run. | Illumina Nextera XT Index Kit v2 |
| PhiX Control v3 | Spike-in for low-diversity libraries; provides run quality metrics. | Illumina PhiX Control Kit (≥1%) |
| MiSeq Reagent Kit v3 (600-cycle) | Standard chemistry for 2x300 bp paired-end sequencing of ~20M reads. | Illumina MS-102-3003 |
| Silva SSU Ref NR 99 Database | Curated reference for taxonomy assignment of bacterial/archaeal 16S sequences. | Release 138.1 (or latest) |
| DADA2 R Package | Core software for error modeling, denoising, and ASV inference. | Bioconductor v1.30.0+ |
| QIIME 2 Core Distribution | Integrative platform for analysis and visualization of microbiome data. | 2024.5 or later |
| Positive Control DNA (Mock Community) | Validates the entire wet-lab and computational pipeline (e.g., ZymoBIOMICS). | ZymoBIOMICS Microbial Community Standard D6300 |

Within the framework of a comprehensive pipeline tutorial for 16S rRNA amplicon sequencing, the DADA2 algorithm represents a critical shift from traditional Operational Taxonomic Unit (OTU) clustering to exact Amplicon Sequence Variant (ASV) inference. This application note details its core principles of error modeling and denoising, enabling researchers and drug development professionals to achieve single-nucleotide resolution in microbial community profiling.

Core Algorithmic Principles

DADA2 employs a parametric model of sequencing errors to distinguish true biological sequences from erroneous reads generated during amplification and sequencing.

Probabilistic Error Modeling

The algorithm learns an error rate for each possible nucleotide transition (e.g., A→C, A→G, A→T) as a function of the Phred quality score of the base. The model is trained on the dataset itself, by alternating between estimating error rates and inferring sample composition until the two are consistent.

Table 1: Example Learned Error Rates (Transition Probabilities by Quality Score)

| Quality Score (Q) | A→C | A→G | A→T | C→A | C→G | C→T | ... |
|---|---|---|---|---|---|---|---|
| 40 | 1.2e-7 | 1.5e-7 | 9.8e-8 | 2.1e-7 | 1.8e-7 | 1.4e-7 | ... |
| 30 | 5.4e-6 | 6.1e-6 | 4.9e-6 | 7.2e-6 | 6.8e-6 | 5.9e-6 | ... |
| 20 | 2.3e-4 | 2.8e-4 | 2.1e-4 | 3.5e-4 | 3.1e-4 | 2.9e-4 | ... |

Note: Error rates fall as quality scores rise. Because Illumina quality typically declines toward the 3' end of reads, errors concentrate in later sequencing cycles. Values are illustrative.
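For orientation, the nominal error rate implied by a quality score can be computed directly. This base-R sketch is not part of DADA2; it is comparable in spirit to the nominal-Q reference line drawn by `plotErrors(err, nominalQ = TRUE)`, and it assumes, for illustration only, that errors are spread evenly over the three possible substitutions. The helper names are hypothetical.

```r
# Nominal error probability implied by a Phred score: P(error) = 10^(-Q/10).
phred_to_error <- function(q) 10^(-q / 10)

# Illustrative assumption: split evenly over the 3 possible substitutions.
# Rates learned by DADA2 are estimated per transition and are not uniform.
nominal_transition_rate <- function(q) phred_to_error(q) / 3

q <- c(20, 30, 40)
data.frame(Q = q,
           total_error = phred_to_error(q),
           per_transition = signif(nominal_transition_rate(q), 2))
```

Comparing learned rates with these nominal values is a quick way to spot runs whose quality scores are poorly calibrated.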

Denoising via Partitioning

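The core denoising step partitions the unique sequences in a sample into groups, each hypothesized to derive from a single true biological sequence (the partition center). DADA2 builds these partitions divisively, independently for each sample by default (`dada(..., pool = FALSE)`):

- All unique sequences start in a single partition whose center is the most abundant sequence.
- For every sequence, an abundance p-value is computed: the probability, under the error model, of observing at least that many copies as errors from its partition center.
- If the smallest p-value falls below the threshold `OMEGA_A` (default 1e-40), that sequence becomes a new partition center, and all sequences are reassigned to the center most likely to have produced them.
- The process repeats until no sequence is significantly overabundant; each final partition center is reported as an ASV.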
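The abundance p-value at the heart of this test can be written in a few lines of base R. This is a simplified sketch of the formula in Callahan et al. (2016), not DADA2's internal code: here the probability `lambda_ji` that a read of center j is sequenced as i is supplied by hand (hypothetically, 249 correct bases at 0.999 each times one substitution at 1e-3), whereas DADA2 derives it from the learned error model and the quality scores of each sequence.

```r
# Abundance p-value for sequence i given partition center j (simplified):
# probability of at least a_i reads of i arising as errors from the n_j
# reads of j, conditioned on i being observed at least once.
abundance_pvalue <- function(a_i, n_j, lambda_ji) {
  mu <- n_j * lambda_ji                      # expected error-derived reads of i
  ppois(a_i - 1, mu, lower.tail = FALSE) /   # P(X >= a_i), X ~ Poisson(mu)
    ppois(0, mu, lower.tail = FALSE)         # divided by P(X >= 1)
}

OMEGA_A <- 1e-40  # DADA2's default threshold for creating a new partition

# Hypothetical one-mismatch variant next to a 10,000-read center
lambda <- 0.999^249 * 1e-3
abundance_pvalue(100, 10000, lambda) < OMEGA_A  # TRUE: seeds a new partition
abundance_pvalue(50, 10000, lambda) < OMEGA_A   # FALSE: explained as errors
```

The very small default threshold makes the algorithm conservative: a low-abundance variant near a dominant sequence is split off only when errors cannot plausibly explain its read count.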

Table 2: Key Quantitative Outputs of DADA2 Denoising

| Metric | Typical Range/Description | Significance |
|---|---|---|
| Input Reads | Variable (e.g., 50,000-500,000 per sample) | Reads remaining after quality filtering. |
| Output ASVs | 100-10,000 per run | Exact biological sequences inferred. |
| Denoising Efficiency | 70-95% of input reads retained | Proportion of reads assigned to ASVs. |
| Error Rate Reduction | 1-3 orders of magnitude | From initial estimate to final error rate post-denoising. |
| Chimeras Identified | 5-30% of unique sequence variants | Artificially fused sequences removed; usually a much smaller fraction of total reads. |

DADA2 Denoising Partitioning Workflow

Detailed Experimental Protocol: Implementing DADA2 for 16S rRNA Analysis

Protocol Title: DADA2-Based Denoising of Paired-End 16S rRNA Gene Amplicon Data

Objective: To process raw Illumina paired-end FASTQ files into a table of exact Amplicon Sequence Variants (ASVs).

Materials and Software Requirements

The Scientist's Toolkit: Key Research Reagent Solutions & Software

| Item | Function & Rationale |
|---|---|
| R (v4.3+) & RStudio | Statistical computing environment for running the DADA2 pipeline (v1.28+). |
| DADA2 R Package | Core library containing all functions for error learning, denoising, merging, and chimera removal. |
| FastQC (v0.11+) | Initial quality assessment of raw FASTQ files to inform trimming parameters. |
| Sequence Read Archive (SRA) Toolkit | Required if downloading public data from the NCBI SRA. |
| BIOM File Format | Standardized output for interoperability with downstream analysis tools (e.g., QIIME 2, phyloseq). |
| Silva / GTDB / NCBI Ref Databases | Curated 16S rRNA reference databases for taxonomic assignment of inferred ASVs. |
| High-Performance Computing (HPC) Cluster | Recommended for large datasets due to the computationally intensive denoising process. |

Step-by-Step Procedure

Step 1: Quality Profiling and Filtering

- Inspect read quality profiles using `plotQualityProfile()`.
- Filter and trim reads based on quality scores and expected amplicon length (e.g., `filterAndTrim(fnFs, filtFs, fnRs, filtRs, truncLen = c(240, 200), maxN = 0, maxEE = c(2, 5), truncQ = 2)`).
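The `maxEE` threshold is applied to each read's expected errors: the sum of the per-base error probabilities implied by its quality scores, computed after truncation. A base-R sketch of that quantity for a hypothetical read (the quality values are invented for illustration, and the helper name is not a DADA2 function):

```r
# Expected errors of a read: EE = sum(10^(-Q/10)) over its bases.
# filterAndTrim() discards reads whose EE (after truncation) exceeds maxEE.
expected_errors <- function(q) sum(10^(-q / 10))

# Hypothetical 240-bp read: 200 bases at Q30, then 40 bases at Q15
q_scores <- c(rep(30, 200), rep(15, 40))
expected_errors(q_scores)         # about 1.46, so it passes maxEE = 2
expected_errors(q_scores[1:200])  # 0.2 if the read is truncated at 200 bp
```

The low-quality tail contributes most of the expected errors, which is why `truncLen` and `maxEE` are usually tuned together.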

Step 2: Learning the Error Rates

- Learn forward and reverse read error rates separately with `learnErrors()`, which by default uses a subset of the data (about 1e8 bases).
- Visualize the learned error model with `plotErrors()` to confirm that the fitted rates track the observed rates.

Step 3: Sample Inference and Denoising

- Apply the core denoising algorithm to each sample independently (`dada()`). This function uses the error model to infer the true sequences present.

Step 4: Merging Paired-End Reads

- Merge denoised forward and reverse reads (`mergePairs()`) to obtain the full-length target amplicon; by default, reads must overlap by at least 12 bases with no mismatches.
- Construct a sequence table (`makeSequenceTable()`): a matrix with samples as rows and ASVs as columns.
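The orientation matters when the table is later joined with metadata (one row per sample) or taxonomy (one entry per ASV column). A toy matrix with the same layout as `makeSequenceTable()` output illustrates the length check used in the DADA2 tutorial; the sequences and counts are invented.

```r
# Toy sequence table: samples as rows, ASV sequences as column names.
seqtab <- matrix(c(120, 0, 35,
                   80, 10, 0),
                 nrow = 2, byrow = TRUE,
                 dimnames = list(c("Control_01", "Treatment_A_01"),
                                 c("ACGTACGTAC", "ACGTACGTTC", "ACGTACGTACG")))

dim(seqtab)                     # 2 samples x 3 ASVs
rowSums(seqtab)                 # reads per sample
table(nchar(colnames(seqtab)))  # distribution of ASV lengths

# Keep only ASVs of the expected length (for a V4 amplicon the DADA2
# tutorial keeps 250:256 bp); here 10 bp plays that role.
seqtab_len <- seqtab[, nchar(colnames(seqtab)) == 10, drop = FALSE]
```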

Step 5: Post-Processing

- Remove chimeras (`removeBimeraDenovo()`) using the consensus method (`method = "consensus"`).
- Assign taxonomy (`assignTaxonomy()`) against a reference database.
- (Optional) Construct a phylogenetic tree for downstream diversity analyses.
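Tracking how many reads survive each step is a useful sanity check on the post-processing stage; the DADA2 tutorial builds such a table with `getUniques()`. In this sketch the counts are hypothetical placeholders for those values, and the 80% flag is an arbitrary example cut-off.

```r
# Hypothetical per-sample read counts at each stage. In a real run these come
# from filterAndTrim() output and sum(dada2::getUniques(x)) for each object.
track <- data.frame(
  input    = c(50000, 42000),
  filtered = c(45000, 39000),
  denoised = c(44000, 38200),
  merged   = c(41000, 36000),
  nonchim  = c(39500, 34800),
  row.names = c("Control_01", "Treatment_A_01")
)

# Percentage of input reads that reach the final chimera-free table
track$pct_retained <- round(100 * track$nonchim / track$input, 1)
track

# Flag samples with unusually low retention for closer inspection
rownames(track)[track$pct_retained < 80]
```

Where the losses occur is informative: following the DADA2 tutorial's advice, a large drop at merging usually means the truncation lengths left too little overlap, and a large drop at chimera removal can point to primers that were not removed.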

DADA2 Pipeline Main Steps

Advanced Application: Error Model Validation Protocol

Protocol Title: Validating the DADA2 Error Model Using Mock Microbial Communities

Objective: To empirically verify the accuracy of the error model and denoising efficacy.

Procedure

- Obtain Mock Community Data: Sequence a well-defined mock community (e.g., ZymoBIOMICS, ATCC MSA-1000) using the same 16S library prep and sequencing protocol as your experimental samples.

- Process with DADA2: Run the mock community data through the standard DADA2 pipeline (Protocol 3.2).

- Benchmark Output: Compare the inferred ASVs to the known reference sequences of the mock community strains.

- Quantitative Analysis: Calculate:

- Recall: Proportion of expected strains detected.

- Precision: Proportion of inferred ASVs that correspond to true strains (vs. spurious sequences).

- Sequence Discrepancy: Compare the ASV sequence to the reference genome sequence for validated true positives.
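Recall and precision can be computed by comparing ASV sequences with the mock community's reference sequences. This is a hypothetical base-R sketch on toy 8-bp sequences; it uses the Levenshtein distance from `utils::adist()` as a stand-in for mismatch counting (it also counts insertions and deletions), and `max_mismatch` sets how close an ASV must be to a reference to count as a true positive.

```r
# Hypothetical helper: recall and precision of inferred ASVs against the
# reference sequences of a mock community.
mock_metrics <- function(inferred, expected, max_mismatch = 0) {
  d <- utils::adist(inferred, expected)   # rows: ASVs, columns: references
  asv_is_true  <- apply(d, 1, min) <= max_mismatch
  ref_detected <- apply(d, 2, min) <= max_mismatch
  c(recall = mean(ref_detected), precision = mean(asv_is_true))
}

expected <- c("ACGTACGT", "ACGTTCGT", "TTGTACGA", "GGGTACGT")  # toy references
inferred <- c("ACGTACGT", "ACGTTCGT", "TTGTACGA",
              "GGGTACGA",   # 1 mismatch from the 4th reference
              "CCCCCCCC")   # spurious ASV

mock_metrics(inferred, expected)                    # exact matches only
mock_metrics(inferred, expected, max_mismatch = 1)  # recall 1, precision 0.8
```

Reporting both the exact-match and the tolerant figures makes it clear how much of the apparent accuracy depends on the matching criterion.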

Table 3: Example Mock Community Validation Results

| Expected Strain | Detected ASV Sequence? | Abundance Correlation (R²) | Mismatches to Reference |
|---|---|---|---|
| Pseudomonas aeruginosa | Yes | 0.98 | 0 |
| Escherichia coli | Yes | 0.95 | 0 |
| Bacillus subtilis | Yes | 0.99 | 1 (in a hypervariable region) |
| Staphylococcus aureus | Yes | 0.92 | 0 |
| Spurious ASV 001 | N/A (false positive) | N/A | N/A |

Overall metrics: recall 100% (4 of 4 expected strains detected); precision 80% (4 of 5 inferred ASVs are true); mean mismatch across true positives 0.25 (1 mismatch over 4 ASVs).

The DADA2 algorithm, through its data-driven error modeling (learned from each sequencing run) and rigorous probabilistic partitioning, provides a more accurate and reproducible representation of microbial diversity in 16S rRNA studies than heuristic OTU methods. Integrating these protocols into a comprehensive pipeline allows researchers in drug development and microbial science to identify biomarkers and community shifts at single-nucleotide resolution.

This document provides the foundational setup and requirements for executing the DADA2 pipeline within a broader thesis focused on 16S rRNA gene amplicon analysis for microbial community research in drug development contexts.

Software and R Packages

The DADA2 pipeline operates primarily within the R statistical environment, requiring specific software dependencies and R packages.

Table 1: Core Software Prerequisites

| Software/Package | Minimum Version | Source / Installation Command | Primary Function |
|---|---|---|---|
| R | 4.3.0 | CRAN | Statistical computing environment. |
| RStudio (Recommended) | 1.4 | RStudio | Integrated Development Environment for R. |
| DADA2 | 1.28.0 | `BiocManager::install("dada2")` | Core algorithm for inferring ASVs from FASTQ files. |
| ShortRead | 1.48.0 | `BiocManager::install("ShortRead")` | Handling and quality assessment of FASTQ files. |
| ggplot2 | 3.4.0 | `install.packages("ggplot2")` | Generation of publication-quality figures. |
| phyloseq | 1.44.0 | `BiocManager::install("phyloseq")` | Analysis and visualization of microbiome data. |
| DECIPHER | 2.28.0 | `BiocManager::install("DECIPHER")` | Multiple sequence alignment and chimera checking. |
| Biostrings | 2.68.0 | `BiocManager::install("Biostrings")` | Efficient manipulation of biological sequences. |
| cutadapt (Python) | 4.0 | `pip install cutadapt` | Removal of primer/adapter sequences. |

Installation Protocol

- Install R from CRAN and RStudio from the official website.

- Open RStudio and install the Bioconductor manager if not present: `if (!require("BiocManager", quietly = TRUE)) install.packages("BiocManager")`
- Install core Bioconductor packages: `BiocManager::install(c("dada2", "ShortRead", "phyloseq", "Biostrings", "DECIPHER"))`
- Install CRAN packages: `install.packages(c("ggplot2", "tidyverse"))`
- Install `cutadapt` via a system terminal/Python: `python3 -m pip install --user cutadapt`. Ensure it is on your system PATH.
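After installation, a quick check like the following can confirm that each package is available and that `cutadapt` is visible on the PATH. The helper name and its output layout are hypothetical; it uses only base R (`system.file()`, `packageVersion()`, `Sys.which()`), so it runs even before the Bioconductor packages are installed.

```r
# Hypothetical helper: report installed packages/versions and cutadapt's path.
check_prereqs <- function(pkgs = c("dada2", "ShortRead", "phyloseq",
                                   "Biostrings", "DECIPHER", "ggplot2")) {
  # system.file() returns "" when a package is not installed
  installed <- vapply(pkgs, function(p) nzchar(system.file(package = p)),
                      logical(1))
  versions <- vapply(pkgs, function(p) {
    if (nzchar(system.file(package = p))) {
      as.character(utils::packageVersion(p))
    } else {
      NA_character_
    }
  }, character(1))
  list(
    packages = data.frame(package = pkgs, installed = unname(installed),
                          version = unname(versions), stringsAsFactors = FALSE),
    cutadapt = unname(Sys.which("cutadapt"))  # "" if not found on the PATH
  )
}

# check_prereqs()$packages
```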

Input File Format: FASTQ

The pipeline requires raw sequencing data in FASTQ format, typically generated from Illumina platforms.

Table 2: FASTQ File Specifications for DADA2

| Parameter | Requirement | Notes |
|---|---|---|
| Format | Standard Sanger / Illumina 1.8+ (Phred+33) | Quality scores must be encoded correctly. |
| Read Type | Paired-end (recommended) or single-end | Paired reads can be merged across their overlap, which is needed to cover longer amplicons such as V3-V4. |
| File Naming | Sample-specific, consistent naming (e.g., SampleID_R1.fastq.gz, SampleID_R2.fastq.gz) | Critical for automated sample tracking. |
| Primer Status | Primers may or may not already be removed | If present, remove them with cutadapt, or by length with the `trimLeft` argument of `filterAndTrim()` when they sit at a fixed position at the start of reads. |
| Compression | .gz (gzip-compressed) supported | Reduces storage; DADA2 reads gzipped files directly. |

Protocol 1: Initial FASTQ Quality Assessment

Objective: Visually inspect raw read quality to inform DADA2 truncation parameters.

Method:

- Set the path to your raw FASTQ files in R.
- Extract sample names from file names (format: `SAMPLENAME_XXX.fastq.gz`).
- Generate and visualize quality profiles for forward and reverse reads with `plotQualityProfile()`.

Analysis: Identify the position where the median quality score drops significantly (e.g., below Q30). This informs the `truncLen` parameter in the filtering step.
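The "median drops below Q30" rule can be expressed as a small helper. Both functions are hypothetical sketches: `per_cycle_median()` assumes the ShortRead package (`readFastq()`, `quality()` and coercion of the quality object to a matrix) and reads the entire file into memory, so subsample large files first; the Q30 threshold is only the example value from the text. In practice, also leave enough length for forward and reverse reads to overlap when merging.

```r
# Per-cycle median quality from one FASTQ file (assumes ShortRead is installed).
per_cycle_median <- function(fastq) {
  fq <- ShortRead::readFastq(fastq)
  qmat <- methods::as(ShortRead::quality(fq), "matrix")  # reads x cycles, NA-padded
  apply(qmat, 2, stats::median, na.rm = TRUE)
}

# Hypothetical helper: last cycle before the median falls below the threshold,
# a candidate value for truncLen.
suggest_trunc_len <- function(median_q, threshold = 30) {
  below <- which(median_q < threshold)
  if (length(below) == 0) length(median_q) else below[1] - 1
}

# Example with a made-up profile: 200 cycles at Q36, then 50 at Q28
suggest_trunc_len(c(rep(36, 200), rep(28, 50)))
```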

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Experimental Materials for 16S rRNA Library Prep

| Reagent / Kit | Vendor Examples | Function in Upstream Workflow |
|---|---|---|
| DNA Extraction Kit (for stool, soil, tissue) | Qiagen DNeasy PowerSoil, MO BIO PowerSoil | Isolates total genomic DNA, including microbial DNA, from complex samples. |
| 16S rRNA Gene PCR Primers (e.g., 341F/806R) | Integrated DNA Technologies (IDT) | Amplifies the hypervariable region (e.g., V3-V4) for sequencing. |
| High-Fidelity DNA Polymerase | KAPA HiFi, Q5 Hot Start | Ensures accurate amplification with low error rates for downstream sequence fidelity. |
| Magnetic Bead-Based Cleanup System | AMPure XP Beads | Purifies and size-selects PCR amplicons to remove primers and dimers. |
| Library Quantification Kit | Qubit dsDNA HS Assay | Accurately quantifies DNA concentration prior to sequencing. |
| Sequencing Reagent Kit (e.g., MiSeq Reagent Kit v3) | Illumina | Provides chemistry for 600-cycle (2x300 bp) paired-end sequencing. |

Workflow Diagrams

Title: Prerequisites Workflow for DADA2 Thesis Project

Title: FASTQ Quality Assessment Protocol for Parameter Determination

The Importance of Metadata and Experimental Design for Downstream Analysis

1. Introduction

Within the context of 16S rRNA amplicon sequencing analysis using the DADA2 pipeline, meticulous experimental design and comprehensive metadata collection are not preliminary steps but foundational components that determine the biological validity of all downstream results. The DADA2 pipeline excels at inferring exact amplicon sequence variants (ASVs) from raw reads, but the biological interpretation of those ASVs depends entirely on the context provided by the associated experimental metadata and the robustness of the initial study design.

2. The Role of Metadata in DADA2 Analysis

Metadata (data about the data) transforms ASV tables from mere lists of sequence counts into biologically interpretable datasets. In a DADA2 workflow, the final outputs are an ASV table (counts per sample) and a taxonomy table. Without metadata, these tables have no experimental context.

Table 1: Essential Metadata Categories for 16S rRNA Studies

| Category | Specific Fields | Importance for DADA2 Downstream Analysis |
|---|---|---|
| Sample Information | SampleID, BarcodeSequence, LinkerPrimerSequence | Crucial for demultiplexing, which happens before DADA2 (DADA2 expects one demultiplexed FASTQ file, or file pair, per sample). Sample IDs must match FASTQ file names so that DADA2 output can be joined to the metadata. |
| Experimental Factors | TreatmentGroup (e.g., Control, Drug_A), TimePoint (e.g., Day0, Day7), Dose | Defines groups for differential abundance testing (e.g., DESeq2, ALDEx2) and beta-diversity comparisons. |
| Host/Subject Data | SubjectID, Age, Sex, Genotype, HealthStatus | Enables correction for confounding variables in statistical models and subgroup analysis. |
| Sample Collection | CollectionDate, Collector, BodySite, StorageMethod | Identifies potential batch effects for normalization or inclusion as random effects in models. |
| Sequencing Run Info | RunID, SequencingCenter, Instrument, PrimerSet | Critical for merging multiple sequencing runs. DADA2 allows run-specific error rate learning. |

3. Experimental Design Principles for Robust DADA2 Outcomes

Flawed design leads to bias that cannot be corrected computationally. Key principles include:

- Controlling for Confounders: Use randomization and blocking.

- Replication: Biological replicates (distinct subjects) are mandatory; technical replicates (same sample sequenced multiple times) assess sequencing noise.

- Batch Effects: Minimize by processing control and treatment samples together across DNA extraction, PCR, and sequencing runs.

- Including Controls: Negative controls (extraction blanks) detect reagent contamination. Positive controls (mock communities) assess accuracy of DADA2's error correction and taxonomy assignment.

Protocol 1: Designing and Documenting a 16S rRNA Experiment for DADA2

Objective: To establish a study design that minimizes bias and generates comprehensive metadata for robust bioinformatic analysis.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Define Primary Hypothesis: Clearly state the biological question (e.g., "Drug X alters gut microbiota composition in a murine model of Disease Y").

- Power and Sample Size: Use previous data or pilot studies with tools such as the `HMP` or `micropower` R packages to estimate the number of biological replicates needed per group (typically n ≥ 5).
- Randomization: Randomly assign subjects to treatment groups across cages/litters to avoid cage effects.
- Sample Collection Plan:
  a. For each sample, record all fields from Table 1 at the time of collection.
  b. Process samples in random order across experimental groups within a single day's batch.
  c. Include one extraction negative control (sterile water) for every 20 samples.
- Library Preparation:
  a. Use a standardized primer set (e.g., 515F/806R for the V4 region).
  b. Include a mock community (e.g., ZymoBIOMICS) as a positive control in each PCR batch.
  c. Perform PCR in triplicate for each sample, then pool the replicates to reduce amplification bias.

- Metadata File Creation:
  a. Create a sample metadata file in TSV format.
  b. Ensure the first column is `sample-id` and that its values match the prefix of the raw read filenames exactly.
  c. Populate all relevant columns without missing values (use "unknown" if necessary).
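Before running DADA2, it is worth checking the metadata file against the sample names derived from the FASTQ files. The helper below is a hypothetical base-R sketch; when reading the TSV, `check.names = FALSE` keeps the `sample-id` header intact (R would otherwise rename it `sample.id`).

```r
# Hypothetical check of a sample metadata table against FASTQ-derived names.
# meta <- read.delim("metadata.tsv", check.names = FALSE)
validate_metadata <- function(meta, sample_names, id_col = "sample-id") {
  ids <- as.character(meta[[id_col]])
  list(
    missing_in_metadata = setdiff(sample_names, ids),  # FASTQs without metadata
    missing_fastq       = setdiff(ids, sample_names),  # metadata rows without FASTQs
    duplicated_ids      = unique(ids[duplicated(ids)]),
    columns_with_na     = names(meta)[colSums(is.na(meta)) > 0]
  )
}

# Toy example with one missing FASTQ, one duplicate ID and one NA value
meta <- data.frame(`sample-id` = c("S1", "S2", "S2"),
                   Treatment = c("Control", NA, "Drug_A"),
                   check.names = FALSE)
validate_metadata(meta, sample_names = c("S1", "S3"))
```

Every element of the returned list should be empty before the metadata are joined to the ASV table.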

4. Impact on Downstream DADA2 Workflow Steps

Poor design and incomplete metadata directly compromise key DADA2 steps:

- Quality Filtering & Error Learning: Run-to-run differences in sequencing quality can skew error models if runs are pooled. Recording `RunID` in the metadata allows a separate error model to be learned for each run.
- Sample Inference and Contaminant Filtering: Sequences detected in negative controls can guide post hoc contaminant filtering (e.g., with the `decontam` R package).
- Taxonomy Assignment: The reference database and its version (e.g., SILVA, GTDB) strongly affect interpretation and should be recorded as part of the analysis metadata, a step that is often overlooked.
- Statistical Analysis: Without proper experimental-factor metadata, differential abundance testing and permutational multivariate analysis of variance (PERMANOVA) cannot be performed correctly.
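When `RunID` is recorded, error models can be learned per run. The DADA2 documentation recommends processing each sequencing run separately and combining the resulting sequence tables with `mergeSequenceTables()`. The helper below is a hypothetical sketch of the error-learning part only; `run_ids` is assumed to come from the metadata, in the same order as the filtered files.

```r
# Hypothetical sketch: one error model per sequencing run.
# filtFs: filtered forward-read files; run_ids: RunID of each file.
learn_errors_by_run <- function(filtFs, run_ids, multithread = TRUE) {
  stopifnot(length(filtFs) == length(run_ids))
  files_by_run <- split(filtFs, run_ids)  # named list: run -> file vector
  lapply(files_by_run, function(files)
    dada2::learnErrors(files, multithread = multithread))
}

# errs <- learn_errors_by_run(filtFs, meta$RunID)
# errs[["Run1"]] would then be passed to dada() for that run's samples only.
```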

Visualization 1: Metadata & Design Influence on DADA2 Workflow

Diagram Title: How Design & Metadata Guide the DADA2 Pipeline

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Robust 16S rRNA Experimental Design

| Item | Function & Importance |
|---|---|
| Standardized Mock Microbial Community (e.g., ZymoBIOMICS) | Validates the entire wet-lab and DADA2 bioinformatic workflow. Provides known composition to assess error correction, chimera removal, and taxonomy assignment accuracy. |
| DNA/RNA Shield or similar preservative | Preserves microbial community structure immediately upon sample collection, preventing shifts between sampling and processing. |
| Extraction Kit with Bead Beating (e.g., DNeasy PowerSoil) | Ensures efficient lysis of diverse bacterial cell walls. Critical for reproducible and unbiased community representation. |
| PCR Inhibitor Removal Reagents | Contaminants co-purified during extraction can inhibit amplification, causing false-low biomass signals. |
| High-Fidelity DNA Polymerase (e.g., Q5, Phusion) | Minimizes PCR amplification errors that the DADA2 algorithm must later correct, improving ASV inference accuracy. |
| Dual-Indexed Barcoded Primers | Enables multiplexing of hundreds of samples with low index-swapping (index-hopping) rates, accurately linking reads to sample metadata. |
| Quantification Kit (e.g., Qubit dsDNA HS) | Accurate quantification of DNA before PCR normalization is essential for uniform library preparation and sequencing depth. |

6. Conclusion

The DADA2 pipeline provides powerful, reproducible inference of biological sequences from raw amplicon data. However, its output is only as meaningful as the experimental design that generated the samples and the metadata that annotates them. Investing rigorous effort into these pre-sequencing phases is the most critical step for ensuring that downstream analyses yield trustworthy, biologically insightful results that can robustly inform drug development and mechanistic research.

Navigating the DADA2 Documentation and Citing the Original Paper

Within the context of a comprehensive thesis on a DADA2 pipeline tutorial for 16S rRNA data, understanding the official documentation and proper citation of the original method is a foundational step. This protocol details the systematic navigation of these critical resources to ensure both methodological accuracy and academic integrity.

The DADA2 project provides resources primarily through two channels: the original peer-reviewed publication and the documentation for the R package. The table below summarizes key information from the original paper and the corresponding documentation resources.

Table 1: DADA2 Original Publication Metrics and Documentation Resources

| Aspect | Detail / Metric | Source |
|---|---|---|
| Primary Citation | Callahan, B.J. et al. DADA2: High-resolution sample inference from Illumina amplicon data. Nat Methods 13, 581–583 (2016). | Publication |
| Reported Performance | In several mock communities, DADA2 identified more real variants and output fewer spurious sequences than other methods. | Publication |
| Resolution | Single-nucleotide differences over the entire sequenced amplicon. | Publication |
| Official R Package | `dada2`, available via Bioconductor. | Documentation |
| Primary Documentation | "DADA2 Pipeline Tutorial (1.16)" on the DADA2 website; the package itself ships an introductory vignette. | Documentation |
| FAQ & Troubleshooting | Dedicated FAQ section on the DADA2 website. | Documentation |

Experimental Protocols

Protocol 1: Accessing and Utilizing the DADA2 Documentation

Objective: To locate and apply the official tutorial for processing 16S rRNA amplicon sequences.

- Access: Visit the DADA2 website, which hosts the tutorial and FAQ, or the Bioconductor package page; within R, `browseVignettes("dada2")` lists the vignettes installed with the package.
- Navigation: Open "DADA2 Pipeline Tutorial (1.16)". This HTML document provides a complete, step-by-step workflow.
- Implementation: Follow the tutorial sequentially, adapting file paths and parameters (e.g., `truncLen`, `maxEE`) to your specific dataset.
- Troubleshooting: Consult the DADA2 FAQ or the project's GitHub issue tracker for error resolution.

Protocol 2: Citing DADA2

Objective: To correctly attribute the DADA2 algorithm and workflow in scholarly work.

- Primary Method: Cite the original 2016 Nature Methods paper (Table 1) for the core algorithm.

- Specific Functions: When describing specific steps (e.g., error model learning, sample inference), reference the primary paper.

- Workflow Implementation: If following the exact workflow from the online DADA2 tutorial, cite it as: "DADA2 pipeline tutorial (version 1.16)."

- Software Citation: In methods, state: "Sequence variants were inferred using the DADA2 algorithm (Callahan et al., 2016) as implemented in the `dada2` R package (version x.x.x)."
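Rather than typing the version number by hand, it can be read from the installed package. `citation("dada2")` prints the reference the package itself recommends; the `methods_sentence()` helper below is hypothetical and simply formats the methods sentence above with `packageVersion()`.

```r
# Hypothetical helper: fill the installed package version into the methods text.
methods_sentence <- function(pkg = "dada2") {
  sprintf(paste("Sequence variants were inferred using the DADA2 algorithm",
                "(Callahan et al., 2016) as implemented in the %s R package",
                "(version %s)."),
          pkg, as.character(utils::packageVersion(pkg)))
}

# citation("dada2")   # the package's own recommended reference
# methods_sentence()  # requires dada2 to be installed
```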

Mandatory Visualization

Diagram 1: Navigating DADA2 resources from start to analysis.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Digital Resources for DADA2 Pipeline Implementation

| Resource / Tool | Function / Purpose |
|---|---|
| dada2 R/Bioconductor Package | Core software library containing all functions for error modeling, dereplication, sample inference, and chimera removal. |
| DADA2 Pipeline Tutorial (Online) | Step-by-step protocol for processing raw FASTQ files to an Amplicon Sequence Variant (ASV) table. |
| Original Nature Methods Paper | Authoritative source for understanding the algorithm's theory, performance benchmarks, and foundational citation. |
| DADA2 FAQ (Online) | Curated list of solutions for common installation, data processing, and interpretation issues. |
| RStudio IDE | Integrated development environment for running R code, managing projects, and visualizing data during pipeline execution. |
| Example FASTQ Datasets | Provided within the package or tutorial; used for testing the installation and learning the workflow. |

Step-by-Step DADA2 Pipeline: A Practical R Tutorial from FASTQ to Phyloseq

Application Notes and Protocols

Within the broader thesis on implementing the DADA2 pipeline for 16S rRNA amplicon sequencing analysis, this initial step is foundational. It ensures that the computational environment is correctly configured and that sequence data is properly imported for subsequent quality control, denoising, and taxonomic assignment. This protocol is designed for reproducibility in microbial ecology, biomarker discovery, and translational drug development research.

R Environment Configuration

A stable R environment with specific package versions is critical for reproducible bioinformatics analysis.

Detailed Protocol:

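- Install R (≥4.3.0) and RStudio: Download and install the latest stable versions from CRAN (https://cran.r-project.org/) and Posit (https://posit.co/download/rstudio-desktop/).
- Install Bioconductor: Open RStudio and install the Bioconductor package manager from the console with `if (!requireNamespace("BiocManager", quietly = TRUE)) install.packages("BiocManager")`.
- Install Core Packages: Install DADA2 and essential ancillary packages, e.g., `BiocManager::install(c("dada2", "ShortRead", "phyloseq"))` and `install.packages(c("ggplot2", "tidyverse"))`.
- Verify Installation: Load the key package with `library(dada2)` and confirm its version with `packageVersion("dada2")`.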

Table 1: Essential R Packages for DADA2 Pipeline Setup

| Package | Version (Tested) | Source | Primary Function in Pipeline |
|---|---|---|---|
| dada2 | 1.30.0 | Bioconductor | Core algorithm for error modeling, dereplication, and ASV inference. |
| ShortRead | 1.60.0 | Bioconductor | Handling FASTQ files, reading sequences. |
| phyloseq | 1.46.0 | Bioconductor | Post-processing, visualization, and statistical analysis of microbiome data. |
| ggplot2 | 3.4.4 | CRAN | Creation of publication-quality plots for quality profiles and results. |
| tidyverse | 2.0.0 | CRAN | Data manipulation and formatting. |

Loading Paired-End Read Files

The DADA2 pipeline requires sorted file paths for forward and reverse reads. Data is typically in demultiplexed, gzipped FASTQ format from platforms like Illumina MiSeq.

Detailed Protocol:

- Organize Your Data: Place all FASTQ files (e.g., sample1_R1.fastq.gz, sample1_R2.fastq.gz) into a single project directory (e.g., ./seq_data).

- Set Working Directory in R: Point R at the project directory, or store its path in a variable.

- List and Sort Files: Extract sorted file paths for forward (R1) and reverse (R2) reads.

- Extract Sample Names: Derive sample identifiers from file names by removing the read-direction suffix. This assumes the format SAMPLENAME_R1.fastq.gz / SAMPLENAME_R2.fastq.gz. Splitting at the first underscore instead would truncate names such as Treatment_A_01.

- Inspect File Lists: Validate that files are correctly paired and ordered.
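The listing, naming, and pairing steps can be sketched in base R. pair_fastqs() is a hypothetical helper. The ./seq_data path and the <sample>_R1.fastq.gz / <sample>_R2.fastq.gz naming are assumptions taken from the examples above.

```r
# Hypothetical helper: pair forward/reverse FASTQs found in `path`.
# Assumes files are named <sample>_R1.fastq.gz and <sample>_R2.fastq.gz.
pair_fastqs <- function(path) {
  # sort() gives both vectors the same sample order
  fnFs <- sort(list.files(path, pattern = "_R1\\.fastq\\.gz$", full.names = TRUE))
  fnRs <- sort(list.files(path, pattern = "_R2\\.fastq\\.gz$", full.names = TRUE))

  # Strip the read-direction suffix; keeps internal underscores ("Treatment_A_01")
  namesF <- sub("_R1\\.fastq\\.gz$", "", basename(fnFs))
  namesR <- sub("_R2\\.fastq\\.gz$", "", basename(fnRs))

  # Validate pairing: same count and the same sample at every position
  if (length(fnFs) != length(fnRs) || !identical(namesF, namesR)) {
    stop("Forward and reverse files are not paired one-to-one; check file names.")
  }
  data.frame(sample = namesF, fwd = fnFs, rev = fnRs, stringsAsFactors = FALSE)
}

files <- pair_fastqs("./seq_data")
fnFs <- files$fwd
fnRs <- files$rev
sample.names <- files$sample
print(files)
```

Stripping the suffix, rather than splitting at the first underscore, keeps names like Treatment_A_01 intact. Adjust the two regular expressions if your files follow Illumina's _R1_001.fastq.gz convention.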

Table 2: Example Data Structure for 6 Paired-End Samples

| Sample Name | Forward Read File | Reverse Read File | Expected Read Length (bp) |

|---|---|---|---|

| Control_01 | ./seq_data/Control_01_R1.fastq.gz | ./seq_data/Control_01_R2.fastq.gz | 250 |

| Control_02 | ./seq_data/Control_02_R1.fastq.gz | ./seq_data/Control_02_R2.fastq.gz | 250 |

| Treatment_A_01 | ./seq_data/Treatment_A_01_R1.fastq.gz | ./seq_data/Treatment_A_01_R2.fastq.gz | 250 |

| Treatment_A_02 | ./seq_data/Treatment_A_02_R1.fastq.gz | ./seq_data/Treatment_A_02_R2.fastq.gz | 250 |

| Treatment_B_01 | ./seq_data/Treatment_B_01_R1.fastq.gz | ./seq_data/Treatment_B_01_R2.fastq.gz | 250 |

| Treatment_B_02 | ./seq_data/Treatment_B_02_R1.fastq.gz | ./seq_data/Treatment_B_02_R2.fastq.gz | 250 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Materials for DADA2 Setup

| Item | Function/Description | Example/Note |

|---|---|---|

| Demultiplexed Paired-End FASTQs | Raw sequencing reads. Input data for the pipeline. | Files from Illumina MiSeq (2x250bp or 2x300bp) are typical for 16S V4 region. |

| High-Performance Computing (HPC) Resource | Provides necessary CPU and memory for computationally intensive steps (error modeling, sample inference). | Local server, cluster, or cloud instance (AWS, GCP). |

| R Environment Manager | Manages isolated, project-specific R environments to prevent version conflicts. | renv, conda. |

| Bioinformatics Project Directory | Organized file structure for raw data, scripts, and outputs. | Essential for reproducibility and data provenance. |

| Metadata Table (TSV/CSV) | Sample-associated data (treatment, patient ID, etc.). Required for downstream statistical analysis in phyloseq. | Must align sample names with those derived from FASTQ files. |

Workflow Diagram: DADA2 Setup and Data Loading

DADA2 Initial Setup and Data Load Workflow

Data Validation Diagram: FASTQ File Pairing Logic

FASTQ File Sorting and Pairing Validation

Within the DADA2 pipeline for 16S rRNA amplicon data analysis, assessing raw read quality is the first analytical step. This protocol details the use of the plotQualityProfile() function in R to visualize quality scores across sequencing cycles, enabling the formulation of an evidence-based trimming strategy. Proper trimming removes low-quality regions, reduces errors, and improves the accuracy of downstream sequence variant inference.

Application Notes: Interpreting Quality Profiles

The plotQualityProfile() function plots the distribution of quality scores at each base position of the input FASTQ files. A grey-scale heatmap shows how often each quality score occurs at each position, with darker cells meaning more reads. The green line is the mean quality score. The solid orange line is the median, and the dashed orange lines are the 25th and 75th percentiles. A red line shows the proportion of reads that extend to at least each position; it is flat for fixed-length Illumina reads.

For Illumina data, quality scores (Q-scores) are on the Phred scale: Q30 = 99.9% base call accuracy, Q20 = 99%, Q10 = 90%. A common quality threshold for trimming is Q30. The typical profile shows high quality at the start of reads, with a gradual decline in later cycles. Forward and reverse reads are plotted separately, as reverse reads often exhibit a more pronounced quality drop.

Table 1: Common Quality Profile Characteristics and Interpretations

| Profile Feature | Typical Observation | Implication for Trimming Strategy |

|---|---|---|

| Initial Cycles | Lower median quality (often Q < 20) | Consider trimming first 5-15 bases to remove primer/adaptor remnants and low-complexity regions. |

| Quality Plateau | High, stable median quality (Q > 30) | The region retained for analysis. Identify the start of the drop. |

| Quality Decline | Gradual or sharp drop in median quality | Trim where median quality crosses acceptable threshold (e.g., Q30, Q20, or Q10). |

| Read Length | Proportion of reads reaching each position (red line) | Trim before read length collapses, where the red line drops sharply. |

Experimental Protocol: Quality Visualization and Trimming

Materials & Software Requirements

- Software: R (v4.0.0 or later), RStudio, DADA2 package (v1.20.0 or later).

- Input Data: Demultiplexed, gzip-compressed FASTQ files (.fastq.gz) from 16S rRNA amplicon sequencing (e.g., V4 region, paired-end Illumina MiSeq).

- Computational Resources: Standard desktop/laptop for visualization; more RAM for processing many samples.

Protocol Steps

1. Environment Setup and Data Inspection

2. Generate Quality Profile Plots
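A minimal sketch of steps 1 and 2. It assumes the ./seq_data layout and _R1/_R2 file naming from the data-loading step. plotQualityProfile() returns a ggplot object, so each plot can also be saved with ggplot2::ggsave().

```r
library(dada2)

# Assumed layout: <sample>_R1.fastq.gz / <sample>_R2.fastq.gz in ./seq_data
path <- "./seq_data"
fnFs <- sort(list.files(path, pattern = "_R1\\.fastq\\.gz$", full.names = TRUE))
fnRs <- sort(list.files(path, pattern = "_R2\\.fastq\\.gz$", full.names = TRUE))

# Per-file quality profiles for the first two samples, forward and reverse separately
plotQualityProfile(fnFs[1:2])
plotQualityProfile(fnRs[1:2])

# aggregate = TRUE summarises all files in a single panel (slower with many samples)
plotQualityProfile(fnFs, aggregate = TRUE)
```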

3. Analyze Plots and Define Trimming Parameters

Examine the generated plots (see Diagram 1 for decision logic). Key decisions:

- truncLen: The read length to truncate to. Choose positions where median quality drops below your chosen threshold (e.g., Q20). For paired-end reads, balance lengths to maintain sufficient overlap for merging.

- trimLeft: Remove bases from the start to exclude primers and low-quality initial bases. When truncLen is also set, filtered reads end up truncLen - trimLeft bases long.

- maxN, maxEE: Set maximum number of Ns (0 recommended) and maximum expected errors per read.
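The maxEE threshold is expressed in expected errors, which follow directly from the Phred scale described earlier: each base contributes its error probability 10^(-Q/10). The base-R sketch below uses a hypothetical helper, expected_errors(), and an invented quality vector for illustration.

```r
# Expected errors (EE) of a read: the sum of per-base error probabilities,
# where a Phred score Q means P(error) = 10^(-Q/10) (Q30 -> 0.001, Q20 -> 0.01, Q10 -> 0.1)
expected_errors <- function(q) sum(10^(-q / 10))

# Hypothetical 250-bp read: high quality, then a Q20 stretch and a Q10 tail
q <- c(rep(38, 200), rep(20, 40), rep(10, 10))

ee_full  <- expected_errors(q)          # whole read
ee_trunc <- expected_errors(q[1:240])   # after truncLen = 240 removes the Q10 tail

# With maxEE = 2 both versions pass; truncation mainly removes the error-dense tail
c(full = ee_full, truncated = ee_trunc)
```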

Example Decision based on Hypothetical Plot:

- Forward reads: Quality drops below Q20 at position 240.

- Reverse reads: Quality drops below Q20 at position 160.

- Primers are 20bp.

- Chosen parameters:

trimLeft=c(20,20), truncLen=c(240,160)
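Before committing to truncLen values, check that the truncated reads will still overlap. The base-R sketch below does that arithmetic for the example decision above. expected_overlap() is a hypothetical helper. The ~292-bp amplicon length (V4 with the 515F/806R primers included) is an assumption; replace it with your own amplicon's length.

```r
# Expected overlap between forward and reverse reads after truncation.
# Both reads start at their primer, so the overlap is
#   truncLen_F + truncLen_R - amplicon length (primers included).
# trimLeft does not change this, provided the overlap lies outside the primer bases.
expected_overlap <- function(truncLen, amplicon_len) {
  sum(truncLen) - amplicon_len
}

# Assumption: 515F/806R V4 amplicon of roughly 292 bp including primers (~253 bp without)
ov <- expected_overlap(truncLen = c(240, 160), amplicon_len = 292)
ov            # 108 bp for these inputs

# mergePairs() requires minOverlap = 12 by default; an extra margin (assumed 20 bp here)
# absorbs natural length variation between taxa
ov >= 12 + 20
```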

4. Execute Filtering and Trimming
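A minimal filterAndTrim() call using the hypothetical parameters above. It assumes the ./seq_data layout and _R1/_R2 naming from the data-loading step. The filtered/ subdirectory and _F_filt/_R_filt file names are illustrative choices, and maxEE = 2 follows the setting recommended in this guide's filterAndTrim() parameter table.

```r
library(dada2)

# Assumed inputs: paired, sorted file vectors and sample names
path <- "./seq_data"
fnFs <- sort(list.files(path, pattern = "_R1\\.fastq\\.gz$", full.names = TRUE))
fnRs <- sort(list.files(path, pattern = "_R2\\.fastq\\.gz$", full.names = TRUE))
sample.names <- sub("_R1\\.fastq\\.gz$", "", basename(fnFs))

# Filtered output paths in a separate subdirectory (filterAndTrim creates it if needed)
filtFs <- file.path(path, "filtered", paste0(sample.names, "_F_filt.fastq.gz"))
filtRs <- file.path(path, "filtered", paste0(sample.names, "_R_filt.fastq.gz"))
names(filtFs) <- sample.names
names(filtRs) <- sample.names

# Parameters from the hypothetical example decision above
out <- filterAndTrim(fnFs, filtFs, fnRs, filtRs,
                     trimLeft = c(20, 20), truncLen = c(240, 160),
                     maxN = 0, maxEE = c(2, 2), truncQ = 2,
                     rm.phix = TRUE, compress = TRUE,
                     multithread = TRUE)  # set FALSE on Windows
head(out)  # reads.in / reads.out per sample
```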

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for 16S rRNA DADA2 Pipeline Quality Control

| Item | Function in Experiment |

|---|---|

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard chemistry for generating 2x300bp paired-end reads, suitable for the 16S rRNA V4 region. |

| PCR Primers (e.g., 515F/806R) | Target the hypervariable V4 region of the 16S rRNA gene for bacterial/archaeal community profiling. |

| Qubit dsDNA HS Assay Kit | Accurately quantifies DNA library concentration before sequencing, ensuring proper loading. |

| AMPure XP Beads | Performs post-PCR clean-up to remove primer dimers and short fragments, purifying the final amplicon library. |

| DADA2 R Package | Key software containing plotQualityProfile() and all subsequent algorithms for error modeling, dereplication, and inference. |

| FastQC (Optional) | Independent, complementary tool for initial quality control of FASTQ files, providing another view of quality scores. |

Visualizing the Trimming Decision Workflow

Title: Decision Logic for DADA2 Trimming Parameters from Quality Plots

Within the DADA2 pipeline for 16S rRNA amplicon analysis, the filterAndTrim() function is a critical preprocessing step. It ensures data quality by removing low-quality reads and trimming primers/adapters, directly impacting the reliability of downstream ASV (Amplicon Sequence Variant) inference. This protocol details its application with best-practice parameters tailored for Illumina data.

Key Parameters & Quantitative Benchmarks

Table 1: Core Parameters for filterAndTrim() and Recommended Settings

| Parameter | Typical Setting | Function & Rationale |

|---|---|---|

| truncLen | R1: 240, R2: 160 (2x250 V4) | Position to truncate reads. Quality typically drops at ends. Reads shorter than this are discarded. |

| trimLeft | Forward: 19, Reverse: 20 (for 515F/806R) | Removes primer sequences. Set to the exact length of each primer. |

| maxN | 0 | Reads with any ambiguous bases (N) are discarded. |

| maxEE | 2.0 | Maximum expected errors per read (the sum of per-base error probabilities). Filters reads with an improbably high error rate. |

| truncQ | 2 | Truncates reads at the first instance of a quality score <= this value. |

| rm.phix | TRUE | Removes reads matching the PhiX control genome. |

| compress | TRUE | Outputs compressed .fastq.gz files. |

| multithread | TRUE | Enables parallel processing for speed (set FALSE on Windows). |

Table 2: Expected Output Metrics for a Healthy Dataset

| Metric | Acceptable Range | Explanation |

|---|---|---|

| Percentage of reads passing filter | > 70-80% | Significant drops may indicate poor input quality or overly strict parameters. |

| Reduction in expected errors | > 50% | Primary function of filtering is error reduction. |

| Read length post-truncation | Uniform at truncLen - trimLeft | Confirms consistent truncation. |

Detailed Experimental Protocol: Applying filterAndTrim()

Protocol 1: Executing the filterAndTrim() Function

- Software & Environment: Execute within R, using the DADA2 package (version ≥ 1.28). Ensure all file paths are correctly specified.

- Input File Preparation: Place forward (*_R1_001.fastq.gz) and reverse (*_R2_001.fastq.gz) reads in a designated directory. Create a separate output directory for filtered files.

- Parameter Determination:

  a. Inspect Quality Profiles: Use plotQualityProfile() on a subset of samples to visualize where median quality drops below ~Q30. Set truncLen at these points.

  b. Identify Primer Lengths: Set trimLeft to the number of bases in your forward and reverse primers.

- Function Execution: Run filterAndTrim() on the forward and reverse input files and their output paths, using the parameters in Table 1.

- Output Assessment: Review the out matrix, which lists the reads in (reads.in) and reads out (reads.out) per sample. Plot the read length distribution post-filtering to confirm proper truncation.
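The output assessment can be scripted. summarise_filter() below is a hypothetical helper. It turns the reads.in/reads.out matrix returned by filterAndTrim() into per-sample pass rates and flags samples under the 70% benchmark from Table 2. The toy matrix only stands in for real output.

```r
# Hypothetical helper: per-sample pass rates from the matrix returned by
# dada2::filterAndTrim() (columns reads.in and reads.out, one row per input file)
summarise_filter <- function(out, min_pct = 70) {
  reads_in  <- unname(out[, "reads.in"])
  reads_out <- unname(out[, "reads.out"])
  pct <- 100 * reads_out / reads_in
  data.frame(sample    = rownames(out),
             reads.in  = reads_in,
             reads.out = reads_out,
             pct.pass  = round(pct, 1),
             low       = pct < min_pct,   # below the Table 2 benchmark
             stringsAsFactors = FALSE)
}

# Toy stand-in for real output (values are illustrative only)
out <- matrix(c(50000L, 40000L, 42000L, 18000L), ncol = 2,
              dimnames = list(c("S1_R1.fastq.gz", "S2_R1.fastq.gz"),
                              c("reads.in", "reads.out")))
summarise_filter(out)
```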

Visual Workflow

Title: DADA2 filterAndTrim() Workflow & Parameters

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in 16S rRNA DADA2 Pipeline |

|---|---|

| Illumina MiSeq/HiSeq Platform | Generates paired-end (e.g., 2x250 bp or 2x300 bp) amplicon sequences of the target 16S region (e.g., V3-V4). |

| PCR Reagents & 16S Primers | (e.g., 515F/806R for V4). Amplify the target hypervariable region from genomic DNA. Must be well-purified. |

| DADA2 R Package (v1.28+) | Core software containing the filterAndTrim() function and all subsequent steps for ASV inference. |

| High-Performance Computing (HPC) Resource | Filtering and downstream processing are computationally intensive; multithreading is essential. |

| Quality Control Software (FastQC) | Used prior to DADA2 for initial assessment of raw fastq quality, informing truncLen choices. |

| PhiX Control v3 | Spiked into Illumina runs for quality control; reads are identified and removed by rm.phix=TRUE. |

| Validated 16S Reference Database | (e.g., SILVA, Greengenes). Used post-DADA2 for taxonomic assignment of inferred ASVs. |

Application Notes

This step is the core algorithmic component of the DADA2 pipeline, transitioning from quality-filtered sequence reads to exact amplicon sequence variants (ASVs). The learnErrors() function models the error rates specific to your sequencing run. The dada() function then uses these rates to denoise each sample (independently, by default), distinguishing true biological sequences from sequencing errors.

Key quantitative outcomes from this step include:

- the learned error rates, one per nucleotide transition, modeled as a function of quality score;
- per-sample read counts, which are usually tracked through denoising, merging, and chimera removal.

Table 1: Example Read Counts Tracked through Denoising, Merging, and Chimera Removal

| Sample | Input Reads | Non-Chimeric Reads | Percentage Retained | Chimeras Removed |

|---|---|---|---|---|

| Sample_1 | 50,000 | 18,500 | 37.0% | 3,200 |

| Sample_2 | 45,200 | 16,800 | 37.2% | 2,950 |

| Sample_3 | 62,100 | 22,100 | 35.6% | 4,150 |

| Average | 52,433 | 19,133 | 36.6% | 3,433 |

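Table 2: Learned Error Rates Example (Probability x 10^-3). learnErrors() actually models each transition's error rate as a function of quality score. Because quality usually falls with cycle, per-cycle values like these are only an indirect view.

| Cycle | A->C | A->G | A->T | C->A | C->G | C->T |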

|---|---|---|---|---|---|---|

| Cycle 1 | 0.12 | 0.25 | 0.11 | 0.13 | 0.36 | 1.52 |

| Cycle 10 | 0.55 | 1.20 | 0.48 | 1.85 | 0.95 | 6.85 |

| Cycle 150 | 2.85 | 4.12 | 1.95 | 3.65 | 2.10 | 15.50 |

Experimental Protocols

Protocol 1: Learning Error Rates with learnErrors()

Purpose: To estimate the sequencing error rate model from the data, which is essential for accurate denoising.

Materials: Filtered and trimmed FASTQ files (from Step 3), R environment with DADA2 installed.

Procedure:

- Load required library: library(dada2)

- Set file paths: Define the paths to your filtered *.fastq.gz files.

- Execute learnErrors: Run the function separately on the filtered forward and reverse reads. For efficiency, it uses only a subset of the data by default: it reads samples until roughly 1e8 total bases (nbases = 1e8) have been loaded.

- Visualize Diagnostics: Plot the estimated error rates to assess model fit.

Interpretation: The plotted error model (black line) should closely follow the observed error rates (points). A poor fit may indicate low-quality data or issues in prior filtering.
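A sketch of Protocol 1. It assumes filtered files in ./seq_data/filtered named *_F_filt.fastq.gz / *_R_filt.fastq.gz; these names are illustrative. nbases is shown at its default value.

```r
library(dada2)

# Filtered files from Step 3 (assumed location and naming)
filt_path <- "./seq_data/filtered"
filtFs <- sort(list.files(filt_path, pattern = "_F_filt\\.fastq\\.gz$", full.names = TRUE))
filtRs <- sort(list.files(filt_path, pattern = "_R_filt\\.fastq\\.gz$", full.names = TRUE))

# Learn error rates separately for forward and reverse reads.
# nbases caps how much data is read in; raise it for a more precise model.
errF <- learnErrors(filtFs, nbases = 1e8, multithread = TRUE)
errR <- learnErrors(filtRs, nbases = 1e8, multithread = TRUE)

# Diagnostics: points = observed error rates, black line = fitted model,
# red line = rates expected from the nominal Q-score definition
plotErrors(errF, nominalQ = TRUE)
plotErrors(errR, nominalQ = TRUE)
```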

Protocol 2: Sample Inference & Denoising with dada()

Purpose: To apply the error model to correct sequencing errors and infer exact ASVs.

Procedure:

- Apply Denoising: Run the dada() algorithm on each sample using the learned error model.

- Optional Pooling: For projects with low sequencing depth or very rare variants, consider pool=TRUE or pool="pseudo" to increase sensitivity.

- Inspect Results: Examine the returned object for a single sample to see its read processing summary.
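A sketch of Protocol 2 under the same file-layout assumptions. The error models are re-learned here only so the block runs on its own; in a real session, reuse errF and errR from Protocol 1.

```r
library(dada2)

filt_path <- "./seq_data/filtered"   # assumed location of Step 3 output
filtFs <- sort(list.files(filt_path, pattern = "_F_filt\\.fastq\\.gz$", full.names = TRUE))
filtRs <- sort(list.files(filt_path, pattern = "_R_filt\\.fastq\\.gz$", full.names = TRUE))

errF <- learnErrors(filtFs, multithread = TRUE)
errR <- learnErrors(filtRs, multithread = TRUE)

# Default: each sample is denoised independently (pool = FALSE)
dadaFs <- dada(filtFs, err = errF, multithread = TRUE)
dadaRs <- dada(filtRs, err = errR, multithread = TRUE)

# Alternatives for detecting rare variants:
#   pool = TRUE     pools all samples (most sensitive, slowest)
#   pool = "pseudo" approximates pooling by sharing information between samples
# dadaFs <- dada(filtFs, err = errF, pool = "pseudo", multithread = TRUE)

# With more than one sample dada() returns a list; print the first sample's summary
dadaFs[[1]]
```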

Protocol 3: Merge Paired-end Reads

Purpose: To combine denoised forward and reverse reads into full-length sequences.

Procedure:

- Execute Merge: Use mergePairs() with specified overlap and mismatch criteria.

- Inspect Mergers: Check the merger statistics for the first sample.

Protocol 4: Construct Sequence Table

Purpose: To create an ASV (feature) table across all samples.

Procedure:

- Make Sequence Table: Generate a sample-by-sequence (ASV) matrix.

- Check Dimensions: View the table dimensions (# samples x # ASVs).

- Check Length Distribution: Ensure merged sequences are of expected length.
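Protocols 3 and 4 sketched end to end under the same file-layout assumptions. The denoising lines repeat Protocols 1 and 2 only to keep the block self-contained. minOverlap and maxMismatch are shown at their default values.

```r
library(dada2)

filt_path <- "./seq_data/filtered"   # assumed Step 3 output
filtFs <- sort(list.files(filt_path, pattern = "_F_filt\\.fastq\\.gz$", full.names = TRUE))
filtRs <- sort(list.files(filt_path, pattern = "_R_filt\\.fastq\\.gz$", full.names = TRUE))
sample.names <- sub("_F_filt\\.fastq\\.gz$", "", basename(filtFs))
names(filtFs) <- sample.names
names(filtRs) <- sample.names

errF <- learnErrors(filtFs, multithread = TRUE)
errR <- learnErrors(filtRs, multithread = TRUE)
dadaFs <- dada(filtFs, err = errF, multithread = TRUE)
dadaRs <- dada(filtRs, err = errR, multithread = TRUE)

# Protocol 3: merge denoised pairs
mergers <- mergePairs(dadaFs, filtFs, dadaRs, filtRs,
                      minOverlap = 12, maxMismatch = 0, verbose = TRUE)
head(mergers[[1]])   # merged sequences and abundances for the first sample

# Protocol 4: sample-by-ASV count matrix
seqtab <- makeSequenceTable(mergers)
dim(seqtab)                          # samples x ASVs
table(nchar(getSequences(seqtab)))   # merged-length distribution
```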

Visualizations

DADA2 Denoising and ASV Table Construction Workflow

How the DADA Algorithm Uses the Error Model

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools for DADA2 Denoising

| Item | Function/Description | Example/Note |

|---|---|---|

| High-Quality DNA Extract | Starting material for 16S PCR. Purity is critical to reduce non-target amplification. | Qiagen DNeasy PowerSoil Pro Kit |

| 16S rRNA Gene Primers (V3-V4) | Amplify the target hypervariable region for sequencing. | 341F (5’-CCTAYGGGRBGCASCAG-3’) / 806R (5’-GGACTACNNGGGTATCTAAT-3’) |

| Phusion High-Fidelity DNA Polymerase | Provides high-fidelity PCR amplification to minimize introduction of novel errors. | Thermo Scientific F-530S |

| Illumina Sequencing Reagents | Generate paired-end sequencing data (e.g., 2x250bp). | MiSeq Reagent Kit v3 (600-cycle) |

| DADA2 R Package (v1.28+) | Core software containing the learnErrors and dada functions for denoising. | Available on Bioconductor |

| R Statistical Environment (v4.0+) | The platform required to run the DADA2 pipeline and analyses. | www.r-project.org |

| Multi-core Workstation/Server | Enables use of multithread=TRUE for computationally intensive steps. | 16+ CPU cores, 32+ GB RAM recommended |

| Reference Databases (e.g., SILVA, GTDB) | Used in subsequent steps for taxonomic assignment of inferred ASVs. | SILVA v138.1, GTDB R07-RS207 |

Application Notes

Following initial quality control and filtering in the DADA2 pipeline, Step 5 is the critical point where forward and reverse reads from paired-end 16S rRNA amplicon sequencing are combined. This step directly impacts the accuracy of inferred Amplicon Sequence Variants (ASVs), as correct merging increases read length and resolution. Incorrect merging leads to spurious sequences, data loss, and inflated diversity metrics. The merge process involves aligning the forward read with the reverse-complement of the reverse read, accounting for potential overlaps, and constructing a consensus sequence. Success is highly dependent on the quality of sequencing, the length of the amplicon, and the presence of technical artifacts like primers or adapters.

A key output is the sequence table, a high-resolution, sample-by-sequence matrix analogous to the traditional OTU table but denoised and error-corrected. This table is the foundation for all downstream ecological and statistical analyses.

Table 1: Key Metrics and Parameters for Merging Paired-End Reads

| Parameter / Metric | Typical Value / Description | Impact on Output |

|---|---|---|

| Minimum Overlap | 12-20 nucleotides | Lower values increase merges but risk false combinations. Higher values reduce merges but may discard good data. |

| Maximum Mismatches in Overlap | 0-1 (Default: 0) | Stringent setting (0) ensures only perfectly aligning overlaps merge, reducing errors. |

| Merge Efficiency | 70-95% of input reads | A low percentage indicates poor overlap, often due to poor quality trimming or amplicons longer than read length. |

| Post-Merge Sequence Length | Varies (e.g., ~250-450 bp for V3-V4) | Consistent length indicates a successful merge. A wide distribution may indicate non-specific amplification or spurious merges. |

| Sequence Table Dimensions | Samples x ASVs | Denoised table is sparser (fewer total sequences) but more precise than pre-filtered OTU tables. |

Experimental Protocols

Protocol 1: Merging Paired-End Reads with DADA2 Core Algorithm

Objective: To accurately combine filtered, denoised forward and reverse reads into full-length sequences.

Materials:

- Filtered and trimmed FASTQ files for forward (*_R1_filt.fastq.gz) and reverse (*_R2_filt.fastq.gz) reads.

- R environment with DADA2 package (≥1.28.0) installed.

Methodology:

- Load the error models and filtered data: Use dada() with the error rates learned in Step 4 to denoise forward and reverse reads independently.

- Merge paired reads: Use mergePairs() to combine the denoised forward and reverse reads. Two parameters control acceptance:
  - minOverlap sets the minimum required overlap between the forward read and the reverse-complemented reverse read (default 12).
  - maxMismatch sets the maximum number of mismatches allowed in the overlap region (default 0, which is highly stringent).

- Inspect the merge statistics: Examine the output object, or count merged reads per sample with sapply(mergers, getN). Here getN is a small helper defined in the DADA2 tutorial as getN <- function(x) sum(getUniques(x)). Low merge rates necessitate revisiting the trim length parameters in Step 2.

Protocol 2: Constructing the Sequence Table

Objective: To create a sample-by-sequence (ASV) abundance matrix from the merged pairs.

Methodology:

- Generate the sequence table: The makeSequenceTable() function constructs the matrix from the mergers object.

- Assess sequence length distribution: Visual inspection ensures the majority of merged sequences fall within the expected amplicon length range.

- (Optional) Remove chimeras: While often considered Step 6, chimeras can be removed directly after table construction using removeBimeraDenovo(). The final seqtab.nochim object is the denoised, merged, and chimera-free sequence table ready for taxonomic assignment.
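If the length distribution shows off-target lengths, a common follow-up is to keep only ASVs inside the expected length window. filter_by_length() is a hypothetical base-R helper that operates on a makeSequenceTable()-style matrix, with sequences as column names. The toy table is illustrative.

```r
# Hypothetical helper: keep ASVs whose merged length lies in [min_len, max_len].
# `seqtab` is a samples x ASVs count matrix whose column names are the ASV sequences.
filter_by_length <- function(seqtab, min_len, max_len) {
  len <- nchar(colnames(seqtab))
  seqtab[, len >= min_len & len <= max_len, drop = FALSE]
}

# Toy table: two samples, three ASVs of lengths 4, 6 and 10 (illustrative only)
seqtab <- matrix(c(10L, 3L, 5L, 0L, 1L, 7L), nrow = 2,
                 dimnames = list(c("S1", "S2"), c("ACGT", "ACGTAC", "ACGTACGTAC")))
table(nchar(colnames(seqtab)))    # length distribution before filtering
seqtab2 <- filter_by_length(seqtab, min_len = 4, max_len = 6)
colnames(seqtab2)
```

For the ~253-bp V4 amplicon, a window such as filter_by_length(seqtab, 250, 256) would be typical. That window is an assumption; set it from your own length table.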

Visualizations

DADA2 Paired-End Merging and Sequence Table Construction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for 16S rRNA Library Prep & DADA2 Analysis

| Item | Function / Role in Pipeline Context |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Minimizes PCR errors during library amplification, reducing background noise for more accurate ASV inference. |

| Dual-Indexed PCR Primers (Nextera-style) | Allows multiplexing of samples. Correct indexing is crucial for sample demultiplexing prior to DADA2 input. |

| AMPure XP Beads | For post-PCR clean-up and size selection. Removes primer dimers and optimizes library fragment size for sequencing. |

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard for 2x300bp paired-end sequencing of 16S rRNA amplicons (e.g., V3-V4), providing sufficient overlap for merging. |

| DADA2 R Package (v1.28+) | Core software implementing the error model and algorithms for denoising, merging, and sequence table construction. |

| RStudio IDE with dplyr, ggplot2 | Provides the computational environment for running the pipeline and visualizing results (e.g., sequence length distribution). |

| Reference Database (e.g., SILVA, Greengenes) | Used in the subsequent step for taxonomic assignment of ASVs in the constructed sequence table. |

Within the comprehensive thesis on the DADA2 pipeline for 16S rRNA amplicon sequencing analysis, Step 6 represents a critical quality control juncture. Following error rate learning, dereplication, sample inference, and read merging, the sequence variant table contains putative amplicon sequence variants (ASVs). A known artifact in PCR-based amplification is the formation of chimeric sequences—in vitro composites derived from two or more biological parent sequences. If not removed, these chimeras inflate diversity estimates and confound ecological and taxonomic conclusions. The removeBimeraDenovo() function implements a sensitive, de novo chimera detection algorithm that compares each sequence to more abundant potential "parent" sequences within the dataset, flagging and removing those likely to be artifactual.

Core Algorithm & Quantitative Performance

The removeBimeraDenovo(method="consensus") function, the most commonly used approach in DADA2, operates by a community-based consensus. For each sequence variant, it checks if it can be reconstructed by combining a left-segment from a more abundant "parent" sequence with a right-segment from another more abundant "parent." This is performed in each sample independently, and a sequence is flagged as a bimera if it is identified as such in a sufficient fraction of the samples in which it appears.
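A deliberately simplified base-R illustration makes the bimera criterion concrete. is_exact_bimera() is hypothetical: it only tests whether a left segment of one parent joined to the right segment of another reproduces the query exactly. It is not DADA2's algorithm, which aligns sequences, allows shifts, and requires the parents to be sufficiently more abundant.

```r
# Simplified exact-bimera test (illustration only, not dada2's implementation)
is_exact_bimera <- function(query, parentA, parentB) {
  n <- nchar(query)
  # Only the equal-length, no-indel case is handled in this sketch
  if (n < 2 || nchar(parentA) != n || nchar(parentB) != n) return(FALSE)
  # A sequence identical to one of its parents is not a chimera
  if (query == parentA || query == parentB) return(FALSE)
  for (k in seq_len(n - 1)) {               # breakpoint after position k
    left  <- substr(parentA, 1, k)          # left segment from parent A
    right <- substr(parentB, k + 1, n)      # right segment from parent B
    if (paste0(left, right) == query) return(TRUE)
  }
  FALSE
}

pA <- "AAAAACCCCC"
pB <- "GGGGGTTTTT"
is_exact_bimera("AAAAATTTTT", pA, pB)   # TRUE: left half of A + right half of B
is_exact_bimera("AAAAAGGGGG", pA, pB)   # FALSE: not reconstructable as A|B
```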

Table 1: Comparative Performance of Chimera Removal Methods in DADA2

| Method Parameter | Algorithm Description | Key Strength | Typical Reported Chimera Rate in 16S Data | Post-Removal Action |

|---|---|---|---|---|

| method="consensus" | Per-sample checking, consensus across samples. | Robust to rare bimeras present in few samples. | 10-25% of ASVs (a much smaller share of reads) | Removes flagged ASVs from sequence table. |

| method="pooled" | Checks all sequences against entire pooled set of sequences. | Maximum sensitivity for low-frequency bimeras. | Slightly higher than consensus | Removes flagged ASVs from sequence table. |

| method="per-sample" | Independent checking per sample, no consensus. | Use when sample composition is highly divergent. | Variable per sample | Zeroes out flagged ASVs only in the samples where they were flagged. |

Table 2: Impact of Chimera Removal on a Representative 16S Dataset

| Metric | Before removeBimeraDenovo() | After removeBimeraDenovo() | % Change |

|---|---|---|---|

| Total Sequence Variants (ASVs) | 5,842 | 4,521 | -22.6% |

| Total Read Count | 1,856,403 | 1,752,110 | -5.6% |

| Singletons Count | 1,205 | 892 | -26.0% |

Detailed Experimental Protocol

Protocol: De Novo Chimera Removal in the DADA2 Pipeline

Objective: To identify and remove chimeric amplicon sequence variants (ASVs) from the sequence variant table generated by the DADA2 inference algorithm.

Materials & Input:

- R environment (version 4.0 or higher) with DADA2 package installed.

- Input Data: A sequence table (seqtab) object from Step 5 (merging paired ends). This is an integer matrix with rows as samples and columns as sequence variants.

Procedure:

- Load Sequence Table: Ensure the sequence table (seqtab), built with makeSequenceTable() from the mergePairs() output, is loaded into the R workspace.

- Execute Chimera Removal: Run the consensus method of the removeBimeraDenovo() function.

- Inspect Results: With verbose=TRUE, the function reports how many input sequences were identified as bimeras. Immediately assess the impact on ASV and read counts.

- Validation (Recommended): Manually inspect some flagged chimeras using isBimeraDenovo() on specific sequences to understand the decision.

- Output: The final object seqtab.nochim is a non-chimeric sequence table ready for taxonomic assignment (Step 7). It is crucial to save this object.
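A sketch of the procedure. It assumes the Step 5 table was saved as seqtab.rds (a hypothetical file name). The isBimeraDenovo() check assumes flags come back in input order.

```r
library(dada2)

seqtab <- readRDS("seqtab.rds")   # hypothetical file saved after Step 5

# Consensus de novo chimera removal
seqtab.nochim <- removeBimeraDenovo(seqtab, method = "consensus",
                                    multithread = TRUE, verbose = TRUE)

# Impact: chimeras are usually a large share of ASVs but a small share of reads
c(asvs_before    = ncol(seqtab),
  asvs_after     = ncol(seqtab.nochim),
  pct_reads_kept = round(100 * sum(seqtab.nochim) / sum(seqtab), 1))

# Validation: flag (without removing) the variants present in the first sample
uniq  <- seqtab[1, seqtab[1, ] > 0]        # named integer vector: sequence -> count
flags <- isBimeraDenovo(uniq, multithread = FALSE)
sum(flags)
head(names(uniq)[flags])

saveRDS(seqtab.nochim, "seqtab_nochim.rds")   # persist for Step 7
```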

Visualization: Workflow & Decision Logic

Title: Consensus Chimera Detection Logic in removeBimeraDenovo()

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Computational Tools & Inputs for Chimera Removal

| Item | Function/Description | Critical Notes |

|---|---|---|

| High-Quality Merged Sequence Table (seqtab) | Integer matrix built by makeSequenceTable() from the mergePairs() output. Provides the abundance of each ASV in each sample, the fundamental input for relative abundance comparisons. | Chimeras are detected by comparing to more abundant parents. Poor merging or prior QC will compromise detection. |

| Reference Database (for post-check) | e.g., SILVA, Greengenes. Used after removeBimeraDenovo() to validate non-chimeric ASVs via assignTaxonomy(). | Not used in the de novo detection step itself, but essential for the next pipeline step and overall validation. |

| Multi-core Computational Node | Enables multithread=TRUE parameter, significantly speeding up the pairwise sequence comparison. | A hardware requirement for processing large datasets (e.g., >100 samples) in a reasonable time. |

| R DADA2 Package (v1.28+) | Contains the optimized removeBimeraDenovo() function and all dependencies (ShortRead, Biostrings, Rcpp). | Must be kept updated to benefit from algorithm improvements and bug fixes. |

| Persistent Storage (e.g., SSD) | For saving the large seqtab.nochim RDS file and associated workspace. | Prevents data loss and allows re-analysis without re-running computationally intensive prior steps. |

Within the DADA2 pipeline for 16S rRNA amplicon analysis, taxonomy assignment is the critical step that translates sequence variants (ASVs) into biological identities. This step classifies each ASV by comparing it to curated reference sequences of known organisms. The choice of reference database (SILVA, Greengenes, RDP) directly influences the taxonomic labels, nomenclature, and downstream ecological interpretation. This protocol details the methodologies for assigning taxonomy using these three primary databases within the DADA2 framework in R.

The selection of a reference database involves trade-offs between curation frequency, taxonomic scope, and nomenclature. The table below summarizes key characteristics.

Table 1: Comparison of Major 16S rRNA Reference Databases

| Feature | SILVA | Greengenes | RDP |

|---|---|---|---|

| Current Version | 138.1 (2020) | 13_8 (2013) | 18 (2023) |

| Number of Classified Sequences | ~2.7 million | ~1.3 million | ~3.4 million |

| Alignment & Tree | Yes (Parc) | Yes | No |

| Curation Frequency | Regular (1-2 years) | Static since 2013 | Regular |

| Taxonomy Nomenclature | Updated, includes candidate phyla | Somewhat outdated | Conservative |

| Primary Classification Algorithm | Naïve Bayesian Classifier (via DADA2) | NA | Naïve Bayesian Classifier |

| Primary Use Case | Comprehensive, modern studies | Legacy comparison, human microbiome | Well-curated, conservative classification |

| License | Custom, free for academic use | Public Domain | Free for academic use |

Detailed Experimental Protocol

This protocol follows the DADA2 workflow after chimera removal, assuming an ASV table (seqtab.nochim) and representative sequences (asv_seqs) are available.

A. Preparation: Downloading and Formatting Reference Data

- Download Training Sets:

  - SILVA: Obtain the DADA2-formatted silva_nr99_v138.1_train_set.fa.gz and silva_species_assignment_v138.1.fa.gz files from the DADA2 website. The raw files on the SILVA website are not in the format assignTaxonomy() expects.

  - Greengenes: Download gg_13_8_train_set_97.fa.gz from the DADA2 website.

  - RDP: Download rdp_train_set_18.fa.gz from the DADA2 website.

- Place the downloaded .gz files in a dedicated directory (e.g., ~/tax_ref/). Do not decompress them; DADA2 reads them directly.

B. Taxonomy Assignment with the assignTaxonomy and addSpecies Functions

The core process uses a naïve Bayesian classifier implemented in DADA2.

Key Parameters for assignTaxonomy:

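- minBoot: Minimum bootstrap confidence (0-100) for assigning a taxonomic rank. Default=50. Increase for more conservative assignments.

- tryRC: If TRUE, the reverse complement of each sequence is also classified and used when it matches the reference better. Default=FALSE; set TRUE only if some ASVs may be in reverse orientation.

- outputBootstraps: If TRUE, return a list with the assignments and their bootstrap values. Default=FALSE, which returns only the assignment matrix.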
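A sketch of section B with SILVA plus an RDP cross-check. It assumes the files from section A sit in ~/tax_ref and that the Step 6 table was saved as seqtab_nochim.rds (a hypothetical name).

```r
library(dada2)

seqtab.nochim <- readRDS("seqtab_nochim.rds")   # hypothetical file from Step 6
ref_dir <- path.expand("~/tax_ref")

# Naive Bayesian classification down to genus with the SILVA training set
tax_silva <- assignTaxonomy(seqtab.nochim,
                            file.path(ref_dir, "silva_nr99_v138.1_train_set.fa.gz"),
                            minBoot = 50, multithread = TRUE)

# Species labels only for exact (100%) matches
tax_silva <- addSpecies(tax_silva,
                        file.path(ref_dir, "silva_species_assignment_v138.1.fa.gz"))

# Same ASVs against RDP for cross-checking key assignments
tax_rdp <- assignTaxonomy(seqtab.nochim,
                          file.path(ref_dir, "rdp_train_set_18.fa.gz"),
                          multithread = TRUE)

# Genus-level agreement between databases (rows are the same ASVs in the same order)
genus_agree <- tax_silva[, "Genus"] == tax_rdp[, "Genus"]
table(genus_agree, useNA = "ifany")
```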

C. Output Interpretation and Curation

- The output (tax_silva, etc.) is a character matrix where rows are ASVs (named by their sequences) and columns are taxonomic ranks (Kingdom, Phylum, ...).

- Inspect and filter results, e.g., remove Chloroplast, Mitochondria, and unassigned (NA) sequences at the Phylum level.

- Compare assignments across databases for key ASVs to gauge robustness.
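The curation step can be written as a small base-R filter. curate_taxa() is hypothetical. It drops rows carrying the exact labels Chloroplast or Mitochondria at any rank, and rows with no Phylum assignment. SILVA places these labels at Order and Family respectively; check the labels used by other databases. The toy matrix is illustrative.

```r
# Hypothetical helper: remove organelle and phylum-unassigned ASVs from a
# DADA2 taxonomy matrix (rows = ASVs, columns = ranks including "Phylum")
curate_taxa <- function(tax, drop_labels = c("Chloroplast", "Mitochondria")) {
  organelle <- apply(tax, 1, function(r) any(r %in% drop_labels))  # any rank matches
  no_phylum <- is.na(tax[, "Phylum"])
  tax[!(organelle | no_phylum), , drop = FALSE]
}

# Toy taxonomy table (row names would be ASV sequences in real output)
tax <- matrix(c("Bacteria", "Bacteria", "Bacteria", "Bacteria",
                "Firmicutes", "Cyanobacteria", "Proteobacteria", NA,
                "Bacilli", "Cyanobacteriia", "Alphaproteobacteria", NA,
                "Lactobacillales", "Chloroplast", "Rickettsiales", NA),
              nrow = 4,
              dimnames = list(paste0("ASV", 1:4), c("Kingdom", "Phylum", "Class", "Order")))
rownames(curate_taxa(tax))   # ASV2 (chloroplast) and ASV4 (no phylum) are dropped
```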

Visualization of the Taxonomy Assignment Workflow

Diagram Title: Taxonomy Assignment and Curation Workflow in DADA2

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Taxonomy Assignment

| Item | Function/Description | Example/Note |

|---|---|---|

| Curated Reference FASTA | Core training set for classifier. Determines taxonomic labels and depth. | silva_nr99_v138.1_train_set.fa.gz |

| Species Assignment FASTA | Enables exact 100% match for species-level identification. | silva_species_assignment_v138.1.fa.gz |

| High-Performance Computing (HPC) Resources | Speeds up the computationally intensive classification process. | Local server, cloud instance (AWS, GCP), or multi-core workstation. |

| R Environment with DADA2 | Software platform containing the essential assignTaxonomy function. | R version ≥ 4.0, DADA2 package ≥ 1.18. |

| Taxonomic Curation Scripts | Custom code to filter, subset, and manage taxonomy tables post-assignment. | R scripts to remove organelles, contaminants, or unassigned taxa. |

| Comparative Database Set | Multiple reference files to validate taxonomic calls on key ASVs. | Having SILVA, RDP, and Greengenes files for cross-checking. |

Within the comprehensive DADA2 pipeline for 16S rRNA amplicon data, the construction of a phyloseq object represents the critical juncture where denoised sequences, taxonomic assignments, and sample metadata are integrated into a single, unified data object. This step enables all subsequent ecological and differential abundance analyses. The phyloseq R package provides a powerful S4 class system for efficient handling and analysis of microbiome census data.

Core Data Components

A complete phyloseq object integrates up to four primary components. Three are outputs of previous DADA2 steps or supplied by the researcher; the fourth, the phylogenetic tree, is optional.

Table 1: Essential Input Components for Phyloseq Construction

| Component | Description | Typical File Format | Source DADA2 Step |

|---|---|---|---|

| ASV Table | A count matrix of Amplicon Sequence Variants across samples (DADA2's seqtab stores samples as rows and ASVs as columns). | .tsv, .csv, or R matrix | Step 6: Chimera Removal (seqtab.nochim) |

| Taxonomy Table | Taxonomic assignment for each ASV, typically from Kingdom to Species. | .tsv, .csv, or R matrix | Step 7: Taxonomic Assignment |

| Sample Metadata | Experimental design, clinical, or environmental data for each sample. | .tsv, .csv, or R data.frame | Provided by researcher |

| Phylogenetic Tree | Phylogenetic relationship among ASVs (optional but recommended). | .tre, .nwk | Separate alignment and tree-building step (outside the core DADA2 functions) |

Table 2: Quantitative Data Structure Example

| Data Component | Dimension (Example) | Size in Memory (Approx.) | Data Type |

|---|---|---|---|

| ASV Table | 1,500 ASVs x 200 samples | 2.4 MB | Integer (counts) |

| Taxonomy Table | 1,500 ASVs x 7 ranks | 0.16 MB | Character |

| Sample Metadata | 200 samples x 15 variables | 0.02 MB | Mixed |

| Phylogenetic Tree | 1,500 tips | 1.1 MB | Phylogenetic |

Detailed Protocol

Protocol: Constructing a Phyloseq Object from DADA2 Outputs

Objective: To merge the core components into a single, reproducible phyloseq object for downstream analysis.

Materials & Software:

- R (version 4.3.0 or higher)

- RStudio (recommended)

- phyloseq package (version 1.44.0)

- DADA2 outputs: seqtab.nochim, taxa

- Sample metadata file (e.g., sample_metadata.tsv)

- Phylogenetic tree file (e.g., tree.nwk)

Procedure:

Preparation and Loading:

Data Integrity Check:

Object Construction:

Validation and Basic Inspection:

Initial Filtering (Recommended):

Serialization (Saving):
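A sketch covering the six procedure headings above, under these assumptions:

- All file names are hypothetical.
- The metadata TSV has sample IDs in its first column.
- The tree's tip labels are the ASV sequences. Drop phy_tree(tree) if no tree is available.

```r
library(phyloseq)
library(ape)

# Preparation and loading
seqtab.nochim <- readRDS("seqtab_nochim.rds")              # samples x ASVs (Step 6)
taxa  <- readRDS("taxa_silva.rds")                          # ASVs x ranks (Step 7)
samdf <- read.delim("sample_metadata.tsv", row.names = 1)   # first column = sample IDs
tree  <- read.tree("tree.nwk")                              # optional

# Data integrity check: sample and ASV identifiers must agree
stopifnot(setequal(rownames(seqtab.nochim), rownames(samdf)))
stopifnot(all(colnames(seqtab.nochim) %in% rownames(taxa)))

# Object construction (DADA2 tables have samples as rows)
ps <- phyloseq(otu_table(seqtab.nochim, taxa_are_rows = FALSE),
               sample_data(samdf),
               tax_table(taxa),
               phy_tree(tree))

# Validation and basic inspection: fewer taxa or samples than expected signals an ID mismatch
ps
c(ntaxa = ntaxa(ps), nsamples = nsamples(ps))
summary(sample_sums(ps))

# Initial filtering: drop taxa absent from every sample and ASVs without a phylum
ps <- prune_taxa(taxa_sums(ps) > 0, ps)
ps <- subset_taxa(ps, !is.na(Phylum))

# Serialization
saveRDS(ps, "ps_dada2.rds")
```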

Visualization of Workflow

Title: Data Integration Workflow to Build a Phyloseq Object

The Scientist's Toolkit

Table 3: Research Reagent & Computational Solutions

| Item | Function/Description | Example/Note |
|---|---|---|
| phyloseq R Package | Core S4 object class for organizing and analyzing microbiome data. | Provides functions for filtering, transformation, plotting, and statistical analysis. |
| DADA2 Outputs | The denoised sequence variant table and assigned taxonomy. | Primary inputs; must be in R matrix/data.frame format. |
| Structured Metadata File | Tab-separated file detailing sample characteristics, experimental groups, etc. | Critical for valid comparative analysis. Row names must match the sample names of the ASV table (the row names of seqtab.nochim). |
| APE R Package | Handles phylogenetic tree reading and manipulation. | Used to import .nwk or .tre tree files into R. |
| RDS File Format | R's native serialization format for saving a single R object. | Ideal for preserving the complete state of a complex phyloseq object. |
| Quality Control Scripts | Custom R code for checking data integrity and performing initial filtering. | Essential for removing contaminants, low-quality samples, and non-target sequences. |

Solving Common DADA2 Problems: Troubleshooting Guide for Low-Quality or Complex Data

Diagnosing and Fixing Poor Merge Rates for Overlapping Reads

1. Introduction & Thesis Context

Within the broader thesis on implementing the DADA2 pipeline for 16S rRNA gene amplicon research, achieving high merge rates for paired-end reads is a critical step. In DADA2, forward and reverse reads are denoised separately and then joined with mergePairs(), so pairs that fail to merge are dropped before the sequence table is built. Poor merging therefore reduces sequencing depth, compromising the statistical power and accuracy of the final Amplicon Sequence Variant (ASV) table. This application note details the systematic diagnosis and remediation of low merge rates.

2. Common Causes & Quantitative Benchmarks

Primary causes for poor merging include insufficient read overlap, high sequencing error rates in the overlap region, and variable amplicon length. Expected performance benchmarks are summarized below.

Table 1: Expected Merge Rate Benchmarks and Implications

| Parameter | Good Performance | Poor Performance | Primary Implication |
|---|---|---|---|
| Overall Merge Rate | >90% | <80% | Significant data loss, reduced depth. |
| Mismatch Rate in Overlap Region | <1% | >5% | False non-merges due to perceived divergence. |
| Mean Overlap Length | ≥20 bp | <10 bp | Insufficient sequence for reliable alignment. |
| Expected Amplicon Length (V4 region) | ~250-300 bp | N/A | Reference for designing sequencing parameters. |

3. Diagnostic Protocols

Protocol 3.1: Initial Merge Rate Assessment with DADA2

Objective: Quantify baseline merge performance. Procedure:

- Run the standard DADA2 workflow through filterAndTrim(), dada(), and mergePairs().

- Record the input, filtered, and merged read counts from the filterAndTrim() output and the mergers object.

- Calculate: Merge Rate (%) = (Number of Merged Reads / Number of Filtered Forward Reads) * 100.

- Tabulate the length distribution of merged sequences, e.g. with table(nchar(getSequences(seqtab))) after seqtab <- makeSequenceTable(mergers), and plot it if needed.
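A minimal base-R sketch of the merge-rate calculation in Protocol 3.1. merge_rates() is a hypothetical helper and the counts are invented for illustration. In a real run, filtered would be the "reads.out" column returned by filterAndTrim() and merged would be sapply(mergers, function(m) sum(getUniques(m))), as in the DADA2 tutorial's read-tracking step; make sure both vectors are in the same sample order. Samples below the 80% threshold from Table 1 are flagged.

```r
# Hypothetical helper: per-sample merge rate (%) with a low-rate flag.
merge_rates <- function(filtered, merged, threshold = 80) {
  rate <- 100 * merged / filtered
  data.frame(
    filtered   = filtered,
    merged     = merged,
    merge_rate = round(rate, 1),
    flag_low   = rate < threshold,   # TRUE if below the "poor" benchmark
    row.names  = names(filtered)
  )
}

# Invented counts for three samples (illustration only)
filtered <- c(S1 = 10000, S2 = 8000, S3 = 12000)
merged   <- c(S1 = 9400,  S2 = 5600, S3 = 11000)
res <- merge_rates(filtered, merged)
res
```

Here S2 (70%) would be flagged for the diagnostics in Protocols 3.2 and 3.3.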

Protocol 3.2: Evaluating Overlap Sufficiency

Objective: Determine whether read overlap length is the limiting factor. Procedure:

- In Silico Calculation: Expected overlap = (Length of Forward Read + Length of Reverse Read) - Expected Amplicon Length. Use the read lengths after truncation, and measure read and amplicon lengths the same way (e.g., both including primers).

- Empirical Measurement: Use plotQualityProfile() on a subset of forward and reverse reads to inspect quality trends at the 3' ends (the presumed overlap region).

- If the calculated overlap is <20 bp, or the quality at the relevant cycle positions is poor (average quality score < 20), insufficient overlap is likely.
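The in silico overlap formula is simple arithmetic, sketched below with hypothetical helper names. The amplicon lengths are assumptions for illustration (roughly 292 bp for a V4 515F/806R product and roughly 460 bp for a V3-V4 product, both including primers); substitute the length of your own amplicon, measured the same way as the read lengths.

```r
# Expected overlap (bp) = forward length + reverse length - amplicon length.
# Negative values mean the reads cannot overlap at all.
expected_overlap <- function(fwd_len, rev_len, amplicon_len) {
  fwd_len + rev_len - amplicon_len
}

# Hypothetical check against the 20 bp guideline used in this protocol.
overlap_ok <- function(fwd_len, rev_len, amplicon_len, min_overlap = 20) {
  expected_overlap(fwd_len, rev_len, amplicon_len) >= min_overlap
}

# Assumed amplicon lengths (with primers); replace with your own.
v4_len   <- 292
v34_len  <- 460

expected_overlap(250, 250, v4_len)   # untruncated 2x250 reads
expected_overlap(240, 160, v4_len)   # after truncLen = c(240, 160)
overlap_ok(240, 160, v34_len)        # same truncation on a longer amplicon
```

The last call shows why a truncation that works for V4 can leave a longer amplicon with no overlap at all.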

Protocol 3.3: Identifying Error-Prone Overlap Regions

Objective: Pinpoint high error rates in the overlap zone that prevent merging. Procedure:

- Retain the pairs that failed to merge by calling mergePairs() with returnRejects=TRUE; rejected pairs stay in the output with accept = FALSE. (justConcatenate=TRUE does not isolate failures; it joins every pair with a run of Ns instead of overlapping them.)

- Perform a local pairwise alignment of a random subset (e.g., 1000 pairs) of these failed reads using a tool like pairwiseAlignment() from the Biostrings package.

- Tabulate the frequency of mismatches and indels within the aligned region. Errors often concentrate toward the 3' end of the reverse read, where quality typically declines most steeply.
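With returnRejects=TRUE, each data.frame returned by mergePairs() also reports nmatch, nmismatch and nindel for every attempted pair, plus the accept column. The sketch below uses those columns to summarise rejected pairs before any Biostrings alignment is needed. summarise_rejects() is a hypothetical helper, and the mock data.frame stands in for one sample's mergePairs() output; treating nmatch + nmismatch + nindel as the overlap length is an approximation.

```r
# Hypothetical helper: summarise rejected read pairs from one sample's
# mergePairs(..., returnRejects = TRUE) output.
summarise_rejects <- function(merger) {
  rej <- merger[!merger$accept, , drop = FALSE]
  list(
    rejected_pairs = nrow(rej),
    rejected_reads = sum(rej$abundance),
    # Distribution of (mismatches + indels) in the attempted overlap
    diffs = table(rej$nmismatch + rej$nindel),
    # Approximate aligned overlap length of each rejected pair
    overlap_len = rej$nmatch + rej$nmismatch + rej$nindel
  )
}

# Mock output (illustration only): one accepted and three rejected pairs
mock <- data.frame(
  abundance = c(500L, 40L, 25L, 10L),
  nmatch    = c(150L, 140L, 8L, 120L),
  nmismatch = c(0L, 3L, 0L, 1L),
  nindel    = c(0L, 0L, 0L, 1L),
  accept    = c(TRUE, FALSE, FALSE, FALSE)
)
s <- summarise_rejects(mock)
s
```

On real data this would follow mergers <- mergePairs(dadaFs, filtFs, dadaRs, filtRs, returnRejects = TRUE) and summarise_rejects(mergers[[1]]). Many rejections with a short overlap point toward truncation (Protocol 4.1); many with one or two differences point toward maxMismatch (Protocol 4.2).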

4. Fixing Strategies & Detailed Protocols

Protocol 4.1: Optimizing Truncation Parameters (Primary Fix)

Objective: Improve overlap quality by strategically truncating low-quality ends. Procedure:

- Based on plotQualityProfile(), identify the cycle number where the median quality score for reverse reads drops sharply below 20-25.

- Set truncLen in the filterAndTrim() function to c(fwd_len, rev_len), where rev_len is typically shorter because reverse reads lose quality earlier. Truncation removes the low-quality 3' tail that would otherwise fall in the overlap region; confirm that the truncated lengths still leave sufficient overlap (Protocol 3.2).

- Example: For 2x250 V4 MiSeq data, if forward quality drops at 240 and reverse quality drops at 160, use truncLen=c(240,160). Re-run merging and compare rates.
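The truncation rule above can be expressed as a small function that returns the last cycle before the median quality first falls below a threshold. choose_trunc_len() is a hypothetical helper and the quality profiles are synthetic; in practice the per-cycle medians would be read off plotQualityProfile() (the solid orange line) or exported from another QC tool.

```r
# Hypothetical helper: last cycle before median quality drops below threshold.
# If quality never drops below the threshold, keep the full read length.
choose_trunc_len <- function(median_q, threshold = 25) {
  below <- which(median_q < threshold)
  if (length(below) == 0) return(length(median_q))
  below[1] - 1L
}

# Synthetic median-quality profiles for 250-cycle reads (illustration only)
fwd_q <- c(rep(36, 230), rep(30, 10), rep(22, 10))
rev_q <- c(rep(35, 150), rep(28, 10), rep(18, 90))

trunc_len <- c(choose_trunc_len(fwd_q), choose_trunc_len(rev_q))
trunc_len  # 240 160

# The result would then be passed to DADA2, e.g.:
# dada2::filterAndTrim(fnFs, filtFs, fnRs, filtRs, truncLen = trunc_len)
```

A fixed threshold is only a starting point; re-check the expected overlap with the chosen lengths before merging.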

Protocol 4.2: Relaxing Merge Parameters

Objective: Allow merging despite minor mismatches. Procedure:

- In the mergePairs() function, adjust the critical parameters:

- maxMismatch: Increase from the default of 0 to 1 or 2.

- minOverlap: The default is already 12 bp. Lowering it further is rarely advisable; if the true overlap is shorter than this, revisit truncLen or the sequencing read length instead.

- Apply with caution: Monitor the increase in merge rate against the potential for creating spurious merged sequences from non-overlapping fragments.
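To weigh a relaxed setting against the default, the comparison can be run on a single sample. compare_merge_settings() is a hypothetical helper; by default it calls dada2::mergePairs() on one sample's dada object and derep (or filtered-file) input, and the merge_fun argument exists only so the logic can be exercised without DADA2 installed.

```r
# Hypothetical helper: total merged reads for each minOverlap/maxMismatch
# combination in 'settings' (a data.frame), for ONE sample.
compare_merge_settings <- function(dadaF, derepF, dadaR, derepR, settings,
                                   merge_fun = dada2::mergePairs) {
  merged <- vapply(seq_len(nrow(settings)), function(i) {
    m <- merge_fun(dadaF, derepF, dadaR, derepR,
                   minOverlap  = settings$minOverlap[i],
                   maxMismatch = settings$maxMismatch[i])
    as.numeric(sum(m$abundance))  # merged reads under this setting
  }, numeric(1))
  cbind(settings, merged_reads = merged)
}

# Mock merge function (illustration only): one extra sequence of 5 reads
# merges once a mismatch is tolerated.
mock_merge <- function(dadaF, derepF, dadaR, derepR, minOverlap, maxMismatch) {
  data.frame(abundance = if (maxMismatch > 0) c(90, 5) else 90)
}
settings <- data.frame(minOverlap = c(12, 12), maxMismatch = c(0, 1))
res <- compare_merge_settings(NULL, NULL, NULL, NULL, settings,
                              merge_fun = mock_merge)
res
```

On real data a call might look like compare_merge_settings(dadaFs[[1]], filtFs[1], dadaRs[[1]], filtRs[1], data.frame(minOverlap = 12, maxMismatch = 0:2)). A large jump in merged reads at a relaxed setting deserves scrutiny, for instance by checking how many of the additional sequences are later removed as chimeras.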

Protocol 4.3: Remedial Wet-Lab Protocol: Library Re-preparation

Objective: Address the root cause of variable amplicon length or low input DNA quality. Procedure:

- Re-assess DNA Integrity: Run template DNA on a high-sensitivity gel or Bioanalyzer. Use only high-molecular-weight, non-degraded DNA.

- Optimize PCR Cycles: Reduce the number of PCR amplification cycles (e.g., from 35 to 25-28) to minimize chimera formation and heteroduplexes.

- Size Selection: Implement a stricter double-sided size selection (e.g., using SPRIselect beads) post-amplification to tightly control amplicon length variability.

5. Visual Workflows

Title: DADA2 Merge Rate Diagnosis and Optimization Loop

6. The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Merge Rate Issues

| Item | Function / Rationale |
|---|---|
| High-Fidelity DNA Polymerase | Minimizes PCR errors in the amplicon, reducing mismatches in the critical overlap region. |
| SPRIselect Beads | Enables precise double-sided size selection to control amplicon length variability, ensuring consistent overlap. |
| Qubit dsDNA HS Assay Kit | Accurately quantifies low-concentration amplicon libraries to prevent over-cycling or under-loading. |
| DADA2 R Package (v1.28+) | Provides the core mergePairs() function with tunable parameters for in silico optimization. |
| Bioconductor's Biostrings | Enables detailed sequence analysis, such as pairwise alignment of failed merges for diagnostics. |
| PhiX Control v3 | Spiked into sequencing runs for error rate monitoring; high error rates indicate a platform issue. |

Optimizing Trim/Filter Parameters for Degraded or Short-Amplicon Data

This Application Note is a critical component of a broader thesis providing a comprehensive DADA2 pipeline tutorial for 16S rRNA gene amplicon data research. While standard pipelines perform well with high-quality, full-length amplicons, many real-world samples—such as those from formalin-fixed paraffin-embedded (FFPE) tissues, ancient DNA, or environmental samples with sheared DNA—produce degraded or short amplicon data. This document details the parameter optimization required to adapt the DADA2 workflow for these challenging datasets, ensuring accurate inference of amplicon sequence variants (ASVs).

Core Challenge & Parameter Rationale

In the standard DADA2 workflow, filtering and trimming parameters are set assuming near-full-length 16S amplicons (e.g., V4 region ~250bp). Degraded/short amplicon data violates these assumptions, requiring adjustments to:

- Truncation Lengths (truncLen): Must be reduced to match the actual length distribution of reads after primer removal, because filterAndTrim() discards reads shorter than truncLen.

- Minimum Length (minLen): Must be lowered to retain informative short fragments.

- Maximum Expected Errors (maxEE): Often needs tightening due to lower complexity and potential damage-associated errors in short reads.

- Trim Left (trimLeft): Critical for removing residual primer sequences or low-quality bases from the start, which disproportionately affect short reads.

Table 1: Recommended Parameter Ranges for Different Data Types

| Data Type / Amplicon Length | truncLen (Fwd, Rev) | minLen | maxEE (Fwd, Rev) | trimLeft (Fwd, Rev) | Justification & Notes |
|---|---|---|---|---|---|
| Standard V4 (~250bp) | (240, 200) | 50 | (2, 2) | (0, 0) | Typical settings for high-quality MiSeq data. |
| Degraded FFPE (Target ~150bp) | (140, 120) | 40 | (1.5, 2) | (10-15, 10-15) | Aggressive start trim for fragmentation/damage. Stricter maxEE. |
| Ultra-Short (e.g., <100bp) | (90, 80) | 30 | (1, 1.5) | (As needed) | Very low minLen. Paired-end merging often omitted; treat as single-end. |
| Ancient DNA (Damaged) | (Length from plot) | 30 | (1, 1.5) | (≥ primer length) | Prioritize retaining data over length. High trimLeft to remove deaminated ends. |

Table 2: Impact of Parameter Changes on Output Metrics

| Parameter Adjustment | Typical Effect on Read Retention | Typical Effect on ASV Count | Risk if Too Lenient | Risk if Too Stringent |
|---|---|---|---|---|
| Reduce truncLen | Increases | May increase or decrease | Low-quality tails retained; reads shorter than truncLen discarded. | Insufficient overlap for merging; loss of biological signal. |
| Reduce minLen | Increases | Increases | Inclusion of non-informative very short reads. | Loss of unique short variants. |
| Reduce maxEE | Decreases | Decreases | High-error reads propagate, inflating diversity. | Excessive data loss, reduced sensitivity. |
| Increase trimLeft | Decreases | May decrease | Primer contamination in ASVs. | Unnecessary loss of good sequence. |
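The retention trade-offs above can be measured directly with the loop described in Protocol 2. This sketch builds a parameter grid with expand.grid() and computes pooled read retention for each combination. retention_for_grid() and run_filter are hypothetical names, and the grid values are loosely based on the FFPE row of Table 1 for illustration only; run_filter must return a matrix with "reads.in" and "reads.out" columns, which is what filterAndTrim() returns.

```r
# Parameter grid: every combination of truncLen (Fwd, Rev) and maxEE.
param_grid <- expand.grid(
  truncF = c(140, 120),
  truncR = c(120, 100),
  maxEE  = c(1, 1.5, 2)
)

# Hypothetical helper: pooled read retention (%) for each grid row.
retention_for_grid <- function(grid, run_filter) {
  grid$retention <- vapply(seq_len(nrow(grid)), function(i) {
    counts <- run_filter(truncLen = c(grid$truncF[i], grid$truncR[i]),
                         maxEE = grid$maxEE[i])
    100 * sum(counts[, "reads.out"]) / sum(counts[, "reads.in"])
  }, numeric(1))
  grid
}

# A real run_filter might wrap DADA2 (fnFs, filtFs, fnRs, filtRs being the
# usual file-path vectors from the DADA2 workflow):
# run_filter <- function(truncLen, maxEE) {
#   dada2::filterAndTrim(fnFs, filtFs, fnRs, filtRs, truncLen = truncLen,
#                        maxEE = maxEE, minLen = 30, multithread = TRUE)
# }

# Mock filter (illustration only): retention proportional to maxEE.
mock_filter <- function(truncLen, maxEE) {
  matrix(c(1000, 1000 * maxEE / 2), nrow = 1,
         dimnames = list("S1", c("reads.in", "reads.out")))
}
res <- retention_for_grid(param_grid, mock_filter)
head(res)
```

Per-sample retention is counts[, "reads.out"] / counts[, "reads.in"] inside the loop. Because each real call overwrites the filtered files, run the downstream DADA2 steps only for the combinations you decide to keep.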

Detailed Experimental Protocol

Protocol 1: Initial Quality Assessment & Parameter Discovery

Objective: To visualize read quality and length distribution for parameter determination. Materials: See "The Scientist's Toolkit" below. Procedure:

- Process Raw Reads: Run FastQC on a subset of forward and reverse reads to generate initial quality profiles.

- Generate DADA2 Quality Plots: In R, use plotQualityProfile(fnFs) and plotQualityProfile(fnRs) on sample files.

- Analyze Length Distribution: In the DADA2 quality plot, the red line shows the proportion of reads extending to at least each position, which reveals length variation. Determine the median read length and the point where quality collapses for both forward and reverse reads.

- Set Initial truncLen: Choose truncation lengths just before the median quality (solid orange line) drops sharply in each direction. Because filterAndTrim() discards reads shorter than truncLen, do not set it beyond the length most reads actually reach. For severely degraded data, the plots may show very short, low-quality reads.

- Determine trimLeft: If primers were not fully removed beforehand, set trimLeft to the primer length. If quality is low at the start of reads, increase this value (e.g., from 0 to 10).

- Set minLen and maxEE: Start with conservative values (e.g., minLen=30, maxEE=c(1.5, 2)). These will be refined in Protocol 2.
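Because filterAndTrim() discards reads shorter than truncLen, it helps to know what fraction of reads reaches each candidate length before choosing one. The sketch below computes that fraction from a vector of read lengths; length_retention() is a hypothetical helper and the lengths are invented. Read lengths for one file could be obtained with the ShortRead package, e.g. nchar(as.character(ShortRead::sread(ShortRead::readFastq(fnFs[1])))).

```r
# Hypothetical helper: fraction of reads at least as long as each candidate
# truncLen (shorter reads would be discarded by filterAndTrim()).
length_retention <- function(read_lengths, trunc_candidates) {
  setNames(
    vapply(trunc_candidates, function(t) mean(read_lengths >= t), numeric(1)),
    trunc_candidates
  )
}

# Invented read lengths from a degraded sample (illustration only)
lens <- c(80, 100, 120, 150, 150)
median(lens)                          # 120
length_retention(lens, c(90, 140))    # 0.8 for 90, 0.4 for 140
```

Candidate truncLen values that keep most reads can then be screened for quality in the optimization loop.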

Protocol 2: Iterative Filtering Optimization Loop

Objective: To empirically determine the optimal balance between read retention and error reduction. Materials: R environment with DADA2, high-performance computing cluster recommended. Procedure:

- Define Parameter Grid: Create a table of parameter combinations to test. Vary one or two key parameters (e.g., truncLen, maxEE) while holding others constant.

- Run Filtering Iteratively: Use a for loop in R to run the filterAndTrim() function with each parameter set. Record the input and output read counts for each sample.

- Calculate Retention Rate: For each sample and parameter set, compute (output reads / input reads) * 100.

- Run Core DADA2 Pipeline: On the filtered output from each promising parameter set, run the core DADA2 steps (