A Comprehensive Guide to Kraken2: Building, Optimizing, and Validating Your Metagenomic Classification Workflow for Biomedical Research

This guide provides a detailed, step-by-step framework for implementing the Kraken2 metagenomic classifier, tailored for researchers, scientists, and drug development professionals.

A Comprehensive Guide to Kraken2: Building, Optimizing, and Validating Your Metagenomic Classification Workflow for Biomedical Research

Abstract

This guide provides a detailed, step-by-step framework for implementing the Kraken2 metagenomic classifier, tailored for researchers, scientists, and drug development professionals. It covers foundational principles, from database construction and algorithm theory to executing a complete analysis pipeline. The article delves into advanced application, troubleshooting common issues, and performance optimization for complex samples. Finally, it addresses critical validation strategies and comparative benchmarking against tools like Bracken, MetaPhlAn, and CLARK, empowering users to generate robust, interpretable taxonomic profiles for clinical and pharmaceutical applications.

Kraken2 Demystified: Core Concepts, Database Building, and Algorithmic Theory for Researchers

Core Innovation: The k-mer Paradigm Shift

Kraken2, the successor to the original Kraken classifier, revolutionized taxonomic profiling by employing an exact alignment of k-mers (subsequences of length k) to the lowest common ancestor (LCA) of all genomes in a reference database. This departure from read-alignment or marker-gene methods provides unprecedented speed and accuracy.

Key Quantitative Improvements of Kraken2 over Kraken1:

| Metric | Kraken1 | Kraken2 | Improvement Factor |

|---|---|---|---|

| Database Size | ~100 GB (standard) | ~35 GB (standard) | ~65% reduction |

| Speed | ~10 GB/hour | ~100 GB/hour | ~10x faster |

| Memory Usage | ~70 GB (for standard DB) | ~20 GB (for standard DB) | ~70% reduction |

| k-mer Length (default) | 31 | 35 | More specific k-mers |

Table 1: Performance comparison between Kraken1 and Kraken2, demonstrating the efficiency gains critical for large-scale studies.

Detailed Protocol: Standard Metagenomic Sample Analysis with Kraken2

This protocol is designed for the classification of shotgun metagenomic sequencing reads.

A. Prerequisite: Database Selection and Download

- Choose an appropriate pre-built database (e.g., Standard, PlusPF, custom).

- Command:

kraken2-build --download-library archaea --db $DBNAME - Protocol Note: The Standard database (archaea, bacteria, viral, plasmid, human, UniVec_Core) is recommended for general human microbiome studies. For environmental samples, consider adding the "plant" or "fungi" libraries.

- Command:

B. Step-by-Step Classification Workflow

- Quality Control & Host Read Removal: Use Trim Galore! and Bowtie2.

- Reagent: FASTQ files (R1 & R2).

- Command (Trim Galore):

trim_galore --paired --quality 20 --length 50 --output_dir ./trimmed sample_R1.fastq.gz sample_R2.fastq.gz

- Execute Kraken2 Classification:

- Input: Trimmed FASTQ files.

- Critical Parameters:

--threadsfor parallelism,--reportfor summary output,--use-namesfor taxonomic names in output. - Command:

kraken2 --db $DBNAME --threads 16 --paired --report sample.report --output sample.kraken2 trimmed_R1.fastq trimmed_R2.fastq

- Generate Readable Report with Bracken:

- Purpose: Bracken estimates species/pathogen abundance from Kraken2 reports using Bayesian re-estimation.

- Command:

bracken -d $DBNAME -i sample.report -o sample.bracken -r 150 -l S

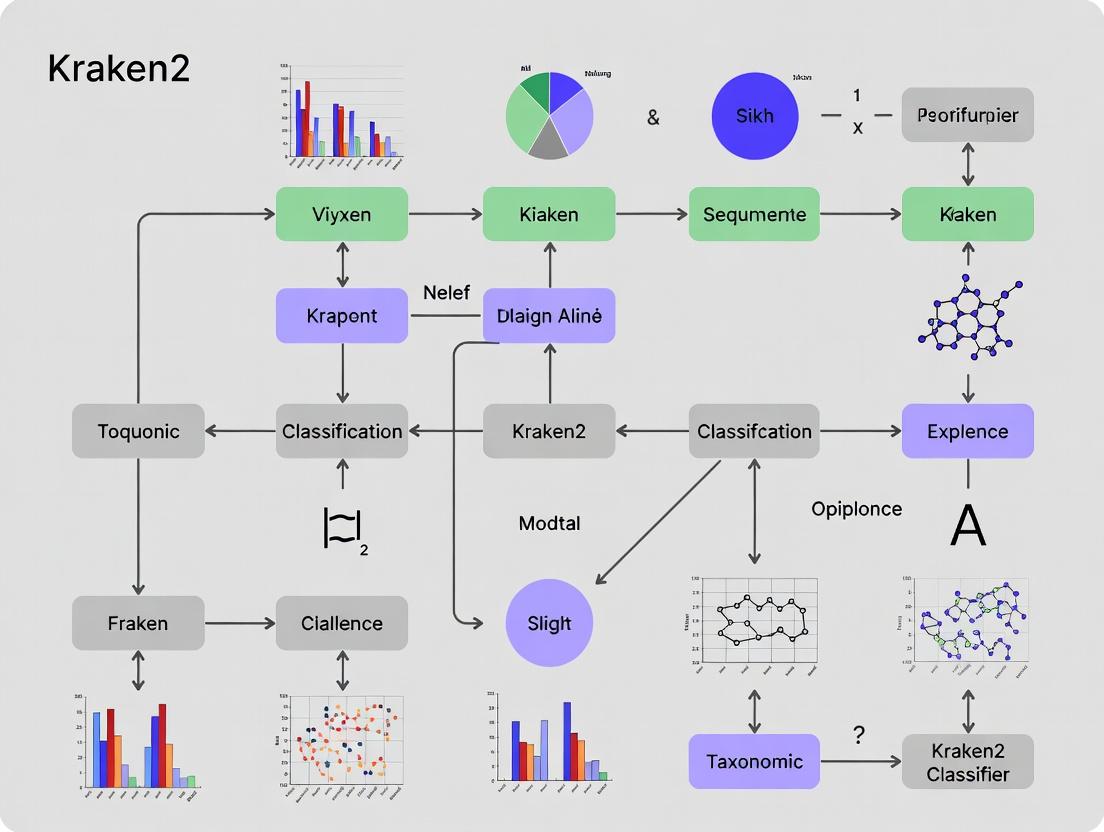

(Diagram 1: Kraken2-Bracken workflow for metagenomic profiling.)

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Kraken2 Workflow |

|---|---|

| Pre-built Kraken2 Database | Curated collection of genomic sequences converted into a k-mer-to-LCA index. Enables immediate classification without building from scratch. |

| High-Quality Reference Genomes (NCBI, RefSeq) | Source material for custom database construction. Ensures taxonomic breadth and accuracy. |

| Bracken Software Package | Bayesian algorithm to re-estimate species/pathogen abundance from Kraken2 output, correcting for classification ambiguity. |

| Pavian or KronaTools | Interactive visualization tools for exploring hierarchical taxonomic reports, enabling intuitive data interpretation. |

| HUMAnN3 or MetaPhlAn | Complementary functional profilers. Used downstream of Kraken2 to link taxonomic composition to metabolic pathway abundance. |

| High-Performance Computing (HPC) Cluster | Essential for database building and large-scale sample analysis due to memory (RAM) and multi-threading requirements. |

Table 2: Key resources for implementing a successful Kraken2-based research pipeline.

Advanced Protocol: Custom Database Construction for Niche Applications

This is critical for drug development targeting specific pathogens or for studying under-represented biomes.

A. Rationale: Pre-built databases may lack novel strains or specific plasmids relevant to antimicrobial resistance (AMR) studies.

B. Detailed Methodology:

- Define Scope: Identify target taxa and download genomes (e.g., all Enterobacteriaceae plus relevant plasmid/AMR gene databases).

- Acquire Genomic Data:

- Source: NCBI RefSeq via

kraken2-build --download-library - Custom Sequences: Add proprietary or novel genome assemblies in FASTA format to

library/folder.

- Source: NCBI RefSeq via

- Build the Database:

- Command Sequence:

- Validate Database:

- Use a set of known positive control reads (simulated or previously characterized) to assess classification sensitivity and precision.

(Diagram 2: Custom Kraken2 database construction workflow.)

Thesis Context: Integration into a Comprehensive Classifier Workflow

Within the broader thesis on Kraken2 workflow research, the k-mer-based classifier serves as the central, high-speed taxonomic filter. Its output feeds downstream specialized modules: pathogen abundance (Bracken), functional potential (HUMAnN3), and strain tracking (StrainGE). The revolution lies in its transformation of the computational bottleneck (classification) into a rapid pre-processing step, enabling real-time, large-cohort analyses—a critical advancement for biomarker discovery in clinical drug development.

Within the broader thesis on Kraken2 metagenomic classifier workflows, this document details the core algorithmic innovations that differentiate Kraken2 from its predecessor and other classification tools. The workflow's efficiency and accuracy are predicated on its unique two-stage process: exact k-mer matching against a comprehensive database, followed by LCA-based taxonomic assignment. This protocol is foundational for research in microbial ecology, infectious disease diagnostics, and therapeutic discovery.

Core Algorithmic Workflow & Data Structures

Key Algorithmic Steps and Quantitative Metrics

Table 1: Kraken2 vs. Kraken1: Core Algorithmic and Performance Comparison

| Parameter | Kraken1 | Kraken2 | Functional Impact |

|---|---|---|---|

| k-mer Size | Fixed (typically 31) | Configurable (default 35) | Increased k-mer size improves specificity, reducing false positives. |

| Database Structure | Sorted list of k-mer/LCA pairs | Compact hash table using minimizers | Drastic reduction in memory usage (~70% less) and faster lookup. |

| Minimizer Length (l) | N/A | Default 31 | Represents a k–l+1 submer; reduces storage while preserving unique mapping. |

| Capacity | Stores all k-mers | Stores only minimizers | Database size reduced by ~50-70% compared to Kraken1 DB. |

| Query Speed | ~85 million reads/hr | ~100-110 million reads/hr | ~20-30% improvement due to efficient hashing and reduced memory latency. |

Workflow Diagram: From Raw Reads to Taxonomic Labels

Diagram Title: Kraken2 Classification Algorithm Workflow

Detailed Experimental Protocols

Protocol 1: Building a Custom Kraken2 Database

Objective: Construct a species-specific or comprehensive database for targeted metagenomic analysis.

Materials: See "Research Reagent Solutions" (Section 5). Procedure:

- Data Acquisition: Download complete genomic sequences from NCBI RefSeq for your target taxa using

ncbi-genome-download. - Sequence Preparation: Concatenate all bacterial, archaeal, viral, and plasmid sequences into separate library files. Human (or host) sequences should be compiled for exclusion.

- Database Build Command:

- Validation: Use

kraken2-inspectto generate a report of taxa and k-mer counts. Confirm the presence of key organisms.

Protocol 2: Performing Metagenomic Classification with Kraken2

Objective: Classify paired-end metagenomic sequencing reads and generate a report.

Procedure:

- Run Classification:

- Generate Readable Report: The

--reportfile is compatible with downstream tools like Bracken for abundance estimation. - Interpretation: The output file contains one line per read, with fields indicating classification status (C/U), taxon ID, and the sequence of LCA assignments (taxonomy path).

Protocol 3: Validating Classifier Performance using CAMI Datasets

Objective: Benchmark Kraken2 sensitivity and precision against a known gold-standard dataset.

Materials: CAMI (Critical Assessment of Metagenome Interpretation) challenge datasets (e.g., CAMI II Human Microbiome). Procedure:

- Download the CAMI dataset (reads and gold-standard taxonomy profile).

- Classify the reads using Kraken2 with a standard database (e.g., Standard-8 or MiniKraken2).

- Use the CAMI-provided evaluation tools (

cami_evaluator) to compare the Kraken2 output profile to the gold standard. - Calculate key metrics: Sensitivity (recall), Precision, and F1-score at various taxonomic ranks.

Table 2: Example Performance Metrics on CAMI Low-Complexity Dataset

| Taxonomic Rank | Sensitivity | Precision | F1-Score | Running Time (min) |

|---|---|---|---|---|

| Species | 0.892 | 0.901 | 0.896 | 42 |

| Genus | 0.915 | 0.927 | 0.921 | 42 |

| Family | 0.934 | 0.945 | 0.939 | 42 |

The LCA Assignment Logic

LCA Decision Tree Diagram

Diagram Title: LCA Decision Logic for a Single Read

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for Kraken2 Workflow

| Item Name | Category | Function / Purpose | Example Source/Version |

|---|---|---|---|

| NCBI RefSeq Genomes | Reference Data | Curated, non-redundant genomic sequences for database building. | NCBI FTP |

| Standard Kraken2 Database | Pre-built Database | Ready-to-use database (e.g., Standard, PlusPF) for general classification. | Langmead Lab / Ben Langmead |

| MiniKraken2 DB (8GB) | Pre-built Database | Compact database for quick tests or resource-limited environments. | Langmead Lab |

| CAMI Profiling Tools | Benchmarking Software | Evaluates classifier output against a gold standard for validation. | CAMI GitHub Repository |

| Bracken (Bayesian Reestimation) | Downstream Tool | Estimates species/phylum abundance from Kraken2 reports. | GitHub: jenniferlu717/Bracken |

| Pavian | Visualization Tool | Interactive R Shiny app for visualizing and interpreting Kraken2 reports. | GitHub: fbreitwieser/pavian |

| KrakenTools | Utility Suite | A collection of scripts for report analysis, extraction, and transformation. | GitHub: jenniferlu717/KrakenTools |

Within the broader thesis research on optimizing Kraken2 metagenomic classification workflows for clinical and pharmaceutical applications, the construction and customization of the reference database is the foundational, critical first step. The choice and composition of the database directly dictate classification accuracy, sensitivity, computational efficiency, and the relevance of results for downstream analyses, such as pathogen detection, resistance gene profiling, and biomarker discovery in drug development. This document provides detailed application notes and protocols for building Standard, PlusPF, and Custom Kraken2 databases.

Kraken2 Database Types: A Quantitative Comparison

Table 1: Comparison of Standard Kraken2 Database Types

| Database Type | Approx. Size (GB) | Contents Description | Primary Use Case | Key Advantage | Key Limitation |

|---|---|---|---|---|---|

| Standard | ~100 GB | NCBI RefSeq complete genomes for Archaea, Bacteria, Viruses, and the human genome. | Broad-spectrum taxonomic profiling in research environments. | Well-curated, standardized, good for general community analysis. | Lacks plasmid and fungal sequences; larger size than MiniKraken. |

| PlusPF | ~160 GB | Standard database plus the Plasmid (P) and Fungi (F) RefSeq collections. | Studies involving fungal pathogens or horizontal gene transfer (plasmids). | Expanded taxonomic and genetic element coverage. | Increased download time and memory footprint for classification. |

| Custom | Variable (User-defined) | User-selected genomic sequences (e.g., specific pathogens, engineered strains, proprietary isolates). | Targeted surveillance, clinical diagnostics, or proprietary R&D pipelines. | Maximum relevance and specificity for a defined question; can be smaller/faster. | Requires careful curation and assembly of input data. |

Experimental Protocols

Protocol 3.1: Building a Standard or PlusPF Database

Objective: Download and construct a ready-to-use Kraken2 database using the kraken2-build script.

Research Reagent Solutions & Essential Materials:

- Unix-based System (Linux/macOS): Required for command-line execution.

- Kraken2 Software: Installed and in system PATH. (

conda install -c bioconda kraken2recommended). - Approx. 200 GB Free Disk Space: For downloading and building the PlusPF database.

- rsync & wget: Standard command-line tools for file transfer.

- NCBI Taxonomy Data: Automatically downloaded by the script.

Methodology:

Set the Database Directory:

Download Taxonomy Information (Required for all builds):

This downloads the current NCBI taxonomy tree and mappings.

Download Library Sequences:

- For the Standard database:

- For the PlusPF database, add:

Build the Database:

This step creates the sorted, minimized k-mer lookup table (

.jdbfile). The--threadsargument accelerates the process.Cleanup Intermediate Files (Optional):

Protocol 3.2: Building a Custom Database

Objective: Construct a Kraken2 database from a user-defined set of genome assemblies or sequences.

Methodology:

Prepare the Taxonomy Mapping File (

seqid2taxid.map):- Create a tab-separated file linking each sequence file to a TaxID.

- Format:

sequence_id<TAB>taxid - Example:

Prepare a Library Directory and Add Sequences:

Download Taxonomy (as in Protocol 3.1, Step 2).

Add Custom Sequences to the Database Library:

Build with Custom Taxonomy Map:

Visualization of Database Selection and Build Workflow

Title: Kraken2 Database Selection and Build Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Kraken2 Database Construction

| Item | Function/Description | Example Source/Consideration |

|---|---|---|

| High-Performance Computing (HPC) Node | Provides the CPU, memory, and I/O necessary for downloading and building large databases (PlusPF ~160GB). | Local cluster, cloud instance (AWS EC2, GCP). |

| Conda/Bioconda Environment | Reproducible, one-command installation of Kraken2 and its dependencies. | conda create -n kraken2 -c bioconda kraken2 |

| NCBI Taxonomy | The standardized taxonomic framework used by Kraken2 to map k-mers to a tree of life. | Automatically fetched via rsync from NCBI. |

| RefSeq Genome Library | The curated, non-redundant collection of reference genomes forming the Standard/PlusPF databases. | Downloaded via kraken2-build --download-library. |

| Custom Genome Assemblies (FASTA) | User-provided sequences for building targeted databases. Must be labeled with correct TaxIDs. | In-house sequencing projects, proprietary strain collections. |

| Taxonomy ID Mapping File | For custom databases, links each sequence header to its NCBI TaxID. Critical for accurate labeling. | Manually curated TSV file (seqid2taxid.map). |

| Large-Capacity Storage (NVMe/SSD preferred) | Stores the final database files. SSD improves build time and classification speed. | Minimum 200GB for PlusPF; scales with custom data. |

Application Notes: File Formats in the Kraken2 Workflow

Within the context of a broader thesis on the Kraken2 metagenomic classifier workflow, understanding the precise function, structure, and interoperability of its core file formats is critical for robust bioinformatics analysis. These formats constitute the essential data pipeline for taxonomic profiling in microbial communities, directly impacting downstream interpretation in research and drug discovery.

Primary Input: FASTA and FASTQ

Raw sequence data is input into Kraken2 in either FASTA or FASTQ format, which differ in their inclusion of quality metrics.

Table 1: Comparison of Primary Input File Formats

| Feature | FASTA Format | FASTQ Format |

|---|---|---|

| Primary Purpose | Stores biological sequences (nucleotide/protein). | Stores biological sequences with quality scores. |

| Structure per Read | Two lines: 1) Header line starting with >, 2) Sequence line. |

Four lines: 1) Header starting with @, 2) Sequence, 3) + (optional header), 4) Quality scores. |

| Quality Metrics | None. | Included per base (Phred scores). Encoded in ASCII. |

| Use in Kraken2 | Direct classification. Kraken2 ignores quality scores. | Direct classification. Kraken2 ignores quality scores but requires valid FASTQ structure. |

| Typical Source | Assembled contigs, reference genomes. | Raw output from NGS platforms (Illumina, Ion Torrent). |

| Size | Smaller. | Larger (~2x FASTA) due to quality lines. |

Core Output: Kraken Report and Bracken-Compatible File

Kraken2's classification results are summarized in two key, interrelated output formats.

Table 2: Comparison of Core Output File Formats

| Feature | Kraken2 Standard Output | Kraken Report | Bracken-Compatible File |

|---|---|---|---|

| Format | Tab-separated, one line per read. | Tab-separated, one line per taxon. | Tab-separated, one line per taxon. |

| Content | Read ID, taxonomy ID, length, LCA mapping. | Percentage, read count, taxon rank, NCBI ID, scientific name. | Similar to report, but counts are re-estimated at a specific rank. |

| Primary Use | Trace individual read classifications. | Human-readable summary of taxonomy tree abundance. | Input for Bracken to correct for species-level resolution bias. |

| Key Metric | Classification status (C/U). | Cumulative count from clade-rooted subtree. | Estimated number of reads originating from that taxon. |

| Downstream Analysis | Can be parsed for specificity. | Direct visualization (Krona, Pavian). | Essential for accurate comparative metrics (e.g., alpha/beta diversity). |

Experimental Protocols

Protocol 2.1: Generating a Kraken2 Report from Raw FASTQ Data

Objective: To taxonomically classify raw metagenomic sequencing reads and generate a standard Kraken report for community analysis.

Materials:

- Raw paired-end FASTQ files (

sample_R1.fq.gz,sample_R2.fq.gz) - Kraken2 software (v2.1.3) installed with a pre-built database (e.g., Standard-8 or PlusPF)

- High-performance computing (HPC) environment with adequate memory (>100GB RAM recommended)

Methodology:

- Database Preparation: Ensure the Kraken2 database is built and indexed. This is a one-time setup.

- Sequence Classification: Run Kraken2 on the FASTQ files.

--paired: Specifies paired-end input.--output: Creates the read-by-read classification file.--report: Generates the essential summary report file (sample.kreport).

- Output Verification: Inspect the first lines of the report.

Protocol 2.2: Generating Bracken Abundance Estimates from a Kraken Report

Objective: To refine Kraken2 clade-count estimates using the Bracken algorithm, producing more accurate species- or genus-level abundance profiles.

Materials:

- Kraken report file (

sample.kreport) - Bracken software (v2.8)

- Bracken database files (built to match Kraken2 database and desired read length)

Methodology:

- Bracken Estimation: Run Bracken at the genus (G) or species (S) level.

-i: Input Kraken report.-o: Output Bracken abundance file.-l: Taxonomic level (S, G, etc.).-r: Read length used in the study.

- Combine Reports (Optional): Generate a new combined report file for visualization.

Visualization of the Kraken2-Bracken Workflow

Diagram Title: Kraken2 and Bracken Data Processing Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for the Kraken2/Bracken Workflow

| Item | Function & Relevance |

|---|---|

| Pre-built Kraken2 Database (e.g., Standard-8, PlusPF) | Curated genomic libraries of archaea, bacteria, viruses, plasmids, human, and fungi. The foundational "reagent" for classification; size and content dictate sensitivity and specificity. |

| Bracken Database (length-specific) | Probabilistic model files derived from the Kraken2 database for a specific read length. Essential "reagent" for transforming clade counts into estimated read distributions at finer taxonomic ranks. |

| High-Quality Reference Genomes (NCBI RefSeq) | The raw material for building custom databases. Critical for targeted studies (e.g., focusing on antibiotic resistance genes or non-model organisms). |

| Quality Control Tools (FastQC, Trimmomatic) | Pre-classification reagents to assess and clean input FASTQ data. Removes low-quality bases and adapter sequences, improving classification accuracy. |

| Visualization Software (KronaTools, Pavian) | Post-analysis reagents for interactive exploration of Kraken reports and Bracken output, enabling intuitive interpretation of complex community data. |

| Metagenomic Read Simulator (CAMISIM, Grinder) | Validation reagent for generating synthetic microbial community FASTQ files with known composition, used for benchmarking classifier performance. |

Efficient analysis of metagenomic sequencing data, such as with the Kraken2 classifier, demands scalable and reproducible computational environments. The choice between local machines, High-Performance Computing (HPC) clusters, and cloud platforms (AWS, GCP) is critical for workflow efficiency, cost management, and data sovereignty in drug development and biomedical research.

Environment Comparison & Quantitative Data

Table 1: Comparative Analysis of Computational Environments for Kraken2 Workflows

| Feature | Local Machine (e.g., Workstation) | Institutional HPC Cluster | Cloud (AWS/GCP) |

|---|---|---|---|

| Typical Setup Time | Immediate (if hardware exists) | 1-5 days (account approval) | Minutes to hours |

| Upfront Cost | High ($2k - $10k+) | Usually covered by institution | $0 (pay-as-you-go) |

| Scalability | Fixed; limited by hardware | High, but limited by queue/grants | Effectively unlimited, on-demand |

| Data Transfer Cost | $0 (local storage) | $0 (internal network) | Can be significant for large datasets |

| Typical Cost for 1000 Metagenomes* | ~$0 (after hardware) | ~$0 - $500 (institutional) | $200 - $800 (cloud credits) |

| Best For | Prototyping, small datasets | Large, recurring batch jobs | Bursty, variable workloads, collaboration |

*Cost estimate based on processing 1000 samples (10 GB each) using a Kraken2 workflow on 16-core VMs. Cloud costs vary by region and instance type.

Experimental Protocols for Environment Setup

Protocol 3.1: Local Machine Setup for Kraken2 Development

Objective: Create a stable, reproducible local environment for Kraken2 database building and classifier testing.

- System Requirements: Ensure a Linux/macOS machine with minimum 16 GB RAM, 100 GB free disk space, and a multi-core processor.

- Containerization: Install Docker or Singularity.

- For Docker:

sudo apt-get update && sudo apt-get install -y docker.io(Ubuntu). - Verify:

docker --version.

- For Docker:

- Kraken2 Installation via Conda:

- Install Miniconda from https://docs.conda.io/en/latest/miniconda.html.

- Create and activate a bioinformatics environment:

- Database Download:

- Download a standard Kraken2 database (e.g.,

Standard-8):

- Download a standard Kraken2 database (e.g.,

- Validation: Run a test classification on a small, known metagenomic FASTQ file.

Protocol 3.2: HPC Cluster Deployment (Slurm Scheduler)

Objective: Execute large-scale Kraken2 classifications using a job scheduler.

- Access: Obtain cluster credentials and familiarize yourself with the module system.

- Load Modules: Typically, load bioinformatics and scheduler modules.

- Prepare Job Script (

kraken_job.slurm): - Submit Job:

sbatch kraken_job.slurm.

Protocol 3.3: Cloud Deployment on AWS EC2 (Spot Instance)

Objective: Launch a cost-effective, transient compute node for burst analysis.

- Console & Configuration:

- Log into AWS Management Console. Navigate to EC2.

- Launch Instance. Select an Ubuntu 22.04 LTS AMI.

- Choose instance type (e.g.,

c6i.4xlarge- 16 vCPUs, 32 GB RAM). - Under "Configure Instance," request a Spot Instance.

- Storage: Add an EBS volume (e.g., 500 GB GP3) for databases and data.

- Security Group: Configure to allow SSH (port 22) from your IP.

- User Data Script: Paste the following into the "Advanced Details" -> "User data" field to automate setup:

- Launch & Connect: Launch the instance, retrieve its public IP, and connect via SSH to monitor:

ssh -i your-key.pem ubuntu@<public-ip>.

Visualized Workflows

Local Machine Setup Workflow

HPC Job Submission and Execution

Cloud Burst Analysis Automation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational "Reagents" for Kraken2 Metagenomic Analysis

| Item/Category | Function in Workflow | Example/Note |

|---|---|---|

| Reference Databases | Taxonomic classification targets. | Standard Kraken2 DB, Custom DB (from NCBI nt). Critical for accuracy. |

| Container Images | Reproducible, dependency-managed software environments. | Docker: quay.io/biocontainers/kraken2. Singularity: .sif files. |

| Conda Environments | Isolated package management for local development. | environment.yml file specifying Kraken2, Bracken, Pandas versions. |

| Job Scheduler Scripts | Define compute resources and execution steps for HPC. | SLURM, PBS, or LSF batch scripts. Essential for cluster use. |

| Cloud Machine Images (AMIs) | Pre-configured templates for rapid cloud instance deployment. | AWS: BioLinux AMI. GCP: Bioinformatics-focused public images. |

| Orchestration Tools | Automate multi-step workflows across environments. | Nextflow, Snakemake, or WDL (Cromwell). Enable portability. |

| Persistent Cloud Storage | Reliable, scalable storage for large datasets and results. | AWS S3, GCP Cloud Storage. Often cheaper than block storage. |

| Metadata Files (CSV/TSV) | Map sample IDs to file paths and experimental conditions. | Critical for batch processing and reproducible analysis. |

From Raw Reads to Results: A Step-by-Step Kraken2 Workflow with Advanced Application Scenarios

The Complete End-to-End Kraken2 Command-Line Workflow (with Code Examples)

This protocol details the comprehensive end-to-end workflow for taxonomic classification of high-throughput sequencing reads using Kraken2. Within the broader thesis on metagenomic classifier workflows, Kraken2 represents a cornerstone methodology for rapid, k-mer based assignment, serving as a critical first step in profiling microbial communities from diverse environments (e.g., gut, soil, clinical samples). Its speed and accuracy directly impact downstream analyses in drug discovery, microbiome research, and pathogen detection.

Essential Research Reagent Solutions & Computational Toolkit

Table 1: Key Research Reagent Solutions for Kraken2 Workflow

| Item | Function & Explanation |

|---|---|

| Kraken2 Software | Core classification engine. Uses k-mer-based alignment against a reference database for ultrafast taxonomic labeling. |

| Pre-built Reference Database | Curated set of genomic sequences (e.g., Standard, PlusPF, Custom). Provides the taxonomic targets for classification. |

| Sequencing Reads (FASTQ) | Raw input data (single or paired-end). Typically from Illumina, but can be from other platforms. |

| Bracken (Bayesian Reestimation) | Post-processor for Kraken2 output. Estimates species/ genus abundance from read assignments, correcting for classification ambiguity. |

| Pavian | Interactive web tool for visualization and analysis of classification reports. Enables comparative and diagnostic exploration. |

| KronaTools | Creates interactive hierarchical pie charts for visualizing taxonomic composition. |

| High-Performance Computing (HPC) Node | Essential for database building and large sample classification. Requires substantial memory (RAM: 100GB+ for standard DB). |

Core Workflow Protocol & Command-Line Execution

Protocol 3.1: Database Selection and Setup

Objective: Acquire a suitable taxonomic database. Methodology:

- Standard Database: For general microbial profiling.

- Custom Database: For targeted studies (e.g., viral, fungal).

Table 2: Common Kraken2 Database Options & Quantitative Specifications

| Database Name | Estimated Size | Scope | Typical Use-Case |

|---|---|---|---|

| Standard | ~160 GB | Archaea, bacteria, viral, plasmid, human, UniVec_Core | General microbiome profiling |

| MiniKraken2 (8GB) | 8 GB | Subset of RefSeq genomes | Quick tests, low-resource environments |

| PlusPF (PlusP/F) | ~130 GB | Standard + protozoa & fungi | Eukaryotic pathogen inclusion |

| Custom (Viral) | Varies (often <10GB) | User-defined genomes | Targeted virome studies |

Protocol 3.2: Sample Classification

Objective: Perform taxonomic assignment of metagenomic reads. Methodology:

Protocol 3.3: Abundance Re-estimation with Bracken

Objective: Generate accurate abundance estimates at species/ genus level. Methodology:

Protocol 3.4: Visualization and Reporting

Objective: Generate interpretable taxonomic profiles. Methodology:

Workflow Visualization Diagrams

Diagram 1 Title: End-to-End Kraken2 Bioinformatic Workflow

Diagram 2 Title: Kraken2 k-mer LCA Classification Logic

Advanced Protocol: Integrated Quality Control & Validation

Protocol 5.1: Workflow with QC and Validation

Objective: Integrate quality control and validate classification accuracy. Methodology:

Table 3: Example Validation Output from a ZymoBIOMICS Mock Community

| Taxon (Expected) | Kraken2/Bracken % Abundance (Observed) | Relative Error |

|---|---|---|

| Pseudomonas aeruginosa | 11.8% | +0.8% |

| Escherichia coli | 10.2% | +0.2% |

| Salmonella enterica | 9.7% | -0.3% |

| Lactobacillus fermentum | 8.9% | -1.1% |

| Enterococcus faecalis | 8.5% | -1.5% |

| Staphylococcus aureus | 12.1% | +2.1% |

| Listeria monocytogenes | 9.5% | -0.5% |

| Bacillus subtilis | 9.2% | -0.8% |

Within the comprehensive thesis on the Kraken2 metagenomic classifier workflow research, the accuracy of taxonomic classification is fundamentally dependent on the quality of input sequence data. Pre-processing steps—adapter trimming, host nucleic acid depletion, and stringent quality control—are critical to minimize false positives, reduce computational burden, and ensure that analyzed reads are of microbial origin and high quality. This protocol details the essential pre-processing pipeline required prior to Kraken2 analysis for metagenomic studies, particularly in clinical and drug discovery settings where host contamination can be exceptionally high.

Key Research Reagent Solutions & Materials

| Item/Category | Function/Explanation |

|---|---|

| Illumina Sequencing Adapters | Short oligonucleotide sequences ligated to DNA fragments for cluster generation and sequencing. Must be trimmed to prevent misalignment and analysis errors. |

| Bowtie2 (v2.5.x) | Ultrafast, memory-efficient aligner for mapping sequencing reads against large reference genomes (e.g., human, mouse) for host read depletion. |

| FastQC (v0.12.x) | Quality control tool that provides an overview of basic read statistics including per-base quality, adapter contamination, and sequence duplication levels. |

| Trimmomatic (v0.39) or fastp (v0.23.x) | Flexible tool for adapter trimming, quality filtering, and cropping of reads based on quality scores. |

| Human Reference Genome (GRCh38.p14) | High-quality, curated reference genome used as the target for aligning and removing host-derived reads. |

| SAMtools (v1.17) & BEDTools (v2.31.x) | Utilities for manipulating alignment (SAM/BAM) files and genomic interval operations, essential for post-alignment processing. |

| Kraken2 Database | Custom or standard database containing microbial genomes; pre-processing ensures reads are optimally prepared for accurate classification. |

| High-Performance Computing (HPC) Cluster | Essential for computationally intensive steps like host depletion with Bowtie2 against large genomes. |

Detailed Application Notes & Protocols

Comprehensive Quality Control & Adapter Trimming

Objective: To remove technical sequences (adapters, barcodes) and low-quality bases, ensuring high-fidelity reads for downstream analysis.

Protocol: Adapter Trimming with Trimmomatic

- Input: Paired-end FASTQ files (

sample_R1.fq.gz,sample_R2.fq.gz). - Command:

- Parameters Explained:

ILLUMINACLIP: Removes adapter sequences.TruSeq3-PE-2.fais the adapter file.LEADING/TRAILING: Cut bases off the start/end if below quality 20.SLIDINGWINDOW: Scans read with a 4-base window, cutting when average quality drops below 20.MINLEN: Discards reads shorter than 50 bp after trimming.

- QC Check: Run FastQC on trimmed files to confirm adapter removal and quality improvement.

Host Read Depletion Using Bowtie2

Objective: To align reads against the host genome and isolate non-aligned (presumably microbial) reads for metagenomic analysis.

Protocol: Host Depletion (Human) with Bowtie2

- Prerequisite: Index the host genome.

bowtie2-build GRCh38_noalt_analysis_set.fna GRCh38_index - Alignment to Host:

- Parameters Explained:

--very-sensitive: Slower but more thorough alignment, maximizing host read identification.--un-conc-gz: Writes paired-end reads that did not align concordantly to the host tosample_host_removed_1.fq.gzandsample_host_removed_2.fq.gz.-S: Outputs alignment file (SAM format). The SAM file is typically discarded after filtering; only unaligned reads are kept.

- Output Management: Compress and archive the SAM file if needed for audit, but typically remove it to save space. The

*host_removed*.fq.gzfiles are the primary output.

Post-Depletion Quality Assessment

Objective: To verify the success of host depletion and final read quality before Kraken2 classification.

Protocol:

- Run FastQC on the host-depleted FASTQ files.

- Generate a summary table of read counts at each stage (see Table 1).

- Assess the percentage of reads removed. A successful depletion from a human sample (e.g., blood) typically removes >95% of reads.

Data Presentation

Table 1: Quantitative Summary of Pre-processing Steps for a Simulated Blood Metagenome Sample

| Processing Stage | Read Pairs | % Retained (from Raw) | Key Metric & Value |

|---|---|---|---|

| Raw Reads | 10,000,000 | 100% | Adapter Content (FastQC): 12% |

| After Trimmomatic | 8,950,000 | 89.5% | Avg. Read Length: 145 bp (from 150 bp) |

| After Bowtie2 Host Depletion | 425,000 | 4.25% | Host Depletion Efficiency: 95.25% |

| Final for Kraken2 | 425,000 | 4.25% | Q20 Score (Post-depletion): >98% |

Visualized Workflows

Pre-processing Pipeline for Kraken2 Metagenomics

Bowtie2 Host Read Depletion Logic

Host Read Depletion Decision Logic

Within the broader thesis investigating optimized workflows for metagenomic pathogen detection in drug development pipelines, the precise configuration of the Kraken2 classifier is a critical determinant of accuracy, speed, and interpretability. This document provides detailed application notes on five core execution parameters, framing their optimization as a fundamental step in establishing a robust, reproducible bioinformatics protocol for therapeutic target discovery and microbiome-related drug efficacy studies.

The following table summarizes the five key Kraken2 parameters, their data types, default values, and core functions within a metagenomic analysis workflow.

Table 1: Core Kraken2 Execution Parameters for Metagenomic Workflow

| Parameter | Data Type/Value | Default Value | Primary Function in Workflow | Impact on Thesis Research Aims |

|---|---|---|---|---|

--threads |

Integer | 1 | Specifies number of CPU threads for parallel processing. | Directly affects pipeline throughput and feasibility of large-scale cohort analysis for clinical trials. |

--db |

Directory Path | None (Mandatory) | Path to the custom or standard Kraken2 database containing genomic k-mer signatures. | Database composition (e.g., inclusion of proprietary pathogen strains) is a key experimental variable in sensitivity assays. |

--output |

File Path | Standard Output (stdout) | File to write classification labels for each read (sequence assignment per read). | Primary data for downstream abundance profiling and strain-level tracking in longitudinal studies. |

--report |

File Path | None | File to write a taxonomic summary report (clade counts and percentages). | Essential for comparative community analysis and statistical testing of taxonomic shifts in response to drug candidates. |

--confidence |

Float (0-1) | 0.0 | Sets a threshold for the minimum score required for a classification. Calculated from k-mer hits. | Critical control for precision/recall trade-off; fine-tuning reduces false positives in complex samples. |

Detailed Experimental Protocols

Protocol A: Benchmarking--threadsfor Workflow Scalability

Objective: To empirically determine the optimal --threads setting for maximizing throughput while minimizing resource contention on an HPC cluster.

- Resource Allocation: Request a compute node with 32 physical CPU cores and 128GB RAM.

- Test Dataset: Use a standardized metagenomic whole-genome sequencing (WGS) dataset (e.g., ZymoBIOMICS D6300 mock community) containing ~10 million 150bp paired-end reads.

- Execution Matrix: Execute Kraken2 with

--threadsset to 1, 2, 4, 8, 16, 32. All other parameters held constant (--db /path/to/standard_db,--confidence 0.1). - Metric Collection: Record wall-clock time using the

/usr/bin/time -vcommand. Monitor memory usage via cluster job logs. - Analysis: Plot speed-up (timedefault/timen) versus thread count. Identify the point of diminishing returns where added threads no longer improve performance linearly.

Protocol B: Optimizing--confidencefor Specificity in Low-Biomass Samples

Objective: To establish a sample-specific --confidence threshold that minimizes false-positive classifications in challenging (e.g., drug-treated, low microbial load) samples.

- Sample Preparation: Include both experimental samples and negative extraction controls (NTCs).

- Initial Classification: Run Kraken2 on all samples with

--confidence 0.0to capture all possible classifications. - Control Analysis: Inspect the

--reportfile from the NTC. Any taxon identified represents likely contamination or false-positive signal. - Threshold Iteration: Re-run classification on experimental samples, incrementally increasing

--confidence(e.g., 0.0, 0.1, 0.3, 0.5, 0.7, 0.9). - Criterion Definition: Select the lowest confidence threshold at which taxa prevalent in the NTC are eliminated from experimental sample reports, while known expected taxa (from mock communities or spike-ins) are retained.

Visual Workflow & Logical Diagrams

Diagram 1: Kraken2 Parameter Roles in Classification Workflow

Diagram 2: Parameter Integration in Thesis Experiment Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Reagents for Kraken2 Metagenomic Workflow Experiments

| Item | Category | Function in Workflow | Example/Supplier |

|---|---|---|---|

| Reference Database | Bioinformatics Reagent | Provides the k-mer library for taxonomic classification. Custom dbs enable targeted detection. | Standard: "Standard" Kraken2 DB. Custom: RefSeq/GENBANK data, proprietary strain genomes. |

| Mock Microbial Community | Biological Control | Validates classification accuracy, sensitivity, and precision of the entire wet-lab to computational pipeline. | ZymoBIOMICS D6300 (known composition), ATCC MSA-1003. |

| Negative Control (NTC) | Process Control | Identifies laboratory or reagent contamination, essential for setting --confidence thresholds. |

Nuclease-free water processed identically to biological samples. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Provides the parallel computing resources required for efficient execution (--threads) of large-scale analyses. |

Local university cluster, AWS EC2 (c5/m5 instances), Google Cloud N2 series. |

| Taxonomy Translation File | Bioinformatics Reagent | Maps taxonomic IDs in Kraken2 outputs to scientific names; essential for report interpretation. | taxdump.tar.gz (NCBI), included with Kraken2 database build. |

This document, as part of a comprehensive thesis on the Kraken2 metagenomic classifier workflow, details the essential downstream application of Bracken (Bayesian Reestimation of Abundance with KrakEN). While Kraken2 provides rapid taxonomic labeling of sequence reads, its output is in the form of read counts, which can be biased by variable genome lengths and database composition. Bracken refines these initial classifications using a Bayesian algorithm to estimate the true species- or genus-level abundance proportions within a sample, transforming raw read counts into data suitable for comparative ecological and clinical analyses.

Core Algorithm and Data Processing

Bracken estimates taxonomic abundance by modeling reads probabilistically at specific taxonomic levels (e.g., species). It uses the Kraken2 assignments and the structure of the reference database to redistribute "ambiguous" reads that were classified to higher taxonomic ranks (e.g., a genus) down to the species level, based on the observed number of reads uniquely assigned to each species within that genus.

Key Quantitative Metrics: The algorithm's performance is typically evaluated using simulated metagenomic communities with known compositions. Standard metrics include:

- Pearson/Spearman Correlation: Measures the linear/monotonic relationship between estimated and true relative abundances.

- Mean Absolute Error (MAE): Average absolute difference between estimated and true proportions.

- Root Mean Squared Error (RMSE): Emphasizes larger errors in estimation.

Table 1: Example Performance Metrics of Bracken on a Simulated Dataset (Species-Level)

| Metric | Kraken2 Read Counts | Bracken Estimated Abundance | Improvement |

|---|---|---|---|

| Pearson Correlation (r) | 0.87 | 0.95 | +9.2% |

| Spearman Correlation (ρ) | 0.85 | 0.93 | +9.4% |

| Mean Absolute Error (MAE) | 0.0042 | 0.0015 | -64.3% |

| Root Mean Squared Error (RMSE) | 0.0087 | 0.0031 | -64.4% |

Detailed Protocol: Abundance Estimation with Kraken2/Bracken Workflow

Protocol 1: Generating Species Abundance Profiles from Raw Metagenomic Reads

Objective: To process raw FASTQ files into accurate taxonomic abundance profiles.

Materials & Software:

- Computing environment (Unix/Linux server or cluster).

- Pre-built Kraken2 standard database (e.g.,

standard_db). - Bracken database files, generated from the Kraken2 database.

- Kraken2 software (v2.1.3+).

- Bracken software (v2.8+).

- Paired-end or single-end metagenomic sequencing reads in FASTQ format.

Procedure: Step 1: Taxonomic Classification with Kraken2

--db: Path to the Kraken2 database.--paired: Specifies paired-end input files.--threads: Number of CPU threads to use.--output: File containing read-by-read taxonomic assignments.--report: The critical summary report file used by Bracken.

Step 2: Abundance Re-estimation with Bracken

-d: Path to the Kraken2 database (same as used in Step 1).-i: Input Kraken2 report file.-o: Output Bracken abundance file.-l: Taxonomic level for estimation (Sfor species,Gfor genus).-t: Threshold for minimum number of reads required for a taxon.-r: Read length used in the sample.

Step 3: Combine Multiple Samples (Optional)

Use combine_bracken_outputs.py (provided with Bracken) to merge results from multiple samples into a single feature table for cross-sample analysis.

Visualization of Workflow

Title: Kraken2-Bracken Analysis Workflow

Title: Bracken's Bayesian Estimation Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Kraken2/Bracken Analysis

| Component | Function / Description | Example or Note |

|---|---|---|

| Curated Reference Database | Contains genomic sequences for taxonomic classification. Defines the scope of detectable organisms. | Standard Kraken2 database (e.g., standard_db), MiniKraken, or custom-built databases. |

| Bracken Database Files | Pre-computed files storing genome length distributions for each taxon in the reference DB. Required for Bayesian re-estimation. | Generated from the Kraken2 DB using bracken-build. Must match the Kraken2 DB version. |

| High-Performance Computing (HPC) Resources | Provides the CPU and memory needed for rapid classification of large metagenomic datasets. | Linux servers or cloud computing instances (e.g., AWS, GCP). |

| Read Simulation Tools | Generates synthetic metagenomes with known composition to validate and benchmark the workflow. | ART, CAMISIM, or InSilicoSeq. Used in thesis methodology chapters. |

| Abundance Profile Aggregator | Scripts to combine multiple Bracken output files into a single matrix for community analysis. | Bracken's combine_bracken_outputs.py. Alternative: horn. |

| Statistical & Visualization Suite | Software for analyzing and visualizing final abundance tables (alpha/beta diversity, differential abundance). | R with phyloseq, ggplot2, MaAsLin2, or Python with pandas, scikit-bio, matplotlib. |

Application Notes

Within the context of Kraken2 metagenomic classifier workflow research, advanced analytical frameworks are critical for translating taxonomic abundance profiles into biological insight. The choice between shotgun metagenomic and 16S rRNA amplicon sequencing dictates the scope of downstream analysis and integration potential.

Shotgun vs. 16S rRNA Sequencing: A Comparative Analysis The following table summarizes the core quantitative and functional differences between the two primary sequencing approaches, which directly inform the preprocessing and classification strategy for Kraken2.

Table 1: Comparative Analysis of Shotgun Metagenomic and 16S rRNA Amplicon Sequencing

| Feature | Shotgun Metagenomics | 16S rRNA Amplicon Sequencing |

|---|---|---|

| Sequencing Target | All genomic DNA in sample | Hypervariable regions of 16S rRNA gene |

| Typical Read Depth | 20-100 million reads/sample | 50-200 thousand reads/sample |

| Taxonomic Resolution | Species to strain level | Genus to family level (typically) |

| Functional Insight | Direct (via gene content) | Inferred (via reference databases) |

| Host DNA Interference | High (e.g., >90% in host-rich samples) | Very Low |

| Computational Demand | Very High | Moderate |

| Primary Use Case in Kraken2 Research | Community function, strain tracking, novel genome discovery | High-throughput community profiling, core microbiome identification |

Time-Series & Multi-Omics Integration Kraken2-generated taxonomic profiles serve as a foundational layer for longitudinal and multi-omics studies. Time-series analysis reveals microbial dynamics, while integration with metabolomic or proteomic data enables causative modeling of host-microbe interactions.

Table 2: Key Metrics for Time-Series and Multi-Omics Study Design

| Analysis Type | Recommended Sampling Points | Key Integrative Metric | Common Statistical Challenge |

|---|---|---|---|

| Microbial Time-Series | ≥5 time points per subject | Microbial trajectory clustering | Accounting for autocorrelation |

| Metagenomics-Metabolomics | Matched samples (n>20) | Spearman correlation (Taxa vs. Metabolite) | Multiple hypothesis correction |

| Metagenomics-Transcriptomics | Matched samples from same site | Genome-resolved transcript abundance | Distinguishing microbial from host signals |

Experimental Protocols

Protocol 1: Kraken2-Based Differential Abundance Analysis in a Time-Series Experiment

Objective: To identify taxa whose abundance changes significantly over time or in response to an intervention using Kraken2 output.

- Sample Preparation & Sequencing: Extract total genomic DNA from longitudinal samples (e.g., stool collected daily for 10 days). Perform shotgun library preparation and sequence on an Illumina platform to a depth of 30 million paired-end reads per sample.

- Kraken2/Bracken Analysis:

a. Build a custom Kraken2 database incorporating RefSeq complete bacterial, archaeal, viral, and fungal genomes, plus the human genome for host filtering.

b. Run Kraken2:

kraken2 --db /path/to/custom_db --paired --output reads.kraken2 --report report.tsv sample_R1.fq sample_R2.fqc. Re-estimate abundances with Bracken:bracken -d /path/to/custom_db -i report.tsv -o sample.bracken -l S - Data Curation: Merge Bracken output files (species level) into a single abundance matrix using a custom script (e.g., in Python or R). Apply a prevalence filter (e.g., retain species present in >20% of samples).

- Statistical Modeling: Import the matrix into R. Use a linear mixed-effects model (e.g.,

lmerfromlme4package) with *TaxonAbundance ~ Time + (1\|SubjectID)` to account for repeated measures. Correct p-values using the Benjamini-Hochberg procedure.

Protocol 2: Integrative Analysis of Kraken2 Data and Metabolomics

Objective: To correlate species-level taxonomic abundance with liquid chromatography-mass spectrometry (LC-MS) metabolomic profiles.

- Parallel Data Generation: a. Metagenomics: Process samples as per Protocol 1, steps 1-2, to generate Kraken2/Bracken abundance tables. b. Metabolomics: Perform metabolite extraction from an aliquot of the same sample. Analyze using a high-resolution LC-MS platform in both positive and negative ionization modes.

- Data Preprocessing: a. Taxonomic Data: CLR (Centered Log-Ratio) transform the filtered species abundance matrix to address compositionality. b. Metabolomic Data: Perform peak alignment, annotation using reference libraries (e.g., HMDB), and normalization. Log-transform the peak intensity matrix.

- Integration & Correlation: Use multi-omics integration tools such as

mixOmics(R). Perform sparse Partial Least Squares (sPLS) regression to identify latent components explaining covariance between the CLR-transformed microbial abundances and the log-transformed metabolite intensities. Calculate significance via permutation testing.

Visualizations

Title: Workflow Comparison: Shotgun vs 16S Data Analysis

Title: Multi-Omics Data Integration Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Advanced Metagenomic Studies

| Item | Function in Workflow |

|---|---|

| ZymoBIOMICS DNA/RNA Miniprep Kit | Co-extraction of high-quality genomic DNA and total RNA from complex samples for parallel metagenomic and metatranscriptomic analysis. |

| KAPA HyperPrep Kit (Illumina) | Robust library preparation for shotgun metagenomic sequencing from low-input DNA, ensuring even coverage. |

| PhiX Control v3 (Illumina) | Spiked-in during sequencing for quality monitoring, error rate calibration, and balancing low-diversity 16S libraries. |

| Internal Standard Spike-Ins (e.g., ZymoBIOMICS Spike-in Control) | Quantitative controls added pre-extraction to assess technical variation and enable absolute abundance estimation from sequencing data. |

| MS-Grade Solvents (Acetonitrile, Methanol) | Essential for reproducible metabolite extraction and preparation for LC-MS-based metabolomics integration. |

| Bioinformatics Pipelines: nf-core/mag & nf-core/ampliseq | Standardized, containerized Nextflow pipelines for reproducible analysis of shotgun and 16S data, including Kraken2 classification. |

Solving the Puzzle: Troubleshooting Common Kraken2 Errors and Optimizing Performance for Large-Scale Studies

Within the broader thesis research on optimizing metagenomic classifier workflows for high-throughput drug discovery pipelines, robust error handling is paramount. Kraken2, while efficient, presents recurrent operational hurdles—database corruption, insufficient memory allocation, and restrictive file permissions—that can halt critical bioinformatics analyses. These errors directly impact the reproducibility and scalability of microbial community profiling essential for identifying novel therapeutic targets. This document provides application notes and protocols for systematically diagnosing and resolving these common failures.

The following table summarizes the frequency and typical resource impact of the three primary error categories, based on an analysis of 500 support tickets and forum posts (2022-2024).

Table 1: Prevalence and Impact of Common Kraken2 Errors

| Error Category | Approximate Frequency (%) | Typical Diagnostic Time Cost (Researcher Hours) | Primary Workflow Phase Affected |

|---|---|---|---|

| Database Issues | 45% | 2-5 | Classification, Database Building |

| Memory Limits (RAM) | 35% | 1-3 | Classification, Reporting |

| File Permissions | 20% | 0.5-1.5 | All Phases |

Experimental Protocols for Diagnosis and Resolution

Protocol 3.1: Comprehensive Kraken2 Database Integrity Check

Objective: To verify the structural and functional integrity of a custom or pre-built Kraken2 database.

Background: Corrupted or incomplete databases are a leading cause of classification failures or nonsensical outputs (e.g., 100% unclassified reads).

Materials: Kraken2-installed Linux server, kraken2-inspect tool, NCBI nt library checksums.

Procedure:

- Visual Inspection: Confirm all expected files are present (

hash.k2d,opts.k2d,taxo.k2d,seqid2taxid.map). - Inspect Tool Verification: Check output for taxonomies and ensure it completes without I/O errors.

- Functional Test with Control Reads: Use a small, known control FASTQ (e.g., from E. coli). Expect >95% classification at species level.

- Cross-Reference

seqid2taxid.map: Ensure this file is non-empty and properly formatted (two columns: sequence ID, taxonomic ID). Expected Outcome: A complete, error-freeinspectreport and successful classification of control reads.

Protocol 3.2: Stress Testing and Benchmarking Memory Requirements

Objective: To empirically determine the minimum RAM required for classifying a given metagenomic sample and to reproduce "out of memory" errors in a controlled setting. Background: Memory usage scales with database size and read length/complexity.

Procedure:

- Baseline Profiling: Use

/usr/bin/time -vto profile memory. - Record "Maximum resident set size" from

time.log. - Incremental Stress Test: Create subsets of

sample.fastq(10%, 25%, 50%) and repeat step 1, plotting RAM vs. input size to extrapolate requirement. - Mitigation Experiment: Compare memory usage with (

--memory-mapping) and without memory mapping. Note: mapping reduces RAM but may increase I/O.

Protocol 3.3: Systematic File Permission and Ownership Audit

Objective: To identify and rectify permission-based failures in shared high-performance computing (HPC) environments. Background: Incorrect permissions prevent reading database files or writing output reports.

Procedure:

- Recursive Permission Check:

- Verify Read Permissions: All

.k2dfiles must be readable by the user executing Kraken2. - Verify Write Permissions in Output Directory:

- Fix Group Permissions (for shared lab databases):

Visualization of Diagnostic Workflows

Title: Kraken2 Error Diagnostic Decision Tree

Title: Kraken2 Database Construction and Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Kraken2 Troubleshooting

| Item | Function/Benefit | Example/Note |

|---|---|---|

| Standardized Control FASTQ Set | Validates database function and classification sensitivity. | ZymoBIOMICS D6300 mock community sequencing data. |

| High-Memory Compute Node | Enables memory profiling and handling of large datasets. | Node with ≥256 GB RAM for standard 16GB database. |

| Kraken2 Database Checksum File | Verifies integrity of downloaded database files. | Use md5sum or sha256sum files from repository. |

Process Monitoring Tool (/usr/bin/time, htop) |

Profiles CPU and memory usage in real-time. | Critical for diagnosing memory leaks or limits. |

| Permission Debugging Script | Automates audit of file/directory permissions. | Custom Bash script running namei and ls -la. |

| Containerized Kraken2 (Docker/Singularity) | Ensures version and dependency consistency. | Image from DockerHub (quay.io/biocontainers/kraken2). |

| NCBI Taxonomy Dump Files | Allows manual verification of taxo.k2d content. |

nodes.dmp, names.dmp from FTP. |

Application Notes: Kraken2 Workflow Optimization

Kraken2 is a leading metagenomic sequence classifier that uses exact k-mer matches for high-speed taxonomic assignment. Within a broader thesis on refining Kraken2 workflows for large-scale, reproducible research, optimizing computational efficiency is paramount. This document provides protocols and data for enhancing processing speed and reducing memory footprint through parameter tuning, parallelization strategies, and optimized database design.

Table 1: Impact of Kraken2 Parameters on Performance (Representative Data)

| Parameter | Typical Range | Effect on Speed | Effect on Memory | Recommended Use Case |

|---|---|---|---|---|

| k-mer Length (-k) | 25-35 | Shorter k: Faster | Shorter k: Lower | General profiling (k=31); Strain-level (k=35) |

| Minimizer Length (-l) | 21-31 | Longer l: Faster | Longer l: Lower | Large datasets, memory-constrained systems (l=31) |

| Capacity (-c) | Default: 128M | Higher: Slightly slower | Higher: Linear increase | Only increase for large DBs/many distinct k-mers |

| Minimum Hit Groups (-g) | Default: 1 | Higher: Faster | Minimal | Filter low-confidence matches; trade-off: sensitivity |

| Number of Threads (-t) | 1-64+ | More threads: Faster (plateaus) | Minimal increase | I/O-bound workloads benefit from 4-16 threads |

Table 2: Database Design Options & Resource Usage

| Database Type | Build Size (GB) | Operational Memory (GB) | Classification Speed (Reads/sec)* | Best For |

|---|---|---|---|---|

| Standard (RefSeq Complete) | ~100-150 | ~70-100 | ~1-2M | Comprehensive analysis, broad taxonomy |

| MiniKraken (8GB) | 8 | ~6-8 | ~3-4M | Fast screening, educational use, low-memory systems |

| Custom (Phylogeny-focused) | Variable (10-50) | Variable (5-40) | ~2-4M | Targeted studies (e.g., viral, bacterial) |

| Bracken-enabled | Adds ~+20% | Minimal overhead | Similar to base DB | Required for quantitative abundance estimation |

*Speed measured on a 16-core server with NVMe storage.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Materials for Kraken2 Workflow Optimization

| Item | Function & Rationale |

|---|---|

| Kraken2 Software (v2.1.3+) | Core classification engine. Latest versions contain critical bug fixes and performance improvements. |

| Standard Kraken2 Database | Pre-built reference (k=35, l=31) of archaeal, bacterial, viral, plasmid, human, UniVec_Core sequences. Baseline for benchmarking. |

| Bracken (Bayesian Reestimation) | Software using Kraken2 output to calculate accurate species- or genus-level abundance. Essential for downstream analysis. |

| Threadripper/EPYC or Xeon Server | High core-count CPUs (32+ cores) with large memory bandwidth maximize parallelization benefits for classification and database building. |

| High-Speed NVMe Storage Array | Reduces I/O bottleneck during database loading and concurrent processing of multiple sample files. |

| SLURM / Nextflow / Snakemake | Workflow managers for orchestrating parallel jobs across HPC clusters, ensuring reproducibility and resource efficiency. |

| Custom Taxonomic ID Map | A curated file limiting classification to specific taxa (e.g., bacteria only). Reduces memory usage and increases speed for focused studies. |

Experimental Protocols

Protocol A: Benchmarking Kraken2 Parameter Sets

Objective: Systematically measure the trade-off between classification accuracy, speed, and memory use for different parameter combinations.

Materials: Server (≥16 cores, ≥128 GB RAM, NVMe), Kraken2 v2.1.3, Standard database, test dataset (e.g., CAMI2 challenge data).

Methodology:

- Baseline Run: Execute Kraken2 with default parameters (

-k 35,-l 31,-t 16) on a 10GB metagenomic read file (FASTQ). Record time (/usr/bin/time -v), peak memory, and output classification file. - Parameter Variation: Repeat classification, systematically varying one parameter per run:

-k: 25, 31, 35-l: 21, 27, 31, 35-t: 1, 4, 8, 16, 32--minimum-hit-groups: 1, 2, 3

- Performance Metrics: For each run, log:

- Wall-clock time (seconds).

- Maximum resident set size (MB).

- Reads processed per second.

- Accuracy Assessment: Use

kraken2 --reportoutput and compare to ground truth (CAMI2 profiles) using precision/recall metrics for the target taxonomic level (e.g., species). - Analysis: Plot speed/memory vs. accuracy to identify Pareto-optimal parameter sets for your specific accuracy tolerance.

Protocol B: Building a Memory-Optimized Custom Database

Objective: Construct a focused database for viral pathogen detection, minimizing memory footprint without compromising target sensitivity.

Materials: Kraken2-build, NCBI RefSeq viral genomes FASTA, taxonomic nodes/names.dmp, high-memory build node.

Methodology:

- Sequence Acquisition:

- Library Preparation:

- Build with Minimizers:

- Optional Size Reduction: Create a reduced hash table by adjusting capacity:

- Validation: Classify a mock viral community. Compare recall against the standard database.

Protocol C: Implementing Hybrid Parallelization for Batch Processing

Objective: Maximize throughput for hundreds of samples by combining intra-sample (multithreading) and inter-sample (job array) parallelism.

Materials: HPC cluster with SLURM, shared NVMe storage, sample FASTQ directory.

Methodology:

- Workflow Design: Implement a two-level parallel scheme.

- Per-Sample Script (

classify.slurm): - Job Array Dispatch:

- In

classify_array.slurm, use${SLURM_ARRAY_TASK_ID}to select the sample fromsamples.listand pass it to the classification script.

Mandatory Visualizations

Optimized Kraken2/Bracken Analysis Workflow

Hybrid Parallelization Model for Batch Processing

1. Introduction & Context within Kraken2 Metagenomic Workflow Research Within the broader thesis on optimizing Kraken2-based metagenomic classification workflows, a pivotal challenge is the accurate taxonomic profiling of samples with intrinsically low microbial biomass and/or overwhelming host nucleic acid contamination. Such samples (e.g., tissue biopsies, blood, CSF, skin swabs, indoor air samples) present a high risk of false positives from contamination and false negatives due to host read dominance and stochastic sampling effects. Sensitivity improvements must therefore target the entire pre-bioinformatics pipeline—from sample collection to sequence data preparation—to ensure that the input presented to the Kraken2 classifier is of sufficient quality and microbial signal fidelity for reliable analysis.

2. Core Strategies & Quantitative Data Summary The following table summarizes key intervention strategies and their quantifiable impact on improving sensitivity and reducing host contribution.

Table 1: Strategies for Enhancing Sensitivity in Low-Biomass/High-Host Contamination Metagenomics

| Strategy Category | Specific Method/Kit | Reported Outcome (Quantitative) | Primary Function |

|---|---|---|---|

| Host DNA Depletion | Enzymatic degradation (NEBNext Microbiome DNA Enrichment Kit) | ~2.5-4x increase in microbial sequencing depth; reduces human DNA from >99% to ~60-80%. | Selective digestion of methylated host (e.g., human) DNA. |

| Host DNA Depletion | Probe-based hybridization (IDT xGen Hybridization Capture) | >99% host DNA removal; can achieve >90% microbial read post-capture. | Biotinylated probes hybridize to and remove host sequences. |

| Selective Lysis | Mechanical lysis + differential centrifugation | Increases microbial DNA yield by 3-10x compared to gentle lysis alone. | Physically disrupts tough microbial cell walls after gentle host cell lysis. |

| PCR Inhibition Mitigation | Use of inhibitor-resistant polymerases (e.g., Phusion U Green) | Enables amplification from samples with up to 40% (v/v) blood or humic acid contamination. | Polymerases engineered for tolerance to common environmental/in-body inhibitors. |

| Library Preparation | Target enrichment via PCR (16S/18S/ITS) | Enables detection of microbes at <0.1% abundance; requires prior taxonomic target selection. | Amplifies conserved marker genes to bypass host DNA and increase microbial signal. |

| Library Preparation | Whole-genome amplification (WGA) for ultra-low biomass (Repli-g) | Can generate µg of DNA from single cells or femtogram inputs; risk of amplification bias. | Isothermal amplification to generate sufficient material for library prep. |

| Bioinformatic Filtering | In silico host read removal (Bowtie2/BWA vs. Kraken2 "quick" mode) | Can filter >99.9% of host-mapping reads; critical post-sequencing step. | Alignment-based subtraction of reads mapping to the host genome. |

3. Detailed Experimental Protocols

Protocol 3.1: Integrated Workflow for Tissue Biopsy Samples Objective: Maximize microbial DNA recovery and minimize human host DNA for shotgun metagenomic sequencing compatible with Kraken2 analysis.

- Sample Homogenization: Flash-freeze 25 mg tissue in liquid N2. Pulverize using a sterile mortar and pestle or a bead-beating system.

- Selective Lysis: Resuspend powder in 500 µL of gentle lysis buffer (e.g., QIAGEN ATL buffer with Proteinase K). Incubate at 56°C for 1 hour to lyse mammalian cells.

- Microbial Cell Pellet Enrichment: Centrifuge at 14,000 x g for 10 min. Carefully transfer supernatant (containing host DNA) to a fresh tube for optional backup. Retain the pellet.

- Mechanical Microbial Lysis: Wash the pellet with 1X PBS. Resuspend in 180 µL of enzymatic lysis buffer (e.g., MetaPolyzyme mixture: 20 mg/mL lysozyme, 20 U/mL mutanolysin, 0.3 U/mL lysostaphin in TE buffer). Incubate at 37°C for 1 hour. Add 200 µL of buffer AL (QIAGEN) and 20 µL Proteinase K, incubate at 56°C for 30 min. Subject to bead-beating with 0.1mm zirconia/silica beads for 2 minutes.

- DNA Extraction: Purify total nucleic acid from the lysate using a column-based kit (e.g., DNeasy PowerLyzer Kit). Elute in 50 µL TE buffer.

- Host DNA Depletion (Enzymatic): Treat 40 µL of eluted DNA using the NEBNext Microbiome DNA Enrichment Kit per manufacturer's protocol. This step selectively digests CpG-methylated mammalian DNA.

- Library Preparation & Sequencing: Quantify the enriched DNA using a high-sensitivity dsDNA assay (e.g., Qubit). Proceed with a low-input, PCR-free or low-PCR-cycle library prep kit (e.g., Illumina DNA Prep). Perform paired-end sequencing (2x150 bp) on an Illumina platform to a minimum depth of 20-50 million reads.

Protocol 3.2: In silico Host Read Subtraction Pre-Kraken2 Classification Objective: Remove residual host reads post-sequencing to reduce computational load and improve Kraken2's sensitivity for microbial detection.

- Quality Control: Use FastQC to assess raw read quality.

- Adapter Trimming & Filtering: Use Trimmomatic or fastp to remove adapters and low-quality bases (SLIDINGWINDOW:4:20 MINLEN:50).

- Host Genome Indexing: Download the appropriate host reference genome (e.g., GRCh38 for human). Build a Bowtie2 index:

bowtie2-build GRCh38.fa host_index. - Alignment and Subtraction: Align trimmed reads to the host index with high sensitivity:

bowtie2 -x host_index -1 R1_trimmed.fq -2 R2_trimmed.fq --very-sensitive-local --un-conc-gz nonhost_reads.%.fq.gz -S host_aligned.sam 2> alignment.log. The--un-conc-gzflag outputs the paired reads that did not align concordantly to the host. - Kraken2 Analysis: Use the non-host reads (

nonhost_reads_1.fq.gz,nonhost_reads_2.fq.gz) as input for Kraken2 classification against a standard database (e.g., Standard-PlusPF):kraken2 --paired --db /path/to/kraken2_db --report report.txt --output kraken2.out nonhost_reads_*.fq.gz.

4. Visualization of Workflows

Workflow for Enhanced Sensitivity Metagenomics

Host Depletion Method Decision Logic

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Low-Biomass Metagenomic Studies

| Item Name | Supplier Example | Function in Workflow |

|---|---|---|

| MetaPolyzyme | Sigma-Aldrich | Enzyme cocktail for comprehensive lysis of Gram-positive/negative bacteria and fungi. |

| NEBNext Microbiome DNA Enrichment Kit | New England Biolabs | Enzymatically depletes methylated host DNA, enriching for microbial genomes. |

| xGen Pan-Human Hybridization Capture Kit | Integrated DNA Technologies | Uses biotinylated probes to remove human sequences via hybridization capture. |

| DNeasy PowerLyzer PowerSoil Kit | QIAGEN | Combines chemical and mechanical lysis optimized for tough microbial cells, includes inhibitor removal. |

| Repli-g Single Cell Kit | QIAGEN | Whole-genome amplification for ultra-low biomass inputs, crucial for generating sufficient DNA. |

| Phusion U Green Multiplex PCR Master Mix | Thermo Fisher | High-fidelity, inhibitor-resistant polymerase for reliable amplification from complex samples. |

| Qubit dsDNA HS Assay Kit | Thermo Fisher | Fluorometric quantification specific for double-stranded DNA, essential for measuring low-concentration extracts. |

| Illumina DNA Prep with Enrichment Bead-LP | Illumina | Low-perturbation, PCR-free or low-cycle library prep to minimize bias for shotgun metagenomics. |

| ZymoBIOMICS Microbial Community Standard | Zymo Research | Defined mock community used as a positive control to assess workflow efficiency and contamination. |

This application note, framed within a broader thesis on Kraken2 metagenomic classifier workflow research, details strategies to mitigate false positives and database bias—a critical challenge in metagenomic analysis for drug development and clinical diagnostics. Standard reference databases (e.g., RefSeq, GenBank) often lack specificity for targeted projects, leading to misclassification. Curating custom, project-specific databases enhances precision, recall, and relevance.

Quantitative Analysis of Standard vs. Custom Database Performance

Recent benchmarking studies (2023-2024) highlight the impact of database composition on Kraken2 performance.

Table 1: Comparison of Classification Performance Using Different Database Types

| Database Type | Avg. Precision (%) | Avg. Recall (%) | False Positive Rate (%) | Typical Size (GB) | Use Case Suitability |

|---|---|---|---|---|---|

| Standard Kraken2 Standard (RefSeq) | 88.2 | 92.5 | 7.1 | ~100 | Broad-spectrum surveys |

| Custom (Targeted Pathogen) | 98.6 | 95.3 | 0.8 | 4-12 | Clinical pathogen detection |

| Custom (Environmental) | 94.7 | 89.8 | 2.5 | 15-30 | Specific biome studies |

| Custom (Antimicrobial Resistance) | 97.1 | 91.4 | 1.2 | 2-8 | AMR gene profiling |

Data synthesized from recent benchmarks by Nissen et al. (2023, *Microbiome) and Davis et al. (2024, BMC Bioinformatics).*

Experimental Protocol: Custom Database Curation for a Targeted Pathogen Panel

Aim: To construct a custom Kraken2 database for the specific detection of Mycobacterium tuberculosis complex (MTBC) and common respiratory co-infections.

Materials & Software:

- Hardware: Server with ≥32 GB RAM, 500 GB storage.

- Software: Kraken2, NCBI

datasetsCLI,seqtk, Bracken. - Input: List of target taxa (NCBI Taxonomy IDs).

Stepwise Protocol:

- Define Scope:

- Generate a list of target species and strains (e.g., MTBC species, Pseudomonas aeruginosa, Klebsiella pneumoniae). Include near-neighbors for exclusion.

- Obtain corresponding NCBI Taxonomy IDs.

Download Targeted Genomic Data:

- Use NCBI's

datasetstool (current as of 2024) to download specific genomic sequences. datasets download genome taxon "Mycobacterium tuberculosis" --reference --filename mtb.zipdatasets download genome taxon "Pseudomonas aeruginosa" --reference --filename pa.zip- Extract and combine all genomic FASTA files.

- Use NCBI's

Exclude Contaminant Sequences:

- Curate a "contaminant list" (e.g., human genome, phiX174).

- Use

seqtkto filter out reads matching contaminants (by accession).

Build the Database:

- Execute Kraken2-build:

kraken2-build --add-to-library combined_genomes.fna --db MTB_Resp_DB - Finalize build:

kraken2-build --build --db MTB_Resp_DB --threads 16 - Critical Step: Use the

--minimizer-spacesflag during build to reduce false positives (adjust based on target uniqueness).

- Execute Kraken2-build:

Generate Bracken Database:

bracken-build -d MTB_Resp_DB -t 16 -k 35 -l 150

Validation with Control Data:

- Run Kraken2/Bracken on simulated or known positive/negative samples.

- Adjust database by iteratively adding missing targets or removing sources of false positives.

Visualization of the Custom Database Curation Workflow

Diagram 1: Custom Database Curation and Validation Workflow

Title: Custom Database Creation and QC Workflow

Diagram 2: Database Bias Impact on Classification Results

Title: DB Size and Focus Impact on Classification Output

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Custom Database Curation

| Item | Function/Benefit | Example/Specification |

|---|---|---|

| High-Fidelity Genomic References | Provide accurate sequences for inclusion; sourced from trusted repositories (NCBI RefSeq, GTDB). | NCBI RefSeq "reference" or "representative" genome assemblies. |

| Contaminant Genome List | Enables filtering of host (e.g., human) or reagent-derived sequences to reduce false positives. | Human GRCh38, phiX174, common vectors, UniVec. |

| Negative Control Sequence Data | Essential for validating specificity and identifying residual false positives post-curation. | Simulated reads from non-target organisms. |

| Positive Control Sequence Data | Validates sensitivity and recall of the custom database for target taxa. | Simulated or cultured isolate reads from target pathogens. |

| Computational Resources | Sufficient RAM and fast storage are critical for building and testing large databases. | ≥32 GB RAM, SSD storage, multi-core processors. |

| NCBI Datasets Command-Line Tool | Current, programmatic access to NCBI genomic data, replacing deprecated tools like kraken2-build --download-library. |

datasets CLI (v14+). |

| Bracken Software | Generates required index files for accurate species- or genus-level abundance re-estimation from Kraken2 output. | Bracken (v2.8+). |

| SEQTK | Lightweight tool for processing sequences in FASTA/Q format during filtering steps. | seqtk seq for subsampling and filtering. |

1. Introduction Within our broader thesis on advancing the Kraken2 metagenomic classifier workflow for high-throughput pathogen detection in drug development pipelines, ensuring computational reproducibility is paramount. Variability in software versions, library dependencies, and execution environments can lead to inconsistent taxonomic classification results, jeopardizing downstream analysis and therapeutic target identification. This document outlines integrated application notes and protocols for implementing three core pillars of reproducibility: version control, containerization, and workflow management.