Batch Effect Correction Strategies for Zero-Inflated Microbiome Data: A Comprehensive Guide for Biomedical Research


Abstract

This article provides a complete framework for understanding and addressing batch effects in microbiome data, which is characterized by excessive zeros and high dimensionality. We first establish the fundamental challenges of zero-inflation and technical variation, then detail the latest methodological approaches for detection and correction. We offer practical troubleshooting advice for common pitfalls and systematically compare the performance of leading tools. Designed for researchers and drug development professionals, this guide bridges statistical theory with practical application to ensure robust, reproducible findings in microbial ecology and biomarker discovery.

Understanding the Dual Challenge: Zero-Inflation and Batch Effects in Microbiome Profiling

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My PERMANOVA results show a significant batch effect. What are the first steps to confirm and address this?

A: First, visualize your data using a PCoA plot colored by batch. If batches cluster separately, confirm with a PERMANOVA test (adonis2 in R) using batch as a predictor. For initial correction, consider using batch-effect correction tools like ComBat-seq (for raw counts) or RUVSeq after careful consideration of their impact on compositionality.

Q2: After rarefaction, I've lost many low-abundance taxa. Is this necessary, and what are the alternatives?

A: Rarefaction is a traditional way to handle uneven sequencing depth, but it discards reads and amplifies sparsity. Alternatives that better preserve data include:

- For Differential Abundance: Use compositionally aware tools like ANCOM-BC or ALDEx2, or a zero-inflated Gaussian (ZIG) mixture model as implemented in metagenomeSeq.

- For Diversity Analysis: Use diversity metrics that account for compositionality, such as the robust Aitchison distance implemented in DEICODE (available as a QIIME 2 plugin).

Q3: How do I choose between a compositional (e.g., ALDEx2) and a count-based model (e.g., DESeq2) for my zero-inflated data?

A: This decision is critical. See Table 1 below for a structured comparison based on your data characteristics and research question.

Q4: My negative controls show contaminant reads. How should I incorporate them into my model?

A: Contaminants are a key source of technical variation and should be handled explicitly. Methods include:

- Removal: Use the decontam package (prevalence or frequency method) to identify and remove contaminants.

- Inclusion as a Covariate: Include the abundance of contaminant taxa (aggregated from control samples) as a covariate in your statistical model (e.g., in a ZINQ model) to account for their influence.

Q5: What is the minimum sample size needed for reliable zero-inflation modeling?

A: There is no universal minimum, but power is a major concern. As a rule of thumb, for methods like ZINQ or GLMMs, you need at least 15-20 samples per group for stable parameter estimation. For complex models with multiple covariates, more samples are required. Always perform power analysis using tools like HMP or simulation-based approaches prior to data collection.

Table 1: Comparison of Common Statistical Models for Microbiome Data

| Model/Tool | Data Type Input | Handles Compositionality? | Handles Sparsity/Zeros? | Batch Effect Correction Integration | Best Use Case |
|---|---|---|---|---|---|
| DESeq2 (Wald test) | Raw Counts | No (Assumes independence) | Moderate (Uses regularization) | Can include as covariate in design | Differential abundance analysis (DAA) when counts are not extremely sparse; large sample sizes. |
| ANCOM-BC | Raw Counts | Yes (Bias-corrected log-linear model) | Yes (Uses pseudo-counts) | Can include as covariate | DAA with a focus on controlling FDR; robust to compositionality. |
| ALDEx2 | Raw Counts | Yes (CLR transform) | Yes (Monte Carlo Dirichlet instances) | Can include as covariate | DAA for small sample sizes; emphasizes effect size over significance. |
| MaAsLin 2 | Various | Optional (CLR transform) | Yes (Generalized linear models) | Directly in model formula | Flexible DAA with complex, multi-factorial metadata. |
| ZINQ | Raw Counts | No | Yes (Explicit zero-inflation model) | Must be addressed prior | Explicitly modeling excess zeros due to detection limits. |
| MMUPHin | Raw/Relative | Yes (Meta-analysis framework) | Yes | Primary function for batch correction | Correcting batch effects across multiple studies. |

Table 2: Impact of Sequencing Depth on Data Sparsity (Simulated Data)

| Mean Sequencing Depth | Total ASVs Detected | % of ASVs with >10 reads | % Zero Entries in Feature Table | Recommended Analysis Approach |
|---|---|---|---|---|
| 5,000 reads/sample | 500 | 45% | ~85% | Compositional methods (ALDEx2, ANCOM-BC) essential. |
| 20,000 reads/sample | 800 | 60% | ~75% | Mixed models or zero-inflated models become more stable. |
| 100,000 reads/sample | 1,200 | 75% | ~65% | Count-based models (DESeq2) more reliable; batch effects remain critical. |

Experimental Protocols

Protocol 1: Batch Effect Diagnosis and Correction with MMUPHin

Objective: To diagnose and adjust for batch effects in multi-study or multi-batch microbiome datasets.

- Data Preparation: Create a feature (ASV/Genus) abundance table and a metadata table with batch and study columns.

- Diagnosis: Before correcting, quantify the variance explained by batch versus biology (e.g., with PERMANOVA). MMUPHin::adjust_batch can also write a diagnostic plot.

- Correction: If batch variance is high, run the correction function (MMUPHin::adjust_batch). For relative abundance data, check the package documentation for the input requirements and control options of your installed version.

- Validation: Re-run PERMANOVA and PCoA visualization on the corrected data to confirm reduction in batch clustering.

Protocol 2: Differential Abundance Analysis with ANCOM-BC (Accounting for Batch)

Objective: Identify differentially abundant taxa between conditions while adjusting for batch effects and compositionality.

- Log Transformation: The tool internally models log-transformed abundances and applies a sample-specific bias correction, so no separate CLR step is required.

- Model Fitting: Use the ancombc2 function with a fixed-effects formula containing batch + primary_condition, so that the condition effect is estimated with batch adjusted for.

- Zero Handling: The method uses pseudo-counts and is robust to sparse data.

- Output: Obtain log-fold changes, standard errors, and p-values for the primary_condition, adjusted for compositionality and batch.

Visualizations

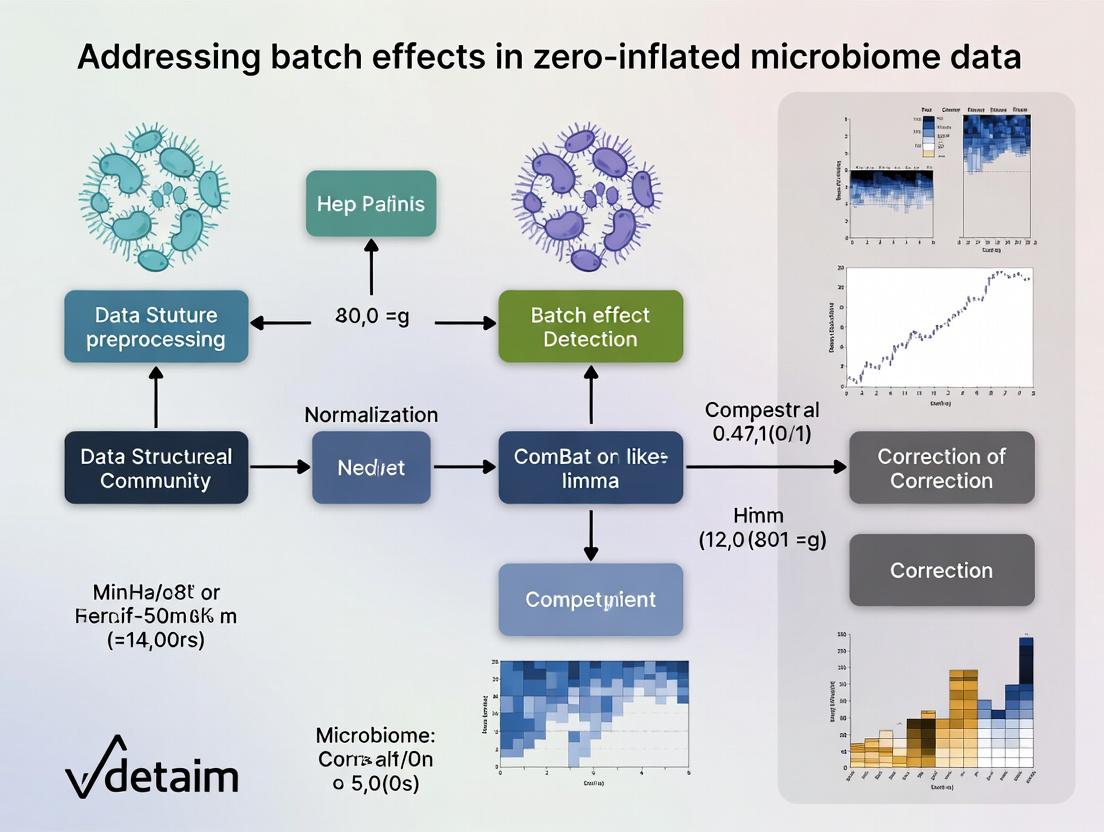

Diagram 1: Microbiome Data Analysis Workflow with Batch Control

Diagram 2: Sources of Zeros in Microbiome Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Controlled Microbiome Studies

| Item | Function in Addressing Data Challenges |
|---|---|
| Mock Community Standards (e.g., ZymoBIOMICS) | Contains known, fixed ratios of microbial cells. Used to diagnose batch effects in wet-lab steps, assess sequencing accuracy, and calibrate bioinformatic pipelines. |
| Negative Extraction Controls | Sterile water or buffer taken through the DNA extraction process. Critical for identifying contaminant taxa (kit/lab-borne) which contribute to sparsity and false positives. Must be sequenced alongside samples. |
| Positive Process Controls | A homogeneous sample (e.g., from a mock community) split across all extraction/sequencing batches. Allows direct quantification of technical vs. biological variation and batch effect magnitude. |
| Uniform Storage Reagents (e.g., DNA/RNA Shield) | Preserves microbial community structure at collection. Reduces pre-analytical variation, a major hidden source of batch effects and spurious zeros. |
| Indexed Sequencing Primers with Balanced Dual-Indexing | Unique combinatorial barcodes for each sample. Minimizes index hopping (crosstalk) between samples and batches, which can create false low-abundance signals and increase sparsity. |

Welcome to the Technical Support Center. This resource addresses batch effects in zero-inflated microbiome data. Here you will find troubleshooting guides and FAQs to help identify and mitigate batch effects stemming from technical variation across sequencing runs, and to distinguish them from true biological variation.

Frequently Asked Questions & Troubleshooting

Q1: After merging two 16S rRNA gene sequencing datasets from different runs, my beta diversity PCoA shows separation by run, not by treatment. Is this a technical batch effect?

A: Yes, this is a classic sign of a pronounced technical batch effect. In zero-inflated data, this can be exacerbated by run-specific differences in library preparation efficiency or sequencing depth, causing artifactual "missingness" patterns. Before analyzing, apply a batch-correction method like ComBat-seq (for count data) or use a model that includes batch as a covariate (e.g., in DESeq2). Crucially, include a positive control (mock microbial community) in each run to quantify technical variation.

Q2: My negative controls show high reads in one sequencing run but not in others. How do I handle this contamination?

A: This indicates a reagent or cross-contamination batch effect. First, do not simply subtract control reads from samples. For each run independently, apply a prevalence-based filtering tool like decontam (using the "prevalence" method with your negative controls as input). This identifies and removes contaminant ASVs/OTUs. Document the contaminants and the run they originated from. This step must be performed prior to merging datasets or attempting batch correction.

Q3: How can I determine if an observed shift in a specific taxon is biological or an artifact of different sequencing kits?

A: You need to disambiguate the sources of variation. Follow this experimental and analytical protocol:

- Experimental Design: If possible, re-sequence a subset of the same sample extracts using the different kits/on different runs.

- Statistical Analysis: Use a linear mixed model. For a given taxon, model its abundance as: Abundance ~ Treatment + (1 | Batch/Run) + (1 | SampleID), where SampleID is the random effect for repeated measures of the same biological sample and Batch/Run stands for whichever batch or run identifier applies. A significant Treatment effect after accounting for batch suggests a biological signal. For zero-inflated counts, use a package such as glmmTMB with a zero-inflated negative binomial family.

Q4: What is the minimum number of samples per batch for reliable batch effect correction in microbiome studies?

A: There is no universal minimum, but statistical power for correction drops sharply with small batch sizes. The following table summarizes recommendations based on current methodological literature:

| Correction Method | Recommended Minimum Samples Per Batch | Key Consideration for Zero-Inflation |
|---|---|---|
| ComBat-seq | 10-15 | Assumes negative binomial distribution. May not fully model excess zeros. Best applied after aggressive low-count filtering. |
| MMUPHin | 5-10 | Designed for meta-analysis. Includes a step to adjust for batch in microbial prevalence and abundance. More robust with multiple batches. |
| Linear Model Covariate Adjustment (e.g., in DESeq2) | 3-5 | Requires careful model specification. Can struggle with very small batches and high inter-sample variation. |
| Positive Control Spiking (e.g., Spike-in) | N/A (Uses controls) | Requires adding a known quantity of foreign cells/DNA to all samples. Corrects for technical variation directly, independent of sample batch size. |

Q5: I have no positive controls. Can I still diagnose batch effects from my sample data alone?

A: Yes, using unsupervised methods. Perform the following diagnostic workflow:

- Calculate beta diversity (e.g., Bray-Curtis or weighted UniFrac).

- Visualize via PCoA, coloring points by Batch/Run, Extraction Date, Sequencing Lane, and Treatment Group.

- Statistically test for associations between these metadata factors and community distances using PERMANOVA (adonis2 function in R's vegan package), conditioning on treatment if needed.

- Examine the distribution of read counts per sample (library size) by batch; large median differences suggest a batch effect that normalization must address.
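The first and last steps of this workflow can be sketched in a few lines. The following Python example is a minimal illustration only and not a replacement for vegan: the function names and the toy counts are hypothetical, and it computes just the Bray-Curtis dissimilarity between two samples and the median library size per batch.

```python
from statistics import median

def bray_curtis(x, y):
    """Bray-Curtis dissimilarity between two count vectors (same taxon order)."""
    total = sum(x) + sum(y)
    if total == 0:
        return 0.0  # two empty samples: treated as identical by convention here
    return sum(abs(a - b) for a, b in zip(x, y)) / total

def median_library_size_by_batch(counts, batch_of):
    """Median per-sample read total within each batch."""
    sizes = {}
    for sample, row in counts.items():
        sizes.setdefault(batch_of[sample], []).append(sum(row))
    return {batch: median(values) for batch, values in sizes.items()}

# Hypothetical toy data: four samples, three taxa, two sequencing runs.
counts = {
    "S1": [10, 0, 5],
    "S2": [0, 10, 5],
    "S3": [100, 40, 60],
    "S4": [120, 0, 80],
}
batch_of = {"S1": "run1", "S2": "run1", "S3": "run2", "S4": "run2"}

d12 = bray_curtis(counts["S1"], counts["S2"])
depth = median_library_size_by_batch(counts, batch_of)
print(d12, depth)
```

In this toy table the two runs differ more than tenfold in median depth, which is the kind of gap the last step of the workflow is meant to flag.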

Key Diagnostic Workflow for Batch Effect Identification

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Mitigation |
|---|---|
| Mock Microbial Community (e.g., ZymoBIOMICS) | A defined mix of known microbes. Sequenced in every run as a positive control to quantify technical variation in composition and abundance. |
| External Spike-in DNA (e.g., SNAP Assay) | Non-biological synthetic DNA spikes added in known quantities to each sample post-extraction. Normalizes for variation in library prep efficiency and sequencing depth. |
| Extraction Kit Negative Control | Sterile water or buffer taken through the DNA extraction process. Identifies reagent/lab contamination specific to each extraction batch. |
| Sequencing Library Negative Control | Sterile water taken through the library preparation process. Identifies contamination introduced during PCR and indexing. |
| Duplicate Sample Reagents | Aliquots from the same biological sample extract, processed in different library prep batches or sequenced across multiple runs. Serves as an internal technical replicate. |

Protocol: Implementing a Spike-in Based Normalization for Zero-Inflated Data

Objective: To correct for technical variation in sequencing depth and efficiency across batches using externally added spike-in molecules.

Materials: Synthetic spike-in oligonucleotides (e.g., from SNAP Assay kit), your sample DNA, library prep reagents.

Method:

- Spike-in Addition: For each sample, add an identical, precise mass (e.g., 0.5 ng) of spike-in DNA after genomic DNA extraction but before PCR amplification.

- Library Preparation & Sequencing: Proceed with standard library prep (e.g., 16S rRNA gene amplification with barcoded primers) and sequence all batches.

- Bioinformatic Processing:

a. Process raw sequences through your standard pipeline (e.g., DADA2 or QIIME 2).

b. In the resulting ASV table, identify the rows corresponding to the spike-in sequences (by matching to reference).

c. Calculate the total read count for spikes in each sample: Spike_i.

- Normalization Calculation:

a. Compute the median spike count across all samples: Median(Spike).

b. For each sample i, calculate a size factor: SF_i = Spike_i / Median(Spike).

c. Normalize the non-spike microbial counts in sample i by dividing by SF_i. This scales counts based on technical recovery, not microbial load.
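The normalization calculation can be sketched as follows. This is a minimal Python illustration under stated assumptions: the function names, the dictionary layout, and the toy counts are hypothetical, and spike-in rows are assumed to have been identified and summed already.

```python
from statistics import median

def spike_in_size_factors(spike_counts):
    """Return SF_i = Spike_i / Median(Spike) for every sample."""
    med = median(spike_counts.values())
    if med <= 0:
        raise ValueError("median spike count must be positive")
    return {sample: count / med for sample, count in spike_counts.items()}

def normalize_by_spike(counts, spike_counts):
    """Divide each sample's non-spike counts by its spike-derived size factor."""
    size_factors = spike_in_size_factors(spike_counts)
    normalized = {}
    for sample, taxa in counts.items():
        sf = size_factors[sample]
        if sf == 0:
            raise ValueError(f"no spike-in reads recovered in {sample}")
        normalized[sample] = {taxon: c / sf for taxon, c in taxa.items()}
    return normalized

# Hypothetical toy data: total spike reads and non-spike ASV counts per sample.
spikes = {"S1": 500, "S2": 1000, "S3": 2000}
counts = {
    "S1": {"ASV1": 50, "ASV2": 0},
    "S2": {"ASV1": 100, "ASV2": 10},
    "S3": {"ASV1": 200, "ASV2": 0},
}
norm = normalize_by_spike(counts, spikes)
print(norm)
```

In the toy data, ASV1 differs fourfold in raw counts between S1 and S3 but is identical after scaling, because the spike recovery differs by the same factor. Zeros stay zeros: the scaling corrects recovery, it does not impute undetected taxa.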

Disentangling Technical and Biological Sources of Variation

Technical Support Center: Troubleshooting Zero-Inflation in Microbiome Data Analysis

Frequently Asked Questions (FAQs)

Q1: How can I distinguish between a true biological absence of a microbe and a technical dropout in my 16S rRNA sequencing data?

A: True biological absence is often supported by congruent absence across multiple technical replicates and sequencing runs, and may align with known physiological or environmental constraints (e.g., an obligate aerobe in an anaerobic sample). Technical dropouts are stochastic and more likely to appear as sporadic zeros in samples with low sequencing depth or low biomass. Implement a prevalence filter (e.g., retain features present in >10-20% of samples within a group) as a first pass, but be cautious of removing rare but real taxa.
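A group-wise prevalence filter of this kind can be sketched as below. This Python example is a hedged illustration: the function name, the data layout, and the rule "keep a taxon if it passes the threshold in at least one group" are assumptions made for the sketch, not a prescribed standard.

```python
def prevalence_filter(table, group_of, min_prevalence=0.1):
    """Keep taxa whose prevalence exceeds min_prevalence in at least one group.

    table:    {taxon: {sample: count}}
    group_of: {sample: group label}
    """
    samples_by_group = {}
    for sample, group in group_of.items():
        samples_by_group.setdefault(group, []).append(sample)

    kept = {}
    for taxon, row in table.items():
        for members in samples_by_group.values():
            present = sum(1 for s in members if row.get(s, 0) > 0)
            if present / len(members) > min_prevalence:
                kept[taxon] = row
                break  # one passing group is enough
    return kept

# Hypothetical toy data: 4 cases (c1-c4) and 4 controls (h1-h4).
group_of = {f"c{i}": "case" for i in range(1, 5)}
group_of.update({f"h{i}": "control" for i in range(1, 5)})
table = {
    "taxonA": {"c1": 12, "c2": 3},  # present in 2/4 cases
    "taxonB": {},                   # never observed
    "taxonC": {"h1": 1},            # present in 1/4 controls
}
kept = prevalence_filter(table, group_of, min_prevalence=0.25)
print(sorted(kept))
```

Filtering within groups rather than across the whole study avoids discarding a taxon that is common in one condition and absent in the other, which is often exactly the signal of interest.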

Q2: My negative controls contain reads. How do I handle contamination-induced zeros?

A: Reads in negative controls indicate reagent or environmental contamination. This contamination can cause zeros in your true samples if the contaminant sequences outcompete or mask low-abundance real taxa. Use dedicated decontamination tools (e.g., decontam in R) which leverage prevalence or frequency methods to identify and remove contaminant ASVs/OTUs. This step is critical before assessing other sources of zeros.

Q3: What is the impact of library size (sequencing depth) on zero-inflation, and how do I correct for it?

A: Undersampling due to low library size is a primary technical driver of zeros. A taxon present at low abundance may simply not be sampled in a given run. The table below summarizes the relationship.

| Sequencing Depth (Reads/Sample) | Approximate % of Zeros Attributable to Undersampling* | Recommended Action |
|---|---|---|
| < 5,000 | High (>60%) | Increase depth; use rarefaction or depth-based scaling. |
| 5,000 - 20,000 | Moderate (30-60%) | Statistical models (e.g., ZINB) that account for depth. |
| > 20,000 | Lower (<30%) | Focus on biological/contamination sources. |

*Hypothetical estimates for illustrative purposes based on common community profiles.

Q4: Which statistical model should I choose for zero-inflated microbiome data?

A: The choice depends on the suspected source of zeros. For batch-correlated technical zeros, a Zero-Inflated Negative Binomial (ZINB) model with batch as a covariate is often suitable. For zeros assumed to be primarily biological or due to undersampling, a hurdle model (e.g., Presence/Absence + Truncated Count) or a Negative Binomial (NB) model with appropriate normalization may be sufficient. Always compare model fit using AIC/BIC.

Q5: How do I design an experiment to minimize batch effects that cause technical zeros?

A: Follow these key protocols:

- Reagent Batching: Use a single lot of all critical reagents (extraction kits, PCR master mix) for the entire study.

- Randomization: Randomize samples from different experimental groups across DNA extraction plates, PCR plates, and sequencing lanes.

- Controls: Include both positive controls (mock microbial communities) and negative controls (blank extractions) in every batch.

- Metadata Tracking: Meticulously record all potential batch variables (extraction date, operator, sequencer lane, kit lot number).

Detailed Experimental Protocols

Protocol 1: Validating Biological Absence via qPCR

Purpose: To confirm if a zero count from sequencing represents a true biological absence.

Methodology:

- Design species- or genus-specific primers for the target taxon of interest.

- Perform quantitative PCR (qPCR) on the original DNA extracts from the samples showing zeros and on positive control samples.

- Use a standard curve generated from cloned amplicons or synthetic gBlocks.

- Compare Cq values. A Cq value at or beyond the limit of detection (e.g., >35-40 cycles) in the context of well-amplified internal controls supports true biological absence.

Protocol 2: Assessing Technical Dropout with Replicate Sequencing

Purpose: To quantify the stochasticity of zeros and identify dropouts.

Methodology:

- Select a subset of samples (n=10-20) representing high, medium, and low biomass.

- Re-amplify and sequence these samples in triplicate from the PCR stage onward on the same sequencing platform.

- Process data through the same bioinformatics pipeline.

- Create a presence-absence matrix for replicates. A feature (ASV) detected in only 1 or 2 of the 3 replicates of the same sample is being detected stochastically, so its zeros in the remaining replicates are likely technical dropouts. Calculate the dropout rate per sample.
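The per-sample dropout rate from the last step can be computed as in the sketch below. The definition used here is an assumption chosen for illustration: among ASVs detected in at least one replicate, the rate is the fraction of (ASV, replicate) cells that are zero. The function names and toy counts are hypothetical.

```python
def inconsistent_features(replicates):
    """ASVs detected in at least one, but not all, replicates of a sample."""
    detected = {a for rep in replicates for a, c in rep.items() if c > 0}
    return sorted(
        a for a in detected
        if any(rep.get(a, 0) == 0 for rep in replicates)
    )

def dropout_rate(replicates):
    """Fraction of zero cells among ASVs seen in at least one replicate."""
    detected = {a for rep in replicates for a, c in rep.items() if c > 0}
    if not detected:
        return 0.0
    zeros = sum(
        1 for a in detected for rep in replicates if rep.get(a, 0) == 0
    )
    return zeros / (len(detected) * len(replicates))

# Hypothetical triplicate of one sample: {ASV: read count} per replicate.
triplicate = [
    {"ASV1": 10, "ASV2": 0, "ASV3": 5},
    {"ASV1": 12, "ASV2": 1, "ASV3": 0},
    {"ASV1": 9, "ASV2": 0, "ASV3": 0},
]
flagged = inconsistent_features(triplicate)
rate = dropout_rate(triplicate)
print(flagged, rate)
```

Comparing this rate across the high, medium, and low biomass samples selected in the first step shows whether dropouts concentrate in low-biomass material.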

Protocol 3: Correcting for Undersampling via In Silico Rarefaction

Purpose: To evaluate if increased sequencing depth would resolve zeros.

Methodology:

- Using a high-depth dataset, perform in silico rarefaction with tools like vegan in R.

- Rarefy the data to multiple depths (e.g., 1k, 5k, 10k, 20k reads) with 100 iterations each.

- For each depth, calculate the mean number of observed features (ASVs/OTUs) and the mean proportion of zeros in the feature table.

- Plot rarefaction curves and a curve of zero proportion vs. depth to visualize the point of diminishing returns.
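The subsampling loop behind these steps can be sketched with the Python standard library. This is an illustrative stand-in for vegan, not its implementation: the function names, the tiny depths, and the single toy sample are hypothetical, and reads are drawn without replacement.

```python
import random

def rarefy(counts, depth, rng):
    """Subsample `depth` reads without replacement from one sample."""
    pool = [taxon for taxon, c in counts.items() for _ in range(c)]
    if depth > len(pool):
        raise ValueError("depth exceeds library size")
    rarefied = dict.fromkeys(counts, 0)
    for taxon in rng.sample(pool, depth):
        rarefied[taxon] += 1
    return rarefied

def zero_curve(samples, depths, iterations=100, seed=0):
    """Per depth: (mean observed features, mean proportion of zeros)."""
    rng = random.Random(seed)
    curve = {}
    for depth in depths:
        richness, zero_prop = [], []
        for _ in range(iterations):
            for counts in samples:
                r = rarefy(counts, depth, rng)
                observed = sum(1 for v in r.values() if v > 0)
                richness.append(observed)
                zero_prop.append(1 - observed / len(r))
        curve[depth] = (
            sum(richness) / len(richness),
            sum(zero_prop) / len(zero_prop),
        )
    return curve

# Hypothetical high-depth sample with a strongly skewed community.
sample = {"A": 900, "B": 90, "C": 9, "D": 1}
curve = zero_curve([sample], depths=[10, 100, 1000], iterations=20)
for depth, (mean_richness, mean_zeros) in curve.items():
    print(depth, round(mean_richness, 2), round(mean_zeros, 2))
```

Plotting the second value of each pair against depth gives the zero-proportion curve described in the final step; the depth beyond which it flattens marks the point of diminishing returns.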

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Mitigating Zero-Inflation |
|---|---|
| Mock Microbial Community (e.g., ZymoBIOMICS) | Serves as a positive control to assess sequencing efficiency, dropout rates, and batch-to-batch variability in technical steps. |
| UltraPure BSA or Skim Milk | Added during DNA extraction from low-biomass samples to reduce adsorption to tube walls, improving yield and reducing false zeros. |
| Duplex-Specific Nuclease (DSN) | Used to normalize eukaryotic host (e.g., human) DNA in host-associated microbiome studies, increasing sequencing depth for microbial taxa. |
| PCR Inhibitor Removal Beads (e.g., OneStep PCR Inhibitor Removal Kit) | Improves PCR amplification efficiency from complex samples (soil, stool), reducing stochastic dropouts of challenging taxa. |
| Unique Molecular Identifiers (UMIs) | Incorporated during library prep to correct for PCR amplification bias and chimera formation, providing more accurate absolute abundances. |

Visualizations

Diagram 2: Batch Effect Correction Workflow for Zero-Inflated Data

Troubleshooting Guide: Data Transformation & Normalization Issues

Q1: After using standard Z-score normalization, my sparse microbiome count data shows distorted cluster separation in my PCA plot. What went wrong and how do I fix it?

A: This is a classic symptom of applying Gaussian assumptions to sparse data. Z-score normalization (subtracting mean, dividing by standard deviation) assumes a symmetric, non-sparse distribution. Microbiome data, with its many zeros, is highly skewed. This forces the mean and variance to be heavily influenced by the zeros, distorting the true biological signal from the few non-zero counts.

Fix: Use a transformation designed for compositional count data, such as the Centered Log-Ratio (CLR) transformation after adding a pseudocount, or a zero-inflated model-based normalization.

Protocol: CLR Transformation with Pseudocount

- Input: Raw count table (OTU/ASV table) with samples as columns and taxa as rows.

- Add a uniform pseudocount of 1 to all counts to handle zeros.

- For each sample (column), calculate the geometric mean of all taxa counts.

- Divide each taxon count in the sample by the sample's geometric mean.

- Take the natural logarithm of all resulting ratios.

- Output: A CLR-transformed matrix suitable for Euclidean-based statistical methods.
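The steps above can be sketched for a single sample as follows. This is a minimal Python illustration: the function name `clr` and the toy counts are hypothetical, and the geometric mean is computed on the log scale, which is equivalent to dividing by the geometric mean and then taking logarithms.

```python
import math

def clr(sample_counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample's counts.

    Equivalent to log(x_j / g(x)), where g(x) is the geometric mean of the
    pseudocount-shifted counts in the sample.
    """
    logs = [math.log(c + pseudocount) for c in sample_counts]
    log_geometric_mean = sum(logs) / len(logs)
    return [value - log_geometric_mean for value in logs]

# Hypothetical sample with three taxa; after the pseudocount it becomes [2, 4, 8].
transformed = clr([1, 3, 7])
print(transformed)
```

CLR values within a sample always sum to zero, which makes a convenient sanity check. Note that the pseudocount is a modeling choice: in very sparse tables, results can be sensitive to its value.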

Q2: My differential abundance analysis yields unreliable p-values and inflated false positives when I try to use parametric tests (like t-tests) on normalized data. Why?

A: Parametric tests rely on assumptions of normality and homoscedasticity (equal variance). Standard normalization does not correct for the inherent mean-variance relationship in count data, where taxa with higher counts often have higher variance. Furthermore, the presence of excessive zeros violates normality. This combination leads to incorrect error rate estimation.

Fix: Employ statistical tests explicitly designed for sparse, overdispersed count data.

Protocol: DESeq2 Workflow for Microbiome Count Data

- Data Input: Load the raw, untransformed integer count matrix into DESeq2 using the DESeqDataSetFromMatrix() function.

- Model Design: Specify your experimental design formula (e.g., ~ batch + condition).

- Estimate Size Factors: DESeq2 calculates a per-sample size factor with estimateSizeFactors(), which normalizes for library size differences via the median-of-ratios method. For sparse tables in which every taxon contains at least one zero, the type = "poscounts" option is commonly used instead of the default.

- Estimate Dispersions: Model the taxa-specific dispersion (variance) relative to the mean using estimateDispersions(). This step is critical for handling the mean-variance relationship.

- Fit Model & Test: Fit a negative binomial generalized linear model (NB-GLM) and perform hypothesis testing for differential abundance using the Wald test (nbinomWaldTest()) or a likelihood ratio test (LRT).

- Results: Extract results with the results() function, which provides adjusted p-values controlling the false discovery rate (FDR).
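To make the size-factor step concrete, the median-of-ratios idea can be sketched in Python. This is an illustration of the principle only, not DESeq2's code: the function name and toy counts are hypothetical, and the sketch uses only taxa that are non-zero in every sample, which is why very sparse tables need an alternative estimator.

```python
import math
from statistics import median

def median_of_ratios(counts):
    """Per-sample size factors from {sample: [count per taxon]}.

    For each usable taxon, take the geometric mean across samples as a
    reference; a sample's size factor is the median of its count/reference
    ratios.
    """
    samples = list(counts)
    n_taxa = len(counts[samples[0]])
    usable = [
        j for j in range(n_taxa)
        if all(counts[s][j] > 0 for s in samples)
    ]
    if not usable:
        raise ValueError("no taxon is non-zero in every sample")
    log_reference = {
        j: sum(math.log(counts[s][j]) for s in samples) / len(samples)
        for j in usable
    }
    return {
        s: math.exp(
            median(math.log(counts[s][j]) - log_reference[j] for j in usable)
        )
        for s in samples
    }

# Hypothetical toy data: S2 was sequenced twice as deeply as S1.
toy = {"S1": [10, 20, 30, 0], "S2": [20, 40, 60, 5]}
size_factors = median_of_ratios(toy)
print(size_factors)
```

The fourth taxon is skipped because it is zero in S1. In a real microbiome table, few or no taxa may be non-zero everywhere, in which case this sketch raises an error rather than returning unstable factors.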

FAQs on Batch Effect Correction for Sparse Data

Q3: Can I use ComBat to remove batch effects from my microbiome dataset?

A: Traditional ComBat, which applies an empirical Bayes framework under the assumption of normally distributed data, is not directly suitable for raw or standard-normalized count data. Applying it to CLR-transformed data can be effective if the transformation adequately handles zeros and sparsity. For robust correction, use methods that integrate the count model.

Recommended Alternative: Batch correction incorporated into the NB-GLM model (e.g., including "batch" as a covariate in the DESeq2 design) or specialized tools like MMUPHin (Meta-analysis Methods with Uniform Pipeline for Heterogeneity in microbiome studies), which models batch effects within a zero-inflated mixture framework.

Q4: What is the best practice for visualizing zero-inflated data before and after correct normalization?

A: Avoid standard PCA on Euclidean distance for raw or poorly normalized data. Use distance metrics and ordination methods suited for compositional and sparse data.

Protocol: Principal Coordinates Analysis (PCoA) with Aitchison Distance

- Preprocess: Apply CLR transformation (see Protocol above) to your count matrix.

- Calculate Distance: Compute the Euclidean distance between all sample pairs in the CLR-transformed space. This is mathematically equivalent to the Aitchison distance for compositional data.

- Ordination: Perform PCoA (also known as Metric Multidimensional Scaling, MDS) on the resulting distance matrix.

- Visualize: Plot the first two PCoA axes. You can color points by batch and condition to visually assess batch effect removal and biological clustering.
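The first two steps of this protocol, CLR followed by Euclidean distance, can be sketched as below. The Python function names and toy vectors are hypothetical, and the ordination step is left to a dedicated library.

```python
import math

def clr(counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample."""
    logs = [math.log(c + pseudocount) for c in counts]
    center = sum(logs) / len(logs)
    return [value - center for value in logs]

def aitchison_distance(x, y, pseudocount=1.0):
    """Euclidean distance between the CLR-transformed samples x and y."""
    cx, cy = clr(x, pseudocount), clr(y, pseudocount)
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(cx, cy)))

def distance_matrix(samples, pseudocount=1.0):
    """Symmetric matrix of pairwise Aitchison distances."""
    return [
        [aitchison_distance(a, b, pseudocount) for b in samples]
        for a in samples
    ]

# Hypothetical samples with mirrored compositions.
d = aitchison_distance([1, 3, 7], [7, 3, 1])

# Without a pseudocount (zero-free data only) the distance ignores depth:
# the same composition at 10x the depth is at distance zero.
d_scaled = aitchison_distance([1, 2, 4], [10, 20, 40], pseudocount=0.0)
print(d, d_scaled)
```

The second call shows why the metric suits compositional data: sequencing depth alone does not move a sample. Adding a pseudocount breaks this invariance slightly, so large depth differences between batches can still leak into the distances of sparse tables.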

Table 1: Comparison of Normalization Methods for Sparse Count Data

| Method | Underlying Assumption | Handles Zeros? | Preserves Compositionality? | Recommended For |
|---|---|---|---|---|
| Z-Score (Standard) | Gaussian Distribution | No, distorts distribution | No | Normally distributed continuous data |
| Total Sum Scaling | None (simple scaling) | Yes, but creates artifacts | Yes, but sensitive to outliers | Initial exploratory analysis |
| Centered Log-Ratio (CLR) | Compositional Data (Aitchison geometry) | Requires pseudocount | Yes | PCA, Euclidean-based methods |
| DESeq2 Median-of-Ratios | Negative Binomial Model | Yes, within model framework | Yes, via size factors | Differential abundance testing |
| CSS (metagenomeSeq) | Cumulative Sum Scaling | Yes, model-based | Yes | Differential abundance in highly sparse data |

Table 2: Common Artifacts from Incorrect Normalization

| Artifact | Likely Cause | Diagnostic Plot |
|---|---|---|
| Clustering driven by library size | Using raw counts or TSS without addressing sparsity | PCA colored by sequencing depth |
| Inflated false positives in DA | Applying t-tests to normalized, non-normal counts | P-value histogram (should be uniform under null) |
| Masking of true biological signal | Over-correction or inappropriate assumption of symmetry | PCoA where batch explains more variance than condition |
| Skewed PERMANOVA results | Using Bray-Curtis on data with global zeros | Distance boxplot by group showing non-separation |

Experimental Workflow Diagram

Workflow: Standard vs. Correct Normalization for Sparse Data

The Scientist's Toolkit: Research Reagent Solutions

| Item / Software | Function & Application |
|---|---|
| DESeq2 (R/Bioconductor) | Primary tool for differential abundance analysis using Negative Binomial GLM. Correctly models count distribution and variance. |
| MMUPHin (R package) | Meta-analysis and batch effect correction tool specifically designed for microbiome compositional data. |
| ALDEx2 (R/Bioconductor) | Uses CLR transformation and Dirichlet Monte Carlo sampling for differential abundance inference, robust to compositionality. |
| QIIME 2 / DEICODE plugin | Incorporates Aitchison distance and Robust PCA for compositional, sparse data ordination and analysis. |
| ANCOM-BC (R package) | Accounts for compositionality and zeros in testing for differentially abundant taxa using a linear model framework with bias correction. |
| MaAsLin 2 (R package) | Flexible multivariate association analysis that can handle zero-inflated, over-dispersed microbiome data with fixed/random effects. |
| Phyloseq (R/Bioconductor) | Data structure and toolkit for organizing, visualizing, and applying diverse analyses to microbiome data. |
| Songbird (QIIME 2 plugin) | Multinomial regression framework for modeling microbial gradients, accounting for compositionality and sparsity. |

Technical Support Center: Troubleshooting Batch Effects in Zero-Inflated Microbiome Data

FAQ 1: Why do my alpha diversity measures (e.g., Shannon, Chao1) show significant batch-to-batch variation, even after rarefaction?

Answer: Batch effects introduce technical variation in library size and detection efficiency, which disproportionately impacts zero-inflated data. Rarefaction only equalizes sequencing depth but does not correct for batch-specific differences in composition or detection of low-abundance taxa. This skews diversity estimates, making biological comparisons unreliable.

Troubleshooting Guide:

- Diagnostic: Generate a Principal Coordinate Analysis (PCoA) plot using Bray-Curtis dissimilarity, colored by batch. If samples cluster strongly by batch, technical variation is present.

- Solution: Apply a batch-effect correction method designed for compositional count data before calculating diversity metrics. Recommended: Use mmn (Meta-Neighborhood) or ConQuR (Conditional Quantile Regression), which handle sparsity well. Avoid methods like ComBat that assume a normal distribution.

Experimental Protocol for Diagnostic PCoA:

- Input: Normalized or rarefied OTU/ASV table.

- Tool: Use the vegdist function in R (vegan package) with method="bray" to compute the Bray-Curtis dissimilarity matrix.

- Visualization: Perform PCoA using cmdscale and plot with ggplot2, mapping the color aesthetic to your batch covariate.

- Validation: Perform a PERMANOVA (adonis2 function) with formula ~ Batch + Group to quantify variance explained by batch vs. biological group.
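To show what the validation step computes, here is a minimal single-factor PERMANOVA in Python. It is a sketch of the pseudo-F statistic and label-permutation test only, under stated assumptions: the function names and toy distances are hypothetical, and unlike adonis2 it tests one grouping variable at a time rather than a multi-term formula such as ~ Batch + Group.

```python
import random

def pseudo_f(dist, labels):
    """Pseudo-F and R^2 for one grouping factor from a distance matrix."""
    n = len(labels)
    groups = {}
    for index, label in enumerate(labels):
        groups.setdefault(label, []).append(index)
    a = len(groups)
    ss_total = sum(
        dist[i][j] ** 2 for i in range(n) for j in range(i + 1, n)
    ) / n
    ss_within = sum(
        sum(dist[i][j] ** 2 for k, i in enumerate(idx) for j in idx[k + 1:])
        / len(idx)
        for idx in groups.values()
    )
    ss_among = ss_total - ss_within
    if ss_within == 0:
        return float("inf"), 1.0
    f = (ss_among / (a - 1)) / (ss_within / (n - a))
    return f, ss_among / ss_total

def permanova(dist, labels, permutations=999, seed=0):
    """Observed pseudo-F, R^2, and permutation p-value."""
    rng = random.Random(seed)
    f_obs, r2 = pseudo_f(dist, labels)
    shuffled = list(labels)
    hits = 0
    for _ in range(permutations):
        rng.shuffle(shuffled)
        if pseudo_f(dist, shuffled)[0] >= f_obs:
            hits += 1
    return f_obs, r2, (hits + 1) / (permutations + 1)

# Hypothetical toy data: six samples on a line, two well-separated batches.
points = [0, 1, 2, 10, 11, 12]
dist = [[abs(p - q) for q in points] for p in points]
batch = ["A", "A", "A", "B", "B", "B"]
f_obs, r2, p_value = permanova(dist, batch)
print(f_obs, r2, p_value)
```

With only six samples there are few distinct label arrangements, so the p-value cannot become very small even though batch explains almost all of the variance. This is the same power limitation that affects small batches in real studies.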

FAQ 2: How can I distinguish a false association driven by batch from a true microbiome-disease association?

Answer: False associations arise when the batch variable (e.g., sequencing run, DNA extraction kit) is confounded with the biological variable of interest (e.g., disease status). A taxon may appear significant because it is differentially abundant between batches, not between disease states.

Troubleshooting Guide:

- Diagnostic: For any significantly associated biomarker, plot its abundance (or prevalence) across groups, stratified by batch. If the trend is consistent within each batch, association is more credible.

- Solution: Include batch as a covariate in your differential abundance or association model. Use models robust to zeros:

  - For differential abundance: ANCOM-BC2, corncob, or LinDA.

  - For multivariate association: MMUPHin in meta-analysis mode or MaAsLin2 with a random effect for batch.

Experimental Protocol for Stratified Visualization:

- Input: CLR-transformed or proportion data for the target taxon.

- Tool: In R, use ggplot2 to create a boxplot or violin plot:

  - x-axis: Disease Group (e.g., Case/Control)

  - fill: Batch ID

  - Use facet_wrap(~Batch) to create individual sub-plots per batch.

- Interpretation: Look for consistent direction of effect across all batch panels.
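The same consistency check can be done numerically alongside the plot. The Python sketch below is illustrative: the function names, the record layout, and the CLR-scale toy values are hypothetical, and it compares only the sign of the case-minus-control mean difference within each batch.

```python
from statistics import mean

def within_batch_effects(records, case="Case", control="Control"):
    """Mean(case) - mean(control) for one taxon, computed per batch.

    records: iterable of (batch, group, abundance) tuples.
    Batches lacking either group are skipped, since no contrast exists there.
    """
    by_batch = {}
    for batch, group, value in records:
        by_batch.setdefault(batch, {}).setdefault(group, []).append(value)
    return {
        batch: mean(groups[case]) - mean(groups[control])
        for batch, groups in by_batch.items()
        if case in groups and control in groups
    }

def consistent_direction(effects):
    """True if every non-zero within-batch effect has the same sign."""
    signs = {effect > 0 for effect in effects.values() if effect != 0}
    return len(signs) == 1

# Hypothetical CLR-scale abundances of one taxon in two batches.
records = [
    ("B1", "Case", 2.0), ("B1", "Case", 3.0),
    ("B1", "Control", 1.0), ("B1", "Control", 1.0),
    ("B2", "Case", 0.5), ("B2", "Case", 1.5),
    ("B2", "Control", 0.0), ("B2", "Control", -1.0),
]
effects = within_batch_effects(records)
print(effects, consistent_direction(effects))
```

A batch that contains only cases or only controls is dropped from the comparison. If most batches are dropped, disease status and batch are confounded by design and no stratified analysis can separate them.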

FAQ 3: My previously published microbiome biomarker fails to validate in a new cohort/study. Could batch effects be the cause?

Answer: Yes, irreproducible biomarkers are a major consequence of uncorrected batch effects. If the original biomarker was partially driven by technical artifacts specific to the first study's experimental conditions, it will not generalize.

Troubleshooting Guide:

- Diagnostic: Apply the original biomarker model (e.g., a specific taxon's abundance threshold) to the new data before any batch correction. Then, apply it after using a cross-platform correction method.

- Solution: Employ batch-effect adjustment before biomarker discovery. For cross-study validation, use methods that perform harmonization:

  - For integrating multiple studies: MMUPHin is specifically designed for this.

  - For a single study with batches: SVA (Surrogate Variable Analysis) or RUV4 (Remove Unwanted Variation) can estimate latent batch factors.

Experimental Protocol for Cross-Study Harmonization with MMUPHin:

- Input: Raw feature tables and metadata from all studies to be integrated.

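- Tool: In R, use the MMUPHin::adjust_batch function with arguments:

  - feature_abd: Feature-by-sample abundance matrix combining all studies.

  - batch: Name of the metadata column holding the study or batch identifier.

  - covariates: Names of the metadata columns for biological variables of interest (e.g., disease status).

  - data: Sample metadata data frame.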

- Output: A harmonized feature table where batch-specific biases are reduced, enabling pooled analysis.

Data Presentation

Table 1: Comparison of Batch Effect Correction Methods for Zero-Inflated Microbiome Data

| Method | Underlying Model | Handles Sparsity (Zeros) | Maintains Compositionality | Recommended Use Case | Key Limitation |

|---|---|---|---|---|---|

| ComBat | Empirical Bayes, Gaussian | Poor | No | Normalized, non-sparse data (e.g., after CLR) | Assumes normal distribution; distorts compositional structure. |

| MMUPHin | Zero-inflated empirical Bayes linear model (extends ComBat) | Good | Yes | Harmonizing multiple studies (meta-analysis) | Requires multiple batches/studies. |

| ConQuR | Conditional Quantile Regression | Excellent | Yes | Strong, non-linear batch effects within a single study | Computationally intensive for very large feature sets. |

| ANCOM-BC2 | Linear model with bias correction | Good | Yes (via log-ratio) | Differential abundance testing with batch covariate | Primarily a testing tool, not a harmonization tool. |

| mmn | Metagenomic Neighborhood | Excellent | Yes | Single-study correction with a reference batch | Requires a designated "reference" batch. |

Visualizations

Diagram 1: Workflow for Diagnosing & Correcting Batch Effects

Diagram 2: Confounding Leading to False Association

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Robust Microbiome Analysis

| Item | Function | Example/Product Note |

|---|---|---|

| Mock Community (Standard) | Acts as a positive control and calibration standard across batches/runs. Essential for diagnosing technical variation. | BEI Resources HM-278D (ZymoBIOMICS) - Defines expected composition to quantify batch-specific deviations. |

| Internal Spike-In DNA | Added prior to DNA extraction to control for and correct biases in extraction efficiency and sequencing depth. | SERC Spike-in Control (SERC) - Synthetic sequences not found in nature for absolute quantification. |

| Uniform Extraction Kit | Minimizes batch variation at the wet-lab stage. Critical for multi-site studies. | MoBio PowerSoil Pro Kit (QIAGEN) - Widely adopted standard for soil/stool; use same lot across project if possible. |

| Library Prep Negative Control | Identifies contamination introduced during library preparation (kitome). | Nuclease-free Water - Processed alongside samples through all steps post-extraction. |

| Bioinformatic Standardized Pipeline | Ensures computational reproducibility and reduces analysis-based batch effects. | QIIME 2, DADA2, or Snakemake Workflow - Fix software versions and parameters for entire project. |

A Practical Toolkit: Methods for Detecting and Correcting Batch Effects in Sparse Data

Troubleshooting Guides & FAQs

Q1: My PCA/PCoA plot shows tight clustering by batch, not by treatment. What is this and what are my first steps? A: This is a classic sign of a dominant batch effect. Your first step is detection: visually confirm the artifact.

- Re-plot: Color and shape points by batch ID, experimental run, and technician.

- Validate: Run a Permutational Multivariate Analysis of Variance (PERMANOVA) on your distance matrix using batch as a factor.

- Action: If batch is statistically significant (p < 0.05), proceed to batch effect correction methods after this diagnostic phase. Do not ignore it.

Q2: After rarefaction or normalization, my hierarchical clustering dendrogram still separates samples purely by sequencing depth. Why? A: Zero-inflated data often has depth variation correlated with batch. Common normalizations (e.g., CSS, TSS) may not overcome this.

- Solution: Apply a variance-stabilizing transformation (VST) designed for count data, such as the one used in DESeq2 or edgeR, before calculating the distance matrix for clustering.

- Protocol: Convert your OTU/ASV table to a DESeqDataSet object, estimate size factors, apply varianceStabilizingTransformation, and use the resulting matrix for Euclidean distance calculation and clustering.

Q3: In PCoA, my control samples are scattered widely while treated samples cluster tightly. Is this a problem? A: Yes. This indicates heteroskedasticity—uneven variances between groups—common in zero-inflated data. It violates assumptions of many downstream statistical models.

- Diagnostic: Use beta-dispersion tests (e.g., betadisper in R) to check if dispersion differs significantly between groups.

- Next Step: Consider analyses that are more robust to dispersion differences, or explore transformations that stabilize variance across groups.

Q4: How do I choose between PCA (a linear method) and PCoA (for any distance matrix) for my microbiome data? A: The choice depends on your distance metric.

- Use PCA when you want to use Euclidean distance on transformed (e.g., VST, centered log-ratio) data. It maximizes linear variance.

- Use PCoA (aka MDS) when you want to use ecological distances like Bray-Curtis, UniFrac, or Jaccard, which are better suited for compositional microbiome data. PCoA places samples in space based on these pairwise distances.

Q5: My visual diagnostics look good, but my PERMANOVA is insignificant for both batch and treatment. What could be wrong? A: The most likely cause is low statistical power due to zero inflation and high inter-individual variation.

- Check: Examine the within-group dispersions. If they are very high, the signal may be masked.

- Action:

- Consider filtering out low-prevalence features (e.g., present in <10% of samples).

- Increase sample size if possible.

- Use a more sensitive, model-based differential abundance test (e.g., ANCOM-BC, MaAsLin2, DESeq2 on genus-level aggregates) after the visual diagnostic step confirms minimal batch effects.

Experimental Protocols for Cited Diagnostics

Protocol 1: Generating a Batch-Aware PCoA Plot with PERMANOVA

- Input: Normalized count or relative abundance table (samples x features).

- Calculate Distance: Compute a Bray-Curtis dissimilarity matrix using the vegdist function in R (package vegan).

- Ordination: Perform PCoA on the distance matrix using the pcoa function (package ape).

- Visualization: Plot the first two principal coordinates. Color points by Treatment and use point shapes for Batch.

- Statistical Validation: Run adonis2 (PERMANOVA) from the vegan package: adonis2(distance_matrix ~ Treatment + Batch, data=metadata).
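To make the logic of the statistical validation step concrete, here is a minimal, standard-library Python sketch of a one-way PERMANOVA on a Bray-Curtis matrix. It is a simplification under stated assumptions: it tests a single factor (batch) rather than the two-term Treatment + Batch formula used with adonis2, and all function names and toy counts are hypothetical.

```python
import random

def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two non-negative abundance vectors."""
    num = sum(abs(x - y) for x, y in zip(a, b))
    den = sum(x + y for x, y in zip(a, b))
    return num / den if den > 0 else 0.0

def pseudo_f(dist, labels):
    """One-way PERMANOVA pseudo-F and R2 from a square distance matrix."""
    n = len(labels)
    groups = sorted(set(labels))
    # Total sum of squares from all pairwise squared distances.
    ss_total = sum(dist[i][j] ** 2 for i in range(n) for j in range(i + 1, n)) / n
    ss_within = 0.0
    for g in groups:
        idx = [i for i, lab in enumerate(labels) if lab == g]
        ss_within += sum(dist[i][j] ** 2 for k, i in enumerate(idx) for j in idx[k + 1:]) / len(idx)
    ss_among = ss_total - ss_within
    f_stat = (ss_among / (len(groups) - 1)) / (ss_within / (n - len(groups)))
    return f_stat, ss_among / ss_total

def permanova(dist, labels, permutations=999, seed=0):
    """Permutation p-value: share of label shuffles with F at least as large as observed."""
    rng = random.Random(seed)
    f_obs, r2 = pseudo_f(dist, labels)
    shuffled = list(labels)
    hits = 0
    for _ in range(permutations):
        rng.shuffle(shuffled)
        if pseudo_f(dist, shuffled)[0] >= f_obs - 1e-12:
            hits += 1
    return f_obs, r2, (hits + 1) / (permutations + 1)

# Toy count table: 8 samples x 3 taxa, two clearly different batches.
samples = [[10, 0, 5], [12, 1, 4], [11, 0, 6], [9, 2, 5],
           [0, 9, 5], [1, 10, 4], [0, 11, 6], [2, 8, 5]]
batch = ["A"] * 4 + ["B"] * 4
dist = [[bray_curtis(s, t) for t in samples] for s in samples]

f_obs, r2, p = permanova(dist, batch, permutations=999, seed=1)
print(round(r2, 3), p)
```

With only a handful of samples per batch the number of distinct label permutations is small, so the smallest achievable p-value is limited; this is one reason tiny pilot datasets can look non-significant even with obvious batch separation.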

Protocol 2: Hierarchical Clustering Diagnostic for Batch Effects

- Transform Data: Apply a Variance Stabilizing Transformation (VST) to the raw count data to normalize depth and stabilize variance.

- Distance & Clustering: Calculate Euclidean distance on the VST-transformed matrix. Perform hierarchical clustering using Ward's method (hclust with method="ward.D2" in R).

- Visualization: Plot the dendrogram and color the sample labels below the tips according to their batch metadata.

- Interpretation: Observe if major branches of the tree exclusively contain samples from a single batch, indicating a strong batch-driven structure.

Data Presentation

Table 1: Comparison of Visual Diagnostic Methods for Batch Effect Detection

| Method | Input Data Type | Key Metric | Strengths for Batch Detection | Limitations |

|---|---|---|---|---|

| Principal Component Analysis (PCA) | Normalized/Transformed Abundance Matrix | Euclidean Distance | Fast; emphasizes major linear variance; intuitive axes. | Assumes linear relationships; sensitive to transformation choice. |

| Principal Coordinates Analysis (PCoA) | Any Distance Matrix (e.g., Bray-Curtis, UniFrac) | Pre-calculated Dissimilarity | Flexible; uses ecologically meaningful distances; good for compositional data. | Axes are abstract (coordinates); variance explained may be low. |

| Hierarchical Clustering | Distance Matrix or Transformed Matrix | Linkage Algorithm (e.g., Ward) | Clear tree structure; explicitly shows sample groupings. | Result is a dendrogram, not a 2D plot; sensitive to distance/linkage choice. |

Table 2: Essential Distance Metrics for Microbiome PCoA

| Metric | Formula/Concept | Best For | Sensitivity to Batch Effects |

|---|---|---|---|

| Bray-Curtis Dissimilarity | $BC = \sum_i \lvert A_i - B_i \rvert \,/\, \sum_i (A_i + B_i)$ | General community composition. | High. Directly influenced by abundance shifts from technical artifacts. |

| Weighted UniFrac | Branch-length weighted by abundance differences. | Phylogenetic structure + abundance. | High, as it incorporates abundance. |

| Unweighted UniFrac | Presence/absence of lineages on a tree. | Phylogenetic diversity, rare lineages. | Moderate. Can reveal batch-driven loss of rare taxa. |

| Aitchison Distance | Euclidean distance on CLR-transformed data. | Compositional data, overcoming sparsity. | High; zeros must be handled (pseudocount or imputation) before the CLR step. |
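As an illustration of the last row of the table, the sketch below computes a CLR transform and the resulting Aitchison distance in plain Python. The pseudocount of 0.5 is an assumed default for the example, not a recommendation from the text, and the helper names are hypothetical.

```python
import math

def clr(counts, pseudocount=0.5):
    """Centered log-ratio of one sample after adding a pseudocount to every count."""
    logs = [math.log(c + pseudocount) for c in counts]
    center = sum(logs) / len(logs)  # log of the geometric mean
    return [v - center for v in logs]

def aitchison(a, b, pseudocount=0.5):
    """Euclidean distance between the CLR vectors of two samples."""
    return math.dist(clr(a, pseudocount), clr(b, pseudocount))

sample_a = [10, 0, 5, 85]
sample_b = [30, 2, 0, 68]
print(aitchison(sample_a, sample_b))

# Without zeros (and with no pseudocount), sequencing depth cancels out:
# a sample and a 10x deeper copy of it are at distance zero.
print(aitchison([1, 2, 3], [10, 20, 30], pseudocount=0))
```

The second call shows why the metric suits compositional data: only ratios between taxa matter. Once a pseudocount is added, that invariance holds only approximately, which is why the zero-handling choice deserves attention.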

Visualizations

Diagram 1: Visual Diagnostics Workflow for Batch Effects

Diagram 2: Key Relationships in Microbiome Distance Metrics

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Visual Diagnostics |

|---|---|

| R Statistical Software | Core platform for all statistical computing, visualization, and execution of packages like vegan, phyloseq, and DESeq2. |

| phyloseq R Package | Integrates OTU tables, taxonomy, sample data, and phylogeny into a single object; streamlines ordination and plotting. |

| vegan R Package | Essential for ecological analysis. Contains functions for distance calculation (vegdist), PERMANOVA (adonis2), and beta-dispersion tests (betadisper). |

| Centered Log-Ratio (CLR) Transformation | A compositional data transformation that, once zeros have been replaced (via pseudocounts or imputation), makes Euclidean distance applicable. |

| Bray-Curtis Dissimilarity Metric | The standard ecological beta-diversity metric for visualizing overall community composition differences between samples. |

| Qiime2 / DADA2 Pipeline Output | Provides the high-quality, denoised amplicon sequence variant (ASV) tables and phylogenies that serve as the input for all downstream diagnostics. |

| PERMANOVA Statistical Test | A non-parametric multivariate hypothesis test used to statistically confirm whether groupings (batch/treatment) explain a significant portion of the variance seen in ordination plots. |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: My PERMANOVA results show a significant batch effect (p < 0.05), but the R² value is very small (e.g., 0.02). Should I still correct for this batch? A1: Yes, but match the response to the effect size. A statistically significant p-value, even with a small R², indicates a non-random association between batch and your data structure, and in zero-inflated microbiome data small, systematic technical shifts can disproportionately impact rare taxa and downstream analyses. For an R² this small, including batch as a covariate in downstream models is usually preferable to aggressive pre-correction (see the decision table in Q4). If you do apply a correction method (e.g., ComBat, RUV, limma), validate it with the post-correction Silhouette Width.

Q2: After batch correction, my Silhouette Width by batch is still negative. What does this mean? A2: A negative post-correction Silhouette Width means samples are, on average, closer to samples from other batches than to their own batch. Values at or slightly below zero are a positive outcome, indicating that batches are well mixed. Your primary biological groups (e.g., treatment vs. control) should now be the dominant driver of variation. Re-calculate the Silhouette index using your biological groups to confirm.

Q3: How do I handle PERMANOVA when my microbiome data has many zeros? A3: Many zeros distort some distance metrics and transformations more than others, so pair the metric with the scale of the data. Follow this protocol:

- Choose an appropriate distance metric: Use Bray-Curtis or Jaccard on non-negative abundances (e.g., proportions); both tolerate zeros. Avoid Euclidean distance on untransformed counts.

- Apply a gentle transformation: Use a Variance Stabilizing Transformation (VST) from DESeq2 or a centered log-ratio (CLR) transformation after pseudo-count addition, rather than rarefaction. CLR values can be negative, so pair them with Euclidean distance (the Aitchison distance), not Bray-Curtis.

- Run PERMANOVA: Use the adonis2 function (vegan package in R) with 9999 permutations for robust p-value estimation.

- Validate with null model: Compare your results to a PERMANOVA on a dataset with shuffled labels to confirm that the test is not producing spurious significance.

Q4: The Silhouette Width for my batches is positive but low (< 0.25). Is this a problem? A4: A low positive Silhouette Width indicates "weak" batch clustering. Consult the accompanying table to interpret your result in the context of your PERMANOVA R².

| PERMANOVA R² (Batch) | Silhouette Width (Batch) | Interpretation & Recommended Action |

|---|---|---|

| > 0.1 and p < 0.05 | > 0.5 (Strong) | Severe Batch Effect. Must correct. Use aggressive correction (e.g., batch-aware DA analysis, ComBat-seq). |

| 0.05 - 0.1 and p < 0.05 | 0.25 - 0.5 (Fair) | Moderate Batch Effect. Correct using standard methods (e.g., RUV, limma). |

| < 0.05 and p < 0.05 | 0 - 0.25 (Weak/None) | Minor but Significant Effect. Consider including batch as a covariate in linear models rather than pre-correction. |

| Not Significant (p > 0.05) | Any Value | No Statistical Evidence of Batch Effect. Do not apply correction, as it may introduce noise. Proceed with analysis. |
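The decision table can be encoded as a small helper so that the same rule is applied across datasets. This Python sketch follows the thresholds in the table; how to resolve cases where R² and Silhouette Width fall in different rows is not specified by the table, so taking the more severe of the two is an assumption made here.

```python
def batch_effect_tier(r2, p_value, silhouette, alpha=0.05):
    """Classify a batch effect as 'none', 'minor', 'moderate', or 'severe'.

    Thresholds follow the decision table. When R2 and Silhouette Width
    disagree, the more severe tier is returned (an assumption of this sketch).
    """
    if p_value >= alpha:
        return "none"  # no statistical evidence of a batch effect
    r2_tier = 2 if r2 > 0.1 else 1 if r2 >= 0.05 else 0
    sil_tier = 2 if silhouette > 0.5 else 1 if silhouette >= 0.25 else 0
    return ("minor", "moderate", "severe")[max(r2_tier, sil_tier)]

RECOMMENDATION = {
    "none": "Do not correct; proceed with analysis.",
    "minor": "Include batch as a covariate in linear models.",
    "moderate": "Correct using standard methods (e.g., RUV, limma).",
    "severe": "Must correct (e.g., batch-aware DA analysis, ComBat-seq).",
}

tier = batch_effect_tier(r2=0.02, p_value=0.01, silhouette=0.10)
print(tier, "->", RECOMMENDATION[tier])
```

Negative Silhouette Widths fall into the lowest tier here, since they indicate mixing rather than separation.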

Troubleshooting Guides

Issue: Inconsistent PERMANOVA results between different distance metrics.

- Cause: Different metrics weight zeros and compositional effects differently.

- Solution:

- Pre-process data consistently (same normalization/transformation).

- Run PERMANOVA on a suite of relevant metrics (Bray-Curtis, Unweighted UniFrac, Weighted UniFrac).

- If results are congruent (all significant/non-significant), proceed with confidence.

- If discordant, investigate which taxa drive distances in each metric. Prioritize the metric most relevant to your biological question (e.g., Unweighted UniFrac for lineage, Bray-Curtis for abundance).

Issue: Silhouette Width calculation fails or produces NA values.

- Cause: This often occurs when a batch contains only one sample, or all pairwise distances between samples in a cluster are zero (common with many zero-inflated samples).

- Solution:

- Check batch sizes. Remove or merge batches with n=1.

- If zero distances are the cause, add a minimal, consistent pseudo-count (e.g., 1e-10) or use a distance metric that handles identical samples robustly before recalculation.

Issue: Batch correction appears to remove biological signal.

- Cause: Over-correction when batch and biological group are confounded.

- Solution:

- Diagnose: Create a PCoA plot colored by biological group before and after correction. Use the Silhouette Width for the biological group as a metric.

- Mitigate: Use supervised correction methods that protect a specified biological variable (e.g., limma removeBatchEffect while protecting the group variable) or switch to a model that includes batch as a covariate (e.g., DESeq2, MaAsLin2).

Experimental Protocol: Quantifying Batch Effects in Zero-Inflated Microbiome Data

Objective: To statistically and geometrically quantify the degree of technical batch confounding in a 16S rRNA gene sequencing dataset.

Step 1: Data Preprocessing & Normalization

- Import ASV/OTU table into R (phyloseq object).

- Do NOT rarefy. Apply a Variance Stabilizing Transformation (VST) using DESeq2 or a Centered Log-Ratio (CLR) transformation.

  - VST Protocol: Use phyloseq_to_deseq2(), estimate size factors, varianceStabilizingTransformation(), then convert back to phyloseq.

  - CLR Protocol: Add a pseudo-count of min(relative abundance)/2, then use transform_sample_counts(function(x) log(x) - mean(log(x))).

Step 2: PERMANOVA Execution

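- Calculate a Bray-Curtis dissimilarity matrix (distance() function). Bray-Curtis requires non-negative input, so compute it on proportions; for CLR-transformed data, which contains negative values, use Euclidean (Aitchison) distance instead.

- Run PERMANOVA using adonis2 from the vegan package: adonis2(distance_matrix ~ batch + group, data = metadata, permutations = 9999, by = "margin"). Setting by = "margin" requests a separate test for each term, so the batch row is reported explicitly.

- Record the R² and p-value for the batch term.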

Step 3: Silhouette Width Calculation

- Using the same Bray-Curtis distance matrix, compute the average Silhouette Width for the batch grouping.

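- In R: library(cluster); sil <- silhouette(as.integer(factor(metadata$batch)), dist = distance_matrix); summary(sil)$avg.width. Converting through factor() is needed because silhouette expects integer cluster codes, and as.numeric on character batch labels returns NA.

- Visualize with fviz_silhouette(sil) (factoextra package).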
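For readers who want to see what the Silhouette Width measures, the following Python sketch computes the average width directly from a distance matrix and batch labels. It assumes at least two batches; samples that are alone in their batch get a width of 0, which mirrors the usual convention. The toy matrix is hypothetical.

```python
def average_silhouette(dist, labels):
    """Average silhouette width of samples grouped by `labels`.

    dist is a square, symmetric distance matrix (list of lists).
    """
    n = len(labels)
    groups = sorted(set(labels))
    widths = []
    for i in range(n):
        # Mean distance from sample i to the other members of each group.
        mean_to = {}
        for g in groups:
            members = [j for j in range(n) if labels[j] == g and j != i]
            if members:
                mean_to[g] = sum(dist[i][j] for j in members) / len(members)
        if labels[i] not in mean_to:  # singleton batch
            widths.append(0.0)
            continue
        a = mean_to[labels[i]]                                      # own batch
        b = min(v for g, v in mean_to.items() if g != labels[i])    # nearest other batch
        widths.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(widths) / n

# Toy matrix: samples 0-1 are close to each other, as are samples 2-3.
toy_dist = [
    [0.0, 0.1, 0.8, 0.8],
    [0.1, 0.0, 0.8, 0.8],
    [0.8, 0.8, 0.0, 0.1],
    [0.8, 0.8, 0.1, 0.0],
]
separated = average_silhouette(toy_dist, ["A", "A", "B", "B"])
mixed = average_silhouette(toy_dist, ["A", "B", "A", "B"])
print(separated, mixed)
```

When the batch labels follow the two tight clusters the width is strongly positive; when each cluster contains both batches it turns negative, which is the pattern expected after successful correction.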

Step 4: Integrated Interpretation

- Use the decision table (FAQ Q4) to synthesize PERMANOVA (R²/p) and Silhouette Width results.

- If correction is needed, proceed with a method appropriate for zero-inflated data (e.g., sva with VST data, RUVSeq).

Visualization: Batch Effect Diagnosis Workflow

Diagram Title: Batch Effect Quantification & Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Analysis |

|---|---|

| R Statistical Software | Primary platform for statistical computing, hosting essential packages (vegan, phyloseq, cluster, sva). |

| vegan Package | Contains adonis2 function for running PERMANOVA, the gold-standard test for multivariate batch effects. |

| phyloseq Package | Data structure and tools for handling phylogenetic sequencing data, enabling integrated transformations and distance calculations. |

| DESeq2 Package | Provides a robust Variance Stabilizing Transformation (VST) to normalize zero-inflated count data prior to distance calculation. |

| cluster Package | Contains the silhouette function for calculating the Silhouette Width index to assess cluster compactness/separation (by batch). |

| Bray-Curtis Dissimilarity | A robust beta-diversity metric less sensitive to zeros and compositional bias than Euclidean distance, ideal for PERMANOVA on microbiome data. |

| ComBat (sva package) | An empirical Bayes method for batch correction of continuous, normally distributed data (use on VST/CLR transformed data). |

| Synthetic DNA Spike-Ins | External controls added pre-extraction to quantify and correct for technical variation in sample processing, not just sequencing. |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My microbiome dataset has many zeros and strong technical batch effects. Which tool should I start with: MMUPHin, ComBat, or ZINB-WaVE?

A: The choice depends on your primary goal and data structure. Use this decision guide:

- MMUPHin: Choose if your primary goal is batch correction for meta-analysis across multiple studies with discrete batches. It is robust for integrating publicly available datasets.

- ComBat-family (e.g., ComBat, ComBat-seq): Choose if you have known, discrete batches and want a simple, linear adjustment. Use ComBat-seq if your data is raw count-based (e.g., from RNA-Seq or 16S).

- ZINB-WaVE: Choose if your primary goal is noise reduction and dimensionality reduction for downstream analysis (like clustering) while explicitly modeling zero inflation. It can be used before or after batch correction.

Q2: After applying MMUPHin, my adjusted data still shows batch clustering in the PCA. What went wrong?

A: This is a common issue. Follow this troubleshooting checklist:

- Check Batch Variable: Ensure your batch covariate correctly labels all sources of technical variation.

- Review Control Settings: adjust_batch does not estimate latent factors; it corrects only for the batch variable you supply. Check the options passed through its control list (e.g., zero_inflation, pseudo_count) and confirm they suit your data type. Residual structure from unrecorded technical variables calls for a latent-factor method such as SVA or RUV.

- Inspect Variance Explained: Use MMUPHin's diagnostics to see the proportion of variance explained by the batch factor. If it's low, batch may not be the dominant signal.

- Consider Confounding: If batch is confounded with a biological condition of interest (e.g., all cases from one lab, controls from another), correction may remove the biological signal.

Q3: I used ZINB-WaVE, but the algorithm fails to converge or returns an error. How can I fix this?

A: Convergence issues in ZINB-WaVE often stem from model over-specification or data scaling.

- Simplify the Model: Reduce the number of sample-level covariates (X) or feature-level covariates (V) in the zinbModel or zinbFit function. Start with an intercept-only model.

- Adjust Parameters: Decrease K (the number of latent factors) or increase the regularization parameter (epsilon).

- Filter Features: Remove taxa/genes that are zero in an extremely high percentage of samples (e.g., >99%). This stabilizes estimation.

- Check Input: Ensure your input data is a numeric matrix of raw counts. Do not use log-transformed or normalized data as input.

Q4: Can I use ComBat on my sparse 16S rRNA sequencing count data?

A: Standard ComBat is not recommended for raw, zero-inflated count data as it assumes Gaussian-distributed data. Instead, you have two primary options:

- Use ComBat-seq: This variant models count data using a negative binomial distribution and is more appropriate. However, it does not explicitly model zero inflation.

- Transform Data First: Apply a Variance Stabilizing Transformation (VST) like DESeq2::varianceStabilizingTransformation or a log(X+1) transform to your counts, then apply standard ComBat. This is a pragmatic but less theoretically rigorous approach.

Experimental Protocols

Protocol 1: Batch Correction and Integration with MMUPHin This protocol is for harmonizing multiple microbiome studies.

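- Input Preparation: Format your feature (e.g., OTU, ASV) abundance table and metadata. Metadata must include batch (study ID) and covariates (e.g., disease status, age).

- Run Batch Correction: Call MMUPHin::adjust_batch with the abundance table, the batch column, the covariates, and the metadata, and store the result as fit_adjust.

- Extract Output: Use fit_adjust$feature_abd_adj for the corrected abundance matrix.

- Diagnostics: Compare the variance explained by batch before and after correction, for example with PERMANOVA (vegan::adonis2) on the original and adjusted tables.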

Protocol 2: Dimensionality Reduction with ZINB-WaVE This protocol is for deriving low-dimensional, denoised representations of single-cell or microbiome data.

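- Model Fitting: Fit the model with zinbFit on the raw count matrix, specifying the number of latent factors K.

- Get WaVE Coordinates: Extract the latent factor matrix from the fitted model with getW and store it as wave_coordinates.

- Downstream Analysis: Use the wave_coordinates for clustering (e.g., k-means) or visualization (e.g., UMAP).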

Data Presentation

Table 1: Comparison of Zero-Inflated Batch Effect Correction Tools

| Feature | MMUPHin | ComBat/ComBat-seq | ZINB-WaVE |

|---|---|---|---|

| Primary Goal | Meta-analysis integration | Batch effect removal | Dimensionality reduction |

| Data Type | Relative abundance/counts | ComBat: Gaussian; ComBat-seq: Counts | Raw counts (Zero-inflated) |

| Key Model | Zero-inflated empirical Bayes linear model | Empirical Bayes linear/Negative Binomial | Zero-Inflated Negative Binomial |

| Explicit Zero Model? | Yes (zero-inflation component) | No | Yes |

| Handles Continuous Batch? | Yes | No (Discrete batches only) | Via covariates |

| Output | Corrected abundance matrix | Corrected abundance matrix | Low-dimensional (WaVE) coordinates |

| Best For | Harmonizing multiple studies | Simple, known batch correction | Exploratory analysis, clustering |

Table 2: Common Diagnostic Metrics Post-Correction

| Metric | Formula/Description | Target Outcome |

|---|---|---|

| Principal Variance Component Analysis (PVCA) Batch Variance | Proportion of variance attributed to batch factor. | Decrease after correction. |

| Average Silhouette Width by Batch | Measures batch mixing (range: -1 to 1). | Closer to 0 (from positive values). |

| Per-feature Mean/SD Plot | Visualization of variance stabilization. | Reduced spread around the mean. |

| k-NN Batch Effect Score | Proportion of nearest neighbors from same batch. | Decrease towards 1/(number of batches). |
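The k-NN score in the last row is straightforward to compute from any distance matrix. The Python sketch below is one simple reading of the metric (the mean fraction of each sample's k nearest neighbors that share its batch); the function name and toy matrix are hypothetical.

```python
def knn_same_batch_fraction(dist, batches, k=1):
    """Mean fraction of each sample's k nearest neighbors that share its batch."""
    n = len(batches)
    total = 0.0
    for i in range(n):
        # Rank all other samples by distance to sample i and keep the k closest.
        neighbors = sorted((j for j in range(n) if j != i), key=lambda j: dist[i][j])[:k]
        total += sum(batches[j] == batches[i] for j in neighbors) / k
    return total / n

# Toy matrix: samples 0-1 are close to each other, as are samples 2-3.
toy_dist = [
    [0.0, 0.1, 0.8, 0.8],
    [0.1, 0.0, 0.8, 0.8],
    [0.8, 0.8, 0.0, 0.1],
    [0.8, 0.8, 0.1, 0.0],
]
before = knn_same_batch_fraction(toy_dist, ["A", "A", "B", "B"], k=1)
after = knn_same_batch_fraction(toy_dist, ["A", "B", "A", "B"], k=1)
print(before, after)
```

A score near 1 means neighborhoods are dominated by a single batch. With balanced batches and good mixing, the score should fall toward the value in the table's Target Outcome column.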

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| High-Quality Metadata Template | Standardized sheet to record batch covariates (sequencing run, extraction kit, operator, sample type). Critical for all tools. |

| Negative Control Samples | Used to estimate and subtract background/kitome contamination (e.g., with decontam R package) before batch correction. |

| Mock Community Standards | Even microbial mixture used to diagnose and quantify batch effects in sequencing and bioinformatics pipelines. |

| Variance Stabilizing Transformation (VST) Algorithm | (e.g., from DESeq2). Preprocessing step to make Gaussian-based tools (like standard ComBat) more applicable to count data. |

| PERMANOVA Test (vegan::adonis2) | Statistical test to quantify the variance (R²) explained by batch before and after correction. |

Workflow & Relationship Diagrams

Tool Selection Workflow for Batch Correction

Typical Analysis Pathways for Different Tools

Technical Support Center: Troubleshooting Guides & FAQs


FAQs & Troubleshooting

Q1: My data has many zeros (zero-inflation). Which batch correction method should I choose? A: For zero-inflated microbiome data (e.g., 16S rRNA sequencing counts), traditional variance-stabilizing methods may fail. We recommend:

- In R: Use PLSDAbatch::PLSDA_batch() after a centered log-ratio (CLR) transformation on non-zero values, or employ sva::ComBat_seq(), which is designed for count data.

- In Python: Use a ComBat implementation such as scanpy.pp.combat on CLR-transformed data, or bbknn for integration in a UMAP space.

- Troubleshooting: If correction creates negative or non-integer "pseudo-counts," ensure you are using a count-specific method (like ComBat-seq) and interpret results for downstream analyses like PERMANOVA with caution.

Q2: After batch correction, my biological signal seems diminished. What went wrong? A: This indicates potential over-correction.

- Diagnosis: Check PCA/PCoA plots before and after correction. Use negative control features (e.g., housekeeping genes, expected stable taxa) if available.

- Solution: Pass a model matrix of your biological conditions of interest through the mod parameter of sva::ComBat (or the covariates argument of scanpy.pp.combat). This protects the primary variable from being removed.

Q3: I get "Error in prcomp" or "SVD did not converge" errors during correction. A: This is often due to constant or near-constant features across samples after filtering.

- Fix: Aggressively filter low-variance or low-prevalence features before correction. For microbiome data, remove OTUs/ASVs present in less than 10-20% of samples.

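The filtering step can be sketched as follows. This is a minimal Python illustration, assuming the feature table is held as a dictionary mapping each feature ID to its per-sample counts; the 20% threshold matches the upper end of the range given above, and the names and toy counts are hypothetical.

```python
def prevalence_filter(table, min_prevalence=0.20):
    """Keep features present (count > 0) in at least min_prevalence of samples.

    Constant features are dropped as well, since zero-variance columns are a
    common trigger for PCA/SVD failures.
    """
    kept = {}
    for feature, counts in table.items():
        prevalence = sum(c > 0 for c in counts) / len(counts)
        is_constant = len(set(counts)) == 1
        if prevalence >= min_prevalence and not is_constant:
            kept[feature] = counts
    return kept

# Toy table with 10 samples per feature.
table = {
    "ASV1": [4, 0, 7, 12, 3, 9, 0, 5, 8, 2],   # present in 8/10 samples
    "ASV2": [0, 0, 0, 0, 6, 0, 0, 0, 0, 0],    # present in 1/10 samples
    "ASV3": [5, 5, 5, 5, 5, 5, 5, 5, 5, 5],    # constant across samples
}
filtered = prevalence_filter(table, min_prevalence=0.20)
print(sorted(filtered))
```

Filtering before correction also reduces the share of zeros in the table, which tends to stabilize the downstream model fits.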

Q4: How do I validate that batch correction worked for my dataset? A: Use a combination of visual and quantitative diagnostics.

- Visual: PCA/PCoA plots colored by Batch and by Condition.

- Quantitative: Perform a statistical test on the association between batch and principal components before and after correction.

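One simple quantitative check is the fraction of a principal component's variance that is explained by batch (the R² of a one-way ANOVA). The Python sketch below assumes the PC1 scores have already been computed elsewhere, before and after correction; the scores shown are made-up toy values.

```python
from statistics import mean

def variance_explained_by_batch(scores, batches):
    """R2 of a one-way ANOVA of `scores` on `batches` (between-batch SS / total SS)."""
    grand = mean(scores)
    ss_total = sum((s - grand) ** 2 for s in scores)
    ss_between = 0.0
    for b in set(batches):
        vals = [s for s, bb in zip(scores, batches) if bb == b]
        ss_between += len(vals) * (mean(vals) - grand) ** 2
    return ss_between / ss_total if ss_total > 0 else 0.0

batches = ["A", "A", "A", "B", "B", "B"]
pc1_before = [-2.1, -1.9, -2.0, 2.0, 1.9, 2.1]   # PC1 separates the batches
pc1_after = [0.5, -0.4, 0.1, -0.2, 0.3, -0.3]    # batches overlap on PC1

r2_before = variance_explained_by_batch(pc1_before, batches)
r2_after = variance_explained_by_batch(pc1_after, batches)
print(round(r2_before, 3), round(r2_after, 3))
```

Running the same function with the biological condition as the grouping variable gives a companion check that the signal of interest was not removed along with the batch effect.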

Table 1: Comparison of Batch Correction Methods for Zero-Inflated Data

| Method | Language/Package | Input Data Type | Handles Zero-Inflation? | Key Parameter for Protection |

|---|---|---|---|---|

| ComBat | R (sva), Python (scanpy) | Continuous (e.g., logCPM, CLR) | No (requires pre-transform) | mod: Model matrix for desired variables |

| ComBat-seq | R (sva) | Raw Counts | Partially (negative binomial model; no explicit zero-inflation term) | group / covar_mod: Condition and covariates to protect |

| Harmony | R (harmony), Python (harmonypy) | PCA Embedding | Indirectly (post-PCA) | theta: Diversity clustering penalty |

| MMUPHin | R (MMUPHin) | Microbial Counts | Yes (zero-inflated model within a meta-analysis framework) | covariates: Variables to preserve |

| limma removeBatchEffect | R (limma) | Continuous | No (requires pre-transform) | design: Design matrix with conditions |

Table 2: Typical Impact of Pre-processing on Zero-Inflation

| Pre-processing Step | % Zeros in Example Dataset (Before) | % Zeros in Example Dataset (After) | Recommended For |

|---|---|---|---|

| Raw ASV Counts | 65.2% | 65.2% | Baseline |

| Prevalence Filtering (>20%) | 65.2% | 58.7% | All workflows |

| CLR Transformation (w/ pseudocount) | 58.7% | 0%* | Distance-based analyses |

| Rarefaction | 65.2% | 65.2% | Library size normalization |

*Zeros are replaced with log-transformed pseudocount values; structure is altered.

Experimental Protocols

Protocol 1: Batch Correction for Microbiome Counts using ComBat-seq in R

- Input: Raw ASV/OTU count table (matrix), sample metadata with 'Batch' and 'Condition' columns.

- Filtering: Remove features with prevalence < 20% across all samples.

- Model Setup: ComBat-seq preserves the biological condition through its group argument. Reserve covar_mod for additional covariates (built with model.matrix) and do not repeat Condition there, since duplicating it can make the design matrix rank-deficient.

- Correction: Apply ComBat-seq: corrected_counts <- ComBat_seq(counts=filtered_matrix, batch=metadata$Batch, group=metadata$Condition). The counts matrix must be arranged as features x samples.

- Validation: Generate PCoA plots (Bray-Curtis) on pre- and post-correction data, colored by Batch and Condition.

Protocol 2: Integrating Multiple Batches in Python using Harmony on CLR-transformed Data

- Input: Filtered ASV count table (pandas DataFrame).

- Transform: Apply CLR transformation using skbio.stats.composition.clr after replacing zeros (e.g., with a pseudocount), since clr requires strictly positive values.

- Dimensionality Reduction: Perform PCA on the CLR-transformed matrix using sklearn.decomposition.PCA (retain top 50 PCs).

- Integration: Run Harmony: harmony_out = harmonypy.run_harmony(pca_embedding, meta_data, 'Batch').

- Downstream Analysis: Use the Harmony-corrected embeddings (harmony_out.Z_corr.T) for clustering or UMAP visualization.

Visualizations

Title: Batch Correction Workflow for Microbiome Data

Title: Choosing a Batch Correction Method

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Batch Effect Correction in Microbiome Research

| Item | Function in Workflow | Example/Note |

|---|---|---|

| sva Package (R) | Implements ComBat & ComBat-seq for empirical Bayes framework correction. | Core tool for most batch correction tasks. |

| harmony Package (R/Py) | Integrates datasets using iterative PCA and clustering, suitable for single-cell or microbial profiles. | Effective when batches have complex, non-linear differences. |

| MMUPHin Package (R) | Meta-analysis framework specifically designed for microbial community data. | Handles zero-inflation and phylogenetic structure. |

| CLR Transformation | Compositional data transform that handles zeros via pseudocount. | Converts counts to Euclidean space. compositions::clr (R), skbio.stats.composition.clr (Py). |

| PERMANOVA Test | Validates reduction in batch-associated variation using distance matrices. | Use vegan::adonis2 (R) or skbio.stats.distance.permanova (Py). |

| Negative Control Features | Set of features not expected to vary by biology. Used to diagnose over-correction. | Housekeeping genes (transcriptomics), ubiquitous microbial taxa (microbiome). |

| Positive Control Samples | Replicate samples across batches (e.g., pooled samples). | Gold standard for assessing technical variation. |

Troubleshooting Guides & FAQs

Q1: After using ComBat to correct for batch effects in my zero-inflated microbiome count data, my DESeq2 results show no significantly differentially abundant taxa. What could be wrong?

A: This is a common pitfall. DESeq2 models raw integer counts with a negative binomial distribution. Values corrected by ComBat are continuous and may be negative or non-integer, so feeding them into DESeq2 violates this assumption. Solution: Use a four-step pipeline: 1) Perform DESeq2's standard size factor estimation and dispersion estimation on the raw counts. 2) Use the varianceStabilizingTransformation function on the raw data to obtain VST data. 3) Apply ComBat only to this VST-transformed data (using the sva package) for batch correction. 4) Use the corrected VST data for downstream analysis and visualization only. For the differential abundance test itself, keep the raw counts and include batch in the design formula (e.g., ~ batch + group). Do not feed ComBat-corrected counts back into DESeq2's Wald or LRT tests.

Q2: When using ANCOM-BC, should I correct for batch effects before or after running the analysis?

A: ANCOM-BC can adjust for batch directly in its model, so you should not pre-correct your data. Instead, include the batch variable in the formula argument of the ancombc() function (e.g., formula = "group + batch"); in ancombc2() the corresponding argument is fix_formula. The model then estimates the group effect while adjusting for the batch covariate, which is statistically more rigorous than a separate correction step. Pre-correcting can distort the compositional structure of the data that ANCOM-BC is designed to handle.

Q3: I get convergence warnings or errors when running ANCOM-BC on my dataset with many zeros. How can I fix this? A: Convergence issues often stem from sparse data with many zeros or highly unbalanced groups. Try the following (argument names differ between ANCOMBC releases, so check them against the documentation of your installed version):

- Increase the `max_iter` parameter (e.g., from 100 to 200).

- Enable zero handling by setting `zero_cut = 0.90` (ignores taxa with >90% zeros) and `lib_cut = 1000` (ignores samples with a library size below 1,000 reads).

- Use a smaller pseudocount via the `pseudo` parameter (e.g., 0.5) when adding it to zeros.

- Ensure your group and batch variables are factors with appropriate reference levels.
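
The two filtering thresholds in the list above can be illustrated with a small standalone sketch. It only mirrors the logic described in the text (drop low-depth samples, then drop taxa that are mostly zeros); it is not ANCOM-BC's internal code, and the function name `filter_table`, the input layout, and the order of the two steps are assumptions made for the example.

```python
def filter_table(table, zero_cut=0.90, lib_cut=1000):
    """Apply library-size and zero-proportion filters to a count table.

    table: dict mapping taxon name -> list of counts, one entry per sample.
    Returns (filtered_table, kept_sample_indices).
    Assumption: samples are filtered first, then taxa are evaluated on the
    remaining samples.
    """
    n_samples = len(next(iter(table.values())))
    lib_sizes = [sum(counts[j] for counts in table.values())
                 for j in range(n_samples)]
    keep_samples = [j for j, size in enumerate(lib_sizes) if size >= lib_cut]
    if not keep_samples:
        return {}, []

    filtered = {}
    for taxon, counts in table.items():
        kept = [counts[j] for j in keep_samples]
        zero_fraction = sum(1 for c in kept if c == 0) / len(kept)
        if zero_fraction <= zero_cut:  # taxa above the cutoff are ignored
            filtered[taxon] = kept
    return filtered, keep_samples


# Hypothetical toy table: the third sample has a very small library.
toy = {
    "taxonA": [500, 700, 10, 900],
    "taxonB": [0, 0, 0, 600],
    "taxonC": [800, 900, 5, 0],
}
print(filter_table(toy, zero_cut=0.5, lib_cut=1000))
```

Running the filter yourself before fitting the model makes it easy to see how many taxa and samples each threshold removes, which helps when tuning the cutoffs for a sparse dataset.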

Q4: How do I choose between VST (DESeq2) and CLR (ANCOM-BC) transformation for batch correction prior to Beta Diversity analysis? A: The choice depends on your downstream DA tool.

- For a DESeq2-centric pipeline, use VST on raw counts, then correct with ComBat. VST stabilizes the variance across the range of mean abundances, making the data more homoscedastic for linear batch correction methods.

- For an ANCOM-BC/compositional-aware pipeline, use CLR (after adding a proper pseudocount). CLR expresses each taxon relative to the sample's geometric mean, which respects the compositional nature of the data. Batch correct the CLR-transformed data using `removeBatchEffect` from `limma` or a similar method.

- Critical: Do not mix transformations within one analysis (for example, correcting VST values and then computing distances on CLR values). Stick to one coherent pipeline.
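
To show what a linear batch correction on transformed values does in its simplest form, the sketch below centers one feature within each batch and restores the overall mean. It is a deliberately simplified stand-in, not what limma's removeBatchEffect or ComBat computes internally: it ignores any design matrix, so it would also remove biological differences that coincide with batch. The function name `center_batches` is hypothetical.

```python
def center_batches(values, batches):
    """Remove per-batch mean shifts from one feature.

    values:  per-sample values for a single taxon (e.g., CLR or VST values).
    batches: batch label for each sample, same length as values.
    Each batch's mean is subtracted and the overall mean is added back.
    """
    grand_mean = sum(values) / len(values)
    sums, counts = {}, {}
    for value, batch in zip(values, batches):
        sums[batch] = sums.get(batch, 0.0) + value
        counts[batch] = counts.get(batch, 0) + 1
    batch_mean = {b: sums[b] / counts[b] for b in sums}
    return [v - batch_mean[b] + grand_mean for v, b in zip(values, batches)]


# Hypothetical CLR values for one taxon measured in two batches.
print(center_batches([1.0, 2.0, 5.0, 6.0], ["a", "a", "b", "b"]))
```

Because the correction is additive, it only makes sense on a scale where batch shifts are roughly additive, which is one reason the text insists on staying within a single transformation.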

Q5: My PCoA plot shows good batch correction, but my differential abundance results seem over-inflated. Are there specific post-adjustment diagnostics? A: Yes. After batch adjustment, always:

- Re-check distributions: Plot the density of corrected values (VST or CLR). Look for residual bimodality or strange artifacts that might indicate over-correction.

- Positive Control Sanity Check: If available, compare the effect size of known "housekeeping" taxa (non-differentially abundant) across batches before and after correction. Their log-fold changes should move closer to zero.

- Negative Control Assessment: In the absence of a known true signal, use negative control features (spiked-in synthetic standards or ubiquitous taxa in a mock experiment) to estimate the false discovery rate empirically post-correction.
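
The negative control assessment can be reduced to a simple count: among features that should not differ, how many does the DA test call significant after correction? The sketch below computes that share. It is an illustration under stated assumptions: the function name, the dictionary layout, and the 0.05 cutoff are hypothetical, and the quantity is a false positive rate among the controls, which serves only as a rough proxy for error control across the full feature set.

```python
def empirical_false_positive_rate(p_values, negative_controls, alpha=0.05):
    """Share of negative-control features called significant.

    p_values:          dict mapping feature name -> (adjusted) p-value.
    negative_controls: names of features assumed to carry no true signal.
    """
    tested = [name for name in negative_controls if name in p_values]
    if not tested:
        raise ValueError("no negative control features were tested")
    hits = sum(1 for name in tested if p_values[name] < alpha)
    return hits / len(tested)


# Hypothetical post-correction results.
results = {"ctl1": 0.01, "ctl2": 0.40, "ctl3": 0.20, "ctl4": 0.03, "taxX": 0.001}
print(empirical_false_positive_rate(results, ["ctl1", "ctl2", "ctl3", "ctl4"]))
```

Comparing this share before and after batch adjustment gives a quick indication of whether the correction step is manufacturing signal.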

Experimental Protocols

Protocol 1: Integrated DESeq2 and ComBat-SVA Pipeline for Zero-Inflated Data

- Input: Raw OTU/ASV count table (`count_data`), sample metadata with `group` and `batch` factors.

- DESeq2 Object Creation: `dds <- DESeqDataSetFromMatrix(countData = count_data, colData = metadata, design = ~ batch + group)`

- Pre-filtering: Remove taxa with fewer than 10 reads total. `dds <- dds[rowSums(counts(dds)) >= 10, ]`

- VST Transformation: `vst_data <- vst(dds, blind=FALSE)`

- Batch Correction with ComBat: `corrected_vst <- ComBat(dat = assay(vst_data), batch = metadata$batch, mod = model.matrix(~ group, data = metadata))`. Use `ComBat` here rather than `ComBat_seq`: `ComBat_seq` expects raw integer counts, whereas VST output is continuous.

- Differential Analysis: Use the original `dds` object with the design `~ batch + group` for the Wald test: `dds <- DESeq(dds); res <- results(dds, contrast=c("group", "A", "B"))`

- Visualization: Use `corrected_vst` for PCoA and heatmaps.

Protocol 2: ANCOM-BC with Integrated Batch Covariate

- Input: Raw count table and metadata as above.

- Data Preprocessing: Run `ancombc2` with a structured formula that includes both `group` and `batch`.

- Extract Results: `res <- out$res`, where `out` is the object returned by `ancombc2`, containing log-fold changes, standard errors, p-values, and q-values for the `group` variable, adjusted for `batch`.

Table 1: Comparison of DA Tools Post-Batch Adjustment

| Feature | DESeq2 + Post-VST ComBat | ANCOM-BC with Covariate |

|---|---|---|

| Data Input | Raw Counts | Raw Counts |

| Batch Handling | Corrects transformed data (VST) | Models batch as a covariate |

| Output Metric | Log2 Fold Change (LFC) | Log Fold Change (natural) |

| Zero Inflation | Handled via NB model + pre-filtering | Built-in zero-cutoff & pseudo-count |

| Best For | Large effect sizes, well-powered studies | High-sparsity data, strict FDR control |

| Key Parameter | blind=FALSE in vst() | zero_cut, lib_cut |

Table 2: Recommended Pipeline Based on Data Characteristics

| Data Characteristic | Recommended Pipeline | Rationale |

|---|---|---|

| Sparsity > 80% | ANCOM-BC with batch covariate | Robust compositional method for zeros |

| Strong Batch Effect | DESeq2 VST → ComBat | Powerful linear correction on stabilized variance |

| Multiple Batches | ANCOM-BC with covariate | Simpler model interpretability |

| Paired Design | DESeq2 with design = ~ subject + batch + group | Directly controls for pairing |
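
Of the criteria in Table 2, sparsity is the only one stated as a number, so it is easy to check before choosing a pipeline. The sketch below computes the fraction of zero cells in a count table and compares it with the 80% threshold from the table. The function names and the list-of-rows layout are assumptions for illustration; the other rows of the table (batch strength, number of batches, pairing) still call for judgment.

```python
def sparsity(table):
    """Fraction of zero entries in a count table given as a list of rows."""
    cells = [count for row in table for count in row]
    return sum(1 for count in cells if count == 0) / len(cells)


def recommend_for_sparsity(table, cutoff=0.80):
    """Return Table 2's suggestion for very sparse data, else None."""
    if sparsity(table) > cutoff:
        return "ANCOM-BC with batch covariate"
    return None  # the remaining rows of Table 2 depend on study design


# Hypothetical table: 2 taxa x 5 samples, 9 of 10 cells are zero.
toy = [[0, 0, 0, 0, 5], [0, 0, 0, 0, 0]]
print(sparsity(toy), recommend_for_sparsity(toy))
```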

Visualizations

Diagram 1: DESeq2-ComBat Integrated Pipeline

Diagram 2: ANCOM-BC Batch Covariate Modeling

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Microbiome DA Pipelines

| Item | Function in Pipeline | Example/Note |

|---|---|---|

| R Package: DESeq2 | Core engine for negative binomial-based DA testing on raw counts. | Use DESeqDataSetFromMatrix, critical for size factor estimation. |

| R Package: ANCOMBC | Compositional DA analysis that accounts for the microbial count structure. | Function ancombc2 is the primary workhorse. |

| R Package: sva | Contains the ComBat and ComBat_seq functions for empirical batch correction. | ComBat_seq is designed for count data. |

| R Package: limma | Provides removeBatchEffect function, useful for CLR-transformed data. | Flexible for complex designs. |

| Silva/GTDB Database | For taxonomic assignment of 16S rRNA sequences, required for interpretation. | Version consistency is key for reproducibility. |

| Mock Community Standards | Positive controls spiked into samples to assess DA false positives post-correction. | e.g., ZymoBIOMICS Microbial Community Standard. |

| High-Fidelity Polymerase | For accurate PCR amplification during library prep, minimizing technical batch variation. | Reduces batch effect at source. |

| Unique Molecular Indexes (UMIs) | Reagent system to correct for PCR amplification biases, reducing technical noise. | Mitigates a major source of variation pre-analysis. |

Navigating Pitfalls and Optimizing Your Correction Pipeline for Robust Results

Troubleshooting Guide: FAQs for Batch Effect Correction in Microbiome Studies

Q1: After applying ComBat or similar adjustment, my differentially abundant taxa have completely changed. Have I over-corrected and removed true biological signal? A: Possibly. A wholesale change in the list of differentially abundant taxa is a classic warning sign of over-correction, although some change is expected whenever a real batch effect is removed. Over-correction occurs when the batch effect model is too aggressive, often because batch is confounded with a biological condition of interest. To diagnose:

- Check Design: Create a table of sample counts per batch and condition.

| | Condition A | Condition B | Total |

|---|---|---|---|

| Batch 1 | 15 | 0 | 15 |

| Batch 2 | 0 | 15 | 15 |

| Total | 15 | 15 | 30 |

Table 1: Example of a completely confounded design where batch and condition are inseparable, making batch correction high-risk for over-correction.

- Protocol - Positive Control Spike-In: Use a synthetic microbial community (e.g., ZymoBIOMICS Microbial Community Standard) spiked into your extraction blanks at varying dilutions across batches. After batch correction, these invariant control taxa should still be detectable and not appear differentially abundant. Their disappearance suggests over-correction.

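- Solution: Use methods that allow for a `batch ~ condition` interaction, or use supervised batch correction where the model is informed about the condition (for example, by passing condition as a covariate to the correction method) so that biological differences are protected. Tools like `fastMNN` (from the batchelor package, available to Seurat users through SeuratWrappers) or `Harmony` can be more conservative. If confounding is total, batch correction is not statistically possible; acknowledge it as a study limitation.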
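
The design check described above is easy to automate before any correction is attempted. The following sketch builds the batch-by-condition count table and reports whether every batch contains a single condition, in which case the condition effect cannot be separated from batch. The function names are hypothetical, and the rule covers only complete confounding; partial imbalance still needs to be inspected by eye.

```python
from collections import Counter


def design_table(batches, conditions):
    """Count samples for each (batch, condition) pair."""
    return Counter(zip(batches, conditions))


def is_fully_confounded(batches, conditions):
    """True when each batch holds exactly one condition.

    Requires at least two batches and two conditions; otherwise there is
    nothing to confound.
    """
    per_batch = {}
    for batch, condition in zip(batches, conditions):
        per_batch.setdefault(batch, set()).add(condition)
    if len(per_batch) < 2 or len(set(conditions)) < 2:
        return False
    return all(len(found) == 1 for found in per_batch.values())


# The completely confounded design from the table above.
batches = ["Batch 1"] * 15 + ["Batch 2"] * 15
conditions = ["A"] * 15 + ["B"] * 15
print(design_table(batches, conditions))
print(is_fully_confounded(batches, conditions))
```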

Q2: My PCA plot still shows strong batch clustering after using zero-inflated Gaussian (ZIG) or DESeq2's median ratio method. Is this under-correction? A: Likely yes. Standard normalization methods often under-correct for technical variation in zero-inflated data.

- Diagnosis: Perform a PERMANOVA test on your distance matrix (e.g., Aitchison) using batch as a factor. A significant p-value (e.g., p < 0.05) confirms residual batch effects.

- Protocol - Batch-Marker Detection:

- Apply a Differential Abundance (DA) test (e.g., `ANCOM-BC`, `Maaslin2`) with batch as the sole predictor.

- Identify taxa with FDR < 0.05 as "batch-marker" taxa.

- After your primary batch correction, re-test these batch-markers. If many remain significant, under-correction is present.

- Solution: Employ a two-step or joint model:

- For count-based models (DESeq2, edgeR): Include `batch` as a covariate in your design formula (e.g., `~ batch + condition`).

- For compositional data: Apply a zero-imputation method (like `cmultRepl` from the `zCompositions` R package) followed by a centered log-ratio (CLR) transformation, then apply `ComBat` or `removeBatchEffect` on the CLR-transformed data.
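
The PERMANOVA diagnosis used in this answer can be sketched from first principles. The Aitchison distance between two samples equals the Euclidean distance between their CLR vectors, so the example below assumes the input rows are already CLR-transformed. It is a minimal one-factor illustration with hypothetical function names, not a replacement for vegan::adonis2 or skbio.stats.distance.permanova, and it omits covariates and strata.

```python
import math
import random


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def pseudo_f(dist, labels):
    """One-factor PERMANOVA pseudo-F from a square distance matrix."""
    n = len(labels)
    groups = {}
    for index, label in enumerate(labels):
        groups.setdefault(label, []).append(index)
    ss_total = sum(dist[i][j] ** 2
                   for i in range(n) for j in range(i + 1, n)) / n
    ss_within = 0.0
    for members in groups.values():
        ss_within += sum(dist[i][j] ** 2
                         for k, i in enumerate(members)
                         for j in members[k + 1:]) / len(members)
    ss_between = ss_total - ss_within
    a = len(groups)
    return (ss_between / (a - 1)) / (ss_within / (n - a))


def permanova(samples, labels, n_perm=999, seed=0):
    """Return (pseudo-F, permutation p-value) for batch labels."""
    dist = [[euclidean(s, t) for t in samples] for s in samples]
    observed = pseudo_f(dist, labels)
    rng = random.Random(seed)
    shuffled = list(labels)
    exceed = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any link between samples and batch
        if pseudo_f(dist, shuffled) >= observed:
            exceed += 1
    return observed, (exceed + 1) / (n_perm + 1)


# Hypothetical CLR-like profiles: batch "b" is shifted by 10 on both axes.
base = [(0, 0), (0, 1), (1, 0), (1, 1), (0, 2), (1, 2)]
samples = base + [(x + 10, y + 10) for x, y in base]
labels = ["a"] * 6 + ["b"] * 6
print(permanova(samples, labels))
```

Running the same test on the data before and after correction shows whether the share of variation attributable to batch has actually dropped.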

Q3: My negative controls show high levels of specific taxa after correction. Has signal distortion occurred? A: Yes. This indicates distortion, often from inappropriate variance stabilization or transformation applied to sparse data, amplifying noise.

- Diagnosis: Inspect the CLR-transformed or variance-stabilized counts of your negative control samples. They should center near zero for all taxa. Systematic positive deviations are problematic.

- Protocol - Negative Control Monitoring:

- Compute the mean CLR value for each taxon across all negative control samples.

- Flag any taxon where the mean absolute CLR value > 2 (or your chosen threshold) as a potential contaminant.

- Post-correction, regenerate this list. The introduction of new taxa to this list indicates correction-induced distortion.

- Solution: Use batch correction methods designed for sparse data:

- BatchBala (R package): Specifically designed for compositional and zero-inflated microbiome data.

- MMUPHin (R/Bioconductor): Meta-analysis Methods with a Uniform Pipeline for Heterogeneity in microbiome studies; its `adjust_batch` function corrects batch and study effects in microbial abundance profiles.

- Prerequisite Filtering: Aggressively filter contaminants identified in controls using `decontam` (R package) before attempting batch correction.
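
The negative control monitoring protocol above amounts to building one list of flagged taxa before correction and one after, then comparing them. The sketch below does exactly that. It assumes the threshold applies to the absolute value of each taxon's mean CLR across negative control samples (the text could also be read as the mean of absolute values), and all names and the data layout are hypothetical.

```python
def flag_taxa(clr_by_taxon, threshold=2.0):
    """Taxa whose mean CLR across negative controls exceeds the threshold.

    clr_by_taxon: dict mapping taxon -> list of CLR values, one per
    negative-control sample.
    """
    flagged = set()
    for taxon, values in clr_by_taxon.items():
        mean_clr = sum(values) / len(values)
        if abs(mean_clr) > threshold:
            flagged.add(taxon)
    return flagged


def newly_flagged(before, after, threshold=2.0):
    """Taxa flagged after correction that were not flagged before it."""
    return flag_taxa(after, threshold) - flag_taxa(before, threshold)


# Hypothetical CLR values in two negative-control samples.
before = {"t1": [2.5, 3.0], "t2": [0.1, -0.2], "t3": [-0.5, 0.4]}
after = {"t1": [2.4, 2.9], "t2": [2.2, 2.6], "t3": [-0.3, 0.2]}
print(newly_flagged(before, after))
```

Any taxon returned by `newly_flagged` was pushed above the threshold by the correction itself and is worth inspecting before downstream analysis.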

Experimental Workflow for Batch Correction Validation

Diagram 1: Batch correction validation workflow for microbiome data.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| ZymoBIOMICS Microbial Community Standard | Defined mock community of known composition. Serves as a positive control for DNA extraction, sequencing, and to monitor over-correction when spiked across batches. |

| ZymoBIOMICS Spike-in Control (I, II) | Whole-cell spike-in of bacterial species that are uncommon in human-associated samples. Added in known quantities to calibrate absolute abundance and to help distinguish technical zeros from biological zeros. |

| DNA Extraction Blanks | Reagents processed without sample. Critical for identifying kitome contaminants and diagnosing post-correction distortion. |

| PCR Negative Controls | Water or buffer taken through amplification. Identifies cross-contamination during library prep, a key batch-specific technical effect. |

| Homogenization Buffer/Saline | Consistent solution for sample resuspension. Reduces pre-extraction batch variability in sample processing steps. |

| Magnetic Bead-based Cleanup Kits (e.g., AMPure XP) | For consistent library purification. Reduces batch effects in final library quality and concentration. |

| Index/Barcode Primers (Dual-Indexed) | Unique dual indexing gives each sample its own pair of indexes, so reads affected by index hopping can be identified and discarded. Reduces sample cross-talk and lane-specific batch effects in multiplexed sequencing runs. |

Frequently Asked Questions (FAQs) & Troubleshooting Guides