Beyond the Hype: Advanced Strategies for Robust False Discovery Rate Control in Microbiome Differential Abundance Analysis

This article provides a comprehensive guide for researchers and bioinformaticians tackling the critical challenge of false discovery rate (FDR) control in microbiome differential abundance (DA) analysis.

Beyond the Hype: Advanced Strategies for Robust False Discovery Rate Control in Microbiome Differential Abundance Analysis

Abstract

This article provides a comprehensive guide for researchers and bioinformaticians tackling the critical challenge of false discovery rate (FDR) control in microbiome differential abundance (DA) analysis. We move beyond foundational concepts to explore current methodological innovations, practical implementation strategies, and comparative evaluations of leading tools. We dissect why standard FDR corrections often fail in microbiome data due to compositionality, sparsity, and correlation structures. The article then details modern adaptive, conditional, and ensemble FDR methods, offers troubleshooting for real-world datasets, and validates approaches through benchmark studies. Our goal is to empower scientists to produce more reproducible and statistically sound discoveries in microbial ecology and translational research.

Why FDR Control Fails in Microbiome Studies: Understanding Compositionality, Sparsity, and Dependence

The High Stakes of False Discoveries in Translational Microbiome Research

Technical Support Center: Troubleshooting Differential Abundance (DA) Analysis

FAQs & Troubleshooting Guides

Q1: My DA analysis (using a common tool like DESeq2 or edgeR on 16S data) yields hundreds of significant taxa, but many lack biological plausibility. What could be the cause? A: This is a classic sign of poor false discovery rate (FDR) control due to inappropriate data handling. The primary culprits are:

- Ignoring Compositionality: Raw count data from microbiome experiments is compositional (relative). Applying methods designed for absolute counts without proper normalization (e.g., CSS, TMM, or a log-ratio transformation) violates model assumptions and inflates false positives.

- Overdispersion Neglect: Microbiome data is highly overdispersed. Tools that don't model this (e.g., standard t-tests on CLR-transformed data) will produce spurious results.

- Solution: Use compositionally aware tools (like

ANCOM-BC,ALDEx2, orMaAsLin 2with appropriate transforms) or ensure your chosen model (e.g., negative binomial in DESeq2) is paired with a proper normalization strategy for compositional data.

Q2: My pipeline for shotgun metagenomics functional analysis shows many differentially abundant metabolic pathways, but validation fails. How do I improve reliability? A: Functional profiling adds layers of uncertainty. The issue often lies in the unit of analysis.

- Problem: Analyzing pathway abundances derived from tools like HUMAnN3 as simple features compounds errors from taxonomic alignment, gene family inference, and pathway reconstruction.

- Solution: Implement stricter multi-level FDR correction. First, control FDR at the gene family level. Then, only aggregate significant gene families into pathways. This prevents a single poorly quantified gene from inflating an entire pathway's significance. Consider stability selection or permutation-based frameworks.

Q3: How do I handle zero-inflation and high sparsity in my dataset during DA testing? A: Excessive zeros (from biological absence or undersampling) distort distributions.

- Common Mistake: Simply adding a small pseudocount can create false associations for rare taxa.

- Recommended Protocol:

- Pre-filtering: Remove features present in less than 10-20% of samples in the smallest group. This reduces noise.

- Tool Selection: Employ models designed for zero-inflated data, such as:

ZINQ(Zero-Inflated Negative Binomial Quantile)MAST(for pre-processed, normalized counts)LinDA(Linear model for Differential Abundance analysis), which is robust to zeros.

- Validation: Use a non-parametric test (e.g., PERMANOVA on a robust distance matrix like Aitchison) as a sanity check on the global effect.

Q4: My cross-sectional study identifies many microbial biomarkers for a disease, but they fail to replicate in an independent cohort. What experimental and analytical steps can increase generalizability? A: This points to overfitting and batch effects.

- Critical Protocol: Combatting Batch Effects & Overfitting

- Design: Include technical controls and randomize sample processing.

- Pre-processing: Apply batch correction methods like

ComBat-seq(for counts) orMMUPHinafter DA testing, not before, to avoid introducing bias. Its use is primarily for meta-analysis. - Analysis:

- Use

q-valueorBenjamini-Hochbergprocedure for FDR control within your primary analysis. - For high-dimensional biomarker discovery, employ Stability Selection or Regularized Regression (LASSO) with nested cross-validation. The stability of a taxon's selection across many model subsamples is a stronger indicator than a single p-value.

- Mandatory: Hold out a complete validation cohort (different batch/location) from all training and parameter-tuning steps.

- Use

Experimental Protocol: A Robust Pipeline for Controlling FDR in 16S rRNA DA Analysis

Title: Protocol for Compositionally Aware, Confounder-Adjusted Differential Abundance Analysis.

Step 1: Data Curation & Normalization.

- Start with raw ASV/OTU counts. Apply a prevalence filter (retain features in >10% of samples).

- Do not rarefy. Instead, normalize using the CSS (Cumulative Sum Scaling) method (via

metagenomeSeq) or a log-ratio transformation (e.g., CLR using a geometric mean of detected features only).

Step 2: Model-Based DA Testing with Covariates.

- For case-control studies, use

ANCOM-BCorMaAsLin 2with the following parameters:MaAsLin 2: UseTSS(Total Sum Scaling) orCLRnormalization,LMmodel, andBHFDR correction.ANCOM-BC: Use its built-in bias correction and FDR control.

- Crucially: Include all relevant technical (sequencing depth, batch) and biological (age, BMI) covariates as fixed effects in the model formula.

Step 3: Aggregative & Non-Parametric Validation.

- Aggregate significant taxa at the genus or family level. Do their directionality changes make ecological sense?

- Perform a confirmatory global test using

PERMANOVA(adonis2, 999 permutations) on the Aitchison distance matrix of the full dataset. The primary grouping variable should be significant (p < 0.05).

Step 4: Independent Validation.

- Apply the model (coefficients, normalization factors) derived from the discovery cohort to a completely separate cohort's raw data. Calculate the predictive AUC. A drop in performance >15% indicates non-generalizable findings.

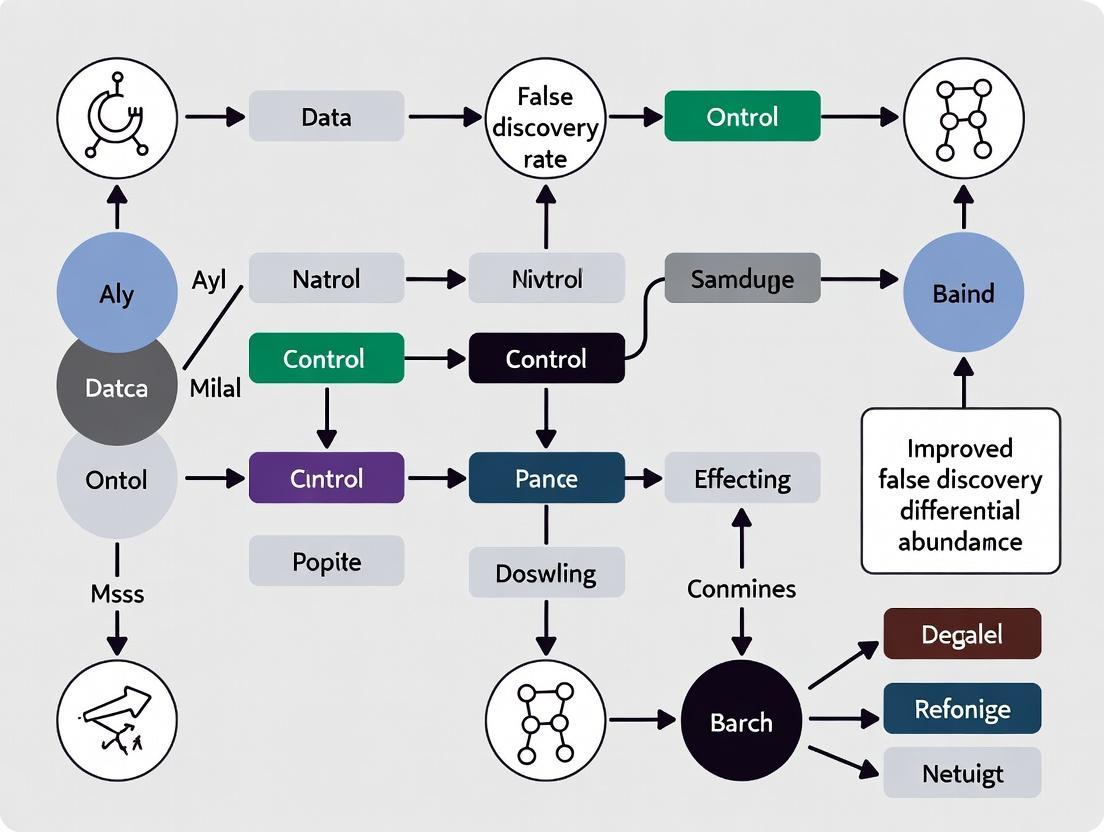

Visualizations

Title: Robust DA Analysis Workflow

Title: False Discovery Causes and Solutions

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Tool | Function in Controlling False Discoveries |

|---|---|

| ANCOM-BC (R Package) | Models sampling fraction and compositional bias to provide accurate FDR-controlled log-fold changes. |

| ALDEx2 (R Package) | Uses Monte Carlo sampling of Dirichlet distributions to account for compositionality, providing robust effect sizes. |

| q-value (R Package) | Estimates the proportion of true null hypotheses to provide less conservative FDR estimates than Benjamini-Hochberg. |

| MMUPHin (R Package) | Enables meta-analysis and batch correction of microbial community profiles, controlling for study-specific effects. |

Stability Selection (e.g., glmnet in R) |

Uses subsampling with LASSO to identify features consistently associated with an outcome, reducing false leads. |

Aitchison Distance (via robust::pivotCoord or vegan) |

A compositionally valid distance metric for PERMANOVA validation of DA results. |

| ZymoBIOMICS Microbial Community Standards | Defined mock communities essential for validating technical error rates and calibrating DA model thresholds. |

| Phusion HF DNA Polymerase | High-fidelity polymerase for library prep, minimizing PCR errors that create spurious sequence variants. |

| DADA2 / Deblur (Pipeline) | Sequence denoising algorithms that correct Illumina errors, reducing false positive ASVs/OTUs before DA. |

Defining FDR vs. Family-Wise Error Rate (FWER) in a High-Dimensional Context

Troubleshooting Guides & FAQs

Q1: I am analyzing a microbiome dataset with 500 taxa across 100 samples. When I use a method that controls the FWER (like Bonferroni), I get zero significant results. When I use FDR control (like Benjamini-Hochberg), I get 15 hits. Which result should I trust? A: This is a classic symptom of high-dimensional testing where signals are weak-to-moderate. FWER methods, which control the probability of any false positive, are overly conservative for exploratory 'discovery' screens like microbiome differential abundance (DA) analysis. FDR, which controls the proportion of false positives among declared discoveries, is more appropriate. Your FDR result (15 hits) is likely the more biologically plausible and useful outcome for downstream validation. You should proceed with these 15 candidates but confirm them with targeted, hypothesis-driven experiments.

Q2: My FDR-controlled DA analysis yields a list of significant genera, but subsequent validation experiments fail to confirm several of them. Is my FDR procedure faulty? A: Not necessarily. An FDR of 0.05 means that, on average, 5% of your discoveries are expected to be false. If you have 40 discoveries, 2 could be false leads. Other factors can increase false discoveries:

- Low Sample Size: Underpowered studies inflate the variance of effect estimates, leading to unstable p-values and unreliable FDR control.

- Violation of Test Assumptions: Many DA tools assume specific data distributions (e.g., negative binomial). Severe model misspecification can compromise p-value validity.

- Technical Artifacts: Batch effects, sequencing depth variation, and contamination can create spurious associations.

Troubleshooting Step: Apply a more robust FDR method like

q-value(which estimates the proportion of true null hypotheses) orIHW(Independent Hypothesis Weighting) that uses covariates (like abundance) to improve power. Always include sensitivity analyses in your pipeline.

Q3: How do I choose between different FDR-controlling procedures (e.g., Benjamini-Hochberg vs. Benjamini-Yekutieli) for my microbiome data? A: The choice depends on the assumptions about the dependency between your statistical tests.

- Use Benjamini-Hochberg (BH): This is the standard and recommended first choice. It controls FDR when test statistics are independent or positively dependent (a common scenario in omics data).

- Use Benjamini-Yekutieli (BY): This is much more conservative. Use it only if you have strong reason to believe your taxa abundances (and thus test statistics) exhibit arbitrary forms of negative dependency—this is less common. Protocol: For most microbiome DA analyses, the BH procedure is sufficient. Implement it as follows:

- Obtain p-values for all m hypotheses (e.g., for each taxon).

- Sort p-values in ascending order: ( p{(1)} \leq p{(2)} \leq ... \leq p_{(m)} ).

- Find the largest rank k where ( p_{(k)} \leq \frac{k}{m} \alpha ), where (\alpha) is your target FDR (e.g., 0.05).

- Declare the hypotheses ranked 1 to k as significant.

Q4: When performing multi-group or longitudinal comparisons, should I adjust the FDR within each contrast or globally across all tests? A: This is a critical decision that impacts error control.

- Global Adjustment (Recommended): Pool all p-values from all contrasts (e.g., Time1vsControl, Time2vsControl, Time1vsTime2) into a single pool and apply the FDR correction once. This controls the overall FDR across your entire experiment.

- Within-Group Adjustment: Applying FDR separately to each contrast inflates the overall false discoveries across the study.

Troubleshooting Protocol:

- Perform all planned pairwise or model-based statistical tests.

- Compile the resulting vector of m p-values, where m is (number of taxa * number of contrasts).

- Apply your chosen FDR procedure (e.g., BH) to this complete vector of m p-values.

- Report significance based on this globally adjusted q-values.

Q5: Can I use Storey's q-value directly on the p-values from my favorite DA tool (like DESeq2 for microbiome data)?

A: Yes, this is a valid and often powerful approach. The qvalue package in R/Bioconductor estimates the proportion (\pi_0) of truly null hypotheses from the p-value distribution, which can lead to more power than the standard BH procedure, especially when many taxa are truly differential.

Experimental Protocol:

- Run your DA model (e.g.,

DESeq2::DESeq,edgeR::glmQLFTest,Maaslin2) to obtain a p-value per feature. - In R, install the

qvaluepackage. library(qvalue); qobj <- qvalue(p_vector)- Discoveries are declared where

qobj$qvalues < FDR_threshold. - Critical Check: Always plot

plot(qobj)to inspect the p-value histogram and the (\pi_0) estimate. A flat histogram suggests reliable FDR control.

Data Presentation

Table 1: Comparison of Error Rate Control in High-Dimensional Testing

| Control Method | Target Error Rate | Stringency | Best Use Case in Microbiome Research | Key Limitation in High-Dimensions |

|---|---|---|---|---|

| Family-Wise Error Rate (FWER) | Probability of ≥1 false positive | Very High | Confirmatory studies, final validation steps, clinical trial endpoints | Severe loss of power (high Type II error) as number of tests (m) increases. |

| Bonferroni Correction | FWER | Extremely High | Same as above, when tests are independent. | Overly conservative, power ~ 1/m. |

| False Discovery Rate (FDR) | Expected proportion of false positives | Moderate | Exploratory DA analysis, feature selection for downstream work | Less strict; lists contain false positives but more true discoveries. |

| Benjamini-Hochberg (BH) | FDR | Standard | Default for most microbiome DA screens. | May be slightly conservative. |

| Storey's q-value | FDR (with π₀ estimation) | Adaptive | Large-scale screens where many features may be differential (e.g., strong intervention). | Requires well-behaved p-value distribution for π₀ estimation. |

Table 2: Typical Outcomes from a Simulation Study (m=1000 taxa, 50 truly differential)

| Error Control Method | Declared Discoveries | True Positives (Power) | False Positives | Achieved FDR |

|---|---|---|---|---|

| Bonferroni (FWER) | 15 | 12 | 3 | 0.20 (but controls FWER at 0.05) |

| BH (FDR=0.05) | 45 | 38 | 7 | 0.156 |

| q-value (FDR=0.05) | 48 | 40 | 8 | 0.167 |

| No Correction | 150 | 50 | 100 | 0.667 |

Experimental Protocols

Protocol 1: Standard FDR-Controlled Microbiome DA Analysis Workflow

Objective: Identify taxa differentially abundant between two groups with FDR control.

- Data Preprocessing: Normalize raw ASV/OTU counts using a method like Cumulative Sum Scaling (CSS) or median-of-ratios (DESeq2). Filter low-prevalence taxa (e.g., present in <10% of samples).

- Statistical Modeling: Apply a suitable model. For read count data, a negative binomial generalized linear model (e.g.,

DESeq2,edgeR) is standard. For pre-normalized data or with covariates, use linear models (Maaslin2,limma-voom). - P-value Generation: Extract the raw p-value for the coefficient of interest (e.g., group difference) for each taxon.

- FDR Adjustment: Apply the Benjamini-Hochberg procedure to the vector of all p-values to calculate q-values.

- In R:

qvalues <- p.adjust(p_vector, method = "BH")

- In R:

- Discovery Declaration: Declare all taxa with

qvalue < 0.05as differentially abundant. - Sensitivity Analysis: Re-run analysis using

qvaluepackage and/or IHW to check robustness of the discovery list.

Protocol 2: Simulation-Based Validation of FDR Control

Objective: Empirically verify that your chosen FDR method controls the error rate at the stated level under conditions similar to your study.

- Simulate Null Data: Use a parametric bootstrap or a probabilistic model (e.g., Dirichlet-multinomial) to generate synthetic microbiome datasets with no true differential abundance between groups. Preserve the real data's library size, overdispersion, and correlation structure.

- Run DA Analysis: Apply your full DA pipeline (steps 1-5 from Protocol 1) to this null dataset. Record the number of declared "discoveries" (all are false positives by construction).

- Repeat: Repeat steps 1-2 at least 500 times.

- Calculate Empirical FDR: For each simulation run, compute the proportion of false discoveries (FP/Declared). The average of this proportion across all 500 runs is the empirical FDR.

- Assessment: If the method is working correctly, the empirical FDR should be at or below the target FDR (e.g., 0.05). An empirical FDR consistently above 0.05 indicates anti-conservative behavior (inadequate control).

Visualizations

FDR vs FWER Decision Workflow

Benjamini-Hochberg FDR Control Procedure

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in FDR-Controlled Microbiome Research |

|---|---|

| DESeq2 (R/Bioconductor) | Primary tool for modeling overdispersed count data. Generates reliable p-values for differential abundance testing between conditions. |

| qvalue package (R/Bioconductor) | Implements Storey's q-value method for FDR estimation. Provides powerful adaptive control by estimating the proportion of null hypotheses. |

| Independent Hypothesis Weighting (IHW) | A covariate-powered FDR method. Uses a continuous covariate (e.g., taxon abundance or variance) to weight hypotheses, increasing power while controlling FDR. |

| Maaslin2 (R package) | Flexible framework for applying various linear models to normalized microbiome data. Outputs p-values ready for FDR correction, especially useful for complex covariate adjustments. |

| METAL (Metagenomic Analysis Toolbox) | For meta-analysis. Correctly pools p-values across multiple studies before applying global FDR control, increasing replicable discovery power. |

| Dirichlet-Multinomial Simulator | Used in Protocol 2 for simulation studies. Generates realistic null and alternative datasets to empirically validate FDR control and assess method performance. |

| Python statsmodels (multipletests) | Provides the fdrcorrection function for applying the Benjamini-Hochberg procedure, essential for FDR control in Python-based analysis pipelines. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During differential abundance (DA) analysis of microbiome count data using a method like DESeq2, my variance estimates appear inflated, leading to no significant findings despite clear visual trends in the data. What could be the cause?

A1: This is a classic symptom of unaddressed compositionality. Microbiome sequencing data is compositional; an increase in one taxon's relative abundance forces a decrease in others. This spurious correlation distorts variance estimates.

- Diagnosis: Apply a compositional data analysis (CoDA) transform, such as a Centered Log-Ratio (CLR) transformation, to your count data (with appropriate pseudo-counts) prior to variance estimation. Compare variance stability before and after transformation.

- Protocol:

- Input: Normalized count matrix (e.g., from

phyloseqormia). - Add Pseudocount:

counts_ps = counts + 1(or usezCompositions::cmultReplfor more sophisticated handling). - CLR Transform:

clr_matrix = t(apply(counts_ps, 1, function(x) log(x) - mean(log(x)))). - Re-run your variance estimation (e.g.,

DESeq2on CLR-transformed data usinglimma-voomfor Gaussian models). - Compare the dispersion plots from the raw count model and the CLR-based model.

- Input: Normalized count matrix (e.g., from

Q2: When I use ANCOM-BC or other compositionally-aware methods, I receive warnings about "variance of the test statistic is underestimated." How should I proceed?

A2: This warning indicates that the method's internal variance correction may be insufficient for your specific data structure, often due to extreme sparsity or batch effects.

- Diagnosis: Investigate data sparsity (

mean(otu_table == 0)) and the strength of batch/confounding variables. - Protocol for Batch Correction:

- Identify technical covariates (e.g., sequencing run, extraction batch).

- Use a method like

mmvecorComBat-seq(fromsvapackage) designed for counts to model and remove batch-associated variance before DA testing. Do not use standard ComBat on CLR-transformed data without careful consideration. - Re-run your compositionally-aware DA tool (ANCOM-BC, ALDEx2, etc.) on the batch-corrected counts.

Q3: My false discovery rate (FDR) control seems to fail when comparing many microbial features across multiple groups. Some methods find hundreds of hits, while others find none. How can I validate my FDR control?

A3: Inconsistent results highlight the sensitivity of FDR control to variance misspecification in compositional data.

- Diagnosis: Perform a simulation benchmark using realistic compositional data where the ground truth is known.

- Validation Protocol:

- Simulate Data: Use the

SPsimSeqormicrobiomeDASimR package to generate synthetic microbiome counts with known differential features, incorporating realistic compositionality, sparsity, and effect sizes. - Apply Multiple DA Methods: Test standard (DESeq2, edgeR) and compositional (ANCOM-BC, LinDA, fastANCOM) pipelines.

- Calculate Empirical FDR: For each method, compute (Number of False Discoveries / Total Number of Discoveries) across multiple simulation iterations.

- Compare to Nominal FDR: A well-calibrated method's empirical FDR should be close to the nominal FDR (e.g., 5%).

- Simulate Data: Use the

Key Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| zCompositions R Package | Handles zero replacement in compositional count data via Bayesian-multiplicative or other robust methods, a critical pre-processing step before CLR. |

| ALDEx2 R Package | Uses a Dirichlet-multinomial model to generate posterior distributions of CLR-transformed abundances, providing a robust, compositionally-aware framework for variance estimation and DA testing. |

| ANCOM-BC R Package | Directly models the compositional bias in the log-linear model and provides bias-corrected estimates and variances, controlling the FDR. |

| SPsimSeq R Package | Simulates realistic, sparse, and compositional microbiome count data for benchmarking DA methods and validating FDR control. |

| QIIME 2 (q2-composition) | Provides a plugin for compositional transformations (e.g., additive log-ratio) and essential beta-diversity metrics (e.g., Aitchison distance) for variance-aware ecological analysis. |

| Songbird (via QIIme 2) | Fits a multinomial regression model to relative abundances, ranking features by their differential ranking, useful for hypothesis generation and variance exploration. |

Table 1: Empirical False Discovery Rate (FDR) at Nominal 5% Across Simulation Scenarios

| DA Method | Low Sparsity (50% Zeros) | High Sparsity (90% Zeros) | With Batch Effect |

|---|---|---|---|

| DESeq2 (raw counts) | 8.2% | 15.7% | 22.3% |

| DESeq2 (CLR + limma) | 5.1% | 6.8% | 10.5% |

| ALDEx2 (t-test) | 4.8% | 5.3% | 6.1% |

| ANCOM-BC | 5.0% | 5.5% | 7.9% |

| LinDA | 4.9% | 5.2% | 6.5% |

Data simulated using SPsimSeq with 20 cases, 20 controls, and 500 features. 10% truly differential.

Table 2: Impact of Zero Replacement Strategy on Variance Estimation

| Replacement Method | Mean Variance (CLR Transformed) | Correlation with True Log-Fold Change |

|---|---|---|

| Pseudocount (1) | 4.32 | 0.71 |

| Bayesian Multiplicative (zCompositions) | 3.89 | 0.85 |

| Geometric Bayesian (cmultRepl) | 3.91 | 0.84 |

| No Replacement (CLR on non-zero only) | 5.67 (inflated) | 0.52 |

Analysis on a defined subset of 100 low-variance taxa from a Crohn's disease dataset.

Experimental Protocols

Protocol 1: Benchmarking FDR Control with Synthetic Data

- Data Generation: Use

SPsimSeq::SPsimSeq(n_sim = 100, n_feature = 500, ...)to create 100 synthetic datasets with predefined effect sizes and sparsity patterns. - DA Analysis: Apply each candidate DA pipeline (e.g., DESeq2, ALDEx2, ANCOM-BC) to all 100 datasets.

- Performance Calculation: For each method and dataset, record the number of True Positives (TP) and False Positives (FP).

- FDR Calculation: Compute Empirical FDR as

mean(FP / (TP+FP))across simulations where discoveries were made. Compare to the nominal level (e.g., 0.05).

Protocol 2: Variance Stabilization via CLR Transformation

- Input: A

phyloseqobjectpscontaining raw ASV/OTU counts. - Normalization: Apply a scaling normalization (e.g.,

median_ratiofromDESeq2) or convert to proportions (not recommended for sparse data). - Zero Handling: Use

zCompositions::cmultRepl(otu_table(ps), method="CZM", output="p-counts"). - CLR Transformation:

clr_table <- t(apply(p_counts, 1, function(r) log(r) - mean(log(r)))). - Variance Assessment: Plot the mean-variance trend (

vsnorlimma::voomfunction) of theclr_tableversus the raw counts.

Mandatory Visualizations

Title: Impact of Compositionality on Variance and FDR

Title: Recommended DA Workflow for Valid FDR Control

Title: Structure of a Compositionally-Aware Variance Model

Technical Support Center: Troubleshooting Zero-Inflation in Microbiome Differential Abundance Analysis

FAQs & Troubleshooting Guides

Q1: My P-P plot shows severe deviation from the expected uniform distribution under the null, with an excess of small p-values and a "bump" near 1. What does this indicate?

A: This pattern is a classic signature of zero-inflation in your microbiome count data. The excess of small p-values suggests false positives due to model misspecification, where standard tests (e.g., t-test, Wilcoxon) fail to account for the excess zeros. The bump near 1 often indicates taxa with many zeros in both groups, leading to tests that lack power and generate null p-values. To address this, implement a zero-inflated or hurdle model (e.g., via MAST for log-transformed data or glmmTMB for counts) that explicitly models the zero-generating process.

Q2: When using a standard differential abundance tool like DESeq2 or edgeR, my results are dominated by low-abundance taxa. Is this biologically plausible or a technical artifact?

A: This is frequently a technical artifact of sparsity. These models, while robust for RNA-seq, can be overly sensitive to the pattern of zeros in sparse microbiome data. A taxon with a few counts in one condition and all zeros in another can produce an artificially significant p-value. Solution: Apply prevalence (e.g., retain taxa present in >10% of samples) or variance filtering prior to analysis. Consider using methods designed for compositional data like ANCOM-BC or LinDA, which incorporate handling of zeros.

Q3: How do I diagnose if zero-inflation is the core problem in my dataset?

A: Perform the following diagnostic protocol:

- Calculate Sparsity: (Number of Zero Values / Total Values) * 100%. Sparsity >70% often warrants zero-inflated methods.

- Visualize the P-Value Distribution: Generate a histogram and a uniform Q-Q plot of p-values from a preliminary test. Look for the patterns described in Q1.

- Fit a Simple Model: Fit a negative binomial model (without zero-inflation) to a few prevalent taxa. Examine the residuals for over-dispersion and zero-inflation using diagnostic plots (e.g., rootogram).

Q4: Which FDR control method (Benjamini-Hochberg, Storey's q-value) is most robust under zero-inflation?

A: Standard FDR methods assume p-values are uniformly distributed under the null. Zero-inflation violates this, leading to inflated false discoveries. Recommendation:

- Primary: Use

Adaptive Benjamini-HochbergorStorey's q-value, which estimate the proportion of true null hypotheses (π0) and are somewhat adaptive to non-uniformity. - Robust Alternative: Employ the

Boca-Leek FDR(BL), which uses a permutation-based approach to estimate the null distribution of test statistics, making it more robust to violations of assumptions, including those caused by zero-inflation.

Experimental Protocols

Protocol 1: Diagnostic Analysis for Zero-Inflation Impact

- Data Preprocessing: Filter out taxa with less than 5% prevalence across all samples. Apply a centered log-ratio (CLR) transformation after adding a pseudocount.

- Null Data Simulation: Use the

SPsimSeqR package to simulate microbiome datasets with similar sparsity and library size distribution as your real data, but with no true differential abundance. - Differential Testing: Apply your chosen standard method (e.g., simple linear regression on CLR values) to the simulated null dataset.

- P-Value Distribution Assessment: Create a histogram and a P-P plot of the resulting p-values. Compare against a uniform distribution. Significant deviation confirms the method's vulnerability to your data's sparsity structure.

- FDR Evaluation: Apply the BH procedure at a nominal 5% FDR. Calculate the empirical FDR (should be ~5%). A higher value indicates poor FDR control.

Protocol 2: Comparative Evaluation of DA Methods under Sparsity

- Benchmark Design: Simulate datasets with known true positives (20% differentially abundant taxa) using tools like

MBCOSTorSparseDOSSA2. Vary the level of zero-inflation (50%, 70%, 90%). - Method Application: Run each differential abundance method on the simulated datasets:

- Standard: DESeq2, edgeR (with robust options).

- Compositional: ANCOM-BC, LinDA.

- Zero-aware: Model-based (MAST for CPM, glmmTMB with ZINB), Aldex2 (with IQLR CLR transformation).

- Performance Metrics Calculation: For each method and condition, calculate:

- False Discovery Rate (FDR): Proportion of false discoveries among all discoveries.

- True Positive Rate (TPR)/Power: Proportion of true positives correctly identified.

- AUC-ROC: Area under the receiver operating characteristic curve.

- Result Compilation: Summarize metrics in a comparative table (see Table 1).

Data Presentation

Table 1: Comparative Performance of DA Methods Under Varying Sparsity (Simulated Data)

| Method | Type | Sparsity Level | FDR (Target 5%) | TPR (Power) | AUC-ROC |

|---|---|---|---|---|---|

| DESeq2 | Count-based | 70% | 12.4% | 0.65 | 0.82 |

| edgeR (robust) | Count-based | 70% | 10.1% | 0.62 | 0.80 |

| ANCOM-BC | Compositional | 70% | 4.8% | 0.58 | 0.85 |

| LinDA | Compositional | 70% | 5.2% | 0.60 | 0.84 |

| MAST (CLR) | Zero-aware | 70% | 5.5% | 0.55 | 0.83 |

| Aldex2 (IQLR) | Compositional | 70% | 5.0% | 0.52 | 0.81 |

| DESeq2 | Count-based | 90% | 25.7% | 0.41 | 0.62 |

| ANCOM-BC | Compositional | 90% | 6.3% | 0.35 | 0.78 |

| MAST (CLR) | Zero-aware | 90% | 5.8% | 0.32 | 0.79 |

Diagrams

Diagram 1: Zero-Inflation Impact on P-Value Distribution

Diagram 2: Decision Workflow for Sparse Microbiome DA Analysis

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Context of Sparse Microbiome DA Research |

|---|---|

| R/Bioconductor Packages | |

phyloseq, mia |

Data object containers and standard preprocessing (filtering, transformation). |

SPsimSeq, SparseDOSSA2 |

Simulating realistic, sparse microbiome datasets for benchmarking and diagnostic checks. |

ANCOM-BC, LinDA, MAST, glmmTMB, Aldex2 |

Core differential abundance testing methods robust to compositionality and/or zero-inflation. |

qvalue, swfdr |

FDR estimation and control procedures, including adaptive and robust methods (e.g., Boca-Leek). |

| Benchmarking Frameworks | |

benchdamic |

Standardized framework for benchmarking differential abundance methods for microbiome data. |

IHW (Independent Hypothesis Weighting) |

Method to increase power while controlling FDR, can be used with external covariates (e.g., taxon prevalence). |

| Essential Transformations | |

| Centered Log-Ratio (CLR) | Compositional transformation. Requires careful handling of zeros (pseudocounts or model-based imputation). |

| Diagnostic Metrics | |

| Sparsity Percentage, P-P Plots, Rootograms | Key diagnostics for assessing the severity of zero-inflation and model fit. |

Frequently Asked Questions (FAQs)

Q1: My network inference returns an extremely high density of edges, making biological interpretation impossible. What could be the cause? A1: This is a classic symptom of failing to account for compositionality and test statistic dependence. Raw relative abundance or count data are compositional; correlations computed directly from them are biased and non-independent. First, apply a proper compositionality-aware transformation (e.g., CLR with a robust pseudo-count). Second, ensure your permutation or bootstrap-based significance testing for edges explicitly breaks the dependence structure by resampling whole samples, not individual taxa.

Q2: When I run my Differential Abundance (DA) analysis and co-occurrence network inference on the same dataset, how do I know if the FDR is controlled for the combined set of discoveries? A2: You likely cannot assume FDR control. Standard DA tools (e.g., DESeq2, edgeR, ANCOM-BC) and network inference tools (e.g., SparCC, SPIEC-EASI, MENA) use different statistical models and generate non-independent test statistics. Treating results from both as one discovery set invalidates standard FDR correction. The recommended workflow is to split your analysis into two independent, pre-registered hypotheses: one for DA and one for network inference, correcting for multiple tests separately within each domain.

Q3: I used SparCC to infer correlations, but my p-values for edges appear overly optimistic (too many significant edges). How should I validate them? A3: SparCC's default p-values, based on data re-sampling, can be anti-conservative under complex nulls. You must implement a more robust permutation test. The key is to permute the sample labels of the entire microbial community matrix, not individual taxon vectors, to destroy true correlations while preserving the compositional structure and any technical dependencies. Re-run SparCC on hundreds of these permuted datasets to generate a valid null distribution for each potential edge.

Q4: What are the best practices for visualizing a network when I have both DA results (e.g., log2FC) and co-occurrence results (e.g., correlation strength)? A4: Integrate findings cautiously. A common method is to create a node-attributed network. Use the co-occurrence inference to define the existence and weight of edges (e.g., only significant SparCC correlations). Then, use DA results to color or size the nodes (e.g., node color for phylum, node size for log2 fold change from DA analysis). Crucially, do not use edge significance to re-select or filter nodes from the DA analysis, as this creates a circular dependence.

Troubleshooting Guides

Issue: Inflated False Discovery Rate in Integrated Network-DA Workflows

Symptoms: An unexpectedly high number of taxa are both differentially abundant and highly connected in the co-occurrence network, raising suspicions of statistical artifact. Diagnosis: This is likely due to the violation of the independence (or positive dependence) assumption of the Benjamini-Hochberg FDR procedure. Test statistics from DA and network centrality measures are often correlated. Solution: Apply a dependence-aware FDR correction method.

- Compute your primary statistics: p-values from DA analysis (e.g., from DESeq2) and network centrality measures (e.g., degree or betweenness) for each taxon.

- Use the Benjamini-Yekutieli procedure or employ bootstrapping to estimate the empirical null distribution of the combined test statistics.

- Adjust p-values based on this more conservative framework.

Protocol: Permutation-Based Null for Combined DA-Network Statistics

- Input: Abundance matrix

M(samples x taxa), sample metadataD(e.g., case/control). - Real Analysis: Run DA test (get p-value p_i per taxon i). Run network inference (get centrality c_i per taxon i). Combine via Stouffer's method: Z_i = (Φ^{-1}(1-p_i) + scale(c_i)) / √2.

- Permutation: For

k=1toN(e.g., 1000):- Randomly shuffle the rows of

D(case/control labels). - Re-run DA and network inference on

Mwith permutedD. - Calculate the permuted combined statistic Z_i^{(k)} for each taxon.

- Randomly shuffle the rows of

- Null Distribution: Pool all Z_i^{(k)} across all taxa and permutations to form a global null distribution.

- Adjusted P-value: For each taxon's real Z_i, calculate its percentile rank against the global null distribution. This is your empirically adjusted p-value.

Issue: Unstable Network Topology Between Technical Replicates

Symptoms: Network properties (e.g., modularity, hub identity) vary widely between rarefied versions of the same dataset or between different sequencing runs. Diagnosis: Network inference is sensitive to sparsity, compositionality, and sampling depth. Rarefaction introduces unnecessary variance. Solution:

- Avoid Rarefaction: Use models that handle uneven sampling depth intrinsically (e.g., models assuming a Negative Binomial or Dirichlet-Multinomial distribution).

- Employ Consensus Networks: Use tools like

FlashWeaveorSPIEC-EASIthat are designed for sparse, compositional data. - Stability Selection: Run inference on multiple bootstrapped subsets of your samples. Only retain edges that appear in >80-90% of bootstrap networks.

Data Presentation

Table 1: Comparison of Co-occurrence Network Methods & Their FDR Control Assumptions

| Method | Core Algorithm | Handles Compositionality? | Default Significance Test | Dependence Issue for Integrated FDR |

|---|---|---|---|---|

| SparCC | Iterative correlation based on log-ratios | Yes (CLR-based) | Data permutation (can be invalid) | High. Correlation stats depend on the same counts used in DA. |

| SPIEC-EASI | Graphical LASSO / Meinshausen-Bühlmann | Yes (CLR precondition) | Stability selection / Bootstrap | Moderate. Stability selection improves control but doesn't eliminate joint dependence with DA. |

| MENAP | Random Matrix Theory | Yes (via similarity matrix) | RMT null threshold | High. Network module structure can be confounded by differential abundance. |

| MIC | Information Theory (Maximal Info. Coeff.) | No (applied to raw or transformed) | Permutation test | Very High. Non-parametric but highly sensitive to abundance shifts. |

| CCLasso | Least Squares on Compositional Log-Likelihood | Yes (direct model) | Bootstrap confidence intervals | Moderate. Explicit model may allow for joint modeling with DA. |

Table 2: Reagent & Computational Toolkit for Robust Network+DA Research

| Item | Name/Example | Function in Workflow |

|---|---|---|

| Primary Analysis Tool | QIIME 2 (with DEICODE plugin) or R (phyloseq, SpiecEasi, microbial) |

Container for data, standard preprocessing, and access to compositional methods. |

| DA Analysis Package | DESeq2, edgeR, ANCOM-BC, LinDA |

Performs rigorous differential abundance testing, generating primary p-values and effect sizes. |

| Network Inference Package | SpiecEasi, FlashWeave, ccrepe |

Infers microbial associations (edges) from abundance data, outputting adjacency matrices and weights. |

| FDR Correction Library | stats (R), qvalue (Bioconductor), mutoss |

Implements BH, BY, and other procedures for multiple testing correction. |

| Essential Transform | Centered Log-Ratio (CLR) with pseudo-count (e.g., cmultRepl) |

Converts compositional count data to a Euclidean space suitable for correlation. |

| Visualization Suite | igraph (R), Cytoscape, Gephi |

Visualizes and calculates topological properties of inferred networks. |

| Validation Dataset | Mock community data (e.g., BEI Resources) | Provides a ground-truth dataset with known abundances/no interactions to benchmark FDR control. |

Experimental Protocols & Visualizations

Diagram 1: Problem: Violated Independence in DA-Network Workflow

Diagram 2: Solution: Split Hypothesis Testing Workflow

Diagram 3: Protocol for Permutation-Based Validation of Edge Significance

Troubleshooting Guides & FAQs

FAQ 1: Why do I get different numbers of significant features when performing differential abundance (DA) analysis on taxonomic counts versus functional pathway abundances, even when using the same FDR correction method?

- Answer: The false discovery rate (FDR) is controlled within the set of hypotheses being tested. Taxonomic (e.g., ASV, genus) and functional (e.g., MetaCyc pathway, KO gene) profiles represent distinct hypothesis spaces with different total numbers of features (m) and underlying correlation structures. A method controlling the FDR at 5% on 500 taxonomic units is correcting for a different multiple testing burden than when applied to 10,000 functional genes. The proportion of true positives and the dependency among features differ, leading to varied outcomes. The "FDR problem" isn't eliminated; its context shifts.

FAQ 2: My negative control samples show spurious differential signals in functional data but not in taxonomic data. Is my normalization method wrong?

Answer: Not necessarily. This often highlights the increased sensitivity and complexity of functional inference. Functional profiles are predicted from marker genes (e.g., via PICRUSt2, HUMAnN3) and are subject to additional layers of technical noise and genomic incompleteness. Spurious signals in controls may indicate:

- Batch effects in sequencing depth disproportionately impacting gene copy number normalization.

- Database bias where certain pathways are over-predicted in your study group.

- Tool-Specific Artifacts: Check if the same issue occurs with an alternative functional profiling pipeline.

Troubleshooting Protocol:

- Re-run the functional profiling on randomized sample groups. If significance persists, it's an artifact.

- Apply a more stringent abundance/prevalence filter (e.g., >0.01% abundance in >20% of samples) to functional features before DA.

- Validate findings on a subset of samples using shotgun metagenomic sequencing if possible.

FAQ 3: How should I choose between 16S rRNA gene-derived functional prediction and shotgun metagenomics for functional DA studies focused on FDR control?

- Answer: The choice directly impacts the hypothesis space and thus FDR control. See the table below for a comparison.

Table 1: Comparison of Functional Data Sources for DA Analysis

| Feature | 16S-Derived Prediction (e.g., PICRUSt2) | Shotgun Metagenomics |

|---|---|---|

| Primary Input | 16S rRNA gene amplicon sequences | Whole-community genomic DNA |

| Functional Unit | Predicted pathway/ enzyme abundance | Directly observed gene/pathway abundance |

| Typical # Features (m) | Lower (~100s-1000s of pathways) | Higher (~10,000s of genes/pathways) |

| Major FDR Consideration | False positives from prediction error. FDR methods control for statistical, not methodological, false discoveries. | Large m increases multiple testing burden. Requires robust normalization for gene length & copy number. |

| Best for FDR Control when: | Hypothesis generation; resource-limited projects; taxonomic unit is also of interest. | Hypothesis testing; requiring direct genetic evidence; studying non-bacterial domains. |

FAQ 4: I am using the same DA tool (e.g., DESeq2, LEfSe) on both data types. Why are the model assumptions more frequently violated for my functional data?

Answer: Functional data often has different distributional properties. Taxonomic count data is often zero-inflated. Functional profile data, especially pathway coverage, can be more continuous (non-integer) and may not fit negative binomial models well. Furthermore, functional features are inherently correlated (e.g., genes in the same pathway), violating the independence assumption of many FDR procedures more severely than taxonomic data.

Experimental Protocol for Model Validation:

- Distribution Check: For each DA model, plot the residuals versus fitted values.

- Transform Data: If using count-based models (DESeq2, edgeR) on functional data, ensure inputs are integer counts (e.g., gene counts, not coverage percentages). Consider using variance-stabilizing transformation.

- Consider Alternative Models: For continuous functional data, evaluate methods like

limma-voomor non-parametric tests (e.g.,ALDEx2) with appropriate FDR correction.

FAQ 5: Can integrating taxonomic and functional analysis help improve overall FDR control?

- Answer: Direct integration can be methodologically challenging for FDR control. However, a tiered approach can improve confidence and contextualize findings, effectively reducing the "biological false discovery" rate.

- Perform DA analysis on both units separately.

- Use statistically significant taxonomic changes to constrain the interpretation of functional results. For example, a significant pathway should ideally be encoded by taxa that also show some differential abundance or activity.

- Employ causal inference or mediation models (if sample size permits) to test hypotheses linking specific taxon changes to specific functional shifts. This moves beyond correlation.

Diagram Title: Workflow and Separate FDR Control in Taxonomic vs. Functional DA

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Robust Taxonomic & Functional DA Analysis

| Item | Function & Relevance to FDR Control |

|---|---|

| ZymoBIOMICS Microbial Community Standards | Validated mock communities of known composition. Critical for benchmarking DA pipelines, quantifying false positive rates, and comparing performance between taxonomic and functional analyses. |

| Negative Extraction Controls & PCR Blanks | Essential for identifying contaminant or spurious sequences that can inflate feature count (m) and become false discoveries, especially in low-biomass samples. |

| Benchmarking Datasets (e.g., curatedMetagenomicData) | Public resources containing paired 16S and shotgun data from the same samples. Allow for direct empirical assessment of how the unit of analysis (taxonomic vs. functional) impacts FDR in real-world scenarios. |

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Reduces PCR errors and chimeric sequences in amplicon workflows, leading to more accurate taxonomic counts and downstream functional predictions. Minimizes technical artifacts mistaken for biological signal. |

| Standardized DNA Extraction Kits (e.g., MagAttract, DNeasy PowerSoil) | Consistency in lysis efficiency across samples is paramount. Variation introduces batch effects that can confound both taxonomic and functional DA, increasing the risk of false associations. |

| Bioinformatics Pipeline Version Control (e.g., Snakemake, Nextflow) | Ensures computational reproducibility. Small changes in parameters (clustering threshold, database version) alter the feature set (m) and statistical outcomes, making FDR reports irreproducible. |

Technical Support Center: Troubleshooting False Discovery Rate Control in Microbiome Differential Abundance Analysis

Troubleshooting Guides

Issue 1: Inflated False Positives Despite FDR Correction

- Problem: After applying an FDR correction (e.g., Benjamini-Hochberg), the number of significant differentially abundant (DA) features remains implausibly high based on experimental design.

- Diagnosis: This often stems from violations of the underlying assumptions of the FDR method or the statistical test. Common causes include:

- High Sparsity and Zero-Inflation: Microbiome count data is sparse. Standard parametric tests assume a different distribution.

- Compositional Effects: Data is relative (e.g., from 16S rRNA sequencing), not absolute. A change in one taxon artificially changes the perceived proportions of others.

- Weak Effect Size with High Sample Variance: True biological signal is obscured by technical and inter-individual variation.

- Solution:

- Pre-Filtering: Remove low-abundance or low-prevalence features (e.g., present in <10% of samples) to reduce noise. Caution: Over-filtering can lose signal.

- Model-Based Methods: Shift from simple non-parametric tests (Wilcoxon) to models designed for microbiome data (e.g.,

DESeq2,edgeR,limma-voom,ANCOM-BC,MaAsLin 2). These account for dispersion and compositionality. - Stratified FDR: For multi-group or confounded designs, consider using

DESeq2'slfcsThresholdfor testing against a fold-change threshold or employingsvato account for batch effects before DA testing. - Validation: Use a hold-out subset or a different normalization/transformation method (e.g., CLR, TSS+log) to see if results are consistent.

Issue 2: Overly Conservative FDR Control (No Significant Hits)

- Problem: After correction, no features are reported as significant, despite strong prior evidence.

- Diagnosis: The statistical test may lack power due to:

- Small Sample Size (n < 5-10 per group): Microbiome data is high-variance. Small n severely limits power.

- Over-correction for Multiple Testing: When testing thousands of taxa, FDR methods become very stringent.

- Inappropriate Normalization: Normalization method may not be suited to the data's characteristics.

- Solution:

- Increase Power: If possible, increase sample size. Use power calculators (

HMP,curatedMetagenomicDatafor effect size estimates). - Two-Stage Procedures: Implement methods like

IHW(Independent Hypothesis Weighting) that use covariate information (e.g., mean abundance) to increase power while controlling FDR. - Alternative FDR Methods: Explore

q-value(stores FDR) orLocal FDRwhich can be more powerful under certain dependencies. - Relaxed Thresholds: For exploratory studies, consider presenting results at a less stringent alpha (e.g., 0.1) alongside the standard 0.05, clearly labeling them.

- Increase Power: If possible, increase sample size. Use power calculators (

Issue 3: Inconsistent Results Across Different FDR Methods or Tools

- Problem: Different software pipelines (

QIIME2,phyloseq+DESeq2,LEfSe,MaAsLin 2) yield different lists of significant DA taxa. - Diagnosis: Each tool employs different statistical tests, normalization strategies, and FDR handling.

- Solution:

- Benchmark Your Data: Run your data through 2-3 established, well-cited pipelines (e.g.,

DESeq2,ANCOM-BC,MaAsLin 2). - Identify Consensus: Use an ensemble approach. Features called significant by multiple, methodologically distinct tools are higher-confidence candidates.

- Standardize Input: Ensure the input data (filtering, rarefaction if used) is as identical as possible across pipelines for a fair comparison.

- Report Methodology Precisely: In publications, explicitly state the tool, test, normalization, and FDR correction used.

- Benchmark Your Data: Run your data through 2-3 established, well-cited pipelines (e.g.,

Frequently Asked Questions (FAQs)

Q1: Should I rarefy my samples before differential abundance testing?

A: The consensus in recent statistical literature is generally no. Rarefaction (subsampling) throws away valid data and can introduce artifacts. It is not necessary for model-based methods like DESeq2 or edgeR, which internally handle varying library sizes. Use alternative normalization like CSS (metagenomeSeq), TMM (edgeR), or RLE (DESeq2). Rarefaction may still be useful for alpha diversity estimation.

Q2: How do I choose between DESeq2, edgeR, limma-voom, ANCOM-BC, and MaAsLin 2? A: See the decision table below.

Q3: What is the difference between controlling the FDR and the FWER? Which should I use? A: FWER (Family-Wise Error Rate, e.g., Bonferroni) controls the probability of at least one false positive. It is overly conservative for high-dimensional microbiome data. FDR (False Discovery Rate, e.g., Benjamini-Hochberg) controls the expected proportion of false positives among discoveries. It is more appropriate for exploratory microbiome studies where accepting some false positives is tolerable to gain biological insights.

Q4: My data has a strong batch effect. Should I correct for it before or after FDR correction?

A: Before. Batch effects are a major confounder and can create both false positives and negatives. Correct for them during the modeling stage if possible. Tools like MaAsLin 2 and limma allow batch as a covariate. For complex designs, use sva or RUVseq to estimate surrogate variables of unwanted variation and include them in your model. Then proceed with differential testing and FDR correction.

Q5: How do I handle longitudinal or paired samples for FDR control? A: Use methods that account for within-subject correlation. Options include:

MaAsLin 2with a random effects term for subject ID.lmerorglmmTMBin a mixed-effects model framework, applying FDR correction across taxa post-testing.LinDA, a method specifically designed for linear models for DA analysis with FDR control that can handle correlated data.LOCOM, a non-parametric method for compositional data that controls FDR and allows for correlation structures.

Data Presentation: Comparison of Common DA Methods & FDR Control

Table 1: Overview of Primary Differential Abundance Methods in Recent Literature (2022-2024)

| Method/Tool | Core Statistical Approach | Handles Compositionality? | Recommended FDR Procedure | Key Strength | Common Limitation |

|---|---|---|---|---|---|

| DESeq2 (phyloseq) | Negative Binomial GLM | No (Assumes absolute counts) | Benjamini-Hochberg (default) | Powerful for large effects, robust dispersion estimation | Sensitive to compositionality; requires care with zero-inflated data |

| ANCOM-BC | Linear regression with bias correction | Yes (Central to method) | Benjamini-Hochberg (on p-values) | Strong control of false positives due to compositionality | Can be conservative; slower on very large datasets |

| MaAsLin 2 | Generalized linear or mixed models | Via CLR transform (optional) | Benjamini-Hochberg (default) | Extreme flexibility (any model form, random effects) | CLR transform requires a pseudo-count; sensitive to its choice |

| edgeR / limma-voom | Negative Binomial (edgeR) or linear (voom) | No (Assumes absolute counts) | Benjamini-Hochberg (default) | Excellent power, good for complex designs (voom) | Same compositionality issue as DESeq2 |

| LEfSe | Kruskal-Wallis + LDA | Indirectly via relative abundance | Not a standard FDR; uses factorial K-W & LDA score | Good for class comparison & biomarker discovery | No formal FDR control on LDA scores; high false positive rate |

| Aldex2 | CLR transform + Dirichlet prior | Yes (Uses CLR) | Benjamini-Hochberg (on effect size p-values) | Robust to compositionality, provides effect sizes | Computationally intensive due to Monte Carlo sampling |

Experimental Protocols

Protocol 1: Standard Differential Abundance Analysis Workflow with DESeq2/ANCOM-BC

- Data Preprocessing: From ASV/OTU table, remove taxa with total counts < 10 (or similar low threshold) across all samples.

- Tool-Specific Setup:

- For DESeq2 (via

phyloseq::phyloseq_to_deseq2): Create aDESeqDataSetobject, specifying the experimental design formula (e.g.,~ group). - For ANCOM-BC (via

ANCOMBC::ancombc2): Prepare an OTU table, sample metadata, and specify the fixed effect formula (e.g.,formula = "group").

- For DESeq2 (via

- Model Fitting & Testing:

- DESeq2: Run

DESeq(), then extract results usingresults()function. Specifyalpha=0.05for FDR threshold. - ANCOM-BC: Run

ancombc2()withprv_cut = 0.10(prevalence cutoff),lib_cut = 0(library size cutoff), andp_adj_method = "BH".

- DESeq2: Run

- FDR Application: Both tools internally apply the Benjamini-Hochberg procedure. The output

padjcolumn (DESeq2) orp_val_adjusted(ANCOM-BC) contains the FDR-adjusted p-values. - Interpretation: Identify taxa with

padj < 0.05. Examine log2 fold changes and base mean counts for biological significance.

Protocol 2: Employing IHW to Increase Power for FDR Control

- Installation: In R, install and load the

IHWpackage. - Run DESeq2 (or equivalent) without filtering on FDR: Obtain raw p-values and a informative covariate (e.g., the mean normalized count from

DESeq2::results). - Apply IHW: Use the

ihw()function, specifying the p-values and the covariate. Setalpha = 0.05. - Extract Results: The

adj_pvaluecolumn of the IHW result object contains FDR-adjusted p-values that are potentially more powerful than standard BH when the covariate is informative.

Mandatory Visualization

Diagram 1: Microbiome DA Analysis Workflow with FDR Control Points

Diagram 2: FDR Control Methods Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Robust Microbiome DA Analysis

| Item | Function in FDR Control Context | Example/Note |

|---|---|---|

| High-Quality DNA Extraction Kit | Minimizes technical variation and batch effects, a major source of false discoveries. | MoBio PowerSoil Pro Kit, ZymoBIOMICS DNA Miniprep Kit. |

| Mock Community Control | Allows assessment of technical false positive/false negative rates for DA calls. | ZymoBIOMICS Microbial Community Standard. |

| Internal Spike-In Standards | Helps correct for compositionality and varying efficiency, improving accuracy of models. | Known quantities of exogenous non-bacterial DNA (e.g., ERCC RNA Spike-In Mix adapted for metagenomics). |

| Benchmarking Dataset | Validates your pipeline's performance against known results. | curatedMetagenomicData (R package), simulated data from SPsimSeq. |

| R/Bioconductor Packages | Provide the core statistical engines and FDR procedures. | phyloseq, DESeq2, ANCOMBC, MaAsLin2, IHW, qvalue. |

| High-Performance Computing (HPC) Resources | Enables permutation-based FDR methods and large model fitting. | Cloud computing (AWS, GCP) or local cluster for ALDEx2 (Monte Carlo) and large MaAsLin 2 runs. |

| Standardized Metadata Template | Ensures correct modeling of confounding variables, critical for FDR control. | Use MIXS standards; document all relevant sample data. |

Modern FDR Methodologies for Microbiome DA: From BH to Adaptive and Conditional Approaches

FAQs & Troubleshooting Guides

Q1: In our simulation, the Benjamini-Hochberg (BH) procedure fails to control the False Discovery Rate (FDR) at the nominal level (e.g., 5%) when testing many microbial taxa. The observed FDR is consistently higher. What is the cause and how can we diagnose it? A: This is a common issue in microbiome differential abundance (DA) analysis due to violation of the BH procedure's core assumption of independence or positive dependence between p-values. Microbiome data exhibits strong inter-taxon correlation (e.g., due to co-occurrence networks or compositionality), leading to negatively correlated test statistics. To diagnose, calculate the empirical FDR from your simulation over many iterations (e.g., 1000 runs) and plot it against the nominal alpha level across different correlation strengths (see Table 1). Also, generate a visualization of the p-value dependency structure.

Experimental Protocol for Diagnosis:

- Simulate Data: Use a tool like

SPsimSeqorHMPin R to generate correlated count data for two groups (case/control). Introduce a known proportion of truly differentially abundant features (e.g., 10%). - DA Analysis: Perform statistical testing (e.g., DESeq2, edgeR, ALDEx2) to obtain p-values for all taxa.

- Apply BH: Apply the BH correction to the p-values and declare discoveries at a nominal FDR (e.g., 0.05).

- Calculate Empirical FDR: Over many simulations, compute: (Number of Falsely Rejected Hypotheses) / (Max(1, Total Number of Rejections)).

- Vary Correlation: Repeat simulation while systematically varying the underlying correlation matrix strength.

Q2: When benchmarking, what are the key performance metrics to compute besides FDR, and how should they be structured in results? A: A comprehensive benchmark assesses both error control and power. Summarize metrics in a clear table for comparison across methods or simulation scenarios.

Table 1: Key Benchmarking Metrics for FDR Procedures

| Metric | Formula / Description | Target Value |

|---|---|---|

| Empirical FDR | Mean(FDP) across simulations | ≤ Nominal α (e.g., 0.05) |

| FDR Deviation | Empirical FDR - Nominal α | Close to 0 |

| True Positive Rate (Power) | Mean(TP / Total True Positives) | Maximized |

| False Positive Rate | Mean(FP / Total True Negatives) | Controlled |

| Family-Wise Error Rate (FWER) | Proportion of sims with ≥1 false discovery | Controlled (if targeting) |

| Area Under ROC Curve | Plotting TPR vs FPR across thresholds | Maximized |

Q3: We need a clear, reproducible workflow for the core benchmarking experiment. Can you provide a step-by-step protocol? A: Follow this detailed experimental protocol for benchmarking BH.

Experimental Protocol: Core Benchmarking Workflow

- Define Simulation Parameters:

- Sample Size (n): 20 per group.

- Number of Taxa (m): 500.

- Effect Size: Log2 fold change for DA taxa (e.g., 2.0).

- Proportion of DA taxa (π0): 0.9 (10% are truly DA).

- Data Distribution: Negative Binomial (with dispersion tied to mean).

- Correlation Structure: Use an AR(1) or block correlation matrix to induce dependencies.

- Data Generation: Implement simulation in R using the

mvtnormpackage to generate latent correlated variables, which are then transformed into Negative Binomial counts viastats::rnbinom. - Differential Testing: For each simulated dataset, run a chosen DA method (e.g., DESeq2's

DESeqandresultsfunctions) to obtain raw p-values for each taxon. - Multiple Testing Correction: Apply the BH procedure using

stats::p.adjust(method="fdr"). - Performance Calculation: For iteration i, calculate: False Discoveries (FD), True Discoveries (TD), False Discovery Proportion (FDP = FD / max(1, (FD+TD))).

- Iterate: Repeat steps 2-5 for N=1000 independent simulations.

- Aggregate Metrics: Calculate mean empirical FDR (mean(FDP)), empirical Power (mean(TD) / (m * (1-π0))), etc.

Q4: How do the properties of microbiome data (compositionality, sparsity) specifically affect BH performance, and how can we visualize this relationship? A: Compositionality induces negative spurious correlations, and sparsity increases variance of test statistics. Both lead to violations of the positive dependence assumption, causing FDR inflation. The following diagram illustrates this logical relationship.

Diagram: How Data Properties Affect BH

Q5: What are essential reagent solutions or computational tools for conducting this benchmarking study? A: The following toolkit is required.

Table 2: Research Reagent & Computational Toolkit

| Item / Software | Function / Purpose | Key Notes |

|---|---|---|

| R Statistical Environment | Primary platform for simulation, analysis, and visualization. | Use v4.3.0+. Essential for reproducibility. |

| SPsimSeq / HMP R Package | Simulates correlated microbiome sequencing data with realistic properties. | Allows control of effect size, dispersion, and correlation structure. |

| DESeq2 / edgeR / ALDEx2 | Generates p-values for differential abundance from count data. | Each makes different distributional assumptions; benchmark across them. |

| mvtnorm R Package | Generates multivariate normal data to create a latent correlation structure. | Foundational for building correlated count data. |

| High-Performance Computing (HPC) Cluster | Runs thousands of simulation iterations in parallel. | Critical for obtaining stable, precise performance estimates. |

| tidyverse / data.table | Efficient data manipulation and aggregation of simulation results. | |

| ggplot2 | Creates publication-quality figures of FDR and power curves. |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between the Benjamini-Hochberg (BH) and Benjamini-Yekutieli (BY) procedures? A: The BH procedure controls the False Discovery Rate (FDR) under independence or positive dependence of the test statistics. The BY procedure provides FDR control under any dependence structure, making it more robust but also more conservative, leading to lower power (fewer discoveries). In microbiome differential abundance (DA) research, where features (e.g., OTUs, ASVs) are often highly correlated due to ecological networks, BY can be a safe default when dependence is unknown.

Q2: When should I use the Two-Stage Benjamini-Hochberg (TSBH) procedure instead of standard BH or BY? A: Use TSBH when you suspect a proportion of the null hypotheses are true. It is an adaptive method that first estimates this proportion, then uses that estimate to gain power while maintaining FDR control. It is less conservative than BY and more powerful than BH when many hypotheses are non-null, which is common in microbiome DA studies with large effect sizes.

Q3: I applied the BY procedure and found no significant hits. Is my experiment flawed? A: Not necessarily. The BY correction is extremely stringent. Before concluding, check: 1) The raw p-value distribution. If there are no low p-values, the issue is with statistical power, not correction. 2) Consider using a less conservative method if you can assume positive dependence (use BH) or employ an adaptive method like TSBH. 3) Ensure your foundational test (e.g., DESeq2, edgeR, MaAsLin2) is appropriate for your data structure.

Q4: How does dependence among microbial features impact FDR control? A: Violations of the independence assumption can lead to both inflated and deflated FDR. Positive dependence (common in co-occurring microbes) can make BH anti-conservative (FDR > target). Negative dependence can make it overly conservative. BY guarantees control but at a high cost to power. Two-stage procedures can be more sensitive but require verification of their specific dependence assumptions.

Q5: Can I combine these FDR methods with my favorite DA tool? A: Yes. These are post-hoc adjustments applied to a set of p-values generated by any tool. The standard workflow is: 1) Generate raw p-values from your DA model. 2) Apply your chosen FDR correction (BH, BY, TSBH) to the entire set of p-values. Most statistical software (R, Python) includes implementations.

Troubleshooting Guide

Issue: Inconsistent results between FDR methods.

- Symptoms: BH returns many significant taxa, BY returns none, TSBH returns an intermediate number.

- Diagnosis: This is expected due to differing stringency. The key is to justify your choice.

- Resolution: Reference your thesis context on improving FDR control. For exploratory analysis, BH may suffice. For confirmatory studies or when publishing, justify your choice: use BY for maximum robustness, or TSBH for a balance of power and control. Always report which method was used.

Issue: TSBH procedure fails or gives an error.

- Symptoms: Software throws an error related to "π₀ estimation" or "two-stage step-up."

- Diagnosis: This often occurs when the p-value distribution is unusual (e.g., all p-values are very large or very small), confusing the first-stage estimation.

- Resolution: Check the histogram of your raw p-values. If they are uniformly distributed (flat), the null proportion is high and TSBH may not add value—use BH or BY. If the error persists, consider using a different implementation or defaulting to the standard BH procedure.

Issue: FDR-adjusted q-values are exactly 1.0 for all features.

- Symptoms: Every adjusted p-value (q-value) output is 1.000.

- Diagnosis: This happens when the correction is extremely conservative (common with BY) or when all raw p-values are already very high.

- Resolution: 1) Inspect your raw p-values. If the minimum is >(α/m) where m is the number of tests, no method will find significance. 2) Switch to a less conservative method only if justifiable. 3) Re-evaluate the statistical power of your underlying DA test (consider effect size, sample size, dispersion estimation).

Data Presentation: Comparison of FDR Methods

Table 1: Key Characteristics of FDR Control Procedures

| Procedure | FDR Control Under | Conservativeness | Relative Power | Ideal Use Case in Microbiome DA |

|---|---|---|---|---|

| Benjamini-Hochberg (BH) | Independence or Positive Dependence | Moderate | High | Initial screening; when feature correlations are likely positive (e.g., co-abundant guilds). |

| Benjamini-Yekutieli (BY) | Any Dependence Structure | Very High | Low | Confirmatory analysis; when dependence is complex/unknown; for maximum reliability. |

| Two-Stage BH (TSBH) | Independence (robust to some dependence) | Low to Moderate | Very High (when π₀ < 1) | General use when an unknown proportion of features are non-null; to maximize discovery power. |

Table 2: Illustrative Results from a Simulated Microbiome DA Experiment (m=1000 tests, True Discoveries=100) Simulation parameters: Weak to moderate positive dependence between features.

| Procedure | Target FDR (α) | Significant Calls | False Discoveries | Estimated FDR | Notes |

|---|---|---|---|---|---|

| Uncorrected | 0.05 | 250 | 45 | 0.180 | Severely inflated FDR. |

| Benjamini-Hochberg | 0.05 | 88 | 4 | 0.045 | Control achieved, power moderate. |

| Benjamini-Yekutieli | 0.05 | 35 | 0 | ~0.000 | Control achieved, very low power. |

| Two-Stage BH | 0.05 | 95 | 4 | 0.042 | Control achieved, highest power. |

Experimental Protocols

Protocol 1: Implementing and Comparing FDR Methods in R Objective: To adjust p-values from a microbiome DA analysis using BH, BY, and TSBH and compare outcomes.

- Input: A vector of raw p-values (

p_raw) from a DA tool (e.g., DESeq2'sresults()function). - Adjustment:

- Output: Three vectors of adjusted p-values (q-values).

- Analysis: Count significant hits at α=0.05 for each method. Create a Venn diagram to visualize overlap.

Protocol 2: Validating FDR Control via Simulation (for Thesis) Objective: To empirically demonstrate FDR control under simulated microbial dependence structures.

- Simulate Data: Use a multivariate normal model or a Dirichlet-multinomial model (e.g.,

HMPorSPsimSeqR packages) to generate correlated count data for two groups. Induce DA for a known subset of features. - DA Analysis: Apply a standard method (e.g., DESeq2's Wald test) to obtain raw p-values for all features.

- Apply Corrections: Apply BH, BY, and TSBH to the p-value vector.

- Calculate Empirical FDR: Over N=1000 simulation runs, compute: Empirical FDR = (Average # of false discoveries) / (Average # of significant calls).

- Compare: Verify that Empirical FDR ≤ Target FDR (0.05) for each method across different correlation strengths.

Visualizations

FDR Method Selection Workflow

Two-Stage BH (TSBH) Procedure Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Adaptive FDR Analysis

| Item | Function/Benefit | Example in R/Python |

|---|---|---|

| P-Value Vector | The foundational input; raw, unadjusted p-values from a statistical test of differential abundance. | p_values <- resultsDESeq2$pvalue (R/DESeq2) |

| Basic FDR Function | Applies standard BH and BY corrections. | stats::p.adjust() (R), statsmodels.stats.multitest.multipletests() (Python) |

| Adaptive FDR Package | Implements two-stage and other adaptive procedures (TSBH, Storey's q-value). | multtest::mt.rawp2adjp() (R), ltm::tsbh() (R) |

| Simulation Framework | Validates FDR control under custom dependence structures relevant to microbiome data. | SPsimSeq package (R), custom multivariate normal scripts. |

| Visualization Library | Creates comparative plots (p-value histograms, Venn diagrams, ROC curves). | ggplot2 (R), matplotlib/seaborn (Python) |

Troubleshooting Guides & FAQs

FAQ 1: My q-value output from the qvalue R package is all 1s or NAs. What does this mean and how can I fix it?

- Answer: This typically indicates an issue with the input p-value distribution or estimation of the proportion of true null hypotheses (π₀).

- Check p-value distribution: Generate a histogram of your p-values. A healthy distribution for FDR estimation should have a large uniform component (null tests) and an enrichment near 0 (alternative tests). If all p-values are large (>0.05), there may be no signal.

- Inspect π₀ estimation: The

qvaluefunction estimates π₀. If this estimate is 1, it assumes all tests are null, leading to q-values of 1. You can adjust thelambdaparameter for smoothing or use thepi0.methodoption (e.g.,"bootstrap"). - Verify input: Ensure you are providing a numeric vector of p-values between 0 and 1, with no missing values other than NA.

FAQ 2: When using limma-voom for microbiome differential abundance (DA) analysis on my count data, the model fails to converge or gives unrealistic log-fold changes.

- Answer: This is common when dealing with sparse, zero-inflated microbiome data.

- Address zeros: Consider using a zero-inflated model (like

model.matrix(~ condition)), or incorporate an offset for zero counts. - Check normalization: Ensure proper normalization (e.g., TMM via

edgeR::calcNormFactors) is applied beforevoomtransformation. Do not use rarefaction. - Inspect design matrix: Ensure your design matrix is full rank and correctly specified. Use

limma::nonEstimableto check. - Filter low-abundance features: Apply a prevalence filter (e.g., retain features present in >10% of samples) before analysis to reduce noise.

- Address zeros: Consider using a zero-inflated model (like

FAQ 3: What is the difference between global FDR (e.g., Benjamini-Hochberg) and local FDR (e.g., from fdrtool), and when should I use each?

- Answer:

- Global FDR: Controls the expected proportion of false discoveries among all significant tests. It's a property of the entire rejected set.

- Local FDR (lfdr): Estimates the probability that a specific individual test is a null hypothesis given its statistic. It's a per-test measure.

- When to Use: Use global FDR (adjusted p-values/q-values) for declaring a list of significant findings while controlling overall error. Use lfdr to assess confidence in individual hits or for prioritization within your results. For microbiome DA, global FDR is standard for final claims, while lfdr can inform downstream validation experiments.

FAQ 4: How do I choose between limma, DESeq2, and ALDEx2 for controlling the FDR in microbiome DA analysis?

- Answer: The choice depends on your data and question. See the comparison table below.

Key Experiment: Comparative Performance of DA Methods on Sparse Microbiome Data

Experimental Protocol

- Data Simulation: Use a tool like

SPsimSeq(R package) to simulate 16S rRNA gene sequencing count data. Parameters: 100 samples (50 per group), 500 features (ASVs), 90% true null (no differential abundance), 10% true alternative (log-fold change ranging from 2 to 5). Introduce sparsity typical of microbiome data (60-80% zeros). - Method Application: Apply each DA method to the same simulated dataset:

limma-voom: Normalize counts with TMM. Applyvoomtransformation. Fit linear model with empirical Bayes moderation. Extract p-values and adjust with Benjamini-Hochberg.DESeq2: Use default Wald test. Use independent filtering. Extract adjusted p-values (FDR).ALDEx2(t-test on CLR): Usealdex.clrwith 128 Dirichlet Monte-Carlo instances. Performaldex.ttest. Extract expected Benjamini-Hochberg adjusted p-values.

- Performance Assessment: Calculate True Discovery Rate (Power), Observed FDR, and Area under the Precision-Recall curve (AUPRC) across 100 simulation replicates.

Data Presentation

Table 1: Performance Comparison of DA Methods on Simulated Sparse Data (Mean over 100 Replicates)

| Method | True Discovery Rate (Power) | Observed FDR (at nominal 5% FDR) | AUPRC | Runtime (sec) |

|---|---|---|---|---|

limma-voom |

0.72 | 0.048 | 0.81 | 45 |

DESeq2 |

0.68 | 0.042 | 0.78 | 210 |

ALDEx2 |

0.61 | 0.037 | 0.70 | 310 |

Visualization

Title: Generalized Workflow for Empirical Bayes FDR Control

Title: Local FDR (lfdr) Calculation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Empirical Bayes & FDR Analysis

| Item | Function | Example/Implementation |

|---|---|---|

limma R Package |

Fits linear models to sequence data with empirical Bayes moderation of variances, stabilizing estimates for small sample sizes. | voom() transformation, eBayes(), topTable() |

qvalue R Package |

Estimates q-values and the proportion of true null hypotheses (π₀) from a list of p-values, providing robust global FDR control. | qvalue(p = p.values) |

fdrtool R Package |

Estimates both local FDR and tail-area based FDR from diverse test statistics (p-values, z-scores, t-scores). | fdrtool(x, statistic="pvalue") |

DESeq2 R Package |