Beyond the Zeros: Advanced Filtering Strategies for Zero-Inflated Microbiome (OTU) Data Analysis


Abstract

This article provides a comprehensive guide for researchers and bioinformaticians tackling the pervasive challenge of zero-inflation in Operational Taxonomic Unit (OTU) tables. We explore the biological and technical origins of excessive zeros, systematically evaluate contemporary filtering methodologies, and present best-practice workflows for their application. The content covers foundational concepts, step-by-step implementation, common pitfalls, and comparative validation of popular approaches like prevalence-based, abundance-based, and statistical model-driven filtering. By synthesizing current research, this guide aims to empower robust microbiome data preprocessing, leading to more reliable downstream statistical inference and biological insights in drug discovery and clinical research.

Understanding Zero-Inflation: Why Your Microbiome Data is Full of Zeros

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Why do I see so many zeros in my OTU/ASV table, and is this a problem? A: A high proportion of zeros is typical of microbiome count data, and it becomes a problem (zero-inflation) when there are more zeros than standard count models expect. The zeros arise from a combination of biological and technical sources. Biologically, many microbial taxa are genuinely absent from most samples due to niche specificity. Technically, zeros can result from sampling depth limitations, PCR dropout, or sequencing errors. This extreme sparsity violates the assumptions of many common statistical models, leading to biased inferences if not properly addressed.

Q2: My differential abundance test (e.g., DESeq2, edgeR) is failing or producing unrealistic results. Could zero-inflation be the cause? A: Absolutely. Standard negative binomial models used by these tools assume a certain distribution of zeros. Zero-inflated data contains an excess of zeros, which can cause model over-dispersion, false-positive discoveries, or convergence failures. You may need to employ tools specifically designed for or robust to zero-inflation.

Q3: What are the first diagnostic steps to confirm zero-inflation in my dataset? A: Perform the following quantitative diagnostics:

Table 1: Diagnostic Metrics for Zero-Inflation

| Metric | Formula/Description | Threshold for Concern | Interpretation |
|---|---|---|---|
| Prevalence | (Number of samples where taxon is present / Total samples) * 100 | < 10-20% for many taxa | Indicates many low-prevalence, "rare" taxa. |
| Sample Sparsity | (Number of taxa with a zero count in the sample / Total taxa) * 100 | > 70-80% | The majority of entries in a given sample are zeros. |
| Table Sparsity | (Total zeros in OTU table / Total entries) * 100 | > 50-70% | The overall matrix is highly sparse. |
| Zero-Inflation Index | Variance-to-Mean Ratio (VMR) for each taxon. VMR >> 1 indicates overdispersion, which excess zeros often cause. | VMR > 5-10 | Count variance is excessively high relative to the mean, often due to excess zeros. |
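The metrics in Table 1 can be computed directly from a count matrix. The following is a minimal sketch, assuming a pandas DataFrame with samples as rows and taxa as columns; the toy table and all variable names are hypothetical and serve only to illustrate the formulas.

```python
import pandas as pd

# Hypothetical toy count table: rows are samples, columns are taxa.
counts = pd.DataFrame(
    {
        "otu_a": [120, 98, 143, 110, 87, 131],
        "otu_b": [0, 0, 15, 0, 0, 22],
        "otu_c": [0, 0, 0, 0, 3, 0],
        "otu_d": [5, 0, 0, 9, 0, 0],
    },
    index=["s1", "s2", "s3", "s4", "s5", "s6"],
)

present = counts > 0

# Prevalence: percentage of samples in which each taxon is detected.
prevalence_pct = present.mean(axis=0) * 100

# Sample sparsity: percentage of taxa with a zero count in each sample.
sample_sparsity_pct = (~present).mean(axis=1) * 100

# Table sparsity: percentage of all matrix entries that are zero.
table_sparsity_pct = (~present).to_numpy().mean() * 100

# Variance-to-mean ratio per taxon (sample variance, ddof=1).
vmr = counts.var(axis=0, ddof=1) / counts.mean(axis=0)

summary = pd.DataFrame({"prevalence_pct": prevalence_pct, "vmr": vmr})
print(summary.round(2))
print(f"table sparsity: {table_sparsity_pct:.1f}%")
```

On real data, the per-taxon columns are best inspected as distributions (histograms) rather than as single numbers.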

Q4: Are all zeros the same? How should I think about them? A: No. This is critical for filtering. Zeros are typically categorized as:

- True Absence (Biological Zero): The microorganism is genuinely not present in the sample's environment.

- False Absence (Technical Zero): The microorganism is present but undetected due to insufficient sequencing depth, PCR bias, or methodological limits.

A core goal in filtering zero-inflated OTU tables is to remove technical zeros and uninformative taxa while retaining biological signal.

Experimental Protocol: Diagnosing Zero-Inflation

Objective: To quantify the degree of zero-inflation in a 16S rRNA gene amplicon dataset prior to selecting an appropriate filtering or analysis strategy.

Materials: An OTU or ASV table (count matrix), sample metadata, and access to R/Python.

Procedure:

- Data Import: Load your count matrix into your analytical environment (e.g., a phyloseq object in R or a pandas DataFrame in Python).

- Calculate Prevalence: For each taxon, compute the percentage of samples where its count is > 0.

- Calculate Sparsity:

- Per Sample: For each sample, compute (Number of zero-value taxa / Total number of taxa).

- Overall: Compute (Total zeros in matrix / (Number of taxa * Number of samples)).

- Visualize Distributions: Create a histogram of taxon prevalence. A strong right skew (most taxa piled up at very low prevalence, with a long tail toward high prevalence) indicates a sparse, potentially zero-inflated table.

- Model Comparison (Advanced): Fit a standard count distribution (e.g., Poisson, Negative Binomial) and a zero-inflated version of the same model to a subset of taxa. Use likelihood ratio tests or AIC scores to see if the zero-inflated model provides a significantly better fit.
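The model-comparison step can be sketched for a single taxon as follows. For brevity this example compares a Poisson model with a zero-inflated Poisson (ZIP) fitted by a simple EM loop, rather than the negative binomial variants; the function names and the toy counts are hypothetical, and a production analysis would normally rely on an established statistics package.

```python
import math
import numpy as np

def poisson_loglik(y, lam):
    """Log-likelihood of counts y under Poisson(lam)."""
    return sum(k * math.log(lam) - lam - math.lgamma(k + 1) for k in y)

def fit_zip(y, n_iter=200):
    """Fit a zero-inflated Poisson by EM; returns (pi, lam, loglik)."""
    y = np.asarray(y, dtype=float)
    is_zero = y == 0
    pi, lam = 0.5 * is_zero.mean(), max(y.mean(), 1e-8)  # crude starting values
    for _ in range(n_iter):
        # E-step: probability that each observed zero is a structural zero.
        z = np.where(is_zero, pi / (pi + (1 - pi) * math.exp(-lam)), 0.0)
        # M-step: update the mixing weight and the Poisson mean.
        pi = z.mean()
        lam = y.sum() / (1 - z).sum()
    p_zero = pi + (1 - pi) * math.exp(-lam)
    ll = is_zero.sum() * math.log(p_zero)
    ll += sum(
        math.log(1 - pi) + k * math.log(lam) - lam - math.lgamma(k + 1)
        for k in y[~is_zero]
    )
    return pi, lam, ll

# Hypothetical counts for one taxon across 20 samples (14 zeros).
counts = [0] * 14 + [3, 5, 4, 6, 2, 7]

lam_pois = float(np.mean(counts))
aic_pois = 2 * 1 - 2 * poisson_loglik(counts, lam_pois)  # one parameter

pi_hat, lam_hat, ll_zip = fit_zip(counts)
aic_zip = 2 * 2 - 2 * ll_zip  # two parameters

print(f"AIC Poisson: {aic_pois:.1f}  AIC ZIP: {aic_zip:.1f}")
```

A clearly lower AIC for the zero-inflated fit suggests that the taxon carries more zeros than the plain count model can explain.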

Visualizing the Problem

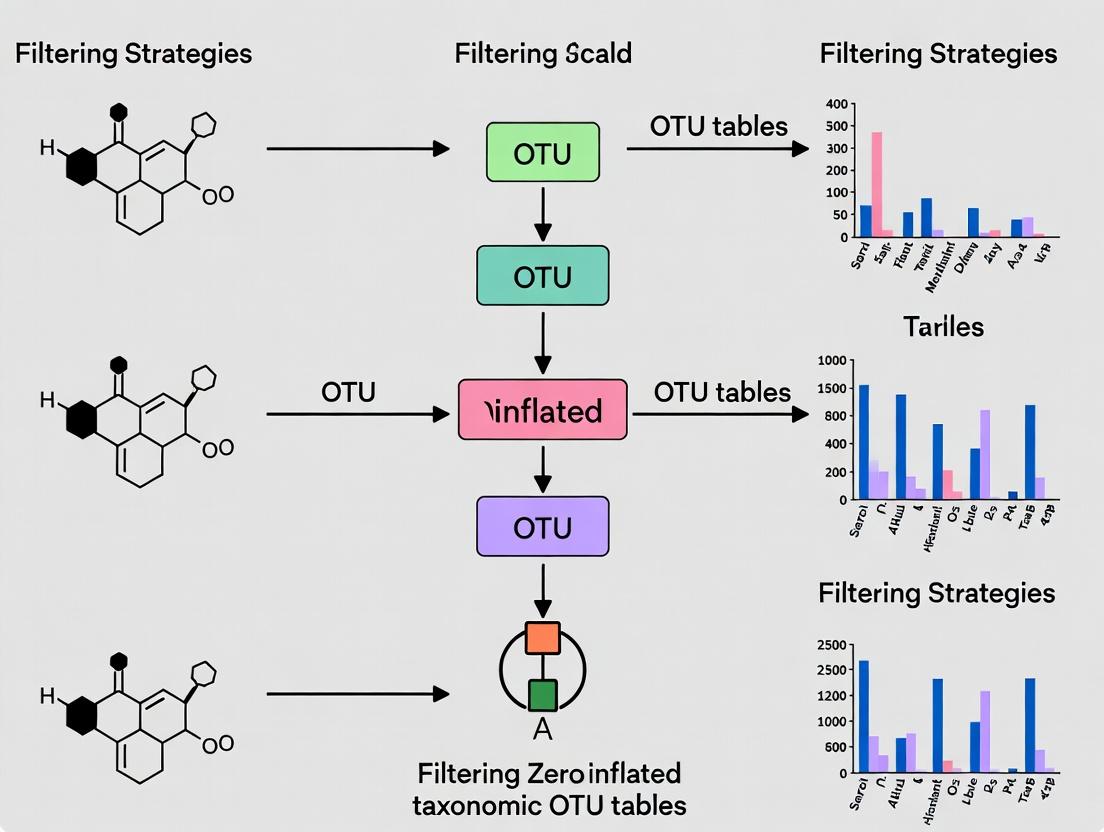

Diagram: Conceptual Sources and Impact of Zeros in OTU Data

Diagram: Decision Workflow for Handling Zero-Inflated Data

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Tools for Zero-Inflated OTU Table Analysis

| Item | Function/Description | Example Tools/Reagents |
|---|---|---|
| High-Fidelity Polymerase | Reduces PCR errors and bias, a source of technical zeros. | Q5 High-Fidelity, Phusion. |
| Validated Positive Controls | Spike-in controls (e.g., ZymoBIOMICS) monitor technical performance and dropout. | ZymoBIOMICS Microbial Community Standard. |
| Sequence Depth Calibration | Determines sufficient sequencing depth to minimize false absences. | Library quantification kits, saturation curves. |
| Zero-Aware Analysis Packages | Statistical software designed for zero-inflated count data. | R: phyloseq, microbiome, ZIBSeq, MAST. Python: statsmodels, scikit-bio. |
| Compositional Data Analysis (CoDA) Tools | Addresses sparsity by analyzing relative abundance profiles. | R: ALDEx2, ANCOMBC, propr. |
| Data Filtering Pipelines | Systematic frameworks for applying prevalence/abundance filters. | R: microbiome::core() function, decontam package. |

Technical Support Center: Troubleshooting Zero-Inflation in Amplicon Sequencing

Troubleshooting Guides

Guide 1: Diagnosing the Source of Zeros in Your OTU Table

Symptoms: Your OTU table contains an excessive number of zeros (>50-70% of entries). Downstream diversity analyses (e.g., alpha/beta diversity) are unstable or yield nonsensical results.

Diagnostic Steps:

- Check Negative Controls: Examine your sequencing run's negative extraction and PCR controls. If these controls contain a high number of reads or OTUs present in your samples, technical contamination is likely.

- Analyze Low-Biomass Samples: Compare zero counts between high-biomass and low-biomass samples. A pattern where zeros increase as sequencing depth per sample decreases indicates a technical zero due to undersampling.

- Review Library Sizes: Calculate the total read count (library size) per sample. A large variance (e.g., some samples with <1,000 reads, others with >50,000) suggests uneven sequencing depth is causing technical zeros.

- PCR Replicates: If you have technical PCR replicates, check for inconsistencies. An OTU present in one replicate but absent in another for the same sample is likely a technical zero (dropout).
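The library-size check above can be quantified with a rank correlation between sequencing depth and the fraction of zeros per sample. This is a minimal sketch with a hypothetical toy table (samples as rows); Spearman's rho is computed as the Pearson correlation of ranks so that only pandas is required.

```python
import pandas as pd

# Hypothetical table in which shallower samples contain more zeros.
counts = pd.DataFrame(
    [
        [500, 300, 150, 40, 10],
        [260, 140, 70, 30, 0],
        [110, 60, 30, 0, 0],
        [35, 15, 0, 0, 0],
        [10, 0, 0, 0, 0],
    ],
    index=["s1", "s2", "s3", "s4", "s5"],
    columns=["otu_1", "otu_2", "otu_3", "otu_4", "otu_5"],
)

library_size = counts.sum(axis=1)           # total reads per sample
zero_fraction = (counts == 0).mean(axis=1)  # share of taxa with zero count

# Spearman correlation = Pearson correlation of the ranks.
rho = library_size.rank().corr(zero_fraction.rank())
print(f"Spearman rho (depth vs. zero fraction): {rho:.2f}")
```

A strongly negative rho is consistent with zeros driven by undersampling, although it does not by itself rule out biological differences between samples.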

Resolution Path:

- If contamination is confirmed, filter out OTUs present in negative controls and re-process samples with clean reagents.

- If undersampling/library size variance is the issue, apply a prevalence filter (e.g., retain OTUs present in >10% of samples) or a variance-stabilizing transformation before analysis. Consider rarefaction for even sampling depth.

Guide 2: Choosing a Filtering Strategy for Your Research Thesis

Decision Framework:

- Objective: Conservative list of core taxa.

  - Action: Apply a double-filter: 1) Prevalence filter (e.g., OTU in >20% of samples). 2) Relative abundance filter (e.g., OTU >0.1% in at least one sample).

  - Rationale: Removes rare taxa likely arising from technical noise, focusing on consistently detected taxa whose remaining zeros are more likely to be biological.

- Objective: Retain maximal diversity for discovery.

  - Action: Apply a low-prevalence filter (e.g., OTU in >5% of samples) and use a zero-inflated model (e.g., ZINB, hurdle models) in downstream statistical testing.

  - Rationale: Keeps more taxa while statistically accounting for excess zeros.

- Objective: Compare groups with known low biomass.

  - Action: Use a background noise filter (e.g., remove OTUs with mean abundance in samples < 3x mean abundance in negative controls). Always include and sequence negative controls.

  - Rationale: Directly subtracts technical noise induced by reagents and lab environment.
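The double-filter for a conservative core list can be sketched as below. The function name, default thresholds, and toy table are illustrative assumptions; the thresholds mirror the example values in the framework and should be tuned per dataset.

```python
import pandas as pd

def double_filter(counts, min_prevalence=0.20, min_rel_abundance=0.001):
    """Keep taxa detected in more than `min_prevalence` of samples AND
    exceeding `min_rel_abundance` in at least one sample.
    counts: samples x taxa table of raw counts."""
    rel = counts.div(counts.sum(axis=1), axis=0)        # per-sample proportions
    prevalent = (counts > 0).mean(axis=0) > min_prevalence
    abundant = (rel > min_rel_abundance).any(axis=0)
    return counts.loc[:, prevalent & abundant]

# Hypothetical toy table with five samples.
counts = pd.DataFrame(
    {
        "otu_core": [5000, 5000, 5000, 5000, 5000],
        "otu_mid": [60, 0, 45, 0, 0],     # 40% prevalence, ~1% abundance
        "otu_rare": [0, 0, 0, 200, 0],    # abundant once, but only 20% prevalence
        "otu_trace": [1, 1, 0, 0, 1],     # prevalent, but never above 0.1%
    },
    index=["s1", "s2", "s3", "s4", "s5"],
)

filtered = double_filter(counts)
print(list(filtered.columns))
```

Requiring both conditions is what makes this variant conservative: a taxon that is frequent but always at trace level, or abundant in a single sample only, is dropped.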

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a biological zero and a technical zero in my 16S rRNA data? A: A biological zero represents the true absence of a microbial taxon from a given sample due to ecological or host factors. A technical zero is a false absence caused by limitations in the methodology, such as insufficient sequencing depth, PCR dropout, contamination outcompeting true signal, or sample mis-handling. Distinguishing them is critical for accurate biological interpretation.

Q2: How can I experimentally design my study to minimize technical zeros? A: Implement these protocols:

- Increase Technical Replicates: Perform at least triplicate PCRs per sample and pool products before sequencing to mitigate PCR stochasticity.

- Standardize Biomass: Use a consistent starting amount of DNA (e.g., 20 ng) across samples where possible.

- Sequence Deeply: Aim for a sequencing depth beyond the point where rarefaction curves plateau for your highest-diversity samples.

- Include Rigorous Controls: Process multiple negative controls (extraction blank, PCR water blank) alongside every batch of samples.

Q3: What are the most common bioinformatic filtering methods to handle zeros, and when should I use each? A: See the table below for a quantitative summary.

Q4: My statistical model for differential abundance keeps failing due to zero inflation. What should I do?

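A: Move beyond standard tests (e.g., t-tests, or DESeq2 with its RNA-seq defaults) and use models explicitly designed for count data with excess zeros, such as a zero-inflated negative binomial (ZINB) model or a hurdle model. Both have two parts. In a ZINB model, a zero-inflation component models the probability of an excess zero (structural absence or technical dropout), while the negative binomial count component models abundance and can itself produce sampling zeros. In a hurdle model, one component models presence versus absence and a zero-truncated count component models abundance where the taxon is present. The glmmTMB and pscl packages in R are commonly used.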

Data Presentation: Filtering Method Comparison

Table 1: Quantitative Comparison of Common Zero-Filtering Strategies

| Filtering Strategy | Typical Threshold | Primary Target | Data Removed | Risk | Best For |
|---|---|---|---|---|---|
| Prevalence Filter | Retain OTUs in >5-25% of samples | Rare, sporadically detected taxa | Low-prevalence OTUs | Removing true rare biosphere taxa | General community analysis; reducing noise |
| Abundance Filter | Mean/Total rel. abundance >0.01-0.1% | Very low-abundance taxa | Low-abundance OTUs | Removing true low-abundance but stable taxa | Finding core, abundant taxa; reducing PCR noise |
| Total Count Filter | Total reads >0.1% of all reads | Sequencing artifacts/contaminants | OTUs with minuscule global presence | Very low | Preliminary clean-up |
| Control-based Filter | Sample abundance >3x control abundance | Lab/kit contamination | Contaminant OTUs | Requires well-characterized controls | Low-biomass studies (e.g., tissue, air) |

Experimental Protocols

Protocol 1: Implementing a Control-Based Background Subtraction Filter

Objective: To identify and remove OTUs likely originating from laboratory reagents or cross-contamination.

Materials: See "The Scientist's Toolkit" below.

Method:

- For each OTU i, calculate its mean relative abundance in all negative control samples (Mean_Control_i).

- For each OTU i in each biological sample s, note its relative abundance (Abundance_si).

- Apply the filtering rule: If Abundance_si ≤ K * Mean_Control_i, set the count for OTU i in sample s to zero. A common, conservative factor K is 3.

- Remove any OTU that is now present only in negative controls from the entire OTU table.

- Proceed with downstream analysis on the filtered table.
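The method above can be sketched in pandas as follows. This is an illustration under stated assumptions: the function name, sample labels, and toy counts are hypothetical, and every sample (including each control) is assumed to have a non-zero read total so that relative abundances are defined.

```python
import pandas as pd

def control_filter(counts, control_ids, k=3.0):
    """Apply the rule Abundance_si <= K * Mean_Control_i -> 0.
    counts: samples x taxa raw counts; control_ids: labels of negative controls."""
    rel = counts.div(counts.sum(axis=1), axis=0)       # relative abundances
    mean_control = rel.loc[control_ids].mean(axis=0)   # Mean_Control_i
    samples = counts.drop(index=control_ids)
    rel_samples = rel.drop(index=control_ids)          # Abundance_si
    # Entries at or below K times the control mean are treated as background.
    at_noise = rel_samples.le(k * mean_control, axis=1)
    filtered = samples.mask(at_noise, 0)
    # Drop taxa that no longer occur in any biological sample.
    return filtered.loc[:, filtered.sum(axis=0) > 0]

# Hypothetical toy data: three biological samples and two negative controls.
counts = pd.DataFrame(
    {
        "otu_real": [900, 800, 960, 0, 0],
        "otu_contam": [5, 0, 10, 98, 49],
        "otu_mixed": [95, 200, 30, 2, 1],
    },
    index=["bio1", "bio2", "bio3", "neg1", "neg2"],
)

filtered = control_filter(counts, control_ids=["neg1", "neg2"], k=3.0)
print(filtered)
```

Note that the rule acts per sample, so a taxon such as otu_mixed can be zeroed in one sample and retained in others.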

Protocol 2: Validation via Serial Dilution and Spike-In

Objective: Empirically determine the limit of detection and quantify PCR dropout rates.

Method:

- Prepare Dilution Series: Take a high-biomass community DNA standard (e.g., ZymoBIOMICS D6300). Perform a 10-fold serial dilution (e.g., from 10 ng/µL to 0.001 ng/µL).

- Add Spike-In: To each dilution, add a known, constant amount of an exogenous synthetic DNA control (e.g., the synthetic DNA spike-in listed in the Toolkit).

- Amplify & Sequence: Process all dilution points and a negative control through the identical 16S rRNA gene amplification and sequencing pipeline.

- Analyze:

  - Plot the number of detected OTUs vs. input DNA concentration to identify the "drop-off" point where technical zeros increase sharply.

  - Calculate the coefficient of variation (CV) of the spike-in read count at each dilution point. A high CV at low input concentrations (the most dilute points) indicates high technical noise.

  - Use these data to set the minimum biomass threshold for your specific protocol.
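The analysis step can be sketched with NumPy. This example assumes replicate libraries at each dilution point (the protocol does not state how many replicates are run, so this is an assumption), and all numbers are made-up placeholders for illustration.

```python
import numpy as np

# Hypothetical dilution series (ng/uL) for an 8-member mock community.
input_conc = np.array([10.0, 1.0, 0.1, 0.01, 0.001])
expected_taxa = 8
detected_taxa = np.array([8, 8, 8, 6, 3])  # mock taxa recovered per dilution

# Hypothetical spike-in read counts: one row per dilution, three replicates.
spike_reads = np.array(
    [
        [1000, 1020, 980],
        [950, 1050, 1000],
        [900, 1100, 1000],
        [600, 1400, 1000],
        [100, 1900, 1000],
    ],
    dtype=float,
)

# Coefficient of variation per dilution point (sample SD / mean).
cv = spike_reads.std(axis=1, ddof=1) / spike_reads.mean(axis=1)

# Lowest input concentration at which every expected taxon is still detected.
full_recovery = input_conc[detected_taxa == expected_taxa]
min_reliable_input = full_recovery.min()

for conc, n_det, c in zip(input_conc, detected_taxa, cv):
    print(f"{conc:>7} ng/uL  detected={n_det}  spike-in CV={c:.2f}")
print(f"lowest input with full recovery: {min_reliable_input} ng/uL")
```

Samples whose input falls below the lowest full-recovery concentration are the ones where zeros are most likely to be technical.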

Diagrams

Title: Decision Workflow for Zero Classification & Filtering

Title: Sources of Zeros in Amplicon Sequencing

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Addressing Zeros |
|---|---|
| Mock Microbial Community Standards (e.g., ZymoBIOMICS D6300) | Provides a known, quantitative mixture of microbial genomes. Used to benchmark pipeline accuracy, detect biases, and estimate false negative (technical zero) rates. |
| UltraPure PCR-Grade Water or TE Buffer | Used for negative controls to identify reagent-borne contamination leading to false positive reads and thus false zeros in true samples. |
| Synthetic DNA Spike-Ins (e.g., External RNA Controls Consortium - ERCC for RNA, custom oligos for 16S) | Non-biological DNA sequences spiked into samples before amplification. They control for technical variation in library prep and sequencing; inconsistent recovery indicates technical zeros. |
| Inhibition-Removal Kits (e.g., OneStep PCR Inhibitor Removal Kit) | Removes humic acids, polyphenols, etc., from samples to prevent PCR inhibition, a major cause of technical zeros and uneven sequencing depth. |
| High-Fidelity, Low-Bias DNA Polymerase (e.g., Q5 Hot Start, Platinum SuperFi II) | Reduces PCR amplification bias and errors, ensuring more equitable representation of community members and minimizing stochastic dropout. |
| Duplex-Specific Nuclease (DSN) | Can be used to normalize libraries by removing abundant dsDNA (e.g., host rRNA), thereby increasing sequencing depth on microbial targets and reducing undersampling zeros. |

Technical Support Center: Troubleshooting Zero-Inflated Microbiome Data Analysis

Welcome, Researcher. This support center is designed to assist you in diagnosing and resolving common issues encountered when analyzing zero-inflated OTU (Operational Taxonomic Unit) tables within the broader thesis context of Filtering strategies for zero-inflated OTU tables. The following FAQs and guides address specific analytical pitfalls.

Frequently Asked Questions (FAQs)

Q1: After rarefaction, my alpha diversity metrics (Shannon, Chao1) show extreme variance between sample groups. Are these results reliable? A: Treat them with caution. Rarefaction equalizes library sizes but does not address the underlying excess zeros. Richness-based metrics such as Chao1 depend heavily on rare taxa (singletons and doubletons), so in sparse tables they swing widely with which rare taxa happen to be detected. Because zeros caused by undersampling (sampling zeros) cannot be distinguished from true absences (structural zeros), part of this variance is technical, and it can produce false positives in downstream group comparisons.

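Q2: My PERMANOVA (Adonis) test on Bray-Curtis distances is statistically significant (p < 0.01), but the PCoA visualization shows no clear clustering. What's wrong? A: Zero-inflated data can distort distance-based analyses, but shared zeros are not the direct cause here, because Bray-Curtis ignores joint absences. Two explanations are more likely. First, sparsity makes most sample pairs almost maximally dissimilar, so the first two PCoA axes capture little of the total variance and can hide a real but small group effect; check the PERMANOVA R², not just the p-value. Second, PERMANOVA is sensitive to differences in within-group dispersion, so a significant result may reflect unequal spread rather than a shift in composition; test this with a dispersion test (e.g., betadisper in the vegan package). It also helps to repeat the analysis with a presence/absence metric such as Jaccard and on a prevalence-filtered table to see whether the signal is stable.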

Q3: I applied a conventional negative binomial GLM for differential abundance, but the model fails to converge or yields unrealistic effect sizes. How do I proceed? A: Standard count models assume zeros arise from a single process (sampling variation around a low mean). Zero-inflated data are better described by two processes: one that produces structural zeros (true absence, or complete technical dropout) and a count process that generates abundances and occasional sampling zeros. Switch to a two-part model designed for this, such as a Zero-Inflated Negative Binomial (ZINB) or Hurdle model.

Q4: What is the fundamental difference between "Filtering" and "Modeling" strategies for handling zero-inflation? A: Filtering is a pre-processing step that removes low-prevalence OTUs (e.g., those present in less than 10% of samples) before analysis, operating under the assumption these are noise. Modeling is an analytical step that uses specialized statistical distributions (ZINB, Hurdle) to account for the excess zeros during the test, allowing you to differentiate between structural and sampling zeros.

Troubleshooting Guides & Experimental Protocols

Issue: Inflated False Discovery Rate in Differential Abundance Analysis. Diagnosis: Standard statistical tests (e.g., DESeq2, edgeR without adjustment) misinterpret excess zeros as evidence of no expression/abundance, leading to overstated significance for low-count features.

Protocol 1: Implementing a Zero-Inflated Negative Binomial (ZINB) Model with glmmTMB

- Data Preparation: Start with your raw OTU count table. Do not rarefy. Perform a conservative prevalence filter (e.g., retain OTUs with counts in >5% of samples) to remove ultra-rare noise.

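- Model Specification: For a single OTU and a two-group comparison, a typical specification models the OTU count as a function of group with a negative binomial family (family = nbinom2), an intercept-only zero-inflation formula (ziformula = ~1), and log library size as an offset. Store the fit as zinb_model.

- Statistical Inference: Use the summary(zinb_model) function to obtain p-values for the conditional (count) model and the zero-inflation model. Perform likelihood ratio tests against a null model for hypothesis testing.

- Validation: Check model diagnostics using the DHARMa package (simulate residuals) to assess overdispersion and zero-inflation fit.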

Protocol 2: Comparative Evaluation of Filtering Thresholds

Objective: To empirically determine the impact of prevalence filtering on downstream beta diversity metrics.

- Define Filtering Tiers: Create four filtered datasets:

- Unfiltered: Raw table.

- Tier 1: OTUs present in ≥ 1% of samples.

- Tier 2: OTUs present in ≥ 5% of samples.

- Tier 3: OTUs present in ≥ 10% of samples.

- Calculate Distance Matrices: For each tier, compute both Bray-Curtis and Jaccard distance matrices.

- Analyze & Compare: Run PERMANOVA on each matrix. Record the R² (effect size) and p-value.

- Interpretation: Observe how the perceived strength (R²) and significance of group separation change with filtering intensity. A stable signal across tiers is more robust.
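The tiered comparison can be sketched with NumPy alone. The code below is an illustration on simulated data, not a replacement for an established PERMANOVA implementation: it computes only the effect size R² = 1 - SS_within / SS_total and omits the permutation p-value. All function names and the simulated table are hypothetical.

```python
import numpy as np

def bray_curtis(x):
    """Pairwise Bray-Curtis dissimilarity for a samples x taxa count array."""
    x = np.asarray(x, dtype=float)
    num = np.abs(x[:, None, :] - x[None, :, :]).sum(axis=2)
    den = (x[:, None, :] + x[None, :, :]).sum(axis=2)
    return np.divide(num, den, out=np.zeros_like(num), where=den > 0)

def jaccard(x):
    """Pairwise Jaccard distance on presence/absence."""
    p = np.asarray(x) > 0
    shared = (p[:, None, :] & p[None, :, :]).sum(axis=2).astype(float)
    union = (p[:, None, :] | p[None, :, :]).sum(axis=2).astype(float)
    similarity = np.divide(shared, union, out=np.ones_like(shared), where=union > 0)
    return 1.0 - similarity

def permanova_r2(d, groups):
    """PERMANOVA effect size from a distance matrix (no permutation test)."""
    groups = np.asarray(groups)
    n = len(groups)
    ss_total = (d[np.triu_indices(n, k=1)] ** 2).sum() / n
    ss_within = 0.0
    for g in np.unique(groups):
        idx = np.where(groups == g)[0]
        sub = d[np.ix_(idx, idx)]
        ss_within += (sub[np.triu_indices(len(idx), k=1)] ** 2).sum() / len(idx)
    return 1.0 - ss_within / ss_total

def prevalence_filter(x, min_prev):
    """Keep taxa detected in at least `min_prev` (fraction) of samples."""
    x = np.asarray(x)
    return x[:, (x > 0).mean(axis=0) >= min_prev]

# Simulated data: 5 core taxa that differ between groups, 200 sparse rare taxa.
rng = np.random.default_rng(0)
groups = np.repeat(["a", "b"], 10)
core_means = np.where(groups[:, None] == "a", 50.0, 80.0) * np.ones((20, 5))
table = np.hstack([rng.poisson(core_means), rng.poisson(0.05, size=(20, 200))])

results = {}
for label, min_prev in [("unfiltered", 0.0), ("tier1", 0.01), ("tier2", 0.05), ("tier3", 0.10)]:
    kept = prevalence_filter(table, min_prev)
    results[label] = {
        "n_taxa": kept.shape[1],
        "r2_bray": permanova_r2(bray_curtis(kept), groups),
        "r2_jaccard": permanova_r2(jaccard(kept), groups),
    }
    print(label, results[label])
```

With only 20 samples, the 1% and 5% tiers both reduce to "present in at least one sample", which is a reminder that percentage thresholds should be translated into sample counts before they are compared.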

Quantitative Data Summary: Impact of Filtering on Data Structure

| Filtering Tier (Prevalence) | % OTUs Retained | Total Zeros in Matrix (%) | Mean Shannon Index (SD) | PERMANOVA R² (Bray-Curtis) |
|---|---|---|---|---|
| Unfiltered | 100% | 85.2% | 2.1 (0.8) | 0.15 (p=0.001) |
| ≥ 1% of samples | 45% | 70.5% | 2.8 (0.5) | 0.18 (p=0.001) |
| ≥ 5% of samples | 22% | 65.1% | 3.1 (0.4) | 0.19 (p=0.001) |
| ≥ 10% of samples | 12% | 58.7% | 3.2 (0.3) | 0.20 (p=0.002) |

Table: Example results showing how increasing prevalence filtering reduces zero-inflation and stabilizes diversity metrics. SD = Standard Deviation.

The Scientist's Toolkit: Research Reagent Solutions

| Item/Software | Function in Zero-Inflation Research |
|---|---|
| phyloseq (R) | Core package for organizing OTU table, taxonomy, and sample data. Enables seamless filtering, aggregation, and basic analysis. |
| metagenomeSeq (R) | Provides fitZig, which uses a zero-inflated Gaussian (ZIG) mixture model, and fitFeatureModel, which uses a zero-inflated log-normal model, for differential abundance testing. |
| glmmTMB (R) | Flexible package for fitting ZINB and Hurdle models with random effects, crucial for complex study designs (e.g., longitudinal). |
| ANCOM-BC (R) | A methodology for differential abundance testing that accounts for sampling fractions and zeros through bias correction and log-ratio analysis. |
| MicrobiomeDDA (Web) | A benchmarking tool to compare the performance of various differential abundance methods (including ZINB, DESeq2, edgeR) on zero-inflated data. |
| QIIME 2 (Pipeline) | Offers plugins like q2-composition for compositional methods (e.g., ANCOM) and q2-gneiss for log-ratio modeling, alternative approaches to handle zeros. |

Visualization: Analytical Workflow & Model Logic

Diagram 1: Decision Pathway for Zero-Inflated OTU Data Analysis

Diagram 2: Two-Part Logic of a Zero-Inflated Model (ZINB)

Troubleshooting Guide & FAQs

Q1: After filtering my zero-inflated OTU table based on a prevalence threshold, my beta-diversity results change dramatically. Is this expected, and how do I choose a stable threshold?

A1: Yes, this is expected. Prevalence filtering removes rare taxa, which can significantly impact distance metrics like Unifrac or Bray-Curtis. To choose a stable threshold:

- Perform sensitivity analysis by running your downstream analysis (e.g., PCoA, PERMANOVA) across a range of prevalence thresholds (e.g., 1%, 5%, 10%, 20%).

- Plot the resulting effect size (e.g., PERMANOVA R²) or the stability of clustering patterns against the threshold.

- Select a threshold where the results begin to stabilize (the "elbow" in the plot), not necessarily the highest threshold. A common practice in zero-inflated data is to retain features present in at least 10-20% of samples, but this is dataset-dependent.

Table 1: Impact of Prevalence Filtering on Beta-Diversity Results

| Prevalence Threshold | OTUs Retained | PERMANOVA R² (Group Effect) | Bray-Curtis Dispersion |
|---|---|---|---|
| No Filtering | 15,342 | 0.08 (p=0.12) | 0.85 |
| 1% (≥3 samples) | 2,150 | 0.15 (p=0.045) | 0.78 |
| 5% (≥14 samples) | 875 | 0.22 (p=0.012) | 0.71 |
| 10% (≥28 samples) | 402 | 0.23 (p=0.011) | 0.70 |
| 20% (≥56 samples) | 155 | 0.21 (p=0.018) | 0.72 |

Q2: Should I filter based on total abundance (read count) or relative abundance? My low-biomass samples are disproportionately affected by abundance filtering.

A2: For zero-inflated OTU tables, filtering based on total abundance (absolute read count) is generally recommended over relative abundance. Relative abundance filtering can be biased by highly abundant taxa in a few samples. However, for low-biomass studies:

- Use a sample-specific or normalized metric: Instead of a global sum threshold, consider filtering OTUs that do not achieve a minimum count in at least X% of samples (linking abundance to prevalence).

- Protocol: First, perform prevalence filtering. Then, for the prevalent OTUs, apply a minimum abundance threshold (e.g., >10 reads) where the OTU is present. This avoids penalizing an OTU that is consistently present at low, biologically relevant levels in low-biomass samples.

- Alternative: Use a mean abundance threshold (e.g., mean > 5 across all samples) which is less sensitive to single outlier counts than total sum.

Q3: How do I interpret the joint distribution of prevalence and abundance for filtering decisions?

A3: Visualizing the Prevalence-Abundance (PA) plot is crucial. It helps distinguish true rare taxa from potential artifacts.

- Protocol: Create a scatter plot with log10(Mean Abundance) on the Y-axis and Prevalence (%) on the X-axis for all OTUs.

- Interpretation: Most OTUs will cluster in the low-prevalence, low-abundance corner (technical noise/rare biosphere). The high-prevalence, moderate-to-high-abundance region contains your likely core community. Filtering decisions involve drawing a vertical line (prevalence cutoff) and a horizontal line (abundance cutoff) to isolate the core cluster.

- Action: OTUs in the low-prevalence, high-abundance quadrant may be contaminants or transient taxa and should be investigated manually.
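The quantities behind the PA plot, and the quadrant each OTU falls into, can be tabulated as in the sketch below. The function name, quadrant labels, and cutoff values are hypothetical choices for illustration; plotting the two numeric columns against each other gives the PA plot itself.

```python
import numpy as np
import pandas as pd

def pa_table(counts, prev_cut=20.0, abund_cut=5.0):
    """Prevalence (%) and log10 mean abundance per taxon, plus a quadrant label.
    counts: samples x taxa; the two cutoffs are illustrative, not recommendations."""
    prevalence_pct = (counts > 0).mean(axis=0) * 100
    mean_abundance = counts.mean(axis=0)
    high_prev = prevalence_pct >= prev_cut
    high_abund = mean_abundance >= abund_cut
    quadrant = np.select(
        [high_prev & high_abund, ~high_prev & high_abund, high_prev & ~high_abund],
        ["core", "investigate", "prevalent_low_abundance"],
        default="rare_or_noise",
    )
    return pd.DataFrame(
        {
            "prevalence_pct": prevalence_pct,
            # Taxa with zero mean abundance get NaN instead of -inf.
            "log10_mean_abundance": np.log10(mean_abundance.where(mean_abundance > 0)),
            "quadrant": quadrant,
        }
    )

# Hypothetical toy table with ten samples.
counts = pd.DataFrame(
    {
        "otu_core": [50] * 10,
        "otu_bloom": [0] * 9 + [400],   # one sample, very abundant
        "otu_low": [1, 1, 1, 0, 1, 0, 1, 0, 1, 0],
        "otu_rare": [0, 2] + [0] * 8,
    }
)

pa = pa_table(counts)
print(pa)
```

Taxa labelled "investigate" correspond to the low-prevalence, high-abundance quadrant that the text recommends checking manually.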

Q4: What is a robust experimental workflow for filtering a zero-inflated OTU table prior to differential abundance testing?

A4: A conservative, step-wise workflow is recommended to minimize false positives.

Protocol:

- Prevalence Filter: Remove OTUs present in fewer than a defined percentage of samples (e.g., 10%). This targets the excess zeros.

- Abundance Filter: On the prevalent OTUs, apply a minimum total abundance cutoff (e.g., >20 reads across all samples).

- Variance Filter (Optional but Recommended): Retain the top N (e.g., 20%) most variable OTUs across samples or perform variance-stabilizing transformation. This focuses the analysis on features with signal strong enough for statistical testing.

- Normalization: Apply a compositional data-aware normalization (e.g., CSS, Median Ratio, or a centered log-ratio transform on the filtered table) after filtering steps.
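The step-wise workflow can be sketched as a short pandas pipeline. This example covers the prevalence filter, the total-abundance filter, and a centered log-ratio (CLR) transform, and leaves out the optional variance filter; the function names, the pseudocount of 0.5, and the toy table are assumptions made for illustration.

```python
import numpy as np
import pandas as pd

def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform per sample (rows); the pseudocount handles zeros."""
    logged = np.log(counts + pseudocount)
    return logged.sub(logged.mean(axis=1), axis=0)

def filter_workflow(counts, min_prev=0.20, min_total=20):
    """Prevalence filter, then total-abundance filter, then CLR normalization."""
    prevalent = counts.loc[:, (counts > 0).mean(axis=0) >= min_prev]
    abundant = prevalent.loc[:, prevalent.sum(axis=0) > min_total]
    return clr(abundant)

# Hypothetical toy table with ten samples.
counts = pd.DataFrame(
    {
        "otu_a": [100] * 10,
        "otu_b": [12, 0, 12, 0, 12, 0, 12, 0, 12, 0],  # 50% prevalence, 60 reads
        "otu_c": [0, 0, 0, 3, 0, 0, 0, 0, 0, 0],       # 10% prevalence
        "otu_e": [2, 0, 2, 0, 2, 0, 0, 0, 0, 0],       # 30% prevalence, 6 reads
    }
)

normalized = filter_workflow(counts)
print(normalized.round(3))
```

Normalization is applied last so that the log-ratios are computed only over the taxa that survive filtering.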

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Filtering & Analysis of Zero-Inflated Microbiome Data

| Tool / Reagent Category | Specific Example(s) | Function in Filtering Context |
|---|---|---|
| Bioinformatics Pipeline | QIIME 2, mothur, DADA2 | Generates the raw OTU/ASV feature table from sequencing reads, the primary input for filtering. |
| R/Bioconductor Packages | phyloseq, microbiome, metagenomeSeq, DESeq2, MaAsLin2 | Provides functions for calculating prevalence/abundance, applying filters, visualizing PA plots, and performing downstream statistical analysis. |
| Negative Control Reagents | DNA Extraction Kit Blanks, PCR Water Blanks, Sterile Swabs | Essential for identifying contaminant sequences that typically appear as low-prevalence, variable-abundance features. Used to inform filtering thresholds. |
| Mock Community Standards | ZymoBIOMICS Microbial Community Standard | Provides known composition and abundance data to benchmark filtering and normalization performance, ensuring they do not distort true biological signal. |
| High-Quality DNA Extraction Kits | MagAttract PowerSoil DNA Kit, DNeasy PowerLyzer Kit | Maximizes yield and consistency from low-biomass samples, reducing technical zeros and improving the reliability of abundance metrics. |
| Statistical Software | R, Python (with pandas, scikit-bio) | Platform for implementing custom filtering scripts, sensitivity analyses, and generating publication-quality visualizations of metric distributions. |

Within the context of a thesis on Filtering strategies for zero-inflated OTU tables, understanding the default and standard pre-filtering practices of major bioinformatics pipelines is crucial. These practices directly impact the structure of the resulting OTU/ASV table, influencing downstream statistical power and ecological inference. This technical support center addresses common issues researchers, scientists, and drug development professionals face when implementing these standard practices.

FAQs & Troubleshooting Guides

Q1: In QIIME 2, what does the --p-min-frequency parameter do in feature-table filter-features, and what is a typical starting value?

A: This is an abundance-based (total frequency) filter, not a prevalence filter. It removes features (OTUs/ASVs) whose total frequency (summed across all samples) is below the threshold, which targets low-abundance, potentially spurious sequences. Prevalence is controlled separately with --p-min-samples. A common starting value is to filter features with fewer than 10 total reads, but this is dataset-dependent. Issue: Setting this too high may remove rare but biologically real taxa. Troubleshooting: Visualize the per-feature frequency distribution (qiime feature-table summarize) to identify a natural cut-off before a long tail of very low-frequency features.

Q2: When using mothur's split.abund command, what is the difference between minab and minsize?

A: Both are part of mothur's analogue to minimum frequency filtering. minsize specifies the minimum total abundance for an OTU to be kept in the "abundant" group. minab specifies the minimum abundance for an OTU in any one sample to be considered "abundant" in that sample. Issue: Confusion between global (OTU-level) and local (sample-OTU) thresholds. Troubleshooting: For standard global frequency filtering, use split.abund with a minsize parameter (e.g., minsize=10) and work with the *.abund file.

Q3: What is the purpose of filtering singletons or doubletons, and how is it done in these pipelines? A: Singletons (features appearing only once) and doubletons (twice) are often considered potential sequencing errors. Removing them is a standard pre-filtering step to de-noise data.

- QIIME 2: Use feature-table filter-features with --p-min-frequency 2 to remove singletons, or --p-min-frequency 3 for singletons and doubletons.

- mothur: Use split.abund with minsize=2 or 3.

Issue: Aggressive removal may eliminate rare biosphere signals. Troubleshooting: Consider a more conservative approach if studying environments expected to have high microbial diversity and many rare taxa. The decision should be justified in the thesis context of zero-inflation trade-offs.

Q4: How do I filter samples with low sequence depth in QIIME 2 and mothur, and why is it important? A: Samples with very low reads are under-sampled and can appear as outliers, skewing diversity metrics and introducing zeros due to poor sampling rather than true absence.

- QIIME 2: Use feature-table filter-samples with --p-min-frequency. The threshold is often determined by visualizing sample read depths via qiime feature-table summarize.

- mothur: Use remove.groups after generating a shared file or use the minpc parameter in commands like sub.sample.

Issue: Removing too many samples compromises statistical power. Troubleshooting: The threshold is study-specific. Often, the minimum depth is set to a value that retains the majority of samples (e.g., the lowest depth within 50% of the median). Rarefaction curves can inform adequacy of depth.

Q5: What is the standard workflow order for pre-filtering steps? A: A typical, conservative order is:

- Filter samples by minimum sequencing depth.

- Filter features by minimum total frequency (e.g., remove singletons).

- (Optional) Filter features by prevalence (e.g., retain features present in at least n samples).

Reversing the first two steps can waste computational effort filtering features from samples that will later be discarded.
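The ordering above can be illustrated on an in-memory table. This pandas sketch is an analogue of the pipeline commands rather than a call into QIIME 2 or mothur; the function name, thresholds, and toy counts are hypothetical.

```python
import pandas as pd

def prefilter(counts, min_depth=1000, min_frequency=2, min_samples=2):
    """Apply the three pre-filtering steps in order. counts: samples x features."""
    # Step 1: drop samples below the minimum sequencing depth.
    kept = counts.loc[counts.sum(axis=1) >= min_depth]
    # Step 2: drop features whose total frequency is below the threshold.
    kept = kept.loc[:, kept.sum(axis=0) >= min_frequency]
    # Step 3 (optional): drop features seen in too few samples.
    kept = kept.loc[:, (kept > 0).sum(axis=0) >= min_samples]
    return kept

# Hypothetical toy table: s4 is a shallow library.
counts = pd.DataFrame(
    {
        "otu_main": [1500, 2000, 1800, 2],
        "otu_shallow_only": [0, 0, 0, 10],   # seen only in the discarded sample
        "otu_singleton": [1, 0, 0, 0],
        "otu_one_sample": [40, 0, 0, 0],
        "otu_two_samples": [5, 7, 0, 0],
    },
    index=["s1", "s2", "s3", "s4"],
)

filtered = prefilter(counts)
print(filtered)
```

Because feature totals are recomputed after the shallow sample is removed, a feature that was found only in that sample disappears in step 2 without needing a separate rule.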

Title: Standard Pre-filtering Workflow Order

Table 1: Common default or recommended starting parameters for pre-filtering in QIIME 2 and mothur. These are heuristics and must be validated per study.

| Filtering Goal | QIIME 2 Command | QIIME 2 Typical Starting Parameter | mothur Command | mothur Typical Starting Parameter | Rationale |
|---|---|---|---|---|---|
| Min. Sample Depth | feature-table filter-samples | --p-min-frequency (e.g., 1000) | remove.groups or sub.sample(minpc) | Depth at ~50% of median | Remove under-sampled libraries |
| Min. Feature Frequency | feature-table filter-features | --p-min-frequency 2 (no singletons) | split.abund | minsize=2 | Remove potential sequencing errors |
| Feature Prevalence | feature-table filter-features | --p-min-samples 2 (in ≥2 samples) | remove.rare | mintotal=1 & minshared=2 | Remove likely spurious features |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and computational tools for implementing pre-filtering strategies.

| Item | Function/Description | Example/Note |
|---|---|---|
| Curated Reference Database | For taxonomic assignment; impacts which features are considered "noise." | SILVA, Greengenes, UNITE. Version choice is critical. |
| Positive Control DNA (Mock Community) | Validates sequencing run and informs threshold for filtering spurious sequences. | ZymoBIOMICS, ATCC MSA-1000. |
| Negative Control Samples | Identifies reagent/environmental contaminants for later removal. | Extraction blanks, PCR water blanks. |
| High-Performance Computing (HPC) Cluster | Runs resource-intensive filtering and downstream analyses on large datasets. | Slurm, SGE job schedulers. |
| Bioinformatics Pipeline Manager | Ensures reproducibility and automates multi-step filtering workflows. | Nextflow, Snakemake, Galaxy. |
| R/Python Environment | For custom analysis of filtering impacts, zero-inflation diagnostics, and plotting. | phyloseq, vegan (R), qiime2 (Python). |

Title: Filtering's Role in Thesis Research Flow

A Practical Toolkit: Step-by-Step Guide to Modern Zero-Inflated OTU Filtering Methods

Troubleshooting Guides & FAQs

Q1: What are typical minimum prevalence thresholds used in microbial ecology studies, and how do I choose one?

A: Common thresholds are derived from literature and dataset scale. A baseline recommendation is to filter out Operational Taxonomic Units (OTUs) or Amplicon Sequence Variants (ASVs) present in fewer than 10% of samples. For smaller studies (<50 samples), a higher threshold (e.g., 20-30%) may be necessary to reduce false positives. The choice is a trade-off between removing spurious noise and retaining biologically rare but real taxa. See Table 1 for a summary.

Q2: After applying a prevalence filter, my beta diversity results changed dramatically. Is this expected?

A: Yes, this is a known and intended effect. Zero-inflated OTU tables contain a high proportion of low-prevalence features, often due to sequencing errors or contamination. Filtering these out reduces the dimensionality and noise, allowing the underlying ecological signal to dominate. A dramatic shift suggests your initial analysis was heavily influenced by these spurious features. Validate by comparing the stability of results across a range of thresholds.

Q3: Should I apply prevalence filtering before or after rarefaction or other normalization steps?

A: Prevalence filtering should be applied before rarefaction or other count-based normalization. The logic is to first remove features that are likely technical artifacts (low prevalence) based on their distribution across samples, then normalize the remaining "more reliable" data for library size differences. The standard workflow is: Quality Control → Prevalence Filtering → Normalization (e.g., rarefaction, CSS) → Downstream Analysis.

Q4: How do I implement a minimum prevalence threshold in R using the phyloseq package?

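A: Use the filter_taxa() function with a per-taxon predicate and prune = TRUE. For example, to retain taxa present in at least 5 samples, pass the predicate function(x) sum(x > 0) >= 5.

To filter by fraction (e.g., 10% of samples), use function(x) mean(x > 0) >= 0.10 instead.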
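A minimal runnable sketch of both variants is given here. The toy counts, the `Group` column, and the object names are assumptions made for illustration; only `phyloseq()`, `otu_table()`, `sample_data()`, and `filter_taxa()` come from the phyloseq package.

```r
library(phyloseq)

# Toy data: 4 taxa x 20 samples (hypothetical counts).
n_samples <- 20
counts <- matrix(0, nrow = 4, ncol = n_samples,
                 dimnames = list(paste0("OTU", 1:4), paste0("S", 1:n_samples)))
counts["OTU1", ]    <- 20  # present in all 20 samples
counts["OTU2", 1:5] <- 5   # present in 5 samples (25%)
counts["OTU3", 1:3] <- 3   # present in 3 samples (15%)
counts["OTU4", 1]   <- 1   # present in 1 sample (5%)

metadata <- data.frame(Group = rep(c("A", "B"), each = n_samples / 2),
                       row.names = colnames(counts))
ps <- phyloseq(otu_table(counts, taxa_are_rows = TRUE), sample_data(metadata))

# Retain taxa detected (count > 0) in at least 5 samples.
ps_min5 <- filter_taxa(ps, function(x) sum(x > 0) >= 5, prune = TRUE)

# Retain taxa detected in at least 10% of samples.
ps_frac <- filter_taxa(ps, function(x) mean(x > 0) >= 0.10, prune = TRUE)

ntaxa(ps_min5)
ntaxa(ps_frac)
```

With `prune = TRUE` the function returns the pruned object directly; with the default `prune = FALSE` it returns a logical vector that can be inspected before anything is removed.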

Q5: Does prevalence filtering risk removing truly rare but important taxa?

A: This is a central thesis consideration. Yes, aggressive filtering can remove low-abundance, specialized taxa. The strategy must align with your research question. If studying "core" microbiota or broad community patterns, stricter filtering is beneficial. If investigating rare biosphere dynamics, a more lenient threshold or complementary methods (e.g., combined prevalence- and abundance-based filtering) is required. Always report your threshold and justify it.

Data Presentation

Table 1: Common Prevalence Thresholds in Published Studies

| Study Focus (Sample Size Range) | Typical Minimum Prevalence Threshold | Rationale |

|---|---|---|

| Human Gut Microbiota (100-1000s) | 10-20% of samples | Removes sequencing artifacts while preserving low-abundance community members. |

| Low-Biomass Environments (e.g., skin, air) (50-200) | 20-30% of samples | More aggressive filtering to combat high contamination and stochasticity. |

| Longitudinal/Spatial Studies (10s-100s) | 25-50% of time points/sites | Ensures features are persistent across the studied gradient. |

| Differential Abundance Testing | 5-10% of samples in smallest group | Maintains statistical power for between-group comparisons. |

Table 2: Impact of Prevalence Filtering on a Simulated Zero-Inflated Dataset

| Filtering Threshold (% of samples) | OTUs Remaining | Total Zeros Removed (%) | PERMANOVA R² (Group Effect) |

|---|---|---|---|

| No Filter (0%) | 1,500 | 0% | 0.08 |

| 5% | 400 | 62% | 0.15 |

| 10% | 180 | 85% | 0.22 |

| 20% | 75 | 94% | 0.23 |

Experimental Protocols

Protocol: Determining an Optimal Prevalence Threshold

- Input: A raw OTU/ASV table (counts) and sample metadata.

- Pre-processing: Remove any control samples and samples with extremely low read counts (e.g., <1,000 reads).

- Threshold Sweep: For a sequence of prevalence thresholds (e.g., 1%, 5%, 10%, 20%, 30% of samples): a. Create a filtered OTU table, removing all features with prevalence below the threshold. b. Calculate alpha diversity (e.g., Shannon Index) and beta diversity (e.g., Bray-Curtis dissimilarity) on the filtered table. c. If a group factor exists (e.g., Healthy vs. Diseased), compute the PERMANOVA R² value explaining group separation based on the filtered beta diversity matrix.

- Stability Assessment: Plot the number of retained features, beta diversity dispersion within groups, and PERMANOVA R² (if applicable) against the threshold.

- Selection: Choose the threshold where the rate of feature loss plateaus and the ecological signal (e.g., R²) stabilizes or peaks before becoming unstable due to excessive data removal.
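The threshold sweep can be sketched in base R as follows. This is an illustration on simulated zero-inflated counts, not a definitive implementation: the simulation settings are arbitrary assumptions, and the PERMANOVA step is omitted here to keep the example free of package dependencies.

```r
set.seed(1)
n_otus <- 200
n_samples <- 40

# Hypothetical zero-inflated counts: each OTU has its own detection probability.
detect_prob <- rbeta(n_otus, 0.3, 1.5)
counts <- t(sapply(detect_prob, function(p) {
  rbinom(n_samples, 1, p) * rpois(n_samples, 20)
}))

prevalence <- rowMeans(counts > 0)          # fraction of samples with count > 0
thresholds <- c(0.01, 0.05, 0.10, 0.20, 0.30)

# Shannon index of one sample (vector of counts).
shannon <- function(x) {
  p <- x[x > 0] / sum(x)
  -sum(p * log(p))
}

sweep_result <- do.call(rbind, lapply(thresholds, function(th) {
  kept <- counts[prevalence >= th, , drop = FALSE]
  data.frame(
    threshold     = th,
    otus_retained = nrow(kept),
    pct_zeros     = 100 * mean(kept == 0),
    mean_shannon  = mean(apply(kept, 2, shannon))
  )
}))
print(sweep_result)
```

Plotting `otus_retained` and the diversity summaries against `threshold` gives the stability curves described in the protocol.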

Protocol: Validation via Spike-in Controls

- Spike-in Design: Include known, low-abundance microbial cells or synthetic DNA sequences (not expected in samples) at consistent low levels across all samples during extraction.

- Sequencing & Bioinformatic Processing: Process samples through standard 16S rRNA gene sequencing and pipeline (clustering, taxonomy assignment).

- Prevalence Analysis: Calculate the prevalence of the spike-in OTUs/ASVs in the non-filtered data. True spike-ins should be present in 100% of samples. Any spillover OTUs not corresponding to the spike-in are noise.

- Filtering Test: Apply increasing prevalence thresholds. The "true" spike-in features should persist until very high thresholds (e.g., 95-100%), while the noise spillovers should be eliminated at low thresholds (e.g., 5-10%).

- Threshold Justification: Use the results to support a threshold that removes spillover noise while retaining true low-abundance signals.

Mandatory Visualization

Title: Prevalence Filtering Workflow & Validation Loop

Title: Conceptual Effect of Prevalence Filtering on OTU Table

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Prevalence Filtering Validation

| Item | Function & Relevance to Prevalence Filtering |

|---|---|

| Synthetic Microbial Community (SynBio) Standards | Defined mixtures of known microbial strains at varying ratios. Used to benchmark filtering thresholds by assessing recovery of low-abundance but consistent members. |

| ZymoBIOMICS Microbial Community Standards | Well-characterized, stable microbial cell mixtures. Provides a "ground truth" for expected prevalence, allowing calibration of minimum sample count thresholds. |

| Mock Community DNA (e.g., ATCC MSA-1000) | Genomic DNA from known species. Spiked into experimental samples to track and differentiate true low-prevalence signals from cross-talk/contamination during sequencing. |

| Blank Extraction Kits & Negative PCR Controls | Essential for identifying contaminant sequences originating from reagents. Sequences appearing only in negatives must be filtered; their prevalence in true samples informs threshold choice. |

| Bioinformatic Packages (phyloseq, decontam) | Software providing functions (filter_taxa, isContaminant) to implement prevalence-based filtering and cross-reference prevalence in samples vs. negative controls. |

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Reduces PCR errors that can create spurious, low-prevalence ASVs, thereby decreasing the need for overly aggressive filtering. |

Troubleshooting Guides & FAQs

Q1: What is the primary difference between applying a Minimum Total Abundance cutoff versus a Mean Abundance cutoff, and how do I choose?

A: A Minimum Total Abundance cutoff filters out OTUs whose summed abundance across all samples falls below a specified threshold (e.g., < 10 reads total). A Mean Abundance cutoff removes OTUs with an average abundance per sample below a threshold (e.g., mean < 0.1%). The choice depends on your experimental design and zero-inflation pattern. Use Minimum Total Abundance for general noise reduction. Use Mean Abundance, computed on relative abundances, when sequencing depth is highly uneven or when comparing datasets of different sizes, because it scales with neither library size nor the number of samples.
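A small base R example makes the difference concrete. The toy table is hypothetical: three shallow samples and one very deep sample, with cutoffs of 10 reads (total) and 0.1% (mean relative abundance) taken from the examples in the answer.

```r
# Rows = OTUs, columns = samples; S4 is sequenced 100x deeper than S1-S3.
counts <- rbind(
  OTU_A = c(  2,   2,   2,     0),  # low but consistent in shallow samples
  OTU_B = c(  0,   0,   0,    15),  # only seen in the deep sample
  OTU_C = c(998, 998, 998, 99985)   # dominant taxon
)
colnames(counts) <- paste0("S", 1:4)

# Minimum total abundance: summed raw reads across samples.
total_keep <- rowSums(counts) >= 10

# Mean abundance: average within-sample relative abundance, in percent.
rel_pct   <- sweep(counts, 2, colSums(counts), "/") * 100
mean_keep <- rowMeans(rel_pct) >= 0.1

data.frame(total_reads = rowSums(counts),
           mean_rel_pct = rowMeans(rel_pct),
           total_keep, mean_keep)
```

Here the total-abundance rule keeps OTU_B, whose reads all come from the deep sample, and drops OTU_A, while the mean relative abundance rule does the opposite.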

Q2: After applying a minimum total abundance filter, my beta-diversity results became less significant (higher p-values). What went wrong?

A: This is a common pitfall. Overly stringent filtering can remove genuinely rare but biologically important taxa, distorting community structure. The filter may have removed condition-specific, low-abundance OTUs that contributed to separation between groups. Troubleshoot by:

- Re-running the analysis with a less stringent cutoff (e.g., total abundance > 5 instead of > 20).

- Comparing the list of filtered OTUs to a taxonomy table to see if phylogenetically relevant groups were lost.

- Using a phased filtering approach: filter first by total abundance, then by prevalence (percentage of samples present), which is often more robust for zero-inflated data.

Q3: How do I determine the optimal numerical cutoff value for my dataset?

A: There is no universal value. The cutoff should be informed by your data's characteristics and research question. Follow this protocol:

- Visualize Distributions: Plot the log-transformed total abundance or mean abundance of all OTUs. Look for a natural break or "elbow" in the distribution.

- Iterative Testing: Perform downstream analysis (e.g., alpha/beta-diversity) at multiple cutoff values (e.g., 0.01%, 0.1%, 1% mean abundance).

- Stability Check: Assess the stability of your core conclusions (e.g., PERMANOVA R² value) across cutoffs. Choose a cutoff within a range where results are stable.

- Justify: Report the chosen cutoff and the rationale (e.g., "We applied a mean abundance cutoff of 0.1% to remove spurious OTUs while retaining 95% of the total sequence count.").

Q4: Does abundance-based filtering introduce bias in comparative studies between treatment and control groups?

A: Yes, it can, if not applied carefully. If one group (e.g., a treatment) has systematically lower overall microbial load, a fixed total abundance cutoff could disproportionately remove OTUs from that group. To mitigate this:

- Apply filters to the entire dataset as a whole before splitting into groups for comparative analysis.

- Consider using within-sample relative abundance cutoffs (e.g., retain OTUs > 0.01% in at least X samples) as a complementary step.

- Always report filtering parameters in full and consider a sensitivity analysis as part of your thesis methodology.
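The group-bias problem and the relative-abundance mitigation can be illustrated with a short base R sketch. The function name `filter_rel_abund`, its defaults, and the toy data are assumptions for illustration only.

```r
# Keep features whose within-sample proportion exceeds min_rel
# in at least min_samples samples. Applied to the whole table at once.
filter_rel_abund <- function(counts, min_rel = 1e-4, min_samples = 3) {
  rel  <- sweep(counts, 2, colSums(counts), "/")
  keep <- rowSums(rel > min_rel) >= min_samples
  counts[keep, , drop = FALSE]
}

# Hypothetical data: control samples have 10x the depth of treatment samples.
counts <- rbind(
  OTU_X = c(  50,   50,   50,   5,   5,   5),  # 0.5% everywhere
  OTU_Y = c(   0,    0,    0,  10,  10,  10),  # 1% in treatment only
  OTU_Z = c(9950, 9950, 9950, 985, 985, 985)
)
colnames(counts) <- c(paste0("Ctrl", 1:3), paste0("Trt", 1:3))

by_total <- counts[rowSums(counts) >= 50, , drop = FALSE]  # fixed total cutoff
by_rel   <- filter_rel_abund(counts)                        # 0.01% in >= 3 samples

rownames(by_total)
rownames(by_rel)
```

The fixed total cutoff discards OTU_Y purely because the treatment libraries are shallower, whereas the within-sample relative cutoff retains it.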

Q5: My software (QIIME2, mothur) excludes singletons by default. Should I apply an additional abundance filter?

A: Yes, singleton removal (total abundance = 1) is a very basic first step primarily aimed at PCR/sequencing errors. Additional abundance-based filtering is typically required to address the broader challenge of zero-inflation and to focus on reproducible, non-transient taxa. A subsequent mean or total abundance filter helps remove OTUs that are persistent but in such low numbers that they are unlikely to be reliably measured or biologically meaningful in your experimental context.

Data Presentation: Effect of Filtering Cutoffs on a Zero-Inflated OTU Table

Table 1: Impact of Sequential Filtering Strategies on OTU Table Characteristics. Simulated data from a 16S rRNA gene sequencing study with 100 samples across two groups.

| Filtering Step | OTUs Remaining | % of Total OTUs | Total Sequences Retained | Mean % Zeros per OTU |

|---|---|---|---|---|

| Raw Table | 5,250 | 100.0% | 1,250,000 | 91.2% |

| Remove Singletons (Total Abun. =1) | 3,800 | 72.4% | 1,248,550 | 88.7% |

| Total Abundance ≥ 10 | 1,150 | 21.9% | 1,240,105 | 62.1% |

| Mean Abundance ≥ 0.001% | 980 | 18.7% | 1,237,800 | 58.4% |

| Combine: Total Abun. ≥10 & Mean Abun. ≥0.001% | 875 | 16.7% | 1,235,650 | 55.0% |

| Add Prevalence ≥ 5% | 520 | 9.9% | 1,220,400 | 12.3% |

Table 2: Downstream Analysis Results Under Different Mean Abundance Cutoffs

| Mean Abundance Cutoff | Observed OTUs (Alpha Diversity) | PERMANOVA R² (Group Effect) | PERMANOVA p-value |

|---|---|---|---|

| No Filter | 5250 ± 210 | 0.08 | 0.051 |

| 0.0001% | 3100 ± 185 | 0.11 | 0.023 |

| 0.001% | 980 ± 95 | 0.15 | 0.004 |

| 0.01% | 300 ± 45 | 0.18 | 0.002 |

| 0.1% | 85 ± 22 | 0.22 | 0.001 |

Experimental Protocols

Protocol 1: Determining and Applying a Minimum Total Abundance Cutoff (Using R)

- Load Data: Import your OTU table (e.g., otu_matrix.csv) into R as a data frame with OTUs as rows and samples as columns.

- Calculate Total Abundance: Compute row sums, one per OTU: otu_sums <- rowSums(otu_table)

- Visualize: Create a histogram: hist(log10(otu_sums), breaks=50, main="Distribution of OTU Total Abundance"). Identify the point where the long tail of low-abundance OTUs begins.

- Set Threshold: Define a threshold (e.g., 10): threshold <- 10

- Filter: Subset the OTU table: filtered_otu_table <- otu_table[otu_sums >= threshold, ]

- Record: Document the number of OTUs removed and retained.

Protocol 2: Iterative Evaluation of Mean Abundance Cutoffs for Beta-Diversity Stability

- Define Cutoffs: Create a vector of candidate cutoffs (e.g., cutoffs <- c(0, 0.0001, 0.001, 0.01, 0.1), representing percentages).

- Loop Analysis: For each value cutoff in cutoffs: a. Calculate mean abundance per OTU: mean_abun <- rowMeans(otu_table_rel) (otu_table_rel is the relative abundance table, expressed in percent to match the cutoffs). b. Filter: temp_otu <- otu_table[mean_abun >= cutoff, ]. c. Calculate Bray-Curtis dissimilarity: bc_dist <- vegdist(t(temp_otu), method="bray"). d. Run PERMANOVA: adonis2(bc_dist ~ Group, data=metadata). e. Store the resulting R² and p-value.

- Plot Results: Generate a line plot of R² values against log10(cutoff), omitting or offsetting the zero cutoff because its logarithm is undefined. The "optimal" range is often a plateau before a steep drop.

- Select Cutoff: Choose a value at the beginning of the stable plateau for a conservative approach.
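The loop in Protocol 2 can be written out as a runnable sketch using the vegan package. The simulated counts, the `Group` labels, and the number of permutations are assumptions made for illustration; `vegdist()` and `adonis2()` are the vegan functions named in the protocol.

```r
library(vegan)

set.seed(42)
n_samples <- 20
metadata <- data.frame(
  Group = factor(rep(c("Control", "Treatment"), each = n_samples / 2))
)

# Hypothetical counts: 10 abundant OTUs (5 shifted between groups)
# plus 90 sparse, low-count OTUs.
abundant <- t(sapply(1:10, function(i) {
  mu <- if (i <= 5) ifelse(metadata$Group == "Treatment", 300, 100) else 200
  rpois(n_samples, mu)
}))
rare <- matrix(rbinom(90 * n_samples, 1, 0.1) * rpois(90 * n_samples, 3), nrow = 90)
otu_counts <- rbind(abundant, rare)
rownames(otu_counts) <- paste0("OTU", seq_len(nrow(otu_counts)))
colnames(otu_counts) <- rownames(metadata) <- paste0("S", seq_len(n_samples))

# Relative abundance in percent, so the cutoffs are also in percent.
otu_rel   <- sweep(otu_counts, 2, colSums(otu_counts), "/") * 100
mean_abun <- rowMeans(otu_rel)

cutoffs <- c(0, 0.0001, 0.001, 0.01, 0.1)
results <- do.call(rbind, lapply(cutoffs, function(cutoff) {
  kept <- otu_counts[mean_abun >= cutoff, , drop = FALSE]
  bc   <- vegdist(t(kept), method = "bray")          # samples must be rows
  fit  <- adonis2(bc ~ Group, data = metadata, permutations = 199)
  data.frame(cutoff = cutoff, n_otus = nrow(kept),
             R2 = fit$R2[1], p_value = fit[["Pr(>F)"]][1])
}))
print(results)
```

If a stringent cutoff leaves a sample with no reads at all, Bray-Curtis is undefined for that sample, so such samples would need to be dropped before the PERMANOVA step.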

Diagrams

Title: Abundance Filtering Workflow for OTU Tables

Title: Decision Logic for Choosing Abundance Filter Type

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Abundance-Based Filtering Analysis |

|---|---|

| QIIME 2 (qiime2.org) | A plugin-based platform that provides tools like feature-table filter-features to apply total frequency and prevalence cutoffs within a reproducible workflow. |

| R with phyloseq/dada2 | Software environment for custom calculation of OTU sums/means, visualization of distributions, and iterative testing of cutoff values. |

| DADA2 R Package | While primarily for ASV inference, its filterAndTrim function quality-filters and trims reads before inference, a foundational step that reduces spurious low-abundance variants. |

| MicrobiomeAnalyst | A web-based tool that includes a "Low Count Filter" module to remove features based on mean/median abundance or total counts. |

| Custom R/Python Scripts | Essential for implementing complex, iterative cutoff testing and stability analyses not covered by standard pipeline tools. |

| High-Performance Computing (HPC) Cluster | Needed for re-running computationally intensive beta-diversity and PERMANOVA tests multiple times during cutoff optimization. |

Technical Support Center: Troubleshooting Variance-Based Filtering

Troubleshooting Guides & FAQs

Q1: After applying variance-based filtering, my zero-inflated OTU table lost >90% of its features. Is this expected, and how can I adjust the threshold? A: Yes, this is a common occurrence in zero-inflated microbiome datasets where many OTUs are present in only a few samples. The filter may be too aggressive.

- Diagnosis: Calculate the variance or standard deviation for each OTU/feature and plot a histogram. You will likely see a highly right-skewed distribution.

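- Solution: Instead of using an absolute variance threshold, use a percentile-based approach: retain only the most variable X% of features. A typical starting point is the top 20-30% (see Table 1).

- Protocol: In R, using the phyloseq and matrixStats packages: extract the count matrix, compute the per-feature variance (e.g., with matrixStats::rowVars), and keep the features whose variance is at or above the chosen quantile.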
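A percentile-based variance filter might look like the following. This sketch uses base R only so that it runs without extra packages; the simulated matrix and the 20% fraction are assumptions, and `matrixStats::rowVars()` could replace the `apply()` call on large tables.

```r
set.seed(7)
n_features <- 100
n_samples  <- 30

# Hypothetical over-dispersed, zero-heavy counts (rows = features).
counts <- matrix(rnbinom(n_features * n_samples, mu = 5, size = 0.2),
                 nrow = n_features,
                 dimnames = list(paste0("OTU", 1:n_features),
                                 paste0("S", 1:n_samples)))

feature_var  <- apply(counts, 1, var)   # per-feature variance across samples
top_fraction <- 0.20                    # keep the top 20% most variable features
var_cutoff   <- quantile(feature_var, probs = 1 - top_fraction)

filtered <- counts[feature_var >= var_cutoff, , drop = FALSE]
nrow(filtered)
```

A histogram of `log10(feature_var + 1)` is a quick way to check how skewed the distribution is before committing to a fraction.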

Q2: How should I handle variance calculation when my data includes technical zeros (undetected) versus biological zeros (truly absent)? A: This is a critical distinction for zero-inflated data. Standard variance calculations treat all zeros equally, which can be misleading.

- Diagnosis: If your data is compositional (like 16S rRNA sequencing), apply a centered log-ratio (CLR) transformation after filtering to mitigate the compositionality effect before variance calculation.

- Solution: Implement a variance filter on CLR-transformed data, which provides a more robust measure of variability for compositional data.

- Protocol:

  1. Perform a mild prevalence filter first (e.g., retain features present in >10% of samples).

  2. Impute zeros with a simple method like substituting with half the minimum positive value per feature for the CLR step.

  3. Apply CLR transformation using the compositions or microViz R package.

  4. Calculate variance on the CLR-transformed matrix and apply your percentile cutoff.
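The four protocol steps can be sketched in base R as follows. The helper `clr_transform()` is a hand-written illustration rather than the compositions or microViz implementation, and the simulated counts and the 70th-percentile cutoff are assumptions.

```r
# CLR with simple zero replacement. Rows = features, columns = samples.
# Assumes every feature has at least one positive count (ensured by step 1).
clr_transform <- function(counts) {
  # Replace zeros with half the smallest positive count of each feature.
  imputed <- t(apply(counts, 1, function(x) {
    x[x == 0] <- min(x[x > 0]) / 2
    x
  }))
  log_x <- log(imputed)
  # Subtract each sample's mean log value (log of its geometric mean).
  sweep(log_x, 2, colMeans(log_x), "-")
}

set.seed(11)
counts <- matrix(rnbinom(60 * 20, mu = 10, size = 0.3), nrow = 60,
                 dimnames = list(paste0("OTU", 1:60), paste0("S", 1:20)))

# Step 1: mild prevalence filter (present in more than 10% of samples).
counts_prev <- counts[rowMeans(counts > 0) > 0.10, , drop = FALSE]

# Steps 2-3: zero replacement and CLR transformation.
clr <- clr_transform(counts_prev)

# Step 4: variance on the CLR scale, then a percentile cutoff (top 30% here).
clr_var  <- apply(clr, 1, var)
filtered <- counts_prev[clr_var >= quantile(clr_var, probs = 0.70), , drop = FALSE]
dim(filtered)
```

The filter is decided on the CLR scale, but the returned table still contains the original counts, which keeps it usable for count-based downstream tools.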

Q3: Does variance-based filtering introduce bias against low-abundance but consistently present OTUs? A: Yes, it can. Variance is scale-dependent; low-abundance features have a lower possible range of variance.

- Diagnosis: Check if your retained features are exclusively high-abundance. Correlate pre-filtering mean abundance with variance.

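- Solution: Consider using the coefficient of variation (CV = standard deviation / mean) as an alternative metric. Because it expresses variability relative to the mean, consistently present low-abundance OTUs are no longer penalized simply for their scale. Note that very sparse features also have a high CV, so apply a prevalence filter first.

- Protocol: Compute the CV for each feature on normalized counts and apply the same percentile cutoff as for variance.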
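The contrast between ranking by variance and ranking by CV can be shown on a three-feature toy table. The counts are hypothetical and chosen so that every feature is present in all samples.

```r
counts <- rbind(
  OTU_low_variable  = c(  1,   6,   1,   6,   1,   6),  # low abundance, large relative swings
  OTU_high_stable   = c(200, 210, 190, 205, 195, 200),  # high abundance, nearly constant
  OTU_high_variable = c( 50, 400,  60, 380,  40, 420)   # high abundance, large swings
)

feature_mean <- rowMeans(counts)
feature_var  <- apply(counts, 1, var)
feature_cv   <- sqrt(feature_var) / feature_mean   # CV = sd / mean

# Keep the two top-ranked features under each metric.
keep_by_var <- names(sort(feature_var, decreasing = TRUE))[1:2]
keep_by_cv  <- names(sort(feature_cv,  decreasing = TRUE))[1:2]

keep_by_var
keep_by_cv
```

Raw variance favors the abundant but nearly constant feature, whereas CV keeps the low-abundance feature whose relative variability is comparable to that of the abundant, variable one.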

Q4: I need a reproducible variance filtering workflow for my thesis methods section. What are the key steps? A: A robust, documented workflow is essential. See the provided Experimental Workflow Diagram and the detailed protocol below.

- Protocol: Detailed Variance Filtering for Zero-Inflated OTU Tables:

  1. Input: Raw OTU count table (N samples x M features).

  2. Pre-processing: Remove samples with library size below [Y] reads. Do NOT rarefy at this stage.

  3. Initial Prevalence Filter: Apply a minimal prevalence filter (e.g., features present in >5% of samples) to remove ultra-rare OTUs that skew variance calculation.

  4. Variance Metric Choice:

     - For downstream methods assuming normality (e.g., PCA, linear models), use Variance on CLR-transformed data.

     - For non-parametric downstream methods, Variance on normalized (e.g., CSS) counts may be sufficient.

     - To protect low-abundance signals, use Coefficient of Variation.

  5. Threshold Determination: Use a percentile-based threshold (e.g., top 20%, 30%, or 50% variable features) determined via exploratory data analysis (see Table 1).

  6. Application: Filter the OTU table.

  7. Output: Filtered OTU table for downstream analysis (differential abundance, beta-diversity, etc.).

Data Presentation

Table 1: Comparison of Variance Filtering Strategies on a Simulated Zero-Inflated OTU Dataset (n=100 samples, m=1500 features)

| Filtering Strategy | Threshold | Features Retained (%) | Avg. Zeros Removed (%) | Notes |

|---|---|---|---|---|

| No Filter | N/A | 1500 (100%) | 0% | Baseline; includes high noise. |

| Absolute Variance | Var > 10.0 | 205 (13.7%) | 95.1% | Highly aggressive; retains only highest abundance features. |

| Top Percentile (Variance) | Top 30% | 450 (30%) | 72.3% | Balanced; common starting point. |

| Coefficient of Variation | Top 30% | 450 (30%) | 68.8% | Retains more low-abundance, stable features. |

| Variance on CLR Data | Top 30% | 450 (30%) | 75.2% | Best for compositional data analysis. |

Mandatory Visualizations

Variance Filtering Workflow for Zero-Inflated Data

Logic of Variance-Based Feature Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Variance-Based Filtering Context |

|---|---|

| R (with phyloseq, microbiome, matrixStats packages) | Core computational environment for data manipulation, transformation, and variance calculation. |

| Python (with SciPy, pandas, scikit-bio) | Alternative environment for implementing custom filtering pipelines and machine learning workflows. |

| Centered Log-Ratio (CLR) Transformation | A critical transformation for compositional data that enables meaningful variance calculation by addressing the constant-sum constraint. |

| Non-Zero Prevalence Filter | A preliminary reagent (code function) to remove ultra-rare features that artifactually influence variance percentiles. |

| Percentile Threshold (e.g., 0.8) | A tunable parameter that acts as a "reagent" to control the stringency of the filter, determining the proportion of features retained. |

| Coefficient of Variation (CV) Metric | An alternative "measuring tool" to standard variance, used when the goal is to retain stable low-abundance features. |

| Jupyter Notebook / RMarkdown | Essential for documenting the exact filtering steps, parameters, and results to ensure full reproducibility for thesis research. |

Technical Support Center

Troubleshooting Guides

Issue: CSS Normalization Yielding Extreme Values or Errors

- Problem: After running cumNorm(), some samples have extremely high normalization factors or the function returns an error.

- Diagnosis: The CSS method scales each sample by the cumulative sum of its counts up to a data-driven percentile. In very sparse samples that percentile can fall on very low counts, so the scaling factor is computed from only a handful of reads and becomes unstable.

- Solution:

  - Inspect the percentile p returned by cumNormStat() or cumNormStatFast().

  - If the calculated percentile (p) is very low (e.g., near 0), manually set a more appropriate percentile using the p argument in cumNorm().

  - Use cumNormStatFast() for a more robust estimate, especially with large datasets.

  - Filter out OTUs with a total count below a threshold (e.g., < 10) before normalization, as recommended in research on filtering strategies for zero-inflated OTU tables.
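To see why the percentile matters, the idea behind the CSS scaling factor can be reproduced in a few lines of base R. This is a simplified sketch of the concept, not the metagenomeSeq code: the function names `css_factors()` and `css_normalize()`, the scale of 1000, and the toy counts are assumptions, and samples without any positive count are not handled.

```r
# Scaling factor per sample: sum of counts that do not exceed the
# p-th quantile of that sample's non-zero counts.
css_factors <- function(counts, p = 0.5) {
  apply(counts, 2, function(x) {
    q <- quantile(x[x > 0], probs = p)
    sum(x[x <= q])
  })
}

# Divide each sample by its factor, rescaled to a common constant.
css_normalize <- function(counts, p = 0.5, scale = 1000) {
  sweep(counts, 2, css_factors(counts, p) / scale, "/")
}

# Rows = features, columns = samples (hypothetical counts).
counts <- cbind(
  S1 = c( 1,  1,  1,  2, 50, 500, 0, 0),
  S2 = c(10, 20, 30, 40,  0,   0, 0, 0)
)

css_factors(counts, p = 0.5)    # factors built from the lower half of non-zero counts
css_factors(counts, p = 0.05)   # a very low percentile uses even fewer reads
```

In S1 the factor at p = 0.5 is built from just three reads, which is the kind of situation in which small changes in the data translate into large changes in the normalized values.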

Issue: ZIG Model Fitting Failures or Non-Convergence

- Problem: fitZig() fails to converge, produces NA coefficients, or returns "system is computationally singular" errors.

- Diagnosis: This is typically caused by high multicollinearity in the model matrix (e.g., perfectly correlated covariates), insufficient replicates, or an outcome variable with zero variance.

- Solution:

  - Check the design matrix (mod) for collinearity using cor() or alias(). Remove redundant covariates.

  - Ensure the model is not over-specified for the number of samples. Simplify the model.

  - For a categorical variable, verify all groups have more than one sample. Combine rare groups if necessary.

  - Pre-filter the OTU table to remove features present in fewer than a specified number of samples (e.g., < 10% of samples) to improve model stability, aligning with core filtering principles.

Issue: Discrepancy Between Raw and Normalized Counts in MRexperiment Object

- Problem: The MRcounts() function returns counts that don't match the input data after normalization.

- Diagnosis: MRcounts(..., norm=TRUE) returns CSS-normalized counts, whereas the default (norm=FALSE) returns raw counts. The user might be comparing normalized output to the raw input.

- Solution:

  - Use MRcounts(..., norm=FALSE) to retrieve the original, untransformed counts.

  - To obtain the CSS-normalized counts, explicitly use norm=TRUE. The normalization factors can be retrieved from the MRexperiment object with normFactors().

  - Always specify the norm argument to avoid confusion.

Frequently Asked Questions (FAQs)

Q1: When should I use CSS normalization versus other methods like TSS (Total Sum Scaling) or TMM? A1: CSS is specifically designed for sparse, zero-inflated marker-gene survey data (like 16S rRNA). It is more robust to the presence of many low-abundance and zero-count features than TSS. TMM is designed for RNA-seq and may not perform optimally with the extreme sparsity of microbiome data. Within the context of filtering for zero-inflated OTU tables, CSS is often applied after a mild prevalence-based filter to remove singletons or doubletons.

Q2: How does the ZIG model in metagenomeSeq handle zeros compared to a standard linear model?

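A2: The ZIG model is a two-part mixture model. It describes log-transformed counts as a mixture of a point mass at zero and a Gaussian count component. The weight of the zero component depends on each sample's sequencing depth, so the model estimates, for each observed zero, the probability that it is a technical zero caused by under-sampling rather than a value generated by the count distribution. This is distinct from a standard linear model on transformed data, which treats all zeros as identical and can be severely biased.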
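For reference, the mixture can be written out as below. This is a sketch of the general form as it is usually presented for the zero-inflated Gaussian model; the notation ($c_{ij}$ for the raw count of feature $i$ in sample $j$, $S_j$ for the total count of sample $j$) is introduced here for illustration, and the regression model for the mean $\mu_i$ is omitted.

```latex
\begin{aligned}
y_{ij} &= \log_2\!\left(c_{ij} + 1\right) \\
f_{\mathrm{zig}}\!\left(y_{ij};\theta\right)
  &= \pi_j(S_j)\,\mathbb{1}_{\{0\}}\!\left(y_{ij}\right)
   + \bigl(1 - \pi_j(S_j)\bigr)\,
     f_{\mathrm{count}}\!\left(y_{ij};\mu_i,\sigma_i^2\right) \\
\log\frac{\pi_j}{1-\pi_j} &= \beta_0 + \beta_1 \log S_j
\end{aligned}
```

Here $f_{\mathrm{count}}$ is a Gaussian density and $\pi_j$ is the mixing weight of the point mass at zero, modeled as a function of sequencing depth.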

Q3: What is the recommended filtering strategy before applying CSS and ZIG models? A3: Based on current research in filtering strategies for zero-inflated OTU tables, a common and effective pipeline is:

1. Prevalence Filter: Remove OTUs present in fewer than X% of samples (e.g., 10%). This reduces noise and computational burden.

2. CSS Normalization: Apply the cumNorm method on the filtered data.

3. ZIG Model: Fit the model using fitZig on the normalized, filtered data.

This sequential approach improves model fit and power.

Q4: Can I use metagenomeSeq for shotgun metagenomic data, not just 16S?

A4: Yes. The metagenomeSeq package and its CSS/ZIG methods were developed for marker-gene surveys but are applicable to any sparse, compositional count data, including shotgun metagenomic functional profiles (like KO counts). The same considerations for sparsity and normalization apply.

Data Presentation

Table 1: Comparison of Normalization Methods for Sparse Microbiome Data

| Method | Acronym | Designed For | Handles Zero-Inflation | Key Assumption | metagenomeSeq Function |

|---|---|---|---|---|---|

| Cumulative Sum Scaling | CSS | Marker-gene surveys | Excellent | A stable quantile exists for all samples | cumNorm() |

| Total Sum Scaling | TSS | General ecology | Poor | Counts are proportional to biomass | Not primary |

| Trimmed Mean of M-values | TMM | RNA-seq | Moderate | Most features are not differentially abundant | Not primary |

| Relative Log Expression | RLE | RNA-seq | Moderate | Similar to TMM | Not primary |

Table 2: Common Pre-Filtering Thresholds for Zero-Inflated OTU Tables

| Filtering Criterion | Typical Threshold Range | Primary Goal | Impact on ZIG Model Fit |

|---|---|---|---|

| Minimum Total Count per OTU | 10 - 50 | Remove very low-abundance noise | Reduces outliers in dispersion estimates |

| Minimum Prevalence (Samples) | 10% - 20% | Remove rarely detected features | Increases model convergence stability |

| Minimum Count in at least n samples | e.g., ≥5 in 5 samples | Balance between noise & signal | Common in best-practice pipelines |

Experimental Protocols

Protocol: Standard Workflow for Differential Abundance Analysis with metagenomeSeq

- Data Import & Object Creation:

  - Load OTU count table (rows=features, columns=samples) and sample metadata.

  - Create a phyloseq object or directly create an MRexperiment object: mr_obj <- newMRexperiment(counts).

  - Add phenotype data: pData(mr_obj) <- sample_metadata.

- Filtering:

  - Apply a prevalence filter. Example: Remove OTUs present in < 10% of samples.

- Normalization:

  - Calculate the CSS normalization percentile: p <- cumNormStatFast(mr_obj).

  - Calculate normalization factors: mr_obj <- cumNorm(mr_obj, p = p).

- Model Specification & Fitting:

  - Define the model matrix based on the experimental design (e.g., ~ DiseaseState + Age).

  - Fit the ZIG model: zig_fit <- fitZig(mr_obj, mod).

- Results Extraction & Interpretation:

  - Use MRcoefs() or MRtable() to extract coefficients, p-values, and false discovery rate (FDR) adjusted p-values.

Protocol: Validating CSS Normalization Effectiveness

- Calculate Pre- and Post-Normalization Statistics:

- For raw and CSS-normalized counts, compute: a) Total counts per sample, b) Number of detected OTUs per sample.

- Visualization:

- Create boxplots of total counts per sample before and after normalization. CSS should reduce the extreme variation seen in raw totals.

- Plot the number of detected OTUs against the original library size. After CSS, this correlation should be weakened.

- Quantitative Assessment:

- Calculate the coefficient of variation (CV) of sample-specific scaling factors. Lower CV indicates more stable normalization.

- Perform a Principal Coordinates Analysis (PCoA) on raw and normalized data using a robust distance metric (e.g., Bray-Curtis). The first principal coordinate should be less correlated with library size post-CSS.

Mandatory Visualization

Title: CSS Normalization and ZIG Model Analysis Workflow

Title: Zero-Inflated Gaussian (ZIG) Mixture Model Structure

The Scientist's Toolkit

Table: Essential Research Reagent Solutions for Microbiome Analysis with metagenomeSeq

| Item | Function in Analysis | Example / Note |

|---|---|---|

| High-Quality OTU/ASV Table | The primary input data. Rows are features (OTUs/ASVs), columns are samples. Must be raw count data, not relative abundances. | Generated by DADA2, QIIME 2, or mothur. |

| Sample Metadata Table | Contains covariates for statistical modeling (e.g., disease state, treatment, age, batch). Critical for defining the design matrix. | Stored as a data.frame; rows must match columns of count table. |

| R Statistical Software | The computational environment required to run metagenomeSeq. | Version 4.0 or higher recommended. |

| metagenomeSeq R Package | The core software implementing CSS normalization and ZIG mixture models. | Available on Bioconductor. |

| Filtering Scripts/Tools | To perform pre-processing steps that remove spurious noise, improving downstream model performance. | Custom R scripts for prevalence/abundance filtering, or functions within phyloseq. |

| Visualization Packages (e.g., ggplot2) | For diagnostic plots (e.g., library size distribution, effect of normalization) and visualizing final results. | Essential for quality control and reporting. |

| High-Performance Computing (HPC) Access | For large datasets, fitting ZIG models can be computationally intensive. | Cluster or cloud computing resources may be necessary. |

Technical Support & Troubleshooting Center

This guide addresses common issues encountered when implementing hybrid and conditional filtering strategies for zero-inflated OTU tables in microbial ecology and drug development research.

Frequently Asked Questions (FAQs)

Q1: After applying a prevalence (20%) and abundance (0.1%) filter, my dataset lost 90% of its OTUs. Is this normal or indicative of an error? A: This can be normal, especially for highly zero-inflated datasets from low-biomass environments. Verify your filtering order. A common strategy is to filter by prevalence first (e.g., present in 20% of samples), then apply a minimum abundance threshold (e.g., 0.1% relative abundance in the samples where it is present). Reversing this order can lead to excessive data loss. Check your initial library sizes and contamination controls.

Q2: How do I decide between filtering at the Genus rank versus the OTU/ASV level? A: Filtering at a higher taxonomic rank (e.g., Genus) can mitigate sparsity by aggregating counts of related features, potentially increasing prevalence. This is useful for functional inference or stabilizing models. Filtering at the OTU/ASV level preserves fine-scale resolution for ecological studies. A conditional strategy is recommended: first filter low-prevalence OTUs, then aggregate to Genus for downstream analysis if the research question allows.

Q3: My statistical model (e.g., DESeq2, MaAsLin2) fails to converge after filtering. What should I do? A: This often indicates residual sparsity or complete separation. Implement a conditional filter: Retain features if they pass EITHER a strict prevalence threshold (e.g., >30% of samples) OR a moderate abundance threshold (e.g., >1% in at least 3 samples). This preserves robust, high-abundance but low-prevalence taxa that can be biologically meaningful.
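The EITHER/OR rule can be sketched as a small base R function. The name `conditional_filter()`, its argument names, and the toy table are assumptions; the thresholds mirror the examples in the answer (prevalence >30%, or >1% relative abundance in at least 3 samples).

```r
conditional_filter <- function(counts, min_prev = 0.30, min_rel = 0.01, min_n = 3) {
  rel <- sweep(counts, 2, colSums(counts), "/")       # within-sample proportions
  pass_prevalence <- rowMeans(counts > 0) > min_prev  # strict prevalence rule
  pass_abundance  <- rowSums(rel > min_rel) >= min_n  # moderate abundance rule
  counts[pass_prevalence | pass_abundance, , drop = FALSE]
}

# Hypothetical table: rows = features, 12 samples.
counts <- rbind(
  OTU_core     = rep(500, 12),                 # everywhere
  OTU_bloom    = c(rep(0, 9), 300, 300, 300),  # 25% prevalence, but abundant when present
  OTU_noise    = c(1, 1, rep(0, 10)),          # rare and low
  OTU_moderate = rep(c(2, 0), 6)               # 50% prevalence, always low
)
colnames(counts) <- paste0("S", 1:12)

filtered <- conditional_filter(counts)
rownames(filtered)
```

OTU_bloom would be lost under a prevalence-only rule and OTU_moderate under an abundance-only rule; combining the two with OR keeps both while still removing OTU_noise.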

Q4: How do I handle technical zeros versus biological zeros during filtering? A: This is a core challenge. Use positive control spikes (if available) to inform an abundance-based cutoff related to detection limit. For prevalence, consider sample grouping. Conditionally retain a feature if it is prevalent within at least one experimental group (e.g., present in >50% of disease samples), even if its overall prevalence is low. This requires group-aware filtering functions.

Q5: What is the impact of 16S rRNA gene copy number variation on these filtering strategies? A: Abundance thresholds based on read counts are biased by gene copy number (GCN). An organism with a low GCN contributes fewer reads per cell, so its OTU can fall below an abundance threshold even when the organism is relatively common and functionally important. Consider using a database like rrnDB to normalize counts by estimated GCN before applying abundance filtering, or rely more heavily on prevalence-based filtering for such analyses.
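As a rough illustration of the copy-number adjustment, the sketch below divides each feature's counts by an estimated GCN before any abundance threshold is applied. The copy-number values are invented for the example and are not taken from rrnDB, and the fallback of 1.0 for features without an estimate is an assumption that should be chosen deliberately in a real analysis.

```python
def normalize_by_copy_number(table, copy_number, default=1.0):
    """Divide counts by the estimated 16S copy number of each feature.

    `copy_number` maps feature IDs to estimated gene copy numbers;
    features without an estimate fall back to `default`.
    """
    return {
        otu: [c / copy_number.get(otu, default) for c in row]
        for otu, row in table.items()
    }


# Hypothetical counts and copy numbers (not real rrnDB values).
table = {"OTU_a": [70, 140], "OTU_b": [10, 20], "OTU_c": [4, 0]}
copy_number = {"OTU_a": 7, "OTU_b": 1}

adjusted = normalize_by_copy_number(table, copy_number)
print(adjusted)
```

After adjustment, the two features with estimates have identical values, although the raw counts of the high-copy-number feature were seven times larger.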

Experimental Protocols

Protocol 1: Evaluating Filtering Strategy Performance

Objective: To compare the effect of different hybrid filtering parameters on downstream alpha and beta diversity metrics.

- Input: Raw OTU/ASV table (QIIME2, mothur, or DADA2 output).

- Pre-processing: Remove samples with total reads < 10,000. Apply no other filters initially.

- Filtering Arms:

- Arm A: Prevalence > 10% (across all samples) AND mean relative abundance > 0.01%.

- Arm B: Prevalence > 25% OR mean relative abundance > 0.1%.

- Arm C: Filter at OTU level: Prevalence > 15%. Then, collapse to Genus rank. Apply a second prevalence filter > 10% at Genus level.

- Downstream Analysis: For each filtered table, calculate:

- Shannon Alpha Diversity (using vegan::diversity in R).

- Weighted UniFrac Beta Diversity (using phyloseq::distance).

- Comparison: Use PERMANOVA on beta diversity distances to assess the magnitude of effect from filtering strategy versus experimental grouping.
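The alpha-diversity part of this protocol can be sketched as follows for Arms A and B. This is an illustration under stated assumptions, not the protocol's reference implementation: the toy counts, the helper names, and the dictionary-based table are hypothetical, the Shannon index uses the natural logarithm, and the UniFrac and PERMANOVA steps are not covered because they need a phylogeny and a dedicated statistics package.

```python
import math


def shannon(counts):
    """Shannon index (natural log) for one sample; NaN if the sample is empty."""
    total = sum(counts)
    if total == 0:
        return float("nan")
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def feature_stats(table):
    """Return {feature: (prevalence, mean relative abundance)}."""
    n = len(next(iter(table.values())))
    depths = [sum(row[j] for row in table.values()) for j in range(n)]
    stats = {}
    for otu, row in table.items():
        prevalence = sum(c > 0 for c in row) / n
        mean_rel = sum(c / d for c, d in zip(row, depths) if d > 0) / n
        stats[otu] = (prevalence, mean_rel)
    return stats


# Arm A: prevalence > 10% AND mean relative abundance > 0.01%.
# Arm B: prevalence > 25% OR mean relative abundance > 0.1%.
ARMS = {
    "A": lambda prev, abund: prev > 0.10 and abund > 0.0001,
    "B": lambda prev, abund: prev > 0.25 or abund > 0.001,
}

# Toy table with 4 samples (illustrative values only).
table = {
    "OTU1": [10000, 10000, 10000, 10000],
    "OTU2": [10000, 10000, 10000, 10000],
    "OTU3": [0, 0, 0, 1],
    "OTU4": [0, 0, 3, 3],
}
n_samples = 4

stats = feature_stats(table)
retained, mean_shannon = {}, {}
for arm, rule in ARMS.items():
    kept = sorted(otu for otu, (prev, abund) in stats.items() if rule(prev, abund))
    retained[arm] = kept
    per_sample = [shannon([table[otu][j] for otu in kept]) for j in range(n_samples)]
    mean_shannon[arm] = sum(per_sample) / n_samples

print(retained)
print(mean_shannon)
```

Here the moderately prevalent but very low-abundance feature passes the OR rule of Arm B and fails the AND rule of Arm A, which is the kind of difference the protocol is designed to expose.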

Protocol 2: Conditional Filtering by Sample Group

Objective: To retain taxa that are characteristic of a specific experimental condition despite low overall prevalence.

- Input: Phyloseq object containing OTU table and sample metadata with a "Group" column (e.g., Control vs. Treatment).

- Define Function: In R, create a function that loops through each feature.

- Calculate prevalence within each group separately.

- Retain the feature if its prevalence in any group exceeds a defined cutoff (e.g., 40%).

- Apply Function: Execute the function on the unfiltered OTU table.

- Validation: Perform differential abundance testing (e.g., DESeq2 or LEfSe) on the resulting table. Confirm that condition-specific indicators retained by the filter are identified as significant.
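The group-aware function described in the steps above might look like the sketch below. It is written in plain Python purely to make the logic explicit; the protocol itself calls for an R function operating on a phyloseq object. The function name, the list of group labels aligned with sample order, and the toy counts are assumptions for illustration.

```python
def group_prevalence_filter(table, groups, cutoff=0.40):
    """Keep features whose prevalence exceeds `cutoff` in at least one group.

    `groups` lists the group label of each sample, in the same order as
    the count columns in `table`.
    """
    group_index = {}
    for j, label in enumerate(groups):
        group_index.setdefault(label, []).append(j)

    kept = {}
    for otu, row in table.items():
        prevalences = [
            sum(row[j] > 0 for j in idx) / len(idx)
            for idx in group_index.values()
        ]
        if max(prevalences) > cutoff:
            kept[otu] = row
    return kept


groups = ["Control"] * 5 + ["Treatment"] * 5

# Toy counts (illustrative values only).
table = {
    "OTU_shared": [3, 2, 0, 4, 1, 2, 0, 3, 1, 2],
    "OTU_treatment": [0, 0, 0, 0, 0, 5, 0, 7, 3, 0],  # 30% overall, 60% in Treatment
    "OTU_rare": [1, 0, 0, 0, 0, 0, 0, 0, 0, 1],       # 20% in each group
}
kept = group_prevalence_filter(table, groups)
print(sorted(kept))
```

The treatment-specific feature would be removed by a 40% overall prevalence cutoff but is retained here because it exceeds the cutoff within one group.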

Data Presentation

Table 1: Impact of Hybrid Filtering Strategies on a Simulated Zero-Inflated Dataset (n=100 samples)

| Filtering Strategy | OTUs Retained (%) | Mean Shannon Index (SD) | PERMANOVA R² (Group Effect) | False Discovery Rate (FDR) |
|---|---|---|---|---|
| No Filter | 100.0 | 5.21 (0.45) | 0.08 | 0.35 |
| Prevalence >10% & Abundance >0.01% | 18.5 | 4.85 (0.41) | 0.22 | 0.12 |
| Prevalence >25% OR Abundance >0.1% | 32.7 | 4.92 (0.43) | 0.19 | 0.09 |
| Conditional (Prevalent in >50% of any group) | 24.1 | 4.88 (0.40) | 0.25 | 0.07 |
| OTU→Genus Collapse, then Prevalence >10% | 15.3 (Genera) | 4.45 (0.39) | 0.15 | 0.15 |

Diagrams

[Diagram: Hybrid Filtering Decision Workflow]

[Diagram: Conditional Filtering Logic for Group Analysis]

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Filtering Research |
|---|---|
| Phyloseq (R Package) | Core object for organizing OTU table, taxonomy, sample data, and phylogeny. Enables integrated application of prevalence/abundance filters and downstream ecological analysis. |
| Decontam (R Package) | Statistically identifies and removes contaminant sequences, using either their frequency in relation to sample DNA concentration or their prevalence in negative controls. A critical pre-filtering step. |
| rrnDB Database | A curated database of 16S rRNA gene copy numbers. Used to normalize OTU counts by the estimated copy number of their assigned taxa before abundance-based filtering, reducing bias. |
| ZymoBIOMICS Microbial Community Standards | Defined mock microbial communities with known composition. Essential for benchmarking filtering pipelines and quantifying false negative rates (over-filtering). |
| QIIME 2 (qiime2.org) | A plugin-based platform that provides tools for filtering features by prevalence, frequency, and taxonomy prior to diversity analysis. |
| ANCOM-BC (R Package) | A differential abundance method that accounts for zeros and compositionality. Used post-filtering to validate that biological signals are preserved. |

Troubleshooting Guides & FAQs

Q1: After importing my biom file into a phyloseq object, many functions return errors about the tax_table. What is the most common cause and fix? A: The most common cause is that the taxonomy strings are imported as a single column delimited by semicolons (e.g., "d__Bacteria;p__Firmicutes;c__Bacilli"). The fix is to split this column into separate taxonomic ranks, one column per rank.
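The splitting step can be illustrated with a short, language-agnostic sketch. It assumes that ranks appear in a fixed order from domain to species and that each carries a two-character prefix such as "d__" or "p__"; both are assumptions about the input, and the function name and rank list are hypothetical. In R the same operation would be applied to the taxonomy column before building the taxonomy table.

```python
RANKS = ["Domain", "Phylum", "Class", "Order", "Family", "Genus", "Species"]


def split_taxonomy(taxonomy):
    """Split a semicolon-delimited taxonomy string into one entry per rank.

    Ranks are assigned by position; ranks missing from the string, or
    present with an empty name, are returned as None.
    """
    parts = [p.strip() for p in taxonomy.split(";")]
    ranks = dict.fromkeys(RANKS)
    for rank, part in zip(RANKS, parts):
        # Drop a leading rank prefix such as "d__" if one is present.
        name = part.split("__", 1)[1] if "__" in part else part
        ranks[rank] = name or None
    return ranks


parsed = split_taxonomy("d__Bacteria;p__Firmicutes;c__Bacilli")
print(parsed)
```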

Q2: When applying prevalence or abundance filtering for zero-inflated OTU tables, what is a robust method to decide the threshold values within a thesis research context?

A: For thesis research on filtering zero-inflated data, a data-driven, iterative approach is recommended. Use the microbiome::prevalence and microbiome::abundances functions to inspect the distributions of feature prevalence and abundance before setting thresholds.

Q3: I need to compare alpha diversity between groups after filtering. Why do I get NA values for the Shannon index and how can I resolve it?

A: Zero counts for individual OTUs are expected after filtering and do not by themselves produce NA values; the phyloseq::estimate_richness function handles them correctly. If you get NA for entire samples, it likely means those samples have no reads remaining after aggressive filtering. The solution is to check your filtering stringency and remove empty samples before computing diversity.
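A minimal sketch of the empty-sample check is shown below. The dictionary-based table, the function name, and the sample IDs are assumptions for illustration; with a phyloseq object the equivalent step would be to prune samples whose column sums are zero.

```python
def drop_empty_samples(table, sample_ids):
    """Remove samples (columns) whose total count is zero after filtering."""
    n = len(sample_ids)
    depths = [sum(row[j] for row in table.values()) for j in range(n)]
    keep = [j for j, depth in enumerate(depths) if depth > 0]
    pruned = {otu: [row[j] for j in keep] for otu, row in table.items()}
    return pruned, [sample_ids[j] for j in keep]


# Toy filtered table in which sample S2 has lost all of its reads.
table = {"OTU_1": [5, 0, 3], "OTU_2": [2, 0, 0]}
sample_ids = ["S1", "S2", "S3"]

pruned, kept_ids = drop_empty_samples(table, sample_ids)
print(kept_ids, pruned)
```

Reporting which samples were dropped, and why, keeps the sample counts in downstream tables traceable.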

Q4: How can I ensure reproducibility of my filtering workflow for a thesis methodology chapter? A: Create a documented, parameterized R function that encapsulates your entire filtering strategy. This ensures transparency and reproducibility.
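One way to structure such a function is sketched below: the thresholds live in a single immutable parameter object, and the function returns a small report alongside the filtered table so that the exact settings can be archived with the results. The parameter names, defaults, and report fields are assumptions chosen for this example, and the sketch is in plain Python although the answer above refers to R.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class FilterParams:
    min_prevalence: float = 0.10  # fraction of samples with a non-zero count
    min_total_count: int = 20     # summed reads across all samples


def filter_features(table, params=FilterParams()):
    """Apply a prevalence AND total-count filter; return the table and a report."""
    kept = {}
    for otu, row in table.items():
        prevalence = sum(c > 0 for c in row) / len(row)
        if prevalence > params.min_prevalence and sum(row) > params.min_total_count:
            kept[otu] = row
    report = {
        "params": asdict(params),
        "otus_in": len(table),
        "otus_out": len(kept),
    }
    return kept, report


# Toy table with 10 samples (illustrative values only).
table = {
    "OTU_1": [12, 0, 9, 15, 0, 7, 11, 0, 8, 10],
    "OTU_2": [0, 0, 0, 0, 0, 0, 0, 0, 0, 25],
    "OTU_3": [1, 1, 1, 0, 0, 0, 0, 0, 0, 0],
}
filtered, report = filter_features(table)
print(json.dumps(report, indent=2))
```

Saving the JSON report next to the filtered table gives a methodology chapter a single, citable record of the thresholds that were actually used.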

Data Presentation

Table 1: Comparison of Filtering Strategies on a Simulated Zero-Inflated Dataset

| Filtering Strategy | OTUs Remaining (%) | Samples Remaining (%) | Mean Shannon Index (SD) | Beta Dispersion (F-statistic) |
|---|---|---|---|---|
| Unfiltered | 100.0 | 100.0 | 3.21 (0.45) | 1.85 |
| Prevalence > 10% | 24.5 | 100.0 | 2.98 (0.41) | 1.91 |
| Total Abundance > 20 | 18.7 | 100.0 | 2.87 (0.39) | 1.93 |
| Combined (Prev>10% & Abun>20) | 15.2 | 100.0 | 2.75 (0.38) | 1.95 |
| Decontam (prev) | 22.1 | 98.3 | 3.01 (0.42) | 1.89 |

Table 2: Impact of Filtering on Differential Abundance Analysis (ALDEx2)

| Filtering Method | Significant OTUs (FDR < 0.05) | Median Effect Size | False Discovery Rate Control |
|---|---|---|---|
| No Filter | 1254 | 1.45 | Poor (Inflated) |
| Prevalence 5% | 287 | 2.01 | Good |
| Prevalence 10% | 142 | 2.34 | Good |
| Cumulative Sum Scaling (CSS) | 198 | 2.12 | Moderate |

Experimental Protocols

Protocol 1: Systematic Evaluation of Filtering Thresholds

- Data Import: Import OTU table, taxonomy, and metadata into a phyloseq object using phyloseq::import_biom().

- Pre-processing: Remove samples with library size below 1000 reads using phyloseq::prune_samples().

- Threshold Scanning: Iterate over prevalence thresholds from 1% to 20% in 1% increments.

- Abundance Scanning: For each prevalence threshold, scan total abundance thresholds from 5 to 50.

- Metric Calculation: For each combination, calculate: (a) Number of retained OTUs, (b) Percentage of zeros remaining, (c) Mean alpha diversity.

- Stability Assessment: Apply PERMANOVA to beta diversity distances to assess group separation stability across thresholds.

- Optimal Threshold Selection: Choose the threshold that maximizes group separation while retaining sufficient features for downstream analysis.
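The scanning steps of this protocol can be sketched as a simple grid search. The example below covers only the number of retained OTUs and the percentage of zeros; alpha diversity and PERMANOVA are left out. The step of 5 for the abundance grid is an assumption, since the protocol gives only the range, and the use of "at least" comparisons, the function name, and the toy counts are likewise illustrative choices.

```python
def scan_thresholds(table, prevalence_grid, abundance_grid):
    """Tabulate retained OTUs and remaining zeros for each threshold pair."""
    n_samples = len(next(iter(table.values())))
    stats = {
        otu: (sum(c > 0 for c in row) / n_samples, sum(row))
        for otu, row in table.items()
    }
    results = []
    for min_prev in prevalence_grid:
        for min_total in abundance_grid:
            kept = [
                otu for otu, (prev, total) in stats.items()
                if prev >= min_prev and total >= min_total
            ]
            cells = len(kept) * n_samples
            zeros = sum(c == 0 for otu in kept for c in table[otu])
            results.append({
                "min_prevalence": min_prev,
                "min_total": min_total,
                "otus": len(kept),
                "pct_zero": 100 * zeros / cells if cells else float("nan"),
            })
    return results


prevalence_grid = [i / 100 for i in range(1, 21)]  # 1% to 20% in 1% steps
abundance_grid = list(range(5, 51, 5))             # 5 to 50; step of 5 assumed

# Toy table with 10 samples (illustrative values only).
table = {
    "OTU_a": [10] * 10,
    "OTU_b": [0] * 8 + [3, 4],
    "OTU_c": [0] * 9 + [60],
}
results = scan_thresholds(table, prevalence_grid, abundance_grid)
print(results[0])
print(results[-1])
```

Plotting the number of retained OTUs against the thresholds often shows a plateau, which is a more defensible place to set a cutoff than the single combination that happens to maximize group separation.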

Protocol 2: Validation Using Synthetic Communities (Spike-ins)

- Spike-in Design: Add known quantities of 10 bacterial strains not expected in samples to the DNA extraction step.

- Sequencing: Process samples alongside experimental samples.

- Bioinformatic Processing: Include spike-ins in OTU clustering/ASV calling.

- Filtering Application: Apply candidate filtering strategies.

- Recovery Assessment: Calculate sensitivity (proportion of spike-ins detected) and false positive rate.

- Quantitative Accuracy: Correlate observed abundance with expected spike-in proportions.
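The recovery and accuracy calculations in the last two steps can be sketched as follows. The spike-in IDs and proportions are invented for the example, and sensitivity is defined here as the fraction of spike-ins still present after filtering. A false positive rate is not computed, because that requires ground truth for the non-spike features, which this toy example does not have.

```python
import math


def sensitivity(spike_ids, retained_ids):
    """Fraction of spike-in features that survive filtering."""
    return len(set(spike_ids) & set(retained_ids)) / len(spike_ids)


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


# Hypothetical expected proportions of four spike-in strains.
expected = {"S1": 0.4, "S2": 0.3, "S3": 0.2, "S4": 0.1}
# Hypothetical observed proportions after filtering (S4 was removed).
observed = {"S1": 0.42, "S2": 0.28, "S3": 0.21}

recovered = sorted(set(expected) & set(observed))
sens = sensitivity(expected, observed)
r = pearson([expected[s] for s in recovered], [observed[s] for s in recovered])
print(sens, round(r, 3))
```

A filter that removes the lowest-abundance spike-in, as in this toy case, is a sign that the abundance threshold sits above the detection limit the experiment was designed for.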

Diagrams

[Diagram: Filtering Workflow for Zero-Inflated Microbiome Data]

[Diagram: Prevalence-Abundance Filtering Logic]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Microbiome Filtering Experiments

| Item | Function in Research | Example/Specification |
|---|---|---|
| ZymoBIOMICS Microbial Community Standard | Validates wet-lab and bioinformatic workflow, including filtering efficiency. | Zymo Research Cat# D6300 |
| Mock Community DNA | Positive control for sequencing and preprocessing pipelines. | ATCC MSA-1002 |
| Qubit dsDNA HS Assay Kit | Accurate quantification of DNA prior to library prep. | Thermo Fisher Q32851 |
| DNeasy PowerSoil Pro Kit | Standardized DNA extraction minimizing bias. | Qiagen 47014 |
| PhiX Control v3 | Quality control for Illumina sequencing runs. | Illumina FC-110-3001 |
| R packages: phyloseq (v1.42.0) | Core data structure and analysis for OTU tables. | Bioconductor |
| R packages: microbiome (v1.20.0) | Provides prevalence() and abundances() functions. | Bioconductor |
| R packages: decontam (v1.18.0) | Identifies contaminants based on prevalence or frequency. | Bioconductor |
| R packages: ALDEx2 (v1.32.0) | Differential abundance testing for compositional data. | Bioconductor |
| Custom R Script Repository | Documents and reproduces specific filtering workflows for thesis. | GitHub Repository |

Navigating Pitfalls: How to Optimize Filtering Parameters and Avoid Common Mistakes

Technical Support Center

Troubleshooting Guide: Frequently Asked Questions

Q1: During the filtering of my zero-inflated OTU table, I am losing many low-abundance but potentially biologically significant taxa. How can I adjust my parameters to retain more of this signal?

A1: This is a common issue. We recommend a tiered filtering approach.

- Initial Lenient Filter: First, apply a prevalence-based filter (e.g., retain features present in at least 10-20% of samples). This removes only the rarest, most sporadic features, whose counts are most likely to be technical noise.

- Variance Stabilization: Apply a variance-stabilizing transformation (VST), such as the one implemented in DESeq2, or a centered log-ratio (CLR) transformation before conducting abundance-based filtering. This reduces the influence of high-abundance taxa on dispersion estimates.

- Adaptive Abundance Filter: Instead of a single mean or median abundance cutoff, use a quantile-based rule. For example, filter out features where the 75th percentile of abundance across samples is below a defined threshold (e.g., 0.1% relative abundance). This protects low-abundance but consistently present taxa.
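Two pieces of the tiered approach lend themselves to a short sketch: a CLR transformation and the 75th-percentile rule. The pseudocount of 0.5, the inclusive quantile method, the function names, and the toy values are all assumptions for illustration, and the two functions are shown independently rather than as a complete pipeline.

```python
import math
import statistics


def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of one sample; the pseudocount handles zeros."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]


def upper_quartile_filter(rel_table, threshold=0.001):
    """Keep features whose 75th percentile of relative abundance reaches the threshold."""
    kept = {}
    for otu, row in rel_table.items():
        q75 = statistics.quantiles(row, n=4, method="inclusive")[2]
        if q75 >= threshold:
            kept[otu] = row
    return kept


# Toy relative abundances across 8 samples (illustrative values only).
rel_table = {
    "OTU_steady": [0.002] * 8,        # low but consistently present
    "OTU_spike": [0.0] * 7 + [0.5],   # absent except for one sample
}
kept = upper_quartile_filter(rel_table)
clr_values = clr([10, 0, 5, 85])
print(sorted(kept))
```

In this toy table a mean-abundance cutoff of 0.1% would keep both features, because the single large value inflates the mean of the sporadic one; the percentile rule keeps only the consistently present feature.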

Q2: After applying a prevalence filter, my beta diversity results (e.g., PCoA plots) show strong batch effects that were not visible before. What happened?

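A2: Prevalence filtering changes which taxa drive the ordination, so an apparent batch effect can shift in either direction. Once many sporadic taxa are removed, batch-associated variation among the remaining prevalent taxa can dominate the leading axes and become visible for the first time. The opposite also occurs: aggressive prevalence filtering can remove taxa that are batch-specific but biologically real, stripping away the variation that highlighted the batch effect and making it seem that the filter "improved" the data when it actually masked a confounding factor.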

- Solution: Always run and visualize beta diversity metrics (using Aitchison distance for compositional data) before and after filtering. If a batch effect appears or disappears post-filtering, investigate the discarded taxa. Consider using a batch-correcting filtering tool like sccomp, or integrating a formal batch correction step (e.g., ComBat-seq for counts) after conservative, minimal filtering.

Q3: What is a statistically robust method to set the threshold for the "minimum count" parameter in tools like decontam or when doing total frequency filtering?

A3: Avoid arbitrary thresholds. For frequency-based filtering:

- Use the