Compositional Data Analysis in Microbiome Studies: A Complete Guide for Biomedical Researchers

Abstract

This comprehensive guide explores the critical importance of compositional data analysis in microbiome research. It covers the fundamental mathematical constraints of relative abundance data, explains why standard statistical methods fail, and introduces specialized methods like log-ratio transformations (CLR, ILR, ALR). The article provides practical workflows for applying these techniques, addresses common pitfalls in data interpretation, and compares the performance of different compositional methods. Aimed at researchers and drug development professionals, this resource equips scientists with the necessary framework to derive biologically meaningful and statistically valid insights from microbiome sequencing data.

Why Your Microbiome Data is Compositional: Understanding the Core Constraint

Microbiome sequencing data, derived from technologies like 16S rRNA gene amplicon or shotgun metagenomic sequencing, is fundamentally compositional. The total number of reads obtained per sample (the library size) is an arbitrary technical constraint, not a biological truth. This "sequencing sum constraint" means that an increase in the relative abundance of one taxon necessitates an artificial decrease in the relative abundance of others, creating spurious correlations. This technical guide explores the implications of this constraint and details methodologies for moving from raw count data to meaningful relative abundance estimates within the rigorous framework of Compositional Data Analysis (CoDA).

Table 1: Impact of the Sum Constraint on a Hypothetical Two-Sample, Three-Taxon Dataset

| Taxon | Sample A Raw Reads | Sample B Raw Reads | Sample A Relative (%) | Sample B Relative (%) | Artifactual Fold-Change (B/A) |

|---|---|---|---|---|---|

| Taxon_X | 10,000 | 10,000 | 50.0 | 25.0 | 0.5 (Down) |

| Taxon_Y | 9,000 | 9,000 | 45.0 | 22.5 | 0.5 (Down) |

| Taxon_Z | 1,000 | 21,000 | 5.0 | 52.5 | 10.5 (Up) |

| Total | 20,000 | 40,000 | 100 | 100 | N/A |

Interpretation: Taking raw reads as a stand-in for absolute abundance, Taxon_X and Taxon_Y did not change between Sample A and Sample B, yet their relative abundances halved because the large increase in Taxon_Z enlarged the total. The apparent decrease is an artifact of closure rather than biology, which is why CoDA methods are needed.
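The arithmetic behind Table 1 can be reproduced in a few lines. The sketch below is illustrative only: the names `raw_reads` and `total_sum_scale` are not from any library, and the read counts are the hypothetical values from the table.

```python
# Raw reads from Table 1 (hypothetical two-sample, three-taxon dataset).
# Each tuple is (Sample A reads, Sample B reads).
raw_reads = {
    "Taxon_X": (10_000, 10_000),
    "Taxon_Y": (9_000, 9_000),
    "Taxon_Z": (1_000, 21_000),
}


def total_sum_scale(counts):
    """Convert a list of counts to percentages that sum to 100."""
    total = sum(counts)
    return [100.0 * c / total for c in counts]


taxa = list(raw_reads)
rel_a = total_sum_scale([raw_reads[t][0] for t in taxa])
rel_b = total_sum_scale([raw_reads[t][1] for t in taxa])

# Fold-change of the relative abundance (B/A) for each taxon.
fold_change = {t: b / a for t, a, b in zip(taxa, rel_a, rel_b)}

for t, a, b in zip(taxa, rel_a, rel_b):
    print(f"{t}: A={a:.1f}%  B={b:.1f}%  fold-change={fold_change[t]:.2f}")
```

Taxon_X and Taxon_Y have identical read counts in both samples, yet their relative fold-change is 0.5 because only the denominator changed.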

Table 2: Common Data Transformations Addressing Compositionality

| Method | Formula (for taxon i, sample j) | Key Property | Primary Use Case |

|---|---|---|---|

| Total Sum Scaling (TSS) | $x_{ij}^{rel} = \frac{x_{ij}}{\sum_{k} x_{kj}}$ | Maps to the simplex (values in (0,1)) | Basic relative abundance reporting. |

| Centered Log-Ratio (CLR) | $\mathrm{clr}(x_j)_i = \ln \frac{x_{ij}}{g(x_j)}$ where $g(x_j)$ is the geometric mean of sample $j$ | Zero-sum, Euclidean space | Many downstream multivariate stats (e.g., PCA on CLR). |

| Additive Log-Ratio (ALR) | $\mathrm{alr}(x_j)_i = \ln \frac{x_{ij}}{x_{Dj}}$ ($D$ is the reference denominator) | Non-isometric, reduces dimension | Specific hypothesis about a reference taxon. |

| Isometric Log-Ratio (ILR) | $\mathrm{ilr}(x_j)_i = \sqrt{\frac{rs}{r+s}} \ln \frac{g(x_j^+)}{g(x_j^-)}$ (balance between groups of $r$ and $s$ taxa) | Isometric, orthonormal coordinates | Defining interpretable, orthogonal balances. |

Experimental Protocols

Protocol 1: Standard 16S rRNA Gene Amplicon Sequencing Workflow (Generating Count Data)

Objective: To generate raw Operational Taxonomic Unit (OTU) or Amplicon Sequence Variant (ASV) count tables from microbial samples.

- Sample Collection & DNA Extraction: Use a standardized kit (e.g., DNeasy PowerSoil Pro Kit) to lyse cells and isolate total genomic DNA. Include extraction controls.

- PCR Amplification: Amplify the hypervariable region (e.g., V4) of the 16S rRNA gene using barcoded primers (e.g., 515F/806R). Perform triplicate reactions to reduce PCR bias.

- Library Preparation & Sequencing: Pool purified amplicons in equimolar ratios. Sequence on an Illumina MiSeq or NovaSeq platform using paired-end chemistry (e.g., 2x250 bp).

- Bioinformatic Processing (QIIME 2/DADA2):

a. Demultiplexing & Primer Trimming: Assign reads to samples based on barcodes.

b. Quality Filtering & Denoising: Using DADA2, correct errors, merge paired-end reads, and remove chimeras to infer exact ASVs.

c. Taxonomy Assignment: Classify ASVs against a reference database (e.g., SILVA v138) using a classifier like q2-feature-classifier.

d. Output: A feature table (BIOM format) of ASV counts per sample.

Protocol 2: Converting Counts to CoDA-Ready CLR Transformed Data

Objective: To transform raw count data into centered log-ratio values for robust statistical analysis.

- Preprocessing & Filtering: (In R, using phyloseq and microViz)

- Pseudocount Addition: Add a uniform pseudocount (e.g., 1) to all counts to handle zeros, enabling log transformation.

- CLR Transformation: Calculate the geometric mean of each sample and center the log-transformed counts.

- Output: A matrix of CLR-transformed values suitable for Euclidean-based statistical methods (e.g., PCA, PERMANOVA, linear models).
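The protocol names R packages, but the transformation itself is language-agnostic. As a minimal sketch in plain Python (the function name `clr_with_pseudocount` and the small count table are hypothetical), the pseudocount and CLR steps might look like this:

```python
import math


def clr_with_pseudocount(counts, pseudocount=1.0):
    """Centered log-ratio transform of one sample's counts.

    A uniform pseudocount is added first so that zeros can be log-transformed.
    """
    shifted = [c + pseudocount for c in counts]
    logs = [math.log(v) for v in shifted]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [lv - mean_log for lv in logs]


# Hypothetical count table: rows are samples, columns are ASVs.
count_table = [
    [120, 0, 35, 845],
    [300, 12, 0, 688],
]

clr_table = [clr_with_pseudocount(row) for row in count_table]
for row in clr_table:
    print([round(v, 3) for v in row])
```

Each transformed row sums to zero, which is the defining property of CLR coordinates. The choice of pseudocount influences the result for low-count taxa, so it is worth checking that conclusions are stable under a different value.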

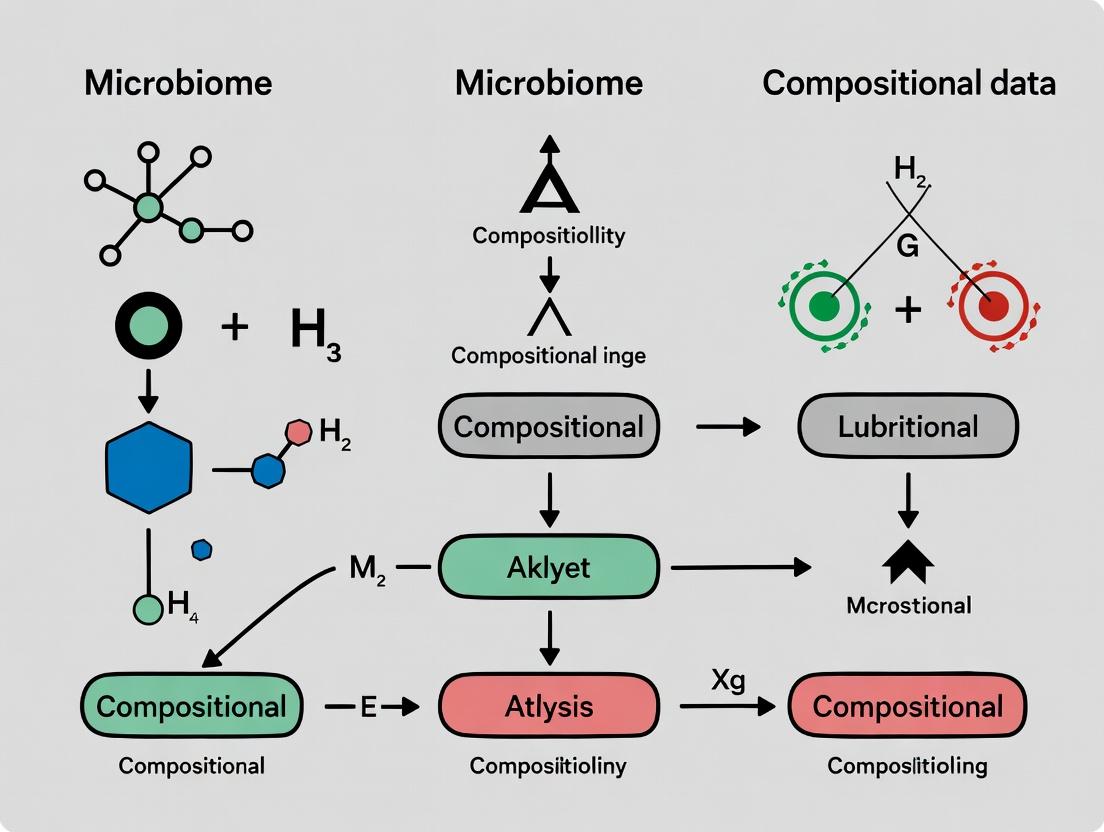

Visualizations

Title: CoDA Transformation Workflow from Raw Counts

Title: Problem of Spurious Correlation & CoDA Solution

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Compositional Microbiome Studies

| Item | Function/Application | Example Product |

|---|---|---|

| Standardized DNA Extraction Kit | Consistent lysis of diverse microbial cell walls and isolation of inhibitor-free DNA for reproducible library prep. | Qiagen DNeasy PowerSoil Pro Kit |

| PCR Barcoded Primers | Amplify target region (e.g., 16S V4) while attaching unique sample identifiers (barcodes) for multiplex sequencing. | Illumina 16S Metagenomic Sequencing Library Prep (515F/806R) |

| Mock Community Control | Defined mix of known microbial genomic DNA to assess technical bias, sequencing accuracy, and validate bioinformatic pipelines. | ZymoBIOMICS Microbial Community Standard |

| Negative Extraction Control | Sterile water or buffer processed through extraction and sequencing to identify and filter contamination. | Nuclease-Free Water |

| Phosphate-Buffered Saline (PBS) | Sterile buffer for sample collection, homogenization, and serial dilutions; minimizes background interference. | Thermo Fisher Gibco PBS, pH 7.4 |

| Bioinformatic Pipeline Software | Process raw sequences into count tables and perform CoDA transformations. | QIIME 2, DADA2 (R), microViz & compositions (R packages) |

Microbiome data, generated via high-throughput sequencing of 16S rRNA or shotgun metagenomes, is intrinsically compositional. The fundamental constraint is that the data represent relative abundances, not absolute counts. Each sample yields a vector of counts that are normalized to a constant sum (e.g., library size), meaning the information is contained in the ratios between parts, not in the magnitudes of the parts themselves. This places the data on a simplex—a geometric space where points are constrained to sum to a constant (e.g., 1 or 100%). Applying standard Euclidean statistical methods to such data leads to spurious correlations and erroneous conclusions, a problem central to the field of Compositional Data Analysis (CoDA).

Core Principles of Compositional Data

The properties of compositional data were formally defined by John Aitchison. The key principles are:

- Scale Invariance: The relevant information is unchanged if the composition is multiplied by a constant (e.g., converting counts to proportions).

- Subcompositional Coherence: Conclusions drawn from a subcomposition (a subset of parts) should be consistent with conclusions drawn from the full composition.

- Perturbation as Addition: Perturbation plays the role of vector addition on the simplex. It is defined as component-wise multiplication of two compositions followed by closure (re-normalization to sum to 1).

- Powering as Multiplication: Powering plays the role of scalar multiplication on the simplex. It is defined as raising each component to a constant power and re-closing.

Standard Euclidean distance and covariance violate these principles. For example, the Euclidean distance between two samples can grow when a taxon is removed and the remaining parts are re-closed, whereas a subcompositionally coherent distance can only stay the same or shrink.
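Closure, perturbation, and powering are simple enough to write out directly. The following is a minimal sketch under the definitions above; the function names are illustrative and the input values are made up.

```python
def closure(parts):
    """Rescale positive parts so they sum to 1."""
    total = sum(parts)
    return [p / total for p in parts]


def perturb(x, y):
    """Perturbation: component-wise product, then closure."""
    return closure([a * b for a, b in zip(x, y)])


def power(x, alpha):
    """Powering: raise each part to the power alpha, then closure."""
    return closure([a ** alpha for a in x])


x = closure([10, 30, 60])
y = closure([2, 1, 1])

shifted = perturb(x, y)
# Perturbing by the component-wise inverse of y undoes the shift.
restored = perturb(shifted, [1 / b for b in y])
# Scale invariance: counts on a different scale close to the same composition.
same = closure([100, 300, 600])

print([round(v, 3) for v in shifted])
print([round(v, 3) for v in restored])
```

Because every operation ends with closure, the result never depends on the arbitrary total of the inputs.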

Table 1: Comparison of Euclidean vs. Compositional (Simplex) Methods for Microbiome Data

| Aspect | Euclidean Space Approach | Simplex/CoDA Approach | Key Implication |

|---|---|---|---|

| Data Representation | Raw or normalized counts/proportions | Log-ratio transformed components (e.g., CLR, ILR) | CoDA uses relative information; Euclidean assumes absolute. |

| Center (Average) | Arithmetic mean | Closed geometric mean (centroid) | Arithmetic mean is not a valid measure of central tendency for compositions. |

| Distance Metric | Euclidean distance | Aitchison distance | Euclidean distance on proportions is dominated by abundant taxa and is not subcompositionally coherent. |

| Hypothesis Testing | t-test, ANOVA on proportions | PERMANOVA on Aitchison distance, ALDEx2 | Tests in Euclidean space can yield inflated false-positive rates. |

| Correlation | Pearson, Spearman on proportions | Proportionality (e.g., ρp) or correlation of log-ratios | Pearson correlation of proportions is inherently biased (spurious correlation). |

| Differential Abundance | Fold-change on proportions | ALDEx2, ANCOM-BC, DESeq2 (with care) | Fold-change of proportions is confounded by the compositional nature. |

Table 2: Prevalence of CoDA Methods in Recent Microbiome Literature (Search: "compositional data analysis microbiome 2023-2024")

| Method/Tool | Core Principle | % of Analyzed Papers Citing Method (Est.) | Primary Use Case |

|---|---|---|---|

| Centered Log-Ratio (CLR) | Log-transforms components relative to geometric mean. | ~45% | PCA, distance calculations, preprocessing for ML. |

| Isometric Log-Ratio (ILR) | Orthogonal log-ratio coordinates for Euclidean analysis. | ~25% | Hypothesis testing, regression in Euclidean space. |

| ANCOM / ANCOM-BC | Tests for differential abundance using log-ratio contrasts. | ~30% | Identifying differentially abundant taxa. |

| ALDEx2 | Uses a Dirichlet-multinomial model and CLR within Monte-Carlo instances. | ~20% | Differential abundance with scale-invariant probabilistic framework. |

| PhILR / Phylogenetic ILR | Uses phylogeny to construct balanced ILR coordinates. | ~15% | Incorporating evolutionary relationships into analysis. |

Experimental Protocols for Valid Compositional Analysis

Protocol 1: Core Preprocessing and Aitchison Distance Matrix Calculation

- Sequence Data Processing: Process raw FASTQ files through DADA2 or deblur for 16S data, or through MetaPhlAn for shotgun data, to generate an Amplicon Sequence Variant (ASV) or taxonomic profile table.

- Filtering: Remove ASVs/taxa with less than a minimal prevalence (e.g., present in <10% of samples) to reduce noise.

- Imputation (Optional): Replace zero counts using a Bayesian-multiplicative replacement (e.g., zCompositions::cmultRepl) or a model-based imputation (e.g., robCompositions::impRZilr). Note: Some methods like ALDEx2 handle zeros internally.

- Centered Log-Ratio (CLR) Transformation:

a. For each sample, calculate the geometric mean of all components (all are positive once zeros have been replaced).

b. Transform each component x_i: clr(x_i) = log( x_i / G(x) ), where G(x) is the geometric mean.

c. This creates a transformed vector with values centered around zero (sum of clr values = 0).

- Calculate Aitchison Distance: The Aitchison distance d_A between samples x and y is the Euclidean distance of their CLR-transformed vectors: d_A(x, y) = sqrt( Σ_i (clr(x_i) - clr(y_i))^2 ). This matrix is the basis for beta-diversity analysis (e.g., PERMANOVA).
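The distance formula above translates directly into code. This sketch assumes zeros have already been replaced, so every component is positive; the two sample vectors are hypothetical.

```python
import math


def clr(parts):
    """Centered log-ratio transform of a vector of positive parts."""
    logs = [math.log(p) for p in parts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean G(x)
    return [lv - mean_log for lv in logs]


def aitchison_distance(x, y):
    """Euclidean distance between the CLR-transformed vectors."""
    cx, cy = clr(x), clr(y)
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(cx, cy)))


# Hypothetical zero-free samples (counts after zero replacement).
sample_x = [120.0, 4.0, 35.0, 845.0]
sample_y = [300.0, 12.0, 9.0, 688.0]

d_counts = aitchison_distance(sample_x, sample_y)
# Rescaling one sample (e.g., a deeper sequencing run) leaves the distance unchanged.
d_rescaled = aitchison_distance([10 * v for v in sample_x], sample_y)

print(round(d_counts, 4), round(d_rescaled, 4))
```

The two printed values agree because the geometric mean absorbs any constant factor, which is why no prior normalization to proportions is required.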

Protocol 2: Differential Abundance Analysis using ANCOM-BC

- Input: Filtered count table, sample metadata.

- Model Specification: ANCOM-BC fits a linear model on the log-abundance data: log(abundance_ij) = β_0 + β_1*Group_j + ε_ij, with a bias-correction term for the sample-specific sampling fraction.

- Bias Estimation: The algorithm estimates the sample-specific sampling fraction (bias) and subtracts it.

- Hypothesis Testing: For each taxon, it tests the null hypothesis H_0: β_1 = 0 using a Wald-type test, then adjusts the p-values across taxa for multiple testing (e.g., Holm or Benjamini-Hochberg).

- Output: A list of taxa with significant β_1 coefficients (log-fold changes), p-values, and adjusted q-values.

Visualizing Compositional Data Relationships

Title: The Crossroads of Microbiome Data Analysis

Title: CoDA Transformation Pipeline for Valid Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Compositional Microbiome Analysis

| Tool/Reagent | Category | Function in Analysis |

|---|---|---|

| QIIME 2 (with q2-composition) | Bioinformatics Pipeline | Provides plugins for ANCOM and other compositional methods within an end-to-end analysis framework. |

| R package compositions | Statistical Software | Core R package for CoDA, providing functions for ILR/CLR, perturbation, powering, and Aitchison distance. |

| R package robCompositions | Statistical Software | Specializes in robust methods for CoDA, including outlier detection, imputation of zeros (impRZilr), and regression. |

| R package ALDEx2 | Statistical Software | Provides a differential abundance tool that models compositional data correctly via Monte-Carlo sampling from a Dirichlet distribution. |

| R package ancombc | Statistical Software | Implements the ANCOM-BC method for differential abundance testing with bias correction. |

| R package zCompositions | Statistical Software | Handles zero replacement in compositional datasets using count-based multiplicative methods (cmultRepl). |

| PhILR Weights | Bioinformatics Tool | Generates phylogenetically informed ILR balances, integrating taxonomic tree structure into the transformation. |

| GitHub Repository: microViz | Bioinformatics Tool | An R package that provides a tidyverse-friendly workflow for CoDA and visualization of microbiome data. |

A fundamental challenge in microbiome analysis is the misinterpretation of relative abundance data. Microbiome sequencing yields compositional data, where the reported abundance of each species is not independent but is constrained by the total sum (e.g., to 100% or 1 million reads). This compositional nature can induce spurious correlations, where associations between taxa are artifacts of the data structure rather than true biological relationships. This whitepaper provides an in-depth technical guide, using a constrained toy example of three species, to illustrate how the problem arises, how to diagnose it, and how to address it.

Core Concept: The Compositional Constraint

Consider a simple microbial community with only three species: Species A, Species B, and Species C. For a given sample i, the sequencing instrument provides count data, which is then normalized to relative abundances. Let the absolute abundances (e.g., cells per gram) be \( A_i, B_i, C_i \). The observed relative abundances are: \[ a_i = \frac{A_i}{A_i + B_i + C_i}, \quad b_i = \frac{B_i}{A_i + B_i + C_i}, \quad c_i = \frac{C_i}{A_i + B_i + C_i} \] with the constraint \( a_i + b_i + c_i = 1 \).

This closure operation is the root of the spurious correlation. If \( A_i \) increases while \( B_i \) and \( C_i \) stay constant, \( a_i \) increases, but \( b_i \) and \( c_i \) must decrease proportionally, creating a negative correlation between \( a_i \) and \( (b_i, c_i) \) even in the absence of any biological interaction.

Toy Experiment & Data Simulation

To demonstrate, we simulate data for 100 samples under two scenarios.

Protocol 3.1: Simulation of Absolute Abundances

- Independent Growth Scenario: Generate absolute abundances for A, B, and C from independent log-normal distributions.

- \( \log(A) \sim N(\mu=2, \sigma=0.5) \)

- \( \log(B) \sim N(\mu=1, \sigma=0.7) \)

- \( \log(C) \sim N(\mu=1.5, \sigma=0.4) \)

- Competitive Exclusion Scenario: Simulate a real negative interaction where an increase in A directly inhibits the growth of B. Here, \( B_i = k / A_i \) where k is a constant, plus noise. C remains independent.

- Calculate the true absolute correlation matrix from these simulated absolute abundances.

- Convert absolute abundances to relative abundances (closed compositions).

- Calculate the observed relative correlation matrix from the relative abundances.
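The Independent Growth scenario can be sketched with the Python standard library alone. This is an illustrative re-implementation, not the code behind the tables in this guide: the function names and the seed are arbitrary, and the correlations it prints will differ from the tabulated values from run to run.

```python
import math
import random


def pearson(u, v):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(u)
    mean_u, mean_v = sum(u) / n, sum(v) / n
    cov = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    var_u = sum((a - mean_u) ** 2 for a in u)
    var_v = sum((b - mean_v) ** 2 for b in v)
    return cov / math.sqrt(var_u * var_v)


def simulate_independent(n_samples, seed=0):
    """Draw independent log-normal absolute abundances for A, B, and C."""
    rng = random.Random(seed)
    A = [rng.lognormvariate(2.0, 0.5) for _ in range(n_samples)]
    B = [rng.lognormvariate(1.0, 0.7) for _ in range(n_samples)]
    C = [rng.lognormvariate(1.5, 0.4) for _ in range(n_samples)]
    return A, B, C


def close_samples(A, B, C):
    """Convert absolute abundances to relative abundances, sample by sample."""
    totals = [a + b + c for a, b, c in zip(A, B, C)]
    return (
        [a / t for a, t in zip(A, totals)],
        [b / t for b, t in zip(B, totals)],
        [c / t for c, t in zip(C, totals)],
    )


A, B, C = simulate_independent(100)
a, b, c = close_samples(A, B, C)

print("absolute corr(A, B):", round(pearson(A, B), 2))
print("relative corr(a, b):", round(pearson(a, b), 2))
```

With enough samples, the absolute correlation hovers near zero while the relative correlation is clearly negative, even though A and B were generated independently.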

Table 1: Correlation Matrices from Toy Simulation

| Scenario | Data Type | Corr(A, B) | Corr(A, C) | Corr(B, C) |

|---|---|---|---|---|

| Independent Growth | Absolute (TRUE) | 0.02 | -0.05 | 0.03 |

| Independent Growth | Relative (OBSERVED) | -0.65 | -0.48 | -0.31 |

| Competitive Exclusion | Absolute (TRUE) | -0.82 | -0.04 | 0.06 |

| Competitive Exclusion | Relative (OBSERVED) | -0.91 | -0.33 | -0.21 |

Interpretation: In the Independent Growth scenario, the true absolute correlation is near zero for all pairs. However, the observed relative abundances show strong negative spurious correlations, entirely due to the compositional effect. In the Competitive Exclusion scenario, the true negative correlation between A and B is amplified, and additional spurious negatives appear for (A,C) and (B,C).

Visualizing the Problem and Solutions

Diagram 1: From Absolute to Relative Abundance

Title: The Closure Operation Creates Compositional Constraint

Diagram 2: Spurious vs. Real Negative Correlation

Title: Mechanism of Spurious vs. Biological Correlation

Analytical Solutions and Protocols

To recover true associations, analysts must use compositionally aware methods.

Protocol 5.1: Performing Centered Log-Ratio (CLR) Transformation

- Input: A matrix of relative abundances \( x_{ij} \) for species j in sample i.

- Handle Zeros: Apply a multiplicative replacement method (e.g., zCompositions::cmultRepl) or a minimal-value imputation to replace zeros.

- Calculate Geometric Mean: For each sample i, compute \( g(\mathbf{x}_i) = \sqrt[K]{x_{i1} \cdot x_{i2} \cdots x_{iK}} \), where K is the number of species.

- Transform: Compute the CLR for each species j in sample i: \( \text{clr}(x_{ij}) = \log \left( \frac{x_{ij}}{g(\mathbf{x}_i)} \right) \).

- Analysis: Perform standard correlation (e.g., Pearson) or regression on the CLR-transformed values. The CLR removes the unit-sum constraint but imposes a zero-sum constraint across the parts of each sample, so correlations between CLR components can remain negatively biased when K is small.

Protocol 5.2: SparCC (Sparse Correlations for Compositional Data) Inference

- Concept: Estimates underlying log-ratio variances assuming the true association network is sparse.

- Procedure:

a. Variance Estimation: Estimate the variance of \( \log(x_i / x_j) \) for all pairs.

b. Linear Equations: Formulate a system linking these pairwise variances to the unknown variances of the latent log-abundances.

c. Iterative Sparsification: Solve the system, excluding the strongest correlated pair in each iteration, to enforce sparsity.

d. Bootstrap: Repeat on bootstrapped data to assess significance.
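Only the first step of the procedure, the pairwise log-ratio variances, is sketched here; this is not an implementation of SparCC itself. The function names and the small abundance table are hypothetical. The example also shows why log-ratio variances are a sound starting point: they are identical whether computed from absolute or relative abundances.

```python
import math


def log_ratio_variance(samples, i, j):
    """Sample variance of log(x_i / x_j) across samples."""
    ratios = [math.log(s[i] / s[j]) for s in samples]
    mean = sum(ratios) / len(ratios)
    return sum((r - mean) ** 2 for r in ratios) / (len(ratios) - 1)


def variation_matrix(samples):
    """Matrix of log-ratio variances for every pair of components."""
    k = len(samples[0])
    return [[log_ratio_variance(samples, i, j) for j in range(k)] for i in range(k)]


# Hypothetical absolute abundances: rows are samples, columns are species.
absolute = [
    [8.1, 2.5, 4.4],
    [6.3, 3.9, 5.0],
    [9.7, 1.8, 4.1],
    [7.2, 2.9, 5.6],
]
# The same samples after closure to relative abundances.
relative = [[v / sum(row) for v in row] for row in absolute]

t_abs = variation_matrix(absolute)
t_rel = variation_matrix(relative)

for row in t_rel:
    print([round(v, 4) for v in row])
```

The per-sample total cancels inside each ratio, so `t_abs` and `t_rel` match entry by entry.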

Table 2: Post-CLR Transformation Correlation (Independent Growth Scenario)

| Species Pair | Raw Relative Correlation | CLR-Transformed Correlation |

|---|---|---|

| A vs. B | -0.65 | 0.05 |

| A vs. C | -0.48 | -0.02 |

| B vs. C | -0.31 | 0.01 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Compositional Data Analysis in Microbiome Research

| Item / Solution | Function / Purpose |

|---|---|

| 16S rRNA Gene Sequencing Kits (e.g., Illumina 16S Metagenomic) | Provides the raw count data from which relative abundance profiles are derived. The starting point of the compositional problem. |

| qPCR Assays for Absolute Quantification | Quantifies absolute abundance of a target (e.g., total bacterial load) to potentially de-compositionalize data or validate findings. |

| Synthetic Microbial Community Standards (e.g., BEI Mock Communities) | Known mixtures of cells/DNA with defined absolute ratios. Critical for benchmarking and identifying bioinformatic biases, including compositional effects. |

| Spike-in Controls (e.g., known quantities of an alien species) | Added to samples pre-processing to estimate and correct for total microbial load, enabling estimation of absolute abundances. |

| R Package compositions | Provides functions for CLR transformation, Aitchison geometry, and robust covariance estimation for compositional data. |

| R Package zCompositions | Handles zero replacement in compositional datasets, a necessary pre-processing step before log-ratio analysis. |

| R Package SpiecEasi | Implements SparCC and other compositionally-aware methods for inferring microbial association networks from relative abundance data. |

| Python Library scikit-bio | Includes utilities for compositional data analysis, including various log-ratio transformations and diversity metrics. |

The three-species toy model unequivocally demonstrates that the compositional constraint inherent to relative abundance data generates spurious negative correlations. These artifacts can mask true biological independence and exaggerate or obscure real ecological interactions. Within introductory compositional data analysis for microbiome research, recognizing this problem is the first critical step. Researchers must move beyond Pearson correlation of raw proportions and adopt a toolkit of compositionally aware methods—including log-ratio transformations, spike-in controls, and specialized inference algorithms—to draw biologically accurate conclusions about microbial ecology and host-microbe interactions in drug development and beyond.

Compositional data, defined as vectors of positive components carrying only relative information, are fundamental in microbiome research. In high-throughput 16S rRNA or shotgun metagenomic sequencing, the data generated are inherently compositional—the total read count per sample (library size) is arbitrary and constrained. Consequently, the meaningful information lies in the relative abundances of taxa, not their absolute counts. Within this framework, two mathematical properties, Sub-compositional Coherence and Scale Invariance, are critical for ensuring robust, interpretable, and statistically valid analyses. This guide details these properties, their implications for analysis, and practical methodologies for researchers and drug development professionals.

Foundational Concepts and Definitions

The Aitchison Simplex and the Unit Sum Constraint

Microbiome abundance data for a sample with D taxa is represented as a vector x = [x₁, x₂, ..., x_D], where xᵢ > 0. Due to the technical nature of sequencing, data are typically normalized to a constant sum (e.g., 1, 10⁶ for counts per million). This places the data on the D-part simplex.

Key Properties

- Scale Invariance: The relative information in a composition is unchanged under multiplication by a positive constant. Formally, for any scalar λ > 0, the composition x and λx convey the same relative information. This makes the analysis independent of the arbitrary total count (library size).

- Sub-compositional Coherence: Any analysis of a subset of components (a sub-composition, e.g., a specific phylogenetic clade) should be consistent with the analysis of the full composition. Conclusions drawn from a sub-composition should not contradict those drawn from the full set.
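Both properties can be checked numerically on a small example. In this sketch the compositions are made up and the helper names are illustrative. It compares a log-ratio, which behaves coherently, with the Euclidean distance on proportions, which does not.

```python
import math


def closure(parts):
    """Rescale positive parts so they sum to 1."""
    total = sum(parts)
    return [p / total for p in parts]


def euclidean(u, v):
    """Ordinary Euclidean distance between two vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


full_x = closure([10, 20, 70])
full_y = closure([20, 10, 70])

# Scale invariance: a ten-fold deeper library closes to the same composition.
deeper_x = closure([100, 200, 700])

# Sub-composition: keep the first two taxa and re-close.
sub_x = closure(full_x[:2])
sub_y = closure(full_y[:2])

# The log-ratio between taxa 1 and 2 is the same in the full and sub-composition.
log_ratio_full = math.log(full_x[0] / full_x[1])
log_ratio_sub = math.log(sub_x[0] / sub_x[1])

# The Euclidean distance on proportions grows after dropping a taxon,
# which a sub-compositionally coherent distance would never do.
d_full = euclidean(full_x, full_y)
d_sub = euclidean(sub_x, sub_y)

print(round(log_ratio_full, 4), round(log_ratio_sub, 4))
print(round(d_full, 4), round(d_sub, 4))
```

The contrast is the practical argument for working with ratios: they survive both rescaling and subsetting, while proportions and distances built on them do not.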

Table 1: Impact of Ignoring Compositional Properties on Differential Abundance Analysis (Simulated Data)

| Scenario | Method Used | False Positive Rate (%) | False Discovery Rate (%) | Consistency with Sub-compositional Coherence |

|---|---|---|---|---|

| Raw Counts, No Normalization | Standard t-test/Wilcoxon | 35.2 | 42.7 | No |

| Relative Abundance (%) | Standard t-test/Wilcoxon | 28.5 | 33.1 | No |

| CLR Transformation | LinDA / ANCOM-BC | 5.1 | 8.9 | Yes |

| CLR on Monte Carlo Dirichlet instances | ALDEx2 | 4.8 | 9.5 | Yes |

Table 2: Common Data Representations and Their Adherence to Key Properties

| Data Representation | Scale Invariant? | Sub-compositionally Coherent? | Primary Use Case in Microbiome |

|---|---|---|---|

| Raw Read Counts | No | No | Input for compositional models |

| Relative Abundance (%) | Yes | No | Visualization, Preliminary EDA |

| Centered Log-Ratio (CLR) | Yes | No | PCA, Correlation (with constraints) |

| Additive Log-Ratio (ALR) | Yes | Yes | Regression, Hypothesis Testing |

| Isometric Log-Ratio (ILR) | Yes | Yes | Balances, Dimensionality Reduction |

Experimental Protocols & Methodologies

Protocol: Validating Scale Invariance in Beta-Diversity Analysis

Objective: To demonstrate that between-sample distance metrics should be scale invariant to prevent artifacts driven by sequencing depth.

- Data Input: Start with a raw count OTU/ASV table.

- Normalization Variants:

- V1: Rarefy all samples to an even depth (e.g., 10,000 reads/sample).

- V2: Convert to relative abundances (total sum scaling).

- V3: Apply a CSS (Cumulative Sum Scaling) normalization.

- V4: Use a CLR transformation after adding a pseudocount.

- Distance Calculation: For each normalized dataset, calculate Bray-Curtis and Aitchison (Euclidean on CLR) distances between all sample pairs.

- Comparison: Compute the Mantel correlation between each of the distance matrices from V2, V3, and V4 and the matrix from V1 (rarefaction). A high correlation (>0.95) for V2 and V4 supports scale invariance. Bray-Curtis on relative abundances (V2) is scale invariant; the Aitchison distance (V4) is inherently so, apart from the small distortion introduced by the pseudocount.
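The core point of this protocol, that sequencing depth alone can drive a distance, can be illustrated without a full Mantel test. The sketch below uses hypothetical counts and hand-written helpers. It compares Bray-Curtis on raw counts with Bray-Curtis after total sum scaling for two samples that differ only in depth.

```python
def bray_curtis(u, v):
    """Bray-Curtis dissimilarity between two non-negative vectors."""
    numerator = sum(abs(a - b) for a, b in zip(u, v))
    denominator = sum(a + b for a, b in zip(u, v))
    return numerator / denominator


def total_sum_scale(counts):
    """Convert counts to proportions that sum to 1."""
    total = sum(counts)
    return [c / total for c in counts]


shallow = [50, 30, 20]       # hypothetical sample at low depth
deep = [5000, 3000, 2000]    # same community sequenced 100x deeper
other = [10, 60, 30]         # a genuinely different community

bc_raw = bray_curtis(shallow, deep)
bc_tss = bray_curtis(total_sum_scale(shallow), total_sum_scale(deep))

print("raw counts:", round(bc_raw, 3))
print("after TSS: ", round(bc_tss, 3))
```

On raw counts the two identical communities look almost maximally dissimilar; after scaling the dissimilarity vanishes, and distances to `other` no longer depend on which depth was used.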

Protocol: Testing Sub-compositional Coherence in Differential Abundance

Objective: To ensure a chosen statistical method yields consistent results when analyzing a full dataset versus a phylogenetically relevant subset.

- Full Model Fitting: Using an ALR or ILR-based method (e.g., in selbal or corncob), fit a model to test the association of microbial communities with a phenotype (e.g., Disease vs. Healthy) on the full OTU table.

- Sub-composition Selection: Identify a phylogenetically coherent subset (e.g., all members of the Bacteroidetes phylum).

- Sub-model Fitting: Fit the same model type only to the sub-composition (the Bacteroidetes data, re-closed to sum to 1).

- Coherence Check: Compare the direction (sign) and significance (p-value) of the association for taxa present in both analyses. A coherent method will show agreement. For example, if a specific Bacteroides species is significantly enriched in Disease in the full model, it should remain enriched in the sub-composition model.

Visualizations

Title: Workflow for Compositional Data Analysis in Microbiome Research

Title: Principle of Sub-compositional Coherence

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Computational Tools

| Item / Tool | Category | Function / Purpose |

|---|---|---|

| QIIME 2 / MOTHUR | Bioinformatics Pipeline | Processes raw sequences into an OTU/ASV count table, the primary compositional input. |

| compositions R package | Core Analysis | Provides functions for CLR, ALR, ILR transformations and operations on the simplex. |

| CoDaSeq / zCompositions | R Package | Handles zeros in compositional data (zero replacement, essential for log-ratios). |

| ANCOM-BC, ALDEx2, MaAsLin2 | R Package | Differential abundance tools designed for or compatible with compositional data. |

| selbal, coda4microbiome | R Package | Implements balance (ILR-based) approaches for microbial signature identification. |

| Phred-Adapted Buffers | Wet Lab Reagent | Ensures high-quality DNA extraction, the foundational step for accurate relative abundance. |

| Mock Community Standards | Control Material | Contains known absolute abundances of strains to benchmark technical variation and normalization. |

| PCR Inhibitor Removal Kits | Wet Lab Reagent | Reduces technical bias in amplification, crucial for preserving true relative abundances. |

In microbiome analysis, data such as 16S rRNA sequencing results or metagenomic counts are inherently compositional. They convey relative abundance information, where changes in the proportion of one taxon inevitably affect the perceived proportions of others. Standard Euclidean statistics applied to such data lead to spurious correlations and erroneous conclusions. This primer introduces Aitchison geometry, the mathematically correct framework for analyzing compositional data, providing the working scientist with the tools to apply it within microbiome and therapeutic development research.

Core Principles of Aitchison Geometry

Compositional data are vectors of positive components carrying only relative information. They reside in the Simplex sample space. Aitchison geometry applies a set of operations—perturbation, powering, and the Aitchison inner product—that make the simplex a finite-dimensional Hilbert space.

Key Transformations (Log-Ratio Analysis): To move from the simplex to real Euclidean space, we employ log-ratio transformations.

- Additive Log-Ratio (alr): \( \text{alr}(\mathbf{x})_i = \ln(x_i / x_D) \) for \( i=1,...,D-1 \). Not isometric.

- Centered Log-Ratio (clr): \( \text{clr}(\mathbf{x})_i = \ln(x_i / g(\mathbf{x})) \) where \( g(\mathbf{x}) \) is the geometric mean. Isometric but leads to a singular covariance matrix.

- Isometric Log-Ratio (ilr): \( \text{ilr}(\mathbf{x}) = \mathbf{V}^\top \text{clr}(\mathbf{x}) \) where \( \mathbf{V} \) is a \( D \times (D-1) \) matrix whose columns form an orthonormal basis of the clr hyperplane. Isometric and orthogonal coordinates.
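The three transformations are short enough to write out for a three-part composition. This sketch fixes one particular orthonormal basis V chosen for illustration (other valid bases exist), and the two compositions are made up. It also checks numerically which transformations preserve distances.

```python
import math


def clr(x):
    """Centered log-ratio: log of each part over the geometric mean."""
    logs = [math.log(v) for v in x]
    mean_log = sum(logs) / len(logs)
    return [lv - mean_log for lv in logs]


def alr(x):
    """Additive log-ratio with the last part as the reference."""
    return [math.log(v / x[-1]) for v in x[:-1]]


SQRT2, SQRT6 = math.sqrt(2), math.sqrt(6)
# One possible 3 x 2 basis: orthonormal columns, each summing to zero.
V = [
    [1 / SQRT2, 1 / SQRT6],
    [-1 / SQRT2, 1 / SQRT6],
    [0.0, -2 / SQRT6],
]


def ilr(x):
    """Isometric log-ratio: project the clr vector onto the columns of V."""
    c = clr(x)
    return [sum(V[i][k] * c[i] for i in range(3)) for k in range(2)]


def euclidean(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


x = [0.2, 0.3, 0.5]
y = [0.6, 0.1, 0.3]

d_clr = euclidean(clr(x), clr(y))
d_ilr = euclidean(ilr(x), ilr(y))
d_alr = euclidean(alr(x), alr(y))

print(round(d_clr, 4), round(d_ilr, 4), round(d_alr, 4))
```

The clr and ilr distances coincide (both equal the Aitchison distance), while the alr distance differs, which is what "not isometric" means in practice.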

Quantitative Data Summary

Table 1: Comparison of Core Log-Ratio Transformations

| Transformation | Formula | Isometric? | Covariance | Primary Use |

|---|---|---|---|---|

| Additive Log-Ratio (alr) | \(\ln(x_i / x_D)\) | No | Full-rank (D-1) | Reference-based analysis |

| Centered Log-Ratio (clr) | \(\ln(x_i / g(\mathbf{x}))\) | Yes | Singular | Visualization, PCA |

| Isometric Log-Ratio (ilr) | \(\mathbf{V}^\top \text{clr}(\mathbf{x})\) | Yes | Full-rank (D-1) | Hypothesis testing, regression |

Table 2: Prevalence of Methods in Recent Microbiome Literature (2020-2024)

| Analytical Method | Approximate Prevalence | Common Associated Test |

|---|---|---|

| CLR-based PCA/PCoA | 65% | PERMANOVA |

| ALR with specific reference | 15% | Linear Regression |

| ILR / Phylofactorization | 12% | ANOVA, Linear Models |

| Raw Count Models (e.g., DESeq2) | 8% | Wald Test, LRT |

Experimental Protocols for Microbiome Data Analysis

Protocol 1: Standard CLR Transformation and Dimensionality Reduction

- Input: Raw OTU/ASV count table (\(n\) samples \(\times\) \(D\) taxa).

- Preprocessing: Apply a pseudocount (e.g., +1) or use a multiplicative replacement method to handle zeros.

- Transformation: Calculate the geometric mean \(g(\mathbf{x})\) for each sample. Compute \( \text{clr}(\mathbf{x})_i = \ln(x_i / g(\mathbf{x})) \) for all taxa \(i\).

- Singularity Handling: Perform PCA on the clr-transformed data. Note: The clr covariance matrix is singular (rank = D-1), so PCA will return at most D-1 non-zero principal components.

- Downstream Analysis: Use principal coordinates for visualization, clustering, or as input for PERMANOVA.

Protocol 2: ILR Transformation for Differential Abundance Testing

- Basis Construction: Define an ilr basis (\(\mathbf{V}\) matrix). For a sequential binary partition (SBP) based on phylogenetic tree or known taxonomy, use the phyloseq and compositions packages in R.

- Transformation: Compute ilr coordinates: \( \text{ilr}(\mathbf{x}) = \mathbf{V}^\top \text{clr}(\mathbf{x}) \). This yields D-1 orthogonal, real-valued coordinates per sample.

- Statistical Modeling: Apply standard multivariate tests (e.g., MANOVA) or univariate tests on individual ilr coordinates (representing balances between groups of taxa).

- Interpretation: Significant balances indicate a shift in the relative abundance between the two groups of taxa defined in the SBP.
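A single balance from a sequential binary partition can be computed directly from the geometric means of the two groups. The sketch below uses the standard balance formula, sqrt(rs/(r+s)) · ln(g(x+)/g(x−)), on a hypothetical four-taxon sample; the function names and the partition are illustrative.

```python
import math


def geometric_mean(values):
    """Geometric mean of positive values."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


def balance(x, numerator_idx, denominator_idx):
    """ilr balance between two disjoint groups of parts.

    r and s are the group sizes; the result is positive when the
    numerator group dominates and negative otherwise.
    """
    r, s = len(numerator_idx), len(denominator_idx)
    g_plus = geometric_mean([x[i] for i in numerator_idx])
    g_minus = geometric_mean([x[i] for i in denominator_idx])
    return math.sqrt(r * s / (r + s)) * math.log(g_plus / g_minus)


# Hypothetical sample with four taxa; partition {0, 1} versus {2, 3}.
sample = [0.40, 0.10, 0.30, 0.20]
b = balance(sample, [0, 1], [2, 3])

# The same sample expressed as counts gives the same balance.
b_counts = balance([40, 10, 30, 20], [0, 1], [2, 3])

print(round(b, 4), round(b_counts, 4))
```

A shift in this coordinate between groups of samples is read as a change in the relative weight of taxa {0, 1} against taxa {2, 3}, independent of everything outside the partition.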

Visualizing the Aitchison Geometry Workflow

Title: Aitchison Analysis Workflow for Microbiome Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Compositional Data Analysis

| Tool / Resource | Function | Primary Use Case |

|---|---|---|

| R Package: compositions | Provides functions for clr, alr, ilr transformations, perturbation, and simplex visualization. | Core Aitchison geometry operations. |

| R Package: robCompositions | Offers robust methods for compositional data imputation (e.g., LR-based) and outlier detection. | Handling zeros and outliers in relative data. |

| R Package: phyloseq + microViz | Integrates microbiome data structures with clr-transformation and compositional PCA plots. | Exploratory data analysis and visualization. |

| R Package: CoDaSeq | Implements compositional filtering and differential abundance testing using log-ratio methods. | Identifying differentially abundant features. |

| Python Library: scikit-bio | Contains the skbio.stats.composition module with closure, clr, alr, and ilr functions. | Python-based compositional analysis pipeline. |

| QIIME 2 Plugin: gneiss | Performs ilr regression and visualization using phylogenetic or user-defined balances. | Balance-based regression in a QIIME 2 workflow. |

| Zero-handling: Count Zero Multiplicative | Bayesian-multiplicative replacement of zeros, preserving relative structure. | Preprocessing step before log-ratio transforms. |

| Balance Definition: PhyloFactor | Algorithmically identifies phylogenetic balances that explain the most variance. | Constructing biologically relevant ilr coordinates. |

From Theory to Practice: A Workflow for Compositional Data Analysis (CoDA)

Microbiome sequencing data is inherently compositional. The total read count per sample (library size) is an artifact of sequencing depth, not biological information. Therefore, data is typically transformed to relative abundances, where each sample sums to a constant (e.g., 1 or 1,000,000). A fundamental challenge in this framework is the presence of zeros—taxa absent from a sample's sequencing results. These zeros can be true biological absences or false negatives due to technical limitations (e.g., low sequencing depth, PCR bias). Effective zero handling is critical for valid downstream statistical analysis, as many compositional data tools (e.g., log-ratio transforms) cannot process zeros.

The Nature and Impact of Zeros

Zeros in microbiome data make logarithms undefined in central transformations like the centered log-ratio (CLR). They are also common: in sparse high-throughput datasets, zeros often account for 50-90% of entries. The choice of handling method directly influences distance measures, differential abundance testing, and network inference, making it a pivotal methodological decision.

Table 1: Prevalence and Types of Zeros in Microbiome Data

| Zero Type | Likely Cause | Estimated Prevalence | Impact on Analysis |

|---|---|---|---|

| Technical (False Negative) | Low sequencing depth, DNA extraction bias, PCR dropout. | ~20-60% of all zeros | Inflates beta-diversity; masks low-abundance taxa. |

| Biological (True Zero) | Genuine absence of the taxon in the sampled environment. | Variable by ecosystem | Represents true ecological state; should be preserved. |

| Rounding (Count Zero) | Finite sampling from a finite population (sampling zeros). | High in low-depth samples | Leads to underestimation of alpha diversity. |

Method 1: Pseudocounts

The simplest approach is to add a small positive value to all counts before transformation or analysis.

Detailed Protocol: Uniform Pseudocount Addition

- Input: Raw count matrix X (samples x taxa).

- Selection: Choose a pseudocount value c. Common choices are 1, 0.5, or the minimum positive count observed in the dataset.

- Application: Create a new matrix X' where X'ij = Xij + c for all i, j.

- Transformation: Proceed with a compositional transformation (e.g., CLR: log(X'_i / g(X'_i)), where g() is the geometric mean).

Limitations

Pseudocounts arbitrarily distort the compositional structure, disproportionately affecting low-abundance taxa and smaller counts. They assume all zeros are of the same nature and provide no statistical justification for the imputed value.
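The sensitivity to the pseudocount can be made concrete with a small calculation. This sketch uses only the standard library; the four-taxon sample and the helper name `pairwise_log_ratio` are hypothetical and chosen purely for illustration.

```python
import math

def pairwise_log_ratio(counts, i, j, pseudocount):
    """Log-ratio of taxon i to taxon j after adding a uniform pseudocount."""
    return math.log((counts[i] + pseudocount) / (counts[j] + pseudocount))

counts = [0, 3, 120, 877]   # hypothetical sample: one zero, one rare taxon

# Log-ratio involving the zero count, under two common pseudocounts
rare_05 = pairwise_log_ratio(counts, 0, 2, 0.5)
rare_10 = pairwise_log_ratio(counts, 0, 2, 1.0)

# Log-ratio between two abundant taxa, under the same pseudocounts
abundant_05 = pairwise_log_ratio(counts, 2, 3, 0.5)
abundant_10 = pairwise_log_ratio(counts, 2, 3, 1.0)

rare_shift = abs(rare_10 - rare_05)
abundant_shift = abs(abundant_10 - abundant_05)
print(f"shift for zero-count ratio: {rare_shift:.3f}")
print(f"shift for abundant ratio:   {abundant_shift:.4f}")
```

Switching from 0.5 to 1 barely moves the ratio between abundant taxa but changes the ratio involving the zero by well over half a log unit, which is why results for rare taxa should be checked against the pseudocount choice.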

Method 2: Model-Based Imputation

Advanced methods use statistical models to predict plausible counts for zeros based on the distribution of non-zero data.

Detailed Protocol: Bayesian-Multiplicative Replacement

Bayesian-multiplicative replacement is a popular model-based method, implemented in tools such as the zCompositions R package.

- Input: Raw count matrix X.

- Probabilistic Rounding: For each zero, estimate a probability distribution based on the multivariate characteristics of non-zero data (e.g., using a Dirichlet or logistic-normal model).

- Replacement: Replace zeros with expected values drawn from this model, conditioned on the multivariate associations present in the data. The non-zero parts are then adjusted multiplicatively, which preserves the ratios among them.

- Closure: The imputed matrix is re-closed (all values scaled proportionally) to the original library size or a common sum to maintain compositionality.
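The multiplicative adjustment and re-closure steps can be sketched with the simpler, non-Bayesian multiplicative replacement, in which every zero receives the same small value `delta`. This is a stand-in for illustration, not the Bayesian variant implemented in zCompositions; the function name and the default `delta` of 1/D² are assumptions made here.

```python
import numpy as np

def simple_multiplicative_replacement(counts, delta=None):
    """Replace zeros with delta and shrink non-zero parts so each row still sums to 1."""
    x = np.asarray(counts, dtype=float)
    props = x / x.sum(axis=1, keepdims=True)      # close each sample to 1
    if delta is None:
        delta = 1.0 / x.shape[1] ** 2              # assumed default, smaller than 1/D
    zeros = props == 0
    imputed_mass = zeros.sum(axis=1, keepdims=True) * delta
    # Non-zero parts are scaled by a common factor, so their ratios are unchanged
    return np.where(zeros, delta, props * (1.0 - imputed_mass))

counts = np.array([[0, 10, 30, 60],
                   [5,  0,  0, 95]])
replaced = simple_multiplicative_replacement(counts)
print(replaced)
```

The ratio between the second and third taxa in the first sample stays at 1:3 after replacement, which a uniform pseudocount does not guarantee.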

Detailed Protocol: Phylogeny-Aware Imputation (e.g., softImpute)

Some methods leverage phylogenetic correlation, assuming closely related taxa have similar abundance patterns.

- Input: Count matrix X and phylogenetic distance matrix P.

- Matrix Completion: Formulate the log-transformed count matrix as a low-rank matrix with missing entries (zeros).

- Regularized Optimization: Solve a minimization problem incorporating a penalty term that encourages similarity between abundances of phylogenetically proximate taxa.

- Output: A completed matrix with imputed, real-valued counts for zero entries, which can then be used in downstream compositional analysis.

Table 2: Comparison of Zero-Handling Strategies

| Method | Core Principle | Advantages | Disadvantages | Best Suited For |

|---|---|---|---|---|

| Uniform Pseudocount | Add constant to all counts. | Simplicity, speed. | High bias, distorts covariance, arbitrary. | Initial exploratory analysis. |

| Bayesian Multiplicative (BM) | Probabilistic replacement based on data distribution. | Preserves covariance structure better than pseudocounts. | Computationally intensive; assumes specific distribution. | General-purpose analysis pre-log-ratio. |

| Phylogeny-Aware Imputation | Uses evolutionary relationships to inform imputation. | Biologically informed; can improve accuracy. | Requires robust phylogeny; complex implementation. | Studies focusing on phylogenetic diversity. |

| Model-Based (e.g., ALDEx2) | Uses a Dirichlet model to generate posterior distributions. | Propagates uncertainty; robust for differential abundance. | Not a direct imputation; output is a distribution. | Differential abundance testing. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Zero-Handling Context |

|---|---|

| R Package: zCompositions | Implements Bayesian-multiplicative replacement (CZM, GBM) and other model-based methods for count data. |

| R Package: ALDEx2 | Uses a Dirichlet-multinomial model to infer posterior probabilities, effectively modeling zero uncertainty without direct imputation. |

| R Package: mia (MicrobiomeAnalysis) | Integrates tools for compositional data, including zCompositions and scater for imputation and visualization. |

| R Package: softImpute | Performs matrix completion via nuclear norm regularization, applicable for log-transformed data with zeros. |

| QIIME 2 (q2-composition) | Provides plugins for compositional transformations (e.g., add_pseudocount) within a reproducible workflow framework. |

| Silva / GTDB Reference Database | Provides high-quality phylogenetic trees essential for phylogeny-aware imputation methods. |

| High-Performance Computing (HPC) Cluster | Necessary for running intensive model-based imputation on large-scale microbiome datasets (e.g., >1000 samples). |

Visualizations

Title: Decision Workflow for Zero Handling in Microbiome Data

Title: Mechanism Comparison: Pseudocounts vs Model-Based

Within the broader thesis of introducing compositional data analysis to microbiome research, the selection of an appropriate log-ratio transform is a critical methodological step. Raw microbiome sequencing data, typically represented as relative abundances or counts, resides in a simplex—a space where each sample's components sum to a constant. This constraint induces spurious correlations, invalidating standard statistical methods. Log-ratio transformations, pioneered by John Aitchison, project this constrained data into a real Euclidean space where standard statistical tools can be reliably applied. This guide provides an in-depth comparison of the three predominant transforms: Centered Log-Ratio (CLR), Additive Log-Ratio (ALR), and Isometric Log-Ratio (ILR).

Core Mathematical Definitions & Properties

The following table summarizes the formal definitions, key properties, and implications of each transformation.

Table 1: Core Characteristics of CLR, ILR, and ALR Transforms

| Transform | Mathematical Definition | Dimensionality | Euclidean | Invertible | Key Feature |

|---|---|---|---|---|---|

| Centered Log-Ratio (CLR) | clr(x)_i = ln(x_i / g(x)) where g(x) is the geometric mean of all parts. | D parts → D dimensions (singular covariance). | Partly (distances are preserved, but coordinates sum to zero and are linearly dependent). | To the simplex, with constraint. | Centers parts relative to the geometric mean. Simple, symmetric. |

| Additive Log-Ratio (ALR) | alr(x)_i = ln(x_i / x_D) where x_D is an arbitrarily chosen denominator part. | D parts → D-1 dimensions. | No (distances are not preserved). | Yes, to the simplex. | Simple, interpretable as log-fold change relative to a reference taxon. |

| Isometric Log-Ratio (ILR) | ilr(x) = V^T * clr(x) where the columns of the D x (D-1) matrix V form an orthonormal basis of the clr-plane. | D parts → D-1 dimensions. | Yes (preserves exact distances and angles). | Yes, to the simplex. | Creates orthonormal coordinates, enabling use of all Euclidean geometry. |
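The distance properties in the table can be verified directly. The sketch below implements the three transforms with NumPy under stated assumptions: inputs are strictly positive, the ALR reference is the last part, and the ILR basis is a Helmert-type basis built by the hypothetical helper `helmert_basis` (any orthonormal basis of the clr-plane would serve).

```python
import numpy as np

def closure(x):
    x = np.asarray(x, dtype=float)
    return x / x.sum(axis=-1, keepdims=True)

def clr(x):
    logx = np.log(closure(x))
    return logx - logx.mean(axis=-1, keepdims=True)

def alr(x, ref=-1):
    logx = np.log(closure(x))
    return np.delete(logx, ref, axis=-1) - logx[..., [ref]]

def helmert_basis(d):
    """D x (D-1) matrix with orthonormal columns, each summing to zero."""
    v = np.zeros((d, d - 1))
    for k in range(1, d):
        v[:k, k - 1] = 1.0 / k
        v[k, k - 1] = -1.0
        v[:, k - 1] *= np.sqrt(k / (k + 1.0))   # scale column to unit length
    return v

def ilr(x):
    z = clr(x)
    return z @ helmert_basis(z.shape[-1])

a = np.array([0.1, 0.2, 0.3, 0.4])
b = np.array([0.4, 0.3, 0.2, 0.1])

d_clr = np.linalg.norm(clr(a) - clr(b))   # Aitchison distance
d_ilr = np.linalg.norm(ilr(a) - ilr(b))   # same distance in D-1 coordinates
d_alr = np.linalg.norm(alr(a) - alr(b))   # depends on the chosen reference
print(d_clr, d_ilr, d_alr)
```

The CLR and ILR distances agree, while the ALR distance differs and would change again with another reference part.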

Comparative Analysis: Statistical & Practical Considerations

Table 2: Practical Comparison for Microbiome Analysis

| Aspect | CLR | ILR | ALR |

|---|---|---|---|

| Covariance Structure | Singular (non-invertible). Use pseudo-inversion or regularization. | Full-rank, standard analysis applicable. | Non-isometric; covariance is distorted. |

| Interpretability | Intuitive: each coordinate is a part's dominance over the "average" part. | Low. Coordinates represent balances between groups of parts. | High. Directly interpreted as log-ratio to a specific reference taxon. |

| Reference/Dependence | Implicit reference (geometric mean). | No single reference; uses a sequential binary partition (SBP). | Explicitly depends on the choice of denominator part. |

| Best Use Case | PCA-like explorations (e.g., CoDA-PCA), univariate feature screening. | Multivariate stats (regression, clustering, hypothesis testing) requiring Euclidean geometry. | Specific hypotheses about ratios to a biologically fixed reference (e.g., a pathogen or keystone species). |

| Primary Limitation | Coordinates are collinear; cannot use directly in standard multivariate models. | Requires pre-definition of a phylogenetic or functional hierarchy for the SBP. | Results are not invariant to the choice of denominator; distances are not preserved. |

Detailed Methodological Protocols

Protocol A: Applying the CLR Transform (with zero-handling)

- Input: A count or relative abundance table (OTU/ASV table) with N samples (rows) and D taxa (columns).

- Zero Imputation: Replace zeros using a multiplicative replacement strategy (e.g., the cmultRepl function in R's zCompositions package or multiplicative_replacement in Python's scikit-bio). This method preserves the compositional structure.

- Normalization: Convert counts to relative abundances (closed to 1) if not already done.

- Geometric Mean: For each sample, calculate the geometric mean g(x) of all D taxon abundances.

- Log-Ratio Calculation: For each taxon i in the sample, compute clr_i = ln(abundance_i / g(x)).

- Output: An N x D CLR-transformed matrix. Column-center this matrix before downstream analyses such as PCA.

Protocol B: Constructing ILR Coordinates via a Sequential Binary Partition (SBP)

- Define a Hierarchy: Establish a meaningful binary partition of taxa. This is often based on phylogenetic relationships (e.g., at the phylum, family level) or known functional groups.

- Create Balance Pairs: For each partition (node) in the hierarchy, define two groups: a numerator group (+1) and a denominator group (-1). Taxa not in the node are assigned 0.

- Build Contrast Matrix: Encode these +1 / -1 / 0 assignments into a (D-1) x D matrix Ψ.

- Normalize Weights: For the k-th balance with r taxa in the +1 group and s taxa in the -1 group, calculate the balancing element: ilr_k = sqrt((r*s)/(r+s)) * ln( (geom_mean(+1 group)) / (geom_mean(-1 group)) ).

- Calculation: The full ILR-transformed matrix is obtained by applying this formula across all balances. In software this is implemented as ilr(x) = clr(x) * Ψ^T, with the rows of Ψ rescaled to the normalized weights from the previous step.
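A short sketch shows that the balance formula and the matrix form give the same coordinates. It assumes NumPy and a strictly positive composition; the four-taxon sign matrix and the helper names `sbp_to_basis` and `geometric_mean` are illustrative, not taken from any package.

```python
import numpy as np

def sbp_to_basis(sbp):
    """Turn a (D-1) x D sign matrix (+1, -1, 0) into normalized balance weights."""
    sbp = np.asarray(sbp, dtype=float)
    psi = np.zeros_like(sbp)
    for k, row in enumerate(sbp):
        r = np.sum(row > 0)                      # taxa in the numerator group
        s = np.sum(row < 0)                      # taxa in the denominator group
        psi[k, row > 0] = np.sqrt(s / (r * (r + s)))
        psi[k, row < 0] = -np.sqrt(r / (s * (r + s)))
    return psi

def geometric_mean(values):
    return np.exp(np.mean(np.log(values)))

# Hypothetical partition: {t1, t2} vs {t3, t4}, then t1 vs t2, then t3 vs t4
sbp = [[+1, +1, -1, -1],
       [+1, -1,  0,  0],
       [ 0,  0, +1, -1]]
x = np.array([0.1, 0.2, 0.3, 0.4])

psi = sbp_to_basis(sbp)
clr_x = np.log(x) - np.mean(np.log(x))
balances = clr_x @ psi.T                         # matrix form: clr(x) * Ψ^T

# First balance from the explicit formula with r = s = 2
first_by_formula = np.sqrt(2 * 2 / (2 + 2)) * np.log(
    geometric_mean(x[:2]) / geometric_mean(x[2:])
)
print(balances, first_by_formula)
```

The rows of the normalized matrix are orthonormal, which is what makes the resulting balances valid ILR coordinates.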

Protocol C: Applying the ALR Transform

- Select Denominator: Choose a taxon x_D to serve as the reference (e.g., a common, stable taxon or one of biological interest).

- Zero Imputation: Apply zero-handling as in Protocol A.

- Log-Ratio Calculation: For each of the remaining D-1 taxa i in a sample, compute alr_i = ln(abundance_i / abundance_D).

- Output: An N x (D-1) ALR-transformed matrix.

Flowchart: Decision Process for Log-Ratio Transform Selection

Workflow: Standard CLR Transformation Process

The Scientist's Toolkit: Essential Reagents & Computational Solutions

Table 3: Key Research Reagent Solutions for Compositional Data Analysis

| Item / Software Package | Primary Function | Application Context |

|---|---|---|

| R: compositions package | Provides core functions for clr(), alr(), ilr(), and ilrInv() for back-transformation. | General CoDA workflow in R. |

| R: robCompositions package | Advanced tools for outlier detection, robust imputation of zeros, and model-based composition estimation. | Handling noisy, sparse microbiome data. |

| R: zCompositions package | Specialized methods for zero imputation (e.g., multiplicative, count-based Bayesian). | Essential pre-processing step before any log-ratio transform. |

| R: phyloseq & microbiome packages | Integrate CoDA transforms with standard microbiome data objects and ecological statistics. | End-to-end microbiome analysis pipeline. |

| Python: scikit-bio module | Provides clr, alr, ilr functions (skbio.stats.composition). | Core CoDA in Python ecosystems. |

| Python: SciPy & NumPy | For custom implementation of log-ratio transforms and subsequent linear algebra operations. | Building custom analysis pipelines. |

| Zero Imputation Algorithm | Multiplicative replacement or Bayesian Multinomial replacement. | Replacing zeros without distorting covariance structure. |

| Sequential Binary Partition (SBP) | A user-defined or phylogenetically-derived hierarchy for ILR balance coordinates. | Giving biological meaning to ILR coordinates. |

Within the framework of compositional data analysis for microbiome research, raw count data from 16S rRNA or shotgun sequencing is not suitable for standard statistical methods due to the constant-sum constraint. This guide details the critical third step: performing robust downstream analyses—Principal Component Analysis (PCA), regression, and differential abundance testing—after data has been appropriately transformed into log-ratio space (e.g., using centered log-ratio (CLR) or additive log-ratio (ALR) transformations).

Core Methodologies for Log-Ratio Analysis

Principal Component Analysis (PCA) in Log-Ratio Space

PCA on CLR-transformed data is a cornerstone for exploring compositional variation.

- Protocol:

  - Input: A count matrix X (samples x features) that has been normalized (e.g., via Total Sum Scaling or CSS) and transformed to CLR. The CLR transformation is defined as: CLR(x) = [ln(x_1 / g(x)), ln(x_2 / g(x)), ..., ln(x_D / g(x))], where g(x) is the geometric mean of the composition.

  - Covariance Matrix: Compute the covariance matrix of the CLR-transformed data. Because each CLR vector sums to zero, this covariance matrix is singular.

  - Singular Value Decomposition (SVD): Perform an eigendecomposition of the covariance matrix or, equivalently, an SVD directly on the column-centered CLR data: U S V^T = SVD(CLR(X)_centered).

  - Output: Principal Components (PCs) are derived from U*S (scores) and V (loadings). The first few PCs capture the major axes of log-ratio variance.
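The SVD route to scores and loadings can be sketched in a few lines. This assumes NumPy and a zero-free CLR matrix; the simulated counts, the +1 pseudocount, and the function name `clr_pca` are illustrative.

```python
import numpy as np

def clr_pca(clr_matrix, n_components=2):
    """PCA of CLR data via SVD of the column-centered matrix."""
    centered = clr_matrix - clr_matrix.mean(axis=0, keepdims=True)
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]     # sample coordinates (U*S)
    loadings = vt[:n_components].T                       # taxon contributions (V)
    explained = (s ** 2 / np.sum(s ** 2))[:n_components] # variance proportions
    return scores, loadings, explained

rng = np.random.default_rng(42)
# Hypothetical 40 samples x 5 taxa, with +1 added to avoid log(0)
counts = rng.poisson(lam=[50, 20, 5, 100, 10], size=(40, 5)) + 1
logx = np.log(counts)
clr_matrix = logx - logx.mean(axis=1, keepdims=True)

scores, loadings, explained = clr_pca(clr_matrix, n_components=2)
print("variance explained by PC1, PC2:", explained)
```

Each loading vector sums to zero, so a component is always a contrast between taxa rather than a statement about any single taxon.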

Regression Modeling

Regression models explain a continuous or categorical outcome using log-ratios as predictors.

- Protocol (Linear Regression with ALR):

  - Reference Feature Selection: Choose a stable, prevalent feature as the denominator for ALR transformation: ALR(x) = [ln(x_1 / x_D), ln(x_2 / x_D), ..., ln(x_{D-1} / x_D)].

  - Model Specification: Fit a linear model: Y = β_0 + β_1*ALR_1 + β_2*ALR_2 + ... + β_{D-1}*ALR_{D-1} + ε.

  - Interpretation: Coefficient β_i represents the expected change in Y for a unit increase in the log-ratio between feature i and the reference feature D, holding all other log-ratios constant.
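As a minimal sketch of this model, the following fits ordinary least squares on ALR predictors using NumPy. All data are simulated: the Dirichlet parameters, the "true" coefficients, and the noise level are assumptions chosen only to show that the fit recovers known effects.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200

# Hypothetical zero-free compositions; the last taxon is the ALR reference
props = rng.dirichlet(alpha=[5, 3, 2, 10], size=n)
alr = np.log(props[:, :-1] / props[:, [-1]])

# Simulated outcome with known intercept and effects
true_beta = np.array([2.0, -1.0, 0.5])
y = 1.0 + alr @ true_beta + rng.normal(scale=0.1, size=n)

# Ordinary least squares: the design matrix is an intercept plus D-1 log-ratios
design = np.column_stack([np.ones(n), alr])
coef, *_ = np.linalg.lstsq(design, y, rcond=None)
intercept, beta_hat = coef[0], coef[1:]
print("estimated coefficients:", beta_hat)
```

Choosing a different reference taxon changes the individual coefficients, so the reference should be reported alongside any ALR regression result.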

Differential Abundance Testing

Identifying features differentially abundant between groups requires log-ratio methods to avoid false positives.

- Protocol (ANCOM-BC):

  - Bias Correction: Estimates and corrects for sample-specific sampling fractions (bias) inherent in observed counts.

  - Log-Linear Model: Fits a linear model on the bias-corrected log-transformed abundances: E[ln(o_{ij})] = β_j + θ_i + Σ γ * covariate, where o_{ij} is the observed count of feature j in sample i, β_j is the log abundance of feature j, and θ_i is the sampling fraction bias of sample i.

  - Statistical Testing: Tests the null hypothesis H0: β_j^{(Group A)} = β_j^{(Group B)} for each feature j using a Wald test or similar.

  - False Discovery Rate (FDR): Apply FDR correction (e.g., Benjamini-Hochberg) to p-values.
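The final FDR step is independent of the testing method and can be written out explicitly. The sketch below is a plain NumPy version of the Benjamini-Hochberg adjustment; the function name and the example p-values are illustrative.

```python
import numpy as np

def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Scale the k-th smallest p-value by m / k
    scaled = p[order] * m / np.arange(1, m + 1)
    # Enforce monotonicity, working down from the largest p-value
    adjusted_sorted = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(adjusted_sorted, 0.0, 1.0)
    return adjusted

pvals = [0.01, 0.04, 0.03, 0.005]      # hypothetical per-taxon p-values
qvals = benjamini_hochberg(pvals)
significant = qvals < 0.05
print(qvals, significant)
```

With thousands of taxa tested at once, reporting these adjusted values rather than raw p-values keeps the expected share of false discoveries at the chosen level.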

Table 1: Comparison of Log-Ratio Methods for Downstream Analysis

| Method | Recommended Transformation | Key Strength | Key Limitation | Primary Use Case |

|---|---|---|---|---|

| PCA | Centered Log-Ratio (CLR) | Preserves metric, symmetric handling of parts. | CLR covariance is singular; requires PCA via SVD. | Unsupervised exploration, beta-diversity visualization. |

| Linear Regression | Additive Log-Ratio (ALR) | Simple interpretation relative to a reference. | Results are not invariant to choice of reference. | Predicting a continuous outcome from microbiome composition. |

| Differential Abundance (ANCOM-BC) | Bias-corrected Log (pseudo-CLR) | Controls FDR, corrects for sampling bias. | May be conservative with very sparse data. | Case-control studies to identify differentially abundant taxa. |

| Differential Abundance (ALDEx2) | Centered Log-Ratio (CLR) | Uses Bayesian approach to model uncertainty. | Computationally intensive due to Monte Carlo sampling. | Identifying features with consistent differences across groups. |

Table 2: Hypothetical PCA Results (Variance Explained)

| Principal Component | Eigenvalue | Proportion of Variance (%) | Cumulative Variance (%) |

|---|---|---|---|

| PC1 | 45.2 | 28.5 | 28.5 |

| PC2 | 32.1 | 20.2 | 48.7 |

| PC3 | 18.7 | 11.8 | 60.5 |

| PC4 | 12.4 | 7.8 | 68.3 |

Visual Workflows

Downstream Analysis in Log-Ratio Space Workflow

PCA Decomposes CLR Data into Scores & Loadings

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Tools

| Item | Function in Analysis | Example/Note |

|---|---|---|

| R Package: compositions | Provides core functions for CLR, ALR, and ILR transformations, and simplex geometry. | clr() function for centered log-ratio transformation. |

| R Package: robCompositions | Offers robust methods for compositional PCA (CoDa-PCA) and outlier detection. | Critical for datasets with outliers or non-normal error. |

| R Package: ANCOMBC | Implements the ANCOM-BC method for differential abundance testing with bias correction. | Uses a linear model framework with bias correction terms. |

| R Package: ALDEx2 | Uses a Bayesian Dirichlet-multinomial model to estimate technical uncertainty for DA testing. | Outputs effect sizes and expected FDR estimates. |

| R Package: microbiome (in R) / qiime2 (outside R) | Provides streamlined workflows from normalization to CLR transformation and PCA. | transform(x, "clr") function. |

| Reference Genome or Taxon | Serves as the denominator for ALR transformation or as a stable reference for perturbation. | Often chosen as a prevalent, low-variance taxon across samples. |

| FDR Control Software | Corrects for multiple hypothesis testing across thousands of microbial features. | p.adjust(method="BH") in R (Benjamini-Hochberg). |

Within the broader thesis on compositional data in microbiome analysis, this step is critical. Data generated from high-throughput sequencing is fundamentally compositional; the count of a specific microbe is only meaningful relative to the counts of all others in the sample. After statistical modeling on transformed data (e.g., centered log-ratio, CLR), results must be back-transformed to the intuitive scale of relative abundance for biological interpretation and communication.

Core Concepts and Quantitative Comparisons

Table 1: Common Transformations and Their Back-Transformations

| Transformation | Formula (Forward) | Purpose | Back-Transformation | Interpretable Output |

|---|---|---|---|---|

| Centered Log-Ratio (CLR) | clr(x_i) = ln(x_i / g(x)) | Center data, relax compositionality constraint for some models. | x_i = exp(clr(x_i)) / Σ_k exp(clr(x_k)), which equals exp(clr(x_i)) * g(x) when g(x) is known | Proportions closed to 1. On the CLR scale, values are log-ratios relative to the geometric mean. |

| Additive Log-Ratio (ALR) | alr(x_i) = ln(x_i / x_D) | Use a reference taxon (D). Simple, but choice of reference is arbitrary. | x_i = exp(alr(x_i)) / (1 + Σ_k exp(alr(x_k))) for i < D; x_D = 1 / (1 + Σ_k exp(alr(x_k))) | Proportions closed to 1. On the ALR scale, values are log-ratios to the chosen reference. |

| Relative Abundance | rel(x_i) = x_i / Σx | Original compositional scale. | Not applicable (starting point). | Direct proportion of the community. |
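The inverse transforms can be checked with a round trip. This sketch assumes NumPy, a strictly positive composition closed to 1, and the last part as the ALR reference; `clr_inv` and `alr_inv` are illustrative helper names.

```python
import numpy as np

def clr_inv(z):
    """Inverse CLR: exponentiate and re-close to 1."""
    e = np.exp(z - np.max(z))          # subtracting the max avoids overflow
    return e / e.sum()

def alr_inv(a):
    """Inverse ALR with the reference as the last part (its log-ratio is 0)."""
    e = np.exp(np.append(a, 0.0))
    return e / e.sum()

x = np.array([0.05, 0.15, 0.30, 0.50])         # hypothetical composition
z = np.log(x) - np.mean(np.log(x))             # forward CLR
a = np.log(x[:-1] / x[-1])                     # forward ALR

x_from_clr = clr_inv(z)
x_from_alr = alr_inv(a)
print(x_from_clr, x_from_alr)
```

Both routes return the original proportions, so model predictions made in log-ratio space can be reported on the relative abundance scale.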

Table 2: Impact of Back-Transformation on Interpreted Effect Sizes. Simulated data showing a 1.5-unit increase in CLR space for a feature.

| Feature | CLR Coeff | Geometric Mean of Sample (g(x)) | Back-Transformed Multiplier | Interpretation |

|---|---|---|---|---|

| Bacteroides | 1.5 | 0.01 | exp(1.5) ≈ 4.48 | Bacteroides abundance increases by a factor of ~4.5 relative to the geometric mean of the community. |

| Prevotella | 1.5 | 0.10 | exp(1.5) ≈ 4.48 | Prevotella abundance increases by a factor of ~4.5 relative to the geometric mean of the community. |

Experimental Protocol for Differential Abundance Analysis with Back-Transformation

Protocol: ANCOM-BC2 Workflow with Back-Transformation

- Data Preprocessing: Filter amplicon sequence variant (ASV) or operational taxonomic unit (OTU) tables to remove low-prevalence features (e.g., prevalence < 10% across samples). Do not rarefy.

- Model Specification: Apply the ANCOM-BC2 model, which incorporates bias correction for sampling fractions. The model is of the form: log(y_{ij}) = β_j + θ_{i} + ε_{ij}, where β_j is the feature-level coefficient for feature j (interpreted as a log-fold-change when two groups are compared), and θ_i is the log sampling fraction for sample i.

- Estimation: Estimate the bias-corrected log-fold-changes (β_j) and their standard errors. Perform hypothesis testing (Wald test) with FDR correction (e.g., Benjamini-Hochberg).

- Back-Transformation (Critical Step):

  - For a significant feature j, obtain the bias-corrected coefficient β_j.

  - The multiplicative fold-change between groups is calculated as exp(β_j). Because the coefficient is bias-corrected, this fold-change refers to the absolute-abundance scale.

  - To express the change for a specific baseline group, calculate the estimated mean log-abundance for each group from the model, then back-transform via exp() to obtain the estimated geometric mean of the absolute abundances (proportional to cell counts).

  - Convert these to estimated median relative abundances by dividing each group's estimated absolute abundance for feature j by the sum of estimated absolute abundances for all features in that group.

- Visualization: Plot back-transformed estimated median relative abundances with confidence intervals for significant features across comparison groups.
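The back-transformation arithmetic can be sketched with made-up numbers. The log-abundances and log-fold-changes below are hypothetical placeholders standing in for model output; nothing here calls the ANCOMBC package itself.

```python
import numpy as np

taxa = ["taxon_a", "taxon_b", "taxon_c"]            # hypothetical features
log_abund_control = np.array([5.0, 6.0, 7.0])       # assumed group means (log scale)
lfc = np.array([2.0, 0.0, 0.0])                     # assumed bias-corrected LFCs

fold_change = np.exp(lfc)                           # multiplicative change per taxon

# Back-transform group means to the absolute scale, then close to proportions
abs_control = np.exp(log_abund_control)
abs_treated = np.exp(log_abund_control + lfc)
rel_control = abs_control / abs_control.sum()
rel_treated = abs_treated / abs_treated.sum()

for name, fc, rc, rt in zip(taxa, fold_change, rel_control, rel_treated):
    print(f"{name}: fold-change {fc:.2f}, relative {rc:.3f} -> {rt:.3f}")
```

In this toy case taxon_b has a fold-change of exactly 1, yet its relative abundance drops because taxon_a grew. That gap between the two scales is why both should be reported together.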

Diagram: Back-Transformation Workflow for Microbiome Data

Diagram: Compositional Data Analysis Conceptual Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Compositional Data Analysis in Microbiomics

| Item / Solution | Function / Purpose | Example (Non-endorsement) |

|---|---|---|

| R Package: 'compositions' | Provides core functions for CLR, ILR, and ALR transformations and their inverse operations. | clr(), ilr(), alr() functions. |

| R Package: 'ANCOMBC' | Implements the ANCOM-BC2 method for differential abundance testing with bias correction, outputting log-fold-changes ready for back-transformation. | ancombc2() function. |

| R Package: 'microViz' | Includes helper functions for interpreting and visualizing results from compositional models, including CLR back-transformation. | ord_plot() with transform = "clr". |

| R Package: 'zCompositions' | Handles zeros in compositional data via multiplicative replacement, a critical pre-processing step before log-ratio transformations. | cmultRepl() function. |

| Python Library: 'scikit-bio' | Offers a composition statistics module, including various compositional transforms. | skbio.stats.composition.clr. |

| Normalization Standard (Mock Community) | External control containing known, absolute abundances of strains used to estimate and correct for technical variation (sampling fraction). | ZymoBIOMICS Microbial Community Standard. |

| Internal Spike-Ins (ISDs) | Known quantities of foreign DNA added to each sample pre-extraction to estimate and correct for sample-specific technical biases. | Spike-in of Salmonella barcode strains or synthetic oligonucleotides. |

Microbiome data, derived from high-throughput sequencing, is intrinsically compositional. The total number of reads per sample (library size) is an arbitrary constraint imposed by sequencing depth, not a biological absolute. Consequently, the data only conveys relative abundance information. This compositional nature invalidates the application of standard statistical methods designed for unconstrained data, as they can produce spurious correlations and misleading results. This guide provides a technical framework for handling compositional microbiome data using established R and Python packages.

Core Concepts of Compositional Data Analysis (CoDA)

Compositional data are vectors of positive values that sum to a constant, typically 1 (or 100%). The relevant sample space is the Simplex. CoDA operates on log-ratios of components, which are scale-invariant and allow for valid statistical inference.

Key Principles:

- Scale Invariance: Analysis should not depend on the total count (e.g., doubling all counts yields the same composition).

- Subcompositional Coherence: Conclusions drawn from a full composition should be consistent with those drawn from a subcomposition.
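Both principles can be demonstrated on a single log-ratio. This sketch assumes NumPy; the three-taxon count vector is hypothetical.

```python
import numpy as np

def closure(x):
    x = np.asarray(x, dtype=float)
    return x / x.sum()

counts = np.array([120.0, 30.0, 850.0])       # hypothetical counts for three taxa

# Scale invariance: doubling the sequencing depth leaves the log-ratio unchanged
lr_original = np.log(counts[0] / counts[1])
lr_doubled = np.log((2 * counts)[0] / (2 * counts)[1])

# Subcompositional coherence: dropping the third taxon changes the proportions
# of the first two, but not the log-ratio between them
full = closure(counts)
sub = closure(counts[:2])
lr_full = np.log(full[0] / full[1])
lr_sub = np.log(sub[0] / sub[1])

print("proportion of taxon 1, full vs sub:", full[0], sub[0])
print("log-ratio, full vs sub:", lr_full, lr_sub)
```

The proportion of the first taxon jumps from 12% to 80% when the third taxon is removed, while its log-ratio to the second taxon stays at ln(4).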

Software Toolkit: Capabilities and Comparative Analysis

R Packages for Compositional Microbiome Analysis

| Package | Primary Purpose | Key CoDA Functions | Input/Output Data Structure |

|---|---|---|---|

| phyloseq | Data organization, visualization, and basic analysis. | transform_sample_counts(), ordinate() for PCoA. | phyloseq object (OTU table, taxonomy, sample data). |

| microbiome | Wrapper and extension for phyloseq. | transform() (CLR, relative abundance), core() membership. | phyloseq object or data.frame. |

| robCompositions | Explicit compositional data analysis. | cenLR() (CLR), pivotCoord() (ILR), imputeBDLs() (imputation). | data.frame or matrix. |

Python Packages for Compositional Microbiome Analysis

| Package | Primary Purpose | Key CoDA Functions/Methods | Input/Output Data Structure |

|---|---|---|---|

| scikit-bio | Bio-informatics and ordination methods. | clr, multiplicative_replacement (imputation), pcoa. | skbio.TreeNode, DistanceMatrix, OrdinationResults. |

| gneiss | Compositional regression and differential abundance. | ilr_transform, ols, balance_bplot (visualization). | pandas.DataFrame, biom.Table, gneiss.TreeNode. |

Detailed Experimental Protocols & Code Snippets

Protocol 1: Core Compositional Transformation Workflow in R

Objective: Transform raw OTU count data into a compositionally valid representation for downstream analysis (e.g., beta-diversity, PERMANOVA).

Materials/Reagents:

- phyloseq object: Contains raw OTU table, sample metadata, and taxonomy.

- R Environment: (Current versions as of search) R 4.3+, phyloseq v1.46, microbiome v1.24, robCompositions v3.0.

Methodology:

- Data Import & Preprocessing:

- Imputation of Zeroes (Bayesian, Multiplicative):

- Centered Log-Ratio (CLR) Transformation:

- Beta-Diversity Analysis (Aitchison Distance):
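The Aitchison distance named in the last step is the Euclidean distance between CLR-transformed samples. As an illustration, shown here in Python with NumPy rather than R, the following sketch computes the full distance matrix. It assumes zeros have already been imputed; the function name and the toy table are hypothetical.

```python
import numpy as np

def aitchison_distance_matrix(positive_table):
    """Pairwise Aitchison distances for a zero-free samples x taxa table."""
    logx = np.log(np.asarray(positive_table, dtype=float))
    z = logx - logx.mean(axis=1, keepdims=True)       # CLR per sample
    diff = z[:, None, :] - z[None, :, :]              # all pairwise differences
    return np.sqrt((diff ** 2).sum(axis=-1))

table = np.array([[1.0, 2.0, 3.0],
                  [2.0, 4.0, 6.0],     # same composition as sample 1, double depth
                  [3.0, 2.0, 1.0]])
dist = aitchison_distance_matrix(table)
print(np.round(dist, 4))
```

Samples 1 and 2 differ only in sequencing depth and are at distance zero, which is the behaviour a compositional beta-diversity measure should have. The resulting matrix can be passed to a PERMANOVA or PCoA routine.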

Protocol 2: Balance Trees and Differential Abundance with Gneiss in Python

Objective: Identify differentially abundant balances (log-ratios between groups of taxa) in a hypothesis-driven or data-driven manner.

Materials/Reagents:

- BIOM Table & Metadata: table.biom and metadata.txt.

- Python Environment: (Current versions as of search) Python 3.11+, gneiss v0.4, scikit-bio v0.5, pandas, numpy.

Methodology:

- Data Preparation and Imputation:

- Build a Phylogenetic Balance Tree (or use correlation clustering):

- Isometric Log-Ratio (ILR) Transformation:

- Balance Differential Abundance (Linear Regression):

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Compositional Analysis |

|---|---|

| Raw OTU/ASV Count Table | The primary input data, representing sequence counts per feature per sample. Must be filtered and normalized compositionally. |

| Phylogenetic Tree (Newick format) | Defines the evolutionary relationship between microbial taxa. Used in gneiss and phyloseq to inform balance definitions or UniFrac distances. |

| Sample Metadata File | Contains covariates (e.g., treatment group, age, BMI) essential for statistical modeling and interpretation of compositional changes. |

| Zero Imputation Algorithm (e.g., Bayesian-multiplicative) | A "reagent" for handling zeros, which are non-informative and prevent log-ratio transformations. Replaces zeros with sensible, small probabilities. |

| Balance/Basis Matrix (ILR) | Defines the set of orthogonal log-ratio coordinates that span the simplex. Acts as a "reagent" to transform compositions for standard multivariate stats. |

| Aitchison Distance Matrix | The compositionally valid measure of dissimilarity between samples, replacing Bray-Curtis or Jaccard in many analyses. Essential for beta-diversity. |

Visualizing Workflows and Relationships

Title: Compositional Microbiome Analysis Core Workflow

Title: CoDA Problem-Solution Logic Chain

Common Pitfalls and Advanced Solutions in CoDA for Microbiomes

In microbiome research, data are inherently compositional—they convey relative abundance information constrained to a constant sum (e.g., total read count per sample). Within this framework, zeros—taxa with no recorded reads in a sample—pose a critical analytical challenge. Distinguishing between structural zeros (true biological absence) and sampling zeros (undetected due to insufficient sequencing depth or technical dropout) is fundamental for accurate ecological inference and statistical modeling.

Defining the Zero Types

- Structural Zero (Essential Zero): Represents the genuine biological absence of a taxon in a specific sample or environment. Its probability of presence is zero.

- Sampling Zero (Count Zero): Arises from methodological limitations. The taxon is present in the community but is missed due to finite sequencing depth (made worse when reads are discarded by rarefaction), low biomass, or PCR amplification bias.

Quantitative Impact on Analysis

The prevalence and misinterpretation of zeros directly skew core microbiome metrics and differential abundance testing.

Table 1: Impact of Unresolved Zeros on Common Microbiome Metrics

| Analytical Metric | Effect of Sampling Zeros | Effect of Structural Zeros |

|---|---|---|

| Alpha Diversity | Underestimation of richness and diversity; sensitive to rarefaction. | Accurate if correctly identified; removal improves estimates. |

| Beta Diversity | Inflation of dissimilarity between samples (e.g., in Jaccard, Bray-Curtis). | Crucial for defining true presence/absence patterns (e.g., unweighted UniFrac). |

| Differential Abundance (DA) | High false positive rates; zeros can dominate significance in count models. | Must be modeled separately; confounding leads to false negatives. |

| Co-occurrence Networks | Spurious negative correlations; loss of true positive associations. | Essential for defining niche exclusion and true negative links. |

Experimental Protocols for Zero Investigation

Protocol 1: Technical Replication & Dilution Series

Objective: To estimate the rate of sampling zeros due to sequencing depth. Methodology:

- Sample Splitting: Aliquot a homogenized microbial community sample (e.g., stool, soil extract) into n technical replicates (e.g., n=5).

- DNA Extraction & Library Prep: Process each replicate independently through DNA extraction, PCR amplification (using the same primer set), and library preparation.

- Sequencing: Sequence all libraries on the same platform (e.g., Illumina MiSeq) to a high depth (>50,000 reads/sample).

- Data Analysis: For each taxon, calculate its prevalence across technical replicates. A taxon absent in some replicates but present in others at comparable abundance is likely a sampling zero. The probability of detection can be modeled as a function of mean abundance.
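The relationship between abundance and detection mentioned in the final step can be sketched under a deliberately simple assumption: reads are independent draws, so a taxon at relative abundance p yields zero reads at depth N with probability (1 - p)^N. Real data are usually overdispersed relative to this model, so the numbers below are optimistic. The function names are hypothetical.

```python
import math

def prob_sampling_zero(rel_abundance, depth):
    """P(no reads) for a taxon at a given relative abundance and read depth,
    assuming independent draws (simple multinomial sampling)."""
    return (1.0 - rel_abundance) ** depth

def depth_for_detection(rel_abundance, target_prob=0.95):
    """Smallest depth at which P(at least one read) >= target_prob."""
    return math.ceil(math.log(1.0 - target_prob) / math.log(1.0 - rel_abundance))

# A taxon at 0.001% relative abundance, sequenced to 50,000 reads.
p_zero = prob_sampling_zero(1e-5, 50_000)

# Depth needed to detect that taxon in 95% of replicates under this model.
min_depth = depth_for_detection(1e-5, 0.95)
```

Under these assumptions the taxon is missed in roughly 61% of replicates at 50,000 reads, and close to 300,000 reads are needed for 95% detection. Observed replicate prevalence can be compared against this baseline when judging whether a zero is plausibly a sampling zero.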

Protocol 2: Spike-in Controls & Digital PCR

Objective: To differentiate zeros caused by PCR dropout/extraction bias from true absence. Methodology:

- Spike-in Addition: Prior to DNA extraction, add a known, low quantity of an exotic synthetic DNA control (e.g., from Aliivibrio fischeri) to the sample.

- Parallel Quantification: Split the extracted DNA.

- Portion A: Subject to standard 16S rRNA gene amplicon sequencing.

- Portion B: Use digital PCR (dPCR) with primers specific to the spike-in and a ubiquitous bacterial gene (e.g., 16S) for absolute quantification.

- Analysis: If the spike-in is detected via dPCR but missing (zero count) in the amplicon sequencing data, it indicates a technical/sampling zero from amplification bias. Consistent absence in both suggests a protocol failure.

Visualizing the Zero Investigation Workflow

[Figure: Decision Workflow for Classifying Zero Types]

Modeling and Statistical Approaches

Table 2: Statistical Models Addressing the Zero Dilemma

| Model Class | Key Mechanism | Handles Zero Type | Example R Package |

|---|---|---|---|

| Zero-Inflated Models | Splits data into a binary (presence/absence) and a count component. | Explicitly models structural & sampling zeros. | pscl, zinbwave |

| Hurdle Models | Two-part model: 1) Logistic regression for zero vs. non-zero, 2) Truncated count model for positives. | Treats all zeros as structural in first step. | pscl, glmmTMB |

| Compositional Methods | Uses log-ratios (e.g., ALR, CLR) after zero imputation or replacement. | Treats zeros as missing data (sampling). | compositions, zCompositions |

| Bayesian Multinomial | Models counts as draws from a latent, unobserved abundance. | Infers sampling zeros from probability. | DirichletMultinomial |
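For the "Compositional Methods" row, the zero replacement step can be illustrated with simple multiplicative replacement. This is a simpler relative of the Bayesian-multiplicative approach, not the same algorithm, and it is not the implementation used by the R packages listed. The default `delta` of 65% of the per-sample detection limit (one read) is an assumed heuristic, and the function name is hypothetical.

```python
def multiplicative_replacement(counts, delta=None):
    """Replace zeros with a small proportion `delta` and shrink the non-zero
    proportions so that the result still sums to 1."""
    total = sum(counts)
    props = [c / total for c in counts]
    if delta is None:
        # Assumption: 65% of the detection limit, taken here as one read.
        delta = 0.65 / total
    n_zeros = sum(1 for c in counts if c == 0)
    shrink = 1.0 - n_zeros * delta
    return [delta if c == 0 else p * shrink for c, p in zip(counts, props)]

# Hypothetical sample with two zero counts.
counts = [0, 30, 70, 0]
replaced = multiplicative_replacement(counts)
```

The multiplicative adjustment leaves the ratios among the observed taxa unchanged (here 70:30), which is why it is preferred over adding a pseudocount to every entry when log-ratios are computed afterwards.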

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Zero Investigation

| Item | Function & Relevance to Zero Dilemma |

|---|---|

| Mock Microbial Community (e.g., ZymoBIOMICS) | Defined mix of known bacterial genomes. Serves as a positive control to benchmark sampling zero rates across protocols. |

| Exotic DNA Spike-in Controls (e.g., A. fischeri synth. DNA) | Added pre-extraction to quantify and correct for technical losses and amplification biases causing sampling zeros. |

| Digital PCR (dPCR) Master Mix & Assays | Provides absolute quantification of target genes, independent of sequencing, to confirm true absences. |

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Reduces PCR amplification bias and chimera formation, minimizing technical sampling zeros. |

| Uniformly ¹³C-labeled Internal Standard Cells | Added pre-extraction for metaproteomic/metabolomic studies to differentiate biological absence from analytical dropout. |

| Competitive PCR Primers ("PCR mimics") | Spiked into PCR to detect inhibition that could lead to false zeros for low-abundance taxa. |

| Molecular-grade Water & Negative Extraction Kits | Critical for contamination monitoring. Reads in negatives must be filtered to avoid false positives. |

Within the framework of compositional data analysis in microbiome research, the selection of an appropriate reference for log-ratio transformations is a critical methodological decision. The core challenge is that microbiome sequencing data, typically presented as counts, are inherently relative (compositional). Analyses using raw counts or relative abundances can lead to spurious correlations. Isometric Log-Ratio (ILR) and Additive Log-Ratio (ALR) transformations address this by converting D-part compositions into D-1 real-valued coordinates, but both require the definition of a reference. This guide contrasts two predominant strategies: Phylogenetic (Tree-Based) and Variance-Based referencing.
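As a small illustration of why the reference matters, the sketch below computes ALR coordinates for one hypothetical three-taxon sample using two different reference taxa. The function name and values are assumptions for illustration only.

```python
import math

def alr(composition, ref_index):
    """Additive log-ratio: log of each part over the chosen reference part.
    Returns D-1 coordinates (the reference itself is dropped)."""
    ref = composition[ref_index]
    return [math.log(v / ref) for i, v in enumerate(composition) if i != ref_index]

sample = [0.1, 0.2, 0.7]             # hypothetical relative abundances
alr_last = alr(sample, ref_index=2)  # reference = third taxon
alr_first = alr(sample, ref_index=0) # reference = first taxon
```

The same sample yields different coordinate values depending on the reference, so any coefficient estimated on ALR coordinates has to be interpreted relative to the chosen reference taxon.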

Core Concepts and Quantitative Comparison

The choice of reference directly impacts the interpretability and statistical power of downstream analyses. The table below summarizes the key characteristics of each approach.

Table 1: Comparison of Phylogenetic and Variance-Based Reference Strategies for ILR/ALR

| Feature | Phylogenetic (Tree-Based) Reference | Variance-Based (PCA / Balance) Reference |

|---|---|---|

| Theoretical Basis | Uses evolutionary relationships to define balances between monophyletic groups. | Uses data-driven methods to identify partitions that maximize variance or signal. |

| Primary Method | Phylogenetic ILR (PhILR) / Sequential Binary Partitioning guided by taxonomy. | PCA of CLR-transformed data or iterative variance maximization for balance selection. |

| Interpretability | High. Balances have direct biological meaning (e.g., Firmicutes vs. Bacteroidetes). | Data-driven. Interpretability depends on the resulting partition, which may not align with taxonomy. |

| Stability | Stable across studies using the same tree/taxonomy. Consistent and reproducible. | Study-specific. Sensitive to cohort composition, technical noise, and dominant signals. |

| Primary Goal | To test biologically predefined hypotheses about evolutionary groups. | To discover the dominant sources of variation in a dataset without a priori hypotheses. |

| Ideal Use Case | Hypothesis-driven research on specific phylogenetic clades. | Exploratory analysis to identify the primary drivers of microbiome variation. |

| Software/Tools | phyloseq, philr R packages. | compositions, robCompositions, selbal R packages. |

Detailed Methodological Protocols

Protocol 1: Implementing a Phylogenetic (PhILR) Reference

This protocol creates ILR coordinates where balances represent evolutionary splits in a phylogenetic tree.

- Input Preparation: Start with an OTU/ASV abundance table (counts) and a corresponding phylogenetic tree (e.g., from QIIME2, Greengenes, SILVA).

- Tree Processing: Ensure the tree is rooted and bifurcating. If taxa are missing from the tree, impute them at the parent node or use a generalized reference.

- Sequential Binary Partition (SBP): Automatically generate an SBP matrix from the phylogenetic tree. Each partition corresponds to a binary split in the tree, defining two groups of taxa.

- Balance Calculation: For each partition (balance), perform an ILR transformation.

- Let group A have p taxa and group B have q taxa.

- The balance value for sample i is calculated as:

balance_i = sqrt((p*q)/(p+q)) * ln( (geometric mean of abundances in group A) / (geometric mean of abundances in group B) )

- Downstream Analysis: Use the resulting balances (D-1 coordinates) in standard multivariate statistical tests (e.g., PERMANOVA, linear models).
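The balance formula in the protocol above can be sketched for a single split as follows. The group index lists stand in for the two sides of one tree node and are hypothetical. The sketch uses the unweighted form of the balance given in the text and assumes zero-free abundances.

```python
import math

def geometric_mean(values):
    """Geometric mean of strictly positive values."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

def balance(sample, group_a, group_b):
    """ILR balance between two disjoint groups of taxa (lists of indices)."""
    p, q = len(group_a), len(group_b)
    gm_a = geometric_mean([sample[i] for i in group_a])
    gm_b = geometric_mean([sample[i] for i in group_b])
    return math.sqrt(p * q / (p + q)) * math.log(gm_a / gm_b)

# Hypothetical abundances for four taxa; taxa 0-1 form one clade, 2-3 the other.
sample = [8.0, 2.0, 1.0, 1.0]
b = balance(sample, [0, 1], [2, 3])

# Rescaling the sample (a different library size) leaves the balance unchanged.
b_scaled = balance([v * 10 for v in sample], [0, 1], [2, 3])
```

A positive value means the first clade is more abundant than the second in the geometric-mean sense. Swapping the two groups flips the sign.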

Protocol 2: Implementing a Variance-Based Reference (Principal Balance Approach)

This protocol identifies balances that sequentially explain the maximum variance in the data.

- Initial Transformation: Perform a Centered Log-Ratio (CLR) transformation on the entire composition to move to Euclidean space.

CLR(x_i) = [ln(x_i1 / g(x_i)), ..., ln(x_iD / g(x_i))]

where g(x_i) is the geometric mean of all taxa in sample i.

- Variance Decomposition: Calculate the variance of the CLR-transformed components or use a greedy algorithm to find the binary partition of the set of parts that maximizes the explained variance of the corresponding balance.

- Iterative Partitioning: a. From the set of all taxa, find the bipartition (split into two groups) that defines a balance with the largest possible variance. b. Fix this first balance. Then, recursively repeat the process within each of the two resulting subgroups to find the next most informative balances.

- Coordinate Construction: The sequence of balances generated forms a new orthonormal basis (ILR coordinates) ordered by explained variance.

- Downstream Analysis: Use the first k balances (akin to principal components) as explanatory variables in regression or ordination.
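The first step of the iterative partitioning can be sketched for a toy dataset. Note the substitution: instead of the greedy algorithm mentioned above, this sketch searches every bipartition of the taxa exhaustively, which is only feasible for a handful of taxa because the number of bipartitions grows exponentially. The function names and the four-sample dataset are hypothetical.

```python
import math
from itertools import combinations

def balance(sample, group_a, group_b):
    """ILR balance between two disjoint groups of taxa (tuples of indices)."""
    p, q = len(group_a), len(group_b)
    mean_log_a = sum(math.log(sample[i]) for i in group_a) / p
    mean_log_b = sum(math.log(sample[i]) for i in group_b) / q
    return math.sqrt(p * q / (p + q)) * (mean_log_a - mean_log_b)

def variance(values):
    """Sample variance (n - 1 denominator)."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)

def first_principal_balance(samples):
    """Exhaustively search bipartitions of all taxa for the max-variance balance.
    Returns (variance, group_a, group_b)."""
    n_taxa = len(samples[0])
    taxa = range(n_taxa)
    best = None
    for size in range(1, n_taxa // 2 + 1):
        for group_a in combinations(taxa, size):
            group_b = tuple(i for i in taxa if i not in group_a)
            var = variance([balance(s, group_a, group_b) for s in samples])
            if best is None or var > best[0]:
                best = (var, group_a, group_b)
    return best

# Hypothetical zero-free counts: taxa 0-1 rise and fall together, opposite to 2-3.
samples = [
    [40, 40, 10, 10],
    [10, 10, 40, 40],
    [30, 30, 20, 20],
    [20, 20, 30, 30],
]
best_var, group_a, group_b = first_principal_balance(samples)
```

On this toy data the search recovers the split of taxa {0, 1} against {2, 3}. The full procedure would then repeat the search within each group to build the remaining balances.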

Visualizing the Reference Selection Workflow

[Figure: Workflow for Choosing ILR/ALR Reference]

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents and Computational Tools for Reference-Based Compositional Analysis

| Item / Tool | Category | Primary Function |

|---|---|---|

| SILVA / Greengenes | Reference Database | Provides curated 16S rRNA gene sequences and pre-computed phylogenetic trees for phylogenetic placement and reference. |

| QIIME2 (q2-phylogeny) | Bioinformatics Pipeline | Generates phylogenetic trees from sequence data (via alignment and FastTree) for phylogenetic reference construction. |

| philr R Package (PhILR) | Software Package | Implements the phylogenetic ILR workflow, including tree-aware balance generation and transformation. |

| compositions R Package | Software Package | Core suite for CoDA, including CLR, ALR, and ILR transformations, and variance-based coordinate methods. |

| propr / selbal | Software Package | propr quantifies proportionality between taxa as a compositionally valid alternative to correlation; selbal selects the balance most strongly associated with an outcome of interest. |

| ZymoBIOMICS Standards | Wet-lab Control | Defined microbial community standards used to validate experimental protocols and benchmark batch effects, crucial for variance assessment. |

| DNeasy PowerSoil Pro Kit | Wet-lab Reagent | High-yield, consistent DNA extraction kit to minimize technical variance that could confound biological signal in variance-based methods. |

| Mock Community DNA | Wet-lab Control | Synthetic DNA mixture of known abundances to calibrate sequencing bias, essential for accurate log-ratio calculations. |

Dealing with High-Dimensionality and Sparse Data

In microbiome research, data are inherently compositional. Each sample provides a vector of counts (e.g., Operational Taxonomic Units or OTUs, Amplicon Sequence Variants or ASVs) summing to a total determined by sequencing depth, not by the absolute abundance of microbes in the environment. This compositional nature, coupled with high dimensionality (thousands to millions of features) and extreme sparsity (many zero counts), presents unique analytical challenges. This guide examines core strategies for dealing with these challenges within the framework of compositional data analysis (CoDA).

The Core Challenge: Compositionality, Sparsity, and Dimensionality

Once closed to proportions, microbiome count data reside in a high-dimensional simplex. The sparsity arises from both biological absence and technical undersampling (low sequencing depth relative to microbial diversity). High dimensionality increases the risk of false discoveries and overfitting in statistical models.

Table 1: Characteristics of High-Dimensional, Sparse Microbiome Datasets