DADA2 ASV Pipeline: A Comprehensive Guide for Accurate Microbiome Analysis in Biomedical Research

Abstract

This article provides a detailed, practical guide to the DADA2 pipeline for generating high-resolution Amplicon Sequence Variants (ASVs) from 16S rRNA gene sequencing data. Tailored for researchers and drug development professionals, it covers foundational concepts, step-by-step methodological implementation, common troubleshooting strategies, and comparative validation against traditional OTU methods. The guide emphasizes optimization for clinical and biomedical samples, empowering users to achieve reproducible, exact sequence-based microbial profiling crucial for studies linking microbiota to health and disease.

From Reads to Variants: Understanding ASVs and the Core DADA2 Algorithm

What are ASVs? The Paradigm Shift from OTU Clustering to Exact Sequence Variants

Amplicon Sequence Variants (ASVs), sometimes called exact sequence variants, represent a fundamental shift in the analysis of microbial amplicon sequencing data. Unlike Operational Taxonomic Units (OTUs), which cluster sequences at an arbitrary similarity threshold (typically 97%), ASVs are inferred biological sequences resolved down to single-nucleotide differences. This shift, central to modern pipelines such as DADA2, provides higher resolution and reproducibility, eliminates clustering artifacts, and enables precise tracking of microbial strains across studies and over time.
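To make the resolution difference concrete, the base-R sketch below compares two hypothetical 250-bp amplicons that differ at a single position. It assumes equal-length, pre-aligned sequences (real OTU pipelines align and cluster many reads at once), and `percent_identity` is an illustrative helper, not part of any package.

```r
# Identity between two equal-length, pre-aligned sequences (illustration only)
percent_identity <- function(a, b) {
  stopifnot(nchar(a) == nchar(b))
  x <- strsplit(a, "")[[1]]
  y <- strsplit(b, "")[[1]]
  100 * mean(x == y)
}

# Hypothetical 250-bp amplicon and a variant differing at position 120
set.seed(1)
asv1 <- paste(sample(c("A", "C", "G", "T"), 250, replace = TRUE), collapse = "")
asv2 <- asv1
substr(asv2, 120, 120) <- if (substr(asv1, 120, 120) == "A") "G" else "A"

id <- percent_identity(asv1, asv2)   # 99.6
same_otu <- id >= 97                 # a 97% threshold would merge the two
distinct_asvs <- asv1 != asv2        # exact-sequence comparison keeps them apart
```

A single substitution in 250 bp leaves the pair at 99.6% identity, well above the 97% cutoff, so only the exact-sequence view separates them.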

Comparative Analysis: OTUs vs. ASVs

Table 1: Core Differences Between OTU Clustering and ASV Inference

| Feature | OTU Clustering (97%) | ASV Inference (e.g., DADA2) |
|---|---|---|
| Definition | Clusters of sequences defined by % similarity | Biological sequences inferred from reads |
| Resolution | Approximate; groups similar sequences | Exact; distinguishes single-nucleotide changes |
| Reproducibility | Low; varies with algorithm, dataset, and parameters | High; the same sequence always yields the same ASV |
| Basis | Computational convenience | Biological reality |
| Error Handling | Requires separate pre-filtering steps | Models and removes sequencing errors internally |
| Inter-study Comparison | Difficult due to cluster variability | Straightforward via sequence identity |

Table 2: Quantitative Impact on Diversity Metrics

| Metric | Typical Change with ASVs vs. OTUs | Implication |
|---|---|---|
| Richness (α-diversity) | Increases by 10-50% | Reveals previously hidden diversity |
| β-diversity | Effect size (e.g., PERMANOVA R²) often increases | Improved statistical power to detect differences |
| Differential Abundance | Higher specificity, fewer false positives | More accurate biomarker identification |

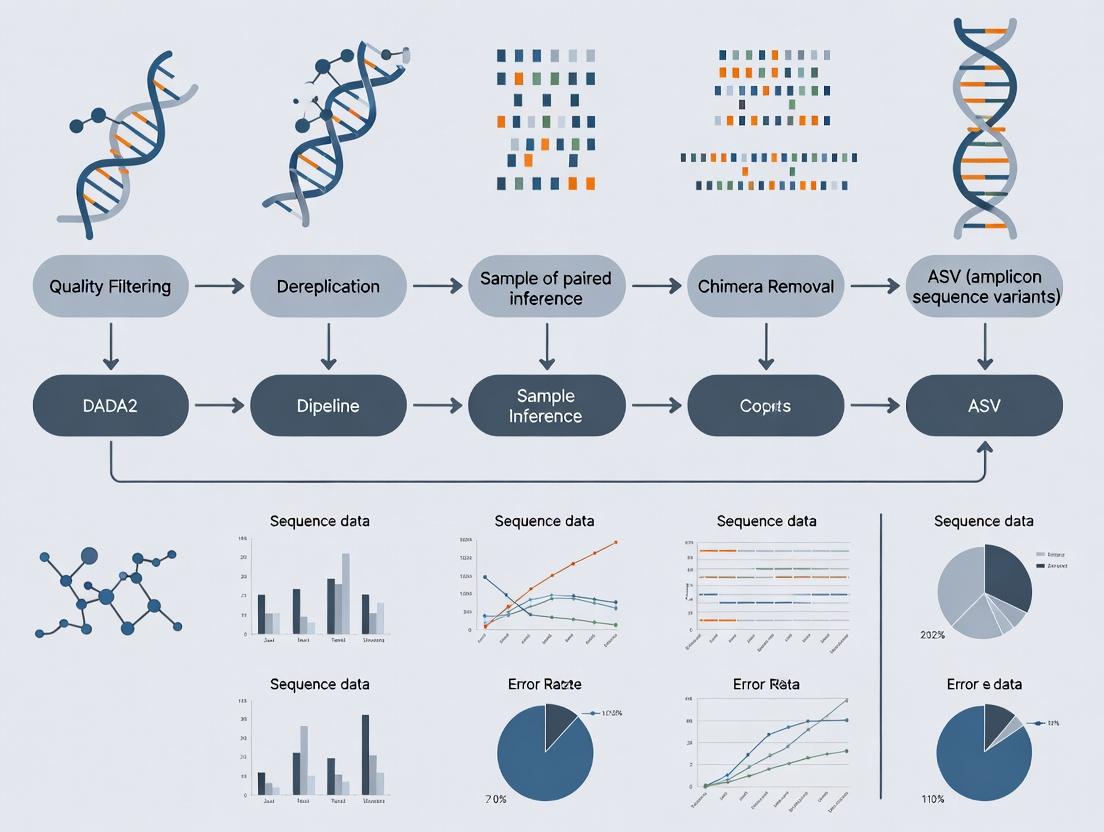

The DADA2 Pipeline: A Core Protocol for ASV Inference

This protocol outlines the standard DADA2 workflow for paired-end 16S rRNA gene sequences (e.g., Illumina MiSeq) within the broader thesis on robust ASV research.

Protocol 1: Core DADA2 Workflow for ASV Inference

Research Reagent Solutions & Essential Materials:

| Item | Function |
|---|---|
| FastQ files | Raw paired-end sequencing reads (R1 & R2). |
| DADA2 R package (v1.28+) | Primary software for error modeling, dereplication, sample inference, and chimera removal. |
| Reference Database (e.g., SILVA v138, GTDB) | For taxonomic assignment of output ASVs. |
| High-Performance Computing (HPC) Environment | Recommended for large datasets due to the computational intensity of error modeling. |
| RStudio/IDE or R Script Editor | For executing the analysis pipeline. |

Detailed Methodology:

- Filter and Trim: Quality-filter reads based on expected errors (`maxEE`) and truncate them where quality drops. Remove primers if not already done.

- Learn Error Rates: Model the sequencing error profile from the data.

- Dereplication: Combine identical reads to reduce computational load.

- Sample Inference (Core ASV Call): Apply the DADA2 algorithm to infer true biological sequences.

- Merge Paired Reads: Align and merge forward and reverse reads to create full-length sequences.

- Construct Sequence Table: Build an ASV (feature) table (rows = samples, columns = ASVs).

- Remove Chimeras: Identify and remove PCR chimeras.

- Taxonomic Assignment: Assign taxonomy to each ASV using a reference database.
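The Construct Sequence Table step produces a samples-by-ASVs count matrix. The base-R sketch below builds one from per-sample named integer vectors (names are sequences, values are abundances); it illustrates the data structure only and is not DADA2's `makeSequenceTable()`. The function `build_seqtab` and the toy sequences are hypothetical.

```r
# Build a samples x ASVs count matrix from a named list of per-sample
# abundance vectors (illustration of the data structure only)
build_seqtab <- function(samples) {
  all_seqs <- unique(unlist(lapply(samples, names), use.names = FALSE))
  tab <- matrix(0L, nrow = length(samples), ncol = length(all_seqs),
                dimnames = list(names(samples), all_seqs))
  for (s in names(samples)) {
    # fill in observed abundances; ASVs absent from a sample stay at 0
    tab[s, names(samples[[s]])] <- as.integer(samples[[s]])
  }
  tab
}

samples <- list(
  S1 = c(ACGT = 120L, ACGA = 30L),   # hypothetical sample 1
  S2 = c(ACGT = 80L, TTGA = 5L)      # hypothetical sample 2
)
seqtab <- build_seqtab(samples)
seqtab
```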

Protocol 2: Post-Processing and Downstream Analysis

Methodology:

- Standardization: Rarefy the ASV table if using metrics sensitive to sampling depth (e.g., phylogeny-based metrics).

- Phylogenetic Tree Construction: Use `DECIPHER` to align ASV sequences, build a tree with a phylogenetics package (e.g., `phangorn`), and import it into `phyloseq` for phylogenetic diversity metrics.

- Statistical Analysis: Integrate with the `phyloseq` package for diversity analysis (alpha/beta), differential abundance testing (e.g., `DESeq2`, ANCOM-BC), and visualization.
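Rarefaction amounts to randomly subsampling each sample's reads to a common depth. The minimal base-R sketch below does this without replacement for one sample; `rarefy_counts` and the counts are hypothetical. Note that `phyloseq::rarefy_even_depth()` samples with replacement by default (`replace = TRUE`).

```r
# Rarefy one sample's ASV counts to `depth` reads, sampling without replacement
rarefy_counts <- function(counts, depth, seed = 1) {
  stopifnot(depth <= sum(counts))
  set.seed(seed)                                      # note: resets the global RNG
  reads <- rep(names(counts), times = counts)         # one element per read
  picked <- sample(reads, size = depth, replace = FALSE)
  tab <- table(factor(picked, levels = names(counts)))  # keep ASVs drawn zero times
  setNames(as.integer(tab), names(counts))
}

counts <- c(ASV1 = 500L, ASV2 = 300L, ASV3 = 200L)   # hypothetical sample
rarefied <- rarefy_counts(counts, depth = 100)
rarefied
```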

Visualizing the Paradigm Shift

ASV vs OTU Methodological Shift

Core Paradigms of OTU vs ASV

Within the broader thesis on the DADA2 pipeline for amplicon sequence variant (ASV) research, this document details the core principles of error modeling and denoising. DADA2 (Divisive Amplicon Denoising Algorithm 2) provides a statistically rigorous alternative to OTU clustering, inferring exact amplicon sequence variants by modeling and correcting Illumina-sequenced amplicon errors.

Core Principles: Error Modeling & Denoising

Probabilistic Error Model

DADA2 builds a parameterized model of substitution errors as a function of the quality score of each base. This model is learned from the data itself.

Key Quantitative Parameters:

- Transition Probability Matrix: Models the probability of each base being read as another specific base (A→C, A→G, A→T, etc.) at each quality score.

- Transition Rate (λ): The probability λ_ji that a read originating from true sequence j is observed as sequence i, computed from the per-position substitution probabilities. Per-base error probabilities fall as the quality score rises.

- Abundance p-value Threshold (Ω_A, `OMEGA_A`): The significance cutoff that separates sequencing errors from rare biological variants.
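How a per-base quality score feeds into λ can be sketched in base R. This minimal sketch makes two assumptions that DADA2 itself does not: the per-base error probability is the nominal Phred value 10^(-Q/10), and the three wrong bases are equally likely. DADA2 instead learns each transition rate from the data. `phred_to_prob` and `lambda_ji` are hypothetical helpers; `true_seq` plays the role of j and `read_seq` the role of i.

```r
# Nominal Phred conversion: Q -> probability that the base call is wrong
phred_to_prob <- function(q) 10^(-q / 10)

# Toy lambda_ji: probability that a read of true sequence j (true_seq) is
# observed as sequence i (read_seq), given per-base quality scores.
# Assumes equal-length sequences and equally likely wrong bases (p/3 each).
lambda_ji <- function(true_seq, read_seq, quals) {
  j <- strsplit(true_seq, "")[[1]]
  i <- strsplit(read_seq, "")[[1]]
  stopifnot(length(i) == length(j), length(quals) == length(i))
  p_err <- phred_to_prob(quals)
  per_pos <- ifelse(i == j, 1 - p_err, p_err / 3)  # match vs. specific substitution
  prod(per_pos)
}

phred_to_prob(c(20, 30, 40))                     # 0.01, 0.001, 1e-04
lambda_ji("ACGT", "ACGA", quals = rep(30, 4))    # one substitution at Q30
```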

Table 1: Example Learned Error Model Parameters (16S rRNA, Illumina MiSeq)

| Error Type (Substitution) | Mean Probability (Per Base) | Range (Across Datasets) |
|---|---|---|
| A→C (transversion) | 2.1 × 10⁻⁵ | 1.0-4.5 × 10⁻⁵ |
| A→G (transition) | 1.8 × 10⁻⁴ | 0.9-3.0 × 10⁻⁴ |
| C→T (transition) | 2.5 × 10⁻⁴ | 1.5-4.5 × 10⁻⁴ |
| Quality-score dependence | ~10^-5.5 at Q35; ~10^-2.5 at Q25 | - |

Divisive Partitioning Algorithm

The denoising process iteratively splits reads into partitions, each corresponding to a true sequence variant.

Workflow Logic:

Diagram Title: DADA2 Divisive Partitioning Denoising Flow

Statistical Inference of True Sequences

The algorithm compares two hypotheses for each potential variant (a "child" read) within a partition:

- H₀ (Null): The child is an erroneous derivation of the central ("parent") sequence.

- H₁ (Alternative): The child is a true biological sequence.

An abundance p-value is calculated from the error model and Bonferroni-corrected for multiple comparisons. If the corrected p-value falls below the threshold Ω_A (the `OMEGA_A` option, default 1e-40), H₀ is rejected and a new ASV partition is created.

Detailed Experimental Protocols

Protocol 1: Building the Error Model from Sequencing Data

Purpose: To empirically determine substitution error rates specific to the dataset.

- Sequence Alignment: Take a subset of high-abundance unique sequences.

- Quality-aware Comparison: For each sequence, align the reads assigned to it. Tabulate disagreements between each read and the consensus, recording the quality score of each disputed base.

- Parameter Estimation: Use an iterative, Expectation-Maximization (EM)-like procedure to estimate:

  - The probability of each substitution type (A→C, A→G, etc.) at each quality score.

  - The relationship between the reported quality score and the actual error rate.

- Model Output: A quality-aware substitution error matrix for use in the denoising inference.
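The tabulation and estimation steps can be illustrated with simulated data: count disagreements per quality score, convert them to observed error rates, and smooth the rates across quality scores. The simulated calls, the +1 pseudocount, and the use of `stats::loess` on log10 rates are assumptions for illustration; DADA2's default `loessErrfun` is related in spirit, but this is not its implementation.

```r
# Simulated read-vs-consensus base calls (hypothetical data): each call has a
# quality score q and is a mismatch with the nominal Phred probability.
set.seed(42)
q_levels <- seq(10, 40, by = 2)
calls <- data.frame(q = rep(q_levels, each = 5000))
calls$mismatch <- runif(nrow(calls)) < 10^(-calls$q / 10)

# Tabulate disagreements and total observations per quality score
errs <- tapply(calls$mismatch, calls$q, sum)
tot  <- tapply(calls$mismatch, calls$q, length)
obs  <- data.frame(q = as.numeric(names(errs)),
                   errs = as.vector(errs),
                   tot = as.vector(tot))
obs$rate <- (obs$errs + 1) / obs$tot   # +1 pseudocount avoids log10(0)

# Smooth log10(rate) across quality scores, weighting by observation counts
fit <- loess(log10(rate) ~ q, data = obs, weights = tot)
obs$fitted_rate <- 10^predict(fit, newdata = obs)
print(obs)
```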

Protocol 2: The Denoising Inference Process

Purpose: To apply the error model and partition reads into ASVs.

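- Input Processing: Provide trimmed, filtered, and dereplicated reads (`derepFastq`).

- Initialization: Identify the most abundant unique sequence in the sample and make it the center of the first partition, which initially contains all reads.

- Iterative Partitioning:

  a. For each partition, compare every unique sequence with the partition center (DADA2 uses a k-mer screen followed by banded alignment) and compute λ_ji, the probability that a read of center j is observed as sequence i.

  b. For each potential "child" sequence i with abundance a_i in partition j, calculate the abundance p-value, i.e., the probability of seeing at least a_i reads of i produced by errors from j, given that at least one is seen:

  $$p_A(j \to i) = \frac{1}{1 - \rho_{\mathrm{pois}}(n_j \lambda_{ji}, 0)} \sum_{a = a_i}^{\infty} \rho_{\mathrm{pois}}(n_j \lambda_{ji}, a)$$

  where n_j is the total read abundance of the parent partition j and ρ_pois(μ, a) is the Poisson probability of observing a reads when the mean is μ.

  c. Apply a Bonferroni correction for the number of potential children tested.

  d. If the smallest corrected p-value is below Ω_A (`OMEGA_A`, default 1e-40), create a new partition with that child as its center, then reassign every sequence to the partition most likely to have produced it.

- Convergence: Repeat Step 3 until no new partitions are created.

- Output: A list of center sequences for all partitions (the final ASVs) and their read abundances.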
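A toy version of the partitioning loop in base R shows how the abundance p-value drives splitting. It is a sketch, not DADA2's implementation: it assumes equal-length sequences (Hamming distance instead of alignment), a single per-base error rate `e` with equally likely substitutions, Bonferroni correction by the number of unique sequences, and reassignment to the center with the largest expected read count. All function names and input sequences are hypothetical.

```r
hamming <- function(a, b) sum(strsplit(a, "")[[1]] != strsplit(b, "")[[1]])

# Toy lambda: probability that a read of `center` is observed as `s`
lambda_of <- function(center, s, e) {
  d <- hamming(center, s)
  len <- nchar(s)
  (1 - e)^(len - d) * (e / 3)^d
}

# Abundance p-value: P(X >= a | X >= 1) with X ~ Poisson(n * lambda)
abundance_p <- function(a, n, lambda) {
  mu <- n * lambda
  ppois(a - 1, mu, lower.tail = FALSE) / ppois(0, mu, lower.tail = FALSE)
}

divisive_partition <- function(uniques, e = 0.005, omega_a = 1e-40) {
  seqs <- names(uniques)
  centers <- seqs[which.max(uniques)]            # first center: most abundant sequence
  repeat {
    # Assign each sequence to the center with the largest expected read count
    assign_to <- vapply(seqs, function(s) {
      score <- vapply(centers, function(cn) uniques[[cn]] * lambda_of(cn, s, e),
                      numeric(1))
      centers[which.max(score)]
    }, character(1))
    # Abundance p-value of each non-center sequence against its partition
    pvals <- vapply(seqs, function(s) {
      if (s %in% centers) return(1)
      cn <- assign_to[[s]]
      n_part <- sum(uniques[assign_to == cn])    # total reads in the partition
      abundance_p(uniques[[s]], n_part, lambda_of(cn, s, e))
    }, numeric(1))
    p_adj <- pmin(1, pvals * length(seqs))       # Bonferroni correction
    if (min(p_adj) >= omega_a) break             # all remaining reads explained by errors
    centers <- c(centers, seqs[which.min(p_adj)])  # split off a new partition
  }
  list(centers = centers, assignment = assign_to)
}

uniques <- c(ACGTACGTACGTACGTACGT = 1000L,   # abundant sequence
             ACGTTCGTACGTACGTACGT = 2L,      # 1 mismatch, low abundance
             ACGTACGTAGGTACGTACGT = 300L)    # 1 mismatch, high abundance
res <- divisive_partition(uniques)
res$centers
```

With these inputs, the 300-read variant is split off because its abundance is far too high to be explained by errors from the 1000-read center, while the 2-read variant stays in the center's partition.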

Table 2: Critical Parameters for the Denoising Inference

| Parameter | Default Value | Function in Algorithm |
|---|---|---|
| OMEGA_A (Ω_A) | 1e-40 | Abundance p-value threshold; a sequence whose p-value falls below it becomes the center of a new partition (ASV). |
| BAND_SIZE | 16 | Restricts costly alignments to a band, speeding computation. |
| MIN_FOLD | 1 | Minimum fold over-abundance (observed vs. expected reads) for a child to be considered as a new partition. |
| MAX_CLUST | 0 | Maximum number of partitions (0 for no limit). |
| USE_QUALS | TRUE | Incorporates quality scores into the error model. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DADA2 Pipeline Implementation

| Item | Function/Explanation |
|---|---|
| Illumina MiSeq/HiSeq Platform | Generates paired-end amplicon sequences with quality scores. Required for error profiling. |
| Specific Primer Sets (e.g., 16S V4, ITS2) | Target hypervariable regions for taxonomic profiling. Define amplicon length and error context. |
| High-Fidelity DNA Polymerase (e.g., Phusion) | Minimizes amplification errors during PCR, reducing artifactual sequence variation. |
| Standardized Mock Microbial Community (e.g., ZymoBIOMICS) | Contains known, fixed sequences. Critical for validating ASV inference accuracy and error rates. |
| DADA2 R Package (v1.28+) | Core software implementing the error model and divisive partitioning algorithm. |
| FastQC or MultiQC | For initial quality assessment of raw sequencing reads, informing trimming parameters. |
| SILVA/UNITE Reference Database | For taxonomic assignment of output ASVs, linking variants to biological meaning. |
| Quantitative PCR (qPCR) Instrument | For quantifying total bacterial load, enabling conversion of ASV read counts to absolute abundances. |
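The qPCR-based conversion in the last row amounts to scaling each ASV's relative abundance by the sample's total 16S copy number. A minimal base-R sketch, assuming one qPCR total per sample and ignoring 16S copy-number differences between taxa (which would need a separate correction); `to_absolute` and the numbers are hypothetical.

```r
# Convert one sample's ASV read counts to estimated absolute 16S copies
to_absolute <- function(asv_counts, total_16s_copies) {
  stopifnot(all(asv_counts >= 0), sum(asv_counts) > 0)
  (asv_counts / sum(asv_counts)) * total_16s_copies   # relative abundance x total load
}

counts <- c(ASV1 = 50, ASV2 = 150, ASV3 = 800)   # hypothetical read counts
abs_copies <- to_absolute(counts, total_16s_copies = 1e6)
abs_copies
```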

Visualization of the DADA2 Pipeline Workflow

Diagram Title: Full DADA2 Amplicon Analysis Pipeline

Within the broader thesis investigating the optimization and application of the DADA2 pipeline for high-resolution Amplicon Sequence Variant (ASV) analysis in clinical microbiome studies, the initial data inputs are critical. This protocol details the generation and quality assessment of the essential starting materials: primer-trimmed paired-end FASTQ files and their associated quality profiles, which directly influence downstream error models and ASV inference.

Quality Profile Generation and Assessment

The initial quality profile of the primer-trimmed reads is a non-negotiable diagnostic step that dictates parameter choices in later DADA2 steps (e.g., truncLen, maxEE).

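Protocol 1.1: Generating Quality Profiles with DADA2 in R

Load the package with `library(dada2)` and run `plotQualityProfile()` on a few primer-trimmed forward and reverse FASTQ files (for example, the first two samples of each); each panel summarizes per-position quality across the reads of one file.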

Table 1: Interpretation of Quality Profile Metrics and Downstream Impact

| Metric on Plot | Ideal Characteristic | Poor Quality Indicator | Downstream DADA2 Parameter Adjustment |
|---|---|---|---|
| Mean quality score (green line) | Remains >30 across all cycles. | Drops below 20-25. | Guides `truncLen`: cut before the steep decline. |
| Quality score distribution (grey-scale heatmap) | Frequency concentrated at high scores across all positions. | Frequency shifts toward low scores in later cycles. | Influences `maxEE`; poorer reads require a higher error allowance or earlier truncation. |
| Proportion of reads reaching each position (red line) | Flat (all reads extend to the final position). | Falls at later positions (variable read lengths, e.g., after primer trimming). | Shows how many reads a given `truncLen` would discard, since reads shorter than `truncLen` are removed. |
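The green line and a `truncLen` choice can be reproduced for a handful of reads in base R. This sketch assumes equal-length, Phred+33 quality strings; the Q30 threshold is a choice rather than a DADA2 default, and `mean_quality_by_cycle` and `suggest_trunc_len` are hypothetical helpers.

```r
# Per-cycle mean Phred score across reads (Phred+33, equal-length strings)
mean_quality_by_cycle <- function(qual_strings) {
  q <- do.call(rbind, lapply(qual_strings, function(s) utf8ToInt(s) - 33L))
  colMeans(q)
}

# Last cycle before the mean first drops below the threshold
suggest_trunc_len <- function(mean_q, threshold = 30) {
  below <- which(mean_q < threshold)
  if (length(below) == 0) length(mean_q) else below[1] - 1
}

quals <- c(paste0(strrep("I", 8), strrep("+", 2)),   # 'I' = Q40, '+' = Q10
           paste0(strrep("I", 7), strrep("+", 3)))
mq <- mean_quality_by_cycle(quals)   # cycle 8 averages (40 + 10) / 2 = 25
suggest_trunc_len(mq)                # 7
```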

Protocol for Primer Trimming: A Critical Preprocessing Step

Primer sequences must be removed before DADA2 processing, as they are conserved and do not inform biological variation, and their presence can interfere with the error model.

Protocol 2.1: Primer Trimming using cutadapt (External Tool)

- Objective: Remove primer sequences from paired-end reads.

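- Reagent Solution: `cutadapt` (v4.5+), a tool for finding and removing adapter and primer sequences.

- Procedure:

  - Installation: `pip install --upgrade cutadapt`

  - Command (batch run in shell): for each sample, run a paired-end command such as `cutadapt -g FWD_PRIMER -G REV_PRIMER --discard-untrimmed -o trimmed_R1.fastq.gz -p trimmed_R2.fastq.gz raw_R1.fastq.gz raw_R2.fastq.gz`, looping over samples in a shell script. `FWD_PRIMER`, `REV_PRIMER`, and the file names are placeholders; `-g`/`-G` remove the 5' primers from R1/R2, and `--discard-untrimmed` drops read pairs in which a primer was not found.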

Diagram 1: Primer-Trimmed FASTQ Processing Workflow

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Materials and Tools for Protocol

| Item | Function/Description | Key Provider/Example |
|---|---|---|
| Paired-End Sequencing Kit | Generates the raw FASTQ files from amplicon libraries. | Illumina MiSeq Reagent Kit v3 (600-cycle). |
| Primer Sequences | Target-specific oligonucleotides for PCR amplification of the target region (e.g., 16S, ITS). | 515F/806R for the 16S rRNA V4 region. |
| cutadapt Software | Removes primer/adapter sequences from sequencing reads; essential preprocessing for DADA2. | Open-source tool (Martin, 2011). |
| DADA2 R Package | Core software for ASV inference, including quality profiling, denoising, and merging. | Open-source R package (Callahan et al., 2016). |
| High-Performance Computing (HPC) Environment | Provides the computational resources for processing large FASTQ files through cutadapt and DADA2. | Local Linux cluster or cloud computing (AWS, GCP). |
| R and RStudio | Programming environment for running DADA2 quality control and analysis scripts. | R Foundation, Posit. |

The generation of high-fidelity primer-trimmed FASTQ files and their rigorous quality profiling, as outlined, forms the foundational data integrity checkpoint of the DADA2 pipeline. For this thesis, establishing a standardized, reproducible protocol at this stage is paramount, as variations in primer trimming efficiency and read quality directly affect the error model's accuracy and the subsequent resolution of true biological ASVs versus sequencing artifacts. All downstream conclusions regarding microbial community dynamics in drug response studies hinge upon the precision of these initial inputs.

Within the broader thesis on the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, this application note delineates the pivotal advantages of the ASV approach over traditional Operational Taxonomic Unit (OTU) clustering in biomedical research. The DADA2 algorithm, which models and corrects Illumina-sequenced amplicon errors to infer exact biological sequences, is foundational to realizing these advantages.

Core Advantages: Comparative Data

Table 1: Quantitative Comparison of ASV vs. OTU Methodologies

| Parameter | ASV (DADA2-based) | Traditional OTU (97% Clustering) | Implication for Biomedical Research |
|---|---|---|---|
| Reproducibility | Exact sequences are directly comparable between studies (high reusability). | Cluster composition is dataset-dependent (low reusability). | Enables meta-analysis and longitudinal study integration; critical for biomarker discovery. |
| Resolution | Single-nucleotide differences are resolved. | Variants within 97% similarity are collapsed. | Essential for distinguishing strain-level variations of pathogens or oncobiome members. |
| Biological Relevance | Units are biologically meaningful sequence variants. | Units are arbitrary clusters of heterogeneous sequences. | Direct link to reference databases improves functional and phenotypic inference. |
| Error Rate | <0.1% (DADA2 model-based error correction). | ~1-3% (relies on read abundance filtering). | Higher confidence in rare variant detection (e.g., drug-resistance mutations). |
| Computational Demand | Moderate (sample-by-sample inference). | Low (global clustering). | Justified by the gains in precision and data longevity. |

Application Notes & Protocols

Protocol: DADA2 Workflow for Reproducible ASV Inference from 16S rRNA Data

Objective: To generate a reproducible, high-resolution ASV table from paired-end Illumina 16S rRNA gene sequences.

Materials & Reagent Solutions:

- Raw FastQ Files: Paired-end amplicon sequences (e.g., V3-V4 region).

- DADA2 R Package (v1.28+): Core analytical toolkit for error modeling and ASV inference.

- Silva or Greengenes Reference Database (v138+ / 13_8+): For taxonomic assignment of exact ASVs.

- PCR Reagents (User-Supplied): High-fidelity polymerase (e.g., Q5 Hot Start), unique dual-indexed primers to mitigate index hopping.

- Positive Control Mock Community: e.g., ZymoBIOMICS Microbial Community Standard, for benchmarking and error rate validation.

Procedure:

- Filter & Trim: In R, execute `filterAndTrim(fwdFiles, filtF, revFiles, filtR, trimLeft=c(16,20), truncLen=c(240,200), maxN=0, maxEE=c(2,2), truncQ=2)`, where `fwdFiles`/`revFiles` are the raw and `filtF`/`filtR` the filtered file paths. This removes primers (by length) and low-quality bases.

- Learn Error Rates: `errorF <- learnErrors(filtF, multithread=TRUE)` (repeat for the reverse reads). DADA2 learns an error model from your data.

- Dereplication & Sample Inference: `derepF <- derepFastq(filtF)`, then `dadaF <- dada(derepF, err=errorF, pool=TRUE)` (repeat for the reverse reads). The core algorithm partitions sequences into ASVs.

- Merge Paired Reads: `mergers <- mergePairs(dadaF, derepF, dadaR, derepR)`. Creates full-length sequences.

- Construct Sequence Table: `seqtab <- makeSequenceTable(mergers)`. Forms the ASV abundance matrix.

- Remove Chimeras: `seqtab.nochim <- removeBimeraDenovo(seqtab, method="consensus")`. Critical for biological accuracy.

- Taxonomic Assignment: `taxa <- assignTaxonomy(seqtab.nochim, "silva_nr99_v138.1_train_set.fa.gz")`. Links ASVs to biology.
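The idea behind chimera removal is that a bimera is an exact join of a left segment from one parent and a right segment from another. The toy base-R test below assumes equal-length, pre-aligned sequences and two known candidate parents; `removeBimeraDenovo()` instead aligns each sequence against more-abundant sequences in the table, so treat `is_bimera` as a hypothetical illustration.

```r
# Toy bimera test: is `query` = left part of one parent + right part of the other?
is_bimera <- function(query, parent_a, parent_b) {
  n <- nchar(query)
  stopifnot(nchar(parent_a) == n, nchar(parent_b) == n)
  if (query == parent_a || query == parent_b) return(FALSE)  # identical to a parent
  for (k in seq_len(n - 1)) {                  # crossover after position k
    left <- substr(query, 1, k)
    right <- substr(query, k + 1, n)
    if (left == substr(parent_a, 1, k) && right == substr(parent_b, k + 1, n)) return(TRUE)
    if (left == substr(parent_b, 1, k) && right == substr(parent_a, k + 1, n)) return(TRUE)
  }
  FALSE
}

is_bimera("AAAAATTTTT", "AAAAACCCCC", "GGGGGTTTTT")   # TRUE: left of A + right of B
is_bimera("AAAAACCCCC", "AAAAACCCCC", "GGGGGTTTTT")   # FALSE: identical to parent A
```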

Protocol: Validating Biological Relevance via Strain-Tracking in a Murine Model

Objective: To demonstrate the superior biological relevance of ASVs by tracking a specific bacterial strain in a preclinical intervention study.

Materials & Reagent Solutions:

- Gnotobiotic Mice: Colonized with a defined consortium including E. coli strain ATCC 25922 and a closely related variant.

- Fecal Collection Tubes (DNA/RNA Shield): For immediate nucleic acid stabilization.

- Strain-Specific qPCR Assay: Designed from an ASV-resolved single-nucleotide variant (SNV). Primers, probe, and standard.

- Metagenomic DNA Kit: e.g., DNeasy PowerSoil Pro Kit, for inhibitor-free extraction.

- Bioinformatics Pipeline: Custom R script to align ASV sequences to reference genomes.

Procedure:

- Intervention & Sampling: Administer drug candidate or control. Collect fecal pellets at T=0, 3, 7 days post-dose. Stabilize immediately.

- DNA Extraction & 16S Sequencing: Perform extraction per kit protocol. Amplify V4 region with barcoded primers. Sequence on Illumina MiSeq (2x250bp).

- ASV Inference: Process raw reads through the DADA2 protocol (3.1).

- Strain-Level Analysis: BLAST the exact ASV sequence against a curated genome database and identify the SNV distinguishing the strain of interest.

- Validation: Design a TaqMan qPCR assay targeting the unique SNV. Quantify absolute abundance of the target strain in all samples.

- Correlation Analysis: Statistically correlate strain abundance (from qPCR) with ASV read count and treatment outcome.
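For the strain-level analysis step, locating the SNV between two ASVs is a position-by-position comparison once the sequences are aligned. The helper below is a hypothetical base-R sketch that assumes equal-length, pre-aligned ASVs; the example sequences are made up.

```r
# List positions where two equal-length, pre-aligned ASVs differ
find_snvs <- function(asv_a, asv_b) {
  a <- strsplit(asv_a, "")[[1]]
  b <- strsplit(asv_b, "")[[1]]
  stopifnot(length(a) == length(b))
  pos <- which(a != b)
  data.frame(position = pos, base_a = a[pos], base_b = b[pos],
             stringsAsFactors = FALSE)
}

snvs <- find_snvs("ACGTTAGC", "ACGTCAGC")   # hypothetical ASV pair
snvs
```

The reported position and bases are what a strain-specific TaqMan probe would be designed around.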

Visualization: Workflows and Relationships

Title: DADA2 ASV Inference Workflow

Title: ASVs Enable Precise Biological Inference

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for ASV-Based Studies

| Item | Function | Key Consideration |
|---|---|---|
| High-Fidelity DNA Polymerase | Amplifies target region with minimal PCR errors. | Critical for reducing artifactual sequence variation. |
| Unique Dual Indexed Primers | Multiplex samples while minimizing index-hopping crosstalk. | Ensures sample integrity in high-throughput runs. |
| DNA/RNA Stabilization Buffer | Preserves microbial community composition at collection. | Prevents bias from overgrowth or degradation. |
| Mock Community Standard | Validates the entire wet-lab to bioinformatics pipeline. | Benchmarks accuracy, precision, and LOD. |
| Curated Reference Database | Provides biological context for exact ASV sequences. | Must be up to date and specific to the gene region. |
| Bioinformatic Compute Resource | Runs DADA2 and subsequent statistical analyses. | Requires an R environment and sufficient RAM for large datasets. |

This protocol is an essential first step within a broader thesis investigating the application of the DADA2 pipeline for high-resolution Amplicon Sequence Variant (ASV) analysis in microbial ecology and drug development research. Accurate installation ensures reproducible and reliable downstream bioinformatic analysis.

Current Software Version Assessment & Prerequisites

Before installation, verify that your system meets the prerequisites and check for the most recent software versions. The following table summarizes the core components at the time of writing.

Table 1: Core Software Components and Dependencies

| Component | Recommended Version | Function in DADA2 Workflow |
|---|---|---|
| R Language | 4.3.0 or higher | Statistical computing environment. |
| Bioconductor | 3.18 (or current release) | Repository for bioinformatics packages. |
| DADA2 Package | 1.29.0+ | Core algorithm for inferring ASVs from FASTQ files. |
| Rcpp | 1.0.11+ | Enables C++ integration for algorithm speed. |
| ShortRead | 1.59.0+ | Handles FASTQ file input/output. |
| ggplot2 | 3.4.4+ | Generates quality profile and error rate plots. |
| Biostrings | 2.69.0+ | Efficient manipulation of biological sequences. |
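The minimum versions above can be checked programmatically. A minimal base-R sketch using `utils::packageVersion()` and `utils::compareVersion()`; the `required` vector simply copies the table, and `check_min_versions` is a hypothetical helper that returns FALSE for packages that are missing or older than required.

```r
# TRUE/FALSE per package: installed and at least the required version?
check_min_versions <- function(required) {
  vapply(names(required), function(pkg) {
    installed <- tryCatch(as.character(utils::packageVersion(pkg)),
                          error = function(e) NA_character_)   # not installed
    !is.na(installed) && utils::compareVersion(installed, required[[pkg]]) >= 0
  }, logical(1))
}

required <- c(dada2 = "1.29.0", Rcpp = "1.0.11", ShortRead = "1.59.0",
              ggplot2 = "3.4.4", Biostrings = "2.69.0")
getRversion() >= "4.3.0"       # R itself
check_min_versions(required)   # one logical per package
```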

Detailed Installation Protocol

Protocol 2.1: Installing Bioconductor and Core Dependencies

This methodology ensures a stable base for the DADA2 installation.

- Launch R or RStudio. Ensure you have write permissions for your R library directory.

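- Install Bioconductor Manager. Execute the following command in the R console: `if (!requireNamespace("BiocManager", quietly = TRUE)) install.packages("BiocManager")`

- Update Bioconductor to the current release (recommended for consistency) with `BiocManager::install(version = "3.18")`, substituting the current release number.

- Install mandatory dependencies first to resolve any system-level library issues: `install.packages(c("Rcpp", "ggplot2"))` and `BiocManager::install(c("ShortRead", "Biostrings"))`.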

Protocol 2.2: Installing the DADA2 Package

Proceed with installing DADA2 after successful dependency installation.

- Install DADA2 via Bioconductor: `BiocManager::install("dada2")`

- Verify Installation by loading the package without errors: `library(dada2)`

- Check Package Version to confirm installation of the intended release: `packageVersion("dada2")`

Protocol 2.3: Loading Required Packages for a Standard DADA2 Workflow

A typical ASV analysis requires multiple packages. Load them at the start of your analysis script, e.g., `library(dada2)`, `library(ShortRead)`, `library(Biostrings)`, and `library(ggplot2)`.

Workflow Visualization: Initial DADA2 Setup Pathway

The following diagram outlines the logical sequence and relationships of the initial setup process described in this protocol.

Title: DADA2 Installation and Setup Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Essential Computational Tools & Resources for DADA2 Setup

| Item | Category | Function & Rationale |
|---|---|---|
| RStudio IDE | Software Environment | Provides an integrated console, script editor, and package manager for streamlined R development. |
| BiocManager Package | R Package Manager | The official tool for installing and managing Bioconductor packages and their complex dependency trees. |
| CRAN Mirror | Repository | The Comprehensive R Archive Network, the source for CRAN packages such as Rcpp and ggplot2. |
| System Compiler (Rtools/Xcode) | System Tool | Required when packages with C/C++ code (e.g., Rcpp-based packages such as DADA2) are built from source: Rtools on Windows, Xcode Command Line Tools on macOS. |
| Benchmark Dataset | Validation Data | A small, known FASTQ dataset (e.g., from the DADA2 tutorial) to verify that the pipeline works after installation. |
| `sessionInfo()` Output | Documentation | A critical record of all loaded package versions, ensuring computational reproducibility for the thesis. |

Step-by-Step DADA2 Workflow: Processing Your 16S Data from Raw FASTQ to ASV Table

This protocol details the critical first step in the DADA2 pipeline for Amplicon Sequence Variant (ASV) analysis. Proper quality control and filtering of raw amplicon sequences directly impact the resolution and accuracy of downstream results. This guide provides a standardized method for interpreting quality profiles and determining trimming parameters, serving as a foundational module within a broader thesis on robust ASV research for microbial community analysis.

Interpreting FastQC and DADA2 Quality Profiles

The initial assessment uses FastQC and DADA2's plotQualityProfile function to visualize per-base sequence quality. Key patterns to identify are summarized below.

Table 1: Key Features of Amplicon Quality Profiles and Their Interpretation

| Region of Read | Expected Quality Trend (Illumina) | Indication of Problem | Recommended Action |
|---|---|---|---|
| Read 1 (R1): first ~10 bases | Lower quality due to initiation. | Extremely low scores (<20). | Consider trimming if poor. |
| R1: middle segment | High, stable quality (often >Q30). | Steady decline or oscillations. | Check library prep. |
| R1: 3' end | Gradual decline is typical. | Sharp drop in quality. | Truncate before the steep fall. |
| Read 2 (R2): 3' end | Often a steeper decline than R1. | Very early sharp drop. | Aggressive truncation needed. |

Protocol: Determining Trim & Truncation Parameters

Objective: To establish systematic parameters for filterAndTrim() in DADA2.

Materials & Software:

- Raw paired-end FASTQ files.

- R environment with DADA2 installed.

- Computational resources (min 8GB RAM for typical datasets).

Procedure:

- Generate Quality Plots: Run `plotQualityProfile()` on a subset of the forward and reverse FASTQ files.

- Set Truncation Positions (`truncLen`):

  - Visually inspect the plots from Step 1.

  - Identify the base position where the median quality score drops below Q30 (or a chosen threshold) for the majority of reads.

  - For paired-end reads, set `truncLen = c(trunc_position_F, trunc_position_R)`. The truncated reads must still overlap enough for merging (typically >20 bp); that is, the two truncation lengths must sum to more than the amplicon length plus the required overlap.

- Set Maximum Expected Errors (`maxEE`):

  - `maxEE` is a more flexible filter than average quality. It specifies the maximum number of "expected errors" allowed in a read, computed from the per-base quality scores as EE = Σ 10^(-Q/10).

  - Recommended setting: `maxEE=c(2,5)` for forward and reverse reads, respectively, as reverse reads often have lower quality.

- Set Other `filterAndTrim()` Parameters:

  - `truncQ=2`: Truncate reads at the first instance of a quality score ≤ 2.

  - `maxN=0`: Discard reads with any ambiguous bases (N).

  - `rm.phix=TRUE`: Remove reads matching the PhiX control genome.

  - `compress=TRUE`: Write gzip-compressed FASTQ output.

  - `multithread=TRUE`: Use multiple cores for speed.

- Execute Filtering: Call `out <- filterAndTrim(...)` with the raw and filtered file paths and the parameters chosen above.

- Verify Filtering Output:

  - Examine the `out` matrix, which reports reads in and reads out for each sample.

  - Expect to retain roughly 70-90% of reads or more for well-executed Illumina runs.
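The expected-errors statistic behind `maxEE`, and the retention check in the last step, can be reproduced in base R. This sketch assumes Phred+33 quality strings; `expected_errors` is a hypothetical helper, and `out` is a made-up stand-in with the same `reads.in`/`reads.out` columns that `filterAndTrim()` returns.

```r
# Expected errors of one read: sum of per-base error probabilities
expected_errors <- function(qual_string) {
  q <- utf8ToInt(qual_string) - 33L        # Phred+33 decoding
  sum(10^(-q / 10))
}

# Hypothetical read: 100 bases at Q30 ('?') followed by 50 bases at Q20 ('5')
qual <- paste0(strrep("?", 100), strrep("5", 50))
ee <- expected_errors(qual)                # 100 * 0.001 + 50 * 0.01 = 0.6
passes_maxEE <- ee <= 2                    # reads with EE > maxEE are discarded

# Percentage of reads retained per sample (hypothetical numbers)
out <- matrix(c(10000, 8700, 12000, 9900), ncol = 2, byrow = TRUE,
              dimnames = list(c("S1", "S2"), c("reads.in", "reads.out")))
retained_pct <- 100 * out[, "reads.out"] / out[, "reads.in"]
retained_pct
```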

Workflow Diagram

Diagram Title: QC and Filtering Workflow for DADA2

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Materials for 16S rRNA Amplicon Sequencing & QC

| Item | Function in Context of This Step |
|---|---|
| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard chemistry for generating 2x300bp paired-end reads, ideal for the 16S rRNA V3-V4 region. Quality profiles are specific to kit chemistry. |
| PhiX Control v3 | Spike-in control for run monitoring. The `rm.phix=TRUE` parameter removes its sequences from analysis. |
| Qubit dsDNA HS Assay Kit | Quantifies library DNA concentration accurately before sequencing, ensuring proper cluster density and quality. |
| Bioanalyzer High Sensitivity DNA Kit | Assesses final library fragment size distribution, confirming correct amplicon length and absence of primer dimers. |
| DNeasy PowerSoil Pro Kit | Standardized for microbial DNA extraction from complex samples, reducing bias in the initial template. |
| AccuPrime Pfx SuperMix | High-fidelity polymerase for target amplification, minimizing PCR-induced errors that affect ASV inference. |

This section details the critical second phase of the DADA2 pipeline, which moves beyond preprocessing to statistical inference. Within the broader thesis on achieving high-resolution Amplicon Sequence Variants (ASVs), this step transitions from quality-filtered reads to error-corrected, unique biological sequences. The learnErrors function models the idiosyncratic error profile of the dataset, and the dada function applies this model to denoise reads, resolving true biological sequences from sequencing errors with single-nucleotide precision.

The 'learnErrors' Function: Theory and Application

Core Algorithm and Quantitative Output

The `learnErrors` function learns the relationship between the quality score of each nucleotide and the observed error rate. It alternates between sample inference and error-rate estimation until the two are self-consistent, estimating rates for each possible transition (e.g., A→C, A→G, A→T) across all quality scores; by default, the rates are smoothed across quality scores with `loessErrfun`.

Table 1: Key Parameters and Default Values for learnErrors

| Parameter | Default Value | Description | Impact on Model |
|---|---|---|---|
| `nbases` | 1e8 | Number of total bases to use for training. | Higher values increase model accuracy but slow computation. |
| `errorEstimationFunction` | `loessErrfun` | Function that fits error rates as a function of quality score. | Core to the DADA2 algorithm; rarely changed. |
| `multithread` | FALSE | Whether to use multiple threads. | Set to TRUE for a significant speed improvement on multi-core machines. |
| `randomize` | FALSE | If TRUE, samples are read in random order until `nbases` is reached. | Helps build a representative model from large datasets. |
| `MAX_CONSIST` | 10 | Maximum number of self-consistency iterations. | Controls iterative refinement of the error model. |

Experimental Protocol: Running learnErrors

Protocol 2.1: Generating the Error Model

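- Input Preparation: Start with the filtered and trimmed FASTQ files from Step 1 (e.g., `filt_R1.fastq.gz`).

- Function Execution: In R, execute `errF <- learnErrors(filtFs, multithread = TRUE)` and `errR <- learnErrors(filtRs, multithread = TRUE)`, where `filtFs` and `filtRs` are character vectors of filtered forward and reverse file paths.

- Model Validation: Visually inspect the learned error rates with `plotErrors(errF, nominalQ = TRUE)`. The points show observed error rates, the black line the fitted rates, and the red line the rates expected from the nominal quality scores; the fitted rates should track the points and decrease as quality increases.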

The 'dada' Function: Core Sample Inference

Core Denoising Algorithm

The `dada` algorithm uses the error model to denoise each sample independently (unless pooling is requested). Rather than evaluating every possible partition, it starts with all reads in a single partition and divisively splits off sequences that are too abundant to be explained by errors, until the remaining reads are consistent with the error model; the partition centers are the inferred true biological sequences (ASVs).

Table 2: Key Parameters and Outputs of the dada Function

| Parameter | Typical Value | Description |
|---|---|---|
| `selfConsist` | FALSE (default) | Whether to perform self-consistency iteration (alternating sample inference and error-rate estimation). |
| `pool` | FALSE | If TRUE, performs pooled sample inference. Increases sensitivity for rare variants but is computationally intensive; `pool = "pseudo"` approximates pooling at lower cost. |
| `priors` | `character(0)` | Vector of prior known sequences. Can be used to guide inference. |

| Output | Type | Description |
|---|---|---|
| `$sequence` | character | The inferred ASV sequences. |
| `$denoised` | named integer | The abundance of each ASV in the sample, named by sequence. |
| `$clustering` | data.frame | Internal clustering information for each partition. |
| `$err_in` | matrix | The error matrix used for denoising. |

Experimental Protocol: Applying DADA2 Denoising

Protocol 3.1: Denoising Forward and Reverse Reads

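- Apply Denoising: Use the error models (`errF`, `errR`) on the filtered reads, e.g., `dadaFs <- dada(filtFs, err = errF, multithread = TRUE)` and `dadaRs <- dada(filtRs, err = errR, multithread = TRUE)`.

- Interpret Output: Each of `dadaFs` and `dadaRs` is a list containing the denoising results for each sample. Inspect a single sample with `dadaFs[[1]]`, which prints a summary of the ASVs inferred for the first sample; `getUniques(dadaFs[[1]])` returns their abundances.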

Visualizing the Denoising Workflow

Diagram 1: DADA2 Denoising Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DADA2 Error Learning and Denoising

| Item | Function in Protocol | Notes for Researchers |
|---|---|---|
| High-Performance Computing (HPC) Node or Workstation | Executes the `learnErrors` and `dada` functions with `multithread=TRUE`. | A multi-core (≥16 cores) system with ≥32 GB RAM is recommended for large datasets (e.g., >100 samples). |
| R (≥ v4.0.0) & RStudio | Core software environment for running the DADA2 pipeline. | Ensure all system dependencies are installed. Use a dedicated conda environment or Docker container for reproducibility. |
| DADA2 R Package (≥ v1.28) | Contains the `learnErrors` and `dada` functions. | Install from Bioconductor: `BiocManager::install("dada2")`. Regularly update to access algorithm improvements. |
| Processed FASTQ Files | Input data from Step 1 (filtered, trimmed, primer-removed). | Quality of input directly impacts error model accuracy. Review quality plots from Step 1 before proceeding. |
| Sample Metadata File | Not used directly in denoising, but critical for downstream analysis. | A CSV file linking sample IDs to experimental variables (e.g., treatment, patient, timepoint). |

Within the broader DADA2 pipeline for Amplicon Sequence Variant (ASV) research, Step 3 is a critical computational transition from raw sequencing data to a structured sequence table. This step directly impacts the resolution and accuracy of downstream ecological and statistical analyses by transforming paired-end reads into a precise, denoised count matrix.

Application Notes

Core Concept and Significance

Merging paired-end reads joins the denoised forward and reverse reads from the same amplicon fragment into a single full-length sequence. Because DADA2 merges reads only after each has been denoised, and by default requires the overlapping region to match exactly, the merged sequences represent the original biological template more accurately than simple concatenation or read-trimming approaches. Constructing the sequence table then aggregates these merged sequences across all samples into the sample-by-ASV count matrix that underlies all downstream ecological and statistical analyses.

Current Performance Metrics and Benchmarks

Recent evaluations (2023-2024) highlight the performance of modern merging algorithms under various conditions.

Table 1: Performance Comparison of DADA2's mergePairs() and Other Read-Merging Tools

| Parameter | DADA2's mergePairs() | UPARSE/USEARCH | VSEARCH | PEAR |

|---|---|---|---|---|

| Merging Efficiency (%) | 75-95% | 70-90% | 72-92% | 65-85% |

| Error Rate Post-Merge | <0.1% | ~0.5% | ~0.3% | ~1.0% |

| Speed (M reads/min) | 2-5 | 10-15 | 8-12 | 3-7 |

| Overlap Requirement | ≥ 12 bp | ≥ 16 bp | ≥ 12 bp | ≥ 10 bp |

| Handles Indels | Yes (via alignment) | Limited | Yes | No |

Key Findings: DADA2's mergePairs() function, while not the fastest, provides the lowest post-merge error rate because it aligns reads that have already been denoised (using a Needleman-Wunsch alignment) and, by default, rejects any pair with a mismatch in the overlap (maxMismatch=0). This matters because the merged sequences become the ASVs reported in the final table. Merging efficiency is highly dependent on amplicon length and sequencing read length; shorter overlaps significantly reduce success rates.
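Whether a pair can merge at all is simple arithmetic: the overlap equals the sum of the truncated read lengths minus the length of the sequenced region. The sketch below uses hypothetical helpers (not part of dada2); the length_slack margin for natural amplicon length variation is an assumption, while the 12 bp minimum mirrors the default minOverlap of mergePairs.

```python
def expected_overlap(trunc_fwd: int, trunc_rev: int, region_len: int) -> int:
    """Bases shared by the forward and reverse reads after truncation.

    region_len is the length spanned from the first base of the forward read
    to the first base of the reverse read (include primers if still present).
    """
    return trunc_fwd + trunc_rev - region_len


def can_merge(trunc_fwd: int, trunc_rev: int, region_len: int,
              min_overlap: int = 12, length_slack: int = 20) -> bool:
    """True if at least min_overlap bases remain even for amplicons up to
    length_slack bp longer than region_len (hypothetical safety margin)."""
    return expected_overlap(trunc_fwd, trunc_rev, region_len + length_slack) >= min_overlap


print(expected_overlap(240, 160, 253))  # 147
print(can_merge(240, 160, 253))         # True
print(can_merge(150, 120, 253))         # False: 150 + 120 - 273 = -3
```

Checking the overlap before choosing truncation lengths in filterAndTrim avoids discovering at the merge step that aggressive truncation left too few shared bases.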

Experimental Protocols

Protocol 1: Standard Merging and Sequence Table Construction in DADA2

This protocol details the primary method using the dada2 package in R.

Materials:

- Filtered and trimmed forward (*_R1_trim.fastq.gz) and reverse (*_R2_trim.fastq.gz) reads from Step 2.

- A list of sample names.

- R environment (v4.3.0+) with the dada2 package (v1.30.0+) installed.

Procedure:

1. Load Dereplicated Data: Import the error models and dereplicated read data from Step 2.

2. Perform Sample Inference: Apply the core sample inference algorithm (dada) to the forward and reverse reads independently.

3. Merge Paired-End Reads: Merge the denoised forward and reverse reads with mergePairs. Adjust the minOverlap and maxMismatch parameters based on your expected overlap region.

4. Construct Sequence Table: Create the amplicon sequence variant table with makeSequenceTable, a high-resolution analogue of the traditional OTU table.

5. Remove Chimeras: Identify and remove bimeras (two-parent chimeric sequences) de novo with removeBimeraDenovo.

6. Output Results: Save the final sequence table for downstream analysis.
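Step 4 can be pictured as pivoting per-sample {sequence: count} results into one samples-by-sequences matrix. The Python sketch below illustrates that layout (rows are samples, columns are sequences ordered by total abundance, as makeSequenceTable does by default); the function name is hypothetical.

```python
from collections import Counter


def make_sequence_table(merged_by_sample):
    """Pivot per-sample {sequence: abundance} dicts into a count matrix.

    Returns (samples, sequences, rows) where rows[i][j] is the count of
    sequences[j] in samples[i]; columns are sorted by total abundance,
    most abundant first.
    """
    totals = Counter()
    for counts in merged_by_sample.values():
        totals.update(counts)
    sequences = [seq for seq, _ in totals.most_common()]
    samples = list(merged_by_sample)
    rows = [[merged_by_sample[s].get(seq, 0) for seq in sequences] for s in samples]
    return samples, sequences, rows


samples, sequences, rows = make_sequence_table(
    {"S1": {"ACGT": 5, "ACGA": 1}, "S2": {"ACGA": 7}}
)
print(sequences)  # ['ACGA', 'ACGT']
print(rows)       # [[1, 5], [7, 0]]
```

Sequences absent from a sample become explicit zeros, which is why ASV tables are typically sparse.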

Protocol 2: Alternative Merging with JustConcatenate for Long Amplicons

For amplicons where read pairs do not overlap (e.g., longer 18S or ITS2 regions), a concatenation approach is used.

Procedure:

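- Follow Protocol 1 through Step 2 (Sample Inference).

- Pseudo-Merge by Concatenation: Call mergePairs with justConcatenate=TRUE, which joins each denoised forward read to the reverse complement of its denoised reverse read without aligning them.

- Post-Concatenation Trimming: The resulting sequences contain a spacer of ten Ns (NNNNNNNNNN) between the two reads. This can be left in or trimmed later during alignment.

- Proceed with Steps 4-6 from Protocol 1 to construct the sequence table and remove chimeras.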
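The concatenated sequence can be sketched as the forward read, a run of ten Ns, and the reverse complement of the reverse read. The helper names below are hypothetical, and the sketch handles only uppercase A/C/G/T/N (no other IUPAC ambiguity codes).

```python
COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def reverse_complement(seq: str) -> str:
    """Reverse complement of an uppercase A/C/G/T/N sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def concatenate_pair(fwd: str, rev: str, spacer_len: int = 10) -> str:
    """Forward read + N spacer + reverse complement of the reverse read."""
    return fwd + "N" * spacer_len + reverse_complement(rev)


def split_concatenated(seq: str, spacer_len: int = 10):
    """Recover the two halves, e.g. to trim or align them separately."""
    left, _, right = seq.partition("N" * spacer_len)
    return left, right


merged = concatenate_pair("AAC", "GGT")
print(merged)                      # AAC + 10 Ns + ACC
print(split_concatenated(merged))  # ('AAC', 'ACC')
```

Because the true gap between the reads is unknown, the spacer length carries no biological meaning; it only keeps the two halves in a single sequence.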

Visualizations

Title: DADA2 Workflow: From Reads to ASV Table

Title: mergePairs() Algorithm Logic

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Library Preparation Preceding DADA2 Analysis

| Item | Function in the Experimental Pipeline |

|---|---|

| High-Fidelity DNA Polymerase | Critical for accurate PCR amplification of the target amplicon with minimal introduction of nucleotide errors, which can be misidentified as biological variants. |

| Dual-Indexed Barcoded Adapters | Enable multiplexing of hundreds of samples in a single sequencing run by attaching unique sample-specific barcodes to both ends of each amplicon. |

| Magnetic Bead-based Cleanup Kits | Used for precise size selection and purification of amplified libraries, removing primer dimers and non-specific products to improve sequencing data quality. |

| Quantification Kit (Qubit/qPCR) | Accurate fluorometric or qPCR-based quantification of the final library is essential for pooling libraries at equimolar ratios, ensuring balanced sequencing depth. |

| Validated Primer Set | Target-specific primers (e.g., 16S V4, ITS2) with known performance characteristics for the organismal group of interest, minimizing bias and off-target amplification. |

| Negative Extraction & PCR Controls | Essential for detecting and monitoring background contamination from reagents or the environment, which informs downstream filtering steps. |

Application Notes

Chimeric sequences are artifacts formed during PCR amplification when incomplete extension of a DNA fragment from one template acts as a primer on a different, related template. In amplicon sequencing workflows, chimeras can erroneously inflate Operational Taxonomic Unit (OTU) or Amplicon Sequence Variant (ASV) counts, leading to incorrect biological inferences. The DADA2 removeBimeraDenovo function, applied after denoising and read merging, is a critical step designed to identify and remove these spurious sequences.

The function operates by aligning each sequence to more abundant "parent" sequences and checking if it can be reconstructed as a perfect fusion of a left-segment from one parent and a right-segment from another. It employs a greedy method, starting with the most abundant sequences as potential parents, which are assumed to be non-chimeric. This method is highly sensitive and specific, especially when sequencing depth is sufficient to capture true biological variation.
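The core test can be sketched in a few lines: a query is flagged when one parent matches a prefix and another parent matches a suffix whose combined length covers the whole query. This simplified Python version assumes equal-length, gap-free sequences and exact matches, and assumes the caller has already restricted the parent list to sufficiently more abundant sequences (dada2 itself aligns the sequences); the function name is hypothetical.

```python
def is_bimera(query: str, parents: list) -> bool:
    """True if query = left part of one parent + right part of another.

    Simplified: parents are assumed to be the more abundant sequences, all of
    the same length as query, compared without gaps.
    """
    n = len(query)
    candidates = [p for p in parents if len(p) == n and p != query]

    def prefix_match(p: str) -> int:
        k = 0
        while k < n and p[k] == query[k]:
            k += 1
        return k

    def suffix_match(p: str) -> int:
        k = 0
        while k < n and p[n - 1 - k] == query[n - 1 - k]:
            k += 1
        return k

    best_left = max((prefix_match(p) for p in candidates), default=0)
    best_right = max((suffix_match(p) for p in candidates), default=0)
    # A single parent differs from query somewhere, so its prefix + suffix
    # matches sum to less than n; reaching n therefore needs two parents.
    return best_left + best_right >= n


parents = ["AAAAAAAAAA", "CCCCCCCCCC"]
print(is_bimera("AAAAACCCCC", parents))  # True: breakpoint after position 5
print(is_bimera("AAAAAGCCCC", parents))  # False: the G fits neither parent
```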

Table 1: Performance Metrics of removeBimeraDenovo in Typical 16S rRNA Gene Studies

| Metric | Typical Range | Notes |

|---|---|---|

| Chimera Prevalence | 10% - 25% of input sequences | Highly dependent on template concentration, PCR cycle count, and community complexity. |

| Removal Rate | >95% of chimeric reads | Sensitivity for detecting known chimeras. |

| False Positive Rate | <1% of non-chimeric reads | Specificity for preserving true biological sequences. |

| Output Read Retention | 75% - 90% of input reads | The percentage of reads retained in the chimera-free sequence table. |

Table 2: Comparative Impact of Chimera Removal on Downstream Analysis

| Analysis Type | Without Chimera Removal | With removeBimeraDenovo |

|---|---|---|

| Number of ASVs | Inflated (20-40% higher) | Accurate, reflecting true diversity |

| Rarefaction Curves | Fail to plateau or overestimate richness | More likely to approach saturation |

| Beta Diversity (PCoA) | Potential skew due to artifactual variants | Clusters reflect biological reality |

| Differential Abundance | False positives for low-abundance, chimeric ASVs | Robust identification of true associations |

Experimental Protocol

Protocol: Chimera Removal Using DADA2's removeBimeraDenovo Function

I. Prerequisites

- A sequence table (seqtab) generated by makeSequenceTable from the mergePairs output.

- R environment (version 4.0 or later) with the DADA2 package installed.

II. Step-by-Step Procedure

Load Required Library and Data: Load the dada2 package and the sequence table (seqtab).

Execute Chimera Removal: Call removeBimeraDenovo on the sequence table. The key arguments are:

- method: "consensus" (the default, and generally recommended) identifies chimeras in each sample independently, then removes sequences classified as chimeric in a sufficiently large fraction of the samples in which they occur. method="pooled" instead treats all samples as a single pool.

- multithread: Enables parallel processing to decrease computation time.

- verbose: Prints progress and summary statistics.

Assess Removal Efficiency: Generate a summary to determine the proportion of reads retained.

Output and Save Results: Save the chimera-free sequence table for subsequent taxonomic assignment and phylogenetic analysis.
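The consensus decision and the retention summary can be illustrated as follows. This is a simplified sketch, not dada2's implementation: it flags a sequence when the fraction of its samples calling it chimeric reaches a threshold (0.9 here, chosen for illustration), and it omits refinements such as tolerating a small number of negative calls. The function names are hypothetical.

```python
def consensus_chimeras(flags, min_sample_fraction=0.9):
    """flags: dict sequence -> list of per-sample bimera calls (True/False),
    one entry per sample in which the sequence occurs. Returns the set of
    sequences flagged in at least min_sample_fraction of those samples."""
    return {
        seq for seq, calls in flags.items()
        if calls and sum(calls) / len(calls) >= min_sample_fraction
    }


def read_retention(seqtab, chimeras):
    """Fraction of all reads kept after removing chimeric sequences.
    seqtab: dict sample -> {sequence: count}."""
    total = sum(sum(counts.values()) for counts in seqtab.values())
    kept = sum(
        n for counts in seqtab.values()
        for seq, n in counts.items() if seq not in chimeras
    )
    return kept / total


flags = {"seqX": [True, True, True], "seqY": [True, False, False]}
chim = consensus_chimeras(flags)
print(chim)  # {'seqX'}
print(read_retention({"S1": {"seqX": 10, "seqY": 90}}, chim))  # 0.9
```

Reporting retention in reads rather than in unique sequences matters: chimeras are often numerous as distinct sequences but account for a smaller share of reads.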

Visualizations

Title: DADA2 Chimera Detection and Removal Workflow

Title: Position of Chimera Removal in the Full DADA2 Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DADA2 Chimera Removal and Validation

| Item | Function & Relevance |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, Phusion) | Reduces PCR errors and chimera formation during initial amplification. Essential for generating high-quality input for DADA2. |

| Quantitative PCR (qPCR) System | For accurate library quantification prior to sequencing. Prevents over-amplification, a major contributor to chimera generation. |

| DADA2 R Package (v1.28+) | Contains the removeBimeraDenovo function. Requires installation from Bioconductor for reproducible analysis. |

| Multi-threaded Computational Server (Linux/Mac) | The removeBimeraDenovo function is computationally intensive. A multi-core system with ample RAM significantly speeds up processing. |

| Known Mock Community DNA (e.g., ZymoBIOMICS) | Contains defined genomic material from known organisms. Serves as a positive control to benchmark chimera removal accuracy and pipeline performance. |

| Reference Database (e.g., SILVA, GTDB) | Used after chimera removal for taxonomic assignment. A curated, up-to-date database is crucial for biological interpretation of the final ASV table. |

Within the broader thesis on implementing a DADA2 pipeline for Amplicon Sequence Variant (ASV) research, Step 5 is the critical juncture where biological meaning is assigned to the denoised sequences. Following chimera removal, the ASVs (exact sequence variants from putative bacterial or archaeal taxa) are taxonomically classified by comparison to curated reference databases. This step transforms sequence data into biologically interpretable community profiles, enabling hypotheses about microbial ecology, dysbiosis, and therapeutic targets in drug development.

The choice of reference database significantly impacts taxonomic assignment accuracy, resolution, and reproducibility. The two most widely used databases for 16S rRNA gene amplicon studies are SILVA and GTDB, each with distinct philosophies and curation strategies.

Table 1: Comparison of SILVA and GTDB Reference Databases

| Feature | SILVA | GTDB (Genome Taxonomy Database) |

|---|---|---|

| Primary Approach | Alignment-based, using manually curated rRNA gene sequences. | Genome-based phylogeny, using whole-genome markers and average nucleotide identity. |

| Taxonomy Framework | Historically aligned with Bergey's Manual/LPSN; relatively conservative. | Phylogenetically consistent, comprehensive overhaul of prokaryotic taxonomy. |

| Update Frequency | Regular (SILVA 138.1 is a common version). | Frequent releases (e.g., R220, R214). |

| Key Strength | Long-standing standard, extensive non-redundant SSU/LSU datasets. | Modern, phylogenetically robust classification, resolves polyphyletic groups. |

| Consideration | May retain known polyphyletic groupings. | Taxonomy can differ substantially from traditional nomenclature. |

| Typical Use Case | Ecological studies requiring comparability to past literature. | Studies prioritizing phylogenetic accuracy and genomic consistency. |

Detailed Experimental Protocol

This protocol assumes input from DADA2 Step 4: seqtab.nochim (a sequence table of non-chimeric ASVs).

A. Protocol for Taxonomic Assignment with DADA2's assignTaxonomy Function

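This method implements the RDP naive Bayesian classifier, which scores the 8-mer (k-mer) content of each ASV against the training set and reports a bootstrap confidence for each taxonomic rank; ranks below the minBoot threshold (50 by default) are left unassigned.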
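The classifier's logic can be sketched for a single rank: each reference label is scored by the summed log probabilities of the query's 8-mers, using the RDP word-probability estimate with a pseudocount prior, and confidence is the fraction of bootstrap replicates (each resampling one eighth of the query's 8-mers with replacement) that return the same label. This Python class is a simplified, hypothetical illustration; dada2's own implementation works on full lineages and differs in detail.

```python
import math
import random
from collections import defaultdict

K = 8  # word size used by the RDP classifier


def kmers(seq, k=K):
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


class KmerNaiveBayes:
    def __init__(self, references):
        """references: list of (label, sequence) training pairs."""
        self.n_refs = len(references)
        self.n_with_word = defaultdict(int)   # references containing each word
        self.label_words = defaultdict(lambda: defaultdict(int))
        self.label_size = defaultdict(int)    # references per label
        for label, seq in references:
            self.label_size[label] += 1
            for w in kmers(seq):
                self.n_with_word[w] += 1
                self.label_words[label][w] += 1

    def log_score(self, words, label):
        m = self.label_size[label]
        score = 0.0
        for w in words:
            # Prior word probability across all references (pseudocount 0.5).
            prior = (self.n_with_word.get(w, 0) + 0.5) / (self.n_refs + 1)
            score += math.log((self.label_words[label].get(w, 0) + prior) / (m + 1))
        return score

    def best_label(self, words):
        return max(self.label_size, key=lambda lab: self.log_score(words, lab))

    def classify(self, seq, n_boot=100, seed=0):
        """Return (label, bootstrap confidence in [0, 1])."""
        words = sorted(kmers(seq))  # sorted so resampling is reproducible
        label = self.best_label(words)
        rng = random.Random(seed)
        n_sub = max(1, len(words) // K)
        hits = sum(
            self.best_label(rng.choices(words, k=n_sub)) == label
            for _ in range(n_boot)
        )
        return label, hits / n_boot


refs = [
    ("GenusA", "ACGTACGGTTCAGCTAGGCTAACGTTAGCCATGCA"),
    ("GenusB", "TTGACCGTAAGCTTGCAGGATCCAATGGCTTACCG"),
]
clf = KmerNaiveBayes(refs)
print(clf.classify(refs[0][1]))  # ('GenusA', 1.0)
```

The bootstrap is what turns a single best match into a confidence value: a query whose 8-mers are shared by several labels wins only some replicates and ends up below the assignment threshold.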

Download Reference Data:

- Obtain the DADA2-formatted training set files for the chosen database.

- SILVA: Download silva_nr99_v138.1_train_set.fa.gz, the DADA2-formatted SILVA training set (distributed via Zenodo and linked from the DADA2 website).

- GTDB: Download the bacterial (ref_seqs_BAC.fa.gz) and archaeal (ref_seqs_ARC.fa.gz) training sets formatted for DADA2 from repositories like https://zenodo.org/records/10528328.

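R Script Execution: Pass seqtab.nochim and the path to the training set file to assignTaxonomy (setting multithread=TRUE for large datasets). With SILVA, exact-match species-level assignments can then be added with addSpecies.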

B. Protocol for Assignment with DECIPHER and IdTaxa for Higher Accuracy

This k-mer-based method (the IDTAXA algorithm) often provides more accurate assignments, with fewer over-classifications, especially for novel lineages.

Download and Prepare Reference Data:

- Download the SILVA SSU reference file (SILVA_SSU_r138_2019.RData) from the DECIPHER website.


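R Script Execution: Load the downloaded training set, convert the ASV sequences to a DNAStringSet (Biostrings), and classify them with IdTaxa.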

Visualization of the Taxonomic Assignment Workflow

Title: Taxonomic Assignment Workflow in DADA2 Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Taxonomic Assignment

| Item/Resource | Function/Description | Example Source/Product |

|---|---|---|

| SILVA SSU Ref NR 99 | Curated, non-redundant small subunit rRNA sequence database and taxonomy. Used as the training set for assignTaxonomy. | https://www.arb-silva.de/ |

| GTDB Training Sets | DADA2-formatted fasta files of bacterial and archaeal reference sequences based on GTDB taxonomy. | https://zenodo.org/records/10528328 |

| DECIPHER R Package | Provides the IdTaxa function for k-mer-based taxonomic classification (the IDTAXA algorithm), often yielding higher accuracy. | http://www2.decipher.codes/ |

| SILVA SSU for DECIPHER | Processed SILVA database as an RData object (a pre-trained classifier of the kind produced by LearnTaxa) for use with IdTaxa. | DECIPHER website "Downloads" section |

| High-Performance Computing (HPC) Resource | Taxonomic assignment, especially with IdTaxa or large datasets, is computationally intensive and benefits from multithreading. | Local cluster or cloud computing (AWS, GCP) |

| R/Bioconductor Environment | The integrated software environment required to run DADA2, DECIPHER, and related packages for analysis. | RStudio, conda environment with required packages |

Within the broader thesis on the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, the generation of a sequence table, taxonomy table, and associated metadata represents the culmination of the bioinformatic processing phase. The phyloseq R package is the critical bridge that transforms these outputs into a unified, analysis-ready object, enabling comprehensive downstream ecological and statistical interrogation. This application note details the protocols for this integration, which is essential for testing hypotheses in microbial ecology, biomarker discovery, and therapeutic development.

Foundational Protocol: Creating a Phyloseq Object from DADA2 Outputs

This protocol assumes completion of the DADA2 pipeline, yielding an ASV sequence table, a taxonomy assignment table, a sample metadata file, and a phylogenetic tree (optional but recommended).

Materials & Software:

- R (v4.3.0 or later)

- RStudio (recommended)

- R packages: phyloseq (v1.46.0), Biostrings, ape

Procedure:

Load Required Packages and Data.

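Inspect and Format Data. In the DADA2 sequence table (seqtab), rows are samples and columns are ASV sequences. Ensure the row names of samdata match the row names (sample names) of seqtab, and that the row names of taxtab match the column names (ASV sequences) of seqtab.

Construct Phyloseq Object. Combine otu_table(seqtab, taxa_are_rows = FALSE), sample_data(samdata), and tax_table(taxtab) (plus phy_tree(tree), if available) with phyloseq().

The object ps is now ready for analysis.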
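The name-matching requirements above are easy to check before calling phyloseq(). The Python sketch below mirrors the DADA2 layout (samples as rows, ASV sequences as columns) with plain dictionaries; the function names are hypothetical. It also shows the common practice of replacing full ASV sequences with short identifiers (ASV1, ASV2, ...) for readable plots, after storing the sequences elsewhere.

```python
def check_dada2_inputs(seqtab, sample_meta, taxonomy):
    """Return a list of problems (empty if consistent).

    seqtab: dict sample -> {ASV sequence: count} (rows = samples, as in DADA2)
    sample_meta: dict sample -> metadata record
    taxonomy: dict ASV sequence -> taxonomy record
    """
    problems = []
    samples = set(seqtab)
    asvs = {seq for counts in seqtab.values() for seq in counts}
    if samples - set(sample_meta):
        problems.append(f"samples without metadata: {sorted(samples - set(sample_meta))}")
    if set(sample_meta) - samples:
        problems.append(f"metadata rows without reads: {sorted(set(sample_meta) - samples)}")
    if asvs - set(taxonomy):
        problems.append(f"{len(asvs - set(taxonomy))} ASV(s) without a taxonomy row")
    return problems


def short_asv_names(sequences):
    """Map full ASV sequences to short IDs (ASV1, ASV2, ...)."""
    return {seq: f"ASV{i}" for i, seq in enumerate(sequences, start=1)}


seqtab = {"S1": {"ACGT": 3}, "S2": {"ACGA": 1}}
print(check_dada2_inputs(seqtab, {"S1": {}, "S2": {}}, {"ACGT": "x", "ACGA": "y"}))  # []
print(check_dada2_inputs(seqtab, {"S1": {}}, {"ACGT": "x"}))
print(short_asv_names(["ACGT", "ACGA"]))  # {'ACGT': 'ASV1', 'ACGA': 'ASV2'}
```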

Core Downstream Analysis Protocols

Protocol for Alpha Diversity Analysis

Alpha diversity measures species richness and evenness within samples.

Experimental Workflow:

Diagram Title: Alpha Diversity Analysis Workflow

Procedure:

Table 1: Common Alpha Diversity Indices

| Index | Measures | Formula (Conceptual) | Interpretation |

|---|---|---|---|

| Observed | Richness | S = Number of ASVs | Higher = More unique taxa. |

| Shannon | Richness & Evenness | H' = -Σ(p_i * ln(p_i)) | Higher = More richness & evenness. |

| Simpson | Dominance & Evenness | λ = Σ(p_i²); 1-λ = Diversity | Higher = Lower dominance, more evenness. |
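The three indices in Table 1 can be computed directly from one sample's ASV counts. This sketch follows the formulas in the table (natural logarithm for Shannon, 1 - λ for Simpson); it is an illustration rather than a replacement for phyloseq's estimate_richness, and the function name is hypothetical.

```python
import math


def alpha_diversity(counts):
    """Observed richness, Shannon H' (natural log) and Simpson 1 - lambda."""
    total = sum(counts)
    props = [c / total for c in counts if c > 0]
    return {
        "Observed": len(props),
        "Shannon": -sum(p * math.log(p) for p in props),
        "Simpson": 1.0 - sum(p * p for p in props),
    }


print(alpha_diversity([10, 10, 0]))
# {'Observed': 2, 'Shannon': 0.693..., 'Simpson': 0.5}
```

Zero counts are dropped before computing proportions, so absent ASVs affect neither richness nor the evenness terms.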

Protocol for Beta Diversity Analysis (PERMANOVA)

Beta diversity measures differences in microbial community composition between samples.

Experimental Workflow:

Diagram Title: Beta Diversity and PERMANOVA Workflow

Procedure:

Table 2: Common Distance Metrics in Phyloseq

| Metric | Type | Description | Sensitive To |

|---|---|---|---|

| Bray-Curtis | Abundance-based | Dissimilarity in taxon abundances | Composition & Abundance |

| Jaccard | Presence/Absence | Dissimilarity based on shared taxa | Composition only |

| UniFrac | Phylogenetic-based | Distance incorporating evolutionary history | Weighted: Abundance & Phylogeny; Unweighted: Presence/Absence & Phylogeny |
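Bray-Curtis and Jaccard, and the PERMANOVA pseudo-F statistic that adonis2 reports, can be sketched from their definitions. This is a minimal Python illustration assuming a square distance matrix and one grouping factor; it is not vegan's implementation, the function names are hypothetical, and UniFrac is omitted because it needs a phylogenetic tree.

```python
import random


def bray_curtis(x, y):
    """Sum of absolute abundance differences over the summed abundances."""
    return sum(abs(a - b) for a, b in zip(x, y)) / sum(a + b for a, b in zip(x, y))


def jaccard(x, y):
    """1 - shared taxa / taxa present in either sample (presence/absence only)."""
    px = {i for i, a in enumerate(x) if a > 0}
    py = {i for i, b in enumerate(y) if b > 0}
    return 1.0 - len(px & py) / len(px | py)


def pseudo_f(d, groups):
    """PERMANOVA pseudo-F from a square distance matrix d and group labels."""
    n, labels = len(groups), sorted(set(groups))
    ss_total = sum(d[i][j] ** 2 for i in range(n) for j in range(i + 1, n)) / n
    ss_within = 0.0
    for g in labels:
        idx = [i for i in range(n) if groups[i] == g]
        ss_within += sum(d[i][j] ** 2 for k, i in enumerate(idx) for j in idx[k + 1:]) / len(idx)
    a = len(labels)
    return ((ss_total - ss_within) / (a - 1)) / (ss_within / (n - a))


def permanova(d, groups, n_perm=999, seed=0):
    """Observed pseudo-F and permutation p-value from shuffled group labels."""
    rng = random.Random(seed)
    f_obs = pseudo_f(d, groups)
    perm = list(groups)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(perm)
        hits += pseudo_f(d, perm) >= f_obs
    return f_obs, (hits + 1) / (n_perm + 1)


print(bray_curtis([6, 4, 0], [2, 4, 4]))  # 0.4
print(jaccard([6, 4, 0], [2, 4, 4]))      # 0.333...
d = [[0, 0.2, 1, 1], [0.2, 0, 1, 1], [1, 1, 0, 0.2], [1, 1, 0.2, 0]]
f, p = permanova(d, ["A", "A", "B", "B"])
print(round(f, 6))  # 49.0
```

With only four samples there are just three distinct two-by-two groupings, so the permutation p-value cannot fall much below 1/3 however strong the separation; real studies need enough samples per group for the permutation test to have power.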

Protocol for Differential Abundance Analysis (DESeq2)

Identifies taxa whose abundances are significantly associated with experimental variables.

Procedure:
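Before testing, DESeq2 normalizes library sizes with median-of-ratios size factors. The sketch below illustrates that calculation on plain lists; the function name is hypothetical. ASV tables are sparse, so few ASVs may be non-zero in every sample, which is why DESeq2's zero-tolerant "poscounts" size-factor type is often preferred for microbiome data.

```python
import math
import statistics


def size_factors(counts):
    """Median-of-ratios size factors, one per sample.

    counts: list of samples, each a list of per-ASV counts in the same order.
    ASVs with a zero in any sample have a zero geometric mean and are skipped.
    """
    n_asv = len(counts[0])
    geo_means = {}
    for j in range(n_asv):
        column = [sample[j] for sample in counts]
        if all(c > 0 for c in column):
            geo_means[j] = math.exp(sum(math.log(c) for c in column) / len(column))
    if not geo_means:
        raise ValueError("no ASV is non-zero in every sample")
    return [
        statistics.median(sample[j] / gm for j, gm in geo_means.items())
        for sample in counts
    ]


sf = size_factors([[10, 20, 30, 0], [20, 40, 60, 5]])
print([round(s, 4) for s in sf])  # [0.7071, 1.4142]
```

Here the second sample was sequenced twice as deeply, and its size factor is exactly twice the first; the fourth ASV is ignored because it is absent from one sample.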

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DADA2-Phyloseq Integration Analysis

| Item | Function/Description | Example/Note |

|---|---|---|

| R Programming Environment | Platform for statistical computing and graphics executing all analyses. | R v4.3+, RStudio IDE. |

| phyloseq R Package | Core object class and functions for organizing and analyzing microbiome census data. | v1.46.0; Provides data structure and core plotting. |

| vegan R Package | Performs community ecology analyses including PERMANOVA and diversity indices. | Essential for adonis2() and other ecological stats. |

| DESeq2 / edgeR | Differential abundance testing packages based on negative binomial models for over-dispersed count data. | Preferred over standard t-tests for ASV data. |

| ggplot2 R Package | Creates publication-quality visualizations integrated with phyloseq plotting. | Used via plot_ordination(), plot_richness(). |

| High-Performance Computing (HPC) Cluster | For computationally intensive steps like tree building or large-scale permutations. | Recommended for large datasets (e.g., >500 samples). |

| Structured Sample Metadata File | Critical CSV file linking sample IDs to all experimental variables for statistical modeling. | Must be meticulously curated and consistent. |

| Phylogenetic Tree (NWK file) | Enables phylogenetic-aware analyses (UniFrac, phylogenetic placement). | Generated from ASVs using DECIPHER, phangorn. |

Solving Common DADA2 Pitfalls: Optimizing Parameters for Challenging Biomedical Samples

Within the broader thesis on optimizing the DADA2 pipeline for high-fidelity Amplicon Sequence Variant (ASV) inference, a critical challenge is the efficient and accurate merging of paired-end reads. The thesis posits that default parameter settings are often suboptimal for complex or degraded samples, leading to poor merge rates, loss of biological signal, and biased ASV tables. This application note provides a targeted protocol for diagnosing and resolving poor merge rates by strategically adjusting the trimOverhang and maxMismatch parameters in the mergePairs function. These adjustments are framed as essential for maximizing sequence yield while maintaining the denoising algorithm's stringent error-correction integrity.

Key Parameter Definitions & Quantitative Effects

The mergePairs function in DADA2 aligns each denoised forward read to the reverse complement of its denoised reverse read and merges the pair into a single sequence. Two parameters directly control the strictness of this step:

- trimOverhang (logical): When TRUE, overhangs in the alignment, where one read extends past the start of the other (as happens when reads are longer than the amplicon and run into the opposite primer or adapter), are trimmed off. This can help when one read extends beyond the other due to variable length or adapter contamination.

- maxMismatch (numeric): The maximum number of mismatches allowed in the overlap region (default 0). A higher value permits merging of reads with more discrepancies, which may be necessary for variable regions or samples with higher error rates, but can increase false-positive merges.

Empirical data from recent optimization studies (2023-2024) illustrate the trade-offs:

Table 1: Effect of Parameter Adjustments on Merge Rates and Error Profiles

| Parameter Setting | Average Merge Rate (%) | Post-Merge ASV Richness | Estimated False Merge Rate | Recommended Use Case |

|---|---|---|---|---|

| Default (trimOverhang=FALSE, maxMismatch=0) | 65.2 ± 12.4 | Baseline | Very Low (<0.1%) | High-quality, pristine amplicons (e.g., mock communities). |

| trimOverhang=TRUE | 71.8 ± 10.7 | +5.3% vs. Baseline | Low (~0.2%) | Routine for most studies, especially with variable-length PCR. |

| maxMismatch=1 | 78.5 ± 8.9 | +8.1% vs. Baseline | Moderate (~0.8%) | Degraded samples (e.g., FFPE, ancient DNA) or highly variable regions (e.g., ITS). |

| maxMismatch=2 | 85.6 ± 6.3 | +12.7% vs. Baseline | High (>2%)* | Last resort for very short overlaps; requires rigorous post-filtering. |

| Combo: trimOverhang=TRUE, maxMismatch=1 | 80.1 ± 7.5 | +9.5% vs. Baseline | Moderate-Low (~0.5%) | Optimal starting point for troubleshooting poor default rates. |

*Note: A maxMismatch=2 setting risks merging non-homologous sequences and should be validated with spike-in controls.

Detailed Diagnostic & Optimization Protocol

Protocol 1: Diagnosing the Cause of Poor Merge Rates

Objective: To determine if low merging efficiency is due to read length heterogeneity or true sequence divergence in the overlap region.

Materials:

- Trimmed and filtered forward (*_R1_filt.fastq.gz) and reverse (*_R2_filt.fastq.gz) FASTQ files from the DADA2 filterAndTrim step.

- R environment (v4.3.0+) with DADA2 (v1.28.0+) installed.

Procedure:

- Compute Overlap Length: Estimate the expected overlap from the amplicon length and the truncated read lengths, and use plotQualityProfile on a subset of filtered reads to confirm read lengths and quality across the expected overlap region.

- Initial Merge with Strict Parameters: Run mergePairs with default settings (justConcatenate=FALSE, trimOverhang=FALSE, maxMismatch=0). Record the merge rate from the output data.frame.

- Sequence Inspection: Re-run mergePairs with returnRejects=TRUE, which keeps rejected pairs in the output data.frame (with accept=FALSE) alongside their nmatch, nmismatch, and nindel values. Align a subset of the rejected forward and reverse sequences (e.g., with DECIPHER AlignSeqs) to categorize failures as:

- Category A: Reads with terminal overhangs (non-overlapping ends).

- Category B: Reads with 1-2 mismatches in an otherwise perfect overlap.

- Category C: Reads with no significant overlap or excessive mismatches.
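The three failure categories can be approximated programmatically for triage. The Python sketch below scans every gap-free placement of the reverse complement of the reverse read against the forward read, scores placements with a hypothetical penalty of four per mismatch, and classifies the best one. It is a simplification: mergePairs uses a Needleman-Wunsch alignment that can also accommodate indels, and the function names are hypothetical.

```python
COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def reverse_complement(seq):
    return seq.translate(COMPLEMENT)[::-1]


def best_overlap(fwd, rev, min_overlap=12, mismatch_penalty=4):
    """Best gap-free placement of revcomp(rev) against fwd.

    Returns (offset, overlap_len, mismatches), where offset is the position of
    the first base of revcomp(rev) relative to the first base of fwd, or None
    if no placement overlaps by at least min_overlap bases.
    """
    rc = reverse_complement(rev)
    best, best_score = None, None
    for offset in range(min_overlap - len(rc), len(fwd) - min_overlap + 1):
        start, end = max(0, offset), min(len(fwd), offset + len(rc))
        if end - start < min_overlap:
            continue
        mism = sum(fwd[i] != rc[i - offset] for i in range(start, end))
        score = (end - start - mism) - mismatch_penalty * mism
        if best_score is None or score > best_score:
            best, best_score = (offset, end - start, mism), score
    return best


def categorize_pair(fwd, rev, min_overlap=12):
    """'merged', or failure Category 'A', 'B' or 'C' as defined above."""
    hit = best_overlap(fwd, rev, min_overlap)
    if hit is None:
        return "C"  # no usable overlap at all
    offset, _, mism = hit
    # Overhang: one read extends past the start of the other.
    overhang = offset < 0 or offset + len(rev) < len(fwd)
    if mism == 0:
        return "A" if overhang else "merged"
    if mism <= 2:
        return "B"  # otherwise good overlap with 1-2 mismatches
    return "C"  # excessive mismatches


print(categorize_pair("ACGT", "ACGT"))  # C: reads shorter than min_overlap
```

Tallying categories over a few hundred rejected pairs indicates which parameter to relax: mostly Category A points to trimOverhang, mostly Category B to maxMismatch, and mostly Category C to truncation lengths that leave too little overlap.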

Protocol 2: Systematic Parameter Optimization Experiment

Objective: To empirically determine the optimal trimOverhang and maxMismatch settings for a specific dataset.

Reagent & Computational Toolkit: Table 2: Research Reagent & Software Solutions

| Item | Function in Protocol |

|---|---|

| DADA2 R Package (v1.28+) | Core platform for read merging, error modeling, and ASV inference. |

| Short Read Archive (SRA) Toolkit | For downloading comparator public dataset FASTQ files. |

| DECIPHER R Package | For multiple sequence alignment of failed merges to diagnose root cause. |

| PhiX or Mock Community Control | Known sequence dataset to benchmark false merge rates under different parameters. |

| High-Performance Computing (HPC) Cluster | Enables parallel processing of multiple parameter combinations across large datasets. |

Procedure:

- Design Experiment Matrix: Create the parameter combinations to test, e.g., expand.grid(trimOverhang = c(FALSE, TRUE), maxMismatch = 0:2), which yields six combinations.

- Parallel Merging: Use mclapply (or bplapply from BiocParallel) to run mergePairs with each parameter set on the same denoised input data.

- Quantitative Metrics Collection: For each run, calculate: (i) Merge Rate, (ii) Number of ASVs after merging and chimera removal, (iii) Retention rate of spike-in control sequences.

- Downstream Analysis: Process each merged output through the remaining DADA2 steps (makeSequenceTable, removeBimeraDenovo). Compare alpha-diversity (Shannon Index) and beta-diversity (Bray-Curtis PCoA) between parameter sets.

- Decision Point: Select the parameter set that maximizes merge rate without inflating ASV richness beyond expected biological variation and without losing control sequences. The combination of trimOverhang=TRUE and maxMismatch=1 is often the optimal corrective step.
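The experiment matrix and decision rule can be expressed compactly. In this sketch the 10% richness-inflation ceiling and the requirement that every spike-in be retained are assumptions standing in for "expected biological variation"; thresholds should be chosen for the study at hand, and the metric values would come from the runs described above. The function name is hypothetical.

```python
from itertools import product

# (trimOverhang, maxMismatch) combinations from the experiment matrix
GRID = list(product([False, True], [0, 1, 2]))


def choose_parameters(results, max_richness_inflation=0.10):
    """Pick the highest merge rate among settings that keep every spike-in and
    inflate ASV richness by at most max_richness_inflation over the strict
    default (False, 0), which must be among the results.

    results: dict (trimOverhang, maxMismatch) -> {'merge_rate', 'n_asv',
             'spikein_retention'} (retention as a fraction, 1.0 = all kept).
    """
    baseline = results[(False, 0)]["n_asv"]
    eligible = [
        params for params, r in results.items()
        if r["spikein_retention"] >= 1.0
        and r["n_asv"] <= baseline * (1 + max_richness_inflation)
    ]
    if not eligible:
        return (False, 0)  # fall back to the strict default
    return max(eligible, key=lambda params: results[params]["merge_rate"])


print(len(GRID))  # 6
```

Constraining on richness and control retention before maximizing merge rate keeps the selection from rewarding settings that simply merge more non-homologous pairs.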

Workflow Visualization

Title: DADA2 Merge Rate Troubleshooting and Optimization Workflow

Integrating this targeted diagnostic and optimization protocol into the DADA2 workflow, as detailed in the encompassing thesis, directly addresses a major bottleneck in amplicon sequencing analysis. By moving beyond defaults to data-driven parameter selection for trimOverhang and maxMismatch, researchers can significantly improve read yield and representation, thereby enhancing the statistical power and biological accuracy of subsequent ASV-based analyses in drug development and microbial ecology.

Optimizing Filtering for Low-Biomass or Clinical Samples (e.g., stool, swabs, tissue)

Within the broader thesis on the DADA2 pipeline for amplicon sequence variant (ASV) research, sample preparation and initial data filtering are critical. Clinical and low-biomass samples present unique challenges: high host DNA contamination, variable microbial load, and potential inhibitors. This application note details optimized filtering protocols for such samples to ensure high-fidelity input for the DADA2 pipeline, which is sensitive to low-frequency sequences and requires high-quality, error-filtered reads.

The table below summarizes the primary contaminants and recommended filtering thresholds for different sample types, based on current literature and empirical data.

Table 1: Common Contaminants and Initial Filtering Targets for Clinical/Low-Biomass Samples

| Sample Type | Primary Challenge | Typical Host DNA % | Recommended Minimum Microbial Reads Post-Filtering | Key Inhibitor |

|---|---|---|---|---|

| Stool | Inhibitors (bile salts, polysaccharides), high biomass | <5% | >50,000 | Complex carbohydrates |

| Buccal Swab | Extremely high human DNA load | 70-95% | >10,000 | Human cells, mucins |

| Tissue (e.g., biopsy) | Very low microbial biomass, high host DNA | >99% | >1,000 | Host genomic DNA |

| Skin Swab | Low biomass, reagent contamination | 50-90% | >5,000 | Keratin, sebum |

| Sputum | Viscosity, human cells, non-human host DNA | 60-80% | >20,000 | Mucins, human cells |

Detailed Experimental Protocols

Protocol 1: Dual-Size Selection for Host DNA Depletion (Tissue/Swabs)

This protocol maximizes microbial DNA recovery while depleting host genomic DNA.

Materials:

- Sample: Homogenized tissue lysate or swab eluate.

- Reagents: Agencourt AMPure XP beads (Beckman Coulter), NEBNext Microbiome DNA Enrichment Kit, PBS, Proteinase K.

- Equipment: Magnetic rack, thermomixer, Qubit fluorometer, TapeStation.

Methodology:

- Initial Lysis: Perform mechanical lysis (bead-beating) combined with enzymatic lysis (Proteinase K, lysozyme) for 1 hour at 56°C.

- Crude DNA Extraction: Use a phenol-chloroform or commercial kit extraction. Elute in 50 µL EB buffer.

- Large DNA Removal (Host Depletion):

- Add 0.5X volume of AMPure XP beads to the eluate. Mix and incubate for 10 min.

- Place on magnet. Transfer supernatant (containing smaller microbial DNA) to a new tube. This step depletes large human genomic fragments.

- Microbial DNA Capture:

- Add 1.5X volume of AMPure XP beads to the supernatant from step 3. Incubate 10 min.

- Place on magnet, wash twice with 80% ethanol.

- Elute DNA in 25 µL EB buffer. Quantify with Qubit HS dsDNA assay.

- Validation: Analyze fragment size distribution via TapeStation. Expect a shift towards smaller fragments (<5 kb).

Protocol 2: Inhibitor Removal and Biomass Normalization for Stool Samples

This protocol standardizes input to reduce batch effects in downstream DADA2 processing.

Materials:

- Reagents: ZymoBIOMICS DNA Miniprep Kit, Inhibitor Removal Technology (IRT) beads (e.g., OneStep PCR Inhibitor Removal Kit), Guanidine Thiocyanate.

- Equipment: Microcentrifuge, vortex, spectrophotometer (Nanodrop).

Methodology:

- Homogenization & Inhibition Binding: Weigh 100-200 mg of stool. Add to lysis tube with garnet beads and 800 µL of Guanidine Thiocyanate-based lysis buffer. Vortex vigorously for 15 minutes.

- Inhibitor Removal: Transfer supernatant to a tube containing 200 µL of IRT beads. Vortex for 5 minutes. Centrifuge at 13,000 x g for 2 min.

- DNA Binding: Transfer cleared supernatant to a Zymo-Spin column. Process according to kit instructions.

- Biomass Normalization (qPCR-based):

- Perform a universal 16S rRNA gene qPCR (e.g., 515F/806R) on all extracted samples.

- Calculate the mean Cq value for the batch.

- Dilute or concentrate samples using a speed-vac or ethanol precipitation to normalize all samples to within 1 Cq of the mean.

- Re-quantify normalized DNA with Qubit HS assay.
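The normalization target can be translated into a fold change, assuming a known amplification efficiency: each cycle of difference corresponds to a (1 + E)-fold difference in template, so a sample whose Cq is ΔCq cycles below the batch mean needs roughly (1 + E)^ΔCq-fold dilution. The helper below is hypothetical and assumes 100% efficiency (perfect doubling) by default.

```python
import statistics


def normalization_fold(cq, batch_mean_cq, tolerance=1.0, efficiency=1.0):
    """Fold change needed to bring a sample to the batch-mean template level.

    > 1 means dilute by that factor (lower Cq = more template), < 1 means
    concentrate by 1/fold, and 1.0 means already within `tolerance` cycles.
    efficiency=1.0 assumes perfect doubling per cycle.
    """
    delta = batch_mean_cq - cq
    if abs(delta) <= tolerance:
        return 1.0
    return (1.0 + efficiency) ** delta


cqs = {"S1": 20.0, "S2": 24.0, "S3": 22.5}
mean_cq = statistics.mean(cqs.values())  # 22.166...
for sample, cq in cqs.items():
    print(sample, round(normalization_fold(cq, mean_cq), 2))
```

If the assay's measured efficiency is below 100%, passing it explicitly avoids over-diluting high-template samples.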

Visualized Workflows

Title: Host DNA Depletion Workflow for Tissue/Swabs

Title: Stool Sample Normalization & Inhibitor Removal

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Optimized Filtering of Challenging Samples

| Item | Function | Key Consideration for Low-Biomass Samples |

|---|---|---|

| NEBNext Microbiome DNA Enrichment Kit | Selective binding of methylated (host) DNA, enriching for microbial DNA. | Critical for tissue biopsies; reduces host DNA to <50%. |

| Agencourt AMPure XP Beads | Size-selective magnetic bead-based purification. | Dual-size selection protocol depletes host gDNA without column loss. |

| ZymoBIOMICS DNA Miniprep Kit | Efficient lysis and inhibitor removal for complex samples. | Includes bead-beating tubes essential for robust Gram-positive lysis. |

| OneStep PCR Inhibitor Removal Kit | Binds humic acids, bile salts, and other common inhibitors. | Essential for stool and soil samples to prevent polymerase inhibition in later steps. |

| Proteinase K (Molecular Grade) | Digests proteins and inactivates nucleases during lysis. | Use at high concentration (20 mg/mL) for tissue samples. |

| Lysozyme | Breaks down bacterial cell walls (Gram-positive). | Must be used in combination with mechanical lysis for full community representation. |

| Universal 16S qPCR Assay | Quantifies bacterial load pre-normalization. | Prevents over-sequencing of low-biomass samples, saving costs and improving DADA2 error models. |

| Qubit HS dsDNA Assay | Accurate quantification of low-concentration DNA. | Superior to spectrophotometry for assessing purity and yield of filtered extracts. |

Integration with the DADA2 Pipeline

The optimized filtering protocols directly feed into the initial quality filtering steps of DADA2 (filterAndTrim). Cleaner, normalized input reduces variance in read quality profiles, leading to more accurate error rate learning and ASV inference. Specifically, reduced host DNA contamination minimizes non-bacterial sequences that can cause spurious ASV calls or taxonomic misassignment in downstream steps such as assignTaxonomy. Implementing these pre-DADA2 protocols is essential for producing robust, reproducible ASV data from heterogeneous clinical sample sets.
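The filterAndTrim setting most directly tied to input quality is maxEE, which discards reads whose expected number of errors, the sum of 10^(-Q/10) over the read's Phred scores, exceeds a threshold. The sketch below computes that quantity from a Phred+33 quality string; the helper names and the threshold of 2 (a commonly used setting) are illustrative.

```python
def phred33(quality: str) -> list:
    """Decode a FASTQ quality string (Phred+33 encoding) to integer scores."""
    return [ord(ch) - 33 for ch in quality]


def expected_errors(scores) -> float:
    """Sum of per-base error probabilities 10^(-Q/10)."""
    return sum(10 ** (-q / 10) for q in scores)


def passes_max_ee(quality: str, max_ee: float = 2.0) -> bool:
    return expected_errors(phred33(quality)) <= max_ee


print(phred33("I#"))                         # [40, 2]
print(round(expected_errors([20] * 10), 6))  # 0.1
print(passes_max_ee("#####"))                # False: five Q2 bases give ~3.15
```

Because expected errors accumulate along the read, a few very low-quality bases can fail a read that is otherwise high quality, which is one reason truncating low-quality tails before filtering retains more reads.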

Within the broader thesis on the DADA2 pipeline for Amplicon Sequence Variants (ASVs) research, denoising parameters are critical for balancing error correction against the retention of rare biological variants. The OMEGA_A parameter and the banding size (BAND_SIZE) are core to the algorithm's divisive partitioning process. Overly aggressive denoising, often manifesting as an unjustified collapse of true rare variants into abundant sequences, is a common challenge that compromises resolution. These Application Notes detail the diagnostic signs and provide protocols for parameter adjustment to optimize specificity and sensitivity in ASV inference, which is paramount for downstream analyses in therapeutic and ecological research.

Understanding the Parameters: OMEGA_A and BAND_SIZE

DADA2's core algorithm models sequencing errors and partitions reads into ASVs. Two parameters control the stringency of this partitioning:

- OMEGA_A: The p-value threshold for declaring a new partition (a potential ASV). A lower OMEGA_A (e.g., 1e-50 rather than the default 1e-40) is more stringent, requiring stronger evidence that a read is not an error of an existing partition before a new partition is created. Overly stringent settings can cause biologically distinct rare variants to be incorrectly folded into more abundant sequences.

- BAND_SIZE: To limit computation during pairwise alignment, DADA2 restricts comparisons to a band of this width around the alignment diagonal. A smaller BAND_SIZE speeds computation but can prevent correct alignment of reads with more indels, potentially producing spurious partitions or preventing such reads from joining their true partition. An overly small band can therefore artificially inflate the number of partitions, while an overly large one slows computation unnecessarily.
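To make OMEGA_A concrete, the sketch below computes the abundance p-value described in the original DADA2 paper (Callahan et al. 2016). For a partition whose center sequence j has n_j reads, and a per-read probability lambda_ji that j is misread as sequence i, the number of error copies of i is modelled as Poisson with mean n_j * lambda_ji, conditioned on i being observed at least once; a new partition is formed when this p-value falls below OMEGA_A. This is a simplified Python sketch, not the dada2 code: lambda_ji is taken as a given input (dada2 derives it from its learned, quality-aware error model), and any further corrections the package applies are not modelled.

```python
import math


def abundance_pvalue(a_i, n_j, lambda_ji):
    """P(X >= a_i | X >= 1) for X ~ Poisson(n_j * lambda_ji).

    a_i: observed reads of candidate sequence i (>= 1)
    n_j: reads in the partition whose center is sequence j
    lambda_ji: assumed per-read probability that j is read as i
    """
    lam = n_j * lambda_ji
    if lam <= 0 or a_i < 1:
        raise ValueError("need a positive expected count and a_i >= 1")
    # Sum the Poisson upper tail term by term (log-space pmf) so tiny p-values stay accurate.
    tail, k = 0.0, a_i
    while True:
        term = math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))
        tail += term
        # Past the mode the terms shrink; stop once they no longer change the sum.
        if k > lam and term <= tail * 1e-16:
            break
        k += 1
    return tail / -math.expm1(-lam)  # divide by P(X >= 1) = 1 - exp(-lam)


def forms_new_partition(a_i, n_j, lambda_ji, omega_a=1e-40):
    """True if i is too abundant to be explained as errors of j at threshold omega_a."""
    return abundance_pvalue(a_i, n_j, lambda_ji) < omega_a


if __name__ == "__main__":
    # Parent with 10,000 reads and an assumed error-path probability of 1e-4 (mean of 1 copy).
    for reads in (2, 10, 50):
        print(reads, abundance_pvalue(reads, 10_000, 1e-4),
              forms_new_partition(reads, 10_000, 1e-4))
```

With a mean of one expected error copy, even 10 observed copies remain consistent with error at the default threshold, whereas 50 copies do not; raising OMEGA_A toward 1e-20 lowers the abundance needed to split off a new ASV, which is the sensitivity gain (and false-positive risk) described in Table 1.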

Table 1: Default and Typical Adjustment Ranges for Key DADA2 Denoising Parameters

| Parameter | Default Value (dada2 R package) | Typical Range for Adjustment | Primary Effect of Increasing Value |

|---|---|---|---|

| OMEGA_A | 1e-40 | 1e-50 to 1e-10 | Less aggressive: more permissive in creating new partitions, potentially increasing sensitivity to rare variants (risk of false positives). |

| BAND_SIZE | 16 | 16 to 64 | More computationally intensive: allows alignment of reads with more indels, which can improve accuracy for datasets with high indel rates. |
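The effect of BAND_SIZE can be illustrated with a banded global alignment: only cells within a fixed distance of the main diagonal are filled, so any alignment whose cumulative insertion/deletion offset exceeds the band is never considered. The Python sketch below uses unit edit costs for simplicity; dada2 itself scores alignments with its own match, mismatch and gap parameters (settable through setDadaOpt()), so the numbers here are illustrative only, and the toy strings are hypothetical.

```python
import math


def banded_edit_distance(a, b, band):
    """Unit-cost global alignment restricted to cells with |i - j| <= band.

    Returns the cheapest alignment that stays inside the band, or math.inf
    when the end cell (len(a), len(b)) itself lies outside the band.
    """
    n, m = len(a), len(b)
    if abs(n - m) > band:
        return math.inf
    prev = [math.inf] * (m + 1)
    for j in range(min(m, band) + 1):
        prev[j] = j  # row 0: j insertions
    for i in range(1, n + 1):
        cur = [math.inf] * (m + 1)  # cells outside the band stay infinite
        lo, hi = max(0, i - band), min(m, i + band)
        if lo == 0:
            cur[0] = i  # column 0: i deletions
        for j in range(max(1, lo), hi + 1):
            sub = 0 if a[i - 1] == b[j - 1] else 1
            cur[j] = min(prev[j - 1] + sub,  # match or mismatch
                         prev[j] + 1,        # deletion from a
                         cur[j - 1] + 1)     # insertion into a
        prev = cur
    return prev[m]


if __name__ == "__main__":
    # Same letters, shifted by two positions: needs an offset of 2 to align cheaply.
    x, y = "XXABCDEFGH", "ABCDEFGHYY"
    print(banded_edit_distance(x, y, 2), banded_edit_distance(x, y, 1))
```

With a band of 2 the sketch finds the indel-based alignment (cost 4: two deletions and two insertions), whereas a band of 1 forces ten mismatches. This is the sense in which a band that is too narrow for a dataset's indel profile makes related reads look more different than they are.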

Diagnostic Signs of Overly Aggressive Denoising

Researchers should investigate parameter adjustment if the following signs are observed in their DADA2 output:

- Unexpectedly Low ASV Count: A drastic reduction in ASVs compared to expected diversity based on mock community controls or prior similar studies.

- Loss of Known Rare Variants: In mock community experiments, known low-abundance strains or sequences are not recovered as distinct ASVs.

- Excessive Collapsing of Sequence Variants: Multiple unique sequences that differ at high-quality base positions are collapsed into a single ASV where biological expectation points to microdiversity.

- Poor Resolution in High-Diversity Samples: The denoising output fails to reflect the expected diversity gradient or community complexity of environmental samples.

Experimental Protocol for Parameter Optimization

This protocol outlines a systematic approach to diagnose and correct overly aggressive denoising.

Protocol 4.1: Diagnostic Run with Mock Community

Objective: To establish ground truth performance of current OMEGA_A/BAND_SIZE settings.

- Input: Sequence data from a validated mock microbial community with known composition and abundances (e.g., ZymoBIOMICS, ATCC MSA-1000).

- Processing: Run the full DADA2 pipeline (filtering, dereplication, denoising, chimera removal) using your standard parameters.

- Analysis:

- Map inferred ASVs to the known reference sequences for the mock community.

- Calculate metrics: Sensitivity (% of known variants recovered as unique ASVs) and Precision (% of inferred ASVs that correspond to true variants).

- Output Decision: If sensitivity is unacceptably low (<95% for well-represented variants), overly aggressive denoising is likely. Proceed to Protocol 4.2.
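The two metrics in Protocol 4.1 reduce to set comparisons once inferred ASVs and expected mock sequences cover the same amplicon region. The sketch below assumes exact string matching against reference amplicons already trimmed to the sequenced region (a simplification; near-matches are usually inspected separately) and treats identical amplicons from different strains as one expected variant. The function name and toy sequences are hypothetical.

```python
def mock_benchmark(inferred_asvs, reference_amplicons):
    """Return (sensitivity %, precision %) using exact sequence matches.

    inferred_asvs: ASV sequences output by the pipeline
    reference_amplicons: expected mock sequences, trimmed to the same region
    """
    expected = set(reference_amplicons)  # identical amplicons count once
    inferred = set(inferred_asvs)
    true_positives = inferred & expected
    sensitivity = 100.0 * len(true_positives) / len(expected) if expected else 0.0
    precision = 100.0 * len(true_positives) / len(inferred) if inferred else 0.0
    return sensitivity, precision


if __name__ == "__main__":
    refs = ["AAAA", "CCCC", "GGGG", "TTTT"]
    asvs = ["AAAA", "CCCC", "GGGG", "GGGA"]  # one variant missed, one spurious ASV
    print(mock_benchmark(asvs, refs))
```

A sensitivity below the 95% threshold in the Output Decision step would then route the run to Protocol 4.2.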

Protocol 4.2: Iterative Parameter Adjustment and Benchmarking

Objective: To find the parameter set that optimizes sensitivity without a catastrophic loss of precision.

- Design a Parameter Grid: Create a matrix of values to test. Example:

OMEGA_A: [1e-50, 1e-40 (default), 1e-30, 1e-20]

BAND_SIZE: [16 (default), 32, 64]

- Iterative Denoising Runs: For each parameter combination, run the DADA2 denoising step, the dada() function, on the mock community data, keeping all other steps constant. Both parameters can be passed to dada() as named arguments (e.g., OMEGA_A = 1e-30, BAND_SIZE = 32).

- Benchmarking: For each run, recalculate the Sensitivity and Precision metrics (Protocol 4.1). Also record the total number of non-chimeric ASVs.

- Visualization & Selection: Plot Sensitivity against Precision for all runs. The optimal parameter set typically sits at the "elbow" of the curve, where the two metrics are best balanced. Use this set for your environmental or experimental data.
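The grid and selection steps of Protocol 4.2 can be scripted. The Python sketch below enumerates the example grid (each combination would correspond to one dada() call with OMEGA_A and BAND_SIZE passed as named arguments). Because the "elbow" is a visual judgment, it substitutes a simple numeric proxy: the run closest to the ideal point of 100% sensitivity and 100% precision, with ties going to the smaller, cheaper BAND_SIZE. That proxy is an assumption of this sketch, not a DADA2 feature; the example input is copied from Table 2.

```python
import itertools
import math

OMEGA_A_GRID = [1e-50, 1e-40, 1e-30, 1e-20]  # 1e-40 is the dada2 default
BAND_SIZE_GRID = [16, 32, 64]                # 16 is the dada2 default


def parameter_grid():
    """All (OMEGA_A, BAND_SIZE) combinations to benchmark."""
    return list(itertools.product(OMEGA_A_GRID, BAND_SIZE_GRID))


def pick_run(runs):
    """Choose the run nearest (100, 100) in sensitivity/precision space.

    Ties go to the smaller BAND_SIZE, which is cheaper to compute.
    """
    def score(run):
        distance = math.hypot(100 - run["sensitivity"], 100 - run["precision"])
        return (distance, run["band_size"])
    return min(runs, key=score)


# Benchmark values copied from Table 2.
TABLE_2_RUNS = [
    {"id": "R1", "omega_a": 1e-50, "band_size": 16, "sensitivity": 85, "precision": 100},
    {"id": "R2", "omega_a": 1e-40, "band_size": 16, "sensitivity": 90, "precision": 100},
    {"id": "R3", "omega_a": 1e-30, "band_size": 16, "sensitivity": 100, "precision": 95.7},
    {"id": "R4", "omega_a": 1e-20, "band_size": 16, "sensitivity": 100, "precision": 85.7},
    {"id": "R5", "omega_a": 1e-40, "band_size": 32, "sensitivity": 90, "precision": 100},
    {"id": "R6", "omega_a": 1e-30, "band_size": 32, "sensitivity": 100, "precision": 95.7},
]

if __name__ == "__main__":
    print(len(parameter_grid()), "combinations")
    best = pick_run(TABLE_2_RUNS)
    print(best["id"], best["omega_a"], best["band_size"])
```

On the Table 2 values this proxy selects R3 (OMEGA_A = 1e-30, BAND_SIZE = 16): R3 and R6 tie on sensitivity and precision, and the tie-break favors the default band.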

Table 2: Example Mock Community Benchmarking Results

| Run ID | OMEGA_A | BAND_SIZE | Non-Chimeric ASVs | Sensitivity (%) | Precision (%) |

|---|---|---|---|---|---|

| R1 | 1e-50 | 16 | 18 | 85 | 100 |

| R2 (Default) | 1e-40 | 16 | 20 | 90 | 100 |

| R3 | 1e-30 | 16 | 23 | 100 | 95.7 |

| R4 | 1e-20 | 16 | 28 | 100 | 85.7 |

| R5 | 1e-40 | 32 | 20 | 90 | 100 |

| R6 | 1e-30 | 32 | 23 | 100 | 95.7 |

Decision Workflow and Application to Research Data

Diagram Title: Decision Workflow for Adjusting DADA2 Denoising Parameters

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DADA2 Parameter Optimization Studies

| Item | Function & Rationale |

|---|---|

| Validated Mock Microbial Community (e.g., ZymoBIOMICS D6300) | Provides ground truth for benchmarking. A standard with known, staggered abundances is best suited to testing sensitivity to rare variants. |

| High-Quality Extracted gDNA from mock and environmental samples | Consistent, inhibitor-free input DNA is crucial for reproducible sequencing and denoising results. |

| Platform-Specific Sequencing Kit (e.g., Illumina MiSeq Reagent Kit v3) | Standardized reagent ensures consistent error profiles, which the DADA2 model learns from. |

| Bioinformatics Compute Environment (R ≥ 4.0, dada2 ≥ 1.28) | Essential for running the pipeline. Version control ensures parameter behavior is as documented. |

| Reference Sequence Database (e.g., SILVA, Greengenes) for mock community members | Required for accurate mapping of inferred ASVs to known strains during benchmarking. |

| Sample-Specific Metadata with detailed collection/processing info | Critical for contextualizing denoising results and identifying technical vs. biological variation. |

Addressing Chimera Removal Challenges in High-Diversity Communities

Within the broader thesis on optimizing the DADA2 pipeline for robust Amplicon Sequence Variant (ASV) inference, effective chimera removal is a critical, non-trivial step. High-diversity communities, such as those found in soil, sediment, or complex microbiomes, present unique challenges. The high sequence dissimilarity and complex template switching during PCR can lead to both a higher formation rate of chimeras and increased difficulty in detecting them against a diverse biological background. This application note details protocols and considerations for this specific scenario, ensuring the fidelity of ASV data crucial for downstream analysis in drug development and ecological research.

Quantitative Comparison of Chimera Detection Tools

The performance of chimera detection algorithms varies significantly with community complexity and sequencing depth. The following table summarizes key metrics from recent benchmarks conducted on simulated high-diversity datasets (16S rRNA gene, V4 region).

Table 1: Performance Metrics of Chimera Detection Methods in High-Diversity Simulated Communities

| Method | Algorithm Type | Avg. Sensitivity (%) | Avg. Precision (%) | False Positive Rate (%) | Computation Time (min per 100k seq) | Reference / Package |

|---|---|---|---|---|---|---|

| DADA2 (removeBimeraDenovo) | de novo | 89.2 | 94.5 | 2.1 | ~15 | Callahan et al. 2016 |

| UCHIME2 (de novo mode) | de novo | 85.7 | 91.8 | 3.5 | ~12 | Edgar et al. 2011 |

| UCHIME2 (reference mode) | Reference-based | 92.1 | 98.3 | 0.8 | ~8* | Edgar et al. 2011 |

| DECIPHER (FindChimeras) | Reference-based (search-based) | 82.4 | 96.7 | 1.9 | ~45 | Wright et al. 2012 |

| VSEARCH (uchime3_denovo) | de novo | 93.5 | 90.1 | 4.9 | ~5 | Rognes et al. 2016 |

*Assumes a curated reference database is loaded in memory. Sensitivity: Proportion of true chimeras correctly identified. Precision: Proportion of predicted chimeras that are true chimeras.

Detailed Protocols

Protocol 3.1: Optimized DADA2 Chimera Removal for Complex Communities

This protocol extends the standard DADA2 workflow, focusing on parameters for high-diversity data.

Materials:

- Merged paired-end sequence table in DADA2 (mergePairs() output assembled with makeSequenceTable()), taken before chimera removal.

- High-performance computing cluster recommended for large datasets.

Procedure:

- Input Preparation: Ensure your sequence table (the sample-by-sequence matrix returned by makeSequenceTable() in R) comes from the merging step, before chimera removal.

- Method Selection: Use the removeBimeraDenovo() function with the method="consensus" argument (the default). This method checks each sample independently for chimeras and then makes a consensus decision for each sequence, which is more robust to sample-specific artifacts.

- Parameter Tuning:

- minFoldParentOverAbundance: Set this to 1.2 (the default is 1.5 for method="consensus" and 2 for the "pooled" and "per-sample" methods). In diverse communities, true parents may be at lower abundance, so a less stringent fold improves sensitivity.

- allowOneOff: Set to TRUE. Sequences that are one mismatch or indel away from an exact bimera are then also flagged as chimeric, which catches chimeras that carry an additional sequencing or PCR error.

- minSampleFraction: The fraction of samples in which a sequence must be flagged as bimeric for it to be classified as a bimera (default 0.9). Lowering it to 0.01 in large, multi-sample studies removes a sequence flagged in almost any sample, which is far more aggressive than the default and should be checked against mock community controls.

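- Execution: Run removeBimeraDenovo() on the sequence table with the chosen settings, e.g., seqtab.nochim <- removeBimeraDenovo(seqtab, method = "consensus", minFoldParentOverAbundance = 1.2, allowOneOff = TRUE, multithread = TRUE, verbose = TRUE).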

- Validation: Always report the percentage of input reads removed as chimeras (in R, 1 - sum(seqtab.nochim)/sum(seqtab)). For high-diversity communities, expect 15-30% removal. Values outside this range may indicate parameter issues or exceptional biology.
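The core test behind de novo bimera detection can be sketched in a few lines: a sequence is flagged if its left part exactly matches one sufficiently more abundant sequence and its right part matches another. The Python sketch below follows that idea, filters candidate parents in the spirit of minFoldParentOverAbundance, and computes the fraction of reads removed for the Validation step. It is a deliberate simplification of removeBimeraDenovo(): it compares equal-length sequences position by position, with no alignment, shifts (maxShift), one-off matching (allowOneOff), minimum parent abundance, or per-sample consensus. The dict-of-counts input format is hypothetical.

```python
def is_exact_bimera(query, abundances, min_fold=1.5):
    """True if query = prefix of one parent + suffix of another (exact, no shifts).

    abundances: hypothetical dict of sequence -> read count within one sample.
    Parents must be other sequences of equal length with at least
    min_fold times the query's abundance.
    """
    n = len(query)
    if n == 0:
        return False
    q_abund = abundances[query]
    best_left = best_right = 0
    for parent, p_abund in abundances.items():
        if parent == query or len(parent) != n or p_abund < min_fold * q_abund:
            continue
        left = 0
        while left < n and parent[left] == query[left]:
            left += 1
        right = 0
        while right < n and parent[n - 1 - right] == query[n - 1 - right]:
            right += 1
        best_left = max(best_left, left)
        best_right = max(best_right, right)
    # A left match and a right match that together span the query imply two
    # different parents (a single parent would have to be identical, excluded above).
    return best_left + best_right >= n


def fraction_reads_removed(abundances, min_fold=1.5):
    """Share of reads belonging to sequences flagged as bimeras."""
    total = sum(abundances.values())
    flagged = sum(count for seq, count in abundances.items()
                  if is_exact_bimera(seq, abundances, min_fold))
    return flagged / total if total else 0.0


if __name__ == "__main__":
    sample = {"AAAACCCC": 100, "GGGGTTTT": 100, "AAAATTTT": 10}
    # Left half from the first parent, right half from the second.
    print(is_exact_bimera("AAAATTTT", sample))
    print(fraction_reads_removed(sample))
```

Lowering min_fold admits less abundant parents, which is why the relaxed minFoldParentOverAbundance setting above raises sensitivity in diverse communities.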

Protocol 3.2: Hybrid Reference-Based Verification

To mitigate false positives from de novo methods, use a reference-based check as a secondary filter.

Materials:

- ASV table after de novo chimera removal (seqtab.nochim).

- Curated reference database (e.g., SILVA, UNITE) formatted for UCHIME/VSEARCH.

Procedure:

- Export the seqtab.nochim sequences to FASTA.

- Use VSEARCH's --uchime_ref command to check each ASV against a high-quality reference database.

- Interpretation: ASVs flagged as chimeric here are high-confidence artifacts and should be removed. ASVs flagged as borderline should be manually inspected (e.g., via BLAST).
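Before VSEARCH can check the ASVs, they must be written to FASTA. The sketch below assumes the sequences and their total abundances have already been exported from R (for example via getSequences(seqtab.nochim) and colSums(seqtab.nochim)) into a Python dict; the ASV1, ASV2, ... labels and the file names are hypothetical. It writes size-annotated headers and builds, but does not run, a vsearch --uchime_ref command using the --db, --nonchimeras, --chimeras and --uchimeout options; confirm these against the help output of your installed VSEARCH version.

```python
def asvs_to_fasta(seq_abundances):
    """FASTA text with ASVs ordered by decreasing abundance and ';size=' headers."""
    ranked = sorted(seq_abundances.items(), key=lambda kv: (-kv[1], kv[0]))
    lines = []
    for rank, (seq, count) in enumerate(ranked, start=1):
        lines.append(f">ASV{rank};size={count}")
        lines.append(seq)
    return "\n".join(lines) + "\n"


def uchime_ref_command(query_fasta, reference_fasta, prefix):
    """Argument list for a reference-based chimera check with VSEARCH."""
    return [
        "vsearch", "--uchime_ref", query_fasta,
        "--db", reference_fasta,
        "--nonchimeras", f"{prefix}.nonchimeras.fasta",
        "--chimeras", f"{prefix}.chimeras.fasta",
        "--uchimeout", f"{prefix}.uchime.tsv",
    ]


if __name__ == "__main__":
    print(asvs_to_fasta({"ACGTACGT": 120, "TTGACCGA": 45}), end="")
    cmd = uchime_ref_command("asvs.fasta", "reference.fasta", "asvs")
    print(" ".join(cmd))
    # To run it: write the FASTA to asvs.fasta, then
    # import subprocess; subprocess.run(cmd, check=True)
```

Keeping the command as an argument list rather than a shell string avoids quoting problems with file paths when it is eventually passed to subprocess.run.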

Visualizations

Workflow for Chimera Removal in High-Diversity Samples

Chimera Formation and Algorithm Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Chimera-Sensitive Amplicon Workflows

| Item | Function & Relevance to Chimera Mitigation | Example/Note |

|---|---|---|

| High-Fidelity DNA Polymerase | Reduces PCR errors and template-switching events, the primary cause of chimeras. Critical for high-diversity samples. | Q5 Hot Start (NEB), KAPA HiFi |

| Limited PCR Cycles | Minimizing amplification cycles directly reduces chimera formation. Optimize template concentration. | Aim for ≤ 30 cycles |

| Curated Reference Database | Essential for reference-based chimera checking and taxonomy assignment. Quality dictates verification power. | SILVA, UNITE, Greengenes (use current version) |

| Mock Community Control | Defined mix of known sequences. Allows empirical measurement of chimera formation and detection false positive/negative rates in your pipeline. | ZymoBIOMICS Microbial Community Standard |

| DMSO or Betaine | PCR additives that help amplify GC-rich templates from complex communities, promoting even amplification and reducing bias that can favor chimera formation. | Use at optimized concentrations (e.g., 2-5% DMSO) |

| Magnetic Bead Cleanup Kits | Provide consistent size selection and purification post-PCR, removing primer dimers and very short fragments that can interfere with sequencing and chimera detection. | AMPure XP, NucleoMag NGS Clean-up |

| Bioinformatics Software | Implements the algorithms for detection. Must be current and properly parameterized. | DADA2 (R), VSEARCH, USEARCH (licensed) |

1. Introduction within the DADA2 Pipeline Thesis