DADA2 ASVs in Microbial Research: A Comprehensive Guide from Theory to Application for Scientists

Abstract

This article provides a complete resource on DADA2 (Divisive Amplicon Denoising Algorithm) for generating high-resolution Amplicon Sequence Variants (ASVs). Tailored for researchers and drug development professionals, it covers foundational principles, step-by-step methodological workflows, common troubleshooting and optimization strategies, and critical validation and comparative analyses against OTU-based methods. We synthesize current best practices to enable accurate, reproducible microbiome profiling for biomedical and clinical applications.

What Are DADA2 and ASVs? Core Concepts Revolutionizing Microbiome Analysis

Amplicon Sequence Variants (ASVs) represent a paradigm shift in microbial marker-gene analysis, moving beyond the heuristic clustering of Operational Taxonomic Units (OTUs) to infer exact biological sequences. Framed within the broader thesis of DADA2-driven research, this technical guide elucidates the core principles, methodologies, and applications of ASVs, providing researchers and drug development professionals with the tools for high-resolution microbiome analysis.

Traditional OTU methods cluster sequences based on an arbitrary similarity threshold (typically 97%), inherently blurring biological reality by combining distinct sequences. ASVs, by contrast, are inferred exactly, up to the resolution of the sequencing technology, so single-nucleotide differences are treated as potentially biologically significant. This allows reproducible, precise, and granular analysis across studies.
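The contrast can be seen in a small, hypothetical Python sketch. It assumes equal-length, pre-aligned reads and uses a simple greedy, abundance-ordered centroid clustering as a stand-in for OTU picking (not the algorithm of any particular tool). Two sequences differing at a single position fall into one 97% OTU but remain two distinct exact sequences. The sketch ignores sequencing errors, which in real data also create distinct sequences; separating those from true variants is the job of DADA2's error model.

```python
from collections import Counter
from typing import Dict, List


def identity(a: str, b: str) -> float:
    """Fraction of matching positions between two equal-length, pre-aligned sequences."""
    if len(a) != len(b):
        raise ValueError("this sketch assumes equal-length, pre-aligned sequences")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def greedy_otus(reads: List[str], threshold: float = 0.97) -> Dict[str, int]:
    """Greedy centroid clustering (illustrative only): visit unique sequences from
    most to least abundant; each joins the first centroid it matches at
    >= threshold identity, otherwise it becomes a new centroid.
    Returns centroid -> total read count."""
    counts = Counter(reads)
    otus: Dict[str, int] = {}
    for seq, n in sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])):
        for centroid in otus:
            if identity(seq, centroid) >= threshold:
                otus[centroid] += n
                break
        else:
            otus[seq] = n
    return otus


def exact_variants(reads: List[str]) -> Dict[str, int]:
    """Exact sequence units: every distinct sequence is kept separately."""
    return dict(Counter(reads))


if __name__ == "__main__":
    base = "ACGT" * 25                      # hypothetical 100 bp amplicon
    variant = base[:50] + "T" + base[51:]   # one substitution at position 50
    reads = [base] * 60 + [variant] * 40
    print(len(greedy_otus(reads)))      # 1 unit at 97% identity
    print(len(exact_variants(reads)))   # 2 exact sequences
```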

Core Algorithmic Principle: DADA2

The DADA2 algorithm (Divisive Amplicon Denoising Algorithm) is a cornerstone of ASV inference. It models substitutions and indels within amplicon reads to distinguish sequencing errors from true biological variation.

Key Steps in DADA2's Denoising Process:

- Error Rate Learning: Learns the error rates of the sequencing run directly from the data.

- Dereplication: Collapses identical reads into unique sequences with their abundances.

- Denoising (Core Algorithm): Divides the unique sequences into partitions, each centered on one inferred true sequence, such that the abundance of every other sequence in a partition can be explained as errors arising from that more abundant center. It alternates between:

  - Partitioning: Assigning each sequence to the partition center most likely to have produced it.

  - Testing: Checking, under the error model, whether any sequence is too abundant to be explained as errors from its center; the most significant such sequence seeds a new partition.

- Chimera Removal: Identifies and removes chimeric sequences.
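Dereplication itself is easy to illustrate. The following minimal Python sketch (a hypothetical helper, not the dada2 API) collapses identical reads into unique sequences with abundances, most abundant first; dada2's derepFastq() additionally keeps an average quality profile for each unique sequence, which the sketch omits.

```python
from collections import Counter
from typing import List, Tuple


def dereplicate(reads: List[str]) -> List[Tuple[str, int]]:
    """Collapse identical reads into (unique sequence, abundance) pairs,
    sorted by decreasing abundance (ties broken alphabetically)."""
    counts = Counter(reads)
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


reads = ["ACGT", "ACGT", "ACGA", "ACGT", "TCGT", "ACGA"]
print(dereplicate(reads))  # [('ACGT', 3), ('ACGA', 2), ('TCGT', 1)]
```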

Quantitative Impact of ASV vs. OTU Approaches

Table 1: Comparative Analysis of OTU vs. ASV Methods

| Metric | 97% OTU Clustering | DADA2 ASV Inference | Implication |
|---|---|---|---|
| Resolution | Heuristic, approximate (~97% similarity) | Exact, single-nucleotide | ASVs detect finer ecological gradients and strain variants. |
| Reproducibility | Low; varies with clustering algorithm & parameters | High; invariant given same input & parameters | Enables direct cross-study comparison and meta-analysis. |
| Typical Output Count | Fewer, artificially consolidated units | More, biologically precise units | ASV counts track true sequence diversity more closely (though one genome with divergent 16S copies can yield several ASVs). |
| Error Handling | Errors often propagated into OTUs or filtered by abundance | Errors explicitly modeled and removed | Reduces false diversity; true variants can be retained even at low abundance (singletons are not called by default). |
| Downstream Analysis | Ecological metrics on blurred groups | Strain-level tracking, precise genotyping | Enables host-microbe linkage and targeted therapeutic development. |

Detailed Experimental Protocol: 16S rRNA Gene Analysis with DADA2

Workflow Overview:

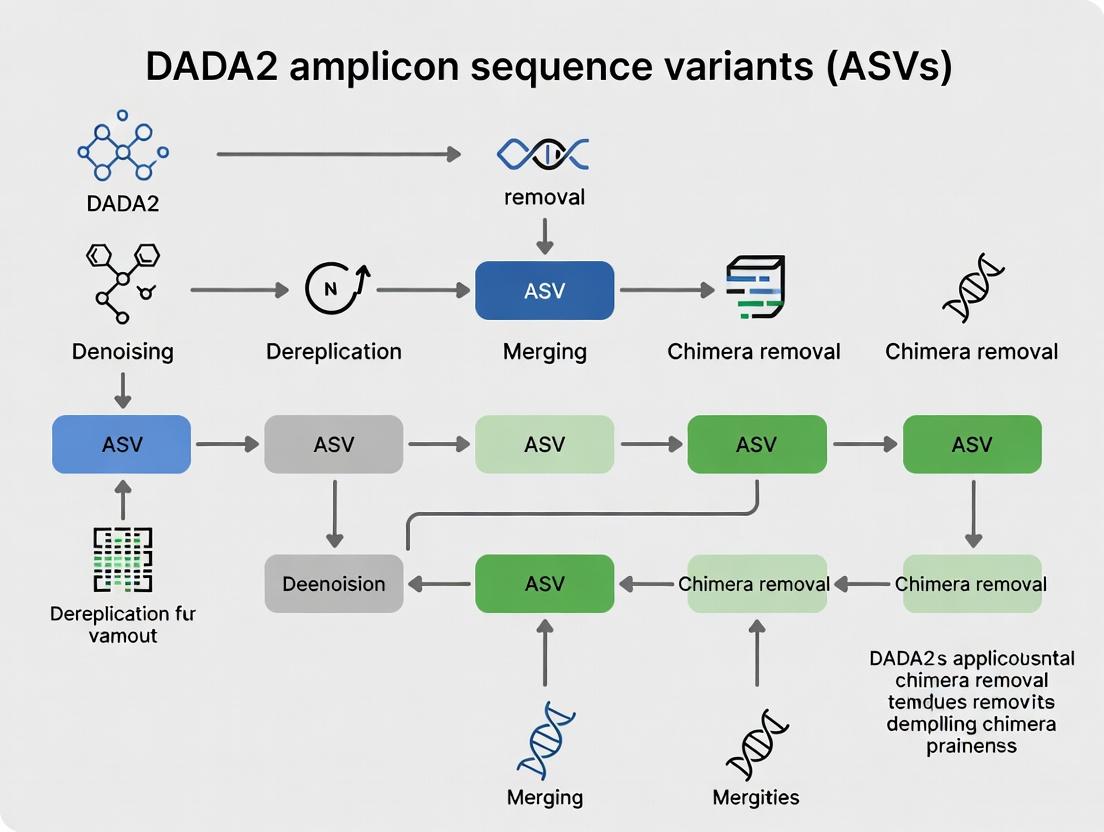

Diagram Title: DADA2 ASV Inference Workflow (16S rRNA)

Step-by-Step Protocol (R environment):

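1. Quality Filtering & Trimming: filterAndTrim().

2. Learn Error Rates: Models the error profile of the sequencing run with learnErrors().

3. Dereplication & Sample Inference: derepFastq(), then dada().

4. Merge Paired-End Reads: mergePairs().

5. Construct Sequence Table & Remove Chimeras: makeSequenceTable(), then removeBimeraDenovo().

6. Taxonomic Assignment (e.g., with SILVA): assignTaxonomy(), optionally followed by addSpecies().

7. Generate Count Matrix & Phylogenetic Tree: the chimera-free sequence table is the count matrix; a tree can be built from a multiple alignment of the ASVs (e.g., DECIPHER for alignment, phangorn for tree inference).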
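Step 4 can be illustrated with a deliberately simplified Python sketch. It assumes error-free denoised reads and accepts only an exact suffix/prefix overlap between the forward read and the reverse complement of the reverse read; dada2's mergePairs() instead aligns the two denoised reads and, by default, requires at least 12 overlapping bases with no mismatches (minOverlap = 12, maxMismatch = 0). The function names are hypothetical.

```python
from typing import Optional

COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def reverse_complement(seq: str) -> str:
    """Reverse complement of an A/C/G/T/N sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def merge_pair(fwd: str, rev: str, min_overlap: int = 12) -> Optional[str]:
    """Merge a forward read with the reverse complement of its mate.

    Tries the longest overlap first and accepts only exact matches, so it
    mimics maxMismatch = 0 but performs no alignment. Returns None if no
    exact overlap of at least min_overlap bases exists.
    """
    rc = reverse_complement(rev)
    for k in range(min(len(fwd), len(rc)), min_overlap - 1, -1):
        if fwd[-k:] == rc[:k]:
            return fwd + rc[k:]
    return None
```

For a 40 bp toy amplicon read as a 30-base forward read and a 25-base reverse read, the two share 15 bases and merge back into the full amplicon; raising min_overlap to 16 makes the merge fail.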

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for ASV-based Amplicon Studies

| Item / Reagent | Function & Purpose | Example/Notes |
|---|---|---|
| High-Fidelity PCR Mix | Amplifies target region (e.g., 16S V4) with minimal bias and error. | KAPA HiFi HotStart ReadyMix, Q5 High-Fidelity DNA Polymerase. |
| Dual-Indexed Barcoded Primers | Enables multiplexing of samples; unique dual indexes per sample allow index-hopped reads to be detected and discarded. | Illumina Nextera XT Index Kit, custom Golay-coded primers. |
| Magnetic Bead Cleanup Kits | For post-PCR purification and size selection to remove primer dimers. | AMPure XP Beads, SizeSelect beads. |
| Quantification Kit (fluorometric) | Accurate measurement of DNA concentration for library pooling normalization. | Qubit dsDNA HS Assay, Quant-iT PicoGreen. |
| Illumina Sequencing Reagents | Platform-specific chemistry for cluster generation and sequencing. | MiSeq Reagent Kit v3 (600-cycle), NovaSeq 6000 SP Reagent Kit. |
| Positive Control (Mock Community) | Validates entire wet-lab and bioinformatic pipeline; assesses accuracy & bias. | ZymoBIOMICS Microbial Community Standard. |
| Negative Extraction Control | Identifies contamination introduced during DNA extraction. | Nuclease-free water processed alongside samples. |
| Reference Database | For taxonomic assignment of ASVs. | SILVA, Greengenes, UNITE (for fungi), RDP. |
| Bioinformatics Pipeline | Executes DADA2 and subsequent analysis. | R packages (dada2, phyloseq), QIIME 2 (via q2-dada2 plugin), DADA2 in Galaxy. |

Applications in Drug Development & Therapeutic Research

The precision of ASVs enables novel applications:

- Tracking Bacterial Strain Engraftment: Precisely monitor the fate of probiotic or live biotherapeutic products (LBPs) in host microbiomes.

- Identifying Pathobionts & Biomarkers: Associate specific ASVs (potential strains) with disease states or treatment response for targeted intervention.

- Microbiome Stability Assessment: Measure subtle, strain-level shifts in community structure in response to drug candidates.

Logical Pathway for Therapeutic Discovery:

Diagram Title: ASV-Driven Therapeutic Discovery Pathway

ASVs, as exact biological sequences inferred by algorithms like DADA2, provide a robust, reproducible, and high-resolution framework for marker-gene analysis. This paradigm supersedes OTUs and is essential for advancing rigorous microbiome science, particularly in the demanding context of drug development where precision and reproducibility are paramount. The transition to ASVs empowers researchers to ask and answer questions at the appropriate biological scale, from broad ecology to actionable strain-level dynamics.

The shift from Operational Taxonomic Units (OTUs) to Amplicon Sequence Variants (ASVs) represents a paradigm change in microbial marker-gene analysis, enabling reproducible, high-resolution community profiling. Within this thesis, the DADA2 (Divisive Amplicon Denoising Algorithm 2) algorithm is positioned as the foundational statistical model that makes true biological sequence variant inference possible. It moves beyond simplistic clustering to a model-based approach that distinguishes sequencing errors from true biological variation, forming the cornerstone of modern, precise microbiome research critical for drug development and biomarker discovery.

Core Statistical Model of DADA2

DADA2 combines a parametric, quality-aware error model with a divisive partitioning algorithm to infer exact biological sequences from noisy sequencing data. Its core idea is to treat every read as an error-prone copy of one of a small set of true sample sequences, and to identify that set by partitioning the unique sequences so that each partition's members are explained as errors from its center.

The Divisive Partitioning Algorithm

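The algorithm begins with all unique sequences in a single partition centered on the most abundant sequence. For every sequence it then asks whether its observed abundance is consistent with the number of error-containing copies expected from its partition's center under the error model. If the least consistent sequence falls below the significance threshold (OMEGA_A), it becomes the center of a new partition, all sequences are reassigned to the center most likely to have produced them, and the test is repeated. The process stops when every sequence is adequately explained by its partition's center.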
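A toy Python version of this loop may help, under strong simplifying assumptions: equal-length sequences compared by Hamming distance instead of alignment, a single uniform substitution rate eps in place of DADA2's learned, quality-dependent error rates, and a simple rule that assigns each sequence to the center with the highest abundance-weighted error probability. All names are hypothetical; it illustrates the control flow only and does not reproduce dada().

```python
import math
from typing import Dict, List, Optional


def log_poisson_pmf(k: int, mu: float) -> float:
    """log P(X = k) for X ~ Poisson(mu), with mu > 0."""
    return -mu + k * math.log(mu) - math.lgamma(k + 1)


def poisson_tail(a: int, mu: float) -> float:
    """P(X >= a) for X ~ Poisson(mu); sums the tail directly so tiny values stay accurate."""
    if a <= mu:
        return max(0.0, 1.0 - sum(math.exp(log_poisson_pmf(k, mu)) for k in range(a)))
    total, k = 0.0, a
    while True:
        term = math.exp(log_poisson_pmf(k, mu))
        total += term
        if term <= total * 1e-17:  # remaining terms are negligible (or everything underflowed)
            return total
        k += 1


def error_prob(center: str, seq: str, eps: float) -> float:
    """Toy lambda: probability that a read of `center` is sequenced as `seq`, assuming
    independent substitutions at rate eps, split evenly over the 3 other bases."""
    d = sum(x != y for x, y in zip(center, seq))
    return (1 - eps) ** (len(seq) - d) * (eps / 3) ** d


def abundance_pvalue(a: int, n: int, lam: float) -> float:
    """P(X >= a | X >= 1) with X ~ Poisson(n * lam)."""
    mu = max(n * lam, 1e-300)  # guard against log(0) for very distant sequences
    return poisson_tail(a, mu) / -math.expm1(-mu)


def divisive_partition(uniques: Dict[str, int], eps: float = 0.01,
                       omega_a: float = 1e-40) -> Dict[str, str]:
    """Toy divisive partitioning. `uniques` maps sequence -> abundance;
    returns a mapping from each sequence to the center of its partition."""
    centers: List[str] = [max(uniques, key=lambda s: (uniques[s], s))]
    while True:
        # Centers stay in their own partition; others go to the center expected
        # to produce the most erroneous copies of them.
        assign = {}
        for s in uniques:
            if s in centers:
                assign[s] = s
            else:
                assign[s] = max(centers, key=lambda c: uniques[c] * error_prob(c, s, eps))
        # Reads per partition, used as n in the p-value.
        totals = {c: 0 for c in centers}
        for s, c in assign.items():
            totals[c] += uniques[s]
        # Find the sequence least consistent with being errors from its center.
        worst: Optional[str] = None
        worst_p = 1.0
        for s, c in assign.items():
            if s != c:
                p = abundance_pvalue(uniques[s], totals[c], error_prob(c, s, eps))
                if p < worst_p:
                    worst, worst_p = s, p
        if worst is None or worst_p >= omega_a:
            return assign
        centers.append(worst)  # split: the outlier seeds a new partition
```

With a 1000-read sequence, a 300-read single-substitution variant, and a few low-count error copies, the variant is split off as its own partition while the error copies stay with their respective parents.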

Error Rate Estimation

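A critical component is learning the error rates of the sequencing run (rather than of each sample individually). DADA2 estimates these rates from the data itself: learnErrors() alternates between sample inference and error-rate estimation until the two converge, tallying how often each nucleotide transition (e.g., A→C) occurs at each quality score between reads and the sequences inferred to have produced them.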
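A minimal Python sketch of the counting at the heart of error-rate estimation, assuming the true sequence behind each read is already known (in DADA2 it comes from the current round of sample inference) and ignoring quality scores, which DADA2 uses to make each transition rate a function of Q. The function name and data layout are hypothetical.

```python
from collections import Counter
from itertools import product
from typing import Dict, List, Tuple

BASES = "ACGT"


def estimate_transitions(pairs: List[Tuple[str, str]]) -> Dict[Tuple[str, str], float]:
    """Estimate P(observed base | true base) from (true sequence, read) pairs.

    Assumes equal-length, pre-aligned sequences and ignores quality scores.
    Each true base's probabilities sum to 1; unobserved true bases get 0.0.
    """
    counts: Counter = Counter()
    for true_seq, read in pairs:
        for t, r in zip(true_seq, read):
            counts[(t, r)] += 1
    rates = {}
    for t, r in product(BASES, BASES):
        row_total = sum(counts[(t, x)] for x in BASES)
        rates[(t, r)] = counts[(t, r)] / row_total if row_total else 0.0
    return rates
```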

Table 1: Key Quantitative Parameters in the DADA2 Model

| Parameter | Description | Typical Range/Value | Impact on Output |
|---|---|---|---|
| OMEGA_A | P-value threshold for partition significance | Default: 1e-40 | Higher (less stringent) values increase sensitivity to rare variants but admit more false positives; lower values are more conservative. |
| Error Rate (ϵ) | Per-nucleotide transition probability | Learned per run as a function of quality score (e.g., 10^-3 to 10^-2) | Directly influences denoising stringency. |
| BAND_SIZE | Width of banded alignment | Default: 16 | Controls computational speed/accuracy trade-off. |
| MIN_FOLD | Minimum fold over-abundance a sequence needs to form a new partition | Default: 1 | Higher values make denoising more conservative. |

P-value Calculation and Significance

For each sequence, DADA2 calculates an abundance p-value. The null hypothesis is that the sequence's reads are errors from the center of its partition. Under that hypothesis the read count is modeled as Poisson, with an expected value equal to the partition's read count times the probability (from the error model) that a read of the center is sequenced as this sequence; the p-value is the probability of observing at least as many reads as were seen, conditioned on the sequence being observed at all.
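For reference, the abundance p-value can be written as below. This follows our reading of the published DADA2 model (Callahan et al., Nature Methods, 2016), and the notation is ours: λ_ji is the probability that a read of center j is sequenced as i (a product of per-position transition probabilities, each depending on the quality score q_i(l) of i at position l), n_j is the number of reads in partition j, a_i is the abundance of sequence i, and ρ_pois(μ, a) is the Poisson probability of a events given mean μ. Sequence i seeds a new partition when p_A falls below OMEGA_A.

```latex
\lambda_{ji} = \prod_{l=1}^{L} p\bigl(j(l) \to i(l) \mid q_i(l)\bigr),
\qquad
\rho_{\mathrm{pois}}(\mu, a) = \frac{e^{-\mu}\mu^{a}}{a!},
\qquad
p_A(j \to i) = \frac{1}{1 - \rho_{\mathrm{pois}}(n_j \lambda_{ji}, 0)}
  \sum_{a = a_i}^{\infty} \rho_{\mathrm{pois}}(n_j \lambda_{ji}, a)
```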

Detailed Experimental Protocol for DADA2 Analysis

The following protocol is the standard workflow for processing 16S rRNA gene amplicon data (e.g., V4 region, Illumina MiSeq 2x250) using the DADA2 pipeline (v1.28+).

Prerequisite: Data Preparation

- Raw Data: Paired-end FASTQ files (R1 and R2).

- Metadata: Sample metadata file matching file names.

- Software: R environment (≥4.0) with the DADA2 package installed (BiocManager::install("dada2")).

Step-by-Step Methodology

Filter and Trim: Remove low-quality bases, trim primers, and enforce a minimum length.

Learn Error Rates: Estimate the sample-specific error model from the data.

Dereplication: Combine identical reads into "unique sequences" with abundances.

Core Sample Inference (Denoising): Apply the DADA2 algorithm.

Merge Paired Reads: Align forward and reverse reads to construct full denoised sequences.

Construct Sequence Table: Create an ASV abundance table (rows=samples, columns=ASVs).

Remove Chimeras: Identify and remove bimera sequences.
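The bimera test can be sketched in Python under simplifying assumptions: equal-length, gap-free sequences, no shifts, and an exact match to a left segment of one parent joined to the right segment of another. min_fold here is a hypothetical stand-in for dada2's minFoldParentOverAbundance argument; real chimera checking in removeBimeraDenovo() aligns candidates to their parents and is more permissive.

```python
from itertools import permutations
from typing import Dict


def is_bimera(child: str, child_abundance: int, candidates: Dict[str, int],
              min_fold: float = 2.0) -> bool:
    """Return True if `child` equals the left part of one parent joined to the
    right part of another, with both parents at least `min_fold` times as
    abundant as the child. Simplified: equal-length, gap-free, exact matches only."""
    parents = [
        seq for seq, n in candidates.items()
        if seq != child and len(seq) == len(child) and n >= min_fold * child_abundance
    ]
    for left, right in permutations(parents, 2):
        for k in range(1, len(child)):  # every possible breakpoint
            if child[:k] == left[:k] and child[k:] == right[k:]:
                return True
    return False
```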

Visualizing the DADA2 Workflow and Model

Diagram Title: DADA2 Bioinformatics Pipeline from Raw Data to ASVs

Diagram Title: Core Divisive Partitioning Logic of DADA2

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for DADA2-Driven ASV Research

| Item | Function in ASV Research | Key Consideration for Reproducibility |
|---|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | PCR amplification of target region (e.g., 16S V4) with minimal bias and error. | A low error rate is critical to avoid introducing artifactual variation that could be mistaken for true ASVs. |
| Standardized Primer Sets (e.g., 515F/806R for 16S) | Specific amplification of the target variable region. | Consistent primer sequence and purification (e.g., HPLC) ensure comparable results across studies. |
| Mock Microbial Community (e.g., ZymoBIOMICS) | Positive control containing known, quantifiable strains. | Validates the entire workflow, from extraction to sequencing, and assesses DADA2's error correction accuracy. |
| Magnetic Bead-Based Cleanup Kits (e.g., AMPure XP) | Size selection and purification of PCR amplicons. | Consistent bead-to-sample ratio is vital for removing primer dimers and controlling final library size. |
| Dual-Indexed Sequencing Adapters (e.g., Nextera XT) | Allows multiplexing of samples on an Illumina sequencer. | Unique dual indexing minimizes index-hopping (misassignment) artifacts. |
| PhiX Control v3 (Illumina) | Provides a balanced nucleotide library for sequencing run quality control. | Typically spiked at 1-5% to add base diversity to low-diversity amplicon runs, improving cluster identification and Illumina's run-level error metrics (DADA2 learns its own error model from the amplicon reads). |
| Quantification Kit (e.g., Qubit dsDNA HS Assay) | Accurate measurement of DNA concentration before sequencing. | Fluorometric methods are preferred over spectrophotometry for amplicon library quantification. |

The shift from Operational Taxonomic Units (OTUs) to Amplicon Sequence Variants (ASVs) represents a fundamental advancement in microbial marker-gene analysis. DADA2 (Divisive Amplicon Denoising Algorithm) is a cornerstone method that infers exact biological sequences from amplicon data, moving beyond the heuristic clustering of OTUs. This whitepaper explores the core technical advantages that make DADA2's ASV approach transformative: Reproducibility, Reusability, and Single-Nucleotide Resolution. Within the broader thesis of ASV research, these advantages enable precise, cumulative, and hypothesis-driven science, directly impacting fields from microbial ecology to drug development targeting microbiomes.

In-Depth Technical Analysis of Core Advantages

Reproducibility

Reproducibility is ensured because ASV inference is a deterministic bioinformatic process. Unlike de novo OTU clustering, whose output depends on the input order and on which other sequences happen to be in the dataset, DADA2 uses a statistical model of sequencing errors to distinguish true biological sequences from errors.

- Technical Mechanism: DADA2 uses the abundance of each unique sequence together with its quality scores (q-scores). It constructs an error model specific to the dataset and then uses this model to denoise reads. The same input data, run through the same version of DADA2 with identical parameters, will always produce the same output ASV table.

- Quantitative Impact: A 2017 study by Callahan et al. demonstrated that DADA2 reproduced the same ASVs from technical replicates with 100% consistency, whereas OTU methods showed variability.

Table 1: Reproducibility Metrics: DADA2 ASVs vs. Traditional OTU Clustering

| Metric | DADA2 (ASVs) | 97% OTU Clustering (UPARSE) | Notes |
|---|---|---|---|
| Inter-run Consistency | 100% | 85-95% | Technical replicates processed independently. |
| Parameter Sensitivity | Low | High | ASV inference is robust to typical parameter adjustments. |
| Algorithm Determinism | Fully Deterministic | Order- and dataset-dependent (de novo) | De novo OTUs depend on input order and on the other sequences in the dataset. |
| Reference Database Dependence | Not required for inference (used only for taxonomy) | Required for closed-reference | Enhances reproducibility across studies. |

Reusability

ASVs are biologically meaningful units that can be directly compared across studies. An ASV is defined by its exact DNA sequence, forming a stable currency for microbial ecology.

- Technical Mechanism: Because ASVs are not defined by an arbitrary similarity threshold to other sequences in a single study, they can be aggregated into global databases. This allows meta-analyses in which ASV tables from different projects, provided they target the same amplicon region and are trimmed consistently, are merged without re-processing raw data.

- Research Impact: An ASV identified in a human gut study from 2020 can be directly queried against a new soil microbiome study from 2024, enabling temporal and cross-biome analyses that are impossible with de novo OTUs.

Single-Nucleotide Resolution

This is the foundational advantage enabling the other two. DADA2 can resolve sequences differing by as little as a single nucleotide.

- Technical Mechanism: The algorithm uses a p-value-driven decision process. It compares sequences and asks: "Can the abundance of a rarer sequence be explained as errors originating from a more abundant sequence?" If the p-value falls below a stringent threshold (OMEGA_A, default 1e-40), the sequences are resolved as distinct ASVs.

- Biological Significance: This resolution detects subtle but critical biological variation, such as:

  - Strain-level microbial differences.

  - Single-nucleotide polymorphisms (SNPs) within a species.

  - Exact sequence variants linked to phenotypic traits (e.g., variants of antibiotic resistance genes, when those genes are the amplicon target).

Table 2: Resolution Power Comparison

| Feature | DADA2 ASV | 97% OTU |
|---|---|---|
| Minimum Discernible Difference | 1 nucleotide | ~8 nucleotides from the OTU centroid (3% of a ~253 bp V4 amplicon); smaller differences are merged |
| Ability to Distinguish Closely Related Strains | High | Low |
| Representation of Sequence Diversity | Precise, exact sequences | Fuzzy, centroid-based |
| Information Retained | Full sequence information | Partial, consensus-based |
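The OTU entry in the first row follows from simple arithmetic: with a 97% identity cutoff, a sequence of length L can differ from a centroid at up to floor(0.03 × L) positions and still be merged. A small, hypothetical Python helper makes the numbers explicit, using integer arithmetic to avoid floating-point edge cases:

```python
def max_merged_differences(length: int, identity_pct: int = 97) -> int:
    """Largest number of mismatches d with (length - d) / length >= identity_pct / 100,
    computed in integers: 100 * (length - d) >= identity_pct * length."""
    return (length * (100 - identity_pct)) // 100


for amplicon_len in (150, 253, 460):
    print(amplicon_len, max_merged_differences(amplicon_len))
# 150 4
# 253 7
# 460 13
```

For a ~253 bp V4 amplicon this gives 7, so sequences with up to 7 differences from the centroid are merged and the first guaranteed split is at 8.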

Detailed Experimental Protocol for DADA2 Analysis

The following is a standard workflow for processing paired-end 16S rRNA gene sequences from Illumina MiSeq.

Protocol: DADA2 Pipeline for 16S rRNA Amplicon Data

1. Prerequisites & Software Installation:

- Install R (≥4.0.0).

- Install the dada2 package from Bioconductor: if (!require("BiocManager", quietly = TRUE)) install.packages("BiocManager"); BiocManager::install("dada2").

- Install recommended dependencies: DECIPHER, phangorn.

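2. Prepare Environment and Inspect Data: load dada2, list the paired FASTQ files, and inspect read quality with plotQualityProfile().

3. Filter and Trim: filterAndTrim().

4. Learn Error Rates and Denoise: learnErrors(), then dada().

5. Merge Paired-End Reads: mergePairs().

6. Construct ASV Table and Remove Chimeras: makeSequenceTable(), then removeBimeraDenovo().

7. Assign Taxonomy (Optional but Recommended): assignTaxonomy(), optionally with addSpecies().

8. Generate Output: export the chimera-free ASV table, taxonomy table, and ASV sequences for downstream analysis.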

Visualizations

Diagram 1: DADA2 ASV Inference Workflow

Diagram 2: Single-Nucleotide Resolution Decision Logic

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Resources for DADA2 ASV Research

| Item | Category | Function & Rationale |
|---|---|---|
| Illumina MiSeq Reagent Kit v3 (600-cycle) | Wet-lab Reagent | Standard for 2x300bp paired-end sequencing, ideal for the ~250bp 16S V4 region, providing sufficient overlap for high-quality merging. |
| PCR Primers (e.g., 515F/806R) | Wet-lab Reagent | Target the hypervariable V4 region of the 16S rRNA gene; must be chosen for specificity and compatibility with the intended reference database. |
| Phusion High-Fidelity DNA Polymerase | Wet-lab Reagent | High-fidelity PCR enzyme critical for minimizing amplification errors that could be misidentified as true biological variation. |
| DADA2 R Package (v1.28+) | Software | Core algorithm for denoising, ASV inference, and chimera removal. The primary tool enabling the discussed advantages. |
| SILVA SSU Ref NR 99 Database | Reference Data | Curated rRNA database for accurate taxonomic assignment of bacterial and archaeal ASVs. Recording the exact database version is crucial for reproducibility. |
| QIIME 2 (with DADA2 plugin) | Software Platform | Optional but popular environment that wraps the DADA2 algorithm, providing a standardized pipeline and extensive downstream analysis tools. |
| Positive Control Mock Community (e.g., ZymoBIOMICS) | Quality Control | Defined mixture of microbial genomes. Essential for validating pipeline performance, calculating accuracy, and detecting batch effects. |
| DECIPHER R Package | Software | Used for the optional but recommended multiple sequence alignment of ASVs (e.g., AlignSeqs()), the input for phylogenetic tree construction with packages such as phangorn. |

Amplicon sequencing of marker genes like the 16S ribosomal RNA (rRNA) gene for bacteria/archaea and the Internal Transcribed Spacer (ITS) for fungi is a cornerstone of microbial ecology. The transition from Operational Taxonomic Units (OTUs) to Amplicon Sequence Variants (ASVs) via algorithms like DADA2 represents a paradigm shift. ASVs are resolved to the level of single-nucleotide differences, providing biologically meaningful, reproducible units that can be tracked across studies. This technical guide details the essential prerequisites—from experimental design to raw data characteristics—required to effectively generate and analyze Illumina amplicon data for rigorous ASV-based research.

Experimental Design & Wet-Lab Protocol

A robust experimental design is critical for generating meaningful ASV data.

Key Protocol: Library Preparation via Two-Step PCR (16S rRNA V4 Region)

- Primary PCR (Target Amplification): Amplify the target region from genomic DNA using gene-specific primers (e.g., 515F/806R for 16S V4) with overhang adapters.

  - Reaction Mix: 2-50 ng genomic DNA, polymerase mix (e.g., Q5 Hot Start High-Fidelity 2X Master Mix), forward/reverse primers (0.2 µM each), nuclease-free water to 25 µL.

  - Cycling Conditions: 98°C for 30s; 25-35 cycles of (98°C for 10s, 55°C for 30s, 72°C for 30s); final extension at 72°C for 2 min.

- PCR Clean-up: Purify amplicons using magnetic bead-based clean-up (e.g., AMPure XP beads) to remove primers and dimers.

- Index PCR (Library Indexing): Attach unique dual indices and full Illumina sequencing adapters.

  - Reaction Mix: 5 µL purified primary PCR product, polymerase mix, index primers i5 and i7 (Nextera XT Index Kit v2), water to 50 µL.

  - Cycling Conditions: 98°C for 30s; 8 cycles of (98°C for 10s, 55°C for 30s, 72°C for 30s); final extension at 72°C for 5 min.

- Final Library Clean-up & Pooling: Clean index PCR products, quantify (e.g., fluorometrically with Qubit), normalize, and pool equimolarly.

- Sequencing: Run on Illumina MiSeq, NextSeq, or NovaSeq with paired-end chemistry (e.g., 2x250 bp for V4).

Experimental Workflow Diagram:

Title: Illumina Amplicon Library Prep Workflow

Raw Data Structure & Quality Metrics

Illumina sequencing outputs binary base call (BCL) files, converted to FASTQ. Each sample is associated with two FASTQ files (R1, R2). Key quality metrics are summarized in the table below.

Table 1: Core FASTQ File Quality Metrics & Implications for ASV Analysis

| Metric | Typical Value/Range | Importance for ASV Analysis (DADA2) |
|---|---|---|
| Q-Score (Phred) | ≥30 (Q30) | Critical. DADA2 uses quality profiles to model and correct errors. Low Q-scores increase false-positive ASVs. |
| Reads per Sample | 20,000 - 100,000+ | Determines sequencing depth. Inadequate depth fails to capture rare variants; excessive depth yields diminishing returns. |
| Read Length (bp) | e.g., 250-300 bp (2x paired-end) | Must be sufficient to span the amplicon with overlap (e.g., ~290 bp for 16S V4). Overlap is required for DADA2's merging. |
| % Bases ≥ Q30 | >75-80% overall | Indicator of overall run health. A sudden drop at a particular cycle position helps determine where to truncate reads. |
| GC Content | ~50-60% for 16S | Deviations may indicate contamination or primer bias. |
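The overlap requirement in the read-length row is simple arithmetic: forward length plus reverse length minus the length the reads span (including any primer bases still present in the reads). A hypothetical Python helper follows; the safety margin for natural amplicon length variation is an assumption, not a dada2 parameter, while 12 mirrors mergePairs()'s default minimum overlap.

```python
def expected_overlap(read1_len: int, read2_len: int, amplicon_len: int) -> int:
    """Bases covered by both reads after truncation; a negative value means a gap."""
    return read1_len + read2_len - amplicon_len


def can_merge(read1_len: int, read2_len: int, amplicon_len: int,
              min_overlap: int = 12, length_variation: int = 20) -> bool:
    """True if the overlap leaves room for min_overlap plus an assumed margin
    (length_variation) for natural differences in amplicon length."""
    return expected_overlap(read1_len, read2_len, amplicon_len) >= min_overlap + length_variation
```

For example, 2x250 reads over a 292 bp fragment overlap by 208 bases, whereas reads truncated to 240 and 200 bases cannot span a ~460 bp V3-V4 fragment at all.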

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Illumina Amplicon Sequencing

| Item | Function & Rationale |
|---|---|
| High-Fidelity DNA Polymerase | Minimizes PCR amplification errors, preventing inflation of artifactual ASVs. Essential for true variant calling. |
| Magnetic Bead Clean-up Kits | For size-selective purification, removing primer dimers and non-specific products that consume sequencing reads. |
| Fluorometric Quantitation Kit | Accurate DNA quantification (e.g., Qubit dsDNA HS Assay) for equitable library pooling, ensuring balanced sample coverage. |
| Validated Primer Set | Specific primers (e.g., Earth Microbiome Project's 515F/806R) with known performance and minimal bias for target taxa. |
| Dual-Indexed Adapter Kit | Dual-index barcodes (e.g., Nextera XT) enable multiplexing; unique dual indexes additionally allow index-hopped reads to be detected and discarded, limiting cross-talk between samples. |
| PhiX Control v3 | A spiked-in control library (∼1%, often more for low-diversity amplicon runs) for monitoring cluster generation and sequencing accuracy and for adding base diversity to low-diversity libraries. |

From Raw Data to ASVs: The DADA2 Conceptual Pipeline

DADA2 employs a quality-aware, parametric error model to distinguish true biological sequences from sequencing errors, outputting ASVs.

DADA2 Core Algorithm Workflow:

Title: DADA2 ASV Inference Pipeline Steps

Critical Preprocessing Considerations for ASV Accuracy

- Primer & Adapter Trimming: Primers must be removed before denoising, either with trimLeft in filterAndTrim() when they sit at fixed positions at the start of reads, or beforehand with an external tool such as cutadapt; primer sequences (especially ambiguous bases) otherwise interfere with error modeling.

- Quality Filtering Thresholds: Stringent but not excessive filtering (e.g., maxN=0, truncQ=2, maxEE=c(2,2)) balances read retention and quality.

- Error Model Training: The learnErrors() step must be run on a subset of sufficient size (the default nbases=1e8, i.e., 100M total bases) to accurately estimate error rates for the specific run.

- Pooling Strategy: For low-biomass or shallowly sequenced samples, where a variant may be rare within each sample but present in several, pool=TRUE (or pool="pseudo") in dada() can improve sensitivity to rare variants shared across samples.
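To make the filtering parameters concrete, here is a Python approximation of how truncQ, truncLen, maxN and maxEE are documented to act on a single read in filterAndTrim(): truncate at the first base with quality at or below truncQ, discard reads shorter than truncLen and cut the rest to that length, then discard reads with more than maxN ambiguous bases or more than maxEE expected errors. It is a hypothetical sketch, not a reimplementation: trimLeft, rm.phix and the paired-read logic are omitted, and Phred+33 encoding is assumed.

```python
from typing import List, Optional, Tuple


def expected_errors(scores: List[int]) -> float:
    """Expected number of errors in a read: sum of 10^(-Q/10) over its bases."""
    return sum(10 ** (-q / 10) for q in scores)


def filter_read(seq: str, qual: str, trunc_len: int, trunc_q: int = 2,
                max_n: int = 0, max_ee: float = 2.0,
                offset: int = 33) -> Optional[Tuple[str, str]]:
    """Return the (sequence, quality) that survives filtering, or None if discarded."""
    scores = [ord(c) - offset for c in qual]
    # truncQ: cut at the first base whose quality is <= trunc_q.
    for i, q in enumerate(scores):
        if q <= trunc_q:
            seq, qual, scores = seq[:i], qual[:i], scores[:i]
            break
    # truncLen: too-short reads are discarded, the rest cut to a fixed length.
    if len(seq) < trunc_len:
        return None
    seq, qual, scores = seq[:trunc_len], qual[:trunc_len], scores[:trunc_len]
    # maxN and maxEE are evaluated after truncation.
    if seq.count("N") > max_n:
        return None
    if expected_errors(scores) > max_ee:
        return None
    return seq, qual
```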

Amplicon Sequence Variants (ASVs) represent a paradigm shift in microbial marker-gene analysis, moving beyond operational taxonomic units (OTUs) to provide single-nucleotide-resolution data. Within the broader thesis of DADA2-based research, the ASV table is not merely an output but the foundational quantitative matrix that encodes the precise biological reality of a microbiome. This guide details its structure, correct interpretation, and its critical role in powering statistically robust downstream analyses in pharmaceutical and clinical research.

Core Structure of the ASV Table

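The ASV table is a high-dimensional, sparse count matrix. In the convention used below, rows represent unique ASVs and columns represent samples; note that DADA2's makeSequenceTable() returns the transpose (rows = samples, columns = ASVs). Its structure is summarized below.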

Table 1: Core Structure and Metadata of a Standard ASV Table

| Component | Description | Data Type | Example |
|---|---|---|---|
| ASV Identifier | Unique DNA sequence (or hash) defining the variant. | String | ASV_001, ACAAGG... |
| Sample Columns | Read counts per sample (non-negative integers). | Integer | 0, 15, 1284 |
| Taxonomic Lineage | Assigned taxonomy (Kingdom to Species). | String | k__Bacteria; p__Firmicutes;... |
| Sequence Length | Length of the representative sequence. | Integer | 253 bp |
| Total Reads | Sum of reads for that ASV across all samples. | Integer | 14592 |
| Prevalence | Number of samples where ASV is present (≥1 read). | Integer | 23 |
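A minimal Python sketch, assuming a table stored as nested dictionaries, of two housekeeping operations implied above: converting DADA2's samples-by-ASVs orientation into the ASVs-by-samples layout of Table 1, and deriving the Total Reads and Prevalence columns. The helper names and ASV labels are hypothetical.

```python
from typing import Dict, Tuple

Table = Dict[str, Dict[str, int]]


def transpose(table: Table) -> Table:
    """Swap orientation, e.g. samples x ASVs (as from makeSequenceTable) -> ASVs x samples."""
    out: Table = {}
    for row, cols in table.items():
        for col, n in cols.items():
            out.setdefault(col, {})[row] = n
    return out


def summarize(asv_table: Table) -> Dict[str, Tuple[int, int]]:
    """For each ASV (row), return (total reads across samples, prevalence),
    where prevalence counts samples with at least one read."""
    return {
        asv: (sum(counts.values()), sum(1 for n in counts.values() if n >= 1))
        for asv, counts in asv_table.items()
    }
```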

Generation via DADA2: Detailed Protocol

The generation of the ASV table via DADA2 follows a rigorous, error-model-based pipeline.

Experimental Protocol 1: DADA2 ASV Inference Workflow (16S rRNA Gene)

- Quality Profiling & Trimming: Use plotQualityProfile() on forward and reverse reads. Truncate where median quality drops below Q30 (e.g., truncLen=c(240,160)).

- Error Rate Learning: Estimate the amplicon error rates from the data using learnErrors(), which by default uses at least 100 million bases (nbases=1e8).

- Sample Inference: Core algorithm execution. Run dada() on each sample's reads, applying the error model to distinguish biological sequences from sequencing errors.

- Merge Paired Reads: Merge denoised forward and reverse reads with mergePairs(), requiring a minimum overlap of 12 bases and no mismatches (the defaults minOverlap=12, maxMismatch=0).

- Construct Sequence Table: Create the initial sample-by-ASV count matrix with makeSequenceTable().

- Remove Chimeras: Identify and remove bimeras (two-parent chimeric sequences) using removeBimeraDenovo() with method="consensus".

- Taxonomic Assignment: Assign taxonomy against a reference database (e.g., SILVA, GTDB) using assignTaxonomy() and optionally add species-level assignments with addSpecies().

DADA2 ASV Table Construction Pipeline

Interpretation and Normalization

Interpretation requires understanding that read counts are compositional. Normalization is essential before comparative analysis.

Table 2: Common ASV Table Normalization & Transformation Methods

| Method | Formula/Process | Purpose | Use Case |
|---|---|---|---|
| Rarefaction | Random subsampling to an even sequencing depth. | Controls for library size; permits diversity metrics. | Alpha diversity comparisons. Controversial for differential abundance. |
| Total Sum Scaling (TSS) | Count in Sample / Total Reads in Sample | Converts to proportions (relative abundance). | Simple exploratory analysis. |
| Center Log-Ratio (CLR) | log(count / geometric mean of sample) | Moves data into Aitchison geometry; zeros handled via a pseudocount. | Compositionally aware differential abundance (e.g., ALDEx2) and Aitchison distance. |
| DESeq2's Median of Ratios | Models raw counts with sample-specific size factors. | Negative binomial model for differential testing. | Identifying significantly different ASVs between conditions. |
| Cumulative Sum Scaling (CSS) | Implemented in metagenomeSeq. | Normalizes based on data distribution to handle sparsity. | Differential abundance with high sparsity. |
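The per-sample transformations in the table are short enough to write out. A Python sketch, assuming one sample's counts as a list of integers: TSS divides by the library size; CLR takes logs after adding a pseudocount (0.5 here, an assumed choice) and subtracts their mean, which is equivalent to dividing by the geometric mean; rarefaction draws reads without replacement to a fixed depth using a seeded random generator. None of this is the implementation used by any particular R package.

```python
import math
import random
from typing import List


def tss(counts: List[int]) -> List[float]:
    """Total sum scaling: counts -> proportions of the sample's library size."""
    total = sum(counts)
    return [c / total for c in counts]


def clr(counts: List[int], pseudocount: float = 0.5) -> List[float]:
    """Centered log-ratio: log(x + pseudocount) minus the mean log,
    i.e. log(x / geometric mean). The pseudocount value is an assumption."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]


def rarefy(counts: List[int], depth: int, seed: int = 0) -> List[int]:
    """Subsample reads without replacement to `depth` reads.

    Raises ValueError if the sample has fewer than `depth` reads
    (such samples are normally dropped before rarefying)."""
    reads = [i for i, c in enumerate(counts) for _ in range(c)]
    picked = random.Random(seed).sample(reads, depth)
    out = [0] * len(counts)
    for i in picked:
        out[i] += 1
    return out
```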

ASV Table as the Foundation for Downstream Analysis

The ASV table feeds into all subsequent ecological and statistical analyses.

ASV Table Powers Diverse Downstream Analyses

Experimental Protocol 2: Core Downstream Analysis Workflow

- Alpha Diversity: Calculate indices (Shannon, Faith's PD) on a rarefied table using phyloseq::estimate_richness() or picante::pd().

- Beta Diversity: Compute a distance matrix (e.g., weighted/unweighted UniFrac, Bray-Curtis on relative abundances, or Aitchison distance, i.e., Euclidean distance on CLR-transformed data). Perform PERMANOVA (vegan::adonis2()) to test group differences.

- Differential Abundance: Use a dedicated tool. For DESeq2: convert the ASV table to a DESeqDataSet (e.g., with phyloseq::phyloseq_to_deseq2()), apply DESeq(), and extract results with results().

- Network Analysis: Estimate robust associations with compositionally aware methods (SparCC, SPIEC-EASI), which work in log-ratio space. Visualize and analyze in igraph or Gephi.
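Two of the quantities above, sketched in Python for a single sample (Shannon index, natural log) and a pair of samples (Bray-Curtis dissimilarity over the same ASVs). These are textbook formulas written as hypothetical helpers, not the phyloseq or vegan implementations; in practice Bray-Curtis is usually computed on relative abundances or a rarefied table.

```python
import math
from typing import List


def shannon(counts: List[int]) -> float:
    """Shannon index H = -sum(p_i * ln p_i) over ASVs with non-zero counts."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


def bray_curtis(x: List[float], y: List[float]) -> float:
    """Bray-Curtis dissimilarity sum|x_i - y_i| / (sum x + sum y), for non-negative values."""
    return sum(abs(a - b) for a, b in zip(x, y)) / (sum(x) + sum(y))
```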

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for DADA2/ASV Research

| Item | Function/Description | Key Consideration |
|---|---|---|
| High-Fidelity PCR Polymerase (e.g., Q5, KAPA HiFi) | Amplifies target region with minimal error, reducing amplification noise. | Critical for accurate ASV inference; low error rate is paramount. |
| Dual-Indexed PCR Primers | Allows multiplexing of hundreds of samples with unique barcode pairs. | Unique dual indexes let index-hopped reads be identified and removed (a particular concern on patterned flow cells such as NovaSeq). |
| Magnetic Bead Clean-up Kits (e.g., AMPure XP) | Size selection and purification of amplicon libraries. | Ratio optimization is key for removing primer dimers. |
| Quantification Kit (e.g., Qubit dsDNA HS Assay) | Accurate concentration measurement of final libraries. | More accurate than spectrophotometry for low-concentration amplicons. |
| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard sequencing kit for 2x300 bp paired-end reads that overlap across the 16S V3-V4 amplicon. | Enables high-quality merging for accurate ASVs. |
| Reference Database (e.g., SILVA, GTDB, UNITE) | For taxonomic assignment of ASV sequences. | Choice dictates taxonomic nomenclature and comprehensiveness. |
| Positive Control Mock Community (e.g., ZymoBIOMICS) | Validates entire wet-lab and bioinformatic pipeline. | Allows benchmarking of error rates, ASV recovery, and bias. |
| Negative Extraction Control | Identifies contaminant ASVs introduced during sample processing. | Essential for contaminant removal in low-biomass studies. |

A Step-by-Step DADA2 Pipeline: From Raw Reads to Biological Insights

Within the framework of a comprehensive thesis on DADA2-derived Amplicon Sequence Variants (ASVs), rigorous pre-processing is the cornerstone of reliable, reproducible results. The DADA2 pipeline transforms raw amplicon sequences into high-resolution ASVs, but its accuracy depends fundamentally on input quality. The plotQualityProfile function is the key diagnostic tool for visualizing sequence quality, providing the empirical evidence needed to set rational, data-driven trimming parameters. This guide details how to use this visualization to optimize trimming, thereby reducing error rates and improving the fidelity of downstream ASV inference, taxonomic assignment, and subsequent ecological or clinical interpretation in drug discovery research.

Interpreting plotQualityProfile Outputs

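The plotQualityProfile function (from the dada2 R package) plots read quality against cycle/base position for forward and reverse reads. A grey-scale heatmap shows the frequency of each quality score at each position; the mean quality is drawn as a green line and the quartiles of the quality distribution as orange lines (median solid, 25th and 75th percentiles dashed); a red line shows the proportion of reads extending to at least that position. The following table summarizes the key metrics and their interpretation for guiding trimming decisions.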

Table 1: Key Metrics from plotQualityProfile and Their Implications for Trimming

| Metric | Description | Ideal Profile | Indicator for Trimming |
|---|---|---|---|
| Mean Quality Score | Average Phred score per cycle (green line). Phred score Q = -10*log10(P), where P is the probability of an incorrect base call. | Q ≥ 30 (99.9% accuracy), stable across cycles. | Truncate where mean quality drops persistently below Q30 (or below Q25 as a more permissive cutoff). |
| Quality Spread | Distribution of quality scores at each cycle: the grey-scale heat map, with the median as a solid orange line and the 25th and 75th percentiles as dashed orange lines. | Tight distribution (narrow quartile band). | A widening spread indicates increased uncertainty; consider truncating before it widens substantially. |
| Error Probability (derived) | Not plotted directly; the per-base error probability implied by the Phred score, P = 10^(-Q/10). Summed along a read, it gives the expected errors used by maxEE. | Low and stable. | A sharp rise in error probability suggests a truncation point. |
| Read Length Distribution | Proportion of reads extending to at least each position (red line). | Flat across the full read length (Illumina reads are usually all the same length). | Truncate before many reads terminate, which often coincides with the quality drop. |
| Nucleotide Frequency | Proportion of A, C, G, T per cycle; helps detect primer or adapter contamination. Not shown by plotQualityProfile; check with a tool such as FastQC. | Balanced composition after the primer region, without sharp biases. | If primers persist, trim them so that reads start after the primer sequence ends. |
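The Phred relationship in the table can be checked directly. The Python sketch below is not dada2 code; it assumes the Phred+33 quality encoding used by current Illumina FASTQ files, converts a score to an error probability, and computes the per-position mean quality that plotQualityProfile draws as its green line. The function names are hypothetical.

```python
from __future__ import annotations


def phred_to_error(q: int) -> float:
    """Error probability P for a Phred score Q, inverting Q = -10*log10(P)."""
    return 10 ** (-q / 10)


def decode_qualities(qual: str, offset: int = 33) -> list[int]:
    """Decode a FASTQ quality string, assuming Phred+33 encoding."""
    return [ord(ch) - offset for ch in qual]


def mean_quality_profile(qual_strings: list[str]) -> list[float]:
    """Mean Phred score at each position, over the reads that reach that position."""
    sums: list[int] = []
    counts: list[int] = []
    for qual in qual_strings:
        for pos, q in enumerate(decode_qualities(qual)):
            if pos == len(sums):  # first read long enough to reach this position
                sums.append(0)
                counts.append(0)
            sums[pos] += q
            counts[pos] += 1
    return [s / c for s, c in zip(sums, counts)]


if __name__ == "__main__":
    print(phred_to_error(30))  # 0.001
    # In Phred+33, 'I' = Q40, '5' = Q20 and '+' = Q10
    print(mean_quality_profile(["II5", "I5+", "I"]))  # [40.0, 30.0, 15.0]
```

Each position is averaged only over the reads long enough to reach it, so a set of reads with uneven lengths still yields one mean per cycle.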

Example Experimental Protocol: Generating and Analyzing Quality Profiles

- Load Libraries and Set Path: In R, load dada2 and set the path to the directory containing the demultiplexed FASTQ files.

- Sort Files: List and sort the forward and reverse read files (fnFs, fnRs).

- Generate Plots: Run plotQualityProfile(fnFs[1:2]) and plotQualityProfile(fnRs[1:2]) to visualize quality for the first two samples. For aggregate trends, pass a larger subset of files with aggregate = TRUE.

- Quantitative Assessment: Record the cycle at which the mean quality of forward and reverse reads falls below Q30 and Q25, and note where the proportion of full-length reads begins to fall.

- Decision Point: Based on the aggregated profiles, choose truncation lengths (truncLen) for forward and reverse reads that remove low-quality bases while retaining enough overlap for merging.
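The Quantitative Assessment step amounts to finding where the mean-quality curve crosses a threshold and stays below it. A minimal Python sketch, assuming you have already exported per-cycle mean quality values; the run length of 5 cycles used to ignore transient dips is an arbitrary choice for illustration, not a dada2 parameter, and the helper names are hypothetical.

```python
from __future__ import annotations


def first_sustained_drop(mean_q: list[float], threshold: float, run: int = 5) -> int | None:
    """1-based cycle where mean quality falls below `threshold` and stays below it
    for `run` consecutive cycles (or until the last cycle). None if it never does."""
    below = 0
    for i, q in enumerate(mean_q):
        if q < threshold:
            below += 1
            if below == run:
                return i - run + 2  # first cycle of the run, converted to 1-based
        else:
            below = 0  # a transient dip: reset the counter
    if below > 0:  # a shorter dip that lasts to the end of the read
        return len(mean_q) - below + 1
    return None


def suggest_trunc_len(mean_q: list[float], threshold: float, run: int = 5) -> int:
    """Candidate truncLen: keep every cycle before the sustained drop."""
    drop = first_sustained_drop(mean_q, threshold, run)
    return len(mean_q) if drop is None else drop - 1


if __name__ == "__main__":
    # Synthetic 250-cycle profile with a one-cycle dip at cycle 202
    profile = [38.0] * 200 + [31.0, 29.0, 31.0] + [28.0] * 10 + [24.0] * 37
    print(suggest_trunc_len(profile, 30))  # 203
    print(suggest_trunc_len(profile, 25))  # 213
```

Requiring a sustained drop keeps a single noisy cycle from dictating truncLen; the result is a starting point to check against the overlap needed for merging, not a final answer.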

From Profile to Pipeline: A Trimming Workflow

The following diagram outlines the logical decision-making process informed by plotQualityProfile analysis within a standard DADA2 pre-processing workflow.

Title: Quality-Driven Trimming Decision Workflow for DADA2

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for 16S rRNA Amplicon Sequencing Pre-processing

| Item | Function | Example/Provider |
|---|---|---|
| High-Fidelity DNA Polymerase | PCR amplification of target region with minimal bias and errors. | Phusion Plus (Thermo Fisher), KAPA HiFi (Roche). |
| Validated Primer Pairs | Target-specific amplification of hypervariable regions (e.g., V3-V4). | 341F/806R, 515F/926R (modified for Illumina). |
| Size-Selective Beads | Cleanup of PCR products and removal of primer dimers. | AMPure XP beads (Beckman Coulter). |
| Dual-Indexed Adapter Kits | Multiplexing samples on Illumina sequencing platforms. | Nextera XT Index Kit (Illumina). |
| Library Quantification Kit | Accurate quantification of final library for pooling. | Qubit dsDNA HS Assay (Thermo Fisher). |
| Sequencing Reagents | Generation of paired-end reads (e.g., 2x250 bp or 2x300 bp). | MiSeq Reagent Kit v2 (500-cycle, 2x250 bp) or v3 (600-cycle, 2x300 bp) (Illumina). |
| DADA2 R Package | Primary software for quality filtering, ASV inference, and chimera removal. | Available via Bioconductor. |
| Computational Resources | Server or HPC environment for processing large sequence datasets. | Minimum 16GB RAM, multi-core processor. |

Case Study: Quantitative Trimming Decisions from Empirical Data

Consider a hypothetical but realistic 16S rRNA (V4) MiSeq run (2x250 bp). The following table presents aggregated metrics from plotQualityProfile for 20 samples, informing a specific trimming strategy.

Table 3: Aggregated Quality Metrics and Resulting Trimming Parameters

| Read Direction | Cycle of Mean Q < 30 | Cycle of Mean Q < 25 | Peak Read Length | Recommended truncLen | Rationale |
|---|---|---|---|---|---|
| Forward (R1) | 230 | 240 | 250 | 240 | Truncate at cycle 240, where mean quality falls below Q25, removing the last 10 bases while preserving most reads. |
| Reverse (R2) | 200 | 220 | 230 | 200 | More aggressive truncation where quality drops below Q30; reverse reads often degrade faster. |
| Overlap Post-Truncation | - | - | - | ~187 bases (240 + 200 - ~253 bp V4 region) | Comfortably exceeds the 20-30 bp minimum overlap commonly recommended for reliable merging in DADA2. |
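The overlap row rests on a single subtraction: overlap = truncLen(F) + truncLen(R) - amplicon length. A short Python check with hypothetical helper names, assuming the amplicon length counts the same bases the reads contain (primers included if they have not been trimmed). The 20 bp default reflects the lower end of the 20-30 bp guideline in the table; mergePairs itself requires only minOverlap = 12 by default. The amplicon lengths in the example are approximate.

```python
def expected_overlap(trunc_f: int, trunc_r: int, amplicon_len: int) -> int:
    """Bases shared by the truncated forward and reverse reads of one amplicon.
    A negative value means the truncated reads leave a gap and cannot merge."""
    return trunc_f + trunc_r - amplicon_len


def overlap_is_sufficient(trunc_f: int, trunc_r: int, amplicon_len: int,
                          min_overlap: int = 20) -> bool:
    """True if the expected overlap meets the chosen minimum (20 bp by assumption)."""
    return expected_overlap(trunc_f, trunc_r, amplicon_len) >= min_overlap


if __name__ == "__main__":
    # ~253 bp V4 region vs. a ~460 bp V3-V4 amplicon (approximate lengths)
    print(expected_overlap(240, 200, 253))  # 187
    print(expected_overlap(240, 200, 460))  # -20
```

Because natural length variation between taxa shifts the real overlap by a few to tens of bases, planning with a margin above the minimum is safer than aiming exactly at it.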

Supporting Experimental Protocol: Implementing the Filtering
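The filtering rules can be made concrete for a single read. The Python sketch below follows the order described in the filterAndTrim documentation as we understand it (truncQ, then truncLen, then trimLeft, then the maxN, maxEE and minLen filters). It is a simplified illustration rather than the dada2 implementation: filter_read is a hypothetical name, qualities are assumed to be decoded integers, and the max_ee default of 2 is an illustrative choice (dada2's own maxEE default is Inf).

```python
from __future__ import annotations


def expected_errors(quals: list[int]) -> float:
    """EE = sum of 10^(-Q/10) over all bases (the nominal Phred definition)."""
    return sum(10 ** (-q / 10) for q in quals)


def filter_read(seq: str, quals: list[int], trunc_len: int = 0, trim_left: int = 0,
                trunc_q: int = 2, max_n: int = 0, max_ee: float = 2.0,
                min_len: int = 20) -> tuple[str, list[int]] | None:
    """Return the trimmed (seq, quals) pair, or None if the read is discarded."""
    # truncQ: cut the read at the first base with quality <= trunc_q
    for i, q in enumerate(quals):
        if q <= trunc_q:
            seq, quals = seq[:i], quals[:i]
            break
    # truncLen: discard reads now shorter than trunc_len, otherwise cut to trunc_len
    if trunc_len > 0:
        if len(seq) < trunc_len:
            return None
        seq, quals = seq[:trunc_len], quals[:trunc_len]
    # trimLeft: drop a fixed number of leading bases (e.g., a primer)
    seq, quals = seq[trim_left:], quals[trim_left:]
    # read-level filters on what remains
    if len(seq) < min_len or seq.count("N") > max_n:
        return None
    if expected_errors(quals) > max_ee:
        return None
    return seq, quals


if __name__ == "__main__":
    read = "ACGT" * 10  # 40 bp
    print(len(filter_read(read, [38] * 40, trunc_len=30, trim_left=5)[0]))  # 25
    # a Q2 base at position 11 truncates the read below truncLen, so it is dropped
    print(filter_read(read, [38] * 10 + [2] + [38] * 29, trunc_len=30))  # None
```

The second example shows a common surprise: with truncQ = 2, a single very low-quality base early in a read can make it shorter than truncLen, and the whole read is then discarded rather than kept in truncated form.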

In a DADA2-focused thesis, documented quality profiling and justified trimming are not merely procedural steps; they are critical methodological validations. Suboptimal trimming can lead to:

- Over-trimming: Loss of biological signal and reduced read overlap, causing merge failures.

- Under-trimming: Propagation of sequencing errors, inflating spurious ASVs, and increasing computational burden during error modeling.

Proper use of plotQualityProfile mitigates these risks, leading to a more accurate error model in the dada algorithm itself. This results in a faithful ASV table that reliably represents the true biological diversity in a sample—a non-negotiable foundation for any downstream analysis, such as differential abundance testing in clinical cohorts or biomarker discovery in drug development pipelines. The methodological transparency provided by these visualizations and derived parameters strengthens the entire thesis by explicitly linking raw data quality to final analytical outcomes.

Thesis Context: This guide details the core algorithmic steps of the DADA2 pipeline for deriving exact Amplicon Sequence Variants (ASVs) from high-throughput amplicon sequencing data. Moving beyond Operational Taxonomic Units (OTUs), DADA2's denoising approach provides higher resolution for microbial community analysis, crucial for ecological studies, biomarker discovery, and therapeutic development in drug research.

Core Denoising Theory

DADA2 models the process by which sequencing errors generate amplicon reads. It uses a parametric error model to distinguish genuine biological sequences (ASVs) from erroneous reads derived from them. The core steps are interdependent, with the output of each informing the next.

Detailed Stepwise Methodologies & Protocols

Filtering and Trimming: Quality Control

This initial step removes low-quality data to improve the efficiency and accuracy of subsequent error modeling.

Experimental Protocol:

- Quality Profile Inspection: Visualize mean quality scores per base position using plotQualityProfile() (DADA2 R package).

- Set Thresholds: Define truncLen (the position at which to truncate reads) where median quality typically drops below a threshold (e.g., Q20). Define maxEE (maximum expected errors) to discard reads whose expected errors, the sum of 10^(-Q/10) over all bases, exceed this value.

- Execute Filtering: Run the filterAndTrim() function with parameters tailored to your dataset (see Table 1).

- Output: Trimmed and filtered FASTQ files, with a summary table of read counts in and out for each sample.
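Choosing maxEE is easier with the expected-error distribution in hand. This Python sketch (hypothetical helper names; quality scores assumed already decoded to integers) reports the fraction of reads that would survive each candidate threshold, the kind of quick sweep that can justify a setting before running filterAndTrim.

```python
from __future__ import annotations


def expected_errors(quals: list[int]) -> float:
    """Nominal expected errors: sum of 10^(-Q/10) over the read."""
    return sum(10 ** (-q / 10) for q in quals)


def retention_by_max_ee(reads_quals: list[list[int]],
                        thresholds: list[float]) -> dict[float, float]:
    """Fraction of reads whose expected errors are <= each candidate maxEE."""
    ees = [expected_errors(q) for q in reads_quals]
    return {t: sum(ee <= t for ee in ees) / len(ees) for t in thresholds}


if __name__ == "__main__":
    # Three synthetic reads with EE of about 0.2, 1.5 and 6.3
    reads = [[30] * 200, [20] * 150, [15] * 200]
    print(retention_by_max_ee(reads, [1, 2, 5, 10]))
```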

Error Rate Learning: Building the Model

DADA2 learns a dataset-specific error model by alternating between estimating error rates and inferring sample composition.

Experimental Protocol:

- Subsampling: learnErrors() trains on a subset of the filtered data; by default it reads samples until nbases = 1e8 total bases have been accumulated (randomize = TRUE draws the samples in random order rather than file order).

- Error Model Estimation: Execute the learnErrors() function. The algorithm: a. Initializes with the maximum possible error rates (as if only the most abundant sequence were correct and all others were errors). b. Alternates between inferring the true sequence variants present in the samples and re-estimating the error rates from the differences between observed reads and inferred true sequences. c. Converges on error rates for each of the 16 possible transitions (A→A, A→C, A→G, etc.) as a function of the quality score.

- Validation: Visualize the learned error rates with plotErrors() to confirm that the fitted rates track the observed rates and follow the expected trend (error rates decrease with higher quality scores).
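Step b rests on counting how often each true base is read as each observed base at a given quality score. The Python sketch below shows only that counting step for a single round, assuming gapless alignments between reads and their inferred true sequences (estimate_error_rates is a hypothetical name). learnErrors additionally smooths the counts across quality scores (loessErrfun by default) and repeats inference and estimation until the rates stop changing.

```python
from collections import defaultdict


def estimate_error_rates(pairs):
    """pairs: iterable of (true_seq, read_seq, quals), equal lengths, substitutions only.

    Returns {(true_base, read_base, q): rate}; for each (true_base, q) the rates
    over all observed read bases sum to 1 (the A->A style entries are included).
    """
    counts = defaultdict(int)  # (true, read, q) -> observations
    totals = defaultdict(int)  # (true, q) -> observations
    for true_seq, read_seq, quals in pairs:
        for t, r, q in zip(true_seq, read_seq, quals):
            counts[(t, r, q)] += 1
            totals[(t, q)] += 1
    return {key: n / totals[(key[0], key[2])] for key, n in counts.items()}


if __name__ == "__main__":
    pairs = [("AAAA", "AACA", [30] * 4), ("AAAA", "AAAA", [30] * 4)]
    rates = estimate_error_rates(pairs)
    print(rates[("A", "C", 30)])  # 0.125
```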

Sample Inference: The Core Denoising

This step applies the error model to partition reads into ASVs.

Experimental Protocol:

- Dereplication: Combine identical reads into "unique sequences" with abundance counts using derepFastq() to reduce computational load.

- Denoising Algorithm: Run dada() on each sample. Starting from a single partition centered on the most abundant unique sequence: a. Each unique sequence is compared to the partition centers and assigned to the center most likely to have produced it. b. A Poisson model, parameterized by the learned error rates and the center's abundance, gives an abundance p-value: the probability of observing at least that many copies of the sequence as errors derived from the center. c. If the smallest p-value falls below the threshold OMEGA_A (default 1e-40), that sequence becomes the center of a new partition and reads are reassigned; this repeats until no unique sequence is significantly overabundant. Each final partition is an ASV.

- Output: A list of ASVs with their corrected sequences and abundances per sample.
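The abundance p-value in step b can be written out. In the DADA2 model, the expected number of error copies of sequence i produced from center j is n_j * lambda_ji, where lambda_ji is the probability that one read of j is sequenced as i, and the p-value is P(X >= a_i) / P(X >= 1) for X ~ Poisson(n_j * lambda_ji). The Python sketch below implements that formula with a toy error function (the Phred error split evenly over the three wrong bases, an assumption for illustration); dada2 itself uses the learned error matrix and the mean qualities of each unique sequence. Function names are hypothetical.

```python
from __future__ import annotations

import math


def poisson_tail(k: int, mu: float) -> float:
    """P(X >= k) for X ~ Poisson(mu), summed term by term so tiny tails stay accurate."""
    if k <= 0:
        return 1.0
    if mu <= 0:
        return 0.0
    total, i = 0.0, k
    while True:
        term = math.exp(-mu + i * math.log(mu) - math.lgamma(i + 1))
        total += term
        # past the mode the terms only shrink, so stop once they no longer matter
        if i > mu and (term == 0.0 or term < total * 1e-16):
            return total
        i += 1


def toy_error(true_base: str, read_base: str, q: int) -> float:
    """Assumed error model: Phred error probability split evenly over 3 wrong bases."""
    p_err = 10 ** (-q / 10)
    return 1 - p_err if true_base == read_base else p_err / 3


def lambda_ji(center: str, seq: str, quals: list[int], err=toy_error) -> float:
    """Probability that a single read of `center` is sequenced exactly as `seq`."""
    prob = 1.0
    for t, r, q in zip(center, seq, quals):
        prob *= err(t, r, q)
    return prob


def abundance_pvalue(a_i: int, n_j: int, lam: float) -> float:
    """P(at least a_i error copies | at least one), with X ~ Poisson(n_j * lam)."""
    mu = n_j * lam
    return poisson_tail(a_i, mu) / poisson_tail(1, mu)


if __name__ == "__main__":
    lam = lambda_ji("ACGT", "ACGA", [30, 30, 30, 30])
    # 3 copies are easily explained as errors of a 10,000-read center ...
    print(abundance_pvalue(3, 10_000, lam))
    # ... 50 copies are not, so "ACGA" would be a candidate for a new partition
    print(abundance_pvalue(50, 10_000, lam))
```

Conditioning on at least one copy reflects that only sequences actually observed are tested; the second p-value lands around 1e-40, which is why DADA2's very strict default threshold still separates well-supported variants.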

Table 1: Typical Filtering Parameters for Illumina MiSeq 16S rRNA Gene Data (V4 Region)

| Parameter | Typical Setting | Rationale & Quantitative Impact |
|---|---|---|
| truncLen | F: 240, R: 200 | Truncates forward/reverse reads where median Q-score falls below ~20-25, removing low-quality tails. Reads shorter than truncLen are discarded. |
| maxEE | (2, 5) | Reads with more than 2 (forward) or 5 (reverse) expected errors are discarded. Removes ~5-15% of reads. |
| trimLeft | F: 10, R: 10 | Removes a fixed number of bases from the start of each read. To remove primers, set it to the primer lengths instead (e.g., 19 nt for 515F, 20 nt for 806R). |
| truncQ | 2 | Truncates reads at the first base with a Q-score ≤ 2, Illumina's marker for unreliable bases; a minimal rather than aggressive quality trim. |
| minLen | 50 | Discards reads shorter than 50 bp after trimming and truncation, removing uninformative fragments. |

Table 2: DADA2 Error Model Output Metrics

| Metric | Description | Typical Range (Illumina MiSeq) |
|---|---|---|
| Error Rate per Transition | Probability of a specific base substitution (e.g., A→C), estimated as a function of quality score rather than sequencing cycle. | Decreases as quality scores increase, broadly tracking the nominal Phred error rate 10^(-Q/10). |
| Convergence Iterations | Number of alternating rounds of error-rate estimation and sample inference in learnErrors. | Typically 3-6 rounds; capped by MAX_CONSIST (default 10). |
| Final ASV Yield | Percentage of input reads assigned to an inferred ASV. | 20-50% of raw reads; 70-90% of filtered reads. |

Visualizations

DADA2 Core Analytical Workflow

Error Rate Learning Alternating Algorithm

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for DADA2 ASV Research

| Item | Function in DADA2/ASV Pipeline | Example/Note |
|---|---|---|
| High-Fidelity Polymerase | Minimizes PCR errors during library prep, reducing artificial diversity. | Q5 Hot Start (NEB), KAPA HiFi. Critical for accurate ASV inference. |
| Staggered (Phased) Primers | Variable-length spacers offset amplicons from one another, increasing per-cycle base diversity in low-diversity amplicon libraries and improving cluster identification and base calling. | Gene-specific primers with heterogeneity spacers of differing lengths. They do not prevent index swapping; unique dual indexes address that. |
| PhiX Control Library | Provides balanced nucleotide diversity for Illumina sequencing calibration. | Typically 1-5% spike-in. Improves cluster identification and base calling. |
| DADA2 R Package | Core software implementing filtering, error learning, and sample inference. | Requires R (>=4.0.0). Primary tool for denoising. |
| Sequence Read Archive (SRA) | Public repository for raw sequence data (FASTQ). | Required for reproducibility. Accession numbers (e.g., SRR1234567) must be cited. |
| QIIME 2 / phyloseq | Downstream analysis platforms for taxonomy assignment, diversity analysis, and visualization of DADA2 output. | q2-dada2 plugin; phyloseq R package integrates seamlessly. |
| SILVA / GTDB Database | Curated 16S/18S rRNA gene reference databases for taxonomic assignment of ASVs. | Used with assignTaxonomy() in DADA2 or within QIIME2. |
| Bioinformatics Cluster | High-performance computing (HPC) environment. | Denoising of large datasets (>100 samples) requires significant memory (16-64GB RAM). |

Sample Inference, Chimera Removal, and Constructing the Sequence Table

Within the broader thesis on DADA2-based Amplicon Sequence Variant (ASV) research, this guide details the critical, sequential bioinformatic steps that transform raw high-throughput amplicon sequencing data into a high-resolution, chimera-free sequence table. This process is fundamental for downstream ecological and biomarker analyses in microbiome research and drug development.

Sample Inference with DADA2

Sample inference is the process of modeling and correcting Illumina-sequenced amplicon errors without clustering, resolving true biological sequences down to single-nucleotide differences.

Core Algorithmic Workflow

The DADA2 algorithm implements a parametric error model (P(observed read | true sequence)) learned from the data itself. The workflow is as follows:

- Dereplication: Identical reads are collapsed into unique sequences with associated abundance.

- Error Model Learning: Estimates the rate of each possible nucleotide transition (e.g., A→C), as a function of quality score, from a subset of the filtered reads.

- Dereplicated Sample Inference: The core algorithm uses the error model to probabilistically partition reads between true sequences and erroneous reads, iteratively refining ASV abundances.
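Dereplication is the simplest of these steps to picture. A Python sketch with a hypothetical function name, assuming reads arrive as (sequence, integer quality list) pairs; like derepFastq, it keeps each unique sequence's abundance and its mean quality at each position, which the denoising step later uses.

```python
from __future__ import annotations


def dereplicate(records: list[tuple[str, list[int]]]) -> list[tuple[str, int, list[float]]]:
    """Collapse identical reads into (sequence, abundance, mean quality per position),
    sorted from most to least abundant."""
    groups: dict[str, list[list[int]]] = {}
    for seq, quals in records:
        groups.setdefault(seq, []).append(quals)
    uniques = []
    for seq, qual_lists in groups.items():
        mean_q = [sum(col) / len(col) for col in zip(*qual_lists)]
        uniques.append((seq, len(qual_lists), mean_q))
    uniques.sort(key=lambda u: (-u[1], u[0]))  # ties broken alphabetically
    return uniques


if __name__ == "__main__":
    reads = [("ACGT", [30, 30, 30, 30]), ("TTTT", [20] * 4), ("ACGT", [40, 20, 30, 10])]
    print(dereplicate(reads))
    # [('ACGT', 2, [35.0, 25.0, 30.0, 20.0]), ('TTTT', 1, [20.0, 20.0, 20.0, 20.0])]
```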

Key Experimental Protocol Parameters

- plotQualityProfile(): Visualize per-position sequence quality to determine trim lengths.

- filterAndTrim(): Typical parameters: truncLen=c(240, 200) (forward, reverse), maxN=0, maxEE=c(2,2), truncQ=2.

- learnErrors(): Uses a subset of the data (by default nbases=1e8) to learn the error rates for A→C, A→G, A→T, and the other transitions.

- dada(): Applies the error model to each sample. The pool=TRUE option pools all samples, giving more sensitive detection of rare variants shared across samples at a higher computational cost; pool="pseudo" approximates pooling while still processing samples independently.

Table 1: Typical read count changes during DADA2 inference.

| Processing Stage | Metric | Typical Value Range | Function |
|---|---|---|---|
| Raw Reads | Input Reads Per Sample | 50,000 - 200,000 | -- |
| Post-Filtering | Reads Passing QC | 70-95% of input | filterAndTrim() |
| Post-Denoising | Inferred ASVs Per Sample | 10 - 1000s | dada() |
| Key Output | Non-Chimeric Sequence Count | 80-99% of filtered reads | removeBimeraDenovo() |

Diagram 1: DADA2 sample inference workflow

Chimera Removal

Chimeras are spurious sequences formed during PCR from two or more parent sequences. They are a major source of false-positive ASVs and must be removed.

Detailed Methodology: Bimera Denovo Identification

DADA2's removeBimeraDenovo() uses a de novo consensus method:

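- Sequence Sorting: All inferred sequences are sorted by abundance (most to least abundant).

- Parent Comparison: Each sequence is checked against potential "parent" sequences, which must be sufficiently more abundant than it (controlled by minFoldParentAbundance).

- Chimera Test: A sequence is flagged as a bimera if it can be reconstructed exactly by joining a left segment of one parent to a right segment of another; setting allowOneOff = TRUE also flags sequences one mismatch away from such a combination.

- Removal: Flagged chimeric sequences are removed from the ASV table. With method="consensus", samples are checked independently and a sequence is removed if it is flagged in a sufficiently high fraction of the samples in which it occurs.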
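The chimera test can be illustrated with a gapless simplification. The Python sketch below (hypothetical function names) flags a query when the longest prefix it shares with one sufficiently abundant parent plus the longest suffix it shares with another cover the whole sequence. Unlike removeBimeraDenovo, it ignores alignment shifts and one-off mismatches; the defaults of a 2-fold abundance advantage and at least 8 reads per parent are meant to mirror isBimeraDenovo's minFoldParentAbundance and minParentAbundance as we understand them.

```python
from __future__ import annotations


def shared_prefix(a: str, b: str) -> int:
    """Number of leading positions at which a and b agree."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def is_bimera(query: str, query_abund: int, candidates: list[tuple[str, int]],
              min_fold: float = 2.0, min_parent_abund: int = 8) -> bool:
    """Gapless bimera check: can the query be built from the left part of one
    abundant parent and the right part of another?"""
    parents = [seq for seq, abund in candidates
               if abund > min_fold * query_abund      # much more abundant than the query
               and abund >= min_parent_abund
               and len(seq) == len(query) and seq != query]
    if len(parents) < 2:
        return False
    best_left = max(shared_prefix(query, p) for p in parents)
    best_right = max(shared_prefix(query[::-1], p[::-1]) for p in parents)
    # prefix + suffix covering the query means some breakpoint reproduces it exactly
    return best_left + best_right >= len(query)


if __name__ == "__main__":
    parents = [("A" * 10, 100), ("C" * 10, 80)]
    print(is_bimera("AAAACCCCCC", 5, parents))   # True
    print(is_bimera("AAAAGCCCCC", 5, parents))   # False
```

A prefix and suffix taken from the same parent can only cover the query if the query is identical to that parent, so excluding identical sequences is enough to guarantee that a positive result involves two different parents.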

Quantitative Impact of Chimera Removal

Table 2: Effect of chimera removal on ASV table.

| Sample Type | Typical Chimera Rate | Primary Cause | Key Parameter |
|---|---|---|---|
| Low-Complexity | 1-5% | Limited template diversity | minFoldParentAbundance=2 |
| High-Complexity (e.g., soil) | 10-40% | High template diversity & PCR cycles | method="consensus" |
| Mock Community | <1% (validation) | Controlled known composition | minParentAbundance |

Constructing the Sequence Table

The final step merges denoised, non-chimeric data from all samples into a single observation matrix.

Protocol: makeSequenceTable() and Post-Processing

Final Sequence Table Structure

The output is a sample-by-sequence matrix where rows are samples, columns are unique ASVs (represented by their DNA sequence), and values are read counts. This is the foundational table for all subsequent analyses (e.g., alpha/beta diversity, differential abundance).
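The reshaping performed by makeSequenceTable() can be sketched in Python under simple assumptions: per-sample counts are held as dictionaries keyed by sequence, and columns are ordered by total abundance, which matches makeSequenceTable's default ordering. The function name is hypothetical.

```python
from __future__ import annotations


def make_sequence_table(sample_counts: dict[str, dict[str, int]]):
    """{sample: {sequence: count}} -> (samples, sequences, matrix).

    Rows are samples and columns are sequences, ordered by total abundance
    (ties broken alphabetically); sequences absent from a sample get a count of 0.
    """
    totals: dict[str, int] = {}
    for counts in sample_counts.values():
        for seq, n in counts.items():
            totals[seq] = totals.get(seq, 0) + n
    sequences = sorted(totals, key=lambda s: (-totals[s], s))
    samples = list(sample_counts)
    matrix = [[sample_counts[s].get(seq, 0) for seq in sequences] for s in samples]
    return samples, sequences, matrix


if __name__ == "__main__":
    per_sample = {"S1": {"ACGT": 10, "TTTT": 1}, "S2": {"TTTT": 5, "GGGG": 2}}
    samples, seqs, mat = make_sequence_table(per_sample)
    print(seqs)  # ['ACGT', 'TTTT', 'GGGG']
    print(mat)   # [[10, 1, 0], [0, 5, 2]]
```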

Diagram 2: Constructing the final chimera-free ASV table

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for DADA2 Wet-Lab Preparation.

| Item | Function in ASV Workflow | Critical Consideration |
|---|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5, Phusion) | PCR amplification of target region (e.g., 16S rRNA V4). | Minimizes PCR errors that can be misidentified as rare ASVs. |
| UltraPure PCR-Grade Water | Reagent resuspension and reaction setup. | Reduces background bacterial DNA contamination. |
| Quant-iT PicoGreen dsDNA Assay | Accurate quantification of amplicon library concentration. | Essential for precise, equimolar pooling of samples. |
| SPRIselect Beads | Size selection and purification of final amplicon libraries. | Removes primer dimers and non-specific products to improve sequencing quality. |
| PhiX Control v3 | Spiked into Illumina runs (1-5%). | Provides balanced nucleotide diversity and improves base calling for low-diversity amplicons. |
| DNeasy PowerSoil Pro Kit | Microbial DNA extraction from complex samples (e.g., stool, soil). | Maximizes lysis efficiency and inhibitor removal for representative community profiling. |

In the DADA2 (Divisive Amplicon Denoising Algorithm) pipeline, the generation of Amplicon Sequence Variants (ASVs) provides high-resolution, reproducible units for microbial community analysis. A critical subsequent step is the biological interpretation of these ASVs via taxonomy assignment. This process anchors the precise ASV sequences to established biological nomenclature by comparing them against curated reference databases. The choice and proper integration of databases like SILVA, Greengenes, and UNITE directly influence the accuracy, reproducibility, and ecological relevance of findings in drug development and human microbiome research.

Reference databases provide taxonomically annotated sequences from small-subunit ribosomal RNA genes (16S/18S) or Internal Transcribed Spacer (ITS) regions. Key features are summarized below.

Table 1: Core Features of Major Taxonomic Reference Databases

| Database | Primary Gene/Region | Target Domain | Version | Key Distinguishing Feature |
|---|---|---|---|---|
| SILVA | SSU & LSU rRNA (16S/18S/23S) | Bacteria, Archaea, Eukarya | SSU r138.1 (2020) | Manually curated, comprehensive, includes Eukaryotes. |
| Greengenes | 16S rRNA | Bacteria, Archaea | gg_13_8 (2013) | Long a standard reference for human microbiome studies; not updated since 2013 (succeeded by Greengenes2). |
| UNITE | ITS (ITS1, 5.8S, ITS2) | Fungi | 9.0 (2023) | Species-level hypotheses with dynamic clustering thresholds. |

Quantitative Comparison (Approximate Reference Database Sizes)

| Database | Approx. # of Reference Sequences | Taxonomy Strings | Recommended Classifier |
|---|---|---|---|
| SILVA | ~2.0 million | 7-8 ranks (Domain to Species) | DADA2 assignTaxonomy, IDTAXA, QIIME2 |
| Greengenes | ~1.3 million | 7 ranks (Domain to Species) | DADA2 assignTaxonomy, RDP Classifier |
| UNITE | ~1.1 million (species hypotheses) | 7 ranks (Kingdom to Species) | DADA2 assignTaxonomy (for ITS) |

Experimental Protocols for Taxonomy Assignment

Protocol: DADA2-based Taxonomy Assignment with SILVA/Greengenes

This protocol follows the DADA2 workflow after ASV inference and chimera removal.

Materials:

- FASTA file of inferred ASV sequences.

- Reference database formatted for DADA2: a training-set FASTA whose headers carry the taxonomy, plus (optionally) a species-assignment FASTA for addSpecies.

- Computational environment with DADA2 installed (R/Bioconductor).

Method:

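- Database Preparation: Download the non-redundant SILVA (or Greengenes) training set formatted for DADA2, e.g., silva_nr99_v138.1_train_set.fa.gz, in which each FASTA header holds the semicolon-separated lineage. For species-level assignment, also download the matching species-assignment file (e.g., silva_species_assignment_v138.1.fa.gz). A reference trimmed to your amplicon region (e.g., V3-V4) can optionally be used, but assignTaxonomy also works with full-length references.

- Taxonomy Assignment Function: Use the assignTaxonomy function in DADA2.

- Add Species-Level Designation (Optional): For exact matches, use addSpecies.

- Output Interpretation: The output is a matrix of ASVs x taxonomic ranks; bootstrap confidence values are also returned when outputBootstraps = TRUE. Ranks whose bootstrap confidence falls below minBoot (default 50; 80 is a common, stricter choice) are left unassigned (NA).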
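How minBoot shapes the output is easiest to see on a single lineage. The Python sketch below (hypothetical helpers, illustrative bootstrap values) parses a DADA2-style reference header, in which ranks are separated by semicolons, and masks every rank from the first one whose bootstrap support falls below minBoot, which is how unassigned (NA) ranks arise in assignTaxonomy output.

```python
from __future__ import annotations


def parse_dada2_header(header: str) -> list[str]:
    """'>Bacteria;Firmicutes;...;' -> ['Bacteria', 'Firmicutes', ...]."""
    return [rank for rank in header.lstrip(">").strip().split(";") if rank]


def apply_min_boot(lineage: list[str], boots: list[int], min_boot: int = 50) -> list[str | None]:
    """Keep ranks until the first whose bootstrap support is below min_boot;
    that rank and every deeper rank become None (NA)."""
    masked: list[str | None] = []
    for name, support in zip(lineage, boots):
        if support < min_boot or (masked and masked[-1] is None):
            masked.append(None)
        else:
            masked.append(name)
    return masked


if __name__ == "__main__":
    header = ">Bacteria;Firmicutes;Bacilli;Lactobacillales;Lactobacillaceae;Lactobacillus;"
    boots = [100, 100, 99, 95, 72, 45]  # illustrative bootstrap values
    print(apply_min_boot(parse_dada2_header(header), boots, min_boot=80))
    # ['Bacteria', 'Firmicutes', 'Bacilli', 'Lactobacillales', None, None]
```

Raising minBoot from 50 to 80 in this example costs the family-level assignment, which is the precision-for-resolution trade-off behind the stricter setting.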

Protocol: ITS Analysis with UNITE using DADA2

The workflow for fungal ITS is complicated by high length variation.

Method:

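- Preprocessing: Do not truncate reads to a fixed length, because ITS amplicon lengths vary widely. Remove primers first (the DADA2 ITS workflow uses cutadapt, because reads from short amplicons can run through into the opposite primer), then run filterAndTrim with maxN=0, truncQ=2, and a minimum length such as minLen=50.

- Error Learning & ASV Inference: Proceed with standard DADA2 steps (learnErrors, dada).

- Taxonomy Assignment: Use UNITE's general release or "developer" datasets with assignTaxonomy.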

- Considerations: UNITE uses "Species Hypothesis" (SH) identifiers. The dynamic version clusters sequences at multiple identity thresholds, improving assignment accuracy.

Workflow and Decision Pathway

Diagram Title: Taxonomy Assignment Integration Workflow for DADA2 ASVs

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for DADA2 Taxonomy Assignment

| Item/Reagent | Function/Purpose | Example/Note |
|---|---|---|
| Curated Reference FASTA | Reference sequences with curated taxonomy, used to train the classifier. | SILVA train_set, Greengenes 97_otus, UNITE sh_qiime_release. |
| Corresponding Taxonomy File | Provides the taxonomic lineage for each reference sequence in QIIME-style references. | IDs must match the sequences in the reference FASTA; DADA2-formatted training sets carry the lineage in the FASTA headers instead. |
| DADA2 R Package (v1.28+) | Core software containing the assignTaxonomy and addSpecies functions. | Requires R>=4.0. Available via Bioconductor. |
| High-Performance Computing (HPC) Node | Enables multithreading (multithread=TRUE) for computationally intensive assignment. | 8-16 cores and 32+ GB RAM recommended for large datasets. |
| Bootstrap Confidence Threshold (minBoot) | Quality filter; assigns a rank only when its bootstrap confidence meets the threshold. | Default=50. Recommend 80 for higher precision in clinical/drug development contexts. |
| QIIME2 (Alternative Platform) | Provides the feature-classifier plugin for taxonomy assignment compatible with DADA2 ASVs. | Useful for integrating into broader QIIME2 pipelines. |
| IDTAXA (Alternative Algorithm) | Machine learning-based classifier from the DECIPHER R package; often more accurate. | Can be used with SILVA/Greengenes references (as pre-trained or LearnTaxa-trained classifiers) as an alternative to assignTaxonomy. |

This guide is situated within a broader thesis on DADA2 (Divisive Amplicon Denoising Algorithm) amplicon sequence variant (ASV) research, which has revolutionized microbial ecology by providing reproducible, single-nucleotide-resolution inferences from marker-gene (e.g., 16S rRNA) sequencing data. The transition from the DADA2 pipeline output to robust statistical analysis and publication-quality visualization represents a critical and often challenging phase. This technical whitepaper details the systematic integration of ASV sequence tables, taxonomy assignments, and sample metadata into the phyloseq R/Bioconductor object—a powerful framework for managing, analyzing, and graphically representing complex microbiome census data.

The Scientist's Toolkit: Research Reagent Solutions for DADA2 and Phyloseq Workflow

| Item | Function |
|---|---|
| DADA2 R Package (v1.30+) | Core algorithm for modeling and correcting Illumina-sequenced amplicon errors, inferring exact amplicon sequence variants (ASVs). |
| phyloseq R/Bioconductor Package (v1.46+) | Data structure and unified interface for organizing ASV count table, taxonomy table, sample metadata, and phylogenetic tree; enables downstream statistical analysis and visualization. |
| DECIPHER R Package | Used for multiple sequence alignment of ASVs, a precursor for phylogenetic tree construction. |
| FastTree | Software for inferring approximately-maximum-likelihood phylogenetic trees from alignments of ASV sequences. |
| Silva or GTDB Reference Database | Curated taxonomic training datasets (formatted for DADA2) for classifying ASVs to taxonomic ranks (Kingdom to Species). |
| ggplot2 R Package | Core graphics system used by phyloseq for creating and customizing publication-quality plots. |
| RStudio IDE | Integrated development environment for R, facilitating project management, code execution, and visualization. |

Core Data Structures and Integration Protocol

Integrating DADA2 results into phyloseq requires three core inputs, two produced by the DADA2 pipeline and one supplied by the researcher:

- Sequence Table: A matrix of read counts (samples x non-chimeric ASVs).

- Taxonomy Table: A matrix assigning taxonomic identity (e.g., Phylum, Genus) to each ASV.

- Sample Metadata: A data frame containing experimental variables (e.g., treatment, timepoint, pH) for each sample.

Experimental Protocol: Constructing a Phyloseq Object

Methodology:

- Load Required Libraries and Data.

- Infer a Phylogenetic Tree (Optional but Recommended).

- Integrate Components into a Phyloseq Object.

- Filter and Normalize.
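The filter-and-normalize step usually means dropping rarely detected ASVs and converting counts to proportions; in phyloseq this is typically done with filter_taxa() and transform_sample_counts(). The Python sketch below shows the equivalent arithmetic on a plain dictionary of counts (hypothetical helper names); the prevalence cutoffs used are illustrative assumptions, not recommendations from this guide.

```python
from __future__ import annotations


def prevalence_filter(table: dict[str, list[int]], min_prevalence: float = 0.1) -> dict[str, list[int]]:
    """Keep ASVs detected (count > 0) in at least `min_prevalence` of samples."""
    return {asv: counts for asv, counts in table.items()
            if sum(c > 0 for c in counts) / len(counts) >= min_prevalence}


def to_relative_abundance(table: dict[str, list[int]]) -> dict[str, list[float]]:
    """Total-sum scaling: divide each count by its sample's total (empty samples -> 0)."""
    n_samples = len(next(iter(table.values())))
    totals = [sum(counts[j] for counts in table.values()) for j in range(n_samples)]
    return {asv: [c / t if t else 0.0 for c, t in zip(counts, totals)]
            for asv, counts in table.items()}


if __name__ == "__main__":
    table = {"ASV1": [10, 0, 5, 5], "ASV2": [0, 0, 0, 3], "ASV3": [10, 10, 5, 2]}
    kept = prevalence_filter(table, min_prevalence=0.5)  # ASV2 is in only 1 of 4 samples
    print(sorted(kept))                                   # ['ASV1', 'ASV3']
    print(to_relative_abundance(kept)["ASV1"][:3])        # [0.5, 0.0, 0.5]
```

Filtering before normalizing, as here, makes the remaining proportions sum to 1 within each sample; reversing the order leaves each sample summing to less than 1.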

Statistical Analysis and Visualization Workflows

Table 1: Core Alpha Diversity Indices Computable via Phyloseq

| Index | Function in Phyloseq | Description | Interpretation |
|---|---|---|---|
| Observed | plot_richness(ps, measures="Observed") | Simple count of distinct ASVs in a sample. | Lower richness may indicate stress or disturbance. |
| Shannon | plot_richness(ps, measures="Shannon") | Measures both richness and evenness. | Higher values indicate greater diversity and evenness. |
| Simpson | plot_richness(ps, measures="Simpson") | Gini-Simpson index (1 - Σp²); emphasizes evenness and is weighted towards dominant ASVs. | Higher values indicate higher diversity; inverse Simpson (measures="InvSimpson") is also available. |
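The indices in the table are short formulas on a sample's ASV counts. This Python sketch (hypothetical function name) computes them, plus inverse Simpson, for one sample, assuming natural-log Shannon and the Gini-Simpson form 1 - Σp², which as far as we know match what phyloseq's estimate_richness reports via vegan.

```python
from __future__ import annotations

import math


def alpha_diversity(counts: list[int]) -> dict[str, float]:
    """Observed richness, Shannon (natural log), Gini-Simpson and inverse Simpson."""
    present = [c for c in counts if c > 0]
    total = sum(present)
    p = [c / total for c in present]
    sum_p2 = sum(x * x for x in p)
    return {
        "Observed": len(present),
        "Shannon": -sum(x * math.log(x) for x in p),
        "Simpson": 1 - sum_p2,      # Gini-Simpson: higher = more diverse
        "InvSimpson": 1 / sum_p2,
    }


if __name__ == "__main__":
    print(alpha_diversity([25, 25, 25, 25]))  # perfectly even, 4 ASVs
    print(alpha_diversity([97, 1, 1, 1]))     # same richness, dominated by one ASV
```

The two example samples have identical Observed richness but very different Shannon and Simpson values, which is the evenness component the table describes.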

Experimental Protocol: Beta Diversity and PERMANOVA

Methodology:

- Calculate Distance Matrix.

- Ordination (NMDS).

- Statistical Test (PERMANOVA) using vegan::adonis2.
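To show what adonis2 computes for a single grouping factor, the Python sketch below builds a Bray-Curtis distance matrix and runs a permutation test on the PERMANOVA pseudo-F statistic. It is a from-scratch illustration with hypothetical function names, and the 999 permutations and fixed seed are arbitrary choices; it does not replace vegan::adonis2, which also handles multiple terms and restricted permutations.

```python
from __future__ import annotations

import random


def bray_curtis(u: list[float], v: list[float]) -> float:
    """Bray-Curtis dissimilarity: sum|u_i - v_i| / sum(u_i + v_i)."""
    num = sum(abs(a - b) for a, b in zip(u, v))
    den = sum(a + b for a, b in zip(u, v))
    return num / den if den else 0.0


def pseudo_f(d: list[list[float]], groups: list[str]) -> float:
    """One-factor PERMANOVA pseudo-F from a square distance matrix."""
    n = len(groups)
    labels = sorted(set(groups))
    ss_total = sum(d[i][j] ** 2 for i in range(n) for j in range(i + 1, n)) / n
    ss_within = 0.0
    for g in labels:
        idx = [i for i in range(n) if groups[i] == g]
        ss_within += sum(d[i][j] ** 2 for k, i in enumerate(idx) for j in idx[k + 1:]) / len(idx)
    a = len(labels)
    return ((ss_total - ss_within) / (a - 1)) / (ss_within / (n - a))


def permanova(d: list[list[float]], groups: list[str], n_perm: int = 999,
              seed: int = 1) -> tuple[float, float]:
    """Observed pseudo-F and a permutation p-value from shuffled group labels."""
    rng = random.Random(seed)
    f_obs = pseudo_f(d, groups)
    shuffled = list(groups)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if pseudo_f(d, shuffled) >= f_obs:
            hits += 1
    return f_obs, (hits + 1) / (n_perm + 1)


if __name__ == "__main__":
    samples = [[10, 0, 0], [9, 1, 0], [8, 2, 0], [9, 0, 1],    # control
               [0, 1, 9], [0, 2, 8], [1, 1, 8], [0, 0, 10]]    # treated
    groups = ["ctrl"] * 4 + ["trt"] * 4
    dist = [[bray_curtis(u, v) for v in samples] for u in samples]
    f, p = permanova(dist, groups)
    print(f"pseudo-F = {f:.1f}, p = {p:.3f}")
```

With only eight samples there are just 70 distinct ways to split them into two groups of four, so the smallest achievable p-value is limited by design; small pilot studies should keep this floor in mind when reading PERMANOVA results.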

Title: ASV Data Integration & Analysis Workflow in Phyloseq

Title: DADA2 to Phyloseq Experimental Pipeline

Advanced Visualization and Differential Abundance Testing

Phyloseq seamlessly integrates with ggplot2 for customizable plots. For differential abundance testing, packages like DESeq2 (for raw counts) or corncob (for relative abundances with covariates) are commonly employed alongside phyloseq data.

Experimental Protocol: DESeq2 Integration

Methodology:
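One step DESeq2 performs before testing is estimating per-sample size factors with the median-of-ratios method. The Python sketch below reproduces that arithmetic on a small table (size_factors is a hypothetical name). ASVs with a zero in any sample are skipped, which is why sparse microbiome tables often defeat this default; DESeq2's estimateSizeFactors(type = "poscounts") exists as an alternative for such data.

```python
from __future__ import annotations

import math
from statistics import median


def size_factors(counts: list[list[int]]) -> list[float]:
    """Median-of-ratios size factors; counts[s][k] = count of ASV k in sample s.

    ASVs with a zero in any sample have a geometric mean of 0 and are skipped.
    """
    n_asv = len(counts[0])
    log_geo_means = []
    for k in range(n_asv):
        column = [sample[k] for sample in counts]
        if all(x > 0 for x in column):
            log_geo_means.append((k, sum(math.log(x) for x in column) / len(column)))
    if not log_geo_means:
        raise ValueError("every ASV has a zero somewhere; median-of-ratios is undefined")
    return [median([math.exp(math.log(sample[k]) - lg) for k, lg in log_geo_means])
            for sample in counts]


if __name__ == "__main__":
    # Sample 2 was sequenced twice as deeply; ASV 4 is absent from sample 1
    table = [[10, 20, 30, 0], [20, 40, 60, 5]]
    print(size_factors(table))  # approximately [0.707, 1.414]
```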

This integrated pipeline, from DADA2 output to statistical inference in phyloseq, provides a reproducible and comprehensive framework for deriving biological insights from amplicon sequencing data, directly supporting hypothesis-driven research in drug development and microbial ecology.

The shift from Operational Taxonomic Units (OTUs) to Amplicon Sequence Variants (ASVs) represents a pivotal advance in microbial ecology, with DADA2 standing as a cornerstone algorithm for high-resolution inference. This technical guide explores a critical application of this foundational thesis: the precise tracking of individual microbial strains over time within human hosts. Longitudinal clinical studies demand discrimination beyond the species level to link specific bacterial lineages to disease progression, treatment response, and microbiome resilience. DADA2-derived ASVs, which are biological sequences rather than clustered approximations, provide the necessary resolution to distinguish strain-level dynamics, enabling researchers to move from correlation to causation in understanding host-microbiome interactions in health and disease.

Core Methodological Framework

Longitudinal Sample Processing & DADA2 Pipeline

Experimental Protocol:

- Sample Collection: Serial biospecimens (e.g., stool, saliva, skin swabs) are collected from participants at predefined intervals (e.g., baseline, during intervention, follow-up).

- DNA Extraction & Amplicon Sequencing: Consistent, standardized DNA extraction kits are used for all samples. The 16S rRNA gene (V4 region) or ITS regions are amplified and sequenced on an Illumina platform; for higher resolution, full-length 16S can be sequenced on long-read platforms (e.g., PacBio). Note: For true strain tracking, shotgun metagenomic sequencing is superior but cost-prohibitive for large cohorts.

- DADA2 ASV Inference (Core):
  - Filter and Trim: filterAndTrim(truncLen=c(240,200), maxN=0, maxEE=c(2,2), truncQ=2, rm.phix=TRUE)
  - Learn Error Rates: learnErrors(..., nbases=1e8, multithread=TRUE)
  - Dereplication & Sample Inference: dada(derep, err=learned_error_rates, pool="pseudo", multithread=TRUE)
  - Merge Paired Reads & Construct Table: mergePairs(...), then makeSequenceTable(merged)
  - Remove Chimeras: removeBimeraDenovo(table, method="consensus", multithread=TRUE)

- Longitudinal ASV Table Curation: The final sample-by-ASV table is transposed (to ASV-by-sample) for longitudinal analysis. ASVs are tracked by their exact DNA sequence across all time points for each subject.


Key Bioinformatics & Statistical Analyses for Tracking

1. Persistence & Prevalence Analysis: Calculate the per-subject persistence of each ASV across time points.

2. Abundance Trajectory Modeling: Use tools like geeM or GLMMs to model changes in ASV abundance linked to clinical covariates.

3. Phylogenetic Placement: Place ASV sequences on a reference phylogeny (e.g., using pplacer) to infer evolutionary relationships among persistent strains.

4. Stability Metrics: Compute subject-specific community stability (e.g., Bray-Curtis dissimilarity between consecutive time points, a beta-diversity measure) and correlate it with persistent ASV signatures.
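Items 1 and 4 reduce to simple per-subject calculations. The Python sketch below (hypothetical function names; each subject's samples assumed to be dictionaries of ASV counts keyed by exact sequence or ASV ID, in time order) computes the fraction of time points at which each ASV is detected and the Bray-Curtis dissimilarity between consecutive time points.

```python
from __future__ import annotations


def persistence(timepoints: list[dict[str, int]]) -> dict[str, float]:
    """Fraction of one subject's time points at which each ASV is detected (count > 0)."""
    asvs = set().union(*timepoints)
    return {a: sum(tp.get(a, 0) > 0 for tp in timepoints) / len(timepoints) for a in asvs}


def bray_curtis(u: dict[str, int], v: dict[str, int]) -> float:
    """Bray-Curtis dissimilarity between two samples stored as {asv: count}."""
    keys = set(u) | set(v)
    num = sum(abs(u.get(k, 0) - v.get(k, 0)) for k in keys)
    den = sum(u.get(k, 0) + v.get(k, 0) for k in keys)
    return num / den if den else 0.0


def consecutive_turnover(timepoints: list[dict[str, int]]) -> list[float]:
    """Bray-Curtis dissimilarity between each pair of consecutive time points."""
    return [bray_curtis(a, b) for a, b in zip(timepoints, timepoints[1:])]


if __name__ == "__main__":
    subject = [{"A": 10, "B": 10}, {"A": 10, "B": 10}, {"A": 20}]
    print(persistence(subject))           # A detected at 3/3 time points, B at 2/3
    print(consecutive_turnover(subject))  # [0.0, 0.5]
```

Because counts are compared directly, samples should be rarefied or converted to proportions first if sequencing depth differs between time points.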


Quantitative Data from Recent Studies (2023-2024)

The following table summarizes key metrics from recent longitudinal studies utilizing ASV-level resolution.

| Study Focus (PMID / DOI) | Cohort Size & Duration | Key ASV-Level Finding | Quantitative Result (ASV Resolution Enabled) |
|---|---|---|---|
| FMT for Recurrent CDI (10.1016/j.cell.2023.08.008) | 24 patients, 12 months | Engraftment of donor-derived Bacteroides strains predicts sustained cure. | Patients with >10% engrafted donor ASVs at 2 months had 100% cure rate vs. 33% in low engraftment. |
| IBD Flare Prediction (10.1038/s41591-023-02468-4) | 132 IBD patients, 2 years | Specific Ruminococcus gnavus ASV abundance rises 6-8 weeks pre-flare. | A 1-log increase in the specific R. gnavus ASV associated with 4.2x higher flare odds (p<0.001). |
| Antibiotic Recovery in Preterms (10.1126/scitranslmed.adg8862) | 60 neonates, first 90 days | Persistent Enterobacteriaceae ASVs post-antibiotics linked to poor growth. | Subjects with stable, dominant Enterobacteriaceae ASVs had 25% lower weight gain velocity (p=0.01). |
| Dietary Intervention (10.1186/s40168-024-01778-0) | 150 adults, 6 months | Personal baseline Prevotella copri ASV composition predicts fiber response. | Individuals with ASV Cluster A had a 3-fold greater SCFA increase than those with Cluster B (p=0.002). |

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Longitudinal ASV Studies |
|---|---|
| Stool DNA Stabilization Kit (e.g., OMNIgene•GUT) | Preserves microbial DNA at room temperature, critical for multi-site/long-term studies and reducing collection bias. |
| High-Fidelity PCR Polymerase (e.g., Q5, KAPA HiFi) | Minimizes PCR errors during library prep, ensuring sequence variants are biological (true ASVs) not technical. |
| Mock Microbial Community (ZymoBIOMICS) | Standardized positive control for tracking pipeline performance and batch effects across sequencing runs. |
| DADA2-compatible R Environment (v1.28+) | Core software for accurate ASV inference. Requires R, dada2, phyloseq, and DECIPHER/Biostrings packages. |
| Longitudinal Data Analysis Tools | R packages: vegan (beta-diversity), lme4/geeM (mixed models), mvabund (multivariate abundance models). |
| Phylogenetic Placement Database (e.g., GTDB, SILVA) | Curated reference tree and alignment for placing ASVs to interpret strain-level evolution and relatedness. |

Advanced Protocol: Strain-Level Network Analysis

Objective: Identify co-persistence patterns among ASVs to infer ecological guilds or host-adapted strain consortia.

Detailed Protocol:

- Data Filtering: From the longitudinal ASV table, retain only ASVs present in ≥20% of time points for at least 20% of subjects.

- Correlation Network Construction: For each subject, calculate pairwise Spearman correlations (ρ) between the abundance trajectories of persistent ASVs over time, and keep as edges the pairs that pass a correlation threshold (e.g., ρ > 0.8 or ρ < -0.8).

- Meta-Network Aggregation: Aggregate individual subject networks into a single consensus network. An edge is included in the consensus network if it appears in >30% of subject-specific networks.

- Module Detection & Annotation: Use the igraph package to detect highly connected modules (clusters) within the consensus network. Annotate modules by the phylogenetic identity and known functional potential (via PICRUSt2 or similar) of member ASVs.

- Clinical Validation: Test whether the abundance trajectory of entire network modules correlates more strongly with clinical outcomes than individual ASVs using multivariate association models.
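The filtering, per-subject correlation, and consensus steps above can be sketched in dependency-free Python. This is a minimal illustration, not the protocol's reference implementation: it assumes the longitudinal ASV table has already been reshaped into a dictionary keyed by subject, then by ASV, holding abundance trajectories over the same time points, and every function name is hypothetical. Module detection with igraph and the clinical models are not reproduced.

```python
from collections import Counter
from itertools import combinations


def rank(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho, computed as the Pearson correlation of the ranks."""
    rx, ry = rank(x), rank(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        return 0.0  # constant trajectory: rho undefined, so never an edge
    return cov / (vx * vy) ** 0.5


def persistent_asvs(data, min_time_frac=0.2, min_subject_frac=0.2):
    """ASVs present in >= min_time_frac of time points for >= min_subject_frac of subjects."""
    subjects = list(data)
    keep = set()
    for asv in {a for s in subjects for a in data[s]}:
        n_ok = 0
        for s in subjects:
            traj = data[s].get(asv, [])
            if traj and sum(v > 0 for v in traj) / len(traj) >= min_time_frac:
                n_ok += 1
        if n_ok / len(subjects) >= min_subject_frac:
            keep.add(asv)
    return keep


def subject_edges(trajectories, asvs, rho_cut=0.8):
    """Edges (a, b) with |rho| > rho_cut within one subject's time series."""
    present = sorted(a for a in asvs if a in trajectories)
    return {
        (a, b)
        for a, b in combinations(present, 2)
        if abs(spearman(trajectories[a], trajectories[b])) > rho_cut
    }


def consensus_network(data, rho_cut=0.8, min_edge_frac=0.3):
    """Keep edges found in more than min_edge_frac of subject networks."""
    asvs = persistent_asvs(data)
    counts = Counter()
    for trajectories in data.values():
        counts.update(subject_edges(trajectories, asvs, rho_cut))
    return {edge for edge, c in counts.items() if c / len(data) > min_edge_frac}
```

The resulting edge set is what would be handed to igraph for module detection. Correlating ranks rather than raw counts keeps a single bloom time point from dominating a trajectory.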

Network Analysis Workflow Diagram:

The integration of DADA2's precise ASV inference into longitudinal clinical study design transforms our capacity to observe the human microbiome as a dynamic, personalized ecosystem. Tracking ASVs, as biologically relevant units, across time enables the identification of strain-level drivers of health, prognostic biomarkers, and true targets for therapeutic intervention. This approach solidifies the thesis that high-resolution amplicon analysis is not merely a taxonomic improvement but a fundamental requirement for mechanistic understanding in microbiome science.

Solving Common DADA2 Challenges: Parameter Tuning and Performance Optimization

Within the broader thesis on DADA2 amplicon sequence variant (ASV) research, achieving high merge rates—the successful pairing of forward and reverse reads into full-length sequences—is critical for accurate microbial community profiling. Low merge rates directly compromise downstream diversity analyses and statistical power, a significant concern for researchers and drug development professionals investigating microbiomes in therapeutic contexts. This technical guide examines the core computational and sequence-based factors leading to low merge rates, focusing on overlap length and quality thresholds, and provides actionable diagnostic and resolution protocols.

DADA2 infers ASVs with single-nucleotide resolution. The merging step is performed by the mergePairs() function, which aligns the overlapping region of forward and reverse reads. A low merge rate indicates a failure to construct full-length sequences from the paired-end data, resulting in loss of data and potential bias. Within our thesis framework, this step is paramount for preserving true biological variation, especially in low-biomass or clinically derived samples where sequence depth is limited.

Core Diagnostics: Identifying the Source of Low Merge Rates

The primary levers controlling merge success are the overlap requirement and the sequence quality profile.

Quantitative Analysis of Key Parameters

The following table summarizes the default and recommended adjustable parameters in DADA2's mergePairs() function and their impact on merge rates.

Table 1: Key DADA2 Merge Parameters and Their Impact

| Parameter | Default Value | Function | Effect on Merge Rate | Recommended Diagnostic Adjustment |
|---|---|---|---|---|
| minOverlap | 12 | Minimum length of overlap required. | Increasing can decrease the rate; decreasing can increase it but may raise false merges. | Gradually decrease to 8-10 if the overlap is short. |
| maxMismatch | 0 | Maximum mismatches allowed in the overlap region. | The default of 0 ensures high fidelity but lowers the rate; increasing to 1-2 can rescue it. | Increase to 1 if quality is high but primers or the variable region cause mismatches. |
| justConcatenate | FALSE | If TRUE, concatenates the reads without overlapping them. | Retains essentially every pair, but joins the forward read and the reverse-complemented reverse read with a 10-N spacer instead of a true overlap. | Use only for non-overlapping reads. |
| Input Read Quality (Q-score) | - | Average quality in the overlap region. | Low quality in the overlap raises mismatch counts and lowers the rate. | Pre-filter with filterAndTrim(); inspect quality profiles. |
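To make the interplay of minOverlap, maxMismatch, and justConcatenate concrete, here is a simplified Python model of pair merging. It is a sketch under stated assumptions, not DADA2's implementation: mergePairs() operates on denoised reads and aligns them, whereas this version only slides the reverse-complemented reverse read along the forward read and does not model indels. All names are hypothetical.

```python
COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def revcomp(seq):
    """Reverse complement of a DNA string containing only A/C/G/T/N."""
    return seq.translate(COMPLEMENT)[::-1]


def merge_pair(fwd, rev, min_overlap=12, max_mismatch=0, just_concatenate=False):
    """Merge one read pair; return the merged sequence or None on failure.

    Simplified, ungapped model: the reverse-complemented reverse read is slid
    along the 3' end of the forward read, and the longest overlap with at
    least min_overlap bases and at most max_mismatch mismatches is accepted.
    """
    rc = revcomp(rev)
    if just_concatenate:
        # Analogue of justConcatenate=TRUE: no overlap test, 10-N spacer.
        return fwd + "N" * 10 + rc
    for olen in range(min(len(fwd), len(rc)), min_overlap - 1, -1):
        f_tail, r_head = fwd[len(fwd) - olen:], rc[:olen]
        mismatches = sum(a != b for a, b in zip(f_tail, r_head))
        if mismatches <= max_mismatch:
            # At mismatched positions this sketch simply keeps the forward base.
            return fwd + rc[olen:]
    return None


def merge_rate(pairs, **params):
    """Fraction of (fwd, rev) pairs that merge under the given parameters."""
    if not pairs:
        return 0.0
    return sum(merge_pair(f, r, **params) is not None for f, r in pairs) / len(pairs)
```

In this model, lowering min_overlap or raising max_mismatch can only keep or increase the number of pairs that merge, so a parameter sweep traces a monotone curve; the open question is whether the extra merges are correct, which is what a mock community answers.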

Diagnostic Experimental Protocol

Protocol 1: Systematic Assessment of Merge Failure Causes

- Quality Profile Visualization: Use plotQualityProfile() on subsets of forward and reverse reads. Visually identify the point where median quality drops substantially, typically at the ends of reads.

- Compute Expected Overlap: Calculate (Length of Fwd Read) + (Length of Rev Read) - (Length of Amplicon), where the amplicon length is the sequenced target region, excluding adapters and barcodes. For example, 2 x 251bp reads spanning a ~385bp target give an expected overlap of 251 + 251 - 385 = 117bp. A significantly shorter empirical overlap indicates truncation during sequencing or primer mispositioning.

- Iterative Parameter Testing: Run mergePairs() in a loop, varying minOverlap (from 20 down to 8) and maxMismatch (0 to 2). Plot merge rate vs. parameter value to identify the "cliff" where the rate drops.

- Inspect Failed Reads: Extract reads that failed to merge (mergePairs(..., returnRejects=TRUE) keeps them in its output, flagged by accept=FALSE) and align them using a tool like MUSCLE. Manually inspect the alignment for consistent patterns of mismatches, indels, or poor quality in the overlap region.
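The bookkeeping for the iterative parameter test can be sketched in Python. Here rate_fn is a hypothetical callable you would supply, for example one that runs mergePairs() through an R bridge for a given (minOverlap, maxMismatch) and returns the fraction of merged reads; the sketch only enumerates the grid from the protocol and locates the largest drop between adjacent settings.

```python
from itertools import product


def sweep(rate_fn, min_overlaps=range(20, 7, -1), max_mismatches=(0, 1, 2)):
    """Evaluate rate_fn(min_overlap, max_mismatch) over the Protocol 1 grid."""
    return {(mo, mm): rate_fn(mo, mm) for mo, mm in product(min_overlaps, max_mismatches)}


def find_cliff(series):
    """Locate the largest drop between adjacent points of [(param, rate), ...].

    Order the series from least to most stringent (e.g. minOverlap 8 -> 20).
    Returns (param_before, param_after, drop), or None if < 2 points.
    """
    best = None
    for (p0, r0), (p1, r1) in zip(series, series[1:]):
        drop = r0 - r1
        if best is None or drop > best[2]:
            best = (p0, p1, drop)
    return best
```

Slicing the grid at a fixed maxMismatch and passing that series to find_cliff() gives the minOverlap value at which merging collapses; a cliff well above minOverlap=12 points to a genuinely short overlap rather than a parameter problem.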

Resolution Strategies: Optimizing Overlap and Quality Thresholds

Pre-processing for Optimal Overlap

Protocol 2: Truncation for Maximal Reliable Overlap

- Based on plotQualityProfile(), set truncation lengths (truncLen) for filterAndTrim() to remove low-quality tails while preserving sufficient overlap.

  - Example: If forward read quality drops at position 240 and reverse at position 160, use truncLen=c(240,160). Ensure the truncated lengths still leave an overlap of at least minOverlap (12 nt by default).

- Re-run filterAndTrim() with these parameters.

- Critical Check: Post-truncation, re-calculate the expected overlap. If it falls below 20-25 nucleotides, consider justConcatenate=TRUE or revisit the sequencing design.
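The truncation choice and the critical check reduce to a few lines of arithmetic, sketched here in Python. The per-cycle median quality lists are assumed inputs (read off plotQualityProfile() or computed from the FASTQ quality strings), the min_q=30 cutoff is an assumed threshold rather than a DADA2 default, and the function names are hypothetical.

```python
def trunc_position(median_q, min_q=30):
    """Truncation length: cycles kept before median quality first drops below min_q."""
    for i, q in enumerate(median_q):
        if q < min_q:
            return i
    return len(median_q)


def expected_overlap(len_fwd, len_rev, amplicon_len):
    """(Length of Fwd Read) + (Length of Rev Read) - (Length of Amplicon)."""
    return len_fwd + len_rev - amplicon_len


def check_truncation(trunc_fwd, trunc_rev, amplicon_len, min_safe=20):
    """Return (overlap, ok), where ok means the overlap meets the 20 nt check."""
    overlap = expected_overlap(trunc_fwd, trunc_rev, amplicon_len)
    return overlap, overlap >= min_safe
```

Applied to the truncLen=c(240,160) example with a ~385bp target, the overlap shrinks from 117 to 240 + 160 - 385 = 15 nt: still above the default minOverlap of 12, but below the 20 nt safety margin, so this combination should be revisited.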

Tuning Merge Parameters

Protocol 3: Adaptive Merging Based on Sample Quality

- For datasets with heterogeneous sample quality (common in clinical studies), avoid a single stringent maxMismatch.

- Implement a quality-aware merging wrapper:

  - Derive the average quality score in the overlap region for each sample.

  - For samples with high average overlap quality (>Q35), use maxMismatch=0.

  - For samples with moderate quality (Q30-Q35), use maxMismatch=1.

- This preserves specificity where possible while rescuing data from lower-quality runs.
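The decision logic of such a wrapper can be sketched in Python. The protocol does not say how to treat samples below Q30, so this sketch returns None for them (an assumption: flag for manual review rather than guess). It also assumes the overlap sits at the 3' end of the truncated forward read; the function names are hypothetical, and the returned values would be passed as maxMismatch in per-sample mergePairs() calls.

```python
def mean_overlap_quality(fwd_q, overlap_len):
    """Mean Q over the last overlap_len cycles of the (truncated) forward read.

    Assumption: the overlap sits at the 3' end of the forward read; averaging
    the reverse read's 3' end as well would be a natural extension.
    """
    if overlap_len <= 0:
        raise ValueError("reads do not overlap")
    tail = fwd_q[-overlap_len:]
    return sum(tail) / len(tail)


def choose_max_mismatch(mean_q):
    """Protocol 3 thresholds: > Q35 -> 0, Q30-Q35 -> 1; lower is unspecified."""
    if mean_q > 35:
        return 0
    if mean_q >= 30:
        return 1
    return None  # assumption: flag for manual review instead of guessing


def plan_merges(sample_quals, overlap_len):
    """Map each sample name to the maxMismatch value its mergePairs() call would use."""
    return {
        name: choose_max_mismatch(mean_overlap_quality(q, overlap_len))
        for name, q in sample_quals.items()
    }
```

Keeping the plan as an explicit per-sample mapping makes it easy to record in the study metadata which samples were merged under relaxed settings.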

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Optimizing 16S rRNA Sequencing for DADA2

| Item | Function in ASV Research | Relevance to Merge Rates |
|---|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Minimizes PCR errors during library prep, reducing spurious sequence variants. | Indirect: a PCR error is copied into both reads of a pair, so it does not create an overlap mismatch, but fewer PCR errors means fewer spurious variants among merged reads. |
| Standardized Mock Community DNA (e.g., ZymoBIOMICS) | Provides known sequence composition for positive control. | Enables benchmarking of merge rate parameters against ground truth to optimize for accuracy, not just rate. |
| Magnetic Bead-based Cleanup Kits (e.g., AMPure XP) | Precise size selection removes primer dimers and non-target fragments. | Produces a tight amplicon size distribution, leading to consistent expected overlap lengths. |
| Dual-Indexed Primers (Nextera XT compatible) | Allows unique sample identification, mitigating the impact of index hopping. | While not directly affecting merge, ensures merged reads are correctly assigned, preserving sample integrity. |
| PhiX Control v3 | Spiked-in during sequencing for run quality monitoring. | Helps distinguish sequencing-related quality drops (affecting overlap) from sample-specific issues. |

Visualization of Workflows and Decision Pathways

Title: Diagnostic Decision Tree for Low DADA2 Merge Rates

Title: DADA2 Pipeline with Merge Step Parameters

Optimizing merge rates in DADA2 is a balancing act between retaining genuine sequences and excluding spurious merges. For ASV-based research, particularly in clinical and therapeutic development where data integrity is paramount, a systematic approach is essential: diagnose through quality and overlap analysis, then resolve with targeted truncation and parameter tuning. Implementing the protocols outlined here should maximize the yield of high-fidelity, full-length sequences, forming a robust foundation for downstream analyses of microbial diversity and function.

Within the rapidly evolving field of microbial ecology and diagnostics, DADA2-based amplicon sequence variant (ASV) analysis has become the gold standard for high-resolution characterization of microbiomes. This methodological shift, central to modern thesis research in microbial systems, presents significant computational challenges when applied to large-scale studies involving thousands of samples. Efficient management of compute resources and runtime is no longer optional but a critical determinant of research feasibility, reproducibility, and speed to insight, particularly for professionals in drug development who rely on robust, timely data.

The Computational Burden of DADA2 ASV Pipelines

The DADA2 algorithm is inherently computationally intensive. Unlike clustering-based OTU methods, DADA2 models sequence errors to infer exact biological sequences, requiring significant memory and CPU time for error-rate learning, dereplication, sample inference, and chimera removal. Scaling from tens to thousands of samples can increase runtime and memory non-linearly, especially when samples are pooled for inference. Key bottlenecks include:

- Dereplication & Sample Inference: Memory (RAM) usage scales with the number of unique sequences, per sample by default or across all samples when inference is pooled.

- Error Rate Learning: An iterative step that alternates sample inference with error-rate estimation until convergence; it is computationally heavy and benefits from multi-threading.

- Merging Paired-end Reads: A pairwise alignment of denoised forward and reverse reads; in the profile below it accounts for the largest share of CPU time.

Approximate resource requirements for a standard 16S rRNA gene V4 region dataset are summarized below; actual figures vary with read depth, sequence diversity, and hardware:

Table 1: Computational Profile of DADA2 Workflow (Per 100 Samples, ~150bp PE Reads)

| Pipeline Stage | Avg. Runtime (CPU-hr) | Peak RAM (GB) | Parallelizable | Key Resource Constraint |
|---|---|---|---|---|
| Filter & Trim | 2-5 | 2-4 | Yes (by sample) | I/O, CPU |
| Learn Error Rates | 5-10 | 8-12 | Limited (multithreaded within one job) | CPU |
| Dereplication | 3-6 | 10-20 | Yes (by sample) | RAM, I/O |
| Sample Inference | 10-25 | 15-30 | Yes (by sample, unless pooled) | RAM, CPU |
| Merge Pairs | 20-50 | 5-10 | Yes (by sample) | CPU |
| Chimera Removal | 5-10 | 4-8 | Yes | CPU |
| Total (Approx.) | 45-106 | 30+ | - | - |

Strategic Compute Resource Management

High-Performance Computing (HPC) vs. Cloud Orchestration

For thesis-scale research, leveraging institutional HPC clusters or cloud platforms (AWS, GCP, Azure) is essential.

- HPC (Slurm/PBS): Use array jobs to process samples in parallel during embarrassingly parallel steps (filtering, dereplication). Request chunks of memory proportional to sample batch size.

- Cloud (Nextflow/Snakemake): Use orchestration tools to create scalable, reproducible pipelines. Kubernetes or AWS Batch can auto-scale based on queue size.
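For the HPC route, each array task needs to know which samples it owns. A minimal Python sketch follows, assuming the sample manifest is a plain list of names and that the job was submitted with a 0-based --array range; SLURM_ARRAY_TASK_ID is the environment variable Slurm sets for array tasks, while the function names are hypothetical.

```python
import os


def n_tasks(n_samples, chunk_size):
    """Array tasks needed to cover all samples (ceiling division)."""
    return -(-n_samples // chunk_size)


def chunk_for_task(samples, chunk_size, task_id=None):
    """Slice of the sample manifest handled by one array task.

    task_id defaults to SLURM_ARRAY_TASK_ID, assumed 0-based, i.e. the job
    was submitted with --array=0-(n_tasks - 1).
    """
    if task_id is None:
        task_id = int(os.environ["SLURM_ARRAY_TASK_ID"])
    start = task_id * chunk_size
    return samples[start:start + chunk_size]
```

Each task would then run filterAndTrim() (or the per-sample inference step) on its slice only, so memory requests can be sized to chunk_size rather than to the whole study.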

Optimizing Runtime: Key Protocols & Methodologies

Protocol A: Staged, Parallelized DADA2 Execution

This protocol minimizes wall-clock time by maximizing parallel execution where algorithmically possible.

- Quality Profiling & Trimming: Run filterAndTrim() on all samples independently using a job array. Save the intermediate filtered FASTQs.

- Error Model Learning: Execute learnErrors() on a subset of reads (e.g., 5-10 million) drawn from multiple samples. This step is not sample-parallel, but it can be run once for the entire study if sequencing runs are consistent; otherwise, learn a separate model per run.

- Parallel Dereplication and Inference: Because DADA2 infers each sample independently unless samples are pooled, launch one job per sample using the pre-learned error model. This is the most effective parallelization step.

- Merging and Chimera Removal: Merge pairs for each sample independently, then combine the results into one sequence table and run chimera removal on it.
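Why Protocol A pays off can be seen with an Amdahl-style estimate: stages that split by sample shrink with the number of parallel jobs, while serial stages do not. The Python sketch below is a rough model only; treating error learning and chimera removal as single serial jobs and every other stage as perfectly divisible are simplifying assumptions, and scheduling overhead and I/O contention are ignored.

```python
def wall_clock_hours(stages, workers):
    """Rough wall-clock estimate for a staged pipeline.

    stages: list of (name, cpu_hours, parallel) tuples. Parallel stages are
    assumed to split evenly across `workers` independent jobs; serial stages
    run as one job. Scheduling overhead and I/O contention are ignored.
    """
    if workers < 1:
        raise ValueError("workers must be >= 1")
    total = 0.0
    for _name, cpu_hours, parallel in stages:
        total += cpu_hours / workers if parallel else cpu_hours
    return total


# Lower-bound CPU-hour figures per 100 samples from the computational profile.
STAGES = [
    ("filter_and_trim", 2, True),
    ("learn_errors", 5, False),
    ("dereplication", 3, True),
    ("sample_inference", 10, True),
    ("merge_pairs", 20, True),
    ("chimera_removal", 5, False),
]
```

With these figures, 45 CPU-hours fall to about 17 hours on 5 workers, and no number of workers can push the estimate below the 10 serial hours, which is why learning one error model per run, rather than per batch, matters at scale.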

Protocol B: Resource-Aware Batch Processing for Massive Datasets

For studies exceeding 10,000 samples, a batch processing approach is necessary to manage memory limits.

- Split Sample Manifest: Partition the sample list into batches that will fit within available RAM (e.g., 50-100 samples per batch).

- Batch-Specific Inference: Run the full DADA2 inference (dereplication, inference, merging) independently on each batch. This yields separate sequence tables per batch.

- Cross-Batch Sequence Integration: Use DADA2's mergeSequenceTables() function to combine all batch-specific tables into a single study-wide sequence table. Finally, apply consensus chimera removal (removeBimeraDenovo()) to the merged table.
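Conceptually, cross-batch integration is a union of sequence columns over disjoint sets of samples, and it works because ASVs are exact sequences: the same variant inferred in two batches lines up without any re-clustering. The Python sketch below models a sequence table as a dictionary {sample: {sequence: count}}, a hypothetical representation rather than DADA2's matrix object, and raises an error if a sample name appears in two batches. mergeSequenceTables() exposes a repeats argument for that situation, so consult its documentation for the behavior you want.

```python
def merge_sequence_tables(*tables):
    """Combine per-batch tables of {sample: {sequence: count}}.

    Sequences absent from a sample are implicitly zero. A sample name that
    appears in more than one batch is treated as an error in this sketch.
    """
    merged = {}
    for table in tables:
        for sample, counts in table.items():
            if sample in merged:
                raise ValueError(f"duplicate sample name: {sample}")
            merged[sample] = dict(counts)
    return merged


def to_matrix(table):
    """Dense (samples, sequences, counts) view over the union of sequences."""
    seqs = sorted({seq for counts in table.values() for seq in counts})
    samples = sorted(table)
    return samples, seqs, [[table[s].get(q, 0) for q in seqs] for s in samples]
```

Chimera removal then runs once on the combined table, as in the protocol, so bimeras are judged against parents from every batch.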

Title: DADA2 workflow optimization decision tree.

Data Lifecycle Management