DADA2 Pipeline for ASVs: A Comprehensive Guide for Biomedical Research and Microbiome Analysis


Abstract

This article provides a complete guide to the DADA2 (Divisive Amplicon Denoising Algorithm) pipeline for generating Amplicon Sequence Variants (ASVs), tailored for researchers and professionals in microbiology, drug development, and clinical studies. We cover the foundational theory of error-correction and ASVs versus OTUs, deliver a step-by-step methodological walkthrough from raw reads to taxonomy assignment, address common troubleshooting and optimization for challenging datasets (e.g., host-derived or low-biomass samples), and critically evaluate DADA2's performance against other bioinformatics tools. The guide synthesizes best practices for robust, reproducible microbiome analysis applicable to biomedical research.

Understanding DADA2 and ASVs: From Core Concepts to Revolutionizing Microbiome Resolution

Within the context of a broader thesis on the DADA2 pipeline for ASV research, this document outlines the fundamental shift in microbial community analysis from Operational Taxonomic Unit (OTU) clustering to Exact Sequence Variant (ESV) or ASV determination. ASVs are biological sequences distinguished by single-nucleotide differences, providing higher resolution and reproducibility than OTU-based methods, which cluster sequences based on an arbitrary similarity threshold (e.g., 97%).

Comparative Analysis: OTU vs. ASV

Table 1: Quantitative Comparison of OTU Clustering and ASV Methods

| Feature | OTU Clustering (97%) | ASV (DADA2) |

|---|---|---|

| Basis | Clustering by % similarity (subjective threshold) | Exact biological sequences (no clustering) |

| Resolution | Species/Genus level | Single-nucleotide difference (strain-level) |

| Reproducibility | Low (varies with algorithm/parameters) | High (deterministic, reproducible across runs) |

| Chimeric Sequence Handling | Post-clustering removal, often incomplete | Separate de novo step after denoising (removeBimeraDenovo), applied to exact sequences |

| Typical Output Count | Lower (artificial groups) | Higher (true biological variants) |

| Computational Demand | Moderate (distance matrix calculation) | High (error model training, partition) |

| Key Advantage | Computational simplicity, historical data | Biological precision, longitudinal study compatibility |
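
The practical consequence of the 97% threshold can be shown with a small calculation. The sketch below uses a hypothetical 250-nt amplicon and a single-substitution variant (both sequences are invented for illustration) and compares how a similarity threshold and an exact-sequence comparison treat them.

```python
def percent_identity(a: str, b: str) -> float:
    """Percent identity between two equal-length, already aligned sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    matches = sum(x == y for x, y in zip(a, b))
    return 100.0 * matches / len(a)


# Hypothetical 250-nt amplicon and a variant carrying one substitution.
variant_a = "ACGT" * 62 + "AC"
variant_b = variant_a[:100] + "T" + variant_a[101:]

identity = percent_identity(variant_a, variant_b)
same_otu = identity >= 97.0          # a 97% threshold merges the two variants
same_asv = variant_a == variant_b    # exact-sequence comparison keeps them apart

print(f"identity = {identity:.1f}%, same OTU: {same_otu}, same ASV: {same_asv}")
```

A single substitution in 250 nt leaves the pair well above 97% identity, so threshold clustering reports one feature where an ASV method can report two.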

Application Notes & Protocols

Protocol 1: Core DADA2 Workflow for ASV Inference from Paired-end Illumina Data

This protocol details the standard pipeline for deriving ASVs from raw FASTQ files.

Research Reagent Solutions & Essential Materials:

- Illumina MiSeq/HiSeq Platform: Generates paired-end 16S rRNA gene amplicon sequences (e.g., V4 region).

- Sample-specific Barcodes & Adapters: For multiplexed sequencing.

- DADA2 R Package (v1.28+): Core software for ASV inference.

- R Environment (v4.0+): With dependencies (ShortRead, ggplot2, Biostrings).

- Reference Taxonomy Database: e.g., SILVA v138.1, Greengenes2 2022.2, for taxonomic assignment.

- High-Performance Computing (HPC) Cluster: Recommended for large datasets (>100 samples).

Detailed Methodology:

- Demultiplexing & Primer Removal: Use `cutadapt` or `dada2::removePrimers` to trim sequencing adapters and PCR primers. Output: trimmed FASTQ files.

- Quality Filtering & Trimming: In R, run `filterAndTrim`. Typical parameters: `maxN=0`, `maxEE=c(2,2)`, `truncQ=2`. This removes low-quality reads.

- Error Rate Learning: Execute `learnErrors` on a subset of data to model the platform-specific error profile.

- Dereplication & Sample Inference: Run `derepFastq` followed by the core `dada` function to infer true biological sequences per sample.

- Merge Paired Reads: Use `mergePairs` to combine forward and reverse reads, creating a contiguous ASV sequence.

- Construct Sequence Table: Generate an ASV abundance table with `makeSequenceTable`.

- Remove Chimeras: Apply `removeBimeraDenovo` with `method="consensus"` to filter out PCR chimeras.

- Taxonomic Assignment: Assign taxonomy using `assignTaxonomy` against a curated reference database.

- Downstream Analysis: Proceed with the phyloseq R package for ecological analysis (alpha/beta diversity, differential abundance).
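
The dereplication step in the list above is conceptually simple, and a language-neutral sketch may help readers who have not used it before. The Python below is an illustration only, not the DADA2 implementation: it collapses identical reads into unique sequences with abundances, and omits the per-position quality summaries that `derepFastq` also retains. The reads are hypothetical.

```python
from collections import Counter


def dereplicate(reads):
    """Collapse identical reads into (sequence, abundance) pairs, most abundant first."""
    counts = Counter(reads)
    # Sort by descending abundance, then alphabetically for a stable order.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical toy reads; real reads would be hundreds of bases long.
reads = ["ACGT", "ACGT", "ACGA", "ACGT", "TTGA", "ACGA"]
uniques = dereplicate(reads)

for sequence, abundance in uniques:
    print(sequence, abundance)
```

Working on unique sequences with counts, rather than on every read, is what keeps the later denoising step tractable for large samples.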

Protocol 2: Benchmarking ASV Fidelity via Mock Community Analysis

A validation protocol to assess the accuracy of the DADA2 pipeline.

Research Reagent Solutions & Essential Materials:

- ZymoBIOMICS Microbial Community Standard (D6300): A defined mock community with known genomic composition.

- DNA Extraction Kit: e.g., DNeasy PowerSoil Pro Kit, for consistent cell lysis and DNA purification.

- 16S rRNA Gene PCR Primers (e.g., 515F/806R): For the target hypervariable region.

- Quantification Kit (Qubit dsDNA HS Assay): For accurate DNA concentration measurement.

- DADA2 Pipeline: As described in Protocol 1.

Detailed Methodology:

- Sample Preparation: Extract DNA from the mock community standard in triplicate following the kit's protocol. Include an extraction negative control.

- Amplification & Sequencing: Perform PCR amplification with barcoded primers under optimized conditions. Pool amplicons and sequence on an Illumina MiSeq with 2x250 bp chemistry.

- ASV Inference: Process raw reads through the full DADA2 pipeline (Protocol 1).

- Data Analysis & Validation:

- Compare inferred ASV sequences to the expected reference sequences of the mock community strains.

- Calculate sensitivity (percentage of expected strains detected) and false positive rate (percentage of ASVs not matching any expected strain).

- Assess the correlation between the known relative abundance of each strain and the sequence count of its corresponding ASV (Pearson r > 0.95 indicates high fidelity).

Visualizations

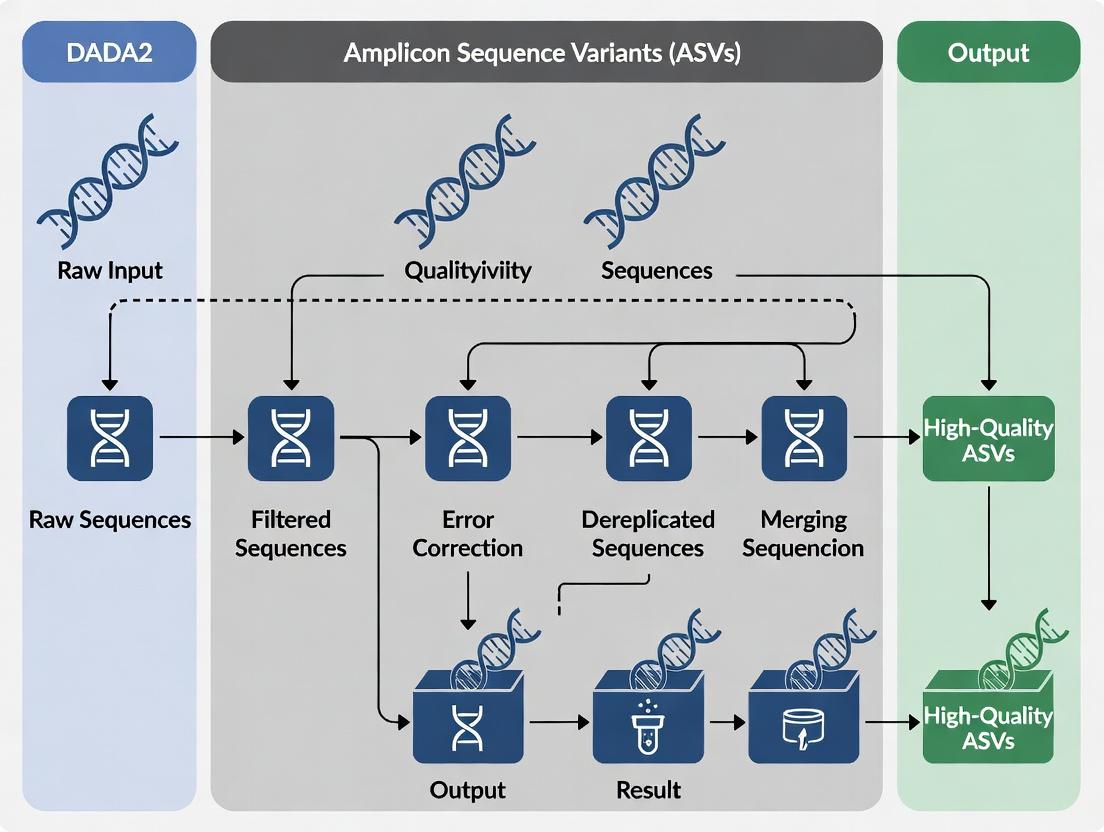

Title: DADA2 ASV Inference Workflow

Title: OTU vs ASV Methodological Consequences

This document serves as a critical application note within a broader thesis investigating the DADA2 (Divisive Amplicon Denoising Algorithm) pipeline for Amplicon Sequence Variant (ASV) research. Unlike traditional Operational Taxonomic Unit (OTU) clustering, which heuristically groups sequences based on a fixed similarity threshold (e.g., 97%), DADA2 resolves exact biological sequences by modeling and correcting Illumina-sequenced amplicon errors. This shift from fuzzy clusters to precise variants provides finer resolution for microbial community analysis, directly impacting biomarker discovery, therapeutic microbiome modulation, and translational drug development.

Core Algorithm: Error Modeling and Denoising

DADA2 employs a novel, parameterizable error model built from the data itself.

Learning the Error Rates

The algorithm first learns error rates specific to each nucleotide transition and quality score from the sequence data, alternating between sample inference and error rate estimation until the two converge.

Quantitative Summary of Error Model Parameters

| Parameter | Description | Typical Range/Value | Impact on Inference |

|---|---|---|---|

| Error Rate (ε) | Probability of a given substitution (e.g., A→C) at a given quality score. | $10^{-8}$ to $10^{-2}$ (platform-dependent) | Core of the model; higher rates require more evidence for a variant to be called real. |

| Error Matrix (E) | 16 × Q matrix of transition probabilities: one row per nucleotide transition (e.g., A→C, A→G, A→T, A→A) and one column per quality score. | Learned from data. | Encodes how the error rate depends on the nucleotide transition and the base quality. |

| Read Production Rate (λ) | Probability that a read of a true sequence is observed as a particular error-containing sequence; multiplied by the true sequence's abundance, it gives the expected number of such error reads. | Computed per sequence pair from E. | Sets the mean of the Poisson model used in the abundance p-value to distinguish true sequences from errors. |

| P-value Threshold (α; `OMEGA_A`) | Significance threshold for the abundance p-value. | Default = $10^{-40}$ | Stringency control; lower values reduce false positives but may miss rare variants. |

Protocol: Error Rate Learning from a Mock Community

- Objective: Validate and tune the error model using a known microbial community.

- Materials: Illumina FASTQ files from sequencing a ZymoBIOMICS or ATCC mock community.

- Method:

- Process Reads: Trim, filter, and dereplicate reads using `filterAndTrim()` and `derepFastq()` in the DADA2 R package.

- Learn Errors: Run `learnErrors(derep, randomize=TRUE, multithread=TRUE)`. With `randomize=TRUE`, the samples used for learning are picked at random rather than in the order supplied, so the model is not fitted to the first few samples alone.

- Visualize Fit: Plot the error model using `plotErrors(err, nominalQ=TRUE)`. A good fit shows the black line (estimated error rates) tracking the observed points, with error rates falling as the quality score rises. The red line shows the rates expected under the nominal definition of the Q-score.

- Validation: Compare inferred ASVs to the known reference sequences. Calculate sensitivity and precision.

Divisive Partitioning for Denoising

DADA2 denoises by repeatedly partitioning reads into core and outlier sequences, contrasting with greedy clustering.

Detailed Denoising Protocol

- Input: Filtered, dereplicated reads and the learned error model.

- Core Algorithm Steps (via the `dada()` function):

- Start with all reads in a single partition.

- For each partition, compute the abundance p-value for each sequence versus the most abundant (central) sequence, using the Poisson model and the error matrix.

- If the most significant p-value is below the threshold (α), partition the reads into two groups: the "core" (central sequence and all reads consistent with it as potential errors) and the "outlier" (the divergent sequence and its potential errors).

- Repeat this process recursively on each new partition until no more significant outliers are found.

- The final output is a list of "sequence hubs" (denoised sequences) with corrected read abundances.
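
The abundance p-value at the heart of these steps can be sketched numerically. The Python below is a simplified illustration, not the DADA2 implementation: it assumes a single uniform per-base error rate instead of the quality-aware error matrix, and computes the probability of seeing at least the observed number of reads of a candidate sequence if all of them were errors from the central sequence, conditional on the candidate being observed at least once. The function names and all numbers are hypothetical.

```python
import math


def read_production_rate(length, mismatches, per_base_error):
    """Simplified lambda: chance that one read of the parent shows these exact mismatches.

    Assumption: every base has the same error rate, split evenly over three substitutions.
    """
    per_substitution = per_base_error / 3.0
    return (per_substitution ** mismatches) * ((1.0 - per_base_error) ** (length - mismatches))


def abundance_p_value(observed, parent_abundance, lam):
    """P(X >= observed | X >= 1) for X ~ Poisson(parent_abundance * lam)."""
    if observed < 1:
        raise ValueError("observed must be at least 1")
    mu = parent_abundance * lam
    if mu <= 0.0:
        return 0.0
    # Sum the upper tail term by term in log space so very small p-values
    # are not lost to rounding, as they would be with 1 - CDF.
    log_mu = math.log(mu)
    tail = 0.0
    k = observed
    while True:
        term = math.exp(k * log_mu - mu - math.lgamma(k + 1))
        tail += term
        if k > mu and term <= tail * 1e-17:
            break
        k += 1
    return min(1.0, tail / -math.expm1(-mu))


ALPHA = 1e-40  # significance threshold; an assumption mirroring dada2's OMEGA_A default

# Hypothetical case: central sequence with 1000 reads, 250-nt amplicon, 0.1% per-base error.
lam = read_production_rate(length=250, mismatches=1, per_base_error=0.001)
p_single = abundance_p_value(1, 1000, lam)   # one read: fully explained by error
p_three = abundance_p_value(3, 1000, lam)    # three reads: unusual, but not significant
p_fifty = abundance_p_value(50, 1000, lam)   # fifty reads: far too many to be errors

for label, p in [("1 read", p_single), ("3 reads", p_three), ("50 reads", p_fifty)]:
    print(label, p, "-> new partition" if p < ALPHA else "-> kept as error")
```

Under these assumptions only the 50-read variant is split off into its own partition, which illustrates why a one-off variant needs enough reads before it is called a real sequence.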

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in DADA2/ASV Pipeline |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Critical for minimal PCR amplification bias and error introduction during library preparation. Errors here become input for DADA2's model. |

| Quant-iT PicoGreen dsDNA Assay | Accurate quantification of amplicon libraries prior to pooling and sequencing, ensuring even read depth across samples. |

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard for paired-end 16S rRNA (V3-V4, 2x300bp) and ITS sequencing, providing read lengths suitable for DADA2's overlap merging. |

| ZymoBIOMICS Microbial Community Standard | Mock community with defined genomic composition. Essential for validating the entire DADA2 pipeline, from error rate learning to final ASV calling. |

| Mag-Bind Environmental DNA 96 Kit | For consistent, high-yield microbial DNA extraction from complex samples (e.g., soil, stool), ensuring representative input for PCR. |

| DADA2 R Package (v1.28+) | The primary software implementation. Requires R (v4.0+). Key functions: learnErrors(), dada(), mergePairs(). |

| Phred Quality Score Data (embedded in FASTQ) | The foundational data for initial quality filtering and informing the error model. Not a physical reagent, but the primary input "material." |

DADA2 Workflow Visualization

Title: DADA2 Standard Bioinformatic Analysis Workflow

Title: Divisive Partitioning Logic of the DADA2 Algorithm

Within the broader thesis on the DADA2 (Divisive Amplicon Denoising Algorithm 2) pipeline for Amplicon Sequence Variant (ASV) research, its adoption represents a paradigm shift from Operational Taxonomic Unit (OTU) clustering. This shift directly addresses three pillars critical for translational biomedical research in microbiology, oncology, and drug development: Reproducibility, Resolution, and Quantitative Accuracy. This application note details protocols and data supporting these advantages.

Reproducibility: Standardized ASV Inference

The DADA2 pipeline replaces heuristic OTU clustering with a model-based, error-correcting algorithm. This ensures the same input data yields identical ASVs across computational runs, a foundational requirement for collaborative and longitudinal studies.

Protocol 1.1: Core DADA2 Workflow for 16S rRNA Gene Sequencing

- Input: Demultiplexed paired-end FASTQ files.

- Step 1 - Filter & Trim: Remove low-quality bases and trim primers. Use `filterAndTrim()` with parameters `maxN=0`, `maxEE=c(2,2)`, `truncQ=2`.

- Step 2 - Learn Error Rates: Model error profiles from data using `learnErrors()`.

- Step 3 - Dereplication: Combine identical reads into unique sequences with abundances (`derepFastq()`).

- Step 4 - Sample Inference: Apply the core denoising algorithm to infer true biological sequences (`dada()`).

- Step 5 - Merge Paired Reads: Combine forward and reverse reads, rejecting pairs whose overlapping regions disagree (`mergePairs()`).

- Step 6 - Construct ASV Table: Build a sequence-by-sample count matrix (`makeSequenceTable()`).

- Step 7 - Remove Chimeras: Identify and remove PCR chimeras (`removeBimeraDenovo()`).

- Output: A reproducible Amplicon Sequence Variant (ASV) table.
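
The idea behind the chimera-removal step can be illustrated with a small sketch. A bimera is a sequence that can be rebuilt from the left part of one more-abundant sequence and the right part of another. The Python below is a simplified illustration under stated assumptions (equal-length sequences, exact matches only, any strictly more abundant sequence may act as a parent); it is not the algorithm used by `removeBimeraDenovo()`, and the sequences and counts are hypothetical.

```python
def is_exact_bimera(candidate, parents):
    """True if candidate is a left segment of one parent joined to a right segment of another."""
    n = len(candidate)
    for left in parents:
        for right in parents:
            if left == right:
                continue
            for cut in range(1, n):
                if candidate[:cut] == left[:cut] and candidate[cut:] == right[cut:]:
                    return True
    return False


def find_bimeras(abundances):
    """Flag sequences explainable as bimeras of strictly more abundant sequences."""
    flagged = []
    for seq, count in abundances.items():
        parents = [p for p, c in abundances.items() if c > count and len(p) == len(seq)]
        if is_exact_bimera(seq, parents):
            flagged.append(seq)
    return flagged


# Hypothetical denoised sequences with read counts.
table = {
    "AAAAACCCCC": 500,   # parent A
    "GGGGGTTTTT": 300,   # parent B
    "AAAAATTTTT": 12,    # left half of A + right half of B
    "ACACACACAC": 40,    # genuine low-abundance sequence
}
print(find_bimeras(table))
```

Because denoised sequences are exact, this kind of parent check is far more specific than chimera screening on noisy reads or on clustered centroids.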

Diagram Title: Reproducible DADA2 ASV Inference Workflow

Resolution: Distinguishing Closely Related Taxa

ASVs are resolved to the level of single-nucleotide differences, providing species- or even strain-level resolution. This is crucial for tracking pathogenic strains, differentiating tumor microbiome signatures, or identifying biomarkers.

Table 1: Comparative Resolution of OTU vs. ASV Methods on Mock Community Data

| Method | Clustering Threshold | # of Features Inferred | Match to Known Strains | Spurious Features |

|---|---|---|---|---|

| OTU (97%) | 97% similarity | 8 | 7 | 2 |

| OTU (99%) | 99% similarity | 15 | 10 | 5 |

| DADA2 (ASV) | Exact sequence | 20 | 20 | 0 |

The data illustrate the ASV approach's ability to resolve all known strains in a mock community without generating spurious features.

Quantitative Accuracy: From Relative to Absolute Abundance

DADA2's read count per ASV is a more accurate representation of starting template concentration than clustered OTU counts, as it avoids inflation from arbitrary cluster merging. This improves correlation with qPCR and metagenomic data.

Protocol 1.2: Validating Quantitative Accuracy via Spike-Ins

- Objective: Assess the linearity between input cell count and ASV read count.

- Materials: Defined mock microbial community with known cell concentrations (e.g., ZymoBIOMICS Microbial Community Standard).

- Procedure:

- Perform genomic DNA extraction from serial dilutions of the mock community.

- Amplify the 16S rRNA V4 region using barcoded primers (515F/806R).

- Sequence on an Illumina MiSeq with 2x250 bp chemistry.

- Process data through the DADA2 pipeline (Protocol 1.1).

- Map ASVs to the known reference sequences of the mock community.

- Analysis: Plot the log-transformed input cell count against the log-transformed sequence count for each resolved strain. Calculate the Pearson correlation coefficient (r) and report the coefficient of determination (R²).

Diagram Title: Protocol for Validating ASV Quantitative Accuracy

Table 2: Quantitative Accuracy Metrics: DADA2 vs. OTU Clustering

| Metric | DADA2 (ASVs) | OTU Clustering (97%) |

|---|---|---|

| Mean Correlation (R²) to Spike-in Abundance | 0.98 | 0.85 |

| Coefficient of Variation (Technical Replicates) | < 5% | 10-15% |

| False Abundance Inflation | Minimal | High (due to cluster merging) |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in DADA2/ASV Research |

|---|---|

| ZymoBIOMICS Microbial Community Standard | Defined mock community of known composition and abundance for validating pipeline accuracy and resolution. |

| PhiX Control v3 (Illumina) | Spiked-in during sequencing for error rate monitoring and calibrating base calling. |

| DADA2 R Package (v1.28+) | Core software implementing the error model and denoising algorithm for ASV inference. |

| QIIME 2 (with DADA2 plugin) | A reproducible, scalable platform that incorporates DADA2 for full microbiome analysis pipelines. |

| Silva or GTDB Reference Database | Curated rRNA databases for taxonomic assignment of inferred ASVs. |

| PCR Reagents (Low-bias Polymerase) | High-fidelity enzymes (e.g., Phusion, Q5) to minimize amplification errors that burden the error-correction model. |

| Magnetic Bead-based Cleanup Kits | For consistent size selection and purification of amplicon libraries, reducing primer dimer contamination. |

This document details the foundational prerequisites for executing the DADA2 (Divisive Amplicon Denoising Algorithm) pipeline, a core methodology for resolving Amplicon Sequence Variants (ASVs) in marker-gene analysis. Within the broader thesis investigating optimal ASV inference for drug development microbiome research, rigorous data preparation and software setup are critical for ensuring reproducible, high-fidelity results that can inform clinical decisions and therapeutic discovery.

Input Data Requirements

Paired-end Read Specifications

DADA2 processes demultiplexed Illumina paired-end sequencing data. The following requirements are mandatory:

Table 1: Required Paired-end Read Characteristics

| Feature | Requirement | Rationale |

|---|---|---|

| Format | Demultiplexed FASTQ files (.fastq or .fq.gz). | DADA2 operates on per-sample files. |

| Naming Convention | Consistent, parseable (e.g., SampleName_R1_001.fastq, SampleName_R2_001.fastq). | Enables automated sample name inference. |

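| Read Length | Typically 2×250 bp or 2×300 bp for V3-V4 16S rRNA regions (~460 bp amplicon); shorter reads (e.g., 2×150 bp) suit only shorter amplicons such as V4. Must be long enough for ≥ 20 bp overlap after trimming. | Ensures sufficient overlap for merging paired reads. |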

| Overlap | Minimum 20 base pairs after quality trimming. | Essential for accurate read merging. |

| Platform | Illumina MiSeq, HiSeq, or NovaSeq recommended. | The pipeline is optimized for Illumina error profiles. |

Quality Score Encoding

Accurate quality score interpretation is essential for DADA2's error model.

Table 2: Quality Score Encoding Requirements

| Encoding | Accepted? | Action |

|---|---|---|

| Sanger / Illumina 1.8+ (Phred+33) | Yes (Standard). | Directly compatible. |

| Illumina 1.3+ / 1.5+ (Phred+64) | No. | Must be converted to Phred+33 using seqtk or Bioconductor. |

| Check Method | Use `head -n 4 in.fastq` to view the ASCII quality characters on the fourth line of a record. | Confirm scores align with Sanger range. |
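
The check in the last row can be automated with a simple heuristic. The sketch below guesses the ASCII offset from the range of quality characters; it is an illustration under stated assumptions (characters below `;` only occur in Phred+33 data, characters above `K` only in Phred+64 data), and it returns `None` when the observed range fits both encodings. The quality strings are hypothetical.

```python
def guess_phred_offset(quality_lines):
    """Guess the ASCII offset (33 or 64) from quality strings; None if ambiguous."""
    codes = [ord(ch) for line in quality_lines for ch in line]
    if not codes:
        raise ValueError("no quality characters supplied")
    if min(codes) < 59:      # below ';': impossible in the +64 encodings
        return 33
    if max(codes) > 75:      # above 'K': beyond the usual Phred+33 range
        return 64
    return None


def decode_qualities(quality_line, offset):
    """Convert a quality string to integer Q-scores."""
    return [ord(ch) - offset for ch in quality_line]


# Hypothetical quality strings.
sanger_like = ["IIIIHHGG#5"]
old_illumina_like = ["hhhhggfeBB"]
ambiguous = ["IIIIIIII"]

print(guess_phred_offset(sanger_like), guess_phred_offset(old_illumina_like),
      guess_phred_offset(ambiguous))
print(decode_qualities("#5I", 33))
```

Scanning a few thousand records rather than a single one makes the guess more reliable, because low-quality characters are rare in good runs.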

Software Installation Protocol

Comprehensive Installation via Conda

The recommended method ensures dependency resolution.

Installation via R/Bioconductor

For users within an existing R ecosystem.

Validation Test with Mock Data

Application Note: Pre-processing Workflow Protocol

Objective: Process raw FASTQ files into a quality-filtered, error-corrected sequence table.

Materials:

- Compute resource (Unix-based OS, ≥16GB RAM for large datasets).

- Demultiplexed paired-end FASTQ files.

- Installed software (Section 3).

Procedure:

- Initial Quality Assessment:

- DADA2 Core Processing in R:

Visual Workflow

Title: DADA2 Pre-processing and ASV Inference Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in DADA2/ASV Research | Specification/Note |

|---|---|---|

| Illumina Sequencing Kit | Generate paired-end amplicon data. | MiSeq Reagent Kit v3 (600-cycle) for 2x300 bp reads. |

| PCR Primers | Target hypervariable region of marker gene (e.g., 16S rRNA). | Must be well-documented (e.g., 341F/806R for V3-V4). |

| Positive Control | Assess pipeline accuracy. | Mock microbial community (e.g., ZymoBIOMICS D6300). |

| Negative Control | Detect reagent/lab contamination. | Nuclease-free water taken through extraction/PCR. |

| Silva Reference Database | Assign taxonomy to ASVs. | SILVA SSU NR 99 (v138.1 or newer) formatted for DADA2. |

| Compute Environment | Run computationally intensive steps. | Unix-based system (Linux/macOS) with ≥16GB RAM. |

| Sample Metadata File | Associate biological variables with ASV data. | Tab-separated (.tsv) file with sample IDs matching FASTQ names. |

Application Notes

DADA2 (Divisive Amplicon Denoising Algorithm) is a pivotal pipeline for generating Amplicon Sequence Variants (ASVs) from high-throughput sequencing data, particularly targeting the 16S rRNA gene and ITS region. Its core innovation is error modeling and correction without clustering sequences by an arbitrary similarity threshold (e.g., 97% for OTUs), thereby resolving biological sequences at single-nucleotide resolution.

Table 1: Comparison of DADA2 with Key Contemporary ASV/OTU Pipelines

| Feature | DADA2 | Deblur | UNOISE3 | QIIME 2 (with VSEARCH) | Traditional OTU Clustering |

|---|---|---|---|---|---|

| Core Method | Divisive, model-based denoising | Error-profile-based deblurring | UNOISE denoising algorithm | Heuristic, similarity-based clustering (e.g., 97%) | Heuristic clustering |

| Resolution | Amplicon Sequence Variant (ASV) | Amplicon Sequence Variant (ASV) | Zero-radius OTU (zOTU) | OTU or ASV (via DADA2 plugin) | Operational Taxonomic Unit (OTU) |

| Basis | Error model learned from data | Static, pre-defined error profile | Greedy clustering and denoising | User-defined % identity | User-defined % identity |

| Chimera Removal | Integrated (consensus) | Post-processing | Integrated | Post-processing (e.g., uchime2) | Often separate step |

| Paired-end Read Handling | Native merging & quality filtering | Requires pre-merged reads | Requires pre-merged reads | Native merging available | Often requires pre-processing |

| Run Time | Moderate | Fast | Fast | Fast to Moderate (clustering) | Fast |

| Key Advantage | High precision, robust error model, integrated workflow | Speed, reproducibility | Speed, sensitivity for low-abundance variants | Flexibility, extensive ecosystem | Simplicity, historical precedent |

| Primary Output | Feature table of ASVs, representative sequences | Feature table of ASVs, representative sequences | Feature table of zOTUs, representative sequences | Feature table (OTU/ASV), representative sequences | Feature table (OTU), representative sequences |

Positioning in the Ecosystem: DADA2 occupies a central role as a high-fidelity, denoising-based ASV caller. It is frequently benchmarked among the most accurate tools for error correction, though sometimes at a higher computational cost than Deblur or UNOISE3. Its integration as a core plugin within the QIIME 2 framework and availability as a standalone R package make it highly accessible. In the modern ecosystem, DADA2 is often the preferred choice for studies where maximizing biological resolution and minimizing false positives from sequencing errors are critical, such as in longitudinal cohort studies or intervention trials in drug development.

Protocol: DADA2 Workflow for 16S rRNA Paired-end Data (R Package)

Research Reagent Solutions & Essential Materials

| Item | Function |

|---|---|

| Raw FASTQ Files | Input sequencing data (R1 & R2 for paired-end). |

| DADA2 R Package (v1.28+) | Core software containing all denoising and processing functions. |

| R Studio / R Environment | Platform for executing the pipeline. |

| Sample Metadata File | Tab-separated file linking sample IDs to phenotypic/experimental conditions. |

| Reference Database (e.g., SILVA, GTDB) | For taxonomic assignment of ASVs (e.g., silva_nr99_v138.1_train_set.fa.gz). |

| PCR Primers (FWD & REV sequences) | Required for precise primer removal during trimming. |

| High-Performance Computing (HPC) Resources | Recommended for large datasets (>100 samples). |

Detailed Methodology

Environment Setup and Import:

Quality Profiling and Trimming:

Learn Error Rates and Denoise:

Merge Paired Reads and Construct Table:

Remove Chimeras and Assign Taxonomy:

Downstream Analysis: Output can be imported into `phyloseq` (R) or QIIME 2 for diversity analysis, differential abundance testing, and visualization.

Visualizations

DADA2 Core Workflow for 16S rRNA Analysis

DADA2 in the Bioinformatics Ecosystem

Step-by-Step DADA2 Pipeline: From Raw FASTQ to Analyzable ASV Table

Within the broader thesis on implementing the DADA2 pipeline for Amplicon Sequence Variant (ASV) research in microbial ecology and drug development, the initial quality assessment of raw sequencing reads is a critical first step. This protocol details the use of the plotQualityProfile function from the DADA2 R package to perform this essential diagnostic. Accurate ASV inference, which provides higher resolution than traditional OTU clustering, is fundamentally dependent on high-quality input data. This initial inspection directly informs the subsequent trimming and filtering parameters within the DADA2 workflow, ultimately impacting the reliability of downstream analyses, including biomarker discovery and therapeutic target identification.

Core Principles of Read Quality Visualization

The plotQualityProfile function generates an overview of the quality profiles for each cycle (base position) in a set of FASTQ files. It draws a grey-scale heat map of the frequency of each quality score at each position, overlaid with the mean quality score (green line) and the quartiles of the quality score distribution (orange lines; median solid, quartiles dashed). A red line shows the scaled proportion of reads that extend to at least that position, which matters for platforms with variable read lengths. The function does not plot per-position nucleotide frequencies; a tool such as FastQC reports those and can reveal issues like primer contamination or biased nucleotide composition. The quality score (Q-score) is a logarithmic measure of base-calling error probability: Q20 = 1% error (99% accuracy), Q30 = 0.1% error (99.9% accuracy).
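
The relationship between a Q-score and the base-calling error probability $p$ can be written explicitly, which makes the two reference points above easy to check:

$$
Q = -10 \log_{10} p \quad\Longleftrightarrow\quad p = 10^{-Q/10}
$$

$$
Q = 20 \;\Rightarrow\; p = 10^{-2} = 1\%, \qquad Q = 30 \;\Rightarrow\; p = 10^{-3} = 0.1\%
$$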

Experimental Protocol: Quality Assessment with plotQualityProfile

Materials and Pre-requisites

- Input Data: Paired-end or single-end FASTQ files from 16S rRNA gene (or other marker gene) amplicon sequencing (e.g., Illumina MiSeq).

- Software Environment: R (version 4.0 or later), RStudio, and the `dada2` package installed (`BiocManager::install("dada2")`).

- Computational Resources: Standard desktop or server with sufficient RAM to load read quality data.

Step-by-Step Methodology

Set Up R Session and Path.

Sort and List Forward and Reverse Reads.

Generate Quality Profile Plots.

Data Interpretation and Decision Points

- Quality Score Trend: Identify the position where the mean quality score drops below an acceptable threshold (e.g., Q30 or Q20). This becomes the primary basis for the `truncLen` parameter.

- Base Composition: Check per-base sequence content (e.g., with FastQC) for abnormal spikes in a particular base at the start or end of reads, which may indicate remaining adapter or primer sequences requiring trimming (`trimLeft` parameter).

- Forward vs. Reverse Comparison: Reverse reads typically degrade faster in Illumina sequencing. The `truncLen` for reverse reads is often shorter.
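
The first decision point can be expressed as a small rule. The sketch below assumes the per-cycle mean quality scores have already been extracted (for example, read off the quality profile) and returns the number of leading cycles that stay at or above a chosen threshold. The profiles are hypothetical, and `suggest_trunc_len` is an illustrative helper, not a DADA2 function.

```python
def suggest_trunc_len(mean_quality_by_cycle, min_quality=30):
    """Number of leading cycles whose mean quality stays at or above min_quality."""
    for cycle_index, quality in enumerate(mean_quality_by_cycle):
        if quality < min_quality:
            return cycle_index   # cycles 1..cycle_index are kept
    return len(mean_quality_by_cycle)


# Hypothetical 250-cycle profiles: a plateau followed by a linear decline.
forward_profile = [36.0] * 230 + [36 - 0.7 * i for i in range(1, 21)]
reverse_profile = [34.0] * 190 + [34 - 0.5 * i for i in range(1, 61)]

trunc_fwd = suggest_trunc_len(forward_profile)
trunc_rev = suggest_trunc_len(reverse_profile)
print(trunc_fwd, trunc_rev)
```

The suggested values are only a starting point: the final choice must still leave enough combined length for the forward and reverse reads to overlap.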

Summarized Quantitative Data from Typical Runs

Table 1: Representative Quality Metrics from a 250bp Paired-end MiSeq Run

| Read Direction | Cycle Position | Mean Q-Score Start | Mean Q-Score End | Recommended Truncation Length (Q20 cutoff) | Observed Primer Length |

|---|---|---|---|---|---|

| Forward (R1) | 1-250 | 35 | 22 | 240 | 20 |

| Reverse (R2) | 1-250 | 33 | 18 | 200 | 20 |

Table 2: Impact of Truncation on Read Retention in DADA2 Filtering Step

| Applied Filter Parameters (filterAndTrim) | Input Reads | Output Reads | % Retained | Post-Filtering Mean Expected Errors |

|---|---|---|---|---|

| truncLen=c(240,200), maxN=0, maxEE=c(2,2) | 1,000,000 | 925,000 | 92.5% | <1.0 |

| truncLen=c(200,180), maxN=0, maxEE=c(2,2) | 1,000,000 | 950,500 | 95.1% | <1.5 |

| No truncation, maxEE=c(5,5) | 1,000,000 | 880,000 | 88.0% | ~3.0 |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for DADA2-based ASV Research

| Item | Function/Description | Example/Supplier |

|---|---|---|

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Provides chemistry for 2x300bp paired-end sequencing, suitable for longer 16S rRNA hypervariable-region amplicons (e.g., V3-V4 region). | Illumina (Cat# MS-102-3003) |

| HotStarTaq Plus DNA Polymerase | Hot-start Taq polymerase for PCR amplification of the target region; as it lacks proofreading activity, a high-fidelity enzyme is preferable where minimizing PCR errors is critical. | Qiagen (Cat# 203645) |

| NucleoMag DNA/RNA Isolation Kits | For consistent microbial genomic DNA extraction from complex samples (stool, soil, biofilm). | Macherey-Nagel |

| Quant-iT PicoGreen dsDNA Assay Kit | Fluorometric quantification of double-stranded DNA library concentration for accurate normalization before pooling. | Thermo Fisher (Cat# P7589) |

| DADA2 R Package (v1.28+) | Core software suite containing plotQualityProfile, filterAndTrim, learnErrors, dada, and mergePairs for ASV inference. | Bioconductor |

| Phylogenetic Marker Gene Primers | Target-specific primers (e.g., 515F/806R for 16S V4; ITS1F/ITS2 for fungal ITS). | See Earth Microbiome Project protocols. |

Visualized Workflows

Title: Workflow for Initial Quality Assessment Informing DADA2 Filtering

Title: Interpreting plotQualityProfile to Set DADA2 Parameters

Within the broader thesis investigating the application of the DADA2 pipeline for Amplicon Sequence Variant (ASV) research in clinical drug development, the initial step of raw read filtering and trimming is paramount. This protocol details the parameterization of this critical quality control step, focusing on length, quality scores, and PhiX contamination removal, to ensure the generation of high-fidelity ASVs for downstream analyses.

The precision of the DADA2 pipeline in resolving single-nucleotide differences is highly sensitive to input read quality. Suboptimal filtering can propagate errors, creating artifactual ASVs that confound microbial community analyses essential for therapeutic target discovery. This document establishes standardized parameters based on current Illumina sequencing technology and the DADA2 algorithm's requirements.

Core Parameter Definitions & Rationale

Table 1: Core Filtering Parameters and Recommended Settings

| Parameter | Recommended Setting | Rationale & Empirical Basis |

|---|---|---|

| `truncLen` (Forward/Reverse) | F: 240, R: 200 (for 2x250bp V4) | Read length where median quality drops below Q30. Must maintain >20bp overlap for merging. |

| `maxN` | 0 | DADA2 requires no ambiguous bases (N). |

| `maxEE` (Expected Errors) | 2.0 | Calculated as sum(10^(-Q/10)) over the bases of a read. More flexible than a fixed Q-score cutoff. |

| `truncQ` | 2 | Truncate read at first instance of quality ≤ Q2. |

| `minLen` | 50 | Remove reads post-truncation that are too short for analysis. |

| PhiX Removal (`rm.phix`) | `TRUE` (k-mer based) | PhiX is a common sequencing control; its sequences must be identified and removed. |
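
How these parameters interact on a single read can be sketched in a few lines. The Python below is an illustration of the filtering logic, not the `filterAndTrim` implementation; the order of operations (quality truncation, length truncation, then the length, N, and expected-error checks) is an assumption based on the parameter descriptions, and the reads are hypothetical.

```python
def expected_errors(quals):
    """Sum of per-base error probabilities, EE = sum(10^(-Q/10))."""
    return sum(10 ** (-q / 10) for q in quals)


def filter_read(seq, quals, trunc_len=0, trunc_q=2, max_n=0, max_ee=2.0, min_len=50):
    """Return the trimmed (seq, quals) if the read passes all filters, else None."""
    # Truncate at the first base with quality <= trunc_q.
    for i, q in enumerate(quals):
        if q <= trunc_q:
            seq, quals = seq[:i], quals[:i]
            break
    # Truncate to a fixed length; reads shorter than trunc_len are discarded.
    if trunc_len > 0:
        if len(seq) < trunc_len:
            return None
        seq, quals = seq[:trunc_len], quals[:trunc_len]
    if len(seq) < min_len:
        return None
    if seq.count("N") > max_n:
        return None
    if expected_errors(quals) > max_ee:
        return None
    return seq, quals


# Hypothetical 60-base reads.
clean = filter_read("A" * 60, [30] * 60)                          # passes untouched
noisy = filter_read("A" * 60, [30] * 52 + [5] * 8)                # EE above 2: discarded
clipped = filter_read("A" * 60, [30] * 55 + [2] + [30] * 4)       # cut to 55 bases
too_short = filter_read("A" * 60, [30] * 40 + [2] + [30] * 19)    # cut to 40: discarded
has_n = filter_read("A" * 30 + "N" + "A" * 29, [30] * 60)         # ambiguous base: discarded

print(clean is not None, noisy, len(clipped[0]), too_short, has_n)
```

The `noisy` read shows why expected errors are more flexible than a fixed quality cutoff: a handful of poor bases is tolerated, but their summed error probability is not allowed to exceed the budget.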

Table 2: Parameter Impact on Read Retention

| Filtering Stringency | % Reads Retained | Estimated ASV Inflation Rate |

|---|---|---|

| Lenient (`maxEE=5`, `minLen=20`) | 95% | High (≤15%) |

| Standard (Table 1) | 70-85% | Low (≤2%) |

| Aggressive (`maxEE=1`, stringent `truncLen`) | 40-60% | Very Low (≤1%) |

Detailed Experimental Protocols

Protocol 3.1: Visual Assessment for Parameter Determination

Objective: To determine `truncLen` and `maxEE` cutoffs using per-cycle quality profiles.

- Generate Quality Profile Plots: Use `plotQualityProfile()` in DADA2 on a subset of samples (n=3-5).

- Identify Truncation Points: Visually inspect plots. Set `truncLen` at the cycle where the median quality score (solid orange line) falls below Q30.

- Calculate maxEE: Use the quality score distribution from the plots to model expected errors. The standard `maxEE=2` retains high-quality data while removing outliers.

- Verify Overlap: Ensure that `truncLen` (forward) + `truncLen` (reverse) exceeds the amplicon length by at least the required overlap (the >20 bp recommended in Table 1; `mergePairs` defaults to a 12 bp minimum) for successful read merging.
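
The overlap check is simple arithmetic and worth scripting before committing to truncation lengths. The sketch below assumes both reads start at opposite ends of the amplicon and that the amplicon length is measured over the same span as the reads (including primers if they are still present). The amplicon lengths used are approximate, illustrative values.

```python
def merge_overlap(trunc_len_fwd, trunc_len_rev, amplicon_length):
    """Bases shared by the truncated forward and reverse reads (negative means a gap)."""
    return trunc_len_fwd + trunc_len_rev - amplicon_length


def can_merge(trunc_len_fwd, trunc_len_rev, amplicon_length, min_overlap=20):
    """True if the truncated reads overlap by at least min_overlap bases."""
    return merge_overlap(trunc_len_fwd, trunc_len_rev, amplicon_length) >= min_overlap


# Illustrative amplicon lengths (assumptions): about 253 bp for V4, about 460 bp for V3-V4.
print(merge_overlap(240, 200, 253), can_merge(240, 200, 253))   # V4 with 2x250 reads
print(merge_overlap(240, 200, 460), can_merge(240, 200, 460))   # V3-V4 with the same truncation
print(merge_overlap(280, 220, 460), can_merge(280, 220, 460))   # V3-V4 with 2x300 reads
```

For amplicons with natural length variation, the check should use the longest expected amplicon, since those are the reads that lose overlap first.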

Protocol 3.2: PhiX Contamination Removal

Objective: To identify and remove reads originating from the PhiX sequencing control.

Method A: Alignment-based Removal (Recommended)

- Download PhiX Genome: Fetch the PhiX174 reference genome (Accession: NC_001422.1) from NCBI.

- Build Alignment Index: Use `bowtie2-build` or a similar aligner to index the PhiX genome.

- Align and Filter: Align a subset of reads (e.g., 10,000) to the PhiX index and calculate the proportion of aligning reads. If the proportion is negligible (e.g., <0.1%), proceed to filter all reads with `filterAndTrim()`; otherwise, remove the aligning reads first.

Method B: k-mer based Removal (DADA2 native)

- Scan for k-mers: The DADA2 `filterAndTrim` function can screen reads for k-mers known to be present in PhiX.

- Parameter Setting: Set `rm.phix=TRUE`. This is effective for standard Illumina runs where PhiX is spiked in at low concentration (~1%).

Protocol 3.3: Iterative Filtering and Optimization

Objective: To balance read retention with error rate minimization.

- Initial Filtering: Run `filterAndTrim()` with initial parameters from Table 1.

- Process through DADA2: Run the core sample inference steps (`learnErrors`, `dada`) on the filtered data.

- Monitor Error Rates: Plot the error models. Poor models often indicate residual low-quality reads.

- Adjust Parameters: If error rates are high, tighten `maxEE` (e.g., from 2.0 to 1.5) or shorten `truncLen`. Re-run and compare ASV tables and read counts.

Visualization of Workflows

Title: DADA2 Filtering and Trimming Decision Workflow

Title: Sequential Steps in DADA2 Read Filtering

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for DADA2 Filtering

| Item | Function / Relevance | Example / Specification |

|---|---|---|

| DADA2 R Package | Core software environment containing all filtering, learning, and inference algorithms. | Version ≥ 1.28.0 |

| RStudio IDE | Integrated development environment for executing and documenting the analysis pipeline. | Version with R ≥ 4.2 |

| High-Performance Computing (HPC) Cluster or equivalent | Necessary for processing large microbiome datasets (100s of samples) in a reasonable time. | Access to multi-core nodes with ≥16GB RAM. |

| PhiX174 Reference Genome | FASTA file for positive control and contamination screening. | NCBI Accession NC_001422.1 |

| Alignment Tool (e.g., Bowtie2) | Used for sensitive detection of PhiX contamination if k-mer screening is insufficient. | bowtie2 --very-sensitive-local |

| Quality Assessment Tool (e.g., FastQC) | For independent verification of read quality before and after filtering. | FastQC v0.12.0+ |

| Benchmark Dataset | A publicly available, well-characterized mock community dataset to validate parameter choices. | e.g., ZymoBIOMICS Microbial Community Standard |

Application Notes

Within the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, the core denoising steps transform raw amplicon sequencing reads into a table of exact biological sequences. This process moves beyond clustering-based Operational Taxonomic Units (OTUs) by modeling and correcting sequencing errors to infer the true sequences present in the original sample. The fidelity of this process is critical for downstream analyses in microbial ecology, biomarker discovery, and therapeutic development.

Learning Error Rates: This initial step builds an error model specific to the sequencing run. Rather than assuming a universal error profile, DADA2 learns the error rates from the data itself by examining the frequencies at which amplicon reads transition to other reads as a function of their quality scores. This run-specific model is fundamental for distinguishing true biological variation from technical noise.

Sample Inference: Using the learned error model, the algorithm applies a statistical test to each set of unique sequences. It compares the abundance of a sequence to the expected number of errors arising from more abundant sequences. This allows for the resolution of true ASVs that may differ by as little as a single nucleotide, providing fine-scale taxonomic resolution.

Merging Paired Reads: For paired-end sequencing, forward and reverse reads are merged after denoising to reconstruct the full amplicon. This is performed post-inference to maintain the highest quality information for error correction, creating longer, more informative sequences for classification and analysis.

Protocols

Protocol 1: Learning Run-Specific Error Rates

Objective: To construct an accurate error model for a given Illumina amplicon sequencing run.

Materials: See "Research Reagent Solutions" table.

Procedure:

- Subsampling: To reduce computation time, learn the model from a subset of the data rather than from every read. By default, `learnErrors` reads samples in the order provided until about 10⁸ bases have been collected (`nbases = 1e8`); set `randomize = TRUE` to draw samples at random.

- Error Model Parameterization: Using the `learnErrors` function in DADA2, the algorithm alternates between:
  a. Estimating the error rate for each possible nucleotide substitution (A→C, A→G, A→T, etc.) from the alignment of each read to its inferred source sequence.
  b. Re-estimating the abundances of sequences by reassigning the reads expected to be errors to their more abundant parent sequences.

- Model Convergence: Iterate until the error rates and sequence abundances stabilize. The output is a 16xN matrix of error rates (for the 4x4 nucleotide substitutions, including the four no-error cases) across each quality score (N).

- Validation: Plot the model with `plotErrors()`. The points are the error rates observed at each consensus quality score, and the black line is the fitted model. A well-fit model shows the line tracking the points, with error rates falling as quality increases.
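To show what the error-rate matrix contains, here is a minimal Python sketch that tabulates raw substitution frequencies by quality score. It is an illustration under strong simplifying assumptions: the "true" base is taken as known, the observations are hypothetical, and no smoothing is applied, whereas DADA2 infers the source sequences iteratively and fits a smoothed curve across quality scores.

```python
from collections import defaultdict


def estimate_error_rates(observations):
    """observations: iterable of (true_base, observed_base, quality).

    Returns {(true_base, observed_base, quality): rate}; for a fixed true base
    and quality score the rates sum to 1.
    """
    counts = defaultdict(int)
    totals = defaultdict(int)
    for true_b, obs_b, q in observations:
        counts[(true_b, obs_b, q)] += 1
        totals[(true_b, q)] += 1
    return {key: n / totals[(key[0], key[2])] for key, n in counts.items()}


# Hypothetical tallies for a true 'A' seen at two quality scores.
obs = ([("A", "A", 30)] * 999 + [("A", "G", 30)] * 1 +
       [("A", "A", 20)] * 98 + [("A", "C", 20)] * 2)
rates = estimate_error_rates(obs)
print("A->G at Q30:", rates[("A", "G", 30)])
print("A->C at Q20:", rates[("A", "C", 20)])
```

Each key corresponds to one cell of the matrix: one of the 16 base-to-base outcomes at one quality score.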

Protocol 2: Divisive Amplicon Denoising Algorithm (DADA) Sample Inference

Objective: To apply the error model and infer the true biological sequences (ASVs) in each sample.

Procedure:

- Dereplication: Collapse identical reads into "unique sequences" with associated abundance counts.

- Core Algorithm Execution: For each sample, run the `dada` function using the error model from Protocol 1.
  a. Partitioning: Start with all unique sequences in a single partition centered on the most abundant sequence.
  b. Model Testing: For each unique sequence, compute an abundance p-value: the probability of observing it at least as often as it was seen if all of its reads were errors derived from the center of its partition.
  c. Division: If the smallest p-value falls below the significance threshold (`OMEGA_A`, default 1e-40 in DADA2, far stricter than a conventional α=0.05), that sequence becomes the center of a new partition and all sequences are reassigned to the partition most likely to have produced them.
  d. Iteration: Repeat steps b-c until no remaining sequence is significantly over-abundant.

- Output: The centers of the final partitions are the inferred ASVs for that sample, and the reads assigned to each partition give the corrected sequence counts.
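The model test at the heart of the divisive step can be sketched numerically. The Python example below assumes a Poisson model for the number of error-derived reads, conditioned on the sequence having been observed at least once, and uses DADA2's documented default `OMEGA_A = 1e-40` as the threshold. The function names and the per-sequence error rate are hypothetical; in DADA2 that rate is derived from the learned error model and the alignment to the parent.

```python
import math

OMEGA_A = 1e-40  # DADA2's documented default for the OMEGA_A option


def poisson_tail(mu, a):
    """P(X >= a) for X ~ Poisson(mu), summed term by term in log space."""
    total, k = 0.0, a
    while True:
        term = math.exp(-mu + k * math.log(mu) - math.lgamma(k + 1))
        total += term
        if k > mu and term <= total * 1e-17:
            return total
        k += 1


def abundance_pvalue(abundance, parent_reads, error_rate):
    """Chance of seeing >= `abundance` error-derived copies, given at least one."""
    mu = parent_reads * error_rate           # expected number of such errors
    return poisson_tail(mu, abundance) / -math.expm1(-mu)


# Parent with 10,000 reads; assumed rate of 1e-4 for producing this exact variant.
for seen in (3, 60):
    p = abundance_pvalue(seen, 10_000, 1e-4)
    label = "new partition (ASV)" if p < OMEGA_A else "consistent with error"
    print(seen, "copies:", label)
```

A variant seen 3 times is well within what errors can explain, while 60 copies is far too many and would seed a new partition.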

Protocol 3: Merging Paired-End Reads Post-Denoising

Objective: To combine denoised forward and reverse reads into full-length amplicon sequences.

Procedure:

- Denoising: Perform sample inference separately on the forward and reverse read files.

- Pair Alignment: For each denoised pair of forward and reverse reads, align the overlapping region. The algorithm uses a simple Needleman-Wunsch global alignment.

- Merging Consensus: If the forward and reverse reads agree in the overlap region (by default, at least 12 overlapping bases with zero mismatches), they are merged into a single, full-length contig. The `minOverlap` parameter (e.g., 20 bases) and the `maxMismatch` parameter (e.g., 1) can be adjusted to tighten or relax these requirements.

- Chimera Removal: The final merged sequences are subjected to a de novo chimera check (e.g., using `removeBimeraDenovo`) to identify and remove artifacts formed by the fusion of two parent sequences during PCR.
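The overlap test can be illustrated with a simplified merger. This Python sketch is an assumption-laden stand-in for `mergePairs`: it tries ungapped overlaps from longest to shortest instead of performing an alignment, keeps the forward read's bases in the overlap, and uses a made-up 40-base amplicon.

```python
_COMPLEMENT = str.maketrans("ACGTN", "TGCAN")


def reverse_complement(seq):
    return seq.translate(_COMPLEMENT)[::-1]


def merge_pair(fwd, rev, min_overlap=12, max_mismatch=0):
    """Merge a forward read with the reverse complement of its mate.

    Returns the merged sequence, or None if no acceptable overlap exists.
    """
    rc = reverse_complement(rev)
    for ov in range(min(len(fwd), len(rc)), min_overlap - 1, -1):
        mismatches = sum(a != b for a, b in zip(fwd[-ov:], rc[:ov]))
        if mismatches <= max_mismatch:
            return fwd + rc[ov:]      # overlap bases are taken from fwd
    return None


# Hypothetical amplicon; the reads cover 0-29 and 15-39 (15-base overlap).
amplicon = "ACGTTGCAAGCTTAGGCTAACCGGTTAAGCCTGATCGATC"
fwd_read = amplicon[:30]
rev_read = reverse_complement(amplicon[15:])
print(merge_pair(fwd_read, rev_read) == amplicon)
```

Raising `max_mismatch` in a sketch like this makes spurious long overlaps more likely, which is one reason the default of zero mismatches is a sensible starting point.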

Data Presentation

Table 1: Typical Error Rates Learned by DADA2 from a 2x250bp Illumina MiSeq Run (V4 Region)

| Nucleotide Substitution | Mean Error Rate at Q30 | Mean Error Rate at Q25 |

|---|---|---|

| A→C | 2.5 x 10⁻⁴ | 8.0 x 10⁻⁴ |

| A→G | 1.8 x 10⁻⁴ | 6.2 x 10⁻⁴ |

| A→T | 1.2 x 10⁻⁴ | 4.5 x 10⁻⁴ |

| C→A | 2.1 x 10⁻⁴ | 7.1 x 10⁻⁴ |

| C→G | 1.5 x 10⁻⁴ | 5.5 x 10⁻⁴ |

| C→T | 3.0 x 10⁻⁴ | 1.1 x 10⁻³ |

| Average All | ~2.0 x 10⁻⁴ | ~7.0 x 10⁻⁴ |

Table 2: Impact of Denoising on Sequence Variant Resolution

| Processing Step | Output Description | Approximate Number from a 10⁷ Read Mock Community |

|---|---|---|

| Raw Reads | Total input sequences | 10,000,000 |

| After Quality Filter | High-quality reads | 8,500,000 |

| After DADA2 Inference | True Biological ASVs Inferred | 20 (matching known mock strains) |

| After Chimera Removal | Final ASV Table | 20 |

Visualizations

Title: DADA2 Core Denoising and Merging Workflow

Title: DADA Divisive Partitioning Algorithm Logic

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for DADA2 Protocol Execution

| Item | Function in Protocol |

|---|---|

| Illumina MiSeq/HiSeq Platform | Generates paired-end amplicon sequence data (e.g., 16S rRNA gene V3-V4 or V4 region) with associated per-base quality scores. |

| DADA2 R Package (v1.28+) | Primary software environment containing all core functions (learnErrors, dada, mergePairs, removeBimeraDenovo) for denoising. |

| High-Performance Computing (HPC) Cluster or Server | Necessary for processing large-scale amplicon datasets due to the computationally intensive nature of the sample inference algorithm. |

| Quality Assessment Tools (e.g., FastQC) | Used prior to DADA2 for initial visualization of read quality profiles to inform trimming parameters. |

| Reference Databases (e.g., SILVA, GTDB, UNITE) | Used post-denoising for taxonomic assignment of the final ASV sequences, linking variants to known biology. |

| PCR Reagents & Target-Specific Primers | Used in upstream sample preparation to amplify the genomic region of interest (e.g., 16S, ITS, 18S) before sequencing. |

| Quantitative Mock Community DNA | Essential positive control containing known sequences at defined abundances for validating pipeline accuracy and error rates. |

Constructing the Sequence Table and Removing Chimeras

Within the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, constructing the final sequence table and removing chimeras are critical downstream steps. This protocol follows sample inference and the merging of paired-end reads. The sequence table is the high-resolution analogue of the traditional OTU table, while chimera removal ensures that each ASV represents a true biological sequence, not a PCR artifact. These steps are foundational for accurate downstream ecological and statistical analyses in microbial ecology, biomarker discovery, and drug development research.

Core Concepts & Data

Table 1: Comparison of Chimera Detection Algorithms

| Algorithm | Principle | Key Strength | Reported False Positive Rate* | Reference |

|---|---|---|---|---|

| de novo (DADA2) | Identifies chimeras by aligning potential parents within the sample. | Effective without a reference database. | ~1-2% | Callahan et al. (2016) |

| Reference-based (UCHIME) | Compares sequences to a curated reference database of non-chimeric sequences. | High accuracy with a comprehensive database. | <1% | Edgar et al. (2011) |

| IDTAXA | A machine learning taxonomic classifier rather than a dedicated chimera detector; poorly classified ASVs can flag candidate chimeras for review. | Integrates taxonomic consistency. | Data-dependent | Murali et al. (2018) |

*Rates are approximate and dependent on dataset and parameters.

Table 2: Typical Output Metrics from DADA2 Chimera Removal

| Metric | Typical Range in 16S rRNA Studies | Interpretation |

|---|---|---|

| Input Sequences | 1,000 - 100,000 reads per sample | Post-merge, pre-chimera count. |

| Percent Chimeric | 10% - 40% of unique sequences | Highly dependent on amplicon length and PCR cycle count. Chimeras usually account for a much smaller share of reads than of unique sequences. |

| Non-Chimeric Output | 60% - 90% of input unique sequences | Final, high-quality ASVs for analysis. |

Application Notes & Protocols

Protocol: Constructing the Sequence Table in DADA2

Purpose: To create a sample-by-ASV abundance matrix from the merged sequence lists.

Materials & Software:

- R environment (v4.0+)

- DADA2 package (v1.24+)

- List of `dada` objects for each sample.

- List of merged sequences for each sample.

Procedure:

- Load Data: Ensure all sample inference (`dada()`) and read merging (`mergePairs()`) steps are complete for every sample in your dataset.

- Execute `makeSequenceTable`: Run the command `seqtab <- makeSequenceTable(mergers)`, where `mergers` is the list of merged samples from the previous step.

- Inspect the Table: Use `dim(seqtab)` to view the number of samples and ASVs. Use `seqtab[1:5, 1:5]` to preview the matrix. The table is stored as a matrix with rows as samples and columns as ASVs (sequences).

- Optional Length Filtering: Inspect the sequence length distribution with `table(nchar(getSequences(seqtab)))`. Remove non-target-length sequences (e.g., primer dimers) by subsetting: `seqtab <- seqtab[, nchar(colnames(seqtab)) %in% seq(250, 256)]` (adjust the range to your amplicon).
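The structure of the sequence table and the optional length filter can be sketched outside R. The Python example below is illustrative only: the per-sample counts and the very short sequences are hypothetical, and ordering columns by total abundance is an assumption about how the table is arranged.

```python
def make_sequence_table(per_sample_counts):
    """{sample: {sequence: count}} -> (samples, sequences, matrix).

    Rows are samples and columns are unique sequences, most abundant first.
    """
    samples = sorted(per_sample_counts)
    totals = {}
    for counts in per_sample_counts.values():
        for seq, n in counts.items():
            totals[seq] = totals.get(seq, 0) + n
    sequences = sorted(totals, key=lambda s: (-totals[s], s))
    matrix = [[per_sample_counts[s].get(seq, 0) for seq in sequences]
              for s in samples]
    return samples, sequences, matrix


def filter_by_length(sequences, matrix, min_len, max_len):
    """Drop columns whose sequence length falls outside [min_len, max_len]."""
    keep = [j for j, seq in enumerate(sequences) if min_len <= len(seq) <= max_len]
    return [sequences[j] for j in keep], [[row[j] for j in keep] for row in matrix]


mergers = {
    "S1": {"ACGTACGT": 10, "ACGT": 2},       # "ACGT" mimics a too-short artifact
    "S2": {"ACGTACGT": 5, "TTGGCCAA": 7},
}
samples, seqs, tab = make_sequence_table(mergers)
seqs_f, tab_f = filter_by_length(seqs, tab, 8, 8)
print(samples, seqs_f, tab_f)
```

The filtered table keeps both samples but only the two target-length sequences.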

Protocol: Reference-Based Chimera Removal with DADA2

Purpose: To identify and remove chimeric ASVs by comparison to a known reference database.

Materials:

- Sequence table (

seqtabmatrix). - Reference database FASTA file (e.g., SILVA, GTDB, UNITE).

- High-performance computing resources (for large datasets).

Procedure:

- Database Preparation: Download the latest non-redundant version of your preferred database (e.g., SILVA nr99). Ensure it is compatible (not clustered too aggressively).

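- Run Reference-Based Detection: Note that `removeBimeraDenovo` performs de novo detection only and has no reference mode. To screen against the database, export the ASV sequences to FASTA, run an external reference-based tool (e.g., VSEARCH with `--uchime_ref`), and drop the flagged ASVs from `seqtab`.

- Calculate Statistics: Determine the proportion of chimeric sequences, both as a fraction of ASVs and as a fraction of reads.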

- Track Retention: Record the number of ASVs and sequences retained after chimera removal for pipeline summary statistics.
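The retention statistics can be computed from the tables before and after chimera removal. The sketch below uses Python with hypothetical count matrices (rows are samples, columns are ASVs); in R the read-level figure is commonly obtained as the ratio of the two table sums.

```python
def chimera_summary(matrix_before, matrix_after):
    """Fractions of ASVs and of reads removed as chimeric."""
    asvs_before, asvs_after = len(matrix_before[0]), len(matrix_after[0])
    reads_before = sum(map(sum, matrix_before))
    reads_after = sum(map(sum, matrix_after))
    return {
        "asv_fraction_chimeric": 1 - asvs_after / asvs_before,
        "read_fraction_chimeric": 1 - reads_after / reads_before,
    }


before = [[90, 5, 3, 2], [80, 10, 6, 4]]   # 4 ASVs, 200 reads
after = [[90, 5], [80, 10]]                # 2 ASVs, 185 reads
summary = chimera_summary(before, after)
print(summary)
```

In this toy case half of the ASVs are removed but only 7.5% of the reads, which illustrates why both figures are worth reporting.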

Protocol: De Novo Chimera Removal for Novel Environments

Purpose: To identify chimeras when a suitable reference database is unavailable or likely to be incomplete.

Procedure:

- Use the `removeBimeraDenovo` function with `method="pooled"`. The default, `method="consensus"`, checks each sample separately and then makes a consensus decision across samples.

- Pooling: The `pooled` method pools all samples together before chimera detection, increasing sensitivity for rare parent sequences that may be present in other samples.

- Validation: If possible, validate results on a subset using a reference-based method or by cross-referencing with a different algorithm (e.g., DECIPHER's `IdTaxa` classification for taxonomic incongruity).
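The principle behind de novo bimera detection can be shown with an exact-match toy version. This Python sketch is not DADA2's implementation: it assumes ungapped sequences, requires exact agreement with each parent, and uses an assumed 2-fold abundance requirement for parents. The sequences and counts are hypothetical.

```python
def shared_prefix(a, b):
    """Length of the common prefix of two strings."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def is_bimera(seq, abundance, table, min_fold=2.0):
    """True if seq equals the left part of one more-abundant sequence joined
    to the right part of a different more-abundant sequence."""
    parents = [p for p, n in table.items()
               if p != seq and n >= min_fold * abundance]
    left = {p: shared_prefix(seq, p) for p in parents}
    right = {p: shared_prefix(seq[::-1], p[::-1]) for p in parents}
    return any(left[a] + right[b] >= len(seq)
               for a in parents for b in parents if a != b)


table = {
    "AAAAAAAACCCCCCCC": 100,   # parent A
    "GGGGGGGGTTTTTTTT": 80,    # parent B
    "AAAAAAAATTTTTTTT": 5,     # left half of A + right half of B
    "AAAAAAAACCCCCCCG": 5,     # one-base variant of A, not a bimera
}
for s, n in table.items():
    print(s, is_bimera(s, n, table))
```

Only the third sequence is flagged, because it is the only one that two more-abundant parents can reconstruct between them.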

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Library Prep Preceding DADA2

| Item | Function in ASV Workflow | Key Consideration |

|---|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Minimizes PCR amplification errors, reducing false diversity and chimera formation. | Lower error rate is critical for true SNV detection. |

| Dual-Indexed Nextera-style Adapters | Allows for multiplexing of hundreds of samples with minimal index hopping/crosstalk. | Unique dual indexing is essential for Illumina sequencing. |

| Magnetic Bead Clean-up Kit (e.g., AMPure XP) | Size selection and purification of amplicon libraries, removing primer dimers and non-target fragments. | Bead-to-sample ratio dictates size cutoff. |

| Quantification Kit (e.g., Qubit dsDNA HS Assay) | Accurate measurement of library concentration for precise pooling and sequencing loading. | More accurate than spectrophotometry for low-concentration libraries. |

| Phasing Control (e.g., PhiX) | Added to sequencing runs (1-5%) to increase nucleotide diversity, improving base calling accuracy for low-diversity amplicon libraries. | Essential for reliable sequencing of single-gene amplicons. |

Visualizations

Title: DADA2 Sequence Table Construction and Chimera Removal Workflow

Title: Reference-Based Chimera Detection Principle

Within the broader thesis on the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, the step of assigning taxonomy is critical for transforming anonymous sequences into biologically meaningful data. This process involves comparing ASVs against curated reference databases, such as SILVA and GTDB, which provide the taxonomic framework for identification. The choice of database and interpretation of the output directly impact downstream ecological and functional inferences, especially in applied contexts like drug development where linking microbiota to host phenotypes is essential.

Reference Databases: A Quantitative Comparison

The selection of a reference database involves trade-offs between coverage, curation philosophy, and taxonomic nomenclature. Below is a comparison of the two most widely used databases for 16S rRNA gene sequencing.

Table 1: Comparison of SILVA and GTDB Reference Databases (2024 Data)

| Feature | SILVA (Release 138.1) | GTDB (Release 220) |

|---|---|---|

| Primary Curation Goal | Provide a comprehensive, manually curated rRNA database reflecting classical nomenclature. | Provide a phylogenetically consistent genome-based taxonomy, standardizing bacterial and archaeal classification. |

| Taxonomic Framework | Largely aligns with Bergey's Manual and historical literature; may contain polyphyletic groups. | Strictly based on genome phylogeny, resulting in significant reclassification of many taxa. |

| Number of Full-Length 16S Ref Seqs | ~2.8 million | ~1.2 million (derived from genomes) |

| Coverage of Prokaryotic Diversity | Extensive, but includes unclassified environmental sequences. | High for sequenced genomes, but may miss diversity from uncultivated taxa without genomes. |

| Update Frequency | Major releases every 2-3 years. | Regular releases (~every 6 months). |

| Typical Use Case | Ecological studies requiring comparability with vast prior literature. | Studies prioritizing phylogenetic accuracy and a standardized taxonomy. |

| Key Consideration | May include low-quality sequences; requires quality filtering (e.g., minBoot setting). | Implements major nomenclature changes (e.g., splitting of Pseudomonas, reclassification of Clostridia). |

Key Research Reagent Solutions

Table 2: Essential Materials & Tools for Taxonomy Assignment

| Item | Function/Explanation |

|---|---|

| DADA2 R Package (v1.30+) | Provides the assignTaxonomy() function (naive Bayesian classification down to genus) and the addSpecies() function (species assignment by exact matching). |

| IDTAXA (DECIPHER R Package) | An alternative algorithm using a machine learning approach; may be more accurate for noisy datasets. |

| SILVA SSU Ref NR 99 Dataset | The non-redundant version of SILVA, recommended for general use to reduce computational load. |

| GTDB Bacterial & Archaeal RefSeq Files | GTDB-formatted reference sequences and taxonomy files for use with classification tools. |

| minBoot Parameter | Confidence threshold (0-100); only assignments at or above this bootstrap confidence are kept. |

| Kraken2/Bracken | Alternative k-mer based classification system for ultra-fast profiling, often used with custom GTDB builds. |

| QIIME2 (q2-feature-classifier) | A plugin that provides a framework for training and using classifiers on reference databases. |

Experimental Protocols

Protocol 4.1: Taxonomy Assignment with DADA2 using SILVA

This protocol follows the DADA2 pipeline after the ASV table has been generated.

Download Reference Data:

- Access the SILVA website (https://www.arb-silva.de/). Navigate to the "Downloads" section.

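- Download the `SILVA_138.1_SSURef_NR99_tax_silva.fasta.gz` file. This is the non-redundant, curated version suitable for taxonomy assignment. Note that `assignTaxonomy()` expects reference FASTA headers in DADA2's semicolon-delimited taxonomy format, so either reformat this file accordingly or use the DADA2-formatted SILVA training files provided by the DADA2 maintainers.

- Uncompress the file: `gunzip SILVA_138.1_SSURef_NR99_tax_silva.fasta.gz`.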

Assign Taxonomy:

- In R, load the DADA2 library and your ASV sequence object (`seqtab.nochim`).

(Optional) Add Species-Level Annotation:

- For the V3-V4 region, you can attempt exact matching to add species.


Interpret Output:

- The `taxa` matrix will have rows corresponding to ASVs and columns for Kingdom, Phylum, Class, Order, Family, Genus.

- Any assignment with a bootstrap confidence below `minBoot` (here, 80) will be marked as `NA`. Inspect the distribution of bootstrap values for each taxonomic rank.
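The effect of the `minBoot` threshold on a single ASV can be sketched as follows. This Python example is illustrative: the lineage and bootstrap values are hypothetical, and blanking every rank from the first failure downward is an assumption that holds when support does not increase at lower ranks.

```python
RANKS = ["Kingdom", "Phylum", "Class", "Order", "Family", "Genus"]


def apply_min_boot(assignment, bootstraps, min_boot=80):
    """Keep ranks until the first one with support below min_boot;
    that rank and all lower ranks become None (NA)."""
    result, confident = [], True
    for name, boot in zip(assignment, bootstraps):
        confident = confident and boot >= min_boot
        result.append(name if confident else None)
    return result


lineage = ["Bacteria", "Firmicutes", "Bacilli",
           "Lactobacillales", "Lactobacillaceae", "Lactobacillus"]
support = [100, 100, 98, 95, 72, 60]
for rank, name in zip(RANKS, apply_min_boot(lineage, support)):
    print(rank, name)
```

With a threshold of 80 this ASV is resolved to Order, and Family and Genus are reported as missing.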

Protocol 4.2: Taxonomy Assignment with a GTDB-based Classifier in QIIME2

This protocol uses QIIME2's q2-feature-classifier plugin with a pre-fitted classifier.

Obtain a Pre-trained Classifier:

- Access the QIIME2 Data Resources page (https://docs.qiime2.org/current/data-resources/).

- Download the classifier artifact trained on GTDB Release 207 for the appropriate 16S region (e.g., `515F/806R`).

Run Taxonomy Classification:

- Execute the classification command on your ASV representative sequences (`rep-seqs.qza`).

Generate and View Results:

- Export the taxonomy table to a viewable format.

- Visualize the `taxonomy.qzv` file on https://view.qiime2.org to see assignments and confidence scores.

Visualization of Workflows and Logical Relationships

Taxonomy Assignment Workflow in DADA2/QIIME2

Logical Decision Process in assignTaxonomy()

Within the broader thesis employing the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, the transition from sequence processing to ecological and statistical analysis is critical. The phyloseq object in R is the fundamental data structure that integrates all components of an amplicon study—taxonomic assignments, sample metadata, phylogenetic tree, and the ASV abundance table—into a single, manageable R object. This protocol details the generation of a phyloseq object from DADA2 outputs, enabling subsequent downstream analyses such as alpha/beta diversity, differential abundance, and ordination.

Research Reagent Solutions & Essential Materials

Table 1: Key Software Packages and Their Functions

| Item | Function in Phyloseq Object Creation |

|---|---|

| R (v4.3.0+) | The statistical computing environment required to run all analyses. |

| RStudio | An integrated development environment (IDE) that facilitates R scripting and project management. |

| phyloseq (v1.44.0+) | The core R/Bioconductor package for handling and analyzing microbiome census data. |

| dada2 (v1.28.0+) | Provides the sequence processing pipeline output (ASV table, sequence fasta, taxonomy). |

| Biostrings | Efficiently handles biological sequences (DNAStringSet) for integrating ASV sequences into phyloseq. |

| ape | Package used for reading and manipulating phylogenetic trees (Newick format). |

| Sample Metadata (CSV) | Tabular data containing sample-specific variables (e.g., treatment, pH, host health status). |

| Taxonomy Table (CSV/TSV) | Assigned taxonomy for each ASV, typically from a classifier like IDTAXA or the RDP classifier. |

Application Notes & Protocol

Prerequisites and Input Data Preparation

Prior to phyloseq object assembly, ensure the DADA2 pipeline has been completed, yielding the following files:

- Sequence Table (`seqtab.rds`): An ASV abundance matrix (samples x ASVs).

- Taxonomy Assignment (`taxa.rds`): A taxonomic classification matrix (ASVs x taxonomic ranks).

- ASV Sequences (`asv_seqs.fasta`): A FASTA file containing the DNA sequences for each ASV.

- Sample Metadata (`metadata.csv`): A comma-separated file with sample identifiers as row names matching those in the sequence table.

Detailed Protocol: Constructing the Phyloseq Object

Step 1: Load Required R Packages.

Step 2: Import DADA2 Outputs.

Step 3: Construct Individual phyloseq Components.

Step 4: (Optional) Incorporate a Phylogenetic Tree.

Step 5: Merge Components into the Phyloseq Object.

Data Validation and Quality Control

Table 2: Quantitative Summary of Phyloseq Object Components

| Component | Dimension | Description | Typical QC Check |

|---|---|---|---|

| otu_table | [m x n] | m ASVs (taxa) by n samples. | Ensure no samples have zero total reads. Use sample_sums(ps). |

| tax_table | [m x r] | m ASVs by r taxonomic ranks (e.g., Kingdom to Species). | Check for NA's at the Genus level; consider aggregating to a higher rank. |

| sample_data | [n x p] | n samples by p metadata variables. | Confirm row names exactly match sample_names(ps). |

| refseq | [m] | DNAStringSet of length m (one per ASV). | Verify names(refseq(ps)) match taxa_names(ps). |

| phy_tree | (Optional) | Phylogenetic tree with m tips. | Verify phy_tree(ps)$tip.label match taxa_names(ps). |

Protocol for Basic Validation:
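The checks listed in Table 2 amount to name matching and a zero-count test. The following Python sketch illustrates that logic on plain lists; it is not phyloseq code, and the function name and example data are assumptions made for this example.

```python
def validate_components(count_samples, count_taxa, counts, tax_taxa, meta_samples):
    """Return a list of problems; an empty list means the components agree.

    counts is a list of rows, one per sample in count_samples.
    """
    problems = []
    if set(count_samples) != set(meta_samples):
        problems.append("sample names differ between abundance table and metadata")
    if set(count_taxa) != set(tax_taxa):
        problems.append("ASV names differ between abundance table and taxonomy")
    for name, row in zip(count_samples, counts):
        if sum(row) == 0:
            problems.append("sample %s has zero total reads" % name)
    return problems


issues = validate_components(
    count_samples=["S1", "S2"],
    count_taxa=["ASV1", "ASV2"],
    counts=[[12, 3], [0, 0]],
    tax_taxa=["ASV1", "ASV2"],
    meta_samples=["S1", "S2"],
)
print(issues)
```

Here the names agree, but the second sample is reported because it carries no reads.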

Visualization of Workflow

Title: Workflow for Constructing a phyloseq Object from DADA2 Outputs

This protocol provides a standardized method for generating a phyloseq object, the essential container for microbiome data analysis in R. Proper construction and validation of this object, as outlined here, are pivotal first steps for any downstream ecological or statistical investigation following ASV inference via the DADA2 pipeline.

Solving Common DADA2 Challenges: Optimization for Clinical and Low-Biomass Samples

Diagnosing and Resolving Poor Merge Rates for Amplicon Overlaps

Within the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, the merging of paired-end reads is a critical step for reconstructing full-length amplicons. Poor merge rates directly reduce the number of high-quality sequences available for inference, compromising downstream diversity and differential abundance analyses. This application note details diagnostic procedures and optimization protocols to address suboptimal merging performance, ensuring data integrity for researchers, scientists, and drug development professionals.

Diagnosis of Poor Merge Rates

The first step is a systematic diagnostic to identify the root cause. Quantitative metrics should be collected and compared against expected benchmarks.

Table 1: Diagnostic Metrics for Merge Rate Assessment

| Metric | Expected Range (for healthy 16S V3-V4 data) | Indicative Problem if Outside Range |

|---|---|---|

| Overall Merge Rate | >70-80% | Poor overlap, primer dimers, or quality issues. |

| Mean Overlap Length | ~50-100 bp (for 2x250bp V3-V4) | Amplicon longer than possible from read length. |

| Mismatch Rate in Overlap | <1% | High sequencing error or true biological variation. |

| Input Read Count | As per experimental design | Library prep or sequencing failure. |

| Post-Merge Read Count | ~(Input Fwd Reads * Merge Rate) | Algorithmic failure in merging step. |

Diagnostic Protocol

- Generate Quality Profile: Use `dada2::plotQualityProfile()` on forward and reverse reads. Look for quality drops within the overlap region.

- Calculate Expected Overlap: Determine amplicon length (e.g., ~450bp for 16S V3-V4). Expected overlap = (Length of Fwd Read + Length of Rev Read) - Amplicon Length.

- Inspect Primer Sequences: Check for the presence of primer sequences using `dada2::removePrimers()`. High levels of non-primer reads indicate primer dimer contamination.

- Run a Test Merge: Perform a merge with default parameters (`dada2::mergePairs()`) on a subset (n=1e6) of reads. Record the merge rate and mismatch rate.
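The overlap arithmetic and the merge-rate benchmark from Table 1 can be combined into one quick check. This Python sketch is illustrative: the 20 bp and 70% cutoffs are assumptions taken from the ranges discussed in this section, and read lengths should be the lengths after truncation.

```python
def diagnose_merge(fwd_len, rev_len, amplicon_len, input_reads, merged_reads,
                   min_overlap=20, min_merge_rate=0.70):
    """Return (expected overlap in bp, merge rate, list of flagged issues)."""
    overlap = fwd_len + rev_len - amplicon_len
    merge_rate = merged_reads / input_reads
    issues = []
    if overlap < min_overlap:
        issues.append("overlap too short")
    if merge_rate < min_merge_rate:
        issues.append("merge rate below benchmark")
    return overlap, merge_rate, issues


# Untruncated 2x250 reads on a ~450 bp amplicon, versus over-truncated reads.
print(diagnose_merge(250, 250, 450, 100_000, 85_000))
print(diagnose_merge(230, 200, 450, 100_000, 12_000))
```

A negative overlap, as in the second call, means the truncated reads cannot span the amplicon at all, so no merge parameter can rescue them.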

Optimization Protocols

Based on diagnostic outcomes, apply one or more of the following protocols.

Protocol A: Adjusting Merge Algorithm Parameters

This is the primary intervention for simple overlap issues.

- Increase `maxMismatch`: If the mismatch rate is slightly high but overlap is good, increase from the default of 0 to 1 or 2. This accommodates minor residual errors.

- Decrease `minOverlap`: If the expected overlap is short (e.g., <20bp), lower the `minOverlap` requirement (the default is 12).

- Use `justConcatenate`: If reads do not overlap because the amplicon is too long, concatenate them with N-padding (10 Ns in DADA2) instead of merging. This sacrifices error correction in the overlap.

Protocol B: Pre-processing for Improved Overlap

Apply when quality profiles or primer contamination is the issue.

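- Trim Reads Aggressively: Truncate reads just before quality drops so that the overlap consists of high-quality bases, but confirm that the truncated reads still span the amplicon with enough overlap to merge.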

- Remove Primers: Explicitly remove primers if not already done.

Protocol C: Utilizing Alternative Merge Algorithms

If DADA2's internal merger fails, use a pre-merge with more flexible tools.

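- Merge with `bbmerge.sh` (BBTools).

- Process merged reads through DADA2: Use `dada2::dada()` on the merged reads from BBMerge, bypassing the `mergePairs` step. Because merging alters the quality scores in the overlap region, inspect the fitted error model carefully when taking this route.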

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions

| Item | Function in DADA2 Merging Context |

|---|---|

| DADA2 R Package | Core software containing the mergePairs() algorithm and all quality profiling functions. |

| BBTools Suite | External tool for performing aggressive, flexible read merging outside DADA2. |

| FastQC | Initial quality control visualization to identify systematic quality drops or adapter contamination. |

| Cutadapt | Precise removal of primer/adapter sequences prior to processing in DADA2. |

| High-Fidelity PCR Polymerase | Critical wet-lab component to minimize PCR errors that manifest as mismatches in the overlap region. |

| Quantitation Kit (Qubit) | Accurate library quantitation prevents over-clustering on sequencers, which reduces read quality. |

| PhiX Control Spikes | Provides internal control for sequencing error rates and cluster identification. |

Visualizations

Diagnostic Decision Tree for Poor Merge Rates

Optimized DADA2 Workflow with Merge Solutions

Application Notes

Within the broader thesis investigating the optimization of the DADA2 pipeline for Amplicon Sequence Variant (ASV) research in pharmaceutical microbiomics, the precise tuning of filtering parameters is critical. These parameters directly influence error rate estimation, chimera removal, and the final ASV table's biological fidelity, impacting downstream analyses in drug development. The optimal values are dataset-specific, contingent upon sequencing technology, amplicon length, and sample integrity.

Core Parameter Functions & Impact

- trimLeft: Removes a specified number of nucleotides from the start of reads to eliminate primer sequences or low-quality bases introduced by the sequencing chemistry. Insufficient trimming incorporates non-biological sequences, while excessive trimming wastes data.

- truncLen: Truncates reads at a specific position based on quality score deterioration. This is a crucial quality-control step where reads are trimmed to the position before quality drops significantly. Paired-end reads often have different optimal truncation points for forward and reverse reads.

- maxEE (Maximum Expected Errors): Sets a quality-based threshold for read filtering. It calculates the sum of the error probabilities for each base in a read. Reads with an expected error count higher than `maxEE` are discarded. This is a more reliable criterion than an average quality score, because a few very poor bases cannot be masked by many good ones.

- minLen: Discards reads shorter than a specified length after trimming and truncation, typically to remove primer-dimers or other small, non-specific amplification products.
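How these four parameters act on a single read can be sketched in a few lines. This Python example is a simplification rather than DADA2's code: it assumes the truncation position is counted from the original read start, so a surviving read has length truncLen minus trimLeft, and it omits other filters such as `truncQ` and `maxN`. The read and its quality scores are hypothetical.

```python
def filter_read(seq, quals, trim_left=0, trunc_len=0, max_ee=2.0, min_len=20):
    """Return the surviving (sequence, qualities), or None if discarded."""
    if trunc_len:
        if len(seq) < trunc_len:
            return None                       # shorter than truncLen: discard
        seq, quals = seq[:trunc_len], quals[:trunc_len]
    seq, quals = seq[trim_left:], quals[trim_left:]
    if len(seq) < min_len:
        return None                           # too short after trimming
    expected_errors = sum(10 ** (-q / 10) for q in quals)
    if expected_errors > max_ee:
        return None                           # too many expected errors
    return seq, quals


read = "ACGT" * 25                            # 100 bases
quals = [38] * 80 + [8] * 20                  # quality collapses after cycle 80

kept = filter_read(read, quals, trim_left=10, trunc_len=80)
print("with truncation:", None if kept is None else len(kept[0]))
print("without truncation:", filter_read(read, quals, trim_left=10))
```

Truncating at cycle 80 removes the low-quality tail, so the read passes with 70 bases; without truncation the same read exceeds `max_ee` and is lost entirely.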

Table 1: Typical Parameter Ranges by Sequencing Platform & Amplicon

| Target Region | Platform | Recommended trimLeft (F/R) | Recommended truncLen (F/R) | Recommended maxEE (F/R) | Recommended minLen | Key Rationale |

|---|---|---|---|---|---|---|

| 16S V4 (~250bp) | Illumina MiSeq 2x250 | 10-20 / 10-20 | 220-240 / 200-220 | 2 / 2 | 200 | High-quality overlap; truncate where median quality drops below Q30. |

| 16S V3-V4 (~460bp) | Illumina MiSeq 2x300 | 15-20 / 15-20 | 270-290 / 250-270 | 2 / 2 | 200 | Moderate overlap; the forward read usually retains a longer high-quality segment. |

| ITS1/2 (variable) | Illumina MiSeq 2x300 | 10-30 / 10-30 | 200-250 / 180-220 | 2-3 / 2-3 | 150 | High length variability; prioritize quality over length for merger. |

| 18S V9 (~130bp) | Illumina NovaSeq 2x150 | 10 / 10 | 130-140 / 130-140 | 2 / 2 | 120 | Very short amplicon; minimal trimming to retain biological signal. |

Table 2: Impact of Parameter Stringency on Output Metrics (Hypothetical 16S Dataset)

| Parameter Set (trimL, truncL, maxEE) | Input Reads | % Passed Filter | % Merged | ASVs Generated | Notes on Community Bias |

|---|---|---|---|---|---|

| Liberal (10, 230/210, 5) | 100,000 | 95% | 92% | 350 | High read retention but may increase spurious ASVs from errors. |

| Moderate (15, 240/220, 2) | 100,000 | 85% | 88% | 280 | Recommended starting point; balances quality and data loss. |

| Stringent (20, 245/225, 1) | 100,000 | 70% | 85% | 220 | May lose legitimate rare taxa with lower-quality reads. |

Experimental Protocols

Protocol 1: Empirical Determination of truncLen and trimLeft

Objective: To visually identify optimal truncation and trimming points using per-base quality profiles.

Materials: FASTQ files from paired-end Illumina sequencing of the target amplicon.

Workflow:

- Load Libraries: In R, load the dada2 package and set the path to your FASTQ files.

- Generate Quality Profiles: Use plotQualityProfile(fnFs) and plotQualityProfile(fnRs) to visualize the mean quality score (green line) at each cycle for forward and reverse reads.

- Determine trimLeft: Identify the cycle where quality stabilizes above Q30, or use the known length of the primer sequence. Set trimLeft to this value.

- Determine truncLen: Identify the cycle where the median quality score (solid orange line) drops sharply below Q30 (e.g., to Q28). Set truncLen to this cycle number. For paired-end reads, choose points where the truncated forward and reverse reads still overlap by enough bases (~20+ bp) for merging.

- Documentation: Record the chosen values and the rationale from the quality plots.
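The overlap requirement in the truncLen step reduces to simple arithmetic: the two truncated reads together must be longer than the amplicon by at least the required overlap. The sketch below checks candidate truncLen pairs. It assumes the amplicon length is counted from the first sequenced base of the forward read to the first sequenced base of the reverse read (so primers are included if they are still on the reads); the function names and the 20 bp threshold are illustrative.

```python
def overlap_after_truncation(amplicon_len, trunc_len_f, trunc_len_r):
    """Bases shared by the forward and reverse reads after truncation (negative = gap)."""
    return trunc_len_f + trunc_len_r - amplicon_len


def can_merge(amplicon_len, trunc_len_f, trunc_len_r, min_overlap=20):
    """True if the truncated reads overlap by at least min_overlap bases."""
    return overlap_after_truncation(amplicon_len, trunc_len_f, trunc_len_r) >= min_overlap


# Hypothetical candidates for a ~460 bp 16S V3-V4 amplicon on 2x300 reads.
for trunc_f, trunc_r in [(280, 220), (270, 200), (240, 220)]:
    overlap = overlap_after_truncation(460, trunc_f, trunc_r)
    print(f"truncLen=c({trunc_f},{trunc_r}): overlap {overlap} bp, "
          f"mergeable={can_merge(460, trunc_f, trunc_r)}")
```

Because trimLeft removes bases from the outer ends of the amplicon, it does not change this overlap; only truncLen and the amplicon length do. Amplicons with natural length variation need extra margin on top of the minimum.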

Protocol 2: Iterative Tuning of maxEE and minLen

Objective: To optimize read filtering parameters by monitoring read retention and ASV yield.

Materials: FASTQ files, pre-determined trimLeft and truncLen values.

Workflow:

- Baseline Filtering: Run filterAndTrim() with initial parameters (e.g., maxEE=c(2,2), minLen=50). Record the number of reads in and out (the reads.in and reads.out columns it returns).

- Iterate maxEE: Repeat filtering with a range of maxEE values (e.g., c(1,1), c(2,2), c(5,5)). Plot the percentage of reads retained versus the maxEE value.

- Iterate minLen: Using the optimal maxEE, repeat filtering with a range of minLen values (e.g., 50, 100, 150). The goal is to remove primer-dimers (often <100 bp) while retaining true amplicons.

- Downstream Validation: Process each parameter set through the full DADA2 pipeline (error learning, dereplication, sample inference, merging). Compare the resulting ASV table richness (e.g., alpha diversity) and composition (e.g., beta diversity stability) to select the set that maximizes valid biological signal while minimizing technical noise.

- Final Selection: Choose parameters just beyond the "elbow" in the read retention curve, where further relaxation yields minimal read gain but a potential increase in errors.
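The retention curve and "elbow" described in this protocol can be tabulated once the read counts from each filterAndTrim() run are recorded. The sketch below is one simple way to do so, not a DADA2 feature: the read counts are invented for illustration, and the rule used to locate the elbow (stop relaxing once the next setting adds fewer than a chosen number of percentage points) is an assumption that should be adjusted to the dataset.

```python
def retention_curve(reads_in, reads_out_by_maxee):
    """Percentage of reads retained at each maxEE setting, ordered by maxEE."""
    return {ee: 100.0 * out / reads_in
            for ee, out in sorted(reads_out_by_maxee.items())}


def pick_elbow(curve, min_gain=5.0):
    """Smallest maxEE after which the next relaxation adds fewer than min_gain points."""
    settings = sorted(curve)
    for current, relaxed in zip(settings, settings[1:]):
        if curve[relaxed] - curve[current] < min_gain:
            return current
    return settings[-1]


# Hypothetical reads.out counts from four filtering runs on 100,000 input reads.
reads_out = {1: 70_000, 2: 85_000, 3: 88_000, 5: 89_500}
curve = retention_curve(100_000, reads_out)
elbow = pick_elbow(curve)

for max_ee, pct in curve.items():
    print(f"maxEE={max_ee}: {pct:.1f}% retained")
print(f"elbow at maxEE={elbow}")
```

With these invented counts the gain from maxEE=2 to maxEE=3 is only three percentage points, so the rule settles on maxEE=2. The downstream validation step remains the deciding check, since read retention alone says nothing about spurious ASVs.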

Mandatory Visualization

Diagram 1: Parameter Tuning and Evaluation Workflow for DADA2

Diagram 2: Sequential Application of DADA2 Filtering Parameters on a Read

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for DADA2 Pipeline Parameter Tuning

| Item | Function in Parameter Tuning |

|---|---|

| High-Quality FASTQ Files | The primary input. Must be from the specific sequencing run and amplicon to be analyzed for accurate quality assessment. |

| R Statistical Environment | The computational platform required to run the DADA2 package and associated visualization tools. |

| DADA2 R Package (v1.28+) | Core software containing the filterAndTrim(), plotQualityProfile(), and all downstream ASV inference functions. |

| Known Primer Sequences | Essential for accurately setting the trimLeft parameter to remove all primer bases without cutting into biological sequence. |

| Positive Control Mock Community | A standardized sample with known composition. Crucial for validating that chosen parameters recover the expected species without artifacts. |

| Computational Log File | A documented record of input read counts, reads passed at each step, and final ASV counts for each parameter set tested. |

| Negative Control Samples | Used to identify contaminant or non-specific amplification sequences that should be removed, informing minLen and maxEE settings. |

Handling Non-Overlapping Reads and Alternative Workflows (e.g., ITS Region Analysis)

Application Note AN-2023-001: Integrating ITS Analysis into a DADA2-Centric ASV Thesis

Within a comprehensive thesis on the DADA2 pipeline for Amplicon Sequence Variant (ASV) research, a significant challenge arises when the paired-end reads of a locus do not overlap. This is most prevalent for the Internal Transcribed Spacer (ITS) region of fungal rRNA operons, which can exceed 600 bp. At that length the amplicon is longer than the span that typical Illumina paired-end reads (e.g., 2x250 bp or 2x300 bp) cover after quality truncation, so the read pairs cannot be merged. This document provides application notes and detailed protocols for extending the DADA2 framework to handle such non-overlapping reads and alternative workflows.

1. Quantitative Summary of Non-Overlapping Read Challenges

Table 1: Comparison of 16S rRNA vs. ITS Amplicon Sequencing Challenges

| Feature | 16S rRNA Gene (V4 Region) | ITS Region (ITS1 or ITS2) |

|---|---|---|

| Typical Amplicon Length | ~250-300 bp | 400-700+ bp (highly variable) |

| Compatibility with 2x300 bp sequencing | Full overlap, merging possible | Often no overlap, reads remain separate |

| Primary DADA2 Approach | mergePairs() | Concatenation, e.g., mergePairs(..., justConcatenate=TRUE); pool="pseudo" may be used during inference |

| Key Pre-processing Step | Quality filtering, merging | Read orientation checking & trimming |

| Error Model | Two separate models (forward and reverse), learned before merging | Two separate models (R1 & R2) |

| Downstream Analysis | Single ASV table | Single ASV table based on concatenated sequences |

Table 2: Pseudo-Pooling vs. Simple Concatenation in DADA2

| Method | Process | Advantage | Disadvantage |

|---|---|---|---|

| Pseudo-Pooling (pool="pseudo") | Set in dada(): each sample is denoised independently, then denoised again using the ASVs found across samples as priors. R1 and R2 are still denoised separately and joined afterwards. | Recovers rare variants shared across samples at lower cost than full pooling; R1/R2 pairing is kept for the joining step. | More computation than independent inference; gains depend on having many samples. |

| Simple Concatenation | Manually concatenate filtered R1 and R2 reads (e.g., with a NNNN spacer) before input to DADA2. | Simple, straightforward, works on few samples. | Loses paired information for error correction; treats the concatenated read as a single entity. |
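The concatenation described in Table 2 is a string operation: the forward read, a run of N bases as a spacer, and the reverse complement of the reverse read. The sketch below shows that operation in plain Python with hypothetical toy sequences. DADA2 itself offers mergePairs(..., justConcatenate=TRUE), which joins the denoised reads with a 10-N spacer; the default spacer length here mirrors that, and the function names are illustrative.

```python
COMPLEMENT = str.maketrans("ACGTNacgtn", "TGCANtgcan")


def reverse_complement(seq):
    """Reverse complement of a DNA sequence (A/C/G/T/N only)."""
    return seq.translate(COMPLEMENT)[::-1]


def concatenate_pair(r1, r2, spacer_len=10):
    """Join a non-overlapping pair as R1 + N-spacer + reverse complement of R2."""
    return r1 + "N" * spacer_len + reverse_complement(r2)


# Toy reads; real reads would be hundreds of bases long.
joined = concatenate_pair("ACGTAC", "GGATCA")
print(joined)
```

The spacer marks where sequence is missing, so downstream tools that compute distances or alignments across the junction need to treat it as unknown rather than as matching bases.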

2. Detailed Protocol for ITS Analysis with DADA2 (Non-Overlapping Reads)

Protocol: ITS2 Region Analysis Using Pseudo-Pooling in DADA2

I. Sample Preparation & Sequencing

- Primers: Use ITS-specific primers (e.g., ITS3/ITS4 for ITS2).

- PCR & Library Prep: Follow standard amplicon library preparation protocols. Include negative controls.

- Sequencing Platform: Illumina MiSeq or NovaSeq (2x250 bp or 2x300 bp recommended).

II. Bioinformatics Analysis (DADA2 Pipeline Adaptation)

- Software Requirements: R 4.0+, DADA2 (≥1.18), cutadapt.

- Step 1: Read Orientation & Primer Removal. ITS reads can be in forward or reverse orientation. Use cutadapt to orient all reads uniformly and remove primers.

- Step 2: DADA2 R Script Core Workflow.

- Step 3: Post-Processing & Analysis. The resulting seqtab.nochim is an ASV table where each ASV is defined by the concatenated R1 and R2 sequences that were successfully linked during the mergePairs() step. Proceed with standard ecological analysis (e.g., phyloseq).
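The orientation decision in Step 1 can be illustrated without cutadapt. The sketch below is a simplified stand-in, not cutadapt's algorithm: it requires an exact (IUPAC-aware) primer match at the start of each read, whereas cutadapt tolerates mismatches. It swaps the mates when R1 begins with the reverse primer and strips the primers from both reads. The primers are the ITS3/ITS4 sequences listed in this document; the function names and toy reads are hypothetical.

```python
# IUPAC nucleotide codes mapped to the bases they allow.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def starts_with_primer(read, primer):
    """True if the read begins with the primer, honoring IUPAC ambiguity codes."""
    if len(read) < len(primer):
        return False
    return all(base in IUPAC.get(code, "") for code, base in zip(primer, read))


def orient_pair(r1, r2, fwd_primer, rev_primer):
    """Return (forward, reverse) reads with primers removed, or None if no primers match."""
    if starts_with_primer(r1, fwd_primer) and starts_with_primer(r2, rev_primer):
        return r1[len(fwd_primer):], r2[len(rev_primer):]
    if starts_with_primer(r1, rev_primer) and starts_with_primer(r2, fwd_primer):
        # Pair was sequenced in the opposite orientation: swap the mates.
        return r2[len(fwd_primer):], r1[len(rev_primer):]
    return None


ITS3 = "GCATCGATGAAGAACGCAGC"
ITS4 = "TCCTCCGCTTATTGATATGC"

read_fwd = ITS3 + "AAAT"   # toy insert after the forward primer
read_rev = ITS4 + "GGGC"   # toy insert after the reverse primer

print(orient_pair(read_fwd, read_rev, ITS3, ITS4))
print(orient_pair(read_rev, read_fwd, ITS3, ITS4))
```

Pairs for which neither orientation matches return None and would be discarded, which is comparable in spirit to dropping untrimmed reads during primer removal.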

3. Visualization of Workflows

Diagram Title: DADA2 Workflow Comparison for 16S vs ITS Analysis

4. The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for ITS Amplicon Sequencing

| Item | Function/Description | Example/Note |

|---|---|---|

| ITS-specific PCR Primers | Amplify the highly variable ITS1 or ITS2 subregion for fungal community profiling. | ITS3 (5'-GCATCGATGAAGAACGCAGC-3') / ITS4 (5'-TCCTCCGCTTATTGATATGC-3') for ITS2. |

| Proofreading DNA Polymerase | High-fidelity PCR to minimize amplification errors in ASV inference. | Q5 Hot Start Polymerase (NEB), Phusion HF. |

| Magnetic Bead Cleanup Kit | Post-PCR purification and library normalization. | AMPure XP Beads (Beckman Coulter). |

| Indexed Adapter Kit | Adds unique sample indices and Illumina sequencing adapters. | Nextera XT Index Kit (Illumina). |

| UNITE Reference Database | Curated fungal ITS sequence database for taxonomic assignment in DADA2. | Download the UNITE "general FASTA release", which assignTaxonomy() reads directly. |

| Positive Control DNA | Known fungal genomic DNA to monitor PCR and sequencing efficiency. | ZymoBIOMICS Microbial Community Standard. |

| Negative Control (PCR-grade water) | Critical for detecting reagent/lab-borne contamination. | Nuclease-free water, used in library prep master mix. |

| DADA2 R Package | Core software for modeling sequencing errors and inferring exact ASVs. | Available via Bioconductor. |

Strategies for Host-Derived (e.g., human) or Contaminant-Rich Samples

Application Notes

In the context of ASV research using the DADA2 pipeline, host-derived or contaminant-rich samples present a significant challenge. These samples, such as human tissue biopsies, sputum, or low-biomass environmental swabs, are characterized by an overwhelming abundance of host or contaminant nucleic acids relative to the target microbial signal. This imbalance can lead to inefficient sequencing of the microbial community, inflated costs, and bioinformatic complications including false-positive ASVs from reagent contaminants.

Key strategies focus on two phases: 1) Wet-lab enrichment to physically deplete non-target nucleic acids prior to sequencing, and 2) Bioinformatic subtraction to remove residual host/contaminant sequences post-sequencing. The optimal approach is often a combination of both.

Table 1: Comparison of Host/Contaminant Depletion Strategies

| Strategy | Method Category | Principle | Approximate Host DNA Reduction | Key Considerations for DADA2 Pipeline |

|---|---|---|---|---|

| Probe-Based Hybridization (e.g., NuGEN AnyDeplete) | Wet-lab Enrichment | Oligonucleotide probes bind host DNA/RNA for enzymatic degradation. | 70-99% | Increases microbial sequencing depth; reduces required sequencing effort per sample for equivalent coverage. |

| Selective Lysis (differential lysis of host cells) | Wet-lab Enrichment | Host eukaryotic cells are lysed selectively and their released DNA is degraded, while microbes with intact cell walls are spared. | 50-95% | Efficiency varies by sample type and microbiota; may lyse some fragile microbes (e.g., Gram-negatives). |

| Bioinformatic Subtraction (e.g., Bowtie2 + host genome) | Computational | Alignment and removal of reads mapping to a reference host genome. | Up to ~99% of residual host reads | Requires high-quality reference genome; critical post-wet-lab step to clean data before DADA2. |

| Background Contaminant Identification (e.g., decontam R package) | Computational | Statistical identification of ASVs associated with negative controls. | Identifies contaminant ASVs | Must be applied to the ASV table after DADA2; uses frequency or prevalence methods across sample batches. |
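The reduction percentages in Table 1 translate into microbial enrichment in a non-linear way, which a short calculation makes visible. The sketch below assumes that fractions are DNA mass fractions, that depletion removes only host DNA, and that microbial DNA is left untouched; none of these hold exactly in practice, and read fractions in 16S amplicon data will not scale identically. The function names and input values are hypothetical.

```python
def remaining_dna_fraction(host_fraction, depletion_efficiency):
    """Fraction of the original DNA mass left after removing part of the host DNA."""
    microbial = 1.0 - host_fraction
    host_left = host_fraction * (1.0 - depletion_efficiency)
    return microbial + host_left


def microbial_fraction_after_depletion(host_fraction, depletion_efficiency):
    """Share of the remaining DNA that is microbial once host DNA has been depleted."""
    microbial = 1.0 - host_fraction
    return microbial / remaining_dna_fraction(host_fraction, depletion_efficiency)


# Hypothetical sample: 99% host DNA, depleted at three different efficiencies.
for efficiency in (0.70, 0.90, 0.99):
    after = microbial_fraction_after_depletion(0.99, efficiency)
    left = remaining_dna_fraction(0.99, efficiency)
    print(f"{efficiency:.0%} host removal: {after:.1%} microbial, "
          f"{left:.1%} of input mass remains")
```

For a sample that starts at 99% host DNA, removing 90% of the host material still leaves the extract mostly host-derived, which is why wet-lab depletion is usually paired with the computational steps listed in the table.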

Detailed Protocols

Protocol 1: Combined Probe-Based Host Depletion and 16S rRNA Gene Amplicon Library Preparation

Objective: To deplete human host nucleic acids from a sputum DNA extract prior to 16S rRNA gene sequencing, optimizing for input into the DADA2 pipeline.

Research Reagent Solutions & Essential Materials:

- AnyDeplete Human DNA/RNA Depletion Kit (NuGEN): Contains hybridization probes and enzymes for targeted depletion of human sequences.

- MetaPolyzyme (Sigma-Aldrich): Cocktail of lytic enzymes that digest microbial (bacterial and fungal) cell walls to improve DNA recovery during extraction.

- Magnetic Stand for 1.5 mL tubes: For bead-based cleanups.

- Agencourt AMPure XP Beads (Beckman Coulter): For size selection and purification of DNA libraries.

- Qubit dsDNA HS Assay Kit (Thermo Fisher): For accurate quantification of low-concentration DNA post-depletion.

- Platinum Hot Start PCR Master Mix (Thermo Fisher): For robust and specific amplification of the 16S V3-V4 region.

- Nuclease-Free Water (not DEPC-treated): For all dilution steps.

Procedure:

- Input DNA Quantification: Quantify total DNA from the sputum extraction using the Qubit HS assay. Use 10-1000 ng as input for depletion.

- Host Depletion Reaction: Set up the AnyDeplete reaction according to the manufacturer's instructions. Briefly, mix input DNA with Depletion Probes and Depletion Enzyme Mix. Incubate at 47°C for 30 minutes.

- Post-Depletion Cleanup: Purify the depleted DNA using AMPure XP beads at a 1:1 bead-to-sample volume ratio. Elute in 20 µL of nuclease-free water.

- Quantify Depleted DNA: Re-quantify using the Qubit HS assay. Typical yields are 1-10% of the original input, representing enriched microbial DNA.

- 16S rRNA Gene Amplification: Amplify the V3-V4 region using primers 341F/806R with Platinum Hot Start Master Mix. Use 2-10 ng of depleted DNA as template. Cycle conditions: 94°C for 3 min; 30 cycles of 94°C for 45s, 55°C for 60s, 72°C for 90s; final extension at 72°C for 10 min.