From Raw Reads to Biological Insights: A Comprehensive DADA2 Pipeline Tutorial for 16S rRNA Amplicon Analysis

This guide provides a complete, step-by-step framework for implementing the DADA2 pipeline to analyze 16S rRNA gene amplicon sequencing data.

From Raw Reads to Biological Insights: A Comprehensive DADA2 Pipeline Tutorial for 16S rRNA Amplicon Analysis

Abstract

This guide provides a complete, step-by-step framework for implementing the DADA2 pipeline to analyze 16S rRNA gene amplicon sequencing data. Designed for researchers and bioinformaticians in drug development and biomedical science, it covers the foundational concepts of amplicon sequence variants (ASVs), delivers a detailed methodological workflow from quality control to taxonomic assignment, addresses common troubleshooting and optimization challenges, and validates DADA2's performance against other methods. The article equips readers with the practical knowledge to generate robust, reproducible microbial community profiles for downstream statistical analysis and biomarker discovery.

Understanding DADA2: The Foundation of Precise 16S rRNA Amplicon Analysis

Within the broader context of a thesis on the DADA2 pipeline for 16S rRNA amplicon data research, this document serves as an introduction to the core concepts, applications, and protocols of 16S rRNA amplicon sequencing for microbiome profiling. This technique targets the hypervariable regions of the conserved 16S ribosomal RNA gene to characterize bacterial and archaeal community composition, diversity, and dynamics. The accurate interpretation of such data hinges on robust bioinformatic pipelines, with DADA2 representing a state-of-the-art method for resolving amplicon sequence variants (ASVs).

16S rRNA amplicon sequencing involves PCR amplification of specific hypervariable regions (e.g., V1-V2, V3-V4, V4), followed by high-throughput sequencing. The choice of region impacts taxonomic resolution and bias. The following table summarizes common sequencing platforms and their typical outputs for 16S studies.

Table 1: Common Sequencing Platforms for 16S Amplicon Studies

| Platform | Typical Read Length (bp) | Run Output (Max) | Common 16S Region Targets |

|---|---|---|---|

| Illumina MiSeq | 2x300 | 25 M reads | V3-V4, V4 |

| Illumina NextSeq 550 | 2x150 | 400 M reads | V4, V1-V2 |

| Illumina NovaSeq 6000 | 2x250 | 20 B reads | Full-length (w/ multiplexing) |

| Ion Torrent PGM/S5 | 200-400 | 3-130 M reads | V4, V6-V9 |

| PacBio Sequel II (HiFi) | ~1,500 | 4 M reads | Full-length 16S |

Table 2: Comparison of Analysis Outputs: OTU Clustering vs. DADA2 ASVs

| Feature | OTU Clustering (97% identity) | DADA2 (Amplicon Sequence Variants) |

|---|---|---|

| Resolution | Species/Genus level | Single-nucleotide difference |

| Method Basis | Heuristic clustering | Error-corrected, exact sequences |

| Denoising | Post-clustering chimera removal | Integrated error modeling & removal |

| Result Type | Operational Taxonomic Unit (OTU) | Amplicon Sequence Variant (ASV) |

| Cross-study Comparison | Difficult due to reference database dependence | Directly comparable |

Detailed Experimental Protocol: Library Preparation for the V4 Region (Illumina MiSeq)

Materials & Reagents

- Genomic DNA from microbial communities (e.g., stool, soil, swab).

- Primers 515F (5'-GTGYCAGCMGCCGCGGTAA-3') and 806R (5'-GGACTACNVGGGTWTCTAAT-3') targeting the V4 region.

- High-fidelity DNA polymerase (e.g., Phusion or Q5).

- dNTPs.

- Magnetic bead-based purification system (e.g., AMPure XP).

- Indexing primers (Nextera XT Index Kit v2).

- Quantification kit (e.g., Qubit dsDNA HS Assay).

- Agilent Bioanalyzer or TapeStation reagents.

- Illumina MiSeq Reagent Kit v3 (600-cycle).

Procedure

A. Primary PCR Amplification (Add Barcoded Adapters)

- Reaction Setup: In a 25 µL reaction, combine:

- 12.5 µL 2x High-Fidelity Master Mix

- 1.0 µL Forward Primer (10 µM)

- 1.0 µL Reverse Primer (10 µM)

- 1.0 µL Template DNA (1-10 ng)

- 9.5 µL Nuclease-free water.

- Cycling Conditions:

- 98°C for 30 sec (initial denaturation).

- 25-35 cycles of: 98°C for 10 sec, 55°C for 30 sec, 72°C for 30 sec.

- 72°C for 5 min (final extension).

- Purification: Clean amplified products using a 1x ratio of AMPure XP beads. Elute in 30 µL of 10 mM Tris buffer, pH 8.5.

- Quantification & QC: Quantify with Qubit and check amplicon size (~390 bp) on a Bioanalyzer.

B. Index PCR (Add Dual Indices for Multiplexing)

- Reaction Setup: In a 50 µL reaction, combine:

- 25 µL 2x Master Mix

- 2.5 µL Nextera i7 Index Primer

- 2.5 µL Nextera i5 Index Primer

- 10 µL Purified Primary PCR Product

- 10 µL Nuclease-free water.

- Cycling Conditions:

- 95°C for 3 min.

- 8 cycles of: 95°C for 30 sec, 55°C for 30 sec, 72°C for 30 sec.

- 72°C for 5 min.

- Purification: Clean with a 0.8x ratio of AMPure XP beads. Elute in 30 µL Tris buffer.

C. Pooling, Quantification, and Sequencing

- Normalize & Pool: Quantify all indexed libraries with Qubit, dilute to 4 nM, and pool equimolarly.

- Final QC: Validate pool size and concentration (Bioanalyzer, qPCR).

- Denature & Dilute: Denature the pooled library with NaOH and dilute to 6-8 pM in HT1 buffer, adding a 10-15% PhiX control.

- Sequencing: Load onto an Illumina MiSeq system using a 500-cycle or 600-cycle reagent kit for 2x250 bp paired-end sequencing.

DADA2 Pipeline Protocol for Analysis

This protocol is executed in R and assumes paired-end, demultiplexed FASTQ files.

Visualizations

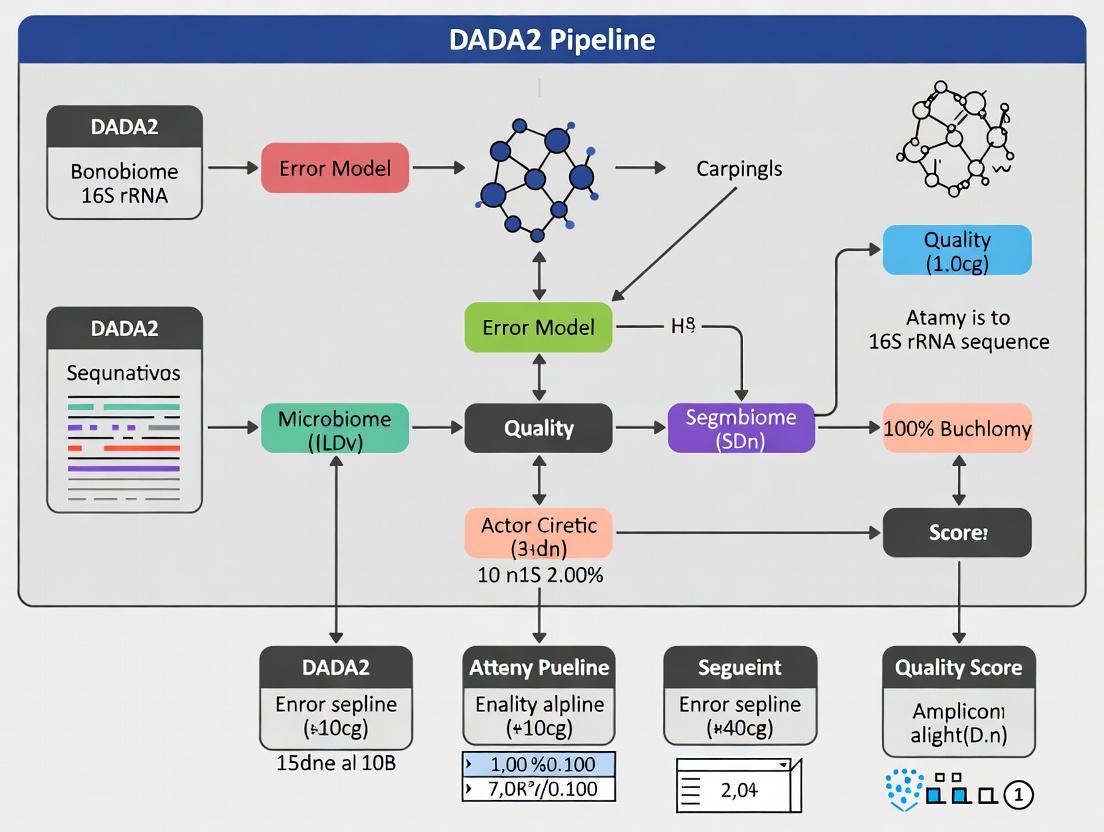

16S rRNA Amplicon Sequencing Workflow

DADA2 Pipeline Analysis Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for 16S rRNA Amplicon Sequencing

| Item | Function & Rationale |

|---|---|

| Magnetic Bead Purification Kits (e.g., AMPure XP) | Size-selective clean-up of PCR products to remove primers, dimers, and contaminants. Critical for high library quality. |

| High-Fidelity DNA Polymerase (e.g., Q5, Phusion) | Provides accurate amplification with low error rates, minimizing PCR-introduced sequencing errors. |

| Quantitation Kit (e.g., Qubit dsDNA HS Assay) | Fluorescence-based, specific for double-stranded DNA. More accurate for library quantitation than absorbance (A260). |

| Bioanalyzer/TapeStation & Kits | Microfluidic capillary electrophoresis for precise sizing and quality assessment of libraries prior to sequencing. |

| Illumina Indexing Primers (e.g., Nextera XT Index Kit) | Contains unique dual index combinations for multiplexing many samples in a single sequencing run. |

| PhiX Control v3 | Spiked into runs (1-10%) as a quality control for cluster generation, sequencing, and alignment. |

| Reference Databases (e.g., SILVA, Greengenes, RDP) | Curated collections of aligned 16S sequences with taxonomy for bioinformatic classification of ASVs/OTUs. |

| Positive Control (Mock Community) | Genomic DNA from a defined mix of known bacterial strains. Essential for evaluating bias and accuracy of the entire workflow. |

Why DADA2? From OTU Clustering to Amplicon Sequence Variants (ASVs)

This application note serves as a core chapter in a broader thesis evaluating the DADA2 (Divisive Amplicon Denoising Algorithm) pipeline for 16S rRNA gene amplicon data. The thesis posits that moving from traditional Operational Taxonomic Unit (OTU) clustering to Amplicon Sequence Variants (ASVs) via DADA2 represents a paradigm shift in microbial ecology, offering superior resolution, reproducibility, and data fidelity for research and drug development. This document details the rationale, protocols, and practical tools for this transition.

The Paradigm Shift: OTUs vs. ASVs

Traditional OTU clustering groups sequences based on an arbitrary similarity threshold (typically 97%), inherently losing single-nucleotide variation information. DADA2 uses a parametric error model to correct amplicon errors in silico and infers exact biological sequences, or ASVs, providing single-nucleotide resolution.

Table 1: Quantitative Comparison of OTU Clustering vs. DADA2 (ASV Inference)

| Feature | OTU Clustering (97%) | DADA2 (ASVs) | Implication for Research |

|---|---|---|---|

| Resolution | Groups sequences within ~3% divergence. | Single-nucleotide resolution. | Detects subtle strain-level shifts crucial for drug response studies. |

| Reproducibility | Low; results vary with clustering algorithm & parameters. | High; algorithm is deterministic. | Enables direct cross-study comparison, meta-analyses. |

| Error Handling | Heuristic (e.g., abundance filtering, chimera removal post-clustering). | Model-based, error correction precedes variant calling. | Reduces false positives, increases confidence in rare variants. |

| Output Type | Arbitrary cluster (OTU) defined by centroid. | Exact biological sequence (ASV). | ASVs are portable and can be referenced across databases permanently. |

| Common Tool | VSEARCH, USEARCH, mothur. | DADA2 R package. | Integrates into tidyverse R workflow for streamlined analysis. |

Core DADA2 Protocol for 16S rRNA Data

Protocol 1: Standard DADA2 Workflow for Paired-end Reads

Research Reagent Solutions & Essential Materials:

- Raw FASTQ Files: Paired-end Illumina amplicon data (e.g., V3-V4 region of 16S rRNA).

- R Environment (v4.0+): Primary computational platform.

- DADA2 R Package (v1.24+): Core denoising and ASV inference engine.

- High-Performance Computing (HPC) Cluster or Workstation: Minimum 16GB RAM recommended for large datasets.

- Reference Database: e.g., SILVA (v138) or GTDB for taxonomic assignment.

- Bioinformatics File Manipulation Tools: Cutadapt (for primer removal) or

dada2::functions.

Detailed Methodology:

- Preparation & Primer Removal: Use

dada2::filterAndTrim()withtrimLeftparameter or pre-process with Cutadapt to remove primer sequences. This is critical for accurate error model learning. - Quality Profiling: Visualize read quality plots with

dada2::plotQualityProfile()to inform truncation parameters. Filtering & Trimming: Filter reads based on quality scores, max expected errors (

maxEE), and truncate to consistent length where quality drops.Error Model Learning: Learn dataset-specific error rates for forward and reverse reads independently using a machine learning algorithm.

Dereplication & Sample Inference: Dereplicate identical reads and apply the core denoising algorithm to infer true biological sequences.

Merge Paired Reads: Merge forward and reverse reads to create full-length sequences, removing non-overlapping pairs.

Construct ASV Table: Create a sequence table (analogous to OTU table) across all samples.

Remove Chimeras: Identify and remove bimera sequences de novo.

Taxonomic Assignment: Assign taxonomy to each ASV using a Naive Bayes classifier trained on a reference database.

- Preparation & Primer Removal: Use

DADA2 Workflow for Paired-end 16S Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for DADA2 Analysis

| Item / Solution | Function / Purpose | Example / Notes |

|---|---|---|

| Illumina MiSeq/HiSeq | Platform for generating paired-end amplicon sequences. | V2/V3 500-cycle kit common for 16S. |

| 16S rRNA Primer Set | Amplifies target hypervariable region. | 515F/806R for V4, 338F/806R for V3-V4. |

| DNeasy PowerSoil Kit | Gold-standard for microbial genomic DNA extraction from complex samples. | Ensures unbiased lysis and inhibitor removal. |

| Quant-iT PicoGreen dsDNA Assay | Accurate fluorometric quantification of DNA for normalization prior to PCR. | Critical for reducing amplification bias. |

| Phusion High-Fidelity PCR Master Mix | High-fidelity polymerase to minimize PCR errors early in workflow. | Reduces introduction of artifactual sequences. |

| DADA2 R Package | Core software for error modeling, denoising, and ASV inference. | Primary analytical tool documented here. |

| SILVA or GTDB Database | Curated reference for taxonomic assignment of 16S sequences. | Provides accurate taxonomy; must match primer region. |

| QIIME 2 (with DADA2 plugin) | Alternative containerized environment for running DADA2. | Useful for reproducible, pipeline-based analyses. |

Logical Flow from Sample to Biological Insight

Within the comprehensive thesis on the DADA2 pipeline for 16S rRNA amplicon data research, this document details the foundational bioinformatic principles that enable accurate inference of true biological sequences from noisy next-generation sequencing reads. DADA2 (Divisive Amplicon Denoising Algorithm 2) implements a core workflow of Error Modeling, Denoising, and Chimera Removal to transition from raw sequence variants to a faithful table of Amplicon Sequence Variants (ASVs). This contrasts with traditional OTU clustering, offering higher resolution. These principles are critical for researchers, scientists, and drug development professionals seeking to characterize microbiomes with precision for diagnostic and therapeutic discovery.

Error Modeling: Quantifying Sequencing Uncertainty

Sequencing errors are systematic and learnable. DADA2 models these errors by estimating the rate at which each nucleotide (A, C, G, T) is erroneously observed as each other nucleotide in the context of the sequencing run.

Protocol: Building the Error Model in DADA2

- Input Preparation: Start with quality-filtered, trimmed FASTQ files (e.g.,

filtered_fwd.fastq.gz,filtered_rev.fastq.gz). Algorithm Execution: Use the

learnErrorsfunction in the DADA2 R package.Process: The function alternates between estimating error rates and inferring the true sequence composition of the dataset. It uses a subset of data (

nbasesparameter) to estimate rates for each possible nucleotide transition (e.g., A→C, A→G, A→T) across all quality scores.- Output: An error rate matrix (error model) for forward (

errF) and reverse (errR) reads, which is passed to the core denoising algorithm.

Table 1: Example Error Rate Matrix (A-to-* transitions, Q-score ~30)

| True Nucleotide | Called as A | Called as C | Called as G | Called as T |

|---|---|---|---|---|

| A | 0.99985 | 3.2e-05 | 7.8e-05 | 4.0e-05 |

Denoising: Inferring True Biological Sequences (ASVs)

Denoising is the process of partitioning reads into groups that originated from the same true biological sequence. DADA2 uses a parametric model of substitution errors, based on the error model, to assess the probability that an observed sequence is an erroneous derivation of a putative true sequence.

Protocol: Core Sample Inference (Denoising) in DADA2

- Input: Quality-filtered FASTQ files and the corresponding error models (

errF,errR). Algorithm Execution: Use the

dadafunction.Process: For each sample (or pseudo-pooled group), the algorithm:

- Dereplicates identical reads.

- Iteratively partitions reads by sequence abundance and similarity.

- Uses the error model to calculate the probability that a less abundant sequence is an error of a more abundant one (the "p-value" of a partition). Partitions are accepted if this probability is below a significance threshold (OMEGA_A, default 1e-40).

- Output: A list of inferred ASVs and their abundances for each sample.

Table 2: Denoising Output for a Single Sample

| ASV ID | Sequence (5'-3') | Abundance | Prevalence (%) |

|---|---|---|---|

| ASV_01 | ATCGGAAGCT... | 12500 | 12.5 |

| ASV_02 | ATCGGAAACT... | 9800 | 9.8 |

| ASV_03 | ATTGGAAGCT... | 4500 | 4.5 |

Chimera Removal: Identifying Artificial Hybrid Sequences

Chimeras are PCR artifacts formed from incomplete extension, where a new sequence is created from two or more parent sequences. They must be identified and removed post-denoising.

Protocol: Chimera Identification in DADA2

- Input: The merged ASV sequences from all samples (post

mergePairsandmakeSequenceTable). Algorithm Execution: Use the

removeBimeraDenovofunction with the "consensus" method.Process: For each candidate ASV, the algorithm:

- Searches for potential "parent" sequences within the set that could form the candidate via a left-segment/right-segment combination.

- If a plausible set of more abundant parents is found, the candidate is flagged as a chimera.

- The "consensus" method applies this check across all samples.

- Output: A chimera-free sequence table. Typically, 5-20% of ASVs may be identified as chimeric.

Table 3: Chimera Check Results

| Metric | Count | Percentage of Input |

|---|---|---|

| Total ASVs Before | 1500 | 100% |

| Chimeric ASVs Identified | 225 | 15% |

| Non-Chimeric ASVs (Final) | 1275 | 85% |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for DADA2-Based 16S rRNA Analysis

| Item | Function & Relevance to DADA2 Principles |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Minimizes PCR errors during library prep, reducing noise that must be modeled and denoised. Critical for accurate error rate estimation. |

| Quant-iT PicoGreen dsDNA Assay Kit | Accurately quantifies amplicon library concentration for balanced sequencing, preventing over-clustering which increases error rates. |

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard for paired-end 2x300bp 16S sequencing (V3-V4). Provides the raw FASTQ data containing the error profiles DADA2 models. |

| Mag-Bind TotalPure NGS Beads | For clean-up and size selection post-PCR, removing primer dimers that can interfere with sequencing and downstream analysis. |

| NucleoSpin Gel and PCR Clean-up Kit | Extracts and purifies PCR products. Pure input DNA is essential for generating high-quality reads for denoising. |

| ZymoBIOMICS Microbial Community Standard | Mock community with known composition. Used as a positive control to validate the entire DADA2 pipeline's accuracy in denoising and chimera removal. |

| DNeasy PowerSoil Pro Kit | Robust, standardized microbial DNA extraction from complex samples. High-quality, inhibitor-free input is foundational. |

| DADA2 R Package (v1.30+) / QIIME 2 (with DADA2 plugin) | The core bioinformatics software implementing the error modeling, denoising, and chimera removal algorithms. |

Visualizations

DADA2 Pipeline Core Workflow

Error Model Informs Denoising Process

Within the context of a DADA2 pipeline for 16S rRNA amplicon data research, the transformation of raw sequencing reads into biologically meaningful insights hinges on two fundamental data structures: the input FastQ files and the output feature table. This application note details their composition, quality assessment, and the experimental protocols governing their generation and processing.

Input: FastQ Files

FastQ files are the standard raw output from high-throughput sequencing platforms, containing both sequence data and per-base quality scores.

2.1 FastQ File Structure: Each read is represented by four lines:

- Sequence Identifier: Begins with '@', followed by instrument and run details.

- Nucleotide Sequence.

- Separator: Begins with '+', optionally followed by the same identifier.

- Quality Scores: Encoded in Phred+33 (standard for Illumina 1.8+), where each character represents an integer value (Q) indicating the probability of an incorrect base call: Q = -10 log₁₀(P(error)).

2.2 Quantitative Quality Metrics: Key metrics to assess from FastQ files prior to DADA2 processing are summarized below.

Table 1: Key FastQ Quality Metrics for 16S Amplicon Analysis

| Metric | Description | Target/Expected Range for V3-V4 2x250bp (Illumina MiSeq) |

|---|---|---|

| Total Reads | Number of raw sequence reads per sample. | Highly variable; typically 50k-100k per sample. |

| Read Length | Distribution of nucleotide count per read. | Forward & Reverse: ~250-300bp. |

| Mean Q-Score | Average per-base quality across all reads. | >30 for majority of bases (base call accuracy >99.9%). |

| GC Content | Percentage of G and C nucleotides. | ~50-60% for bacterial 16S rRNA. |

| Ambiguous Bases (N) | Count of undetermined bases. | Aim for 0. |

| Adapter Content | Percentage of reads with adapter sequences. | <5% after trimming. |

2.3 Protocol: FastQ File Generation (Illumina MiSeq)

- Experimental Goal: Generate paired-end 16S rRNA gene amplicon sequences.

- Primers: Target hypervariable regions (e.g., V3-V4, 341F/805R).

- Protocol Summary:

- DNA Extraction: Use a bead-beating kit (e.g., PowerSoil) for microbial lysis.

- PCR Amplification: Perform triplicate 25-µL reactions with barcoded primers and high-fidelity polymerase. Cycle: 95°C/3min; 25-30 cycles of (95°C/30s, 55°C/30s, 72°C/30s); 72°C/5min.

- Amplicon Purification: Clean PCR products using magnetic beads.

- Library Quantification: Use fluorometric assay (e.g., Qubit).

- Library Pooling: Combine equimolar amounts of each sample amplicon.

- Sequencing: Load pooled library onto MiSeq reagent cartridge (v2 or v3, 500-cycle) for 2x250bp paired-end sequencing.

2.4 Diagram: FastQ Generation and Initial QC Workflow

Diagram Title: FastQ File Generation and Initial QC Workflow

Output: Feature Tables from DADA2

The DADA2 pipeline denoises sequences to resolve exact amplicon sequence variants (ASVs), producing a feature table as its primary output.

3.1 Feature Table Composition: The table is a bioconductor object or a classic OTU-style matrix where:

- Rows: Exact ASVs (features), often represented by their DNA sequence.

- Columns: Samples.

- Values: Read counts for each ASV in each sample, representing abundance.

3.2 Quantitative Output Metrics: Post-DADA2 processing yields several key summary statistics.

Table 2: Key Output Metrics from DADA2 Pipeline

| Metric | Description | Interpretation |

|---|---|---|

| Total Input Reads | Reads entering the pipeline. | Baseline for filtering loss. |

| Filtered & Trimmed | Reads remaining after quality filtering. | Typically 80-95% of input. |

| Denoised Reads | Reads merged and error-corrected. | Lower than filtered due to merger failure. |

| Non-Chimeric Reads | Reads assigned to non-chimeric ASVs. | Final reads in feature table. |

| Number of ASVs | Total unique biological sequences inferred. | Resolution is higher than OTU clustering. |

| Sample Read Depth | Final reads per sample in feature table. | Critical for alpha/beta diversity analysis. |

3.3 Protocol: DADA2 Pipeline for Generating Feature Tables

- Software: DADA2 (R package, version 1.28+).

- Input: Demultiplexed, gzipped FastQ files (R1 & R2).

- Detailed Protocol:

- Quality Profile Inspection:

plotQualityProfile(fnFs)to determine trim parameters. - Filtering & Trimming:

filterAndTrim(fnFs, filtFs, fnRs, filtRs, truncLen=c(240,200), maxN=0, maxEE=c(2,2), truncQ=2, rm.phix=TRUE, compress=TRUE) - Learn Error Rates:

learnErrors(filtFs, multithread=TRUE) - Sample Inference (Denoising):

dada(filtFs, err=errF, multithread=TRUE) - Merge Paired Reads:

mergePairs(dadaF, filtFs, dadaR, filtRs, minOverlap=12) - Construct Sequence Table:

makeSequenceTable(mergers) - Remove Chimeras:

removeBimeraDenovo(seqtab, method="consensus", multithread=TRUE) - Assign Taxonomy: Using a reference database (e.g., SILVA, GTDB).

assignTaxonomy(seqtab_nochim, "silva_nr99_v138.1_train_set.fa.gz", multithread=TRUE) - Create Final Feature Table: The chimera-removed sequence table is the ASV abundance matrix.

- Quality Profile Inspection:

3.4 Diagram: DADA2 Workflow from FastQ to Feature Table

Diagram Title: DADA2 Workflow from FastQ to Feature Table

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for 16S Amplicon Studies

| Item | Function & Application |

|---|---|

| DNA Extraction Kit (e.g., DNeasy PowerSoil Pro) | Isolates high-quality microbial genomic DNA from complex samples, removing PCR inhibitors. |

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Provides accurate amplification of the 16S target with low error rates, critical for ASV inference. |

| Barcoded 16S rRNA Gene Primers (e.g., 341F/805R) | Amplify specific hypervariable regions and add unique sample indexes for multiplexing. |

| Magnetic Bead Cleanup Kit (e.g., AMPure XP) | Size-selects and purifies PCR amplicons, removing primer dimers and contaminants. |

| Fluorometric DNA Quantification Kit (e.g., Qubit dsDNA HS) | Accurately measures DNA concentration of libraries prior to pooling and sequencing. |

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Provides chemistry for 2x300bp paired-end sequencing, suitable for full-length amplification of common 16S regions. |

| SILVA or GTDB Reference Database | Curated, high-quality rRNA sequence database used for taxonomic assignment of ASVs. |

| Positive Control (Mock Community DNA) | Defined mix of known bacterial genomic DNA; essential for validating pipeline accuracy and estimating error rates. |

| Negative Control (PCR-Grade Water) | Carried through extraction and PCR; identifies reagent or environmental contamination. |

Essential Software and Computing Environment Setup (R, Bioconductor)

The DADA2 pipeline for 16S rRNA amplicon analysis is a gold standard for inferring exact amplicon sequence variants (ASVs) from microbial community data. Its implementation within R and Bioconductor necessitates a precise, reproducible computing environment. This protocol details the establishment of this foundational environment, critical for subsequent thesis research on microbial dynamics in drug development contexts.

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item/Category | Function/Explanation |

|---|---|

| R (≥ 4.2.0) | The core statistical programming language and environment in which all analyses are executed. Provides the engine for Bioconductor packages. |

| RStudio IDE | An integrated development environment for R. Enhances productivity with syntax highlighting, project management, and integrated help. |

| Bioconductor (≥ 3.16) | A repository of R packages specifically for the analysis and comprehension of high-throughput genomic data, including DADA2. |

| DADA2 Package (≥ 1.26.0) | The core package implementing the Divisive Amplicon Denoising Algorithm for accurate single-nucleotide resolution inference of ASVs. |

| ShortRead / Biostrings | Bioconductor packages essential for handling and manipulating FASTQ files and biological sequences. |

| ggplot2 / phyloseq | Packages for generating publication-quality visualizations and for the comprehensive analysis and visualization of microbiome census data. |

| Conda/Mamba | A cross-platform package and environment manager. Crucial for managing non-R dependencies and ensuring computational reproducibility. |

| FastQC / MultiQC | Tools for initial quality control of raw sequencing reads and aggregation of results into a single report. |

System-Wide Prerequisite Installation (via Conda)

A robust environment management system is essential to handle software dependencies.

Protocol 3.1: Creating a Conda Environment for DADA2 Analysis

- Install Miniconda: Download and install Miniconda from https://docs.conda.io/en/latest/miniconda.html. Follow platform-specific instructions.

Create Environment: Open a terminal (or Anaconda Prompt on Windows) and execute:

Activate Environment:

Verify Installation: Launch R from within the activated environment (

R) and test loading core packages:

R and Bioconductor Core Setup

Protocol 4.1: Installation and Configuration of R/Bioconductor within the Conda Environment

Note: If using the Conda environment from Protocol 3.1, R and key packages are already installed. This protocol is for a native R installation.

- Install R: Download and install the latest stable release of R from https://cran.r-project.org/.

Install Bioconductor: Open R or RStudio and install the core Bioconductor manager.

Install Critical Packages: Install the suite of packages required for the DADA2 pipeline.

Quantitative Data: Current Software Versions (as of latest search)

| Software/Package | Minimum Recommended Version | Purpose in DADA2 Pipeline |

|---|---|---|

| R | 4.2.0 | Base computing environment. |

| Bioconductor | 3.16 | Genomic analysis package repository. |

| DADA2 | 1.26.0 | Core denoising algorithm for ASV inference. |

| phyloseq | 1.42.0 | Microbiome data analysis & visualization. |

| Conda | 23.0+ | Environment & dependency management. |

Validating the DADA2 Environment

Protocol 5.1: Functional Validation Test with Mock Data

This experiment verifies the complete setup using a built-in mock dataset.

Load Libraries and Data:

Execute Core DADA2 Workflow Steps:

Expected Outcome: The

filterAndTrimfunction should execute without error, producing a table showing read counts before and after filtering. This confirms that R, Bioconductor, DADA2, and system linkages are functional.

Workflow Visualization: DADA2 Pipeline Setup & Validation

DADA2 Software Setup and Validation Workflow

Step-by-Step DADA2 Workflow: A Practical Tutorial from FastQ to Phyloseq

Within the broader thesis on implementing the DADA2 pipeline for 16S rRNA amplicon data research, the initial quality assessment phase is foundational. This step determines the suitability of raw sequencing data for downstream denoising and analysis, directly impacting the reliability of inferred Amplicon Sequence Variants (ASVs) and subsequent ecological or clinical conclusions.

Loading Essential Libraries

The analysis is conducted in the R statistical environment. The following core packages must be loaded.

Table 1: Essential R Libraries for Initial DADA2 Setup

| Library Name | Purpose in Initial Setup | Version Note |

|---|---|---|

dada2 |

Core pipeline functions for quality profiling, filtering, denoising. | v1.30+ |

ShortRead |

Enables reading and manipulation of FASTQ files. | Required by dada2 |

ggplot2 |

Generates publication-quality visualizations of quality profiles. | v3.4+ |

phyloseq |

(Optional) For eventual integration of ASV tables, taxonomy, and sample data. | v1.44+ |

Protocol 2.1: Library Installation and Loading

- Open RStudio or an R console.

- Set the CRAN repository:

setRepositories(ind=1:2). - Install required packages if not present:

- Load the libraries into the R session:

Inspecting Read Quality Profiles

Quality profiles graphically represent the distribution of sequencing quality scores (typically Phred scores, Q) at each base position for a set of reads. A drop in average quality informs truncation parameters in the filtering step.

Protocol 3.1: Generating Read Quality Profiles

- Define Path and List Files: Set the path to the directory containing demultiplexed, gzipped FASTQ files.

Subset Samples for Visualization: To expedite initial inspection, profile a subset (e.g., 4-6 samples).

Generate Quality Profile Plots: Use

dada2::plotQualityProfile().Display Plots:

Table 2: Interpretation of Quality Profile Metrics

| Feature in Plot | Ideal Characteristic | Implications for Truncation |

|---|---|---|

| Mean Quality Score (Green solid line) | Remains above Q30 (99.9% base call accuracy) across read length. | Truncate before the position where the mean quality drops below an acceptable threshold (e.g., Q25 or Q20). |

| Quality Score Interquartile Range (Yellow/orange ribbon) | Tight ribbon, indicating consistent quality across reads. | A widening ribbon suggests increased variability and error rate. |

| Cumulative Frequency of Reads (Grey-scale lines) | Shows proportion of reads extending to each position. | Informs the trade-off between read length retention and read loss during truncation. |

| Nucleotide Frequency (Bottom panel) | Balanced A/C/G/T distribution without strong cycles. | Severe biases may indicate technical artifacts. |

Table 3: Example Quantitative Summary from Quality Profiles (Hypothetical Data, Illumina MiSeq v3, 2x300 bp)

| Read Direction | Position | Mean Phred Score | % Reads >= Position | Recommended Truncation Point (Example) |

|---|---|---|---|---|

| Forward | 1 | 36.5 | 100% | Position 260 |

| 200 | 34.1 | 99.8% | ||

| 260 | 30.2 | 99.5% | ||

| 280 | 25.7 | 98.9% | ||

| Reverse | 1 | 35.8 | 100% | Position 200 |

| 180 | 32.5 | 99.6% | ||

| 200 | 28.9 | 99.2% | ||

| 220 | 20.1 | 97.8% |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for 16S rRNA Amplicon Sequencing & Initial QC

| Item | Function in Experiment |

|---|---|

| 16S rRNA Gene Primer Set (e.g., 515F/806R for V4) | Targets hypervariable regions for bacterial/archaeal community profiling. |

| High-Fidelity DNA Polymerase (e.g., Phusion) | Reduces PCR errors during library amplification, preserving true sequence diversity. |

| Size-Selective Magnetic Beads (e.g., AMPure XP) | Purifies amplicons and removes primer dimers to ensure clean library construction. |

| Illumina Sequencing Kits (e.g., MiSeq Reagent Kit v3) | Provides chemistry for paired-end sequencing (e.g., 2x300 cycles). |

| Positive Control Mock Community DNA | Validates entire wet-lab and bioinformatics pipeline using known organism composition. |

| Negative Control (PCR-grade Water) | Detects reagent or environmental contamination. |

Visualizing the Initial DADA2 Workflow

Diagram Title: Initial DADA2 Setup and Quality Check Workflow

Within the context of the DADA2 pipeline for 16S rRNA amplicon analysis, the initial filtering and trimming steps are critical for generating accurate Amplicon Sequence Variants (ASVs). Suboptimal parameters can introduce errors, reduce sensitivity, and compromise downstream ecological inferences. These application notes provide a structured protocol for empirically determining the optimal truncation and filtering parameters for a specific dataset, a foundational chapter in a broader thesis on robust microbiome analysis.

Key Parameter Optimization Experiments

Experiment 1: Read Quality Profile Assessment

Objective: To visualize per-base read quality and identify where quality significantly degrades, informing truncation length decisions. Protocol:

- Input: Raw forward (

R1) and reverse (R2) FASTQ files from Illumina MiSeq or HiSeq. - Tool: Use

plotQualityProfile()function from the DADA2 R package (v1.28+). - Procedure:

- Run the function on a subset of files (e.g., first 5 samples) for both R1 and R2 reads.

- Visually inspect the plots. The solid line represents the mean quality score at each position; the green shaded area represents the quartiles.

- Key Decision Point: Identify the position at which the mean quality score for a significant proportion of reads falls below a chosen threshold (typically Q30 for MiSeq data). This position is a candidate for truncation.

- Deliverable: Quality profile plots for R1 and R2, used to propose initial

truncLenvalues.

Experiment 2: Error Rate Learning with Parameter Testing

Objective: To measure the effect of different filtering parameters on the error model's learnable signal and the number of reads retained. Protocol:

- Design: Perform a factorial experiment testing a range of

truncLen(e.g., 240, 245, 250 for R1; 200, 210, 220 for R2) andmaxEE(Expected Errors) values (e.g., c(2,4), c(3,6)). - Procedure:

- For each parameter combination, run

filterAndTrim()on the full dataset. - Record the percentage of reads passing the filter for each sample.

- For a representative subset, learn the error rates using

learnErrors()on the filtered output. - Plot the estimated error rates (

plotErrors()) to assess convergence. A good model will show the learned error rates (black lines) closely following the observed rates (red dots) and will converge with increasing information.

- For each parameter combination, run

- Deliverable: A table comparing read retention and a qualitative assessment of error model fit for each parameter set.

Experiment 3: Sample Inference Sensitivity Analysis

Objective: To evaluate how parameter choice impacts final ASV counts and sample composition. Protocol:

- Procedure:

- Select the top 3-4 parameter combinations from Experiment 2.

- For each set, run the complete DADA2 pipeline (

derepFastq(),dada(),mergePairs(),makeSequenceTable()) through to generating a sequence table. - Record the total number of ASVs inferred, the percentage of merged reads, and the number of chimeras removed.

- Perform a basic alpha diversity analysis (e.g., observed ASVs) on the non-chimeric sequence tables.

- Deliverable: Quantitative comparison of pipeline outputs under different filtering regimes.

Table 1: Example Parameter Grid & Filtering Efficiency

| TruncLen (R1,R2) | maxEE (R1,R2) | Mean Reads In | Mean Reads Out | % Retained | Mean Merged Reads | % Merged |

|---|---|---|---|---|---|---|

| (250, 200) | (2,4) | 100,000 | 87,500 | 87.5% | 80,000 | 91.4% |

| (250, 200) | (3,6) | 100,000 | 94,200 | 94.2% | 85,100 | 90.3% |

| (240, 190) | (2,4) | 100,000 | 90,100 | 90.1% | 78,900 | 87.6% |

Table 2: Impact on Final ASV Inference

| Parameter Set (TruncLen, maxEE) | Total ASVs | Mean ASVs/Sample | Chimeras Removed | Mean Alpha Diversity (Observed) |

|---|---|---|---|---|

| (250,200), (2,4) | 1,450 | 145 | 12% | 142 |

| (250,200), (3,6) | 1,620 | 162 | 15% | 155 |

| (240,190), (2,4) | 1,380 | 138 | 11% | 135 |

Recommended Optimization Workflow

Title: DADA2 Filter Parameter Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in DADA2 Filtering Optimization |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Generates the initial amplicon library with minimal PCR errors, reducing background noise during error rate learning. |

| Validated 16S rRNA Primer Panels (e.g., 515F/806R for V4) | Ensures specific amplification of the target region, crucial for consistent read length and alignment for merging. |

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standardized chemistry producing ~300bp paired-end reads; knowing the kit version is essential for expected quality drop-off. |

| PhiX Control v3 Library | Spiked-in during sequencing to monitor error rates independently, providing a benchmark for pipeline performance. |

| Quantitative DNA Standard (e.g., from ZymoBIOMICS) | Used in mock community controls with known composition to validate that chosen parameters recover the expected species. |

| Magnetic Bead-Based Cleanup Kit (e.g., AMPure XP) | For post-PCR purification, affecting the input read quality profile by removing primer dimers and short fragments. |

| DADA2 R Package (v1.28+) | The core software toolkit containing the filterAndTrim(), learnErrors(), and plotQualityProfile() functions. |

| High-Performance Computing Cluster or Cloud Instance | Necessary for the computationally intensive error rate learning and multiple iterative runs with different parameters. |

Learning Error Rates and Core Denoising with 'learnErrors()' and 'dada()'

Application Notes

Within the DADA2 pipeline for 16S rRNA amplicon analysis, accurate error rate modeling and core denoising are critical for converting raw sequence reads into amplicon sequence variants (ASVs). The learnErrors() function constructs a parametric error model by learning from the data itself, while the dada() function applies this model to perform the core sample-independent denoising. This process distinguishes true biological variation from sequencing errors with higher resolution than traditional OTU clustering methods.

The error model is a matrix of probabilities, where p(A->C, q) represents the probability of a true base 'A' being called as 'C' at a given quality score q. The learnErrors() algorithm alternates between estimating error parameters and inferring sample composition until convergence. The subsequent dada() algorithm uses this model to model the abundance of each sequence as a mixture of its true abundance and the abundance of erroneous sequences derived from it.

Table 1: Key Quantitative Metrics from a Standard DADA2 Error Learning Run (Illumina MiSeq 2x250)

| Metric | Typical Value Range | Description |

|---|---|---|

| Initial Reads for Error Model | 1-10 million | Number of reads used by learnErrors(). |

| Convergence Iterations | 3-10 | Number of EM algorithm cycles to convergence. |

| Avg. Error Rate (Forward) | 0.5% - 2.0% | Average per-base error probability post-learning. |

| Avg. Error Rate (Reverse) | 1.0% - 3.5% | Reverse reads often have higher error rates. |

| Transition/Transversion Ratio in Model | ~2.0 | Reflects higher probability of certain substitutions (e.g., A->G). |

Final ASVs Output by dada() |

10s - 1000s per sample | Highly dependent on sample complexity and sequencing depth. |

Table 2: Comparison of Denoising Performance: DADA2 vs. OTU Clustering

| Feature | DADA2 (learnErrors() + dada()) |

Traditional 97% OTU Clustering |

|---|---|---|

| Resolution | Single-nucleotide (ASVs) | ~3% divergence (OTUs) |

| Error Correction | Parametric model (learnErrors) |

Heuristic (chimera removal only) |

| Run-to-Run Consistency | High (sequence-based) | Low (depends on reference DB) |

| Computational Demand | Moderate-High | Low-Moderate |

| Sensitivity to Rare Taxa | Higher | Lower (clustered away) |

Experimental Protocols

Protocol 1: Building the Error Model withlearnErrors()

Objective: To estimate the sample-specific sequencing error rates for downstream denoising.

- Input Preparation: Start with quality-filtered and trimmed FASTQ files (e.g., output from

filterAndTrim()). For optimal learning, use a subset of data (e.g., first 1-3 samples) or a combined set of all samples. Function Execution: In R, run the error learning function:

multithread=TRUEenables parallel processing.nbases=1e8sets the maximum number of bases to use for training (default 1e8).randomize=TRUEhelps avoid overfitting to the first few samples.

Model Validation: Plot the error model to assess fit:

The observed error rates (points) should closely follow the black line (learned model) and diverge from the red line (nominal quality score). Poor fit may indicate low-quality data or insufficient training data.

Protocol 2: Core Sample Inference withdada()

Objective: To denoise each sample independently, inferring true biological sequences (ASVs).

Apply Error Model: For each sample's forward reads, run:

Repeat for reverse reads using

errR.derepFastq()dereplicates sequences, storing abundances.err=supplies the learned error model.pool=FALSEdenotes sample-independent inference. Usepool=TRUEfor experiments with very low per-sample sequencing depth to leverage information across samples.

- Process Output: The

dada()return object (dadaF) contains:$sequence: The inferred ASVs.$abundance: The estimated abundances of each ASV.$clustering: A data frame detailing the denoising process (reads in, partitions, etc.).

Merge Paired-End Reads: Combine forward and reverse inferences after

dada():Construct Sequence Table: Create an ASV abundance table across all samples:

Visualizations

Title: DADA2 Error Learning and Denoising Workflow

Title: Error Model: Probability of Base Mis-call

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for DADA2 Protocol

| Item | Function in Protocol | Notes |

|---|---|---|

| 16S rRNA Gene Primers (e.g., 515F/806R) | Amplify hypervariable regions for sequencing. | Choice defines taxonomic breadth and length for denoising. |

| High-Fidelity DNA Polymerase (e.g., Phusion) | Minimizes PCR errors during library prep. | Critical to reduce errors not originating from sequencing. |

| Illumina MiSeq Reagent Kit v3 (600-cycle) | Standard for 2x300 bp paired-end 16S sequencing. | Provides optimal read length for common V3-V4 regions. |

| DADA2 R Package (v1.30+) | Contains the learnErrors() and dada() algorithms. |

Must be installed from Bioconductor for latest updates. |

| Quality Control Software (FastQC, MultiQC) | Assess raw read quality pre- and post-filtering. | Identifies issues (adapter contamination, low Q scores). |

| Reference Databases (SILVA, GTDB) | For taxonomic assignment post-denoising. | Used after ASV table construction; not for clustering. |

| High-Performance Computing (HPC) Resources | Enables multithreading for large datasets. | learnErrors() and dada() are computationally intensive. |

Merging Paired-End Reads and Constructing the Sequence Table

Within the context of a DADA2 pipeline for 16S rRNA amplicon sequencing analysis, merging paired-end reads is a critical preprocessing step for Illumina MiSeq data. This procedure combines the forward and reverse reads of each DNA fragment, which are partially overlapping, to create a single, longer, high-quality contig. This step increases accuracy in amplicon sequence variant (ASV) inference by providing more information per read. Subsequently, constructing the sequence table—a high-resolution analogue of the traditional OTU table—is the foundational output of the DADA2 algorithm, representing the distribution of exact ASVs across all samples. This Application Note details current protocols for these steps, optimized for accuracy and reproducibility in microbial community research relevant to drug development and therapeutic discovery.

The success of read merging is contingent on several quantitative parameters derived from the sequencing data itself. The following table summarizes critical data points and their implications for protocol adjustment.

Table 1: Key Quantitative Parameters for Read Merging and Sequence Table Construction

| Parameter | Typical Value/Range | Importance & Impact on Protocol |

|---|---|---|

| Read Length (e.g., MiSeq v3) | 2 x 300 bp | Determines potential overlap length. Longer reads increase overlap region for reliable merging. |

| Amplicon Length (V4 region) | ~250-290 bp | Target length must be less than sum of read lengths. Ensures sufficient overlap (e.g., 20-50+ bp). |

| Minimum Overlap Required (DADA2) | Default: 12 bp | Sets lower bound for allowing a merge. Increase if spurious merges are observed. |

| Maximum Mismatches in Overlap | Default: 0 | Tolerance for mismatches in overlap region. Can be increased slightly (e.g., 1) for error-tolerant merging. |

| Post-Merge Sequence Length | e.g., 253 ± 5 bp | Quality control metric. Sequences outside expected range are filtered out. |

| Sample Read Depth Post-Merge | Variable (e.g., 10k-100k) | Informs downstream filtering; samples with very low depth may be excluded. |

| Total ASVs in Sequence Table | Depends on complexity (e.g., 100-10,000) | Final output metric. Affected by prior chimera removal and error rate learning. |

Experimental Protocols

Protocol 1: Merging Paired-End Reads Using DADA2 in R

This protocol follows quality filtering and error rate learning steps in the DADA2 pipeline.

Materials & Reagents:

- Filtered and trimmed FASTQ files for forward (

*_R1_trim.fastq.gz) and reverse (*_R2_trim.fastq.gz) reads. - Sample metadata file.

- R statistical environment (version 4.0+).

- DADA2 package (version 1.28+).

- High-performance computing cluster or workstation with adequate RAM (>16 GB recommended for large datasets).

Methodology:

- Load Libraries and Set Paths:

Perform Read Merging: The

mergePairs()function aligns the forward and reverse reads, checks for agreement in the overlap region, and merges them into a single sequence.dadaFs/dadaRs: The denoised forward and reverse read objects from thedada()step.maxMismatch=0: Strict default; mismatches in the overlap typically indicate errors.minOverlap=12: Empirical minimum for reliable merging.

Construct the Sequence Table: The merged reads are assembled into a sample-by-sequence (ASV) abundance matrix.

Remove Chimeras: Chimeric sequences are artifacts formed from two or more biological sequences and must be removed.

Inspect Read Counts Through Pipeline: Track the number of reads surviving each step to assess efficiency and potential sample loss.

Protocol 2: Visualization and Validation of Merge Success

Methodology:

- Length Distribution Plot:

Visualize the distribution of merged sequence lengths to confirm they cluster around the expected amplicon size.

- Overlap Length Calculation:

Compute the empirical overlap for a random subset of reads to ensure it was sufficient.

Visualization of Workflows

Title: DADA2 Workflow for Merging Reads and Building ASV Table

Title: Schematic of Paired-End Read Merging Process

The Scientist's Toolkit

Table 2: Research Reagent Solutions for DADA2 Pipeline

| Item | Function in Merging/Sequence Table Construction | Example/Specification |

|---|---|---|

| Illumina MiSeq Reagent Kit | Generates 2x250bp or 2x300bp paired-end reads, providing the necessary overlap for the V4 region. | MiSeq Reagent Kit v3 (600-cycle) |

| 16S rRNA Gene Primers | Amplify the target hypervariable region. Choice determines amplicon length and overlap potential. | 515F (Parada) / 806R (Apprill) for V4 region. |

| QIAmp PowerFecal Pro DNA Kit | High-yield, inhibitor-free microbial genomic DNA extraction from complex samples. Essential for input quality. | Qiagen, Cat. No. 51804 |

| DADA2 R Package | Core software containing the mergePairs(), makeSequenceTable(), and removeBimeraDenovo() functions. |

Version 1.28+ on Bioconductor |

| RStudio IDE | Integrated development environment for executing, documenting, and visualizing the R-based DADA2 pipeline. | RStudio 2024.04+ with R 4.3+ |

| High-Fidelity PCR Enzyme | For library preparation; minimizes PCR errors that can inflate perceived ASV diversity. | KAPA HiFi HotStart ReadyMix |

| Quant-iT PicoGreen dsDNA Assay | Fluorometric quantification of amplicon libraries for precise pooling before sequencing. | Thermo Fisher Scientific, Cat. No. P11496 |

Removing Chimeras and Assigning Taxonomy with Reference Databases

This protocol details the critical, concluding bioinformatic steps within a comprehensive thesis on the DADA2 pipeline for 16S rRNA amplicon analysis. Following the inference of exact amplicon sequence variants (ASVs) via DADA2's core sample inference algorithm, two essential processes ensure biological interpretability: the removal of PCR chimeras and the taxonomic classification of the resultant ASVs. Chimeras are spurious sequences formed during PCR from two or more parent sequences, representing artifacts rather than true biological diversity. Their removal is mandatory for accurate ecological interpretation. Subsequent taxonomic assignment links each ASV to known biological nomenclature, enabling hypothesis testing in microbial ecology, biomarker discovery, and drug development research.

Application Notes: Core Principles and Current Best Practices

- Chimera Detection: Modern approaches, as implemented in DADA2, perform de novo chimera identification by aligning each sequence to more abundant, phylogenetically similar sequences, seeking evidence of being a composite. This method is sensitive and does not rely solely on reference databases.

- Taxonomic Assignment: The standard method is classification via exact matching and probabilistic modeling against a curated reference database (e.g., SILVA, Greengenes, RDP). The

assignTaxonomyfunction in DADA2 uses the RDP naïve Bayesian classifier, providing confidence estimates (bootstrap values) for assignments from genus to phylum level. - Species-Level Assignment: For finer resolution, the

addSpeciesfunction can perform exact string matching of the ASV against a database of reference species sequences, a stricter method that often leaves many ASVs unassigned at the species level. - Database Choice: Selection is critical and project-dependent. SILVA is comprehensive and frequently updated, Greengenes offers a standardized taxonomy for older comparative studies, and the RDP database is a long-standing, quality-controlled resource. The chosen database must be compatible with the primer region used for amplification.

Table 1: Comparison of Major 16S rRNA Reference Databases (as of 2024)

| Database | Latest Version | Release Year | Number of Taxonomically Curated Sequences | Primary Use Case | Recommended For DADA2? |

|---|---|---|---|---|---|

| SILVA | SSU r138.1 | 2020 | ~2.7 million (bacteria/archaea) | Comprehensive, updated taxonomy; broad-range studies | Yes (use silva_nr99_v138.1_train_set.fa.gz) |

| Greengenes | gg138 | 2013 | ~1.3 million | Historical comparisons; microbiome studies aligning with legacy data | Yes (use gg_13_8_train_set_97.fa.gz) |

| RDP | RDP 18 | 2024 | ~4.5 million (bacteria/archaea/fungi) | Quality-focused, includes fungi; general taxonomic assignment | Yes (use rdp_train_set_18.fa.gz) |

| UNITE | 9.0 | 2022 | ~1.1 million (eukaryotes) | Fungal ITS region studies | For ITS2 analysis with DADA2 |

Detailed Experimental Protocols

Protocol 3.1: Chimera Removal with DADA2

Objective: To identify and remove chimeric ASVs from the sequence table.

Materials: DADA2 sequence table (output from mergePairs or mergeDenoised), list of ASV sequences.

Methodology:

- Load the DADA2 sequence table (

seqtab) into the R environment. - Execute the

removeBimeraDenovofunction. Themethod="consensus"parameter is recommended, where each sample is independently checked for chimeras, and sequences flagged in a sufficient fraction of samples are removed.

- Save the non-chimeric sequence table. Typically, 10-25% of sequences are identified as chimeric and removed.

Protocol 3.2: Taxonomic Assignment with DADA2

Objective: To assign taxonomy to each non-chimeric ASV.

Materials: Final ASV sequence table (seqtab.nochim), downloaded reference database training files.

Methodology:

- Download the appropriate training set (e.g., from https://benjjneb.github.io/dada2/training.html) and unzip.

- Use the

assignTaxonomyfunction. A minimum bootstrap confidence of 50 is standard, but 80 is more conservative.

(Optional) For species-level assignment: Use the

addSpeciesfunction with a species-specific database.The final output is a taxonomy table where rows correspond to ASVs and columns to taxonomic ranks (Kingdom, Phylum, ..., Genus).

Visual Workflows

Title: DADA2 Post-Processing: Chimera Removal & Taxonomy

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Reagents for Chimera Removal and Taxonomy

| Item/Reagent | Function in Protocol | Example/Format | Key Consideration |

|---|---|---|---|

| DADA2 R Package | Provides core functions (removeBimeraDenovo, assignTaxonomy) for all steps. |

R library (bioconductor) |

Must be installed from Bioconductor, not CRAN. |

| Curated Reference Database | Training set for the Bayesian classifier; defines taxonomic nomenclature. | FASTA file (e.g., silva_nr99_v138.1_train_set.fa.gz) |

Primer region must match your amplified region. |

| Species Assignment Database | For exact matching to assign species-level taxonomy. | FASTA file (e.g., silva_species_assignment_v138.1.fa.gz) |

Highly stringent; expect many NA assignments. |

| High-Performance Computing (HPC) Environment | Enables multithreading for computationally intensive steps. | Linux server or cluster with SLURM scheduler | Set multithread=TRUE to significantly reduce runtime. |

| Sequence Table (seqtab) | Input containing ASV abundances per sample. | R data object (.rds file) |

Direct output from DADA2's mergePairs step. |

| Taxonomy Table | Final output linking ASV ID to taxonomic lineage. | Data frame or matrix in R | Can be combined with sequence table for phyloseq. |

Creating a Phyloseq Object for Downstream Analysis

Within the broader thesis employing the DADA2 pipeline for 16S rRNA amplicon research, the construction of a phyloseq object represents the critical juncture where denoised sequence data is organized into a unified, reproducible data structure for ecological and statistical analysis. This protocol details the steps required to assemble the core components—an OTU table, a sample metadata table, a taxonomic table, and a phylogenetic tree—into a phyloseq-class object. This object is the essential input for downstream analyses including alpha/beta diversity, differential abundance testing, and ordination.

Prerequisites: Outputs from the DADA2 Pipeline

The DADA2 pipeline (v1.28+) yields the necessary components. The following table summarizes the required input files and their descriptions.

Table 1: Required Input Data Components from DADA2

| Component | Format | Description | Typical DADA2 Output File |

|---|---|---|---|

| Sequence Variant Table | Matrix (SV x Samples) | A count matrix of Amplicon Sequence Variants (ASVs). | seqtab.nochim (R object) |

| Taxonomic Assignments | Matrix (SV x Rank) | Taxonomic classification for each ASV. | taxa (R object from assignTaxonomy) |

| Sample Metadata | Data Frame (Samples x Variables) | Experimental design and sample-specific data. | User-provided CSV file. |

| Representative Sequences | DNAStringSet or FASTA | The DNA sequence of each ASV. | dada2::getSequences(seqtab.nochim) or FASTA. |

| (Optional) Phylogenetic Tree | phylo object (ape) |

Phylogenetic relationship of ASVs. | Constructed via DECIPHER/phangorn. |

Detailed Protocol

Load Required R Packages

Import Core Data Components

Assume DADA2 outputs are in the working directory.

(Optional) Construct a Phylogenetic Tree

A multiple sequence alignment and tree are needed for phylogeny-aware metrics (e.g., UniFrac).

Create the Phyloseq Object

Combine all components into a single phyloseq object.

Basic Validation and Preprocessing

Perform essential checks and filtering.

Workflow Diagram

Title: Workflow for Constructing a Phyloseq Object from DADA2 Outputs

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Computational Tools

| Item / Software Package | Function in Protocol | Key Notes |

|---|---|---|

| R (v4.3+) | Primary programming environment for data manipulation and analysis. | Base system required. |

| phyloseq (v1.46+) | Core R/Bioconductor package for organizing and analyzing microbiome data. | Provides the S4 object class and analysis functions. |

| DADA2 (v1.28+) | Generates the core input data: denoised sequence variant table and taxonomy. | Must be run prior to this protocol. |

| Biostrings | Handles biological sequence data (DNAStringSet for ASV sequences). | Required for storing sequences in phyloseq. |

| DECIPHER | Performs accurate multiple sequence alignment for phylogenetic tree construction. | Used in optional tree-building step. |

| phangorn | Performs phylogenetic reconstruction and modeling in R. | Used for distance calculation and ML tree fitting. |

| Sample Metadata CSV File | Contains all sample-specific variables (e.g., treatment, host, pH). | Crucial for meaningful statistical analysis. Must be meticulously curated. |

| High-Performance Computing (HPC) Cluster | For computationally intensive steps (tree building, large dataset processing). | Recommended for datasets > 10,000 ASVs or 1000 samples. |

Solving Common DADA2 Pipeline Errors and Optimizing for Your Data

Diagnosing and Fixing Common Error Messages and Convergence Issues

This document serves as a critical technical appendix to the broader thesis on optimizing the DADA2 pipeline for robust 16S rRNA amplicon analysis in pharmaceutical and clinical research. A reproducible and error-free bioinformatics workflow is paramount for generating reliable microbial community data, which informs downstream analyses in drug development, such as identifying microbiome-based therapeutic targets or biomarkers. Convergence issues and cryptic error messages within DADA2 can halt analysis, introduce bias, and compromise the integrity of research conclusions. These Application Notes provide targeted diagnostic protocols and solutions to ensure pipeline robustness.

Common DADA2 Error Messages: Diagnosis and Resolution

The following table consolidates frequent errors, their likely causes, and specific remediation steps.

Table 1: Common DADA2 Errors and Fixes

| Error Message / Symptom | Root Cause | Diagnostic Check | Fix Protocol |

|---|---|---|---|

Error in[<-.data.frame(tmp, "sequence", value = ....) in addSpecies |

Taxonomy assignment file format mismatch or missing column. | Verify the speciesAssignmentFa file is a valid FASTA and the header format matches DADA2 expectations (e.g., >Genus_species). |

Re-download or reformat the taxonomy database. Use assignSpecies(..., allowMultiple=TRUE) to bypass strict matching. |

derepFastq fails with "Index out of bounds" |

The provided file paths are incorrect or files are empty/corrupt. | Run file.exists(fnFs) and file.info(fnFs)$size > 0 on all input file paths. |

Correct file paths. Re-run sequencing adapter trimming and quality filtering upstream of DADA2. |

Error in .Call(...) : Negative index in filterAndTrim |

Inconsistent pairing of forward and reverse reads post-trimming; one file may have zero reads. | Compare read counts in output of filterAndTrim: out <- filterAndTrim(...); head(out). |

Loosen filterAndTrim parameters (maxEE, truncLen). Manually inspect quality profiles with plotQualityProfile to set appropriate truncation lengths. |

learnErrors fails to converge or yields extremely high error rates |

Insufficient read data for robust error model learning or poor sequencing quality. | Check the nbases parameter (default 1e8). Monitor error rate plots: do they stabilize? |

Increase input data: pool data from multiple runs (pool=TRUE) or increase nbases. Ensure rigorous primer/adapter trimming prior. |

mergePairs results in very low merge percentage (< 20%) |

Overlap region between forward and reverse reads is too short due to poor truncLen choice or amplicon length. |

Plot expected fragment length distribution. Check overlap with: expected_overlap <- (truncLen[1] + truncLen[2]) - amplicon_length. |

Re-trim reads with less aggressive truncLen values. Use justConcatenate=TRUE in mergePairs for long amplicons (less ideal). |

Chimeras > 80% of sequences post-removeBimeraDenovo |

Overly sensitive chimera detection due to low sequence depth per sample or PCR artifacts. | Check per-sample input read count to removeBimeraDenovo. Is the sample deeply sequenced? |

Use the method="pooled" option in removeBimeraDenovo. Consider using minFoldParentOverAbundance parameter to adjust sensitivity. |

Protocol for Diagnosing Convergence Issues inlearnErrors

A non-converging error model is a critical failure point.

Experimental Protocol: Error Model Learning and Diagnostics

- Data Input Preparation: Start with quality-filtered reads (output from

filterAndTrim). Use a subset of at least 1 million reads across all samples for robust learning. - Execute

learnErrorswith Verbose Output:errF <- learnErrors(fnFs, nbases = 2e8, randomize = TRUE, multithread = TRUE, verbose = TRUE). Therandomize=TRUEhelps assess convergence stability. - Visual Diagnostics: Generate the error rate plot:

plotErrors(errF, nominalQ=TRUE). - Convergence Assessment: In the plot, the black line (observed error rates) should closely follow the red line (estimated error rates) after a certain quality score. Large, systematic deviations indicate poor convergence.

- Remediation Steps:

- If convergence is poor, increase the

nbasesparameter to 2e8 or 5e8. - Implement the

pool=TRUEargument to use data from all samples for a single, more robust model. - If using pooled data and issues persist, inspect raw sequence quality from the sequencer; the run itself may be flawed.

- If convergence is poor, increase the

Workflow for Systematic Pipeline Debugging

Diagram Title: DADA2 Error Diagnosis Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for DADA2 Pipeline Research

| Item | Function in DADA2 Research Context |

|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, KAPA HiFi) | Minimizes PCR amplification errors that can be misinterpreted as biological sequence variants by DADA2, reducing false positive ASVs. |

| Validated 16S rRNA Primer Panels (e.g., 27F/1492R, 341F/806R) | Provides consistent and comprehensive amplification of target hypervariable regions, ensuring amplicon length compatibility with DADA2's mergePairs. |

| Quantitative PCR (qPCR) Kit with dsDNA Assay | Enables accurate normalization of DNA input pre-PCR, reducing sample-to-sample sequencing depth bias that affects diversity metrics. |

| Mock Microbial Community DNA (e.g., ZymoBIOMICS) | Serves as a positive control and ground truth for benchmarking DADA2 pipeline accuracy, error rates, and chimera removal performance. |

| Ultra-pure, Nuclease-free Water | Used in all PCR and library prep steps to prevent environmental contamination, which is a primary source of spurious sequences detected by DADA2. |

| Magnetic Bead-based Purification Kit (e.g., AMPure XP) | Provides size-selective clean-up of amplicons post-PCR, removing primer dimers that consume sequencing reads and interfere with DADA2 processing. |

| PhiX Control v3 Library | Spiked into sequencing runs to provide balanced nucleotide diversity and improve base calling accuracy, which underpins the quality scores DADA2 relies on. |

| DADA2-Compatible Reference Databases (e.g., SILVA, GTDB, RDP) | Curated files (train fasta and species assignment) for taxonomic assignment, a critical downstream step after sequence inference. |

Optimizing Trimming Length and Quality Thresholds for V1-V3 vs. V4 Regions

Within the broader thesis on optimizing the DADA2 pipeline for 16S rRNA amplicon data research, a critical variable is the adjustment of trimming and filtering parameters for different hypervariable regions. The V1-V3 and V4 regions differ in length, sequence composition, and error profiles, necessitating region-specific quality control strategies to maximize sequence retention while minimizing erroneous reads. This application note provides a comparative framework and explicit protocols for parameter optimization.

The following tables synthesize current best practices derived from recent literature and benchmark studies for paired-end Illumina data processed through DADA2.

Table 1: Recommended Parameters for V1-V3 Region (approx. 500-550 bp amplicon, 2x300 bp reads)

| Parameter | Recommended Value | Rationale & Notes |

|---|---|---|

truncLen (Forward) |

260-280 | Sharp quality drop common after 280 cycles. Retain sufficient length for overlap. |

truncLen (Reverse) |

220-240 | Reverse read often shows earlier quality decline. |

trimLeft (Fwd) |

10-20 | Remove primer sequences and initial low-complexity bases. |

trimLeft (Rev) |

10-20 | Remove primer sequences and initial low-complexity bases. |

maxEE (Forward) |

2.0 | Slightly more lenient due to longer read length and higher expected errors. |

maxEE (Reverse) |

2.0 | Slightly more lenient due to longer read length and higher expected errors. |

minLen |

200 | Ensure post-trimming length is sufficient for reliable merging. |

| Expected Merge Rate | 70-85% | Overlap is ~50-100 bp; rate highly dependent on sample and truncLen. |

Table 2: Recommended Parameters for V4 Region (approx. 250-300 bp amplicon, 2x250 bp reads)

| Parameter | Recommended Value | Rationale & Notes |

|---|---|---|

truncLen (Forward) |

240 | Consistent high quality across most of read. |

truncLen (Reverse) |

200-220 | Quality may drop earlier than forward. |

trimLeft (Fwd) |

10 | Remove primer sequences (e.g., 515F). |

trimLeft (Rev) |

10 | Remove primer sequences (e.g., 806R). |

maxEE (Forward) |

2.0 | Standard threshold for good quality reads. |

maxEE (Reverse) |

2.0 | Standard threshold for good quality reads. |

minLen |

200 | Ensure post-trimming length is sufficient for reliable merging. |

| Expected Merge Rate | >90% | Large, consistent overlap (~180-200 bp). |

Experimental Protocol: Systematic Parameter Optimization for a New Dataset

This protocol details the steps to empirically determine optimal truncLen and maxEE for a given region and sequencing run.

A. Prerequisite: Quality Profile Inspection

- Load Libraries and Data: In R, load

dada2andShortRead. Set path to demultiplexed FASTQ files. - Generate Quality Profiles: Use

plotQualityProfile()on a subset of forward and reverse reads (e.g., first 1 million reads). - Visual Assessment: Identify the cycle number where median quality score drops below Q30 (or another relevant threshold). This provides the initial

truncLencandidates.

B. Iterative Trimming and Merging Test

- Define Parameter Grid: Create a matrix of

truncLencombinations based on quality profiles (e.g., F: 260, 270, 280; R: 220, 230, 240 for V1-V3). - Run Filtering and Merging Loop: Write a script that loops through each combination, running

filterAndTrim(),learnErrors(),dada(), andmergePairs(). - Collect Metrics: For each run, record: (i) Percentage of reads passing filter, (ii) Percentage of filtered reads successfully merged, (iii) Mean expected errors in merged reads.

- Optimal Choice: Plot results. The optimal combination is typically at the "knee" of the curve for merged read count versus merged read length/quality. Prioritize merge rate for V4, balance merge rate and length for V1-V3.

C. maxEE Sensitivity Analysis

- Fix

truncLen: Use the optimaltruncLenfrom Step B. - Vary

maxEE: Test a range of values (e.g., 1.0, 2.0, 3.0, 5.0). - Evaluate Impact: Track the percentage of reads retained and the resulting error rates from

learnErrors(). Select the highestmaxEEthat does not significantly increase the inferred error rate.

D. Final Validation

- Process Full Dataset: Run the full DADA2 pipeline with chosen parameters.

- Check Chimera Rate: Monitor the fraction of sequences removed by

removeBimeraDenovo. Abnormally high rates may indicate overly lenient filtering. - Biological Sanity Check: Verify that expected dominant taxa are present and that negative controls are clean.

Visual Workflow: Parameter Optimization Decision Pathway

Title: DADA2 Parameter Optimization Decision Pathway

The Scientist's Toolkit: Key Reagent Solutions & Computational Tools

Table 3: Essential Research Reagents and Materials for 16S Amplicon Workflow

| Item | Function & Rationale |

|---|---|

| Region-Specific Primer Pairs (e.g., 27F-534R for V1-V3; 515F-806R for V4) | To specifically amplify the target hypervariable region from genomic DNA. Choice dictates amplicon length and taxonomic resolution. |

| High-Fidelity DNA Polymerase (e.g., Phusion, Q5) | Minimizes PCR errors introduced during amplification, which is critical for downstream sequence variant analysis. |

| Quantitative DNA Standard (e.g., gBlocks, Synthetic Microbial Communities) | Used to validate primer performance, estimate bias, and benchmark bioinformatic pipeline accuracy. |

| Size-Selective Magnetic Beads (e.g., AMPure XP) | For post-PCR cleanup to remove primer dimers and select the correct amplicon size range, reducing noise. |

| Calibrated Mock Microbial Community DNA | Essential positive control to measure accuracy, precision, and bias of the entire wet-lab and DADA2 pipeline. |

| Nuclease-Free Water & Low-Binding Tubes | To prevent contamination and adsorption of low-concentration DNA samples, especially crucial for low-biomass studies. |

| DADA2 R Package (v1.28+) | Core software that implements the error model and ASV inference algorithm. Requires proper parameter input. |

| High-Performance Computing (HPC) Environment | DADA2 and related preprocessing steps (e.g., cutadapt) are computationally intensive and require adequate RAM and CPU. |

Handling Low-Depth Samples and Managing Overly Aggressive Filtering

Within the comprehensive analysis of the DADA2 pipeline for 16S rRNA amplicon data, a critical challenge is balancing data fidelity with inclusivity. The core thesis posits that optimal bioinformatic outcomes require adaptive, context-aware filtering rather than universal fixed thresholds. This note addresses two interconnected issues: the responsible handling of samples with low sequencing depth and the mitigation of errors introduced by excessively stringent filtering parameters, which can distort community diversity estimates and bias downstream analyses.

Quantitative Impact of Filtering on Sample Retention

Recent benchmarking studies (2023-2024) illustrate the trade-offs between read quality control and data retention. The following table summarizes key metrics from simulated and empirical datasets.

Table 1: Impact of Standard vs. Aggressive Filtering Parameters on Sample and Read Retention

| Filtering Regime | MaxEE (Fwd/Rev) | TruncLen (Fwd/Rev) | Avg. % Input Reads Retained | Avg. % Samples Retained (Post-Depth Filter) | Avg. ASV Richness Reduction | Notes |

|---|---|---|---|---|---|---|

| Standard (DADA2 Default) | (2,2) | (240,160) | 65-80% | 95-98% | 10-20% | Balanced for most studies. |

| Aggressive (High Purity) | (1,1) | (245,165) | 40-55% | 60-75%* | 30-50% | High risk of discarding low-biomass/ degraded samples. |

| Permissive (Low-Biomass Focus) | (5,5) | (200,150) | 85-95% | >99% | 5-15% | Increased chimera load; requires careful downstream inspection. |

| Adaptive (Context-Aware) | Sample-specific | Quality plot-based | 70-90% | 98-100% | 15-25% | Computationally intensive; optimal for mixed sample types. |

*Percentage drops significantly when a minimum read threshold (e.g., 1000 reads) is applied post-filtering.

Protocol for Evaluating and Handling Low-Depth Samples

Objective: To systematically identify, diagnose, and decide on the inclusion of low-depth samples without compromising overall dataset integrity.

Detailed Protocol:

Depth Calculation: After the DADA2 sequence table construction step (

seqtab), calculate total reads per sample.Diagnostic Plotting: Generate visualizations to contextualize depth.

Decision Workflow: Implement a tiered approach based on depth and sample metadata.

- Tier 1 (>10,000 reads): Full inclusion.

- Tier 2 (1,000 - 10,000 reads): Include but flag for sensitivity analysis. Consider merging technical replicates.

- Tier 3 (<1,000 reads): Apply the following checklist: a. Metadata Check: Is the low depth biologically plausible (e.g., control swab, low-biomass site)? b. Contamination Check: Compare to negative controls. Is the sample's community profile distinct? c. Technical Assessment: Check raw read quality for specific sample; potential extraction/PCR failure. d. Downstream Impact: Remove and re-run alpha/beta diversity analysis to determine if sample is an outlier driving patterns.

Inclusion Strategies:

- Rarefaction: If most samples are >10,000 reads and low-depth samples are >1,000 reads, rarefy to a common depth (e.g., 2,000 reads) for diversity metrics only, acknowledging the uncertainty.

- Separate Analysis: Report core findings on a filtered dataset (e.g., >5,000 reads) and provide a supplementary analysis including all samples with appropriate caveats.

Diagram: Low-Depth Sample Decision Workflow

Title: Decision Pathway for Low-Depth 16S rRNA Samples

Protocol for Diagnosing and Correcting Overly Aggressive Filtering

Objective: To identify signatures of excessive filtering and implement corrective parameters that preserve biological signal.

Detailed Protocol:

Symptoms Diagnosis: Identify these signs post-pipeline run:

- High Sample Loss: >25% of samples lost after

filterAndTrim(). - Abnormal Length Distribution: Truncated reads (

truncLen) are shorter than the primary quality plateau observed inplotQualityProfile(). - Low Merge Rate: <70% of reads successfully merged, indicating over-trimming.

- Dilution of Negative Controls: Negatives comprise >1% of total reads in the sequence table, suggesting real signal was filtered out.

- High Sample Loss: >25% of samples lost after

Corrective Re-analysis Workflow:

Iterative Parameter Testing: For critical studies, design a filtering grid:

Diagram: Correcting Overly Aggressive Filtering

Title: Workflow to Correct Over-Filtering in DADA2

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Robust 16S Amplicon Studies

| Item | Function/Application | Consideration for Low-Depth/Filtering Issues |

|---|---|---|