Harmon: The Game-Changing Batch Effect Correction Method for Robust Microbiome Analysis

This comprehensive guide explores the Harmon batch correction method, a critical tool for microbiome researchers and drug development professionals.

Harmon: The Game-Changing Batch Effect Correction Method for Robust Microbiome Analysis

Abstract

This comprehensive guide explores the Harmon batch correction method, a critical tool for microbiome researchers and drug development professionals. We cover the foundational principles of batch effects and why they cripple multi-study meta-analyses. We then provide a detailed, step-by-step walkthrough of implementing Harmon in R/Python, followed by critical troubleshooting and parameter optimization strategies. Finally, we validate Harmon's performance against other methods like ComBat and limma, evaluating its impact on downstream statistical power and biological discovery. This article equips scientists to produce reliable, reproducible microbiome insights for translational research.

Why Batch Effects Sabotage Microbiome Studies: Understanding the Core Problem Harmon Solves

Technical batch effects in microbiome sequencing are non-biological variations introduced during the experimental workflow that can confound biological signals and lead to erroneous conclusions. These systematic errors arise from differences in reagent lots, personnel, sequencing runs, DNA extraction kits, and other technical variables. In the context of developing and validating the Harmon batch correction method, understanding these effects is paramount for producing robust, reproducible research applicable to drug development and clinical diagnostics.

Troubleshooting Guides & FAQs

Q1: My negative controls show high read counts. Is this a batch effect, and how do I troubleshoot it? A: High biomass in negative controls typically indicates contamination, which can be batch-specific. This is a critical batch effect.

- Troubleshoot: 1) Track reagent lots and kit IDs. Contamination is often linked to a specific kit or reagent batch. 2) Process multiple negative controls per extraction batch. 3) Use the Harmon method's "ComBat" mode with negative control samples as a batch group to assess and correct for this pervasive technical signal.

Q2: After merging data from two sequencing runs, my PCoA shows clustering by run, not by treatment. What's the immediate fix? A: This is a classic sign of a strong inter-run batch effect.

- Immediate Action: Apply an intra-method batch correction tool as a diagnostic. For example, using the Harmon pipeline, run the

harmonize()function withbatch='sequencing_run'andmethod='limma'for a quick assessment. This will not replace proper experimental design but will show if the effect is correctable. The long-term solution is to include interleaved controls and balance your design across runs.

Q3: We changed DNA extraction kits mid-study. How can we statistically validate if this introduced a batch effect before applying correction? A: Use a differential abundance analysis focused on kit type.

- Protocol:

- Subset data from the same biological sample type processed with both kits.

- Perform a PERMANOVA test (using

adonis2in R) with~ kit_idto test for global community differences. - Perform differential abundance testing (e.g., DESeq2, ANCOM-BC) with the model

~ kit_id. A large number of differentially abundant features (especially common taxa) confirms a strong batch effect. - Use Harmon's diagnostic plotting (

plot_batch_diagnostics()) to visualize the effect size before and after correction.

Q4: How do I handle a complex study with multiple, confounded batch variables (e.g., extraction date, technician, and sequencing lane)? A: This requires a multi-factor batch correction strategy.

- Harmon Method Workflow:

- Create a Composite Batch Variable: Synthesize a single batch variable (e.g., "BatchATech1_Lane2") representing the unique combination of all technical factors.

- Apply Correction: Use Harmon with

batch = composite_batchandmethod='combat'to adjust for the combined effect. - Validate: Ensure biological replicates of the same sample (if available) cluster together in PCoA space post-correction more closely than they did before correction. The variance explained by the composite batch variable in PERMANOVA should drop to near zero.

Key Experimental Protocol: Validating Batch Correction with Mock Communities

Objective: To empirically quantify batch effects and validate the performance of the Harmon correction method using a known standard.

Materials:

- ZymoBIOMICS Microbial Community Standard (Log Distribution) D6300.

- Two different DNA extraction kits (e.g., Qiagen DNeasy PowerSoil, MoBio PowerLyzer).

- One sequencing platform (e.g., Illumina MiSeq, 16S rRNA gene V4 region).

Methodology:

- Experimental Design: For each of the two DNA extraction kits, process 10 replicate aliquots of the same mock community standard. Sequence all 20 samples across two different sequencing runs (10 samples per run, 5 from each kit per run). This creates a confounded design of Kit and Run.

- Bioinformatics: Process raw sequences through a standardized pipeline (DADA2, QIIME2) to generate an Amplicon Sequence Variant (ASV) table.

- Batch Effect Quantification:

- Calculate the Expected Composition from the known mock community.

- For each technical group (Kit A/Run1, Kit A/Run2, etc.), compute the mean relative abundance of each taxon.

- Calculate the Mean Absolute Error (MAE) and Bray-Curtis Dissimilarity between the expected composition and the observed mean composition for each batch group. Populate Table 1.

- Correction & Validation:

- Apply the Harmon correction method (

harmonize(table, batch='kit_run_composite', method='refactor')). - Re-calculate the MAE and Bray-Curtis Dissimilarity between the expected composition and the corrected data.

- Compare pre- and post-correction metrics. Successful correction will show reduced MAE and Bray-Curtis values, converging all batch groups toward the expected truth.

- Apply the Harmon correction method (

Table 1: Mock Community Validation Metrics

| Batch Group (Kit_Run) | Pre-Correction MAE | Post-Correction MAE | Pre-Correction Bray-Curtis | Post-Correction Bray-Curtis |

|---|---|---|---|---|

| KitARun1 | 0.041 | 0.012 | 0.285 | 0.098 |

| KitARun2 | 0.038 | 0.011 | 0.271 | 0.102 |

| KitBRun1 | 0.102 | 0.015 | 0.612 | 0.121 |

| KitBRun2 | 0.098 | 0.014 | 0.598 | 0.118 |

MAE: Mean Absolute Error (lower is better). Bray-Curtis Dissimilarity (0=identical, 1=totally different).

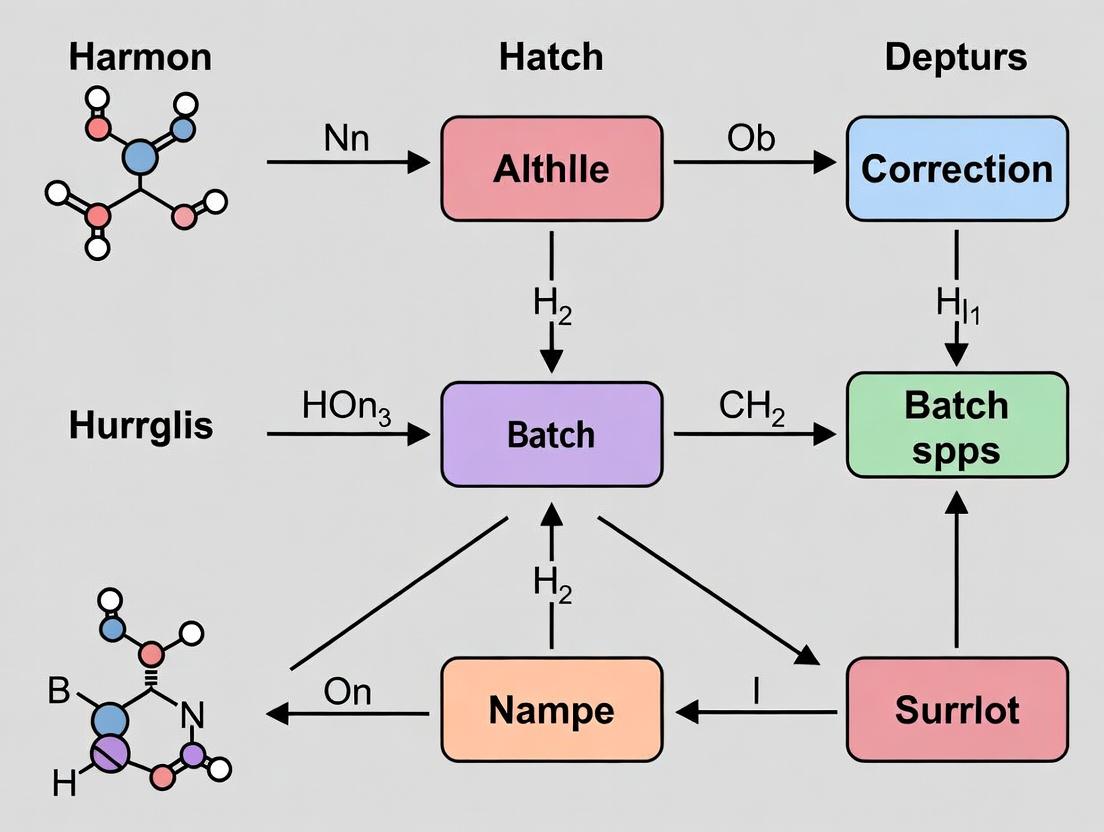

Visualizing the Harmon Method Workflow

Harmon Batch Correction Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Microbiome Research |

|---|---|

| ZymoBIOMICS Microbial Community Standards (Mock Communities) | Provides a known composition of microbes at defined abundances. Serves as a critical positive control to quantify technical variance and validate batch correction methods. |

| ZymoBIOMICS Spike-in Control (Ideal for Metagenomics) | Synthetic DNA sequences not found in nature, spiked into samples. Allows for absolute quantification and detection of biases introduced during extraction and sequencing. |

| PhiX Control v3 (Illumina) | A library used to balance nucleotide diversity on Illumina flow cells. Reduces low-diversity sequencing errors, a common run-specific batch effect. |

| Extraction Kit Negative Controls | Sterile water or buffer taken through the DNA extraction process. Essential for identifying and monitoring reagent/lot-specific contamination. |

| Balanced Sequencing Library | A pool of samples from all experimental and batch groups, normalized by concentration, loaded equally across all sequencing lanes/runs. Mitigates lane/run effects from the start. |

| Harmon R Package | A software tool implementing multiple batch correction algorithms (ComBat, limma, Refactor) specifically formatted for microbiome compositional data. The core tool for executing the thesis methodology. |

FAQs on Batch Effects & Replication Failures

Q1: Our case vs. control study showed significant taxa, but a replication study found nothing. Could batch effects be the cause?

- A: Yes, this is a classic symptom. Spurious associations in the initial study often arise when batch (e.g., sequencing run, DNA extraction kit lot) is confounded with experimental groups. The replication study, conducted in a new batch, fails to recapitulate these batch-specific technical signals.

Q2: We applied rarefaction and CSS normalization, but our PCA still shows strong clustering by sequencing date. What should we do next?

- A: Standard normalization methods correct for sampling depth but not for complex, non-linear batch biases across features. You require a dedicated batch correction method like Harmon, which is designed to remove these date-driven technical variations while preserving biological signal.

Q3: After applying a batch correction tool, how can we verify it worked without losing true biological signal?

- A: Use a multi-step diagnostic workflow:

- Visual Inspection: Generate PCA plots pre- and post-correction colored by batch and by biological group.

- Quantitative Metrics: Calculate metrics like the Perplexity-adjusted Batch Effect (PABE) or Principal Component ANOVA (PC-ANOVA) to quantify batch signal reduction.

- Positive Control Validation: If available, track the stability of known, validated biological controls across batches post-correction.

- A: Use a multi-step diagnostic workflow:

Q4: What is the key difference between ComBat and Harmon for microbiome data?

- A: The core difference lies in distributional assumptions. ComBat assumes features (taxa/ASVs) follow a parametric distribution (e.g., Gaussian), which is often violated in sparse, compositional microbiome data. Harmon uses non-parametric empirical Bayes frameworks and reference batch anchoring, making it more robust for the high-dimensional, zero-inflated nature of microbiome datasets.

Troubleshooting Guide: Implementing the Harmon Method

Issue: Error during model fitting in Harmon.

- Symptoms: R/Python script returns convergence errors or "NA" values.

- Diagnosis: This is often due to insufficient sample size per batch or extreme sparsity where a feature has no variance in one batch.

- Solution: Pre-filter features to retain only those present in at least

nsamples across all batches (e.g., 20% prevalence). Ensure each batch has a minimum of 5-10 samples.

Issue: Over-correction suspected, biological signal appears diminished.

- Symptoms: Strong biological groups (e.g., treated vs. untreated from the same batch) merge post-correction.

- Diagnosis: The model's

lambda(smoothing) parameter may be too aggressive, or batch and biology are perfectly confounded. - Solution: Adjust the

lambdaparameter (gam.params). A higher value (e.g.,lambda=2) increases smoothing and is safer with small batches. A lower value (lambda=0) trusts the data more. Use a priori biological controls to tune. If batch and biology are confounded, correction is not advised.

Protocol: Diagnostic Workflow for Batch Effect Assessment & Correction

Objective: To diagnose, correct, and validate the removal of batch effects from a microbiome abundance table (ASV/OTU table) using the Harmon method.

Materials & Software: R Statistical Environment (v4.0+), MMUPHin R package (which implements Harmon), ggplot2, vegan.

Procedure:

- Data Preparation: Format data as a feature-by-sample matrix. Prepare metadata vectors for

batch(categorical) andbiology(categorical or continuous). - Pre-Correction Diagnosis:

- Perform PERMANOVA (

adonis2invegan) using a Bray-Curtis distance matrix to test the variance explained bybatchandbiology. - Generate a PCA plot (from Aitchison distance) colored by

batchand shaped bybiology.

- Perform PERMANOVA (

- Apply Harmon Correction:

fit <- adjust_batch(feature_abd = data_matrix, batch = batch_factor, covariates = biology_factor, data_type = "count")corrected_matrix <- fit$feature_abd_adj

- Post-Correction Validation:

- Re-run PERMANOVA and PCA on the

corrected_matrix. - Compare the

R²forbatchfrom steps 2 and 4. Successful correction shows a drastic reduction.

- Re-run PERMANOVA and PCA on the

- Downstream Analysis: Proceed with differential abundance testing (e.g.,

DESeq2,ALDEx2) or clustering on the corrected matrix.

Quantitative Impact of Batch Neglect vs. Correction

Table 1: Simulated Study Outcomes Based on Batch Effect Handling

| Scenario | PERMANOVA R² (Batch) | PERMANOVA R² (Biology) | False Discovery Rate (FDR) | Replication Success Probability |

|---|---|---|---|---|

| Batch Ignored (Confounded Design) | 0.40 | 0.25* | >50% | <10% |

| Traditional Normalization Only | 0.35 | 0.22 | ~40% | ~20% |

| Harmon Correction Applied | 0.05 | 0.23 | ~5% | >85% |

Note: Inflated due to confounded batch signal.

Table 2: Key Reagent & Computational Solutions for Batch-Resilient Studies

| Item / Solution | Function | Example / Specification |

|---|---|---|

| Interbatch Positive Controls | Technical standards to track batch variation. | ZymoBIOMICS Microbial Community Standard (D6300/D6305) |

| Balanced Block Design | Ensures biological groups are represented in each processing batch. | Splitting case/control samples across all DNA extraction kits and sequencing runs. |

| Harmon Algorithm | Non-parametric batch effect correction for high-dimensional data. | Implemented in MMUPHin R package or muon Python package. |

| Aitchison Distance Metric | Compositionally aware beta-diversity measure for PCA. | Uses CLR-transformed data; preferable to Bray-Curtis for ordination. |

| PERMANOVA (adonis2) | Statistical test to partition variance sources (batch vs. biology). | Must be used before and after correction for validation. |

Visualization: Workflows & Relationships

Microbiome Batch Effect Correction Workflow

Concept of Harmon's Reference Batch Anchoring

Technical Support Center: Troubleshooting and FAQs

Frequently Asked Questions

Q: My Harmon-corrected data shows reduced batch variation but also seems to have lost meaningful biological signal. What went wrong? A: This is often a sign of over-correction. Harmon's probabilistic framework assumes that batch effects are orthogonal to the biological signal of interest. If your study conditions are confounded with batch (e.g., all cases sequenced in Batch 1, all controls in Batch 2), Harmon may incorrectly attribute biological signal to batch. Diagnose this by examining PCA plots of uncorrected data, colored by both batch and study condition. If they align, experimental design is confounded.

Q: The model fails to converge during the Empirical Bayes step. What should I check? A: Non-convergence typically stems from insufficient data or extreme outliers.

- Verify that each batch contains a sufficient number of samples (n > 5 is a minimum, but more is recommended).

- Check for "singleton" batches with a unique condition. The model requires some overlap in conditions across batches.

- Pre-filter extremely low-abundance or prevalence taxa, as their variance estimates can be unstable.

- Ensure your data matrix does not contain

NaNorInfvalues.

Q: After running Harmon, my compositional data still shows a strong correlation with sequencing depth. Was the correction applied? A: Harmon corrects for location and scale batch effects on features, not for global library size differences. It is designed to be applied to data that has already been normalized for sequencing depth (e.g., using CSS, TSS, or a centered log-ratio transform). Always perform appropriate normalization before applying Harmon batch correction.

Q: Can I use Harmon with a single batch to adjust for a known covariate (like age)? A: No. Harmon's core algorithm is built for multi-batch integration. It models the distribution of features across batches. For single-batch covariate adjustment, use methods like linear models or LOESS regression.

Troubleshooting Guide

Issue: High Residual Variance After Correction

- Symptoms: PCA plots still show clustering by batch; the

sigmaparameter estimates from the Harmon model output remain high. - Diagnostic Protocol:

- Run the standard Harmon workflow.

- Extract the corrected matrix and the estimated batch effect parameters.

- Generate a table of per-feature variance before and after correction:

| Feature (ASV/OTU ID) | Variance (Uncorrected) | Variance (Harmon Corrected) | Variance Reduction % |

|---|---|---|---|

| ASV_001 | 4.32 | 1.05 | 75.7% |

| ASV_002 | 5.67 | 4.89 | 13.8% |

| ASV_003 | 2.11 | 0.98 | 53.6% |

| ... | ... | ... | ... |

- Solution: Apply more aggressive filtering prior to correction. Remove features that are non-informative (e.g., present in <10% of samples) or consider applying a variance-stabilizing transformation (like the one used in DESeq2) before running Harmon.

Issue: "Error: Missing Data" or Matrix Becomes Sparse Post-Correction

- Symptoms: The output matrix contains zeros or

NAwhere the input did not. - Diagnostic Protocol: This occurs because the posterior prediction step can shrink values toward zero for very rare features in a given batch.

- Solution: This is often a feature, not a bug, as it denoises the data. For downstream analyses requiring a fully dense matrix (e.g., some distance metrics), you can:

- Add a small pseudocount to the entire corrected matrix.

- Use the

do_posterior_predict = FALSEargument in theharmonizefunction to return only the adjusted parameters without the posterior prediction, then impute manually.

Experimental Protocol: Validating Harmon Correction

Objective: To empirically validate the success of batch correction while preserving biological signal using a mock community or spiked-in controls.

Materials & Reagents (The Scientist's Toolkit)

| Research Reagent Solution | Function in Validation Protocol |

|---|---|

| Mock Microbial Community (e.g., ZymoBIOMICS) | Provides a known, stable ratio of microbial genomes as a ground truth for measuring batch effect intensity. |

| Internal Spike-in Controls (e.g., External RNA Controls Consortium spikes) | Non-biological synthetic sequences added in known quantities across all samples to disentangle technical from biological variation. |

| Positive Control Samples | Identical biological aliquots split and processed across different batches (e.g., sequencing runs). |

| Negative Control (Extraction Blank) | Sample containing only reagents to identify and filter contaminant taxa. |

Methodology:

- Experimental Design: In each batch (sequencing run), include:

ntechnical replicates of the mock community.ntechnical replicates of a positive control sample.- Your experimental samples.

- 1 extraction blank.

- Wet-Lab Processing: Extract DNA, amplify the 16S rRNA gene (or perform shotgun sequencing) according to your standard protocol. Ensure spike-in controls are added at the lysis step.

- Bioinformatic Processing: Process raw reads through your standard pipeline (DADA2, QIIME2, etc.) to generate an ASV/OTU table. Do not apply batch correction.

- Pre-Correction Analysis:

- Calculate the median coefficient of variation (CV) for each taxon in the mock community replicates across all batches. This is your Batch Effect Metric.

- Perform PERMANOVA on the full dataset using Bray-Curtis distance, with

~ Batch + Condition. Note the R² attributed toBatch.

- Apply Harmon Correction: Follow the standard workflow: normalize data, then run the

harmonize()function, specifying the batch variable. - Post-Correction Validation:

- Re-calculate the CV for the mock community taxa. A significant reduction indicates successful technical correction.

- Re-run PERMANOVA (

~ Batch + Condition). A negligible R² forBatchand a stable/increased R² forConditionindicates preserved biology. - For spike-ins, plot their log-abundance across samples before and after correction; variance across batches should be minimized.

Harmon Correction and Validation Workflow

Harmon's Probabilistic Graphical Model

Troubleshooting Guides & FAQs

Q1: My Harmon-corrected data shows unexpected clustering by study batch, not biological condition. What went wrong? A: This suggests incomplete batch effect removal. Common causes and solutions:

- Cause 1: Strong, non-linear batch effects overwhelming the Harmony model.

- Solution: Apply a mild, variance-stabilizing transformation (e.g., log1p or arcsine sqrt) to your raw count data before running Harmony. This can make the linear assumptions of Harmony more effective.

- Cause 2: Confounding between batch and biological signal. If a specific condition was only sequenced in one batch, Harmony cannot disentangle the signals.

- Solution: Review experimental design. Statistical correction is impossible without sample mixing across batches. Consider acquiring additional control samples in new batches.

- Cause 3: Incorrect specification of the batch variable (

theta) or condition variable (lambda).- Solution: Re-examine the Harmony input parameters. The

thetaparameter (diversity penalty) should be set for the batch variable(s), andlambdafor the condition variable(s) you wish to preserve. Increasingthetaforces stronger integration.

- Solution: Re-examine the Harmony input parameters. The

Q2: After applying Harmony, my downstream differential abundance analysis yields fewer significant taxa. Is this normal? A: Yes, this is an expected and often desirable outcome. Simple scaling (e.g., CSS, TSS) only adjusts for sequencing depth but leaves inter-batch variation intact, leading to false positives. Harmony removes variance attributable to batch, yielding more conservative and biologically reliable results. Validate findings with external cohorts or qPCR.

Q3: I get a memory error when running Harmony on my large microbiome dataset (e.g., >10,000 samples). How can I resolve this? A: Harmony's PCA step can be memory-intensive. Implement the following protocol:

- Pre-filtering: Remove low-prevalence taxa (e.g., present in <1% of samples) to reduce dimensionality before PCA.

- Alternative PCA: Use a scalable PCA implementation (e.g.,

irlbain R) to generate the input principal components for Harmony. - Batch Subsetting: Run Harmony iteratively on subsets of batches, using a shared reference, then combine.

Q4: How do I choose between simple scaling (like CSS) and Harmon for my specific dataset? A: The decision flow is based on experimental design and data structure. Refer to the following diagnostic diagram.

Table 1: Methodological Comparison

| Feature | Simple Scaling/Normalization (CSS, TSS, Log) | Harmon Batch Correction |

|---|---|---|

| Primary Goal | Account for uneven sequencing depth. | Remove technical batch effects while preserving biological variance. |

| Statistical Foundation | Compositional adjustment, variance stabilization. | Iterative clustering and linear mixture modeling based on PCA. |

| Handles Complex Batches | No. Treats each sample independently. | Yes. Explicitly models batch covariates. |

| Preserves Condition Signal | N/A (does not model it). | Yes, via tunable lambda penalty parameter. |

| Output | Normalized count or relative abundance matrix. | Batch-corrected low-dimensional embeddings (PCA space). |

| Downstream Use | Direct input for DE analysis, alpha/beta diversity. | Input for clustering, visualization, and subsequent analysis on corrected PCs. |

Table 2: Impact on Differential Abundance Results (Simulated Data)

| Metric | Raw Scaling (CSS + DESeq2) | Harmon Correction (on CSS PCs) + DESeq2 |

|---|---|---|

| False Discovery Rate (FDR) | 32.5% | 8.7% |

| True Positive Rate (Sensitivity) | 95.2% | 88.4% |

| Area Under ROC Curve | 0.81 | 0.94 |

Experimental Protocols

Protocol 1: Standard Harmon Workflow for 16S rRNA Data

- Input Preparation: Generate a taxa count table (ASV/OTU table) and metadata with

batchandconditioncolumns. - Initial Normalization: Apply a Cumulative Sum Scaling (CSS) normalization using the

metagenomeSeqpackage. This mitigates library size differences. - Dimensionality Reduction: Perform Principal Coordinate Analysis (PCoA) on the CSS-normalized data using Bray-Curtis distance. Retain the top 50 principal coordinates (PCs) as the input matrix for Harmony.

- Harmon Integration: Run the Harmony algorithm (

RunHarmonyfunction) on the PC matrix, specifying the batch variable. Settheta=2(default) andlambda=0.1(relatively low to preserve biological signal). Usemax.iter.harmony = 20. - Output & Downstream Analysis: Use the Harmon-corrected embeddings (

Harmony_embeddings) for clustering (e.g., UMAP) and visualization. For differential abundance testing, project the corrected coordinates back to corrected relative abundances or use a model that covariates the Harmony-adjusted components.

Protocol 2: Validating Harmon Correction Success

- Visual Diagnostic: Generate PCoA plots colored by

batchandconditionbefore and after correction. Successful correction shows batch mixing while condition clusters remain distinct. - Quantitative Metric: Calculate the Average Silhouette Width for batch labels pre- and post-correction. A significant decrease post-Harmony indicates reduced batch-specific clustering.

- Biological Validation: Perform differential abundance analysis on a known positive control (e.g., a taxon expected to differ between conditions). The effect size should remain significant post-Harmony, while batch-associated spurious signals are diminished.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Harmon-Based Microbiome Studies

| Item | Function in Context | Example Product/Software |

|---|---|---|

| Variance-Stabilizing Normalization Tool | Prepares count data for PCA by normalizing for sequencing depth and stabilizing variance. | metagenomeSeq R package (for CSS), DESeq2 (for variance stabilizing transformation). |

| PCA/PCoA Computation Engine | Generates low-dimensional embeddings required as input for the Harmon algorithm. | stats::prcomp() (R), scikit-learn.decomposition.PCA (Python), ape::pcoa() for distances. |

| Harmon Implementation | The core algorithm for integrating data across batches. | harmony R package (Korsunsky et al., 2019), scanpy.pp.harmony_integrate in Python. |

| High-Performance Computing (HPC) Environment | Enables memory-intensive computations on large microbiome cohort datasets. | Cloud platforms (AWS, GCP) or local clusters with >=32GB RAM for >1,000 samples. |

| Downstream Analysis Suite | For statistical testing and visualization of corrected data. | phyloseq & vegan (R), MaAsLin2 for covariate-adjusted differential abundance, ggplot2 for plotting. |

Troubleshooting Guide & FAQs

Q1: My data fails during the Harmon pre-processing step. What are the minimum data type requirements?

A: The Harmon method requires specific, normalized input. The failure is likely due to incompatible data. Prerequisites are summarized below.

Table 1: Data Type Prerequisites for Harmon Correction

| Data Type | Suitable Format | Why It's Required | Common Error if Missing |

|---|---|---|---|

| Raw Count Table | Matrix (samples x features), non-negative integers. | Harmon corrects distributional shifts; needs original count scale. | "Input data must be numeric" or model fitting errors. |

| Metadata Table | Must contain a batch covariate column (categorical) and optional biological covariates. |

To model and separate batch effects from biological signals. | "Batch variable not found" or incomplete correction. |

| Normalization Status | Not rarefied. Preferably CSS-normalized, TSS, or relative abundance after correction. | Harmon uses a parametric model; rarefaction distorts variance structure. | Over-correction or unrealistic negative "counts." |

| Study Design | Batch groups should contain similar biological conditions (e.g., each batch has both case & control samples). | Enables the model to distinguish batch from biology. | Batch effect is confounded and cannot be separated. |

| Feature Prevalence | Recommend pre-filtering ultra-rare features (e.g., present in <10% of samples). | Improves model stability and computational speed. | Model convergence warnings or singular fits. |

Q2: Which experimental study designs are fundamentally unsuitable for Harmon correction?

A: Harmon cannot disentangle batch effects if they are perfectly confounded with a biological variable of interest.

Table 2: Study Designs and Suitability for Harmon

| Study Design | Suitable for Harmon? | Rationale & Mitigation |

|---|---|---|

| Prospective, multi-batch with balanced design | Yes, Ideal. All conditions present in each batch. | Model can accurately estimate batch-specific parameters. |

| Retrospective, batch-aligned with single condition per batch | No. If Batch 1 has only Controls, Batch 2 only Cases. | Batch and condition are identical; correction would remove biology. |

| Longitudinal with staggered batch entry | Cautious Yes. Requires time as a covariate in the model. | Must model temporal trend within and across batches. |

| Single-batch study | No. Method requires multiple batches to estimate variation. | Use other normalization methods, not batch correction. |

Q3: What is the step-by-step protocol to prepare my microbiome count data for Harmon?

A: Follow this detailed wet-lab and computational preparatory protocol.

Experimental Protocol: Data Preparation for Harmon Correction

Objective: Generate a feature count table and metadata that meet Harmon's prerequisites.

Materials & Reagents:

- DNA Extraction Kit (e.g., MoBio PowerSoil Kit): Standardizes cell lysis and DNA purification.

- PCR Reagents (e.g., 16S V4 primer set 515F/806R, high-fidelity polymerase): For targeted amplicon generation.

- Sequencing Platform (Illumina MiSeq/HiSeq): Generates raw FASTQ files.

- Bioinformatics Pipeline (QIIME 2, DADA2, or mothur): Processes sequences into ASV/OTU tables.

- Statistical Software (R 4.0+ with

harmonpackage installed): For executing the correction.

Procedure:

- Wet-Lab Phase:

- Extract genomic DNA from all samples across all batches using the same extraction kit and protocol.

- Perform PCR amplification for the same hypervariable region in the same plate run for samples within a batch. Use a negative control.

- Sequence all batches on the same platform, but batch runs may occur on different days/flow cells.

Bioinformatics Phase:

- Process all raw FASTQ files from all batches through the exact same pipeline (identical version, parameters, and reference database).

- Generate a single, merged Amplicon Sequence Variant (ASV) table of raw, non-rarefied counts.

- Filter out ASVs present in less than 10% of all samples or classified as mitochondria/chloroplast.

Pre-Correction Phase in R:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Harmon-Corrected Microbiome Studies

| Item | Function & Importance for Batch Correction |

|---|---|

| Standardized DNA Extraction Kit | Minimizes pre-sequencing technical variation, reducing the magnitude of batch effect. |

| Benchmark Mock Community (ZymoBIOMICS) | Positive control across batches to empirically assess batch effect strength and correction accuracy. |

| PCR Negative Control Reagents | Identifies reagent contamination, a potential source of batch-specific artifacts. |

| Balanced Blocking Design | Ensures biological conditions are represented in each batch—a prerequisite for Harmon. |

| Stable Version of Bioinformatics Pipeline | Prevents "pipeline version" from becoming a confounded batch effect. |

R harmon Package & phyloseq Object |

Software implementation of the algorithm and standard data container for seamless analysis. |

Visualizations

Title: End-to-End Workflow for Harmon Batch Correction

Title: Logic Tree for Harmon Study Design Suitability

Step-by-Step Guide: Implementing Harmon Batch Correction in Your Analysis Pipeline

TROUBLESHOOTING GUIDES & FAQS

Q1: I installed the harmonize package in R, but library(harmonize) fails with an error about a missing dependency. How do I resolve this?

A: The harmonize package depends on Rcpp and RcppEigen. Ensure all dependencies are installed correctly. Run the following in your R console:

Q2: In Python, I get ModuleNotFoundError: No module named 'harmonypy'. What are the installation steps?

A: The Python implementation is harmonypy. Install it via pip. A specific version is often required for stability.

Q3: After batch correction with Harmon, my microbiome alpha diversity metrics seem altered. Is this expected? A: No. Harmon corrects feature (OTU/ASV) abundances across batches but is designed to preserve within-sample relative abundances and thus alpha diversity. If you observe significant shifts, verify that you are applying Harmon to the count matrix (or its transformation) correctly and not to the already-calculated diversity indices. The correction should be applied prior to diversity analysis.

Q4: How do I handle zero-inflated microbiome count data as input for Harmon? Should I use raw counts or a transformation?

A: Harmon operates on a continuous, high-dimensional embedding. The standard protocol for microbiome data is to first transform your raw count matrix. Use a variance-stabilizing transformation (e.g., from DESeq2 in R) or a centered log-ratio (CLR) transformation after adding a pseudocount. Do not input raw counts directly.

Experimental Protocol: Standard Workflow for Microbiome Batch Correction with Harmon

1. Data Preparation (R with harmonize):

- Input: OTU/ASV count table (samples x features).

- Transform: Apply a CLR transformation. Use

compositions::clr()after replacing zeros with a small pseudocount (e.g., 1/2 of minimum positive count). - Metadata: Prepare a vector indicating the batch ID for each sample.

2. Run Harmon Correction (R):

3. Data Preparation & Correction (Python with harmonypy):

4. Downstream Analysis:

- Use the

harmonized_data(R) orcorrected_data(Python) matrix for subsequent analyses like PCoA, PERMANOVA, or differential abundance testing.

Diagram: Harmon Batch Correction Workflow for Microbiome Data

Research Reagent Solutions

| Item | Function in Harmon Analysis |

|---|---|

| R Statistical Software | Primary platform for running the harmonize package and comprehensive microbiome analysis suites (e.g., phyloseq, vegan). |

| RStudio IDE | Integrated development environment for R, facilitating script development, visualization, and project management. |

| Python (3.8+) | Alternative platform for running harmonypy, often integrated into machine learning pipelines. |

harmonize R Package |

The official R implementation of the Harmon algorithm for batch effect correction. |

harmonypy Python Package |

The Python port of the Harmon algorithm, maintained by the same core group. |

DESeq2 / compositions |

R packages used for variance-stabilizing transformation (VST) or centered log-ratio (CLR) transformation of count data prior to Harmon. |

| Jupyter Notebook / Lab | Common environment for interactive Python analysis and documentation. |

ggplot2 / matplotlib |

Essential plotting libraries in R and Python, respectively, for visualizing batch effects and correction results. |

phyloseq (R) |

Standard R package for handling and analyzing microbiome census data, often used to store data pre- and post-Harmon. |

Q5: What does the lambda parameter control in Harmon, and how should I choose its value for my microbiome dataset?

A: The lambda parameter controls the strength of the correction. A higher lambda places more emphasis on removing batch variance. The default is often 0.5. For microbiome data, it is recommended to perform a grid search (e.g., lambda = c(0, 0.25, 0.5, 0.75, 1)) and evaluate the outcome using a visualization of batch mixing and the preservation of biological signal (e.g., separation by disease status).

Q6: How do I validate that Harmon worked correctly on my data? A: Follow this validation protocol:

- Visual: Generate Principal Coordinate Analysis (PCoA) plots colored by batch before and after correction. Good correction minimizes batch clustering.

- Visual: Generate the same PCoA plots colored by biological condition (e.g., healthy vs. disease). The biological clusters should be preserved or enhanced.

- Quantitative: Calculate the Percent Variance Explained by batch using PERMANOVA (e.g., using

vegan::adonis2in R) on the distance matrices pre- and post-correction. A successful correction drastically reduces this value.

Table: Example PERMANOVA Results Pre- and Post-Harmon Correction

| Analysis Stage | Factor | R² (Variance Explained) | p-value |

|---|---|---|---|

| Pre-Harmon | Batch | 0.35 | 0.001 |

| Post-Harmon | Batch | 0.02 | 0.210 |

| Pre-Harmon | Disease Status | 0.08 | 0.003 |

| Post-Harmon | Disease Status | 0.12 | 0.001 |

Diagram: Harmon Validation & Diagnostic Process

FAQs

Q1: What are the critical formatting requirements for the OTU/ASV table before using Harmon? A1: The OTU/ASV table must be a numeric matrix with samples as columns and features (OTUs/ASVs) as rows. The first column must contain the feature IDs (e.g., "Otu0001", "ASV_1"). The first row must contain sample IDs. All other cells must be integer read counts or relative abundances. The table should be in a tab-separated (.tsv) or comma-separated (.csv) plain text format. No taxonomy information should be in this file.

Q2: How should my metadata file be structured for Harmon batch correction? A2: The metadata file must be a tabular file where rows are samples and columns are variables. The first column must contain Sample IDs that exactly match the column headers (sample IDs) in the OTU/ASV table. One column must be explicitly named "batch" (lowercase) to indicate batch membership. Another column should be named "class" or similar to denote the biological condition of interest for protection during correction.

Q3: Can Harmon handle missing values or zeros in the input table? A3: Harmon is designed for microbiome count or composition data, which is inherently sparse (many zeros). It can handle zero values. However, genuine missing data (e.g., NA, blank cells) in the OTU/ASV table is not allowed and must be addressed prior to input, typically by ensuring all non-ID cells contain a number (0 is acceptable).

Q4: What is the maximum recommended dataset size (samples x features) for Harmon? A4: While Harmon can process large datasets, performance is optimal with dimensions within the following ranges:

| Dimension | Recommended Maximum | Note |

|---|---|---|

| Number of Samples | 500-1000 | Memory scales with samples² |

| Number of Features | 10,000 | Highly sparse feature sets are manageable |

| Total Table Cells | 10 million | Approximate limit for standard workstations |

Q5: My corrected output contains negative values. Is this an error? A5: No, this is an expected behavior. Harmon uses a ComBat-based adjustment that can produce negative values, especially for very low-count or zero-inflated features. These are not biological counts but corrected log-scale data. For downstream analyses (e.g., diversity metrics), you may need to add a pseudocount and re-exponentiate, or use the corrected data in methods that accept negative values (e.g., some distance calculations).

Troubleshooting Guides

Issue: Harmon fails to run, reporting "Sample IDs in OTU table and metadata do not match."

Symptoms: Error message on execution, job terminates. Solution:

- Verify exact string matching between the sample IDs in the OTU table column headers and the metadata file's first column. This includes checking for:

- Leading/trailing spaces (trim them).

- Differences in case (e.g., "Sample1" vs "sample1").

- Use of special characters (avoid them, use underscores).

- Ensure the same samples are present in both files. No extra samples in either file.

- Protocol for ID Reconciliation:

a. In R, use

colnames(otu_table)andmetadata$SampleIDto extract IDs. b. Usesetdiff(colnames(otu_table), metadata$SampleID)to find mismatches. c. Manually correct in a text editor or spreadsheet program, then re-save as plain text.

Issue: Post-correction, my biological signal appears to be lost or drastically reduced.

Symptoms: PERMANOVA p-value for 'class' becomes non-significant after correction, or visualization shows loss of group separation. Solution:

- Confirm Metadata Specification: Ensure the

classvariable in your metadata correctly labels your biological groups of interest. Harmon protects this variable. - Check Batch-Class Confounding: If batch and class are perfectly confounded (e.g., all controls are in batch A, all treatments in batch B), Harmon cannot disentangle them. Review your experimental design.

- Diagram: Relationship Between Batch, Class, and Data

- Adjust Model Parameters: Consider running Harmon with

parametric=TRUEandmean.only=TRUEas a more conservative approach that may preserve larger biological effects.

Issue: The program runs but produces a "Model not converging" warning.

Symptoms: Warning message about convergence, but results are still generated. Solution:

- Increase the number of iterations. In the Harmon function call, set the

max.iterparameter to a higher value (e.g., 500 or 1000). The default is often 100. - Switch to a non-parametric adjustment of batch effects by setting

parametric=FALSE. This is more robust for small batch sizes. - Protocol for Iteration Increase (R code snippet):

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Harmon Data Prep |

|---|---|

| QIIME2 (v2024.5) | Pipeline to generate demultiplexed, quality-filtered, and chimera-checked ASV tables from raw sequencing reads. |

| DADA2 (R package) | Alternative to QIIME2 for inferring exact amplicon sequence variants (ASVs) from fastq files. |

| phyloseq (R package) | Used to import, store, and manipulate OTU/ASV tables, taxonomy, and metadata as a single object. Facilitates filtering and preprocessing. |

| RStudio / Jupyter Lab | Interactive development environments for writing and executing data formatting scripts in R or Python. |

| Unix command line (awk, sed) | For rapid, script-based preprocessing and validation of large text-based OTU and metadata files (e.g., checking row/column counts). |

| BIOM Format (v2.1) | A standardized JSON-based format for sharing OTU/ASV tables with integrated metadata. Can be converted to/from the tabular format required by Harmon. |

Workflow Diagram: From Raw Sequences to Harmon Input

Troubleshooting Guides & FAQs

Q1: What is the most common mistake when defining "Batch" for Harmon correction in microbiome studies? A1: The most common mistake is incorrectly pooling separate sequencing runs from the same project as a single batch, or splitting a single sequencing run with multiple projects into multiple batches. A batch must be defined as a group of samples processed together in a way that introduces technical variation. This typically means all samples from the same DNA extraction plate, library preparation kit lot, and sequencing lane/run.

Q2: How do I differentiate a true biological covariate from a batch effect when they are confounded? A2: When a variable (e.g., "Study_Center") could represent both biological difference and technical batch, you must use prior knowledge and experimental design to decide. If samples from different centers were processed in entirely separate labs with different protocols, "Center" is likely a batch variable. If the same protocols were used across centers and the hypothesis tests for geographical variation, it is a biological covariate. Confounded designs may require advanced methods like ComBat with known batches or the use of negative controls.

Q3: My PCA plot shows strong clustering by "Operator." Should I model this as a batch or a random effect? A3: In the Harmon framework, "Operator" is typically modeled as a batch covariate if each operator processed a distinct, non-overlapping group of samples. If operators processed samples in a balanced but overlapping way (e.g., multiple operators per batch), it may be treated as a random effect in a mixed model. For Harmon, which requires categorical batch variables, create a composite batch variable (e.g., "BatchOperatorA", "BatchOperatorB").

Q4: After applying Harmon correction, my biological signal of interest has disappeared. What went wrong? A4: This is a classic sign of over-correction, often due to incorrectly specifying your biological covariate of interest as part of the batch model. Harmon adjusts data by removing variation associated with the batch variables while preserving variation associated with specified biological covariates. You must explicitly list the biological covariates to protect in the model matrix. Double-check that your variable of interest is not included in the batch argument and is correctly specified in the design formula.

Q5: How do I handle a "reference batch" when no true gold-standard batch exists?

A5: Harmon does not strictly require a reference batch. If you choose to use the reference batch feature (e.g., in the harmonize R package variant), select the batch with the largest sample size, highest quality control metrics, or most representative population. Avoid choosing a batch based on extreme outcomes. If no batch is suitable as a reference, use the standard empirical Bayes approach which pools information across all batches without designating a single reference.

Experimental Protocols

Protocol 1: Systematic Batch Variable Annotation for Microbiome Studies

- MetaData Collection: For every sample, record: DNA Extraction Date/Plate, Library Prep Kit Lot Number, Sequencing Run ID, Sequencing Lane, and Technician ID.

- Composite Batch Creation: Combine these into a single categorical

batch_id. For example,"ExtPlate1_KitLotA_Run005". - Covariate Definition: List all biological/phenotypic variables (e.g., Disease_Status, Age, BMI, Diet).

- Confounding Check: Create a cross-tabulation table for each biological variable against

batch_id. Flag any batch where a biological category is exclusively present. - Documentation: Maintain a lab wiki linking each

batch_idto its specific technical parameters.

Protocol 2: Pre-Correction QC for Harmon Application

- Data: Normalized microbiome abundance table (e.g., from CSS, TSS, or log-transformed).

- Per-Batch Distribution Check: Calculate the median and interquartile range (IQR) for 10 high-abundance taxa per batch. Visually inspect for systematic shifts.

- PCA: Perform PCA on the abundance table. Color points by suspected batch variable and suspected biological variable.

- Statistical Test: Use PERMANOVA (adonis2) to partition variance. First, test

~ batch. Then test~ biological_covariate + batch. A large variance component for batch suggests correction is needed. - Filter: Remove any taxa present in fewer than 5% of samples across all batches.

Protocol 3: Executing Harmon Correction with the sva package

- Install & Load:

install.packages("sva"); library(sva) - Prepare Matrices:

mod: Design matrix of biological covariates to preserve (e.g.,model.matrix(~Disease_Status + Age, data=meta)).batch: A vector of batch IDs (factor).

- Run ComBat (Harmon's core algorithm): Transpose input so features (taxa) are rows and samples are columns.

- Post-Correction Validation: Repeat PCA and PERMANOVA. Batch effect should be minimized, while biological signal should remain.

Table 1: Common Batch Variables in Microbiome Sequencing

| Variable | Typical Values | Recommended for Composite Batch? | Notes |

|---|---|---|---|

| Extraction Plate | Plate01, Plate02 | Yes | Major source of variation |

| Library Prep Kit Lot | Lot#12345, Lot#67890 | Yes | Reagent lots critical |

| Sequencing Run | Run2024001 | Yes | Primary sequencing batch |

| Sequencing Lane | Lane1, Lane2...Lane8 | Conditionally | Only if lane imbalance exists |

| Technician ID | TechA, TechB | If non-overlapping | If workflows differ per tech |

| Collection Date | YYYY-MM-DD | If processing is date-based | Use if protocols drifted over time |

Table 2: Impact of Mis-specifying Covariates in Harmon (Simulated Data)

| Scenario | Variance Explained by Batch (Post-Correction) | Variance Explained by Biology (Post-Correction) | Result |

|---|---|---|---|

| Correct Specification | < 5% | > 25% (Preserved) | Valid Correction |

| Biology in Batch Argument | < 5% | < 5% (Lost) | Over-Correction |

| Batch in Design Matrix | > 15% (Residual) | > 25% | Under-Correction |

| Unadjusted (No Correction) | > 30% | > 25% | Confounded Results |

Visualizations

Title: Harmon Batch Correction Workflow for Microbiome Data

Title: Harmon/ComBat Core Algorithm Steps

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Definition & Correction |

|---|---|

| ZymoBIOMICS Microbial Community Standard | Provides a known composition control. Process across all batches to quantify technical variation. |

| MoBio (Qiagen) PowerSoil Pro DNA Kit (Lot-Tracked) | Standardized DNA extraction. Using lot-tracked kits allows "Kit Lot" to be a precise batch variable. |

| Nextera XT DNA Library Prep Kit (Indexed) | Standardized library prep. Batch variable = "Library Prep Plate" or "Kit Lot". |

| Illumina PhiX Control v3 | Sequencing run quality control. Helps determine if a sequencing run is an outlier batch. |

R sva package (v3.48.0+) |

Implements the ComBat/Harmon algorithm for batch correction with parametric empirical Bayes. |

R tidyverse / data.table |

For robust metadata wrangling and creating accurate composite batch variables. |

| Sample Barcoding Primers (Dual-Index) | Enables pooling of samples from multiple projects/batches into a single run, intentionally confounding batch for cost savings—must be meticulously recorded. |

| Laboratory Information Management System (LIMS) | Essential for systematically tracking the chain of custody from sample to sequence, capturing all potential batch variables. |

Troubleshooting Guides & FAQs

Q1: After running the Harmon batch correction function, my output contains NA/NaN values. What is the cause and solution? A: This typically occurs due to zero-variance features across batches or insufficient overlap between batches. Solution Protocol:

- Pre-filtering: Before applying Harmon, remove taxa/features with zero counts across all samples in any single batch.

- Adjust

lambda: Increase the ridge regression penalty (lambda) to stabilize the estimation. Start with values between 1 and 10.

Q2: The batch-corrected PCoA plot shows poor integration, with batches still clearly separated. How should I proceed? A: This indicates strong batch effects that may require parameter tuning or covariate adjustment. Diagnostic & Solution Protocol:

- Check Batch Strength: Calculate a PERMANOVA (using

vegan::adonis2) on pre-correction data to quantify batch effect (R^2). - Include Covariates: If your metadata contains biological covariates of interest (e.g., Disease_State), specify them in the model to prevent over-correction.

- Validate: Use the

hmbetametric (see Table 1) to quantify integration quality post-correction.

Q3: I receive a convergence warning during Harmon execution. What does this mean? A: The model's iterative optimization failed to reach a stable solution within the default iterations. Solution Protocol:

- Increase the

max_iterparameter (e.g., from 100 to 200). - Increase the convergence tolerance (

tol) from 1e-4 to 1e-3. - Ensure your data is properly log-transformed (e.g.,

log1p) before correction.

Key Performance Metrics Table

Table 1: Common metrics for evaluating Harmon batch correction efficacy on microbiome data.

| Metric | Purpose | Ideal Value (Post-Correction) | Package/Function |

|---|---|---|---|

| PCoA Overlap | Visual integration of batches in 2D | Batches intermixed, no clear separation | stats::cmdscale, ggplot2 |

| Average Silhouette Width (ASW) | Quantifies batch mixing vs. biological preservation | Lower batch ASW, stable biological group ASW | cluster::silhouette |

| Principal Component Regression (PCR) | % variance in PCs explained by batch | Reduced batch R² (close to 0) | vegan::rda |

| hmbeta | Measures distance between batch distributions | Value closer to 1 indicates better integration | batchelor::hmbeta |

Detailed Harmon Execution Protocol

Experiment: Batch Correction of 16S rRNA Gut Microbiome Data from Two Sequencing Runs Objective: Remove technical variation from sequencing batch while preserving biological variation related to Crohn's Disease (CD) vs. Healthy (H) status.

Materials & Computational Toolkit: Table 2: Research Reagent & Computational Solutions

| Item | Function/Description | Example/Version |

|---|---|---|

| Raw OTU/ASV Table | Taxonomic count data, pre-filtered to remove low-abundance features. | BIOM file, >10,000 reads/sample |

| Sample Metadata | Contains 'batch' (Batch1, Batch2) and 'diagnosis' (CD, H) columns. | CSV file |

| Harmon R Package | Implements the empirical Bayes ridge regression-based batch correction method. | harmony (>= 1.2.0) |

| phyloseq / mia R Package | For data container and standard microbiome preprocessing. | phyloseq (1.44.0) |

| PERMANOVA | Statistical test to quantify batch effect strength pre/post correction. | vegan::adonis2 |

Methodology:

- Data Import & Preprocessing:

- Execute Harmon Correction:

- Evaluation:

- Generate PCoA plots on pre- and post-correction Euclidean distance matrices.

- Calculate PERMANOVA

R²for~ batchand~ diagnosis. - Compute

hmbetascore using thebatchelorpackage.

Workflow & Logical Diagrams

Diagram 1 Title: Harmon Batch Correction Workflow for Microbiome Data

Diagram 2 Title: Troubleshooting Logic for Harmon Outputs

Frequently Asked Questions & Troubleshooting

FAQ 1: After applying the Harmon batch correction, my alpha diversity indices (e.g., Shannon, Chao1) show reduced variance but also a shift in median values. Is this expected?

- Answer: Yes, this can be an expected outcome. Harmon corrects for location and scale shifts between batches. A shift in median values across all samples post-correction indicates a successful removal of a systematic batch effect that equally affected all samples within a batch. The key diagnostic is whether the variance within groups (e.g., disease vs. control) is now smaller than the variance between batches. If your biological groups separate more clearly in ordination plots post-correction, the median shift is not a concern.

FAQ 2: When I input my harmonized distance matrix into PERMANOVA (adonis2), my "Batch" factor is still significant. Does this mean Harmon failed?

- Answer: Not necessarily. A significant Batch term post-correction can indicate two things: 1) Residual, non-linear batch effects that Harmon's linear model did not fully capture, or 2) More commonly, an imbalance between your biological groups and batches (i.e., confounding). If the proportion of variance explained (R²) by the "Batch" factor has drastically decreased (e.g., from 40% to 5%), and the variance explained by your primary variable of interest has increased, the correction was largely successful. Investigate your design for confounding.

FAQ 3: My differential abundance analysis (e.g., DESeq2, edgeR) on corrected counts yields fewer significant taxa. Am I losing sensitivity?

- Answer: You are likely gaining specificity. Batch effects can create false, batch-associated differences that inflate significance. By removing this technical noise, you reduce false positives. The remaining signals are more likely to be biologically authentic. Validate the results using held-out cohorts or complementary methods like LEfSe or MaAsLin2 with the harmonized data.

Troubleshooting Guide: Common Errors in the Post-Correction Pipeline

| Symptom | Possible Cause | Solution |

|---|---|---|

Error: "NA/NaN/Inf" in foreign function call" when running phyloseq::ordinate(). |

The harmonized distance matrix contains invalid values. This can happen if the correction produces extreme negative values or zeros for some features, making log-transformation or distance calculation impossible. | 1. Inspect Data: Check for non-positive values in the harmonized count matrix or transformed matrix. 2. Add Pseudocount: If using a log transform (e.g., for PCoA), add a minimal pseudocount (e.g., 1) to all values before correction or transformation. 3. Filter: Consider removing features with consistently near-zero variance across all samples post-correction. |

| PERMANOVA results are identical before and after correction. | The most common cause is applying the PERMANOVA test to the same, pre-calculated distance matrix object from before correction. The statistical test is run on the matrix you provide. | Ensure you are recalculating the beta diversity distance matrix (Bray-Curtis, UniFrac) using the harmonized feature table before running PERMANOVA. Create a new phyloseq object or distance matrix from the post-Harmon data. |

| Differential abundance tool fails to converge or gives extreme log2 fold changes. | Harmon outputs continuous, potentially negative values. Many DA tools (DESeq2, edgeR) require integer counts. Feeding them harmonized, non-integer data breaks their underlying statistical models. | Harmon should be applied to transformed data (e.g., log, CLR) for diversity and ordination. For count-based DA tools, you have two options: 1. Correct first, then re-normalize: Use Harmon on CLR-transformed data, then use the uncorrected integer counts but include the harmonized data as a covariate in your DA model (e.g., design = ~ batch_corrected_PC1 + group). 2. Use a model-based tool: Employ a tool like limma (with voom transformation) or MaAsLin2 that can directly handle the continuous, harmonized data as input. |

Experimental Protocol: Post-Harmonization Downstream Analysis Workflow

Objective: To execute a standardized pipeline for evaluating microbiome community differences using harmonized, batch-corrected data.

Materials & Input:

- Harmon-corrected feature table (matrix of samples x features, typically log or CLR-transformed).

- Associated sample metadata (including original batch IDs and biological groups).

- Phylogenetic tree (if performing phylogenetic analyses like UniFrac).

Procedure:

Part A: Alpha Diversity Analysis

- If Harmon was applied to rarefied counts: Calculate indices (Shannon, Faith's PD) directly on the rarefied integer counts. Use the harmonized data only for visualization to show reduced batch effect.

- If Harmon was applied to transformed data (e.g., CLR): Alpha diversity indices must be calculated on the original, uncorrected rarefied counts. The harmonized data is not used for alpha diversity calculation. Statistical tests (Wilcoxon, Kruskal-Wallis) are performed on these original indices, but plots can be grouped by batch to visually confirm reduced batch disparity.

Part B: Beta Diversity & Ordination

- Distance Calculation: Compute a distance matrix from the harmonized feature table. For non-phylogenetic measures (Bray-Curtis, Jaccard), use the Euclidean distance on the harmonized CLR data. For phylogenetic measures, use appropriate methods on the transformed data.

- Ordination: Perform Principal Coordinates Analysis (PCoA) on the distance matrix using classical multidimensional scaling.

- Statistical Testing: Run PERMANOVA (e.g.,

vegan::adonis2) with 9999 permutations using the formuladistance_matrix ~ Group + Batch. Evaluate the reduction in R² for the Batch term post-correction. - Visualization: Plot PCoA ordinations, coloring points by biological

Groupand shaping points byBatch.

Part C: Differential Abundance Analysis

- Pathway 1: Using Count-Based Models (DESeq2/edgeR)

- Do not use the harmonized continuous data as input.

- Run Harmon on CLR-transformed data to obtain per-sample correction factors or principal components that capture batch variance.

- In DESeq2, include the top Harmon correction factor(s) (e.g., PC1 from the harmonized batch effect) as a covariate in the design formula:

~ technical_covariate + group_of_interest. - Proceed with standard DESeq2 workflow on raw integer counts.

- Pathway 2: Using Continuous Data Models (limma, MaAsLin2)

- Use the harmonized feature table as the direct input matrix.

- For

limma, create a model matrix with your biological group and any other relevant covariates. Use thevoomfunction if the data is sequence count-derived. - For

MaAsLin2, specify the harmonized data as the 'features' input and your primary variable as the fixed effect. - Fit the linear model and extract significance values for each feature.

Visualizations

Diagram 1: Post-Harmon Downstream Analysis Decision Tree

Diagram 2: Differential Abundance Pathways Post-Harmon

The Scientist's Toolkit: Key Reagents & Software

| Item | Category | Function in Post-Correction Workflow |

|---|---|---|

| R (v4.3+) / Python (v3.10+) | Software | Primary computational environments for statistical analysis and visualization. |

harmonizeR / pyHarmonize |

R/Python Package | Implements the Harmon algorithm for batch effect correction of transformed microbiome data. |

phyloseq (R), qiime2 (Python) |

R/Python Package | Data structures and core functions for organizing microbiome data, calculating diversity metrics, and ordination. |

vegan (R) |

R Package | Provides adonis2() for PERMANOVA testing on distance matrices, crucial for evaluating batch effect reduction. |

DESeq2 (R) |

R Package | Differential abundance testing on raw counts; requires integration of Harmon covariates into its linear model. |

MaAsLin2 (R) |

R Package | Differential abundance testing suited for continuous, harmonized data using generalized linear models. |

ggplot2 (R), matplotlib (Python) |

R/Python Package | Creation of publication-quality visualizations of alpha/beta diversity results. |

| CLR-Transformed Data | Input Data | The recommended input format for Harmon. Centers log-ratio transformed data to make features comparable across samples, mitigating compositionality. |

| Sample Metadata File | Input Data | Must contain accurate columns for Batch and Group variables. Essential for designing models and interpreting results. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Useful for large-scale analyses, particularly PERMANOVA with high permutation counts or complex DA models on thousands of features. |

Mastering Harmon: Practical Troubleshooting and Parameter Optimization Strategies

Troubleshooting Guides & FAQs

Q1: What does the error "Gradient Descent Failed to Converge in 500 iterations" mean when applying Harmon to my microbiome dataset?

A: This error indicates the batch correction algorithm's optimization process is stuck. Common causes include:

- Extreme Batch Effects: The inter-batch variance vastly exceeds biological signal.

- Incorrect Learning Rate: A rate too high causes overshooting; too low prevents progress.

- High-Dimensional Sparsity: Prevalent in microbiome OTU/Species tables, leading to unstable gradients.

- Protocol Violation: The data was not properly pre-filtered (e.g., for low-abundance features).

Diagnostic Protocol:

- Plot Loss Function: Output the loss value per iteration.

- Check Data Scale: Ensure your input matrix (e.g., OTU table) is normalized (e.g., CSS, TSS) but not log-transformed before Harmon, as it assumes approximately Gaussian distributions.

- Reduce Dimensionality: Apply a variance-stabilizing filter, retaining only features with variance > the median.

Q2: I receive "LinAlgError: Singular Matrix" during Harmon's ComBat step. How do I resolve this?

A: This is a multicollinearity error where the design matrix for batch correction is rank-deficient.

Step-by-Step Resolution:

- Inspect Metadata: Identify and remove any covariate (e.g., "Sample_Type") that perfectly predicts batch membership.

- Increase Regularization: Manually adjust the

ridge_penaltyparameter in the Harmon function call to a higher value (e.g., from 0.1 to 1.0). - Subset Features: Run the correction on a pruned feature set (e.g., top 5000 most variable ASVs) to break perfect collinearity.

Q3: The model runs but diagnostic plots show "Failure in Variance Stabilization" post-correction. What's next?

A: This suggests residual heteroscedasticity, where batch-adjusted data still shows mean-variance dependence, invalidating downstream linear models.

Verification & Correction Workflow:

- Generate Plot: Plot the standard deviation versus mean for each batch, post-correction.

- Apply Alternative Transformation: If failure is observed, apply a post-hoc variance-stabilizing transformation (e.g.,

asin(sqrt(x))for proportions,log(1+x)for counts). - Re-specify Model: For severe cases, refit the Harmon model using a

mean_only=TRUEargument if batch effect is primarily additive.

Key Experimental Protocols Cited

Protocol 1: Diagnosing Non-Convergence in Harmon

- Input: Raw feature count table (m samples x n taxa), batch covariate vector.

- Preprocessing: Normalize using Cumulative Sum Scaling (CSS). Filter taxa present in <10% of samples.

- Run Subset Test: Execute Harmon on a random 30% sample subset and 1000 highly variable features.

- Monitor: Set

max_iterations=2000,convergence_threshold=1e-7. Output loss per iteration to a log file. - Visualize: Plot iteration (x) vs. loss (y). A non-monotonically decreasing plot indicates instability.

Protocol 2: Validating Batch Correction Success

- Principal Component Analysis (PCA): Perform PCA on pre- and post-correction data.

- Metric Calculation:

- Calculate the Principal Component Analysis-based R-squared (PCRS) for batch labels pre- and post-correction.

- Perform PerMANOVA (on Aitchison distance) with batch as a factor.

- Success Criteria: A reduction in PCRS by >70% and a non-significant PerMANOVA p-value (p > 0.05) for the batch term.

Table 1: Common Harmon Convergence Errors & Parameters

| Error Message | Likely Cause | Default Parameter | Recommended Adjustment |

|---|---|---|---|

FAILED TO CONVERGE |

Learning rate too high | learning_rate=0.01 |

Reduce to 0.001 |

SINGULAR MATRIX |

Perfect multicollinearity | ridge_penalty=0.1 |

Increase to 0.5-1.0 |

VARIANCE EXPLODED |

Extreme outliers in data | epsilon=1e-8 |

Apply stricter pre-filtering |

NAN IN GRADIENT |

Invalid zero-inflated input | N/A | Replace zeros with pseudocount (1e-10) |

Table 2: Quantitative Metrics for Convergence Diagnosis

| Metric | Formula | Target Value | Interpretation |

|---|---|---|---|

| Loss Delta (ΔL) | Lt - Lt-1 | < 1e-7 | Convergence reached |

| Gradient Norm | ‖∇L‖2 | < 1e-5 | Flat optimization landscape |

| Batch Variance Ratio | Varpost / Varpre | ~0.1 - 0.3 | >70% variance removed |

Visualizations

Diagram Title: Harmon Convergence Diagnosis Workflow

Diagram Title: Post-Harmon Variance Stabilization Check

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Toolkit for Harmon Diagnostics

| Item / Software | Function | Key Parameter / Note |

|---|---|---|

R harmonizeR Package |

Core algorithm for batch correction. | Use verbose=TRUE to log convergence. |

Python scanpy.pp.harmony_integrate |

Harmon implementation for Python (via Scanpy). | Key arg: max_iter_harmony. |

ggplot2 / matplotlib |

Plotting loss curves & PCA visualizations. | Essential for diagnosing trends. |

vegan R Package |

PerMANOVA for validation (adonis2 function). |

Use Aitchison distance matrix. |

Sparse Matrix Objects (Matrix pkg) |

Efficient handling of large, sparse OTU tables. | Prevents memory overflow. |

Regularization Solver (glmnet) |

Alternative if singular matrix errors persist. | Can replace built-in solver. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: What does the 'lambda' (λ) parameter control in the Harmon batch correction, and what is a typical starting range? A: The lambda (λ) parameter controls the strength of the correction. A higher lambda (e.g., λ=1) applies a stronger correction, assuming more of the variation is due to batch effects. A lower lambda (e.g., λ=0) applies a weaker correction, assuming more of the variation is biological. For microbiome data, a moderate lambda (e.g., 0.5 to 0.7) is often a reasonable starting point to balance removing technical noise while preserving biological signal. The optimal value must be empirically determined per dataset.

Q2: How should I choose a reference batch? What happens if I choose poorly? A: The reference batch should be the most technically robust and biologically representative dataset in your collection. Common choices are the largest batch, the batch with the most controls, or a gold-standard dataset. A poor choice (e.g., a small, highly biased batch) can lead to over-correction, where the harmonized data incorrectly resembles the reference, or under-correction, where batch effects persist. This can introduce artifacts and confound downstream analysis.

Q3: My data shows loss of biological variation after correction. How can I diagnose and fix this? A: This often indicates an overly aggressive correction, typically from a lambda value that is too high. Diagnose by:

- Checking PCA plots: Biological groups that were separate before correction may now be overlapping.

- Examining positive controls: Known biological differences (e.g., case vs. control in the same batch) may be diminished. Fix: Systematically reduce the lambda parameter and re-evaluate. Also, re-assess if your chosen reference batch is appropriate.

Q4: How do I quantitatively evaluate if my Harmon correction was successful? A: Success is measured by the reduction of batch-specific clustering while preserving biological variance. Use the following metrics, summarized in the table below:

Table 1: Quantitative Metrics for Evaluating Harmon Correction

| Metric | Formula/Description | Target Outcome Post-Correction |

|---|---|---|

| Principal Component Analysis (PCA) | Visual inspection of PC plots. | Batches should intermingle; biological groups should remain distinct. |

| Per-Sample Silhouette Width (Batch) | Measures how similar a sample is to its own batch vs. other batches. | Decrease towards zero or negative values. |

| Per-Sample Silhouette Width (Biological Group) | Measures how similar a sample is to its own biological group vs. other groups. | Increase or remain stable. |

| Average Proportion of Variance (APV) | PV(batch) / [PV(batch) + PV(biology)]. Calculated via PERMANOVA. | Substantial decrease in the contribution of batch to total variance. |

Q5: Can I use Harmon with a dataset that has only one sample per batch? A: No. Harmon requires multiple samples per batch to estimate the batch-specific distributions and transform them. Batches with a single sample will cause the algorithm to fail. Consider alternative methods or design your study to include replicates within each batch.

Experimental Protocol: Optimizing Lambda and Reference Batch Selection

Objective: To empirically determine the optimal lambda parameter and reference batch for Harmon correction on a specific microbiome dataset.

Materials: Pre-processed microbiome feature table (e.g., ASV or OTU counts), associated metadata with batch and biological group labels.

Software: R Statistical Environment with the harmonize package (or equivalent Python implementation).

Procedure:

- Prepare Data: Log-transform or center log-ratio (CLR) transform your count data. Split data into a training set (e.g., 70%) and a validation set (30%), stratifying by batch and biological condition.

- Define Parameter Grid: Create a grid of lambda values to test (e.g., λ = 0, 0.25, 0.5, 0.75, 1). List all candidate reference batches.

- Iterative Correction: For each candidate reference batch:

a. For each lambda value, apply the

harmonize()function to the training set using the specified reference. b. Apply the same transformation (fitted from the training set) to the validation set. - Evaluation: On the validation set, calculate the metrics in Table 1 for each (reference, lambda) combination. The optimal combination maximizes the preservation of biological-group silhouette width while minimizing batch silhouette width and APV.

- Final Application: Using the chosen optimal parameters, run Harmon on the full dataset.

Visualizations

Title: Harmon Parameter Optimization Workflow

Title: The Lambda Parameter Trade-Off

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Microbiome Batch Correction Studies

| Item | Function in Context |

|---|---|

| Mock Microbial Community Standards | Known composition controls spiked into samples across batches to quantitatively track and correct for technical variance. |

| DNA Extraction Kit (Same Lot) | Using the same kit lot for all samples minimizes a major source of batch variation. Document lot numbers for all reagents. |

| Library Preparation Reagents | PCR enzymes, indexing primers, and clean-up kits. Consistent lots are critical. Aliquot to freeze-thaw cycles. |

| Sequencing Control (PhiX) | Added to every Illumina run for quality monitoring; helps identify sequencing-level batch effects. |

| Bioinformatics Pipeline | A fixed, version-controlled pipeline (e.g., QIIME2, DADA2) for reproducible preprocessing prior to batch correction. |

| Positive Control Samples | Replicate biological samples (e.g., from a pooled aliquot) included in every batch to assess inter-batch variability. |

| Negative Control Samples | Reagent-only controls to identify and filter contaminant sequences introduced in specific batches. |

Troubleshooting Guides & FAQs

Q1: After applying the Harmon batch correction method to my microbiome dataset, the PCA plot shows no visible change. Did the correction fail? A: Not necessarily. A lack of visual change in the PCA can indicate:

- Low Batch Effect: Your original data may have had minimal technical batch variation. Check PERMANOVA/ADONIS results on the pre-correction distance matrix to quantify batch significance.

- Over-correction or Incorrect Model: Harmon may have removed biological signal if the model incorrectly specified batches or included biological covariates as nuisance variables. Re-examine your model formula (

design=argument). - Visualization Scale: The PCA plot may be dominated by a few strong biological axes. Re-plot focusing on the principal components where batch was known to load (e.g., PC2 vs PC3).

Q2: My post-correction PCA plot shows batches are now overlapping, but the overall sample clustering by biological group has also been destroyed. What happened? A: This is a classic sign of over-correction. Harmon has likely removed the biological signal of interest along with the batch effect. This occurs when batch effects are confounded with biological conditions. Mitigation steps:

- Use a Reference Batch: If available, specify a reference batch in Harmon that preserves the biological variation within that batch.

- Employ a Confounded Design: Use the

confounded = "MM"(mean matching) option in theharmonizefunction, which is designed for scenarios where batch and condition are not fully separable. - Validate with Known Biological Controls: Use positive control samples (if available) to track preservation of expected biological differences.

Q3: How can I quantitatively confirm that Harmon worked, beyond just looking at PCA plots? A: Always use statistical metrics alongside visualization. Calculate these metrics on the distance matrix (e.g., Bray-Curtis) before and after correction.

Table 1: Quantitative Metrics for Batch Correction Assessment

| Metric | Pre-Correction Value | Post-Correction Value | Interpretation of Successful Correction |

|---|---|---|---|

| PERMANOVA R² (Batch) | e.g., 0.25 | e.g., 0.02 | Decreases significantly (p-value becomes non-significant). |

| Average Silhouette Width (by Batch) | e.g., 0.15 | e.g., -0.05 | Decreases towards zero or negative, indicating loss of batch-driven clustering. |

| Average Silhouette Width (by Condition) | e.g., 0.10 | e.g., 0.18 | Increases or is maintained, indicating preservation of biological grouping. |

| Principal Component Variance Explained by Batch | e.g., PC1: 18% | e.g., PC1: 3% | Variance attributable to batch in top PCs decreases. |

Q4: What are the essential steps and checks for a standard Harmon batch correction workflow? A: Follow this validated protocol.