Navigating the Compute Frontier: A Practical Guide to Managing High-Dimensional Microbiome Data

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals tackling the computational challenges of high-dimensional microbiome analysis. Covering foundational knowledge to advanced validation, it explores the unique demands of amplicon and metagenomic datasets. We detail scalable methodological pipelines, strategies for troubleshooting performance bottlenecks, and frameworks for validating computational results. The goal is to empower professionals to efficiently allocate resources, select appropriate tools, and derive robust, reproducible biological insights from complex microbial community data, accelerating the path from discovery to clinical application.

The High-Dimensional Hurdle: Understanding Microbiome Data's Unique Compute Demands

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: My dimensionality reduction (e.g., PCA, PCoA) is failing or producing nonsensical axes. What are the common causes?

- Answer: This is often due to data sparsity (excess zeros) or inappropriate distance metrics.

- Solution A (Sparsity): Apply a prevalence filter. Retain only features (OTUs/ASVs) present in >10% of samples. Re-run.

- Solution B (Distance Metric): For compositional OTU/ASV data, use a compositionally aware distance such as Aitchison distance (Euclidean distance after a CLR transformation), or an abundance-based dissimilarity that ignores shared absences, such as Bray-Curtis. Avoid Euclidean distance on raw or relative abundance data.

- Protocol: Prevalence Filtering & Aitchison PCoA

- Input: Raw ASV count table (m samples × n features).

- Filter: Remove features with counts > 0 in fewer than floor(0.10 × m) samples.

- Transform: Add a pseudo-count of 1 to all counts (optionally after scaling counts to parts per million), since the logarithm of zero is undefined. Then apply a centered log-ratio (CLR) transformation to each sample: clr(x) = log(x / g(x)), where g(x) is the geometric mean of all feature values in that sample.

- Distance: Compute the Euclidean distance matrix on the CLR-transformed data (this is the Aitchison distance).

- Ordination: Perform PCoA on the resulting distance matrix.
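The protocol above can be sketched in NumPy as follows. This is a minimal illustration. It assumes a dense samples × features count array, a pseudo-count of 1, and classical (metric) PCoA computed by eigendecomposition. The function names are hypothetical. For large tables, a dedicated library is likely to be more memory-efficient than the all-pairs broadcasting used here.

```python
import numpy as np


def prevalence_filter(counts, min_fraction=0.10):
    """Keep features (columns) non-zero in at least floor(min_fraction * m) samples."""
    m = counts.shape[0]
    min_samples = int(np.floor(min_fraction * m))
    keep = (counts > 0).sum(axis=0) >= min_samples
    return counts[:, keep]


def clr(counts, pseudocount=1.0):
    """Centered log-ratio per sample: log values minus that sample's mean log value."""
    log_x = np.log(counts + pseudocount)
    return log_x - log_x.mean(axis=1, keepdims=True)


def aitchison_pcoa(counts, n_axes=2, min_fraction=0.10, pseudocount=1.0):
    """Return (Aitchison distance matrix, PCoA coordinates) for a samples x features table."""
    z = clr(prevalence_filter(counts, min_fraction), pseudocount)
    # Aitchison distance = Euclidean distance between CLR-transformed samples
    diff = z[:, None, :] - z[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    # Classical PCoA: double-center the squared distances, then eigendecompose
    m = dist.shape[0]
    centering = np.eye(m) - np.ones((m, m)) / m
    gram = -0.5 * centering @ (dist ** 2) @ centering
    eigvals, eigvecs = np.linalg.eigh(gram)
    top = np.argsort(eigvals)[::-1][:n_axes]
    # Clip tiny negative eigenvalues caused by floating-point error
    coords = eigvecs[:, top] * np.sqrt(np.clip(eigvals[top], 0.0, None))
    return dist, coords
```

Because Aitchison distance is a true Euclidean distance, keeping all PCoA axes should reproduce the distance matrix exactly. This makes a convenient sanity check.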

FAQ 2: My machine runs out of memory when building a phylogenetic tree for 100,000+ ASVs. How can I proceed?

- Answer: De novo tree inference for ultra-high feature counts can be computationally prohibitive on a single machine.

- Solution: Use a lightweight, reference-based placement or opt for non-phylogenetic beta-diversity metrics.

- Protocol: FastTree Approximation for Large ASV Sets

- Align: Align your ASV sequences (e.g., 16S rRNA gene) to a curated reference alignment (e.g., SILVA) using PyNAST or PASTA.

- Subset: Merge identical sequences to create a unique set.

- Build Tree: Run FastTree with the `-fastest` and `-nosupport` flags to maximize speed: `FastTree -fastest -nosupport -gtr -nt alignment.fasta > tree.newick`.

- Re-integrate: Map the original ASVs back onto the unique-sequence tree.
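The Subset and Re-integrate steps amount to collapsing identical sequences and keeping a lookup table. Below is a minimal pure-Python sketch. It assumes unaligned FASTA input, and it makes the first ID seen for each sequence the representative tip. Both are assumptions of this sketch, not requirements of FastTree.

```python
from collections import defaultdict


def read_fasta(lines):
    """Parse FASTA text lines into (id, sequence) pairs; sequences are upper-cased."""
    records, name, seq = [], None, []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if name is not None:
                records.append((name, "".join(seq)))
            name, seq = line[1:].split()[0], []
        else:
            seq.append(line.upper())
    if name is not None:
        records.append((name, "".join(seq)))
    return records


def collapse_identical(records):
    """Group IDs by identical sequence; the first ID seen becomes the representative."""
    groups = defaultdict(list)
    for name, seq in records:
        groups[seq].append(name)
    representatives = {ids[0]: seq for seq, ids in groups.items()}
    # Map every original ID to the representative tip that will appear in the tree
    id_to_rep = {name: ids[0] for ids in groups.values() for name in ids}
    return representatives, id_to_rep
```

You would write out the representatives for alignment and tree building. Each duplicate can then be attached to its representative's tip, because identical sequences are separated by a branch length of zero.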

FAQ 3: How do I handle the extreme dimensionality of gene families (e.g., from HUMAnN2) in regression models without overfitting?

- Answer: Metagenomic functional profiles require aggressive and informed feature selection before modeling.

- Solution: Employ a tiered selection strategy: 1) Prevalence, 2) Variance, 3) Association-based filtering.

- Protocol: Tiered Feature Selection for Metagenomic Pathways

- Prevalence Filter: Retain MetaCyc pathways or Gene Families present (nonzero) in ≥20% of samples.

- Variance Filter: Calculate the coefficient of variation (CV = std/mean) for each remaining feature. Keep the top 20% by CV.

- Univariate Association: For your outcome (e.g., disease state), perform a non-parametric test (Mann-Whitney U for binary; Spearman for continuous). Retain features with FDR-corrected p-value < 0.10.

- Model Input: Use this drastically reduced feature set for downstream penalized regression (e.g., LASSO, ridge).
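Here is a compact sketch of the three tiers for a binary outcome. It assumes a dense samples × features abundance array and 0/1 labels. It uses SciPy's `mannwhitneyu` and a hand-written Benjamini-Hochberg adjustment. The thresholds mirror the protocol, and `tiered_selection` is a hypothetical helper name.

```python
import numpy as np
from scipy.stats import mannwhitneyu


def bh_adjust(pvals):
    """Benjamini-Hochberg adjusted p-values (step-up, forced to be monotone)."""
    p = np.asarray(pvals, dtype=float)
    n = p.size
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(n)
    adjusted[order] = np.minimum(ranked, 1.0)
    return adjusted


def tiered_selection(X, y, min_prev=0.20, top_cv_frac=0.20, fdr=0.10):
    """X: samples x features abundances; y: 0/1 labels. Returns kept column indices."""
    idx = np.arange(X.shape[1])
    # Tier 1, prevalence: non-zero in at least min_prev of samples
    idx = idx[(X > 0).mean(axis=0) >= min_prev]
    # Tier 2, variance: keep the top fraction by coefficient of variation (std / mean)
    sub = X[:, idx]
    cv = sub.std(axis=0) / sub.mean(axis=0)
    n_keep = max(1, int(np.ceil(top_cv_frac * idx.size)))
    idx = idx[np.argsort(cv)[::-1][:n_keep]]
    # Tier 3, association: two-sided Mann-Whitney U per feature, then BH adjustment
    pvals = np.array([
        mannwhitneyu(X[y == 1, j], X[y == 0, j], alternative="two-sided").pvalue
        for j in idx
    ])
    return idx[bh_adjust(pvals) < fdr]
```

Because tier 3 uses the outcome, the whole selection should be rerun inside each cross-validation training fold. Running it once on all samples leaks information into the evaluation of the downstream model.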

FAQ 4: My differential abundance analysis (e.g., DESeq2, MaAsLin2) is too slow on a metagenomic species-level profile (thousands of features).

- Answer: Computational bottlenecks arise from model fitting for each feature. Optimize by reducing sample or feature dimensions intelligently.

- Solution: Filter low-abundance features first and ensure you are using a sparse data representation.

- Protocol: Optimized Workflow for MaAsLin2

- Pre-process Input: Filter the species table to remove features with a total count < 10 across all samples.

- Input Format: Save the filtered table as a `.tsv` file. Where a tool supports sparse structures internally, keep the table in a sparse representation in memory.

- Run Configuration: In MaAsLin2, set `normalization = "TSS"` (total sum scaling), `transform = "LOG"`, and `analysis_method = "LM"` (the fastest option). Limit fixed-effect covariates to essential ones (≤3).

- Hardware: Run the analysis on a machine with ≥16 GB RAM. Consider splitting by a major metadata variable (e.g., body site) and analyzing each subset separately.
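The pre-filtering step could look like this in pandas. This assumes the table is loaded with samples as rows and species as columns. `prefilter_for_maaslin`, `write_maaslin_input`, and the `sample_id` column label are hypothetical names.

```python
import pandas as pd


def prefilter_for_maaslin(table: pd.DataFrame, min_total: int = 10) -> pd.DataFrame:
    """Drop species (columns) whose total count across all samples is below min_total."""
    totals = table.sum(axis=0)
    return table.loc[:, totals >= min_total]


def write_maaslin_input(table: pd.DataFrame, path: str) -> None:
    """Write a tab-separated file with sample IDs in the first column."""
    table.to_csv(path, sep="\t", index_label="sample_id")
```

For example, `write_maaslin_input(prefilter_for_maaslin(raw_table), "species_filtered.tsv")` would produce the reduced input file.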

Data Presentation

Table 1: Dimensionality Comparison Across Microbiome Data Types

| Data Type | Typical Feature Number (Dimensionality) | Feature Example | Common Analysis Challenges |
|---|---|---|---|
| 16S rRNA (OTU/ASV) | 1,000 - 20,000 | Bacteroides vulgatus ASV_125 | Sparsity (>90% zeros), compositionality, phylogenetic scale. |
| Metagenomic Species | 500 - 10,000 | Escherichia coli (strain A) | High inter-individual variation, strain-level functional redundancy. |
| Metagenomic Functional | 5,000 - 100,000+ | MetaCyc: PWY-6700 (queuosine biosynthesis) | Extreme dimensionality, multi-collinearity, interpretability. |
| Metatranscriptomic | 10,000 - 500,000+ | KO:K03043 (rpoB gene) | Even higher noise, requires robust normalization to DNA level. |

Table 2: Computational Resource Demand for Common Tasks

| Analysis Task | High-Dimensional Input (e.g., 10k features) | Recommended Minimum RAM | Approx. Compute Time* | Key Resource-Saving Strategy |
|---|---|---|---|---|
| Beta Diversity (PCoA) | 100 samples x 10k ASVs | 8 GB | 2 min | Use efficient distance calc. (e.g., vegdist in R). |
| DESeq2 (Wald test) | 100 samples x 10k species | 16 GB | 15 min | Filter low-count features pre-analysis. |
| Sparse CCA (sCCA) | 100 samples x 5k pathways & 100 host variables | 32 GB | 30+ min | Increase sparsity penalty (lambda). |
| Random Forest | 500 samples x 20k gene families | 64 GB | 1+ hours | Limit tree depth (max.depth in ranger); use the ranger R package. |

*Time estimated on a standard 8-core server.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in High-Dimensional Microbiome Research |
|---|---|
| Pseudo-Count Algorithms | Enables log-transformation of sparse count data by adding a minimal, informed value to all zeros (e.g., Bayesian or multiplicative replacement). |
| Compositional Data Analysis (CoDA) Tools | Software/R packages (compositions, ALDEx2, QIIME2 plugins) that properly handle the relative nature of sequencing data, preventing spurious correlation. |
| Sparse Matrix Objects | Data structures (e.g., Matrix package in R, scipy.sparse in Python) that efficiently store and compute on feature tables where most values are zero, saving memory. |
| PICRUSt2 / Tax4Fun2 | Tools to predict metagenomic functional potential from 16S data, bridging high-dimensional taxonomic profiles to even higher-dimensional inferred functional profiles. |
| HUMAnN2 / MetaPhlAn | Pipelines to generate species-level and stratified pathway-abundance profiles from shotgun metagenomic data, creating the definitive high-dimensional functional matrix. |
| FastTree / IQ-TREE | Software for rapid phylogenetic tree inference, essential for incorporating evolutionary relationships into analyses of high-dimensional OTU/ASV data. |
| ANCOM-BC / MaAsLin2 | Statistical models designed specifically for differential abundance testing in high-dimensional, compositional, and confounder-rich microbiome datasets. |

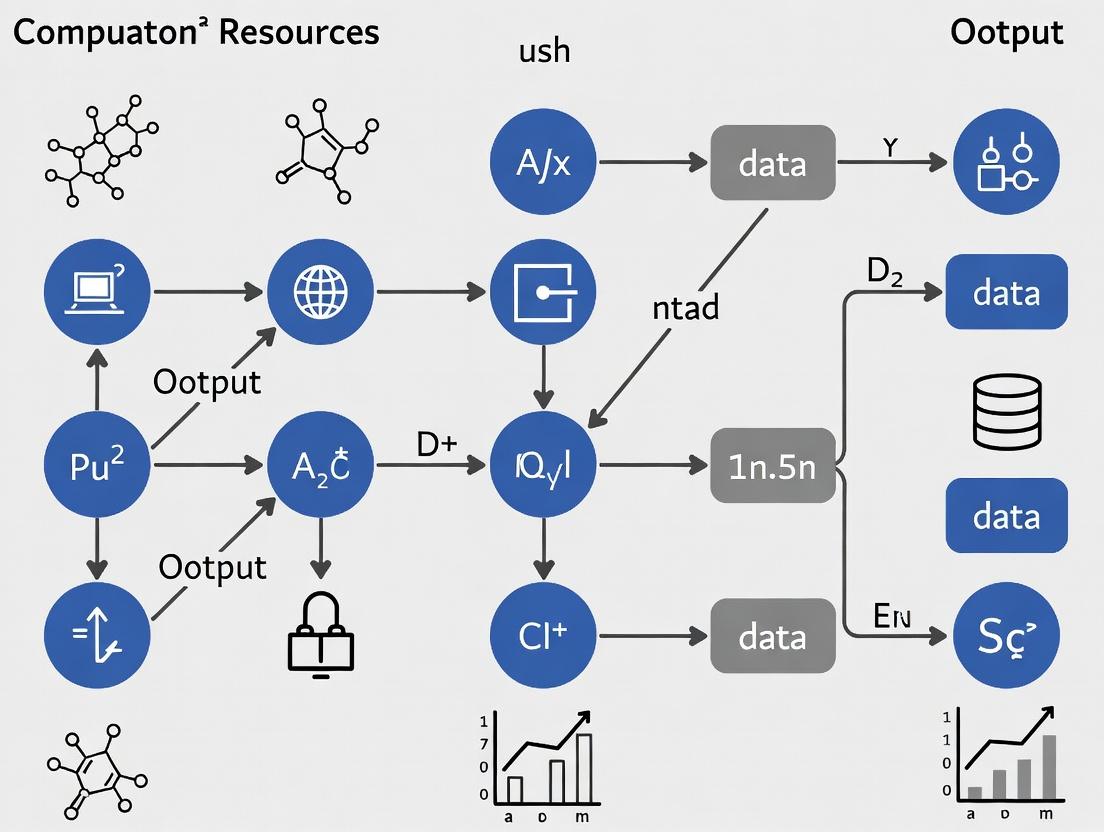

Experimental Protocol Visualizations

Title: Aitchison PCoA Workflow for Sparse Data

Title: Tiered Feature Selection Strategy

Title: Creating High-Dimensional Stratified Functional Profiles

Troubleshooting Guides & FAQs

FAQ 1: My model achieves 100% accuracy on training data but fails on new samples. What is wrong?

Answer: This is a classic sign of overfitting in a p >> n scenario. With far more features (p, e.g., microbial OTUs) than samples (n), models can memorize noise. Solutions include:

- Apply Regularization: Use LASSO (L1) or Ridge (L2) regression to penalize model complexity.

- Implement Dimensionality Reduction: Apply PCA or use phylogenetic-informed methods like UniFrac distances before modeling.

- Apply Feature Selection: Use statistical filters (e.g., DESeq2 for differential abundance) to reduce p to a meaningful subset.
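A small NumPy simulation makes the memorization problem concrete. With 500 random features and only 30 samples, a minimum-norm least-squares fit reproduces a pure-noise outcome almost exactly on the training data. On new samples, however, it predicts no better than simply guessing the mean. The dimensions and seed are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(42)
n_train, n_test, p = 30, 30, 500  # far more features than samples (p >> n)

X_train = rng.normal(size=(n_train, p))
X_test = rng.normal(size=(n_test, p))
y_train = rng.normal(size=n_train)  # outcome is pure noise: there is no real signal
y_test = rng.normal(size=n_test)

# Minimum-norm least squares; with p > n it can interpolate the training data exactly
coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)

train_mse = np.mean((X_train @ coef - y_train) ** 2)
test_mse = np.mean((X_test @ coef - y_test) ** 2)
baseline_mse = np.mean((y_test - y_train.mean()) ** 2)  # just predict the training mean
print(f"train MSE {train_mse:.2e}, test MSE {test_mse:.2f}, mean-only baseline {baseline_mse:.2f}")
```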

FAQ 2: My computational job runs out of memory during dimensionality reduction. How can I proceed?

Answer: High-dimensional data (large p) exhausts memory in standard operations. Use these protocols:

- Switch to Sparse Matrix Operations: If your abundance table is sparse (many zeros), use packages like `scipy.sparse` in Python or `Matrix` in R.

- Use Iterative/Partial Algorithms: For PCA, use `PCA(svd_solver="randomized")` or `TruncatedSVD` in scikit-learn (the older `RandomizedPCA` class has been removed), or `irlba` in R. These compute approximate leading components without forming the full p × p covariance matrix.

- Batch Processing: Split your feature set into batches, process each, and then integrate results.
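To gauge what the sparse route saves, the sketch below builds a simulated table with about 95% zeros. It compares the memory footprint of that table stored densely and in SciPy's CSR format. The table size and sparsity level are assumptions chosen to resemble an ASV table. Real savings depend on the actual fraction of zeros.

```python
import numpy as np
from scipy import sparse

rng = np.random.default_rng(0)
n_samples, n_features = 200, 5_000
dense = rng.poisson(5, size=(n_samples, n_features)).astype(np.float64)
dense[rng.random(dense.shape) < 0.95] = 0.0  # ~95% zeros, similar to many ASV tables

csr = sparse.csr_matrix(dense)  # stores only non-zero values plus their positions
dense_bytes = dense.nbytes
sparse_bytes = csr.data.nbytes + csr.indices.nbytes + csr.indptr.nbytes
print(f"dense: {dense_bytes / 1e6:.1f} MB, CSR: {sparse_bytes / 1e6:.2f} MB")

# Per-feature prevalence without densifying: count stored entries per column
prevalence = np.diff(csr.tocsc().indptr)
```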

FAQ 3: How do I validate a model when my sample size (n) is very small?

Answer: Small n limits data for traditional train/test splits.

- Use Nested Cross-Validation: An outer loop evaluates model performance, while an inner loop optimizes hyperparameters. This prevents data leakage and optimistic bias.

- Consider Leave-One-Out Cross-Validation (LOOCV): With very small n (e.g., <30), LOOCV uses each sample as a test set once, maximizing training data.

- Use Stratified Splits: Use stratified resampling (e.g., `createFolds` in the R `caret` package or `RepeatedStratifiedKFold` in scikit-learn) to ensure class balance is maintained in small splits.
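Here is a sketch of nested cross-validation in scikit-learn. It assumes simulated data (40 samples, 300 features) and an L1-penalized logistic regression whose penalty strength `C` is tuned in the inner loop. The grid values and fold counts are illustrative, not recommendations.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import (GridSearchCV, RepeatedStratifiedKFold,
                                     StratifiedKFold, cross_val_score)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=40, n_features=300, n_informative=5, random_state=0)

# Inner loop: tune the L1 penalty strength on stratified folds of each training split
inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
model = make_pipeline(
    StandardScaler(),
    LogisticRegression(penalty="l1", solver="liblinear", max_iter=1000),
)
search = GridSearchCV(model, {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]},
                      cv=inner, scoring="roc_auc")

# Outer loop: repeated stratified folds keep class balance in every small test split
outer = RepeatedStratifiedKFold(n_splits=5, n_repeats=3, random_state=0)
scores = cross_val_score(search, X, y, cv=outer, scoring="roc_auc")
print(f"Nested CV AUC: {np.mean(scores):.2f} +/- {np.std(scores):.2f}")
```

Only the outer-loop scores estimate generalization performance. The inner loop's best score is optimistically biased, because it was used for tuning.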

FAQ 4: Which multiple testing correction is most appropriate for high-dimensional microbiome feature testing?

Answer: When testing thousands of taxa (p is large), false discovery rate (FDR) control is often more appropriate than family-wise error rate (FWER).

| Correction Method | Controls For | Best Use Case for Microbiome p >> n | Typical Command/Tool |
|---|---|---|---|
| Benjamini-Hochberg (BH) | False Discovery Rate (FDR) | Screening many taxa for potential associations. Preferred for exploratory studies. | p.adjust(p, method="BH") in R |
| Bonferroni | Family-Wise Error Rate (FWER) | Confirmatory studies on a pre-selected, small subset of taxa. Very conservative. | p.adjust(p, method="bonferroni") in R |
| q-value / Storey's Method | FDR (with estimated π0) | Very large-scale hypothesis testing; often used in -omics. | qvalue package in R/Bioconductor |
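The difference between the two main corrections is easy to see on a handful of p-values. This NumPy sketch implements Bonferroni and the Benjamini-Hochberg step-up procedure directly. For the same input, it should agree with R's `p.adjust(p, method = "bonferroni")` and `p.adjust(p, method = "BH")`. The p-values are made up for illustration.

```python
import numpy as np


def bonferroni(pvals):
    """Multiply each p-value by the number of tests, capped at 1."""
    p = np.asarray(pvals, dtype=float)
    return np.minimum(p * p.size, 1.0)


def benjamini_hochberg(pvals):
    """BH step-up: p_(i) * m / i, made monotone from the largest p-value downwards."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    scaled = p[order] * m / np.arange(1, m + 1)
    scaled = np.minimum.accumulate(scaled[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.minimum(scaled, 1.0)
    return adjusted


pvals = [0.01, 0.04, 0.03, 0.20]
print(bonferroni(pvals))
print(benjamini_hochberg(pvals))
```

For these four tests, Bonferroni gives [0.04, 0.16, 0.12, 0.80], while BH gives approximately [0.04, 0.053, 0.053, 0.20]. At a 0.05 threshold, only the smallest p-value survives either correction. BH, however, leaves the next two much closer to the cut-off.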

Experimental Protocols

Protocol 1: Regularized Regression for p >> n Feature Selection

Aim: Identify a stable subset of predictive microbial features from a high-dimensional dataset.

Steps:

- Preprocessing: Rarefy or normalize (e.g., CSS) your OTU/ASV table. Log-transform if necessary.

- LASSO Regression:

  - Use the `glmnet` package in R or scikit-learn's `LassoCV` in Python.

  - Standardize features (center & scale).

  - Use 10-fold cross-validation (CV) on the training set to select the optimal lambda (λ) that minimizes CV error.

- Stability Selection: Repeat LASSO on 100 subsamples of the data. Retain only features selected in >80% of subsamples to ensure robustness.

- Final Model: Fit a standard linear/logistic model using the stable feature subset for interpretable coefficients.
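Stability selection could be sketched with scikit-learn as below. The sketch makes three assumptions:

- a continuous outcome;
- lambda (`alpha` in scikit-learn) chosen once by 10-fold `LassoCV` on the full training set;
- 100 random half-samples.

These choices follow the protocol's numbers, but are otherwise assumptions. For a binary outcome, an L1-penalized `LogisticRegression` would take the place of `Lasso`.

```python
import numpy as np
from sklearn.linear_model import Lasso, LassoCV
from sklearn.preprocessing import StandardScaler


def stability_selection(X, y, n_subsamples=100, frac=0.5, threshold=0.8, seed=0):
    """Return (indices selected in > threshold of subsamples, selection frequencies)."""
    rng = np.random.default_rng(seed)
    Xs = StandardScaler().fit_transform(X)  # center and scale each feature
    # Choose lambda (called alpha in scikit-learn) once by 10-fold cross-validation
    alpha = LassoCV(cv=10).fit(Xs, y).alpha_
    counts = np.zeros(X.shape[1])
    n_sub = int(frac * X.shape[0])
    for _ in range(n_subsamples):
        idx = rng.choice(X.shape[0], size=n_sub, replace=False)
        coef = Lasso(alpha=alpha, max_iter=10_000).fit(Xs[idx], y[idx]).coef_
        counts += coef != 0  # tally which features received a non-zero coefficient
    freq = counts / n_subsamples
    return np.flatnonzero(freq > threshold), freq
```

The returned indices would then feed the final unpenalized linear or logistic model described in the protocol.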

Protocol 2: Memory-Efficient Dimensionality Reduction for Large p

Aim: Perform PCA on a microbiome sample x feature matrix where p > 50,000.

Steps:

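- Convert to Sparse Format: `X_sparse = scipy.sparse.csr_matrix(X)` (Python).

- Use Truncated SVD (closely related to PCA): Unlike PCA, `TruncatedSVD` does not center the data, which keeps the matrix sparse. Its components therefore approximate, rather than exactly reproduce, PCA on centered data.

  - In Python:

```python
from scipy import sparse
from sklearn.decomposition import TruncatedSVD

# Stand-in for your samples x features CSR matrix from the previous step
X_sparse = sparse.random(200, 60_000, density=0.01, format="csr", random_state=0)

svd = TruncatedSVD(n_components=50, random_state=42)
X_reduced = svd.fit_transform(X_sparse)  # shape: (n_samples, 50)
```

- Alternative, Lanczos method: The `irlba` package in R (`irlba(A, nv = 50)`) uses implicitly restarted Lanczos bidiagonalization. It computes only the first few singular vectors/values, without the full decomposition.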

Visualizations

Title: The p >> n Problem: Causes and Solution Strategies

Title: Nested Cross-Validation Workflow for Small n

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in High-Dimensional Microbiome Research |
|---|---|
| Sparse Matrix Libraries (scipy.sparse, R Matrix) | Enables efficient storage and computation on abundance tables where most entries are zero, saving memory. |
| Regularized Regression Packages (glmnet in R, scikit-learn in Python) | Implements LASSO, Ridge, and Elastic Net to prevent overfitting and perform feature selection in p >> n settings. |
| Stability Selection Algorithms | Provides a robust framework for feature selection by aggregating results over many subsamples, reducing false positives. |
| Iterative SVD/PCA Libraries (irlba in R; randomized PCA and TruncatedSVD in scikit-learn) | Computes approximate principal components without constructing the full covariance matrix (size p x p), enabling analysis of large p. |
| False Discovery Rate (FDR) Control Software (qvalue package, p.adjust in R) | Corrects for multiple hypothesis testing across thousands of microbial features to identify statistically significant findings. |
| Phylogenetic Placement Tools (EPA-ng, pplacer) | Incorporates evolutionary relationships to reduce effective dimensionality and improve biological interpretability. |
| Metagenomic Functional Prediction Pipelines (HUMAnN3, PICRUSt2) | Maps high-dimensional taxonomic data into gene family or pathway space (changing p), often a more informative feature set. |

Troubleshooting Guides & FAQs

FAQ 1: Our institution has limited high-performance computing (HPC) resources. Which sequencing method should we choose for our initial pilot study to minimize computational burden while still generating meaningful data?

Answer: For pilot studies with limited HPC resources, 16S rRNA amplicon sequencing is strongly recommended. Its computational footprint is orders of magnitude smaller. A typical 16S analysis pipeline (from raw reads to taxonomic tables) for 100 samples requires approximately 10-50 CPU-hours and <10 GB of storage for intermediate files. In contrast, an equivalent shotgun metagenomic analysis would require 500-5,000+ CPU-hours and >500 GB of storage, primarily due to the need for host DNA filtering (if applicable), complex assembly, gene prediction, and functional annotation against large databases. Start with 16S to define community structure before committing to resource-intensive shotgun sequencing.

FAQ 2: During our shotgun metagenomic analysis, the quality control (QC) and host read removal step is taking multiple days and consuming all our server's memory. What are the primary bottlenecks and how can we optimize this?

Answer: The primary bottlenecks are the sheer volume of sequencing data and the large reference genome(s) used for host read filtering. A standard human gut metagenome (e.g., 10 Gb of paired-end reads) aligned to the human genome (≈3 Gb) is a massive computational task.

Optimization Protocol:

- Use fast, alignment-free host removal tools: Replace alignment-heavy tools like `BWA` with lightweight, k-mer-based tools such as `Kraken2` (with a host database) or `BBDuk`. These can be 10-100x faster.

- Perform aggressive QC trimming first: Use `fastp` or `Trimmomatic` to remove low-quality bases and adapters before host removal. This reduces the data volume entering the most expensive step.

- Allocate sufficient RAM: For `Kraken2`, provide enough RAM for its database to reside entirely in memory. For a standard database, this is often 30-70 GB.

- Subsample: For initial pipeline testing, subsample your reads (e.g., using `seqtk`) to 1-10% to debug workflows quickly.
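Where `seqtk` is not available, the same idea can be sketched in Python. The approach keeps each read pair with a fixed probability. It draws one random number per pair, so R1 and R2 stay synchronized. The record parser assumes standard 4-line FASTQ without wrapped sequences. The function names and default fraction are hypothetical.

```python
import random
from itertools import islice


def fastq_records(handle):
    """Yield 4-line FASTQ records as tuples of lines without trailing newlines."""
    while True:
        rec = tuple(line.rstrip("\n") for line in islice(handle, 4))
        if not rec:
            return
        if len(rec) != 4:
            raise ValueError("Truncated FASTQ record")
        yield rec


def subsample_pairs(r1_handle, r2_handle, fraction=0.05, seed=11):
    """Keep each read pair with probability `fraction`; R1 and R2 stay in sync."""
    rng = random.Random(seed)
    for rec1, rec2 in zip(fastq_records(r1_handle), fastq_records(r2_handle)):
        if rng.random() < fraction:
            yield rec1, rec2
```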

FAQ 3: We are getting inconsistent taxonomic classification results for our 16S data when using different bioinformatics pipelines (e.g., QIIME 2 vs. MOTHUR). How do we decide which to use, and does this choice impact computational needs?

Answer: Inconsistencies often arise from different reference databases (Greengenes vs. SILVA vs. RDP) and classification algorithms (naive Bayes vs. VSEARCH). The choice significantly impacts computational needs.

Detailed Comparison Protocol:

- Benchmark on a mock community: Run a known, standard mock microbial community (e.g., from BEI Resources) through both pipelines.

- Measure accuracy vs. resource use: Create a table comparing the known composition to each pipeline's output. Simultaneously, track CPU time and memory use for each step (demultiplexing, denoising/OTU clustering, taxonomy assignment).

- Decision Matrix: Prioritize the pipeline that offers the best balance of accuracy for your specific microbiota and reasonable computational cost. Note that DADA2 (in QIIME 2) is more computationally intensive than deblur or 97% OTU clustering in MOTHUR, but provides higher-resolution Amplicon Sequence Variants (ASVs).
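For the accuracy half of the comparison, one simple summary has three parts:

- the Bray-Curtis dissimilarity between the expected and observed mock profiles;
- recall of the expected taxa;
- a list of unexpected taxa.

Here is a minimal sketch. It assumes both profiles are dictionaries of relative abundances at the same taxonomic rank. This choice of metrics is an assumption, not a community standard.

```python
def compare_to_mock(expected, observed):
    """expected/observed: dicts of taxon -> relative abundance (each summing to ~1)."""
    taxa = set(expected) | set(observed)
    e = {t: expected.get(t, 0.0) for t in taxa}
    o = {t: observed.get(t, 0.0) for t in taxa}
    # Bray-Curtis dissimilarity: 0 = identical profiles, 1 = no shared abundance
    bray_curtis = sum(abs(e[t] - o[t]) for t in taxa) / sum(e[t] + o[t] for t in taxa)
    detected = {t for t in expected if o[t] > 0}
    false_positives = sorted(t for t in observed if t not in expected and o[t] > 0)
    return {
        "bray_curtis": bray_curtis,
        "recall": len(detected) / len(expected),
        "false_positives": false_positives,
    }
```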

FAQ 4: For shotgun metagenomics, functional profiling (KEGG, MetaCyc) is causing our servers to run out of disk space during the HUMAnN3 or MetaPhlAn analysis. Where is this space being used and how can we reclaim it?

Answer: The disk space is consumed by large reference databases and intermediate alignment files (e.g., SAM/BAM). HUMAnN3, for instance, downloads and uses protein databases like UniRef90 (>60 GB).

Troubleshooting Steps:

- Identify large files: Use commands like `du -sh *` in your working directory to find files >10 GB.

- Clean intermediate files: Configure your pipeline to remove intermediate files after each step. Crucially, delete the SAM/BAM files after they are converted to read counts. The `--remove-temp-output` flag in HUMAnN3 does this.

- Use pre-installed, shared databases: Work with your HPC sysadmin to install large databases (UniRef, ChocoPhlAn) in a network-accessible, shared location so each user doesn't need a local copy.

- Compress static files: Ensure large reference FASTA files are kept in compressed format (`.gz`).
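When `du` output is hard to scan across deep directory trees, a short Python walk can list the offending files directly. This sketch applies the 10 GB threshold from the step above, expressed in binary gigabytes. It skips files that cannot be read. `find_large_files` is a hypothetical helper.

```python
import os


def find_large_files(root, min_bytes=10 * 1024**3):
    """Walk `root` and return (size_bytes, path) for files >= min_bytes, largest first."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                size = os.path.getsize(path)
            except OSError:
                continue  # broken symlink or file removed mid-walk
            if size >= min_bytes:
                hits.append((size, path))
    return sorted(hits, reverse=True)
```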

Data Presentation: Quantitative Comparison

Table 1: Computational Footprint Comparison for a 100-Sample Study

| Metric | 16S rRNA Amplicon Sequencing | Shotgun Metagenomic Sequencing | Notes |
|---|---|---|---|
| Raw Data per Sample | 10 - 50 MB (V4 region) | 1 - 10 GB | Drives all downstream costs. |
| Total Storage (Raw) | 1 - 5 GB | 100 GB - 1 TB | |
| QC & Preprocessing | 5-10 CPU-hours | 50-200 CPU-hours | Shotgun requires host removal. |
| Core Analysis (e.g., OTU/ASV, Taxonomy) | 10-30 CPU-hours | N/A | |
| Assembly & Annotation | N/A | 500-5000+ CPU-hours | Most computationally intensive step. |
| Typical RAM Requirement | < 8 GB per process | 16 - 128+ GB | Shotgun assembly needs high RAM. |
| Total Pipeline Time | Hours to 1 day | Days to weeks | Depends on HPC queue & parallelism. |

Table 2: Key Research Reagent Solutions & Computational Tools

| Item Name | Category | Function / Purpose |
|---|---|---|
| ZymoBIOMICS Mock Communities | Wet-lab Reagent | Standardized microbial mixes for validating wet-lab protocols and benchmarking bioinformatics pipeline accuracy. |
| Nextera XT / Flex Kits | Library Prep | Standardized kits for amplicon or shotgun library preparation. Consistent insert size impacts computational trimming. |
| Illumina NovaSeq S4 Flow Cell | Sequencing | Generates ultra-high-throughput data (>1 Tb/run). Primary driver of computational burden; necessitates planning for data handling. |
| QIIME 2 (2024.5) | Software Platform | Integrative framework for 16S analysis. Manages data provenance; typically installed via conda or run from Docker/Singularity containers. |
| MetaPhlAn 4 / HUMAnN 3 | Software Tool | Standard for shotgun taxonomic & functional profiling. Relies on large, curated reference databases (ChocoPhlAn, UniRef). |
| Kraken2 / Bracken | Software Tool | Ultra-fast k-mer-based taxonomic classifier for shotgun reads. Lower footprint than alignment-based methods but needs large RAM for its database. |
| Snakemake / Nextflow | Workflow Manager | Essential for orchestrating complex, reproducible pipelines on HPC/cluster environments, managing job submission and dependencies. |
| Singularity Container | Computational Environment | Packages the entire software stack (tools, dependencies) for portability and reproducibility across different HPC systems. |

Experimental Protocols

Protocol 1: Benchmarking Computational Pipeline Performance

Objective: To quantitatively measure and compare the computational resource use (time, CPU, RAM, disk I/O) of different analysis pipelines for the same dataset.

Methodology:

- Data Selection: Obtain a publicly available dataset (e.g., from the SRA, such as PRJNA640118) including both 16S and shotgun data from the same samples.

- Pipeline Definition: Define two standard pipelines:

  - 16S Pipeline: `fastp` (QC) -> `DADA2` (in QIIME 2) -> SILVA classifier.

  - Shotgun Pipeline: `fastp` (QC) -> `Kraken2` (host filter & taxonomy) -> `HUMAnN3` (function).

- Resource Profiling: Use the `/usr/bin/time -v` command on Linux to execute each pipeline on a dedicated compute node. Record "User time," "System time," "Maximum resident set size" (peak RAM), and "File system inputs/outputs."

- Data Logging: Run each pipeline 3 times. Log all outputs to a structured file (JSON or CSV). Calculate the mean and standard deviation for each resource metric.

- Visualization: Create bar charts comparing CPU-hours and peak RAM usage between the 16S and shotgun pipelines.
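The profiling and logging steps can be automated by parsing the report that GNU `/usr/bin/time -v` writes to standard error. The sketch below assumes the GNU time field labels listed in `FIELDS`. It extracts the metrics named in the protocol and summarizes repeated runs with the mean and sample standard deviation. The short metric keys and `write_summary` are hypothetical.

```python
import json
import statistics

# GNU time -v field labels mapped to short (hypothetical) metric names
FIELDS = {
    "User time (seconds)": "user_s",
    "System time (seconds)": "system_s",
    "Maximum resident set size (kbytes)": "max_rss_kb",
    "File system inputs": "fs_inputs",
    "File system outputs": "fs_outputs",
}


def parse_gnu_time(stderr_text):
    """Extract selected metrics from the report printed by `/usr/bin/time -v`."""
    metrics = {}
    for line in stderr_text.splitlines():
        key, sep, value = line.strip().rpartition(": ")
        if sep and key in FIELDS:
            metrics[FIELDS[key]] = float(value)
    return metrics


def summarise_runs(runs):
    """Mean and sample standard deviation of each metric across repeated runs."""
    summary = {}
    for name in FIELDS.values():
        values = [r[name] for r in runs if name in r]
        if len(values) >= 2:
            summary[name] = {"mean": statistics.mean(values), "sd": statistics.stdev(values)}
    return summary


def write_summary(summary, path):
    """Log the summary as JSON, one structured file per pipeline."""
    with open(path, "w") as fh:
        json.dump(summary, fh, indent=2)
```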

Protocol 2: Optimizing Shotgun Metagenomic Host DNA Removal

Objective: To evaluate the speed, efficiency, and computational cost of different host read removal tools.

Methodology:

- Test Data: Use a human saliva or gut metagenomic dataset (known to have high host DNA content).

- Tool Selection: Test `BBDuk` (alignment-free), `Kraken2` (k-mer based), and `BWA-MEM`/`Bowtie2` (alignment-based) for host removal against the human reference genome (GRCh38).

- Standardized Environment: Run all tools on the same HPC node specification (e.g., 16 CPU cores, 64 GB RAM).

- Metrics: For each tool, measure: (a) Wall-clock run time, (b) Percentage of reads classified as host, (c) CPU utilization, (d) Peak memory usage.

- Validation: Validate non-host reads by aligning a subset to a microbial reference to ensure true microbial reads are not being erroneously removed.

Visualizations

Workflow & Resource Comparison: 16S vs Shotgun

Decision Tree: Sequencing Method Selection

Technical Support Center & Troubleshooting Guides

FAQ 1: Pre-processing and Quality Control

Q: After running FASTQ files through a primer trimming tool (e.g., cutadapt), my read counts are extremely low or zero. What went wrong?

A: This typically indicates a primer sequence mismatch. Verify:

- Primer Sequence: Ensure the primer sequences provided to the tool are correct, in the 5'->3' orientation, and account for degeneracy (e.g., use `W` for A/T).

- Adapter Contamination: Check whether your reads contain 3' adapters. Trim these first, or use the `-a` and `-A` options in cutadapt for paired-end reads.

- Error Rate: Increase the allowed error rate (`-e` parameter in cutadapt) from the default (0.1) to 0.2 if primers have high degeneracy.
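A quick way to test the primer-mismatch hypothesis is to count how many reads begin with the primer once IUPAC degeneracy codes are expanded. This sketch uses exact regex matching. Cutadapt itself tolerates errors, so real match rates will be somewhat higher. The 515F V4 forward primer appears only as an example; substitute your own primer.

```python
import re

# IUPAC nucleotide codes expanded to regex character classes
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "[AG]", "Y": "[CT]", "S": "[CG]", "W": "[AT]", "K": "[GT]", "M": "[AC]",
    "B": "[CGT]", "D": "[AGT]", "H": "[ACT]", "V": "[ACG]", "N": "[ACGT]",
}


def primer_regex(primer):
    """Compile a pattern matching the (possibly degenerate) primer at a read's start."""
    return re.compile("".join(IUPAC[base] for base in primer.upper()))


def fraction_with_primer(reads, primer):
    """Fraction of reads whose first bases exactly match the expanded primer."""
    pattern = primer_regex(primer)
    reads = list(reads)
    if not reads:
        return 0.0
    return sum(1 for r in reads if pattern.match(r)) / len(reads)
```

A fraction near zero for R1 reads suggests checking primer orientation, or whether primers were already removed during demultiplexing.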

Protocol: Basic cutadapt Command for Paired-End 16S rRNA Data

Q: DADA2 reports "No reads passed the filter." How do I adjust filtering parameters?

A: This error arises when filterAndTrim thresholds are too strict for your data's quality profile.

- Diagnose: Plot the quality profiles of a subset of reads using `plotQualityProfile()` in DADA2.

- Adjust: Increase the `maxEE` (maximum expected errors) parameter and/or shorten `truncLen`, based on where read quality drops off in the quality plot. For very noisy data, start with lenient values.
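DADA2's `maxEE` is based on the nominal expected-errors definition: EE = Σ 10^(-Q/10), summed over the bases kept after truncation. Reads shorter than `truncLen` are discarded. The sketch below reproduces that logic for a single read, assuming Phred+33 quality encoding. It shows why shortening `truncLen` or raising `maxEE` lets more reads through. The helper names are hypothetical.

```python
def expected_errors(quality, phred_offset=33):
    """Sum of per-base error probabilities, 10**(-Q/10), from a quality string."""
    return sum(10 ** (-(ord(ch) - phred_offset) / 10) for ch in quality)


def passes_filter(quality, trunc_len, max_ee):
    """Truncate to trunc_len, then keep the read only if long enough and EE <= max_ee."""
    if len(quality) < trunc_len:
        return False  # reads shorter than truncLen are discarded
    return expected_errors(quality[:trunc_len]) <= max_ee
```

A read whose last 50 bases are at Q2 (`#`) fails `maxEE = 2` at `truncLen = 250`. It passes if `truncLen` is cut to 200, before the poor-quality tail.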

Table 1: Troubleshooting DADA2 Filtering Parameters

| Symptom | Likely Cause | Parameter Adjustment | Typical Starting Value |
|---|---|---|---|
| No reads pass filter | maxEE too low, truncLen too long | Increase maxEE, shorten truncLen | maxEE=c(2,5), truncLen based on quality plot |
| Too many reads lost | Overly aggressive trimming | Lengthen truncLen, increase maxEE slightly | Ensure <10% expected errors per read |
| Chimeras >20% of reads | Poor filtering, true biological signal | Ensure proper filtering; use minFoldParentOverAbundance | minFoldParentOverAbundance = 1.5 |

FAQ 2: Taxonomy Assignment & Database Issues

Q: My ASVs are assigned as "uncultured bacterium" or have very low taxonomic resolution. How can I improve this?

A: This is common when using general databases.

- Use a Specialized Database: Switch to a curated database specific to your sample type (e.g., SILVA for general 16S, UNITE for ITS, Greengenes for human microbiome).

- Increase Confidence Threshold: In classifiers like `assignTaxonomy` in DADA2, the default `minBoot = 50` is lenient. Increase it to 80 for more confident, albeit possibly less complete, assignments.

- Consider Alternative Methods: Use `IDTAXA` from the `DECIPHER` package, which often provides better resolution at lower sequence identities.
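The effect of raising `minBoot` can be pictured as truncating each lineage at the first rank whose bootstrap confidence falls below the threshold. This is a simplified sketch of that idea, assuming per-rank bootstrap values are already available. It is not DADA2's implementation, and `truncate_by_bootstrap` is a hypothetical helper.

```python
def truncate_by_bootstrap(lineage, boots, min_boot=50):
    """Set the first low-confidence rank, and every rank below it, to None."""
    result = []
    confident = True
    for taxon, boot in zip(lineage, boots):
        confident = confident and boot >= min_boot
        result.append(taxon if confident else None)
    return result
```

With bootstrap support falling toward the genus level, moving from 50 to 80 trades genus and family labels for higher confidence in the ranks that remain.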

FAQ 3: Diversity Analysis Interpretation

Q: My alpha rarefaction curves do not plateau, suggesting insufficient sequencing depth. Can I still compare samples?

A: Proceed with extreme caution. Incomplete saturation means observed diversity is not fully captured.

- Use Metrics Less Sensitive to Depth: Rely more on Chao1 (richness estimator) and Shannon (evenness-weighted) than on the raw Observed OTU count.

- Employ Normalization: Use non-rarefaction methods like DESeq2's median-of-ratios or ANCOM-BC for differential abundance, which model sampling depth.

- State the Limitation Clearly: In your results, explicitly note that comparisons are made on unevenly saturated profiles.
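For reference, the two recommended metrics can be computed as follows. This sketch uses the bias-corrected form of Chao1, S_obs + F1(F1 - 1) / (2(F2 + 1)), where F1 and F2 are the numbers of singleton and doubleton features. Shannon entropy uses the natural logarithm. Chao1 relies on singletons, so it may be less informative on ASV tables from denoisers that typically do not output singleton ASVs.

```python
import math
from collections import Counter


def chao1(counts):
    """Bias-corrected Chao1 richness estimate from one sample's feature counts."""
    nonzero = [c for c in counts if c > 0]
    freq = Counter(nonzero)
    f1, f2 = freq.get(1, 0), freq.get(2, 0)  # singletons and doubletons
    return len(nonzero) + f1 * (f1 - 1) / (2 * (f2 + 1))


def shannon(counts):
    """Shannon entropy H = -sum(p * ln p) over non-zero features."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)
```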

Protocol: Core Beta Diversity Workflow using QIIME2 & Phyloseq

- Generate a BIOM table: Use DADA2/QIIME2 to create a feature table (ASVs/OTUs).

- Build a Tree: Create a phylogenetic tree (e.g., with `qiime phylogeny align-to-tree-mafft-fasttree`).

- Calculate Distances: Compute weighted/unweighted UniFrac or Bray-Curtis distances.

- Import into R/Phyloseq:

FAQ 4: Differential Abundance False Positives

Q: My differential abundance analysis (using a simple t-test) yields hundreds of significant taxa. Are these all real?

A: Likely not. This is a classic pitfall due to compositionality, high sparsity, and multiple testing.

- Use Compositionally Aware Tools: Switch to methods designed for microbiome data: ANCOM-BC, ALDEx2, DESeq2 (with proper filtering), or MaAsLin2.

- Account for Confounders: Include relevant metadata (e.g., age, batch, BMI) as covariates in the model.

- Apply FDR Correction: Always correct p-values for multiple hypotheses (e.g., Benjamini-Hochberg).
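A four-taxon toy example shows why compositionality alone can generate false positives. The absolute abundances are hypothetical. Only taxon 0 changes, yet every other taxon's relative abundance drops. Meanwhile, ratios between the unchanged taxa stay constant, which is the property that log-ratio approaches such as ALDEx2 and ANCOM rely on.

```python
import numpy as np

# Hypothetical absolute abundances for four taxa in two groups.
# Only taxon 0 truly changes (4-fold increase); taxa 1-3 are identical.
absolute_a = np.array([100.0, 100.0, 100.0, 100.0])
absolute_b = np.array([400.0, 100.0, 100.0, 100.0])

# Sequencing observes only proportions (closure to a constant sum)
rel_a = absolute_a / absolute_a.sum()  # [0.25, 0.25, 0.25, 0.25]
rel_b = absolute_b / absolute_b.sum()  # approx. [0.571, 0.143, 0.143, 0.143]
print("Relative change:", rel_b / rel_a)  # taxa 1-3 appear to drop by ~43%

# Ratios between unchanged taxa are unaffected by closure
print("Ratio taxon1/taxon2:", rel_a[1] / rel_a[2], rel_b[1] / rel_b[2])
```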

Table 2: Comparison of Differential Abundance Methods

| Method | Key Principle | Handles Compositionality? | Output | Best For |
|---|---|---|---|---|
| ANCOM-BC | Log-ratio transforms with bias correction | Yes | Absolute differential abundance | High specificity, low false discovery |
| ALDEx2 | Monte Carlo sampling from a Dirichlet distribution | Yes | Log-ratio differences & effect sizes | Small sample sizes, sensitive detection |
| DESeq2 | Negative binomial model with variance stabilization | Indirectly (via proper normalization) | Fold change & significance | Large effect sizes, RNA-seq-like workflows |
| MaAsLin2 | Generalized linear models with normalization | Yes | Association statistics | Complex metadata, multivariate analysis |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Microbiome Analysis

| Tool / Reagent | Function | Typical Use Case |
|---|---|---|
| FastQC / MultiQC | Quality control visualization. | Initial assessment of raw FASTQ files across all samples. |
| cutadapt / Trimmomatic | Adapter and primer trimming. | Removing sequencing adapters and PCR primer sequences. |
| DADA2 / Deblur | Exact sequence variant (ASV) inference. | Error-correcting amplicon reads to infer biological sequences. |
| SILVA / UNITE / GTDB | Curated reference taxonomy databases. | Assigning taxonomic labels to ASVs based on rRNA genes. |
| QIIME2 / mothur | Integrated analysis pipelines. | End-to-end processing from raw data to diversity metrics. |
| Phyloseq (R) | Data organization and analysis. | Statistical analysis and visualization of microbiome data in R. |
| LEfSe / MaAsLin2 | Differential abundance analysis. | Identifying features significantly associated with metadata. |
| PICRUSt2 / BugBase | Functional prediction. | Inferring metagenomic potential from 16S rRNA data. |

Visualizations

Diagram 1: Core 16S rRNA Amplicon Analysis Workflow

Diagram 2: Differential Abundance Decision Logic

Troubleshooting Guides & FAQs

Q1: My 16S rRNA amplicon sequence analysis pipeline is failing with an "Out of Memory" error during the DADA2 denoising step. What are the minimum RAM requirements?

A1: DADA2 can be memory-intensive during error modeling and denoising, particularly when samples are pooled (`pool = TRUE`). For a typical entry-level study (~10-20 million reads), 32 GB of RAM is a reasonable minimum. For larger studies (50M+ reads), 64 GB or more is recommended. Configuring sufficient swap space provides a temporary buffer, though it will significantly slow processing.

Q2: When assembling metagenomic contigs with MEGAHIT or metaSPAdes, the job is killed by the system. How do I estimate CPU and memory needs?

A2: Metagenomic assembly is computationally demanding, and the primary bottleneck is memory. Requirements scale with sample complexity and sequencing depth.

| Tool | Suggested Baseline for 10-20 Gbp of Data | Key Consideration |
|---|---|---|
| MEGAHIT | 8 CPU cores, 64 GB RAM | More memory-efficient, suitable for first-pass assembly. |
| metaSPAdes | 16 CPU cores, 128-256 GB RAM | More comprehensive but requires substantial resources. |

Protocol: To test resource needs, run the assembly on a random 10% subset of your reads first. Monitor peak memory usage with tools like /usr/bin/time -v.

Q3: I am setting up a new workstation for microbiome analysis. Should I prioritize CPU cores, RAM speed, or storage I/O?

A3: For entry-level analysis, prioritize in this order: 1) Maximum RAM Capacity, 2) Fast Storage (NVMe SSD), 3) CPU Core Count. Most microbiome tools (QIIME2, MOTHUR) are not highly parallelized across many cores but are I/O- and memory-bound. A system with 64 GB RAM, a 1 TB NVMe SSD, and a modern 8-core CPU is a robust starting point.

Q4: Is a GPU necessary for entry-level microbiome data analysis?

A4: Generally, no. Most standard bioinformatics pipelines (quality control, clustering, taxonomy assignment) do not use GPU acceleration. However, a GPU (e.g., NVIDIA GTX 1660 or higher with 6+ GB VRAM) becomes valuable if you plan to use deep learning models for feature discovery, image analysis (e.g., from microscopes), or neural network-based tools such as DeepMicro or those in the NVIDIA Clara framework.

Q5: My storage is filling up rapidly with intermediate files from QIIME2. What is a typical storage footprint?

A5: QIIME2 artifacts and intermediate files can consume 10-50x the storage of the original raw FASTQ files. Implement a clean-up protocol.

| Data Type (per sample) | Raw FASTQ | Post-QC & Denoising | Full QIIME2 Artifacts (with phylogeny) |
|---|---|---|---|
| 16S (V4, 100k reads) | ~50 MB | ~30 MB | ~1-2 GB |
| Shotgun Metagenomic (5 Gbp) | ~15 GB | ~10 GB | ~200-500 GB |

Protocol: Use QIIME2's qiime tools export to convert bulky artifacts to lightweight, standard formats (BIOM, TSV) after key steps. Archive raw data separately on a network-attached storage (NAS) or cold storage.

Table 1: Estimated Baseline Hardware for Entry-Level Microbiome Analysis

| Analysis Type | Recommended RAM | CPU Cores | Storage (Active) | GPU |

|---|---|---|---|---|

| 16S rRNA Amplicon (QIIME2/MOTHUR) | 32-64 GB | 8-16 | 500 GB - 1 TB SSD | Not Required |

| Shotgun Metagenomics (Profiling) | 64-128 GB | 16-24 | 1-2 TB NVMe SSD | Optional |

| Shotgun Metagenomics (Assembly) | 128-256 GB | 16-32 | 2-4 TB NVMe SSD | Optional |

| Metatranscriptomics | 128+ GB | 24+ | 2-4 TB NVMe SSD | Not Required |

Table 2: Common Software & Their Primary Resource Bottlenecks

| Software / Step | Primary Bottleneck | Mitigation Strategy |

|---|---|---|

| FastQC / MultiQC | CPU, I/O | Use parallel processing on multiple samples. |

| DADA2, Deblur | RAM | Run samples in batches; increase RAM. |

| MetaPhlAn / Kraken2 | RAM, CPU | Kraken2 loads its whole database into RAM; choose a smaller pre-built database or use --memory-mapping. |

| STAMP / LEfSe | CPU | Statistical tests are single-threaded; faster CPU clock helps. |

| R / phyloseq (large plots) | RAM | Limit objects in session; use sparse data representations. |

Visualizations

Microbiome Analysis Resource Decision Flow

Typical 16S Analysis Pipeline & Resource Pressure Points

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Analysis |

|---|---|

| High-Capacity NVMe SSD (e.g., 2 TB) | Provides fast read/write speeds for processing large sequencing files, reducing I/O wait times in pipelines. |

| ECC (Error-Correcting Code) RAM | Prevents memory bit-flip errors that can cause silent, irreproducible errors during long-running computations. |

| BIOM File Format | A standardized (JSON/HDF5) table format for representing biological sample by observation matrices, used by QIIME2, PICRUSt2. |

| Conda/Mamba Environment | Isolated software environments to manage conflicting package dependencies (e.g., different Python versions for tools). |

| Singularity/Apptainer Container | Reproducible, portable software environments that package entire analysis pipelines, ensuring consistent results across HPC/clusters. |

| SRA Toolkit | Command-line utilities to download and convert sequence data from public repositories like NCBI SRA to FASTQ format. |

| Greengenes / SILVA Database | Curated 16S rRNA gene reference databases used for taxonomic classification of amplicon sequences. Must be downloaded locally. |

| UniRef / eggNOG Database | Protein functional databases essential for annotating genes and pathways in metagenomic and metatranscriptomic analyses. |

Building Scalable Pipelines: From Raw Reads to Biological Insights

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My QIIME 2 visualization (e.g., emperor PCoA plot) fails to render or shows an empty file. What should I check?

A: This is often a dependency or environment issue. First, ensure you are in the correct QIIME 2 environment (conda activate qiime2-20xx.x). Verify that all required Python libraries (e.g., matplotlib, jinja2) are installed at the correct versions. Check disk space and file permissions for the output directory. For web-based visualizations, ensure you are using a compatible browser and that JavaScript is enabled.

Q2: I get a "mothur > fatal error: Unable to open (input file)" message. How do I resolve this?

A: This typically indicates a file path issue. mothur can be sensitive to file names and paths. Ensure:

- There are no spaces or special characters in your file or directory names.

- You are using the correct file path relative to your working directory, or provide the absolute path.

- The file is not empty and is in the expected format (e.g., .fasta, .groups).

- You have read permissions for the file.
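These checks are easy to automate before a long mothur batch. The Python sketch below uses a hypothetical helper. Its allowed-character set is a conservative assumption for POSIX paths, not a rule documented by mothur.

```python
import os
import re
from typing import List

# Conservative whitelist: letters, digits, dot, underscore, hyphen, and '/'.
SAFE_PATH = re.compile(r"^[A-Za-z0-9._/\-]+$")


def check_mothur_input(path: str) -> List[str]:
    """Return problems that commonly trigger mothur's 'Unable to open' error."""
    problems: List[str] = []
    if not SAFE_PATH.match(path):
        problems.append("path contains spaces or special characters")
    if not os.path.isfile(path):
        problems.append(f"file not found (resolved to {os.path.abspath(path)})")
        return problems  # remaining checks need an existing file
    if os.path.getsize(path) == 0:
        problems.append("file is empty")
    if not os.access(path, os.R_OK):
        problems.append("no read permission")
    return problems
```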

Q3: HUMAnN 3 produces an error: "AttributeError: 'NoneType' object has no attribute 'shape'" during the alignment step. What does this mean?

A: This error usually occurs when the nucleotide or protein database files are not found or are corrupted. Confirm that:

- The --nucleotide-database and --protein-database paths in your command are correct.

- The ChocoPhlAn database was properly downloaded and installed using humann_databases.

- The Bowtie2 index files (.bt2) used by the MetaPhlAn prescreen are present in the MetaPhlAn database directory.
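A partially downloaded database often leaves an incomplete set of index files. Bowtie2 writes six files per index (`.1`, `.2`, `.3`, `.4`, `.rev.1`, `.rev.2`), each ending in `.bt2`, or `.bt2l` for large indexes. Completeness can therefore be checked by file name. The Python sketch below uses a hypothetical helper; run it against whichever database directory your command points at.

```python
from pathlib import Path
from typing import Dict, List

# A complete Bowtie2 index is six files sharing one basename; large indexes
# use the same names with '.bt2l' instead of '.bt2'.
BT2_PARTS = [".1", ".2", ".3", ".4", ".rev.1", ".rev.2"]


def bowtie2_indexes(db_dir: str) -> Dict[str, List[str]]:
    """Map each index basename in `db_dir` to its missing files (empty list = complete)."""
    result: Dict[str, List[str]] = {}
    # Match longer suffixes first so 'x.rev.1.bt2' is not read as basename 'x.rev'.
    parts_longest_first = sorted(BT2_PARTS, key=len, reverse=True)
    for ext in (".bt2", ".bt2l"):
        present = {p.name for p in Path(db_dir).glob(f"*{ext}")}
        for name in present:
            stem = name[: -len(ext)]
            part = next((p for p in parts_longest_first if stem.endswith(p)), None)
            if part is None:
                continue  # not a standard Bowtie2 index file name
            base = stem[: -len(part)]
            result[base] = [f"{base}{p}{ext}" for p in BT2_PARTS
                            if f"{base}{p}{ext}" not in present]
    return result
```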

Q4: MetaPhlAn 4 reports very low taxonomic abundance or "No markers found" for my samples. What are the potential causes? A: This suggests the sample may not be well-classified by the MetaPhlAn marker database.

- Sample Type: Verify MetaPhlAn is appropriate for your sample (optimized for human and environmental microbiomes). Non-standard samples (e.g., extreme environments) may have poor coverage.

- Data Quality: Check your input FASTQ quality. Low sequencing depth, high contamination (e.g., host), or excessive adapter content can cause this. Preprocess with quality trimming and host read removal.

- Database Version: Ensure you are using a MetaPhlAn 4 marker database (mpa_vJan21 or newer), selected via --index. Older databases have less comprehensive marker sets.

- Read Type: MetaPhlAn is designed for shotgun metagenomic reads; it is not suitable for 16S rRNA amplicon data.

Q5: All pipelines are failing with "Killed" or "Out of memory" errors on my server. How can I manage computational resources?

A: High-dimensional microbiome data is resource-intensive. Implement these strategies:

- Pre-filtering: Use usearch or seqtk to subsample large FASTQ files before analysis.

- Increase Memory/CPU: Allocate more resources if using a cluster (SLURM, SGE). Key mothur and QIIME 2 steps (chimera checking, multiple sequence alignment) are highly memory-intensive.

- Parallel Processing: Use built-in threading options (--nproc in MetaPhlAn, --threads in HUMAnN, processors in mothur, --p-n-threads in QIIME 2 DADA2, --p-n-jobs in QIIME 2 classify-sklearn).

- Workflow Optimization: For QIIME 2 DADA2, lowering --p-n-reads-learn (the number of reads used to train the error model) shortens run time, at some cost to error-model reliability. For mothur, break large make.contigs or classify.seqs batches into smaller groups.

Table 1: Framework Overview & Resource Requirements

| Framework | Primary Analysis Type | Core Algorithm/Method | Typical Input | Key Outputs | Peak RAM Usage (Estimate)* | CPU Intensity |

|---|---|---|---|---|---|---|

| QIIME 2 | 16S/18S/ITS Amplicon | DADA2, Deblur, VSEARCH | Raw FASTQ, demultiplexed | Feature table, taxonomy, PCoA plots | 16-64 GB | High (during denoising) |

| mothur | 16S/18S/ITS Amplicon | MiSeq SOP, Bayesian classifier | FASTQ, SILVA database | OTU table, taxonomy, shared file | 8-32 GB | Medium-High |

| HUMAnN 3 | Shotgun Metagenomic | MetaPhlAn, Bowtie2, Diamond | Host-filtered FASTQ | Gene families (UniRef90), Pathways | 16-128 GB | Very High (alignment) |

| MetaPhlAn 4 | Shotgun Metagenomic | Unique clade-specific markers | Host-filtered FASTQ | Taxonomic profiles (rel. abundance) | 4-16 GB | Low-Medium |

*RAM usage is highly dependent on sample size, sequencing depth, and reference database.

Table 2: Recommended Use Cases

| Goal | Recommended Primary Tool | Complementary Tool(s) |

|---|---|---|

| Standard 16S diversity analysis from raw reads | QIIME 2 or mothur | - |

| Replicating published 16S methodology (pre-2018) | mothur | - |

| Microbial community taxonomic profiling (shotgun) | MetaPhlAn 4 | - |

| Functional potential/pathway analysis (shotgun) | HUMAnN 3 | MetaPhlAn 4 (for taxonomy) |

| Integrated taxonomy & function analysis | MetaPhlAn 4 + HUMAnN 3 | - |

Experimental Protocols

Protocol 1: Full 16S rRNA Gene Amplicon Analysis with QIIME 2 (DADA2)

- Import Data:

qiime tools import --type 'SampleData[PairedEndSequencesWithQuality]' --input-path manifest.csv --output-path demux-paired-end.qza --input-format PairedEndFastqManifestPhred33

- Denoise with DADA2:

qiime dada2 denoise-paired --i-demultiplexed-seqs demux-paired-end.qza --p-trim-left-f 13 --p-trim-left-r 13 --p-trunc-len-f 150 --p-trunc-len-r 150 --o-table table.qza --o-representative-sequences rep-seqs.qza --o-denoising-stats denoising-stats.qza

- Generate Phylogenetic Tree:

qiime phylogeny align-to-tree-mafft-fasttree --i-sequences rep-seqs.qza --o-alignment aligned-rep-seqs.qza --o-masked-alignment masked-aligned-rep-seqs.qza --o-tree unrooted-tree.qza --o-rooted-tree rooted-tree.qza

- Taxonomic Classification:

qiime feature-classifier classify-sklearn --i-classifier silva-138-99-nb-classifier.qza --i-reads rep-seqs.qza --o-classification taxonomy.qza

- Core Diversity Metrics:

qiime diversity core-metrics-phylogenetic --i-phylogeny rooted-tree.qza --i-table table.qza --p-sampling-depth 10000 --m-metadata-file sample-metadata.tsv --output-dir core-metrics-results
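The --p-sampling-depth value (10000 above) trades samples for depth: samples with fewer reads are dropped, and the rest are subsampled down to that depth. The hedged Python sketch below compares candidate depths. It assumes per-sample read counts taken from the feature table, for example from the qiime feature-table summarize visualization. The helper name is hypothetical.

```python
from typing import Dict, Tuple


def rarefaction_retention(depths: Dict[str, int], sampling_depth: int) -> Tuple[int, float, float]:
    """Summarize what rarefying to `sampling_depth` keeps.

    Returns (samples kept, fraction of samples kept, fraction of all reads retained).
    Samples below the depth are dropped; the others contribute exactly `sampling_depth` reads.
    """
    kept = [d for d in depths.values() if d >= sampling_depth]
    total_reads = sum(depths.values())
    frac_samples = len(kept) / len(depths) if depths else 0.0
    frac_reads = sampling_depth * len(kept) / total_reads if total_reads else 0.0
    return len(kept), frac_samples, frac_reads
```

For example, loop over `(5000, 10000, 20000)` and print the result for each depth before committing to one.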

Protocol 2: Taxonomic Profiling with MetaPhlAn 4

- Install & Update Database:

metaphlan --install

- Run Single Sample:

metaphlan sample.fastq.gz --input_type fastq --bowtie2db /path/to/database --nproc 8 -o profiled_metagenome.txt

- Merge Multiple Samples:

merge_metaphlan_tables.py profiled_*.txt > merged_abundance_table.txt

- Strain-Level Profiling: Strain tracking is performed with StrainPhlAn. It works from the marker alignments that MetaPhlAn saves when run with -s (SAM output). Options that add viral profiling or estimate the unclassified fraction change the profile but do not provide strain-level resolution.
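A MetaPhlAn profile lists every taxonomic rank, so downstream tables usually need only the species rows. The Python sketch below extracts them. It assumes the default tab-separated output (clade_name, NCBI_tax_id, relative_abundance, ...) with '#'-prefixed header lines. The helper name is hypothetical.

```python
from typing import Dict, Iterable


def species_abundances(lines: Iterable[str]) -> Dict[str, float]:
    """Extract species-level relative abundances from a MetaPhlAn profile."""
    species: Dict[str, float] = {}
    for line in lines:
        if line.startswith("#") or not line.strip():
            continue  # header/comment lines
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 3:
            continue
        last_rank = fields[0].split("|")[-1]
        # Keep species rows only; skip higher ranks and strain/SGB ('t__') rows.
        if last_rank.startswith("s__"):
            species[last_rank[3:]] = float(fields[2])
    return species
```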

Visualizations

Title: Framework Selection Workflow for Microbiome Data

Title: HUMAnN 3 Pipeline Steps

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Materials & Resources for Microbiome Analysis

| Item/Resource | Function & Description | Example/Source |

|---|---|---|

| SILVA Database | Comprehensive, quality-checked ribosomal RNA sequence database (SSU: 16S/18S; LSU: 23S/28S) for taxonomic classification; ITS is covered by dedicated databases such as UNITE. | SILVA SSU & LSU rDNA (https://www.arb-silva.de/) |

| GTDB (Genome Taxonomy Database) | Phylogenetically consistent genome-based taxonomy for prokaryotes, an alternative to NCBI. | GTDB Toolkit (GTDB-Tk) (https://gtdb.ecogenomic.org/) |

| ChocoPhlAn Database | Pan-genome database of representative microbial genomes used by HUMAnN for functional profiling. | Downloaded via humann_databases |

| MetaPhlAn Marker Database (mpa_vXX) | Database of unique clade-specific marker genes used for fast taxonomic profiling. | Downloaded via metaphlan --install |

| PICRUSt2 / BugBase | Tools for inferring functional potential from 16S data (if shotgun sequencing is unavailable). | PICRUSt2 (Langille Lab) |

| KneadData | Tool for quality control and host read removal (e.g., human, mouse) from metagenomic reads. | (https://huttenhower.sph.harvard.edu/kneaddata/) |

| BioBakery Workflows | Integrated pipeline (KneadData, MetaPhlAn, HUMAnN) for end-to-end shotgun analysis. | (https://github.com/biobakery/biobakery_workflows) |

| Qiita / EBI MGnify | Platforms for public microbiome data deposition, retrieval, and re-analysis. | (https://qiita.ucsd.edu/, https://www.ebi.ac.uk/metagenomics/) |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Snakemake workflow fails on the cluster with a "MissingOutputException" even though the command ran. What's wrong?

A: This is commonly due to improperly defined output paths or filesystem latency. Ensure your output filenames in the output: directive exactly match those created by your shell command. On shared HPC filesystems, add a sleep or use snakemake --latency-wait (e.g., --latency-wait 60) to allow time for network file synchronization.

Q2: My Nextflow pipeline hangs at "submitted process" on an HPC scheduler (Slurm/PBS). How do I debug this?

A: This often indicates a scheduler integration issue. First, check your nextflow.config profile. Ensure the executor (e.g., executor = 'slurm'), queue, and memory/CPU directives are correct. Use nextflow log -f name,status,exit,workdir <run_name> to see the state of processes. Examine the .command.log file in the hanging process's work directory for scheduler errors.

Q3: When running a WDL workflow on Google Cloud, I get "Disk full" errors despite requesting sufficient size. What could cause this?

A: This is frequently caused by the local disk (/tmp) filling up, not the mounted persistent disk. In the WDL task's runtime section, request a larger boot disk (bootDiskSizeGb). You can also mark large inputs with localization_optional: true in the task's parameter_meta section, so they are streamed instead of copied; the tool must then be able to read the cloud path directly.

Q4: How do I handle software dependency conflicts across different rules/steps in Snakemake?

A: Use conda environments per rule. In your Snakefile, define conda: directives with an environment YAML file for each rule requiring unique dependencies. Run Snakemake with --use-conda. For complete reproducibility, consider containerizing each rule with --use-singularity or --use-apptainer.

Q5: Nextflow's resume function is not working as expected; it re-runs cached tasks. How do I fix it?

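A: Ensure you haven't changed the process name, its script, or the input file paths for the process. The resume feature relies on a unique hash generated from task properties. By default, input files are identified by path, size, and last-modified timestamp, so re-copying or touching inputs also invalidates the cache. On shared filesystems with inconsistent timestamps, the cache 'lenient' directive (path and size only) can help. Also, avoid setting cache false in your process definition. Check that the work directory is intact and not cleaned.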

Q6: My Cromwell server executing WDL workflows runs out of memory during large-scale microbiome cohort analysis. How to optimize?

A: This is likely due to Cromwell's default in-memory database. Configure Cromwell to use a MySQL or PostgreSQL database backend. Additionally, for cohort-level scatter operations, use preemptible VMs and optimize scatter counts to balance parallelism and overhead. Enable call caching to avoid recomputation.

Q7: How can I securely manage access keys for pulling private containers in cloud executions?

A:

- Nextflow: Set docker.registry in your config profile and authenticate on the execution host (docker login), or rely on the cloud platform's IAM-based registry access. With Singularity/Apptainer, registry credentials can be passed through the SINGULARITY_DOCKER_USERNAME and SINGULARITY_DOCKER_PASSWORD (or APPTAINER_DOCKER_*) environment variables.

- Cromwell (WDL): Add the registry credentials to the backend section of the Cromwell configuration file (for Docker Hub on Google Cloud, a dockerhub block).

- General: On HPC, use Singularity/Apptainer to pull and store images as static files. On cloud, use the native container registry (like Google Artifact Registry) close to your compute zone.

| Feature | Snakemake | Nextflow | WDL (Cromwell) |

|---|---|---|---|

| Primary Language | Python-based DSL | Groovy-based DSL | Separate WDL language |

| Execution Model | Rule-based DAG | Dataflow & channel | Task & workflow |

| Portability | HPC, Cloud, Local | HPC, Cloud, Local (Excellent) | Cloud, Local, HPC (via Cromwell backends such as SLURM) |

| Software Management | Conda, Singularity/Apptainer | Containers, Conda, Binaries | Containers |

| Resume Capability | Yes (re-runs only missing or outdated outputs; --rerun-incomplete for interrupted jobs) | Excellent (-resume) | Yes (Call Caching) |

| Best For | Python-centric teams, complex DAGs | Scalable, modular pipelines, streaming | Industry standards, cloud-native, CWL interop |

| Key Challenge in Microbiome | Scaling to 1000s of samples | Managing channel queues for large sample sets | Expressing complex sample-to-analysis maps |

Experimental Protocol: Reproducible 16S rRNA Analysis Workflow

Title: Reproducible Microbiome Analysis from Raw Sequences to Beta Diversity.

Objective: To perform a standardized, orchestrated analysis of 16S rRNA sequencing data that can be reproduced on both an HPC cluster and a cloud environment.

Methodology:

- Sample Manifest & Input: Create a TSV file (samples.tsv) with columns: sample_id, read1_path, read2_path.

- Workflow Orchestration: Implement the pipeline in Snakemake/Nextflow/WDL (see toolkit).

- Quality Control & Denoising: For each sample, use DADA2 (via QIIME 2 or R) to trim, filter, infer amplicon sequence variants (ASVs), and merge paired-end reads. Output: Feature table (feature-table.biom) and representative sequences (sequences.fasta).

- Taxonomic Assignment: Classify ASVs against a reference database (e.g., SILVA) using a classifier (e.g., q2-feature-classifier).

- Phylogenetic Tree: Generate a rooted phylogenetic tree for phylogenetic diversity metrics using mafft and fasttree.

- Diversity Analysis: Compute alpha-rarefaction and beta diversity metrics (e.g., Weighted/Unweighted UniFrac, Bray-Curtis) at a specified sampling depth.

- Output Aggregation: Collect all per-sample and group-level results into a single ZIP archive for download and reporting.
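Every workflow step fans out from samples.tsv, so validating it before launch avoids failures hours into a run. The Python sketch below checks the three columns named above. It uses only the standard library, and the helper name is hypothetical. A Snakefile is Python, so a function like this could be called directly from one.

```python
import csv
from pathlib import Path
from typing import Dict, Iterable, List, Tuple

REQUIRED_COLUMNS = ("sample_id", "read1_path", "read2_path")


def load_manifest(lines: Iterable[str], check_files: bool = True) -> Dict[str, Tuple[str, str]]:
    """Parse samples.tsv into {sample_id: (read1_path, read2_path)}, failing fast on problems."""
    reader = csv.DictReader(lines, delimiter="\t")
    missing = [c for c in REQUIRED_COLUMNS if c not in (reader.fieldnames or [])]
    if missing:
        raise ValueError(f"samples.tsv is missing columns: {missing}")
    samples: Dict[str, Tuple[str, str]] = {}
    errors: List[str] = []
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        sid = (row["sample_id"] or "").strip()
        r1 = (row["read1_path"] or "").strip()
        r2 = (row["read2_path"] or "").strip()
        if not sid:
            errors.append(f"line {line_no}: empty sample_id")
            continue
        if sid in samples:
            errors.append(f"line {line_no}: duplicate sample_id {sid!r}")
        if check_files:
            errors.extend(f"line {line_no}: {sid}: file not found: {p}"
                          for p in (r1, r2) if not Path(p).is_file())
        samples[sid] = (r1, r2)
    if errors:
        raise ValueError("; ".join(errors))
    return samples
```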

Visualizations

Diagram 1: Core Workflow Orchestration Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Microbiome Workflow Orchestration |

|---|---|

| Conda / Mamba | Creates isolated software environments for different pipeline steps (e.g., DADA2, QIIME 2, PICRUSt2). |

| Singularity/Apptainer Containers | Provides reproducible, system-agnostic packaging of complex software stacks and dependencies. |

| Reference Databases (SILVA, GTDB) | FASTA files used for taxonomic classification of ASVs; must be version-controlled. |

| Sample Manifest (CSV/TSV) | The primary input file linking sample IDs to raw data file paths; essential for workflow parallelization. |

| Cromwell Server / Nextflow Tower | Centralized servers for managing, monitoring, and sharing workflow executions, especially on cloud/HPC. |

| Metadata File (TSV, QIIME 2 metadata format) | Contains sample-associated variables (e.g., pH, treatment) for downstream statistical analysis. |

| BIOM File Format | The standardized table format (biological observation matrix) for storing feature tables and metadata. |

Troubleshooting Guides & FAQs

Q1: I am trying to compress a large FASTQ file using gzip on our HPC cluster, but the process is taking over 24 hours and seems to have stalled. What could be the issue?

A: Extended compression times usually reflect gzip's single-threaded design, slow storage I/O, or both; gzip itself needs very little memory. For large sequence files (>100 GB), standard gzip is inefficient because it uses only one core. Use pigz, a parallel implementation of gzip that uses multiple cores and produces standard gzip output. Basic protocol: pigz -k -p [number_of_threads] your_large_file.fastq. Specify -k to keep the original file. Check cluster storage performance. If network-attached storage is the bottleneck, move the file to a local SSD on the compute node for the compression step.

Q2: After chunking a large BAM file for parallel processing, my variant calling results are inconsistent between chunks. How can I ensure consistency?

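A: Inconsistent results usually arise from reads or read pairs being split across chunk boundaries. When chunking aligned sequence data (BAM/SAM), you must use read-aware chunking. Do not split the file by bytes or lines. Use samtools with region-based extraction on a coordinate-sorted, indexed BAM (samtools view -h -b -o chunk.bam input.bam chr:start-end), or specialized splitters like bamUtil that preserve read groups and mates. Always include the BAM header in each chunk. Note that samtools returns every read overlapping a region, so a read spanning a boundary appears in both adjacent chunks. Restrict variant calls in each chunk to its own region so such reads are not counted twice.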
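Region-based chunking needs a list of non-overlapping regions that covers every contig. The Python sketch below builds them from contig lengths, such as those in the BAM header's @SQ lines or the output of samtools idxstats. The helper is hypothetical. Regions use the 1-based, inclusive `contig:start-end` syntax that samtools view accepts.

```python
from typing import Dict, List


def region_chunks(contig_lengths: Dict[str, int], chunk_size: int) -> List[str]:
    """Split contigs into non-overlapping 1-based, inclusive regions ('contig:start-end')."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    regions: List[str] = []
    for contig, length in contig_lengths.items():
        for start in range(1, length + 1, chunk_size):
            end = min(start + chunk_size - 1, length)  # last chunk may be shorter
            regions.append(f"{contig}:{start}-{end}")
    return regions
```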

Q3: When streaming a CRAM file from an SRA repository directly into my analysis pipeline, the process fails with a "connection reset" error. How do I resolve this?

A: This is typically a network timeout caused by the large transfer size. Implement a retry mechanism with exponential backoff in your streaming command. Use curl or wget with retry flags (e.g., curl --retry, wget --tries). Alternatively, download with the SRA Toolkit's prefetch, raising its maximum download size with -X (--max-size) if large runs are refused. For direct streaming into a tool, consider a two-step process: first stream to a local temporary file with retry logic, then pipe from that file.
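The retry-with-backoff pattern can be sketched in Python as follows. It uses only the standard library, and the helper and its defaults are illustrative assumptions. Each failed attempt waits roughly twice as long as the previous one, capped at `max_delay`, with random jitter added.

```python
import random
import time
from typing import Callable, Optional, Tuple, Type, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 2.0,
    max_delay: float = 120.0,
    retry_on: Tuple[Type[BaseException], ...] = (OSError,),  # covers ConnectionError, TimeoutError
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> T:
    """Call `operation` until it succeeds, waiting about base_delay * 2**attempt between tries."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    rng = rng or random.Random()
    for attempt in range(max_attempts):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Random jitter keeps many parallel jobs from retrying in lockstep.
            sleep(delay + rng.uniform(0, delay / 2))
    raise AssertionError("unreachable")
```

For example, call `retry_with_backoff(lambda: urllib.request.urlretrieve(url, "run.cram"))`. Each retry restarts the download from the beginning. To wrap a command-line tool, call `subprocess.run(..., check=True)` inside the lambda and pass `retry_on=(subprocess.CalledProcessError, OSError)`.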

Q4: My compressed multi-sample FASTA file has excellent compression ratio, but random access to a specific sample's sequences is very slow. What strategy should I use?

A: Monolithic compression blocks random access. Implement a compressed indexing strategy. Use BGZF compression (block gzip), produced by bgzip from HTSlib and read natively by samtools and bcftools. After compressing with BGZF (e.g., bgzip your_file.fasta), index the file with samtools faidx your_file.fasta.gz, which writes a .fai index plus a .gzi block index. You can then rapidly extract specific sequences or regions using samtools faidx your_file.fasta.gz seq_name:start-end. (tabix is designed for position-sorted, tab-delimited formats such as VCF, BED, and GFF, not FASTA.) This combines compression with efficient random access.
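Any such index is, at heart, a lookup from sequence name to byte offset. The Python sketch below shows that idea for an uncompressed FASTA, using hypothetical helpers. It is a simplification: a real .fai also records sequence length and line layout, and BGZF indexes add a block map so the same lookup works on compressed data.

```python
from typing import Dict, List


def build_offset_index(path: str) -> Dict[str, int]:
    """Map each FASTA record name (first word of the header) to the byte offset of its '>' line."""
    index: Dict[str, int] = {}
    offset = 0
    with open(path, "rb") as fh:
        for line in fh:
            if line.startswith(b">"):
                index[line[1:].split()[0].decode()] = offset
            offset += len(line)
    return index


def fetch_sequence(path: str, index: Dict[str, int], name: str) -> str:
    """Seek directly to one record and read only its sequence lines."""
    parts: List[bytes] = []
    with open(path, "rb") as fh:
        fh.seek(index[name])
        fh.readline()  # skip the header line
        for line in fh:
            if line.startswith(b">"):
                break  # reached the next record
            parts.append(line.strip())
    return b"".join(parts).decode()
```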

Q5: I am chunking a massive metagenomic assembly graph for distributed analysis, but the process of splitting the graph file disrupts critical connectivity information. What is the correct approach?

A: Splitting raw graph files (e.g., GFA format) arbitrarily will break contiguity. Use a graph partitioning tool designed for this purpose, such as Metagraph or vg chunk. These tools partition the sequence space or graph structure while preserving local connectivity and can output chunks with overlap buffers. The key is to partition based on the graph topology, not the file size.

Data Presentation: Compression & Chunking Performance Metrics

| Strategy | Tool | Avg. Compression Ratio (FASTQ) | Compression Speed (MB/s) | Decompression Speed (MB/s) | Random Access Support |

|---|---|---|---|---|---|

| General-Purpose Compression | gzip (level 6) | ~3.5:1 | ~50 | ~200 | No |

| Parallel General-Purpose | pigz (level 6, 16 threads) | ~3.5:1 | ~450 | ~1200 | No |

| Bio-Specific Compression | Fastqzip (default) | ~4.2:1 | ~30 | ~100 | Limited |

| Blocked Compression for Indexing | BGZF (default) | ~3.4:1 | ~40 | ~180 | Yes (with .gzi index) |

| Reference-Based Compression | CRAM (lossless) | ~2.8:1* | Varies | Varies | Yes (with .crai index) |

*Ratio vs. uncompressed BAM; dependent on reference similarity.

Experimental Protocols

Protocol 1: Efficient Chunking of Large FASTQ for Parallel Quality Control

- Input: Single, large FASTQ file (data.fastq).

- Estimate Lines: Use wc -l data.fastq to determine total lines (L). Each record uses 4 lines.

- Determine Chunks: Decide the number of chunks (N). Records per chunk = ceil((L / 4) / N); rounding up ensures that N chunks cover every record.

- Split: Use split -l [records_per_chunk * 4] --numeric-suffixes --additional-suffix=.fastq data.fastq chunk_. Because the line count per chunk is a multiple of 4, each chunk contains only complete records. For paired-end data, split R1 and R2 with identical parameters so mates land in matching chunks.

- Parallel Processing: Distribute chunk_*.fastq files to separate cores/nodes for tools like FastQC or Trimmomatic.

- Aggregation: Concatenate results (e.g., trimming output) using cat.
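The chunk arithmetic matters. With 10 records and N = 3, rounding down gives 3 records per chunk and produces a fourth chunk, while rounding up gives chunks of 4, 4, and 2 records. The Python sketch below follows the same logic as `split -l`, using hypothetical helpers. Chunks are kept in memory here for illustration; a real run would write each one to its own file.

```python
import math
from itertools import islice
from typing import Iterable, List


def records_per_chunk(total_lines: int, n_chunks: int) -> int:
    """Records per chunk, rounded up so that n_chunks chunks cover every record."""
    if total_lines % 4:
        raise ValueError("line count is not a multiple of 4; file may be truncated")
    return math.ceil((total_lines // 4) / n_chunks)


def split_fastq(lines: Iterable[str], recs_per_chunk: int) -> List[List[str]]:
    """Split a FASTQ line stream into chunks made only of whole 4-line records."""
    it = iter(lines)
    chunks: List[List[str]] = []
    while True:
        chunk = list(islice(it, recs_per_chunk * 4))
        if not chunk:
            return chunks
        chunks.append(chunk)
```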

Protocol 2: Streaming a CRAM File from ENA for Immediate Variant Calling

- Prerequisite: Install samtools and curl.

- Construct URL: Obtain the FTP URL for the desired CRAM and its CRAI index file from the ENA browser.

- Stream with Retry: Use a bash loop to stream with retry logic:

Note: The index is fetched automatically by samtools from ${cram_url}.crai.

Visualizations

Workflow for Parallel QC via File Chunking

Streaming Large Files with Error Handling

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Data Handling |

|---|---|

| High-Performance Computing (HPC) Cluster | Provides parallel computing resources essential for running compression, chunking, and distributed analysis jobs simultaneously. |

| SRA Toolkit (prefetch, fasterq-dump) | Standardized tools for downloading, extracting, and streaming sequence data from the NCBI Sequence Read Archive in various formats. |

| SAMtools/BAMtools | Core utilities for manipulating alignments (SAM/BAM/CRAM), including compression (samtools view -b), indexing, splitting, and filtering. |

| HTSlib (BGZF compression) | The underlying C library providing blocked GZIP format, enabling random access in compressed files; foundational for SAMtools and bcftools. |

| GNU Parallel or SLURM Job Arrays | Orchestration tools to efficiently execute chunked file processing across hundreds of CPU cores, managing job submission and output collection. |

| High-Throughput Network-Attached Storage (NAS) | Provides the shared, scalable filesystem needed for staging massive input files and aggregating outputs from distributed processes. |

| Checkpointing Software (e.g., CRIU, job-level) | Allows long-running data handling pipelines to be paused and resumed, protecting against node failures in long jobs. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My differential abundance analysis (e.g., DESeq2, edgeR) is failing due to "out of memory" errors on my large metagenomic count table. What are my options? A: This is a common issue with high-dimensional microbiome data where sample (n) and feature (p) counts are large. You can:

- Employ Feature Subsampling: Use a variance-based filter (e.g., retain the top 10-20% of features by variance) before running the exact differential abundance algorithm. This reduces dimensionality drastically.

- Switch to a Faster or Approximate Algorithm: Use tools like ALDEx2 with Monte Carlo sampling, or run DESeq2 with fitType = "glmGamPoi", which uses the faster glmGamPoi package for dispersion estimation.

- Leverage Heuristics: Apply a prevalence filter (e.g., features must be present in >10% of samples) based on biological plausibility to reduce the problem size.

- Protocol: For variance-based subsampling:

a. Load your count matrix (rows=features, columns=samples).

b. Calculate the row-wise variance.

c. Select the top k features (e.g., k = 0.2 * total_features).

d. Proceed with your chosen differential abundance test on this subset.
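Steps b and c take only a few lines of code. The Python sketch below operates on a dict mapping each feature ID to its per-sample values. It uses only the standard library, and the helper is hypothetical. Raw-count variance is dominated by abundant features, so computing it on log- or CLR-transformed values is often preferable; that choice is left to the caller here.

```python
import statistics
from typing import Dict, List, Sequence


def top_variance_features(values: Dict[str, Sequence[float]], fraction: float = 0.2) -> List[str]:
    """Return feature IDs in the top `fraction` by row-wise (population) variance."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    k = max(1, round(fraction * len(values)))
    variances = {fid: statistics.pvariance(row) for fid, row in values.items()}
    return sorted(variances, key=variances.get, reverse=True)[:k]
```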

Q2: I am performing dimensionality reduction (PCoA, UMAP) on a massive distance matrix. Computation is taking days. How can I speed this up? A: The bottleneck is often the calculation of the pairwise distance matrix (O(n²)). Consider these approaches:

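- Subsampling Technique: Use a randomized subset of your data (e.g., 10,000 random reads per sample) to calculate a representative distance matrix; this rarefaction step is standard practice in microbiome studies. Rarefying lowers the per-sample cost but not the number of pairwise comparisons. To cut the O(n²) term itself, compute the matrix on a random subset of samples.

- Heuristic/Approximate Algorithm: For beta-diversity, a cheaper, non-phylogenetic metric such as Sørensen-Dice (presence/absence) avoids the cost of UniFrac. However, it measures a different aspect of community difference, so it is a substitute rather than an approximation. If UniFrac is required, an optimized implementation such as Striped UniFrac is the better route. For UMAP, lower the n_neighbors parameter and use a cheaper initialization (e.g., init='random' in umap-learn instead of the default spectral initialization).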

- Protocol: For subsampled distance matrix calculation: a. Rarefy all samples to an even sequencing depth (e.g., 10,000 sequences per sample). b. Calculate the Bray-Curtis dissimilarity matrix on the rarefied table. c. Perform PCoA on this matrix. Compare stability by repeating with a different rarefaction seed.
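Steps a and b can be sketched in Python as below. The code uses only the standard library, and the helper names are hypothetical. Rarefying draws reads without replacement, and Bray-Curtis is computed as 1 minus twice the shared counts over the combined totals. The PCoA step is not shown; in practice you would pass the resulting matrix to an ordination routine such as scikit-bio's pcoa.

```python
import random
from collections import Counter
from typing import Dict, Optional, Tuple


def rarefy(counts: Dict[str, int], depth: int, rng: random.Random) -> Optional[Dict[str, int]]:
    """Draw `depth` reads without replacement; None if the sample has fewer reads than `depth`."""
    if sum(counts.values()) < depth:
        return None  # sample is dropped at this depth
    # One list entry per read: simple, but memory-hungry for very deep samples.
    pool = [feature for feature, n in counts.items() for _ in range(n)]
    return dict(Counter(rng.sample(pool, depth)))


def bray_curtis(a: Dict[str, int], b: Dict[str, int]) -> float:
    """Bray-Curtis dissimilarity: 1 - 2 * sum(min(a_i, b_i)) / (sum(a) + sum(b))."""
    shared = sum(min(a.get(f, 0), b.get(f, 0)) for f in a.keys() | b.keys())
    total = sum(a.values()) + sum(b.values())
    return 1.0 - 2.0 * shared / total if total else 0.0


def rarefied_distances(samples: Dict[str, Dict[str, int]], depth: int,
                       seed: int) -> Dict[Tuple[str, str], float]:
    """Rarefy all samples with one seeded RNG, then compute pairwise Bray-Curtis values."""
    rng = random.Random(seed)
    rarefied = {}
    for name in sorted(samples):  # fixed order so the seed fully determines the result
        r = rarefy(samples[name], depth, rng)
        if r is not None:
            rarefied[name] = r
    names = sorted(rarefied)
    return {(x, y): bray_curtis(rarefied[x], rarefied[y])
            for i, x in enumerate(names) for y in names[i + 1:]}
```

To check stability as described above, call `rarefied_distances` with two different seeds and compare the values pair by pair.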

Q3: My random forest model for phenotype prediction from microbiome features is overfitting and computationally expensive to train. How do I improve it? A: This indicates high dimensionality (many OTUs/ASVs) relative to samples.

- Feature Selection Heuristic: Before modeling, perform a robust feature selection. Use the SIAMCAT pipeline, which employs a repeated cross-validation LASSO approach to identify a stable, small subset of predictive features.

- Algorithm Substitution: Replace the random forest with a regularized logistic regression. An L1 (LASSO) or elastic-net penalty performs built-in feature selection, while a pure L2 (ridge) penalty shrinks coefficients without removing features. Both are less prone to overfitting on wide data.

- Subsampling for Stability: Use repeated subsampling (e.g., 100 iterations of 80% data) to train multiple models and aggregate feature importance scores, distinguishing robust signals from noise.

- Protocol: For stable feature selection with SIAMCAT:

a. Create a label object from your metadata.

b. Normalize the feature matrix (e.g., log-transform).

c. Run SIAMCAT with default parameters for LASSO feature selection in a 5x5-fold cross-validation.

d. Extract the list of consistently selected features across folds.
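The repeated-subsampling idea from the third bullet can be sketched generically in Python: draw many 80% subsets of the samples, run a feature selector on each, and record how often each feature is chosen. The `select` callable is a stand-in assumption; in practice it would fit, for example, a LASSO model on the given sample indices. The helper name is hypothetical.

```python
import random
from collections import Counter
from typing import Callable, Dict, List

# A selector receives sample indices and returns the feature IDs it selected.
Selector = Callable[[List[int]], List[str]]


def selection_frequency(n_samples: int, select: Selector, n_iter: int = 100,
                        frac: float = 0.8, seed: int = 0) -> Dict[str, float]:
    """Fraction of subsamples (drawn without replacement) in which each feature was selected."""
    rng = random.Random(seed)
    size = max(1, int(frac * n_samples))
    counts: Counter = Counter()
    for _ in range(n_iter):
        subset = rng.sample(range(n_samples), size)
        counts.update(set(select(subset)))  # count each feature at most once per iteration
    return {feature: c / n_iter for feature, c in counts.items()}
```

Features selected in a high fraction of subsamples are the robust signals; features that appear only occasionally are likely noise.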

Q4: When should I choose an exact algorithm over a heuristic or approximate method? A: The decision framework depends on your primary research question and resource constraints. Use this table:

| Scenario | Recommended Approach | Rationale | Example in Microbiome Analysis |

|---|---|---|---|

| Discovery Phase, Large Dataset (n>1000) | Heuristic or Subsampling | Prioritize speed and exploration to identify broad patterns. | Using ANCOM-BC on genus-level aggregated data instead of ASV-level. |

| Confirmatory Analysis, Key Hypothesis | Exact Algorithm (if feasible) | Requires precise p-values and unbiased estimates for publication. | Running full DESeq2 on a filtered, high-confidence set of ASVs. |

| Very High Dimensionality (p >> n) | Approximation or Regularization | Necessity; unpenalized fits are ill-posed (no unique solution) or unstable. | Using glmnet for classification instead of a standard logistic regression. |

| Resource-Limited (Time/Memory) | Subsampling | Mandatory to obtain any result. Must report and justify subsampling parameters. | Performing PERMANOVA on a rarefied, subsampled community table. |

| Assessing Algorithm Stability | Subsampling + Exact Method | Use bootstrap/subsampling to quantify uncertainty introduced by heuristics. | Comparing clustering results from 100 rarefied UMAP embeddings. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Experiments |

|---|---|

| High-Performance Computing (HPC) Cluster | Provides the parallel processing and high memory needed for exact algorithms on large data. |

| R/Python Environment Manager (Conda, renv) | Ensures computational reproducibility by pinning exact software and library versions. |

| Sparse Matrix Representations (R: Matrix, Python: scipy.sparse) | Reduces memory footprint for storing and operating on large, zero-inflated count tables. |

| Benchmarking Suite (e.g., microbenchmark or bench in R; timeit and tracemalloc in Python) | Empirically compares the runtime of different algorithmic choices; bench (R) and tracemalloc (Python) also report memory allocation. |

| Fixed Random Seed (e.g., set.seed(123)) | Crucial for making subsampling, stochastic heuristics, and approximations reproducible. |
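A minimal Python sketch of such a benchmark follows. It uses only the standard library, and the helper is hypothetical. It records wall-clock time with time.perf_counter and peak Python heap allocation with tracemalloc for a single call.

```python
import time
import tracemalloc
from typing import Any, Callable, Tuple


def profile_call(fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Tuple[Any, float, int]:
    """Run fn once; return (result, wall-clock seconds, peak traced heap bytes).

    tracemalloc sees only allocations routed through Python's allocator, so memory
    used by some C extensions, or by subprocesses, may be under-reported.
    """
    tracemalloc.start()
    start = time.perf_counter()
    try:
        result = fn(*args, **kwargs)
    finally:
        elapsed = time.perf_counter() - start
        _current, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return result, elapsed, peak
```

Call it once per method on the same input. Repeat the runs and report the median, because single timings are noisy.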

Experimental Protocol: Evaluating Trade-offs in Algorithm Selection

Objective: To systematically evaluate the cost-accuracy trade-off between exact, approximate, and subsampled algorithms for differential abundance analysis.

Methodology:

- Dataset: Use a publicly available, large microbiome dataset (e.g., >200 samples, >50k ASVs from MG-RAST or Qiita).

- Ground Truth: Define a "ground truth" signal by running an exact algorithm (DESeq2) on a small, manageable random subset of the data (e.g., 50 samples, 5k most abundant ASVs) where it is feasible.

- Interventions: Apply the following methods to the full dataset:

- A. Exact: DESeq2 (full, if possible, or until memory failure).

- B. Approximation: ALDEx2 with t-test and glm effect size.

- C. Heuristic Subsampling: Variance-based pre-filtering (top 10% ASVs) followed by DESeq2.

- D. Random Subsampling: Rarefy to 10k reads/sample, then run DESeq2.

- Metrics: For each method, record: (a) Wall-clock time, (b) Peak memory usage (RSS), (c) Number of significant ASVs (FDR < 0.05), and (d) Overlap with the "ground truth" significant ASVs (Jaccard index).

- Visualization: Plot a 3D trade-off surface (Time vs. Memory vs. Jaccard Index).
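The overlap metric in (d) is the Jaccard index, |A ∩ B| / |A ∪ B|. The Python sketch below computes it, using hypothetical helpers. Treating two empty sets as identical (index 1.0) is a convention assumed here.

```python
from typing import Dict, Iterable


def jaccard(a: Iterable[str], b: Iterable[str]) -> float:
    """|A ∩ B| / |A ∪ B| for two sets of significant ASV IDs (1.0 when both are empty)."""
    sa, sb = set(a), set(b)
    union = sa | sb
    return len(sa & sb) / len(union) if union else 1.0


def overlap_with_reference(reference: Iterable[str],
                           results: Dict[str, Iterable[str]]) -> Dict[str, float]:
    """Jaccard index of each method's significant ASVs against the reference set."""
    ref = set(reference)
    return {method: jaccard(ref, hits) for method, hits in results.items()}
```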

Algorithm Selection Decision Workflow

Microbiome Analysis Computational Pipeline

Technical Support Center: Troubleshooting Containerized Pipelines for Microbiome Research

This support center addresses common challenges researchers face when deploying high-dimensional microbiome analysis pipelines (e.g., QIIME 2, MetaPhlAn, HUMAnN) on major cloud platforms. The context is efficient computational resource management for large-scale 16S rRNA and metagenomic shotgun sequencing data.

Troubleshooting Guides & FAQs

Q1: My Docker pipeline (e.g., for taxonomic profiling) fails on AWS Batch with an "Insufficient Docker storage space" error. How do I resolve this? A: This occurs when the Docker image layer cache on the EC2 instance fills the allocated Amazon Elastic Block Store (EBS) volume. Microbiome pipelines that pull multiple large images (e.g., Ubuntu base images, specific tool suites) can quickly exhaust the default storage. That storage size is set by the compute environment's AMI and launch template, not by the instance type (e.g., m5.large).

- Solution: Configure the launch template for your Batch compute environment to use a larger root EBS volume (minimum 50 GB recommended). For standalone EC2 instances, use the instance's user data to point Docker's storage (configured in daemon.json) at a dedicated, larger EBS volume.

- Experimental Protocol:

- AWS Batch Compute Environment Setup: In the AWS Console, create a new compute environment. Under "EC2 configuration," specify a custom launch template.

- Launch Template Creation: In the EC2 Console, create a launch template. In the "Storage" section, increase the size of the root volume (e.g., /dev/xvda) to 50 GiB.

- Alternative Docker Storage: For greater control, attach a second EBS volume (e.g., 100 GiB gp2) at /dev/sdb. On Nitro-based instances such as m5, this volume appears to the operating system as an NVMe device (e.g., /dev/nvme1n1). In the template's "Advanced details," add a user data script that formats and mounts this volume. The script should then configure Docker to use the volume by setting data-root in /etc/docker/daemon.json and restarting the Docker daemon. AWS Batch accepts launch template user data only in MIME multi-part format.
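The daemon.json part of that user data could be written in Python as below. User data scripts are more often shell, so treat this as an illustration of the configuration change only. The sketch makes three assumptions:

- The second volume is already formatted and mounted at a hypothetical mount point such as /mnt/docker-data.
- The script runs as root.
- Docker is restarted afterwards (e.g., systemctl restart docker) so that the new data-root takes effect.

Existing daemon.json settings are kept rather than overwritten.

```python
import json
from pathlib import Path


def set_docker_data_root(daemon_json: Path, data_root: str) -> dict:
    """Point Docker's data-root at a larger volume, preserving other keys."""
    config: dict = {}
    if daemon_json.exists() and daemon_json.read_text().strip():
        config = json.loads(daemon_json.read_text())
    # data-root is where Docker stores images, layers, and container state.
    config["data-root"] = data_root
    daemon_json.parent.mkdir(parents=True, exist_ok=True)
    daemon_json.write_text(json.dumps(config, indent=2) + "\n")
    return config
```

A typical call is set_docker_data_root(Path("/etc/docker/daemon.json"), "/mnt/docker-data"). Docker does not move existing images to a new data-root, so run this before the first image pull on a fresh instance.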

Q2: Singularity containers for sensitive microbiome data fail to run on GCP due to permission issues with bind mounts. What's the correct configuration? A: Singularity is often used in HPC and security-conscious environments. It runs processes inside the container as the invoking host user, with that user's UID/GID and permissions. On Google Compute Engine (GCE), that user may not own or have write access to the bound host directories. The instance's service account may also lack the scopes needed for Cloud Storage access. Either problem causes writes to fail.

- Solution: Ensure consistent UID/GID mapping between the host and container, and configure the GCE instance service account with appropriate scopes.

- Experimental Protocol:

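- Build Container with Known UID/GID: When writing the Singularity definition file for your analysis tool (e.g., Kraken2), create a user with a specific UID/GID that matches the host account that will run the container.

- Launch GCE Instance with Correct Scope: Use gcloud compute instances create to create an instance whose service account has full API access (--scopes=cloud-platform). Alternatively, attach a custom service account (--service-account) that has only the storage permissions it needs.

- Run with --fakeroot or --cleanenv. The --cleanenv option prevents host environment variables from leaking into the container.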
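A quick host-side check before launching the container can catch the most common failure. The Python sketch below is a hypothetical helper (POSIX only). It reports any bind-mount source directory that the current user cannot write to. Without --fakeroot, Singularity runs as this same user, so these are the permissions the container sees. os.access always succeeds for root, so run the check as the user who will invoke Singularity.

```python
import os
from pathlib import Path
from typing import Iterable, List


def check_bind_dirs(paths: Iterable[str]) -> List[str]:
    """List host directories that the current user cannot write into."""
    uid, gid = os.getuid(), os.getgid()
    problems: List[str] = []
    for path in map(Path, paths):
        if not path.is_dir():
            problems.append(f"{path}: missing or not a directory")
        elif not os.access(path, os.W_OK | os.X_OK):
            st = path.stat()
            problems.append(
                f"{path}: not writable by uid={uid} gid={gid} "
                f"(owner uid={st.st_uid} gid={st.st_gid})"
            )
    return problems
```

For example, check_bind_dirs(["/data/fastq", "/data/results"]) (hypothetical paths) returns an empty list when both directories are usable. Otherwise, each entry names the directory and its owner, which you can compare with the UID/GID built into the image.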

Q3: Multi-container pipeline (Docker Compose) deployed on Azure Container Instances (ACI) cannot share files between containers for a multi-step microbiome QC process. A: ACI supports multi-container groups, but it lacks the host-managed named volumes that local Docker Compose uses to share data between services. Containers in a group can share an ephemeral emptyDir volume. Persistent data exchange, or output you need after the group stops, requires an external shared volume.

- Solution: Use an Azure File Share mounted to all containers in the group via the SMB protocol.

- Experimental Protocol:

- Create Azure File Share: In your Azure resource group, create a general-purpose v2 storage account and, within it, a file share (e.g., named pipeline-data).

- Deploy with ARM/JSON Template: Structure your container group definition (deploy.json) to include the volumes property referencing the Azure File Share. Mount that volume in each container (e.g., fastq-input, quality-filter, assembly).

- Deploy:

az deployment group create --resource-group myResourceGroup --template-file deploy.json

(az container create --file expects a YAML container group definition rather than an ARM template.)
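The volumes wiring can be generated rather than written by hand. The Python sketch below emits an ARM template for a single container group in which every container mounts the same Azure File share at /data. The group name, image references, resource sizes, and API version (2021-09-01) are assumptions to adapt. The storage account key is left as a securestring parameter so that it is not stored in deploy.json.

```python
import json
from typing import Dict, List, Tuple


def aci_template(share_name: str, storage_account: str,
                 containers: List[Tuple[str, str]],
                 mount_path: str = "/data") -> Dict:
    """ARM template: one container group whose containers share an Azure File share."""
    volume = "shared-data"
    container_defs = [
        {
            "name": name,
            "properties": {
                "image": image,
                "resources": {"requests": {"cpu": 1, "memoryInGB": 2}},
                # Every container mounts the same share at the same path.
                "volumeMounts": [{"name": volume, "mountPath": mount_path}],
            },
        }
        for name, image in containers
    ]
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        # The key is supplied at deploy time instead of being written to the file.
        "parameters": {"storageAccountKey": {"type": "securestring"}},
        "resources": [{
            "type": "Microsoft.ContainerInstance/containerGroups",
            "apiVersion": "2021-09-01",
            "name": "microbiome-qc",
            "location": "[resourceGroup().location]",
            "properties": {
                "osType": "Linux",
                "restartPolicy": "Never",
                "containers": container_defs,
                "volumes": [{
                    "name": volume,
                    "azureFile": {
                        "shareName": share_name,
                        "storageAccountName": storage_account,
                        "storageAccountKey": "[parameters('storageAccountKey')]",
                    },
                }],
            },
        }],
    }


def write_template(path: str, template: Dict) -> None:
    with open(path, "w") as fh:
        json.dump(template, fh, indent=2)
```

Write the file with write_template("deploy.json", aci_template("pipeline-data", "<storage-account>", [("fastq-input", "<image>"), ...])). Then deploy it with the az deployment group create command above, adding --parameters storageAccountKey=<key>.

Containers in a group start together rather than in sequence. Each QC step therefore still has to wait for its predecessor's output on the share, for example by polling for a marker file. That is a convention you would add to your own images.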


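Q4: My pipeline's cloud cost is unpredictable. How can I estimate and cap spending for long-running metagenomic assembly jobs? A: Combine cloud budgeting tools, spot/preemptible instances, and architectural decisions to limit costs. Budget alerts notify you but do not stop spending on their own. A hard cap requires an automated action triggered by the alert, such as an AWS Budgets action or a Pub/Sub-triggered function on GCP.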

Table 1: Cost Management Strategies Across Cloud Platforms

| Strategy | AWS Implementation | GCP Implementation | Azure Implementation | Best for Microbiome Step |
|---|---|---|---|---|
| Budget Alerts | AWS Budgets with SNS alerts | GCP Budgets & Alerts via Pub/Sub | Azure Cost Management + Budget alerts | Overall project monitoring |
| Low-Cost Compute | EC2 Spot Instances (AWS Batch) | Spot or preemptible VMs (GCE) | Spot VMs (Azure Batch) | Embarrassingly parallel tasks (read alignment, gene calling) |
| Autoscaling | Auto Scaling Groups for EC2 | Managed Instance Groups for GCE | Virtual Machine Scale Sets | Variable queue length in workflow managers (Nextflow, Snakemake) |
| Storage Lifecycle | S3 Lifecycle to Infrequent Access | Cloud Storage Object Lifecycle to Archive | Blob Storage Lifecycle to Cool/Archive | Long-term raw data (FASTQ) storage post-analysis |
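A rough estimate multiplies instances, hours, and hourly rate, adjusted for any spot discount and for hours lost to interruptions. The Python sketch below does this arithmetic and flags when a set of jobs approaches a budget. All prices, discounts, and overhead fractions are placeholders; replace them with current rates for your region and instance type.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class JobEstimate:
    instances: int               # VMs running in parallel
    hours: float                 # expected wall-clock hours per VM
    hourly_rate: float           # on-demand price per VM-hour (placeholder)
    spot_discount: float = 0.0   # 0.6 means 60% below on-demand
    rerun_overhead: float = 0.0  # extra fraction of hours lost to interruptions

    def cost(self) -> float:
        billed_hours = self.hours * (1 + self.rerun_overhead)
        rate = self.hourly_rate * (1 - self.spot_discount)
        return self.instances * billed_hours * rate


def check_budget(jobs: Iterable[JobEstimate], budget: float,
                 alert_fraction: float = 0.8) -> Tuple[float, bool, bool]:
    """Return (total cost, fits within budget, crosses the alert threshold)."""
    total = sum(job.cost() for job in jobs)
    return total, total <= budget, total >= alert_fraction * budget
```

Consider one on-demand assembly VM running for 48 hours at a placeholder $2.00/hour (96.00). Add ten spot VMs running for 4 hours at $0.50/hour with a 60% discount and 25% rerun overhead (10.00). The total is 106.00, which fits a 120.00 budget but crosses an 80% alert threshold.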

Research Reagent Solutions: Essential Cloud & Software Toolkit

Table 2: Key Research Reagent Solutions for Cloud-Native Microbiome Analysis

| Item/Reagent | Function in the "Experiment" (Pipeline Deployment) | Example/Note |
|---|---|---|
| Container Images | Reproducible, isolated environments for analysis tools. | Docker Hub: qiime2/core:2024.5, staphb/sourmash. Singularity Library: library://someresearch/tool. |
| Orchestration Engine | Manages multi-step pipeline execution across cloud resources. | Nextflow (with AWS Batch, Google Cloud Batch, or Azure Batch plugins), Snakemake (with Tibanna or Cloud profiles). |
| Workflow Language | Defines the computational steps and their dependencies. | CWL (Common Workflow Language), WDL (Workflow Description Language). Can be executed by Cromwell on cloud backends. |
| Object Storage | Durable, scalable storage for massive input/output files (FASTQ, BAM, profiles). | AWS S3, GCP Cloud Storage, Azure Blob Storage. Ideal for sequencing reads and final results. |
| Secret Management | Securely stores and provides API keys, database passwords, etc., to containers. | AWS Secrets Manager, GCP Secret Manager, Azure Key Vault. For accessing private container registries or external databases. |
| Container Registry | Stores and manages versioned container images. | AWS ECR, GCP Artifact Registry, Azure Container Registry. Hosts your custom-built pipeline images. |

Workflow & Architecture Diagrams

Microbiome Pipeline Cloud Execution Flow

Container Failure Troubleshooting Path

Diagnosing Bottlenecks and Optimizing Performance for Large-Scale Studies

Troubleshooting Guides & FAQs

Q1: My pipeline for processing 16S rRNA amplicon sequences is running extremely slowly. The CPU usage reported by top is low (<30%). What is the most likely bottleneck and how can I confirm it?

A1: Low CPU usage combined with long runtime most often points to an I/O (Input/Output) bottleneck. This is common when reading or writing large FASTQ or BIOM files over a network drive or on a slow disk. To confirm on Linux, run iostat -dx 2 (from the sysstat package); macOS's built-in iostat does not support -x. On Windows, use the Disk tab of Resource Monitor. Check the await column on Linux (split into r_await and w_await in newer sysstat releases) or the Response Time (ms) column in Resource Monitor for your data disk. If the value stays consistently high (>50 ms), the pipeline is spending its time waiting on read/write operations.
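On Linux, iostat reads its counters from /proc/diskstats. You can compute the same await figure directly when iostat is unavailable, for example in a minimal container. The Python sketch below takes two snapshots and divides the time spent on reads and writes by the number of I/Os completed in between. The device name you pass (e.g., sda or nvme0n1) is an assumption about your system.

```python
import time
from typing import Dict, Tuple

Counters = Tuple[int, int, int, int]  # reads, read_ms, writes, write_ms


def parse_diskstats(text: str) -> Dict[str, Counters]:
    """Extract per-device completed I/Os and time spent (ms) from /proc/diskstats."""
    stats: Dict[str, Counters] = {}
    for line in text.splitlines():
        f = line.split()
        if len(f) >= 11:
            # f[3]=reads completed, f[6]=ms reading, f[7]=writes completed, f[10]=ms writing
            stats[f[2]] = (int(f[3]), int(f[6]), int(f[7]), int(f[10]))
    return stats


def average_await_ms(before: Counters, after: Counters) -> float:
    """Mean milliseconds per completed I/O over the interval (iostat's await)."""
    ios = (after[0] - before[0]) + (after[2] - before[2])
    wait_ms = (after[1] - before[1]) + (after[3] - before[3])
    return wait_ms / ios if ios else 0.0


def sample_await(device: str, interval: float = 2.0) -> float:
    """Linux only: sample /proc/diskstats twice, interval seconds apart."""
    with open("/proc/diskstats") as fh:
        before = parse_diskstats(fh.read())[device]
    time.sleep(interval)
    with open("/proc/diskstats") as fh:
        after = parse_diskstats(fh.read())[device]
    return average_await_ms(before, after)
```

If sample_await("nvme0n1") consistently returns values above the 50 ms rule of thumb, you have the same I/O bottleneck that iostat would show.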

Q2: My MetaPhlAn analysis crashes with an 'Out of Memory (OOM)' error midway. How can I profile memory usage to adjust my resources?

A2: Use htop to watch memory in real time and /usr/bin/time -v to record the peak. For a detailed time-series profile, use psrecord (pip install psrecord). The protocol below gathers the data you need before resubmitting the job.

Experimental Protocol: Profiling Pipeline Memory Usage

- Install profiling tools: pip install psrecord, or ensure GNU time is installed (brew install gnu-time on macOS, where it is invoked as gtime).

- For a single command, profile with /usr/bin/time -v <command> (gtime -v <command> on macOS) or with psrecord "<command>" --include-children --interval 1 --plot memory.png --log memory.txt.

- Analyze the output. From time -v, note the "Maximum resident set size". From psrecord, inspect the plot for memory growth trends.

- Request cluster resources, or configure your pipeline's memory allocation, to exceed the observed peak by 10-20%.
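If you launch pipeline steps from a Python driver script, you can also capture peak memory programmatically. The sketch below uses only the standard library and is POSIX only; the helper names are hypothetical. It runs a command, reads the peak resident set size of its child processes with resource.getrusage, and applies the 10-20% margin from the last step.

ru_maxrss reports the largest single descendant process, not the sum across a process tree. For tools that spawn several heavy subprocesses, psrecord --include-children is the better measure.

```python
import math
import resource
import subprocess
import sys
from typing import Sequence


def peak_rss_mib(cmd: Sequence[str]) -> float:
    """Run cmd to completion and return the peak child RSS in MiB.

    RUSAGE_CHILDREN is a running maximum over every child this process has
    waited for, so call this once per fresh Python process for a clean value.
    """
    subprocess.run(cmd, check=True)
    max_rss = resource.getrusage(resource.RUSAGE_CHILDREN).ru_maxrss
    # ru_maxrss is in kilobytes on Linux but in bytes on macOS.
    rss_bytes = max_rss if sys.platform == "darwin" else max_rss * 1024
    return rss_bytes / 2**20


def memory_request_mib(peak_mib: float, margin: float = 0.2) -> int:
    """Observed peak plus a safety margin, rounded up to whole MiB."""
    return math.ceil(peak_mib * (1 + margin))
```

For example, peak_rss_mib(["metaphlan", ...]) followed by memory_request_mib(peak) gives a value in MiB for your scheduler's memory request. The MetaPhlAn arguments are elided here.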

Q3: When running a large-scale PERMANOVA test in R, all CPU cores spike to 100% but progress is slow. How can I identify if the process is truly compute-bound or suffering from another issue?

A3: High CPU usage usually indicates a compute-bound process. It can also reflect inefficient parallelization, such as thread contention or oversubscription (for example, each parallel worker also spawning a multithreaded BLAS library). Use a system monitor that shows per-core utilization, such as htop. If all cores stay at or near 100%, the task is likely compute-bound. To assess parallel efficiency, run a smaller test and compare wall-clock time using 1 vs. N cores. A speedup well below N indicates parallel overhead or a substantial serial fraction.

Experimental Protocol: Benchmarking Parallel Scaling

- Take a representative subset of your data (e.g., 10%).

- Run your analysis script while controlling the number of CPU cores (e.g., via OMP_NUM_THREADS or the specific package's ncores parameter).

- Measure the wall-clock time for runs with cores = 1, 2, 4, 8.

- Calculate the speedup: Time(1 core) / Time(N cores).

- Plot cores vs. speedup. Deviation from linear scaling suggests parallel overhead may be limiting further gains.
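The speedup calculation can be tabulated with a short Python function. The sketch below also reports parallel efficiency and the Karp-Flatt metric, an estimate of the effective serial fraction; both go beyond the steps above. The timings in the example are illustrative, not measurements.

```python
import math
from typing import Dict


def scaling_report(wall_times: Dict[int, float]) -> Dict[int, Dict[str, float]]:
    """Speedup, efficiency, and Karp-Flatt serial fraction per core count.

    wall_times maps core count -> wall-clock seconds and must include 1 core.
    """
    t1 = wall_times[1]
    report: Dict[int, Dict[str, float]] = {}
    for cores, t in sorted(wall_times.items()):
        speedup = t1 / t
        efficiency = speedup / cores  # 1.0 would be perfect linear scaling
        if cores > 1:
            serial = (1 / speedup - 1 / cores) / (1 - 1 / cores)
        else:
            serial = math.nan  # undefined for a single core
        report[cores] = {"speedup": speedup, "efficiency": efficiency,
                         "serial_fraction": serial}
    return report
```

With illustrative timings of {1: 100, 2: 55, 4: 32.5, 8: 21.25} seconds, the speedup at 8 cores is about 4.7 (efficiency about 0.59). The serial fraction stays at 0.1 across core counts. If the serial fraction instead grows with core count, the cause is parallel overhead rather than inherently serial work.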

Q4: My Snakemake pipeline on a high-memory node seems to hang indefinitely during a file copying step. What I/O monitoring commands can I run to see what's happening?

A4: Use the lsof and iotop commands. First, find the process ID (PID) of the Snakemake rule. Then, use lsof -p <PID> to see all files the process has open. Use sudo iotop -p <PID> to see the real-time I/O bandwidth (read/write speed) of that specific process. If the write speed is very low or zero, it may indicate a network filesystem hang or permission issue.
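When iotop is not installed or sudo is unavailable, Linux exposes the same per-process counters in /proc/<PID>/io, which the process owner can typically read. The Python sketch below is Linux only, and the helper names are hypothetical. It samples rchar and wchar twice and converts the difference into a rate. These counters track bytes moved through read and write system calls, so they also cover network filesystems.

```python
import time
from typing import Dict


def parse_proc_io(text: str) -> Dict[str, int]:
    """Parse the 'key: value' lines of /proc/<pid>/io into a dict."""
    counters: Dict[str, int] = {}
    for line in text.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            counters[key.strip()] = int(value)
    return counters


def io_rates(before: Dict[str, int], after: Dict[str, int],
             seconds: float) -> Dict[str, float]:
    """Bytes per second read/written through system calls."""
    return {k: (after[k] - before[k]) / seconds for k in ("rchar", "wchar")}


def sample_pid_io(pid: int, seconds: float = 5.0) -> Dict[str, float]:
    """Linux only: sample /proc/<pid>/io twice, seconds apart."""
    path = f"/proc/{pid}/io"
    with open(path) as fh:
        before = parse_proc_io(fh.read())
    time.sleep(seconds)
    with open(path) as fh:
        after = parse_proc_io(fh.read())
    return io_rates(before, after, seconds)
```

A wchar rate that stays at zero while the copy rule is still running matches the stalled-write symptom described above.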

Table 1: Common Monitoring Tools and Their Primary Use

| Tool Name | Primary Use Case | Key Metric to Observe | Typical Command |
|---|---|---|---|
| htop | CPU & Memory Overview | Per-core CPU %, Resident Memory | htop |
| iostat | Disk I/O Bottlenecks | await (ms; r_await/w_await in newer sysstat), %util | iostat -dx 2 |
| iotop | Process-level I/O | DISK READ, DISK WRITE | sudo iotop |
| /usr/bin/time | Overall Resource Summary | %CPU, Max RSS (Memory) | /usr/bin/time -v command (gtime -v on macOS) |
| psrecord | Time-series Memory/CPU | Memory (MiB) over Time | psrecord "command" --plot plot.png |
| nvidia-smi | GPU Utilization | GPU-Util %, Memory-Usage | nvidia-smi -l 1 |

Table 2: Symptom-to-Bottleneck Diagnosis Guide

| Observed Symptom | Likely Bottleneck | First Tool to Use | Possible Solution |
|---|---|---|---|
| Low CPU %, long wait times | I/O | iostat, iotop | Use local SSD, optimize file format (e.g., .feather over .csv) |
| Process killed, OOM error | Memory | htop, psrecord | Increase RAM, stream data, use memory-efficient data types |
| High CPU %, slow scaling | CPU/Parallelization | htop (per-core) | Check parallel scaling, use optimized libraries (e.g., Intel MKL) |
| Network timeouts, file errors | Network I/O | lsof, df -h | Check network mount, reduce small file transfers |

Workflow & Logical Diagrams

Bottleneck Identification Decision Tree

Systematic Profiling and Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Resource Profiling

| Tool/Solution | Function in Profiling | Typical Use Case in Microbiome Analysis |
|---|---|---|
| Snakemake/Nextflow | Workflow Management | Built-in per-step resource reporting (Snakemake's benchmark directive; Nextflow's -with-report and -with-trace) records runtime and CPU/memory usage for each rule or process. |
| Conda/Bioconda | Environment Management | Ensures reproducible installations of profiling tools (e.g., psrecord, perf) alongside bioinformatics packages. |
| /usr/bin/time (GNU) | Basic Resource Logger | Records total CPU time, max memory (RSS), and I/O for any pipeline command; crucial for sizing initial SLURM job requests. |
| psrecord | Time-Series Profiler | Graphs memory and CPU usage over time for a process, helping identify memory leaks in long-running R/Python scripts. |
| iostat (sysstat) | Disk I/O Monitor | Diagnoses slow read/write bottlenecks during large file operations, common in metagenomic assembly or alignment. |
| Threading Building Blocks (TBB) | Parallelization Library | Used internally by DADA2 (via RcppParallel), and therefore by QIIME 2's DADA2 plugin, to manage CPU threads efficiently and reduce parallel overhead. |
| Feather/Parquet File Formats | Efficient Data Storage | Relieves I/O bottlenecks by enabling faster reads and writes of large feature tables than classic TSV/CSV. |

Technical Support Center: Troubleshooting & FAQs

FAQ 1: Common Alignment and Phylogenetic Placement Issues

- Q: My sequence alignment with MUSCLE or MAFFT is taking days to complete and using all my server's memory. What are the primary optimization strategies?

- A: The primary strategies involve leveraging faster algorithms, approximate methods, and efficient resource management.

- Use Profile Alignments: To add new sequences to an existing alignment, use MUSCLE's -profile mode or MAFFT's --add option instead of re-aligning from scratch.

- Employ Heuristics: For large datasets (>~10,000 sequences), use MAFFT's --parttree or --retree 1 option. Either greatly speeds up alignment at a minor potential cost to accuracy.

- Control Resources: Explicitly limit the number of threads (--thread n in MAFFT) and memory. For massive jobs, consider MAFFT's --large option, which prioritizes memory efficiency over speed.

- Tool Selection: Consider ultra-fast alignment tools such as Clustal Omega for very large datasets, or PaRa for rapid parallel alignment.
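These choices can be encoded in a small command builder so that large jobs get the right flags automatically. The Python sketch below uses only the MAFFT options named above (--thread, --add, --parttree, --large). The 10,000-sequence threshold follows the guideline above. Treating --large and --parttree as alternatives is a simplifying assumption, so check your MAFFT version's documentation before combining them.

```python
from typing import List, Optional


def mafft_command(input_fasta: str, n_seqs: int, threads: int = 4,
                  existing_alignment: Optional[str] = None,
                  low_memory: bool = False,
                  large_threshold: int = 10_000) -> List[str]:
    """Build a MAFFT argument list; MAFFT writes the alignment to stdout."""
    cmd = ["mafft", "--thread", str(threads)]
    if existing_alignment is not None:
        # Add new sequences to an existing alignment instead of realigning all.
        return cmd + ["--add", input_fasta, existing_alignment]
    if low_memory:
        cmd.append("--large")      # memory-efficient mode, slower
    elif n_seqs > large_threshold:
        cmd.append("--parttree")   # fast guide tree for large inputs
    cmd.append(input_fasta)
    return cmd
```

Because MAFFT writes the alignment to standard output, run the list with subprocess.run(cmd, stdout=out_handle, check=True), where out_handle is a file opened for writing.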


- Q: When performing phylogenetic placement with EPA-ng or pplacer, the analysis fails with an "out of memory" error on my reference tree. What can I do?

- A: This is often due to an excessively large reference package.

- Reduce Reference Size: Prune your reference phylogenetic tree and alignment. Remove redundant sequences (e.g., using