Solving the Perfect Separation Problem: Advanced Statistical Methods for Reliable Microbiome Differential Abundance Analysis


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing perfect separation in microbiome differential abundance analysis. We first define the problem and its consequences for traditional statistical models. We then detail specialized methods like penalized regression (e.g., Firth), Bayesian approaches, and zero-inflated/hurdle models. A practical troubleshooting section covers data preprocessing and model diagnostics. Finally, we compare method performance across simulation studies and real-world applications. The conclusion synthesizes best practices for robust biomarker discovery and therapeutic target identification in microbiome research.

What is Perfect Separation? Diagnosing a Critical Pitfall in Microbiome Statistics

Defining Perfect Separation (Quasi-Complete Separation) in Logistic and Count Models

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My model fitting algorithm (e.g., in R's glm or DESeq2) fails with a warning about "perfect separation" or "coefficients diverging to infinity." What does this mean and what is the immediate cause?

A: This indicates a scenario where one or more predictor variables perfectly or near-perfectly predict the binary outcome (in logistic models) or group membership for zero counts (in count models like negative binomial). For instance, in microbiome data, if a particular bacterial taxon is only present in healthy controls and completely absent in all disease samples, the model cannot estimate a finite log-odds coefficient—it theoretically approaches infinity. The immediate cause is the lack of overlap in the distributions of predictors between outcome groups.

Q2: What is the practical difference between "Complete" and "Quasi-Complete" Separation? A:

| Separation Type | Definition in Microbiome Context | Example |

|---|---|---|

| Complete Separation | A predictor variable perfectly distinguishes all samples between groups. | Taxon X has >0 reads in all Healthy samples and exactly 0 reads in all Disease samples. |

| Quasi-Complete Separation | A predictor variable perfectly distinguishes a subset of samples between groups. | Taxon Y has >0 reads in all Healthy samples and 0 reads in most Disease samples, but has >0 reads in a few Disease samples. |

Q3: What are the key diagnostic signs of separation in my analysis output? A: Look for these quantitative signals in your model summary:

| Software/Symptom | Indicator | Typical Value |
|---|---|---|
| R glm (logistic) | Large coefficient estimate (absolute value > 10) with an enormous standard error (> 5). | Coefficient: 35.22, Std. Error: 582904.5 |
| R glm (logistic) | Wald test p-value | Often close to 1.0 |
| DESeq2 / edgeR | Extreme log2FoldChange | Absolute value > 20, with lfcSE > 10 |
| Bayesian Models | Poor convergence, extreme R-hat values. | R-hat >> 1.1 |

Q4: What experimental or data collection issues in microbiome studies most commonly lead to separation? A:

- Low Biomass Samples: True biological zeros in one condition are conflated with technical zeros from sampling depth.

- Over-aggressive Filtering: Removing low-prevalence features too early can artificially create separation.

- Batch Effects: If all samples from one experimental group are processed in a single batch, technical artifacts can induce separation.

- Biological Reality: The microbe may be a true biomarker, genuinely absent in one condition.

Experimental Protocol: Diagnostic Checklist for Separation

- Pre-modeling Check: Create a 2x2 contingency table (presence/absence by group) for each feature. Look for cells with zeros.

- Fit Initial Model: Run your standard differential abundance or association model (e.g., `DESeq2::DESeq`, `glm(family = binomial)`).

- Extract Coefficients: Isolate the model estimates, standard errors, and p-values into a results table.

- Flag Extreme Values: Apply filters: `abs(coef) > 10` AND `std_error > 5`. Flag these features for investigation.

- Visual Inspection: Generate a boxplot or violin plot of the flagged feature's abundance (e.g., CLR-transformed counts) across groups to confirm the separation pattern.

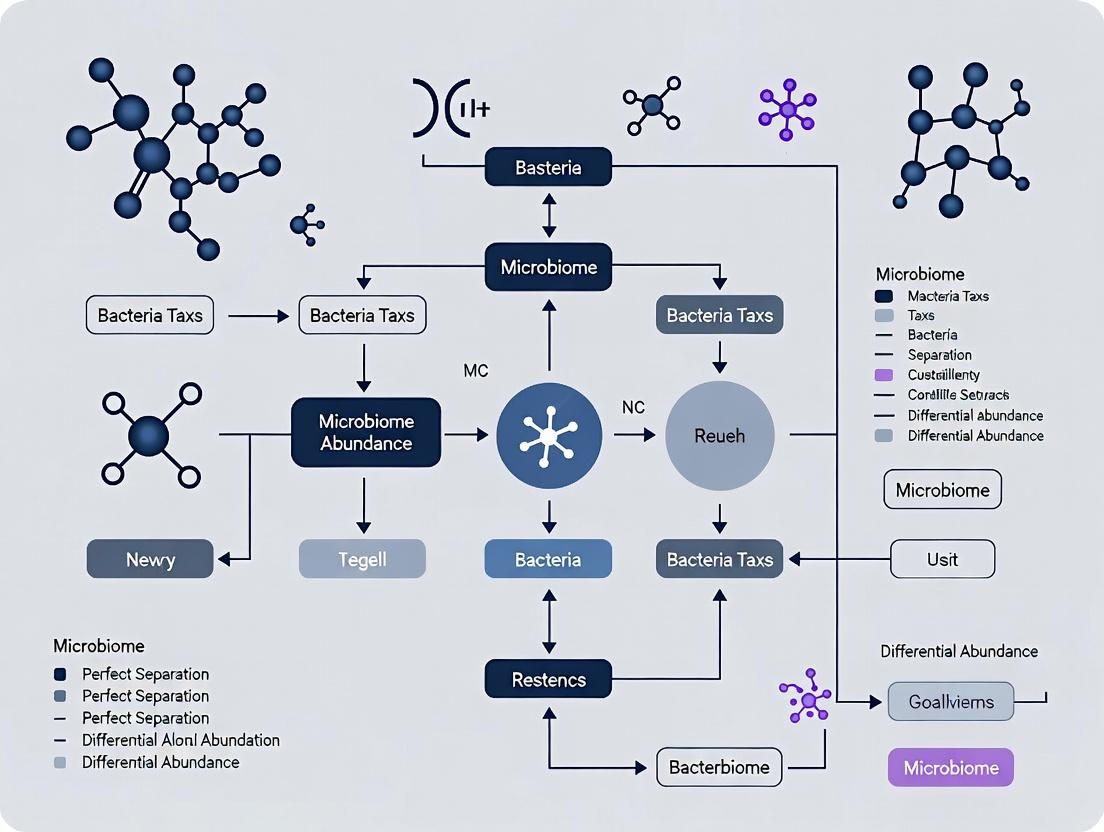

Diagram: Diagnostic Workflow for Perfect Separation
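The fit, extract, and flag steps of the checklist above can be sketched in base R. This is a minimal illustration on made-up presence/absence data: the feature names, the helper `fit_one`, and the toy values are assumptions, and the thresholds are the ones quoted in the checklist.

```r
# Toy data: 10 healthy (0) and 10 disease (1) samples; features are presence/absence.
group <- rep(c(0, 1), each = 10)
features <- list(
  taxon_overlap   = c(1, 1, 1, 0, 1, 0, 1, 1, 0, 1,  0, 1, 0, 0, 1, 0, 1, 0, 0, 1),
  taxon_separated = c(rep(1, 10), rep(0, 10))  # present in all healthy, absent in all disease
)

# Fit a logistic model per feature and return the estimate and its standard error.
fit_one <- function(presence, outcome) {
  # glm() warns rather than errors under separation, so warnings are silenced here
  fit <- suppressWarnings(glm(outcome ~ presence, family = binomial))
  est <- summary(fit)$coefficients["presence", ]
  c(coef = unname(est["Estimate"]), std_error = unname(est["Std. Error"]))
}

results <- as.data.frame(t(sapply(features, fit_one, outcome = group)))

# Flag features whose estimate and standard error are both extreme.
results$flag_separation <- abs(results$coef) > 10 & results$std_error > 5
print(results)
```

Only `taxon_separated` should be flagged; the overlapping feature yields a moderate coefficient with a standard error below 1.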

Q5: What are the recommended solutions to address perfect separation in my differential abundance analysis? A: Choose a method based on your model framework.

| Model Framework | Solution | Rationale & Implementation |
|---|---|---|
| Frequentist Logistic | Firth's Bias-Reduced Penalized Likelihood | Adds a penalty term to the likelihood, preventing coefficient divergence. Use the `logistf` R package. |
| Frequentist Logistic/Count | Data-Driven Penalization (e.g., `brglm2`) | Applies a general penalization to address separation. |
| Bayesian Models | Informative or Weakly-Informative Priors | Priors regularize coefficients, pulling extreme estimates toward a plausible range. Use `brms::brm` or `rstanarm`. |
| Microbiome-Specific | Zero-Inflated Models (e.g., `ziformula = ~group` in `glmmTMB`) | Explicitly models the zero-inflation component, which may be linked to the condition. |
| All Frameworks | Report with Caution | If the separation reflects a strong biological signal, report it as a candidate biomarker but acknowledge the statistical limitation. |

Experimental Protocol: Implementing Firth's Correction for Logistic Regression

- Install Package: `install.packages("logistf")`

- Prepare Data: Ensure your outcome is binary (0/1) and predictors are formatted correctly.

- Fit Model: `firth_model <- logistf(outcome ~ taxon_abundance + covariates, data = your_data)`

- Summarize Results: `summary(firth_model)` to obtain penalized coefficients, confidence intervals, and p-values.

- Compare: Contrast coefficient estimates with those from the failed `glm` model to see the regularization effect.
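To make the penalty concrete, the following base-R sketch iterates Firth's modified score equations directly on a toy 2x2 dataset. It is an illustration of the idea, not the `logistf` implementation: the function name `firth_logit`, the data, and the fixed iteration settings are assumptions, and in practice the package call from the protocol above is the better choice.

```r
# Firth-type logistic regression via modified-score iterations (illustrative sketch).
firth_logit <- function(X, y, max_iter = 100, tol = 1e-8) {
  beta <- rep(0, ncol(X))
  for (iter in seq_len(max_iter)) {
    p <- as.vector(plogis(X %*% beta))
    w <- p * (1 - p)
    info_inv <- solve(crossprod(X, X * w))        # inverse Fisher information (X'WX)^-1
    h <- rowSums((X %*% info_inv) * X) * w        # leverages (hat-matrix diagonal)
    score <- crossprod(X, y - p + h * (0.5 - p))  # Firth-modified score
    step <- as.vector(info_inv %*% score)
    beta <- beta + step
    if (max(abs(step)) < tol) break
  }
  setNames(beta, colnames(X))
}

# Hypothetical data: taxon absent in 15 controls; present in 5 controls and 12 cases.
# No case lacks the taxon, so ordinary maximum likelihood has no finite estimate.
taxon <- rep(c(0, 1), times = c(15, 17))
case  <- c(rep(0, 15), rep(0, 5), rep(1, 12))
X <- cbind(intercept = 1, taxon = taxon)

fit <- firth_logit(X, case)
print(fit)

# For a saturated 2x2 table the Firth estimate matches adding 0.5 to every cell.
closed_form <- log((12.5 * 15.5) / (0.5 * 5.5))
print(closed_form)
```

The penalized log odds ratio stays finite (about 4.26 here) even though one cell of the table is empty, which is the regularization effect the comparison step is meant to reveal.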

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Addressing Separation |
|---|---|
| `logistf` R Package | Implements Firth's penalized likelihood logistic regression to directly resolve separation. |
| `brglm2` R Package | Provides tools for bias reduction in generalized linear models, including detection and handling of separation. |
| `DESeq2` R Package | Fits negative binomial GLMs for count data; can be combined with observation weights from `zinbwave` (zero-inflated negative binomial) to account for condition-specific zeros. |
| `rstanarm` R Package | Enables Bayesian GLMs with regularizing priors (e.g., normal(0, 2)), which naturally handle separation. |
| Compositional Transform (CLR) | A pre-processing step (e.g., `microViz::tax_transform()` with a CLR transformation) to mitigate zeros before some multivariate analyses, but does not solve separation in supervised models. |

Diagram: Solution Pathways Based on Research Goal

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My statistical model (e.g., DESeq2, edgeR, logistic regression) fails with an error about "perfect separation" or "all-zero groups" when comparing two sample groups. What does this mean and what is the primary cause?

A1: This error indicates that your model cannot find a unique solution because one or more microbial features (ASVs/OTUs) are only present in one group and completely absent in the other. This is called "complete separation" or "perfect separation." In microbiome data, this is primarily caused by extreme sparsity (a high proportion of zeros) combined with the compositional nature of the data. A feature absent in the control group but present in the treatment group creates an infinite odds ratio, causing model convergence failure.

Q2: How can I diagnose if sparsity or compositionality is the main driver of separation issues in my dataset?

A2: Follow this diagnostic protocol:

- Calculate Sparsity Metrics: Compute the percentage of zero counts per sample and per feature across groups.

- Create a Presence/Absence Table: For each feature, tally the number of samples where it is present (count > 0) in Group A vs. Group B.

- Identify Separating Features: Use the following criteria to flag features likely to cause separation:

| Metric | Calculation | Threshold Indicating Problem |
|---|---|---|
| Feature Sparsity | (Number of zero samples) / (Total samples) | > 70% per feature |
| Group-wise Presence Skew | Presence in Group A vs. B (see table below) | One group count is zero |
| Prevalence Difference | abs(Prev in Group A - Prev in Group B) | > 0.8 (where prevalence is proportion of samples with non-zero counts) |

Example Diagnostic Table for Key Features:

| Feature ID | Total Zeros | Zeros in Group A | Zeros in Group B | Causes Separation? |

|---|---|---|---|---|

| OTU_781 | 18/20 (90%) | 10/10 (100%) | 8/10 (80%) | Yes (Absent in all A) |

| OTU_242 | 12/20 (60%) | 5/10 (50%) | 7/10 (70%) | No |

| OTU_543 | 15/20 (75%) | 10/10 (100%) | 5/10 (50%) | Yes (Absent in all A) |
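The metrics in the two tables above can be computed with a few lines of base R. The sketch below uses made-up counts chosen to reproduce the zero patterns of the example table (only the zero/non-zero pattern matters); the object names and count values are assumptions.

```r
# Samples 1-10 belong to Group A, samples 11-20 to Group B.
group <- rep(c("A", "B"), each = 10)

# Hypothetical counts matching the zero patterns in the example table.
counts <- rbind(
  OTU_781 = c(rep(0, 10), rep(0, 8), 4, 9),
  OTU_242 = c(rep(0, 5), 3, 1, 7, 2, 5, rep(0, 7), 6, 2, 8),
  OTU_543 = c(rep(0, 10), rep(0, 5), 2, 11, 3, 6, 1)
)

present <- counts > 0
prev_a <- rowMeans(present[, group == "A", drop = FALSE])  # prevalence in Group A
prev_b <- rowMeans(present[, group == "B", drop = FALSE])  # prevalence in Group B

diagnostics <- data.frame(
  sparsity            = rowMeans(!present),        # proportion of zero samples
  prev_a              = prev_a,
  prev_b              = prev_b,
  prev_diff           = abs(prev_a - prev_b),
  absent_in_one_group = prev_a == 0 | prev_b == 0  # group-wise presence skew
)
print(diagnostics)
```

Here `OTU_781` and `OTU_543` are flagged because they are absent from every Group A sample, matching the "Causes Separation?" column.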

Q3: What are the recommended experimental and bioinformatic protocols to mitigate separation while maintaining biological validity?

A3: Implement a multi-step protocol:

Protocol 1: Pre-analysis Filtering & Transformation

- Step 1: Prevalence Filtering. Remove features with very low prevalence (e.g., present in < 10% of samples in each group). This reduces sparsity from rare contaminants.

- Step 2: Add a Pseudocount Cautiously. Adding a small value (e.g., 1) to all counts can stabilize models but severely distorts compositional inferences. Recommended Alternative: Use Centered Log-Ratio (CLR) transformation with a multiplicative replacement strategy for zeros (using the zCompositions R package) to handle compositionality without introducing separation.

- Step 3: Employ Robust Models. Use statistical methods designed for sparse, compositional data:

- ANCOM-BC2: Models sampling fraction and corrects bias.

- LinDA: Linear model for differential abundance analysis after proper transformation.

- Bayesian Models with Regularizing Priors: e.g., brms in R with horseshoe or Cauchy priors, which shrink infinite effects to large but finite values.

Protocol 2: Inferential Robustness Check

- Step 1: Run Analysis with Multiple Methods. Perform DA analysis with at least one compositionally-aware method (ANCOM-BC2) and one robust count-based method (DESeq2 with `betaPrior=TRUE` or `fitType="glmGamPoi"`).

- Step 2: Sensitivity Analysis with Pseudocounts. Vary pseudocounts (e.g., 0.5, 1) in a CLR transformation and observe stability of effect sizes for top hits.

- Step 3: Report Effect Sizes with Confidence Intervals. Always report CI bounds. Infinite or astronomically large effect sizes are red flags for separation artifacts.
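The pseudocount sensitivity check in Step 2 can be illustrated with a small base-R sketch. The three-taxon count matrix, the helper names `clr` and `effect_for`, and the use of a simple difference in mean CLR values as the effect size are all assumptions made for illustration.

```r
# Centered log-ratio transform of one sample (vector of strictly positive values).
clr <- function(x) log(x) - mean(log(x))

group <- rep(c("A", "B"), each = 3)

# Hypothetical counts: taxa in rows, samples in columns; taxon_sep is all zero in Group A.
counts <- rbind(
  taxon_sep  = c(0, 0, 0, 40, 55, 35),
  taxon_ref1 = c(500, 450, 520, 480, 510, 495),
  taxon_ref2 = c(300, 320, 280, 310, 290, 305)
)

# Difference in mean CLR abundance (B minus A) for the separated taxon at a given pseudocount.
effect_for <- function(pseudocount) {
  z <- apply(counts + pseudocount, 2, clr)  # CLR applied per sample (column)
  mean(z["taxon_sep", group == "B"]) - mean(z["taxon_sep", group == "A"])
}

pseudocounts <- c(0.5, 1)
effects <- sapply(pseudocounts, effect_for)
print(data.frame(pseudocount = pseudocounts, clr_effect = effects))
```

For the separated taxon the estimated effect moves noticeably when the pseudocount changes, because the Group A values are determined entirely by the pseudocount. An effect that shifts this way is better reported as presence/absence evidence than as a fold change.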

Diagram 1: Troubleshooting workflow for separation errors.

Q4: How do I interpret effect sizes from models that have addressed separation? What indicates a likely artifact vs. a true large effect?

A4: Interpretation requires scrutiny of both effect size and its variability.

- Artifact Warning Signs:

- An odds ratio (OR) from a logistic model that is `Inf` or > 10^6.

- A log2 fold change (LFC) with an extremely wide, asymmetric confidence interval (e.g., from +2 to +Inf).

- The feature has zero variance within one group (all zeros or all non-zeros).

- Indicators of a Robust, Large Effect:

- The LFC or OR is large but finite, with a reasonably tight, symmetric confidence interval.

- The finding is consistent across multiple analytical methods (e.g., both ANCOM-BC2 and a robust count model agree on direction and significance).

- The feature is biologically plausible (e.g., a known pathogen expands in an infection model).

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Primary Function | Key Consideration for Separation |
|---|---|---|
| zCompositions R Package | Implements Bayesian-multiplicative and other methods for replacing zeros in compositional data prior to CLR. | Essential for handling zeros without creating separation artifacts in compositional models. |
| ANCOM-BC2 R Package | Differential abundance testing that accounts for sampling fraction and sparse counts. | Built to control FDR and is robust to sparse features that cause separation. |
| DESeq2 with glmGamPoi | A faster, more stable GLM fitting method for count data that better handles low counts. | Increased numerical stability can prevent convergence failures. |
| brms / Stan | Bayesian regression modeling with regularizing priors (e.g., horseshoe). | Priors shrink extreme estimates, turning "infinite" effects into large, finite ones for interpretability. |
| SILVA / GTDB Database | Curated taxonomic databases for classifying 16S rRNA sequences. | Accurate taxonomy helps filter out likely contaminant sequences that contribute to sparsity. |
| Mock Community Controls | DNA spikes of known microbial composition used in sequencing runs. | Allows estimation of technical dropout rates, informing minimum prevalence thresholds. |
| Rarefaction (Use with Caution) | Subsampling to equal sequencing depth per sample. | Can reduce separation from unequal sampling but loses data; not recommended as primary fix. |

Troubleshooting Guides & FAQs

Q1: What is "separation" in the context of microbiome count data analysis?

A: Separation occurs when a predictor variable (e.g., disease state) perfectly predicts the presence or absence of a taxon. In a dataset, this manifests as all zero counts in one experimental condition for a specific microbe, while it is present in another condition. This creates a quasi-complete separation in the statistical model, leading to non-convergence and infinite parameter estimates in traditional Generalized Linear Models (GLMs) like those underpinning DESeq2 and edgeR.

Q2: What specific error messages indicate I'm facing a separation problem?

A: Common warnings and errors include:

- DESeq2: "Beta coefficients diverging to infinity" or "model failed to converge."

- edgeR: "Estimated dispersion tends to infinity" or "All weights not meaningful."

- glm in R: "fitted probabilities numerically 0 or 1 occurred" or "algorithm did not converge."

Q3: How does separation break DESeq2 and edgeR's statistical models?

A: Both packages use GLMs with log-link functions. Separation causes the maximum likelihood estimate for the coefficient of the separating variable to tend toward infinity (positive or negative). This invalidates the iterative fitting process (IRLS), prevents reliable estimation of dispersion and fold changes, and makes p-values extremely sensitive to arbitrary pseudo-count additions or normalization factors.

Q4: What are the practical consequences for my differential abundance results?

A: Results become unstable and non-reproducible. Key issues are:

- Exaggerated Effect Sizes: Log2 fold changes (LFCs) are massively overestimated.

- Unreliable P-values: P-values can be either spuriously significant or artificially inflated.

- Model Non-convergence: The analysis may fail outright.

Q5: What experimental design choices can minimize separation issues?

A:

- Increase biological replication within conditions.

- Ensure balanced group sizes.

- Consider longitudinal or paired designs to control for host-specific zero inflation.

- Use deep sequencing to reduce technical zeros.

| Metric | No Separation (Well-behaved Data) | With Separation (All zeros in one group) | Consequence |

|---|---|---|---|

| GLM Coefficient Estimate | Finite, stable (e.g., β ≈ 2.1) | Diverges to ± Infinity | Uninterpretable effect size |

| Estimated Dispersion | Stable, finite value | Tends to infinity or errors | Invalid variance model |

| Log2 Fold Change (DESeq2) | Stable estimate (e.g., LFC ≈ 1.8) | Extremely large magnitude (e.g., LFC > 20) | Biological nonsense |

| P-value Stability | Consistent across model runs | Highly sensitive to prior or pseudo-count | Non-reproducible significance |

| Model Convergence Status | Converges successfully | Fails to converge or warns | Analysis pipeline breaks |

Experimental Protocol: Diagnosing Separation in Your Dataset

Objective: To systematically identify taxa suffering from perfect or quasi-complete separation prior to differential abundance testing.

Materials: R environment, microbiome count table (OTU/ASV), sample metadata.

Procedure:

- Data Preprocessing: Load your phyloseq object or count matrix and metadata.

- Prevalence Filter: Remove taxa with extremely low prevalence (e.g., present in < 10% of all samples) to reduce noise.

- Separation Scan Function: Implement a function that, for each taxon and specified condition variable (e.g., Disease vs Healthy), checks:

- Are all counts in one group zero?

- Are all counts in one group non-zero and the other group has some zeros? (quasi-separation)

- Output: Generate a table listing taxa exhibiting separation, their count distribution per group, and flag them for subsequent careful analysis.

- Visualization: Create a heatmap of the flagged taxa to confirm the pattern.
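One possible shape for the separation scan function described in the procedure is sketched below in base R. The function name `scan_separation`, its output columns, and the toy counts are assumptions. It labels a taxon "complete" when it is absent from one group and present in every sample of the other, and "quasi-complete" when only one of those conditions holds.

```r
# Scan a taxa-by-samples count matrix for separation against a two-level condition.
scan_separation <- function(counts, group) {
  group <- factor(group)
  stopifnot(nlevels(group) == 2, ncol(counts) == length(group))
  present <- counts > 0
  in_g1 <- group == levels(group)[1]
  n_present_1 <- rowSums(present[, in_g1, drop = FALSE])
  n_present_2 <- rowSums(present[, !in_g1, drop = FALSE])

  all_zero    <- n_present_1 == 0 | n_present_2 == 0                    # absent in one group
  all_nonzero <- n_present_1 == sum(in_g1) | n_present_2 == sum(!in_g1) # present in all of one group
  constant    <- (n_present_1 + n_present_2) %in% c(0, length(group))   # no variation at all

  pattern <- ifelse(constant, "constant",
             ifelse(all_zero & all_nonzero, "complete",
             ifelse(all_zero | all_nonzero, "quasi-complete", "none")))

  data.frame(taxon = rownames(counts),
             n_present_1 = unname(n_present_1),
             n_present_2 = unname(n_present_2),
             pattern = as.character(pattern),
             row.names = NULL)
}

# Hypothetical example: 4 Disease and 4 Healthy samples.
group <- rep(c("Disease", "Healthy"), each = 4)
counts <- rbind(
  t_complete = c(0, 0, 0, 0, 5, 3, 8, 2),
  t_quasi    = c(0, 0, 0, 0, 5, 0, 8, 2),
  t_none     = c(1, 0, 4, 0, 5, 0, 8, 2)
)
scan <- scan_separation(counts, group)
print(scan)
```

Taxa labelled "complete" or "quasi-complete" are the ones to carry forward into the flagged table and the confirmation heatmap.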

Visualizations

Title: How Separation Breaks the GLM Workflow

Title: Decision Path for Addressing Separation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Addressing Separation |
|---|---|
| R Package: brglm2 | Implements Firth's bias-reduced penalized-likelihood logistic regression to prevent coefficient divergence. Useful for presence/absence analysis of separating taxa. |
| R Package: countreg | Provides zero-inflated and hurdle model frameworks (e.g., zero-inflated negative binomial) that explicitly model excess zeros separately. |
| R Package: apeglm (via DESeq2) | An adaptive prior shrinkage estimator for LFCs. When specified in `DESeq2::lfcShrink`, it can stabilize estimates for separated taxa. |
| Bayesian Tools (rstanarm, brms) | Allow specification of informative priors on coefficients, regularizing infinite estimates and providing credible intervals. |
| Prevalence Filtering Script | Custom R function to remove low-prevalence taxa (e.g., present in < 5-10% of samples) before testing, reducing instances of separation. |
| Effect Size Threshold | A pre-defined minimum reliable LFC (e.g., absolute LFC > 2) to flag biologically meaningful changes versus artifacts of separation. |

Troubleshooting Guides & FAQs

Q1: I am analyzing a case-control microbiome study. My differential abundance analysis (e.g., DESeq2, edgeR) is failing with errors about "perfect separation" or "all zero counts." What is happening and how do I fix it?

A: This is a common issue in case-control designs where a microbial feature is present in all cases and absent in all controls (or vice versa), creating a "perfect separation" scenario. Standard negative binomial models cannot fit an infinite coefficient. To address this:

- Pre-filtering: Remove features with non-zero counts in fewer than a minimum number of samples (e.g., < 10% of the smallest group size) before analysis.

- Use Robust Methods: Employ tools designed for sparse data:

- ANCOM-BC2: Incorporates a bias correction term.

- LinDA: Fits linear models on CLR-transformed abundances (zeros handled with a pseudo-count or imputation) and corrects the resulting compositional bias.

- ZINB-based models: Like those in `glmmTMB`, though they can be computationally intense.

- Regularization: Use `DESeq2` with `apeglm` shrinkage via `lfcShrink`, or the legacy `betaPrior=TRUE` option (no longer the default in current DESeq2 releases); both can stabilize extreme log-fold changes.

Q2: When studying extreme phenotypes (e.g., super-responders vs. non-responders), my data is highly imbalanced and separation issues are worse. What strategies can I use?

A: Extreme phenotype designs are particularly prone to separation. Beyond pre-filtering:

- Aggregation: Analyze at a higher taxonomic rank (e.g., genus instead of ASV/OTU) to reduce sparsity.

- Conditional Logistic Regression: In matched or stratified designs, conditional logistic regression (e.g., `survival::clogit`) conditions on the matching strata instead of estimating a parameter for each stratum, which leaves fewer parameters exposed to separation.

- Bayesian Approaches: Use methods with strong, regularizing priors (e.g., `brm` from the `brms` R package) that pull extreme effect sizes toward reasonable estimates.

- Firth's Bias-Reduced Logistic Regression: Available via the `logistf` R package, it effectively mitigates separation issues in association testing.

Q3: In a longitudinal treatment effects study, I want to account for baseline. How can I avoid separation when modeling change from baseline?

A: Modeling change directly can be problematic with count data. Instead:

- Use a Mixed Model: Model the post-treatment counts with the baseline count as an offset or covariate in a generalized linear mixed model (GLMM). This avoids creating a derived response variable. For example, with `glmer` from `lme4` (`glmmTMB` accepts a similar formula), you can specify: `model <- glmer(post_count ~ treatment + baseline_offset + (1|subject), family=poisson(link="log"), data=your_data)`

- DON'T simply subtract baseline from post-treatment counts.

Q4: Are there specific experimental protocols to minimize these analytical issues from the start?

A: Yes, study design is key.

- Increase Sample Size: Especially for the smaller group in a case-control study.

- Depth of Sequencing: Aim for higher sequencing depth (>50,000 reads per sample) to detect low-abundance taxa and reduce zeros.

- Pooling Technical Replicates: Pool multiple technical replicates prior to DNA extraction or sequencing to reduce zeros from sampling variance.

- Careful Phenotype Definition: Avoid creating overly extreme groups if not biologically necessary; consider continuous outcomes.

Table 1: Performance Comparison of DA Methods under Perfect Separation

| Method | Handles Separation? | Recommended Use Case | Key Parameter Adjustment |

|---|---|---|---|

| DESeq2 | Partial (with shrinkage) | General case-control, balanced designs | fitType="glmGamPoi", sfType="poscounts" |

| ANCOM-BC2 | Yes | Sparse data, imbalanced designs | neg_lb=TRUE to handle structural zeros |

| LinDA | Yes | Small sample size, longitudinal | is.winsor=TRUE to control outliers |

| MaAsLin2 | Partial | Complex random effects, covariates | normalization="CLR", transform="AST" |

| Firth Logistic | Yes | Extreme binary phenotypes | logistf R function with firth=TRUE |

Table 2: Impact of Sequencing Depth on Zero Inflation

| Mean Sequencing Depth | % Zero Counts (Simulated Gut Data) | Probability of Perfect Separation (n=10/group) |

|---|---|---|

| 10,000 reads | 85.2% | 42.1% |

| 50,000 reads | 76.5% | 18.7% |

| 100,000 reads | 71.3% | 9.4% |

Experimental Protocol: ANCOM-BC2 for Case-Control Studies

Title: Protocol for Differential Abundance Analysis Using ANCOM-BC2 to Address Separation.

Steps:

- Data Preprocessing: Import feature table (ASV/OTU), taxonomy, and metadata into R. Remove samples with library size < 1000 reads.

- Filtering: Apply prevalence filtering (`prv_cut = 0.1`) to remove features detected in less than 10% of samples.

- Run ANCOM-BC2: Fit the model with `ancombc2()`, specifying the case-control variable as the group of interest.

- Interpret Results: The primary output is in `res$res`. Key columns: `lfc_*` (log-fold change), `q_*` (adjusted p-value). `res$zero_ind` indicates features identified as structurally different between groups.

- Visualization: Plot the log-fold changes (LFCs) against -log10(q-values).

Visualizations

Title: DA Method Selection for Separation Issues

Title: Core Protocol for Addressing Separation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Microbiome DA Studies

| Item | Function | Example/Supplier |

|---|---|---|

| DNA Extraction Kit (with bead beating) | Ensures lysis of tough Gram-positive bacteria for representative community profiling. | MP Biomedicals FastDNA Spin Kit, Qiagen DNeasy PowerSoil Pro Kit. |

| PCR Inhibitor Removal Reagent | Critical for stool samples; improves library prep success and reduces batch effects. | Zymo OneStep PCR Inhibitor Removal Kits. |

| Mock Community Control (Standard) | Quantifies technical error, batch effects, and informs filtering thresholds. | ZymoBIOMICS Microbial Community Standard. |

| Library Quantification Kit (fluorometric) | Accurate library pooling for balanced sequencing depth across samples. | Invitrogen Qubit dsDNA HS Assay Kit. |

| Bioinformatics Pipeline Software | Standardized processing from raw reads to count table to ensure reproducibility. | QIIME 2, DADA2 (R), mothur. |

| Positive Control Spike-Ins | Distinguishes technical zeros (dropouts) from biological absences. | Spike-in of known, non-bacterial DNA (e.g., SalmoGen). |

Troubleshooting Guides & FAQs

Q1: My logistic regression model for a microbiome taxon's presence/absence fails to converge, returning a "perfect or quasi-complete separation" error. What is the first diagnostic I should run? A1: Immediately check for zero-inflated covariates. In microbiome data, a metadata variable (e.g., a specific treatment group) might perfectly predict the absence (all zeros) of a taxon. This creates monotone likelihood and separation. Tabulate your predictor of interest against the binary outcome.

Q2: How do I formally test for a zero-inflated covariate causing separation? A2: For each categorical predictor, create a contingency table. Separation is indicated if one cell (e.g., Treatment Group A vs. Taxon Absence) has zero counts. For continuous predictors, examine boxplots or run a simple logistic regression; an infinite coefficient estimate is a key sign.

Q3: What does "monotone likelihood" mean in practice, and how can I detect it during my analysis?

A3: Monotone likelihood occurs when a predictor perfectly orders the outcomes (e.g., all cases where x > 10 have y=1). Detection involves examining the profile log-likelihood function. If it increases monotonically to an asymptote as the parameter tends to infinity, you have monotone likelihood. Software warnings (e.g., in R's glm about "fitted probabilities numerically 0 or 1") are primary indicators.
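A monotone likelihood is easy to see numerically. The base-R sketch below evaluates the logistic log-likelihood on a toy dataset in which `x > 10` perfectly predicts `y = 1`; the data, the fixed decision boundary at 10, and the grid of slope values are assumptions chosen for illustration.

```r
# Hypothetical covariate and an outcome that is perfectly ordered by it.
x <- c(2, 4, 6, 8, 12, 14, 16, 18)
y <- as.numeric(x > 10)

# Log-likelihood of a logistic model with slope beta and decision boundary fixed at x = 10.
loglik <- function(beta) {
  eta <- beta * (x - 10)
  sum(y * plogis(eta, log.p = TRUE) + (1 - y) * plogis(-eta, log.p = TRUE))
}

betas <- c(0.1, 0.5, 1, 2, 5)
ll <- sapply(betas, loglik)
print(data.frame(beta = betas, loglik = ll))
```

The log-likelihood keeps rising toward its upper bound of 0 as the slope grows and never reaches a maximum at a finite value, which is why the fitting algorithm drifts toward infinity.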

Q4: I've confirmed a zero-inflated covariate. What are my next steps before proceeding with differential abundance testing?

A4: You must address the separation. Options include: 1) Applying Firth's penalized-likelihood correction (e.g., logistf R package), 2) Using Bayesian methods with weakly informative priors (e.g., brm with Student-t priors), or 3) For hypothesis testing, employing conditional likelihood exact tests. Do not simply remove the separating variable if it is biologically relevant.
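To illustrate how a weakly informative prior tames separation, the sketch below computes a maximum a posteriori (MAP) estimate in base R. It is a simplified stand-in for a full Bayesian fit with `brms` or `rstanarm`, not a replacement: the normal(0, 2.5) prior on both coefficients, the toy data, and the use of `optim` are assumptions.

```r
# Hypothetical data: taxon present in 5/20 control samples and 12/12 treated samples.
treated <- rep(c(0, 1), times = c(20, 12))
present <- c(rep(c(0, 1), times = c(15, 5)), rep(1, 12))
X <- cbind(1, treated)

# Negative log posterior: logistic log-likelihood plus independent normal priors.
neg_log_post <- function(beta, prior_sd = 2.5) {
  eta <- as.vector(X %*% beta)
  loglik <- sum(present * plogis(eta, log.p = TRUE) +
                (1 - present) * plogis(-eta, log.p = TRUE))
  logprior <- sum(dnorm(beta, mean = 0, sd = prior_sd, log = TRUE))
  -(loglik + logprior)
}

map_fit <- optim(c(0, 0), neg_log_post, method = "BFGS")
map_est <- setNames(map_fit$par, c("intercept", "treated"))
print(map_est)
```

The treatment coefficient is large but finite because the prior penalizes extreme values. A full posterior from a sampler would additionally provide credible intervals.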

Q5: Are certain differential abundance methods more prone to issues from zero inflation and separation?

A5: Yes. Standard tools like DESeq2 and edgeR use parametric or quasi-likelihood tests that can be unstable under extreme zero inflation combined with separation. Methods explicitly designed for zero-inflated data (e.g., ZINB-WaVE followed by glmmTMB, or ANCOM-BC2) or non-parametric tests may be more robust, but separation in covariates must still be diagnosed.

Data Presentation

Table 1: Illustrative Contingency Table Showing a Zero-Inflated Covariate Causing Separation

| Group | Taxon Absent (0) | Taxon Present (1) | Row Total |
|---|---|---|---|
| Control Group | 15 | 5 | 20 |
| Treatment Group A | 0 | 12 | 12 |
| Treatment Group B | 8 | 7 | 15 |
| Column Total | 23 | 24 | 47 |

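Interpretation: The "0" in the "Treatment Group A / Taxon Absent" cell indicates quasi-complete separation: this covariate level perfectly predicts taxon presence, so its coefficient has no finite maximum likelihood estimate, while the other levels remain estimable.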
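The zero-cell check on Table 1 can be automated in base R. The sketch below rebuilds the table from the counts shown above and reports any empty cell; the object names are assumptions.

```r
# Contingency table from Table 1: rows are covariate levels, columns are taxon status.
tab <- matrix(c(15,  5,
                 0, 12,
                 8,  7),
              ncol = 2, byrow = TRUE,
              dimnames = list(group = c("Control", "Treatment A", "Treatment B"),
                              taxon = c("Absent", "Present")))

# Row and column totals, for comparison with the table above.
print(addmargins(as.table(tab)))

# Locate empty cells: each one marks a covariate level that perfectly predicts the outcome.
zero_cells <- which(tab == 0, arr.ind = TRUE)
flagged <- data.frame(group = rownames(tab)[zero_cells[, "row"]],
                      taxon = colnames(tab)[zero_cells[, "col"]])
print(flagged)
```

With real data, the same check can be run on `table(metadata_variable, taxon_present)` for each categorical covariate.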

Table 2: Common Differential Abundance Tools & Their Sensitivity to Separation

| Tool/Method | Underlying Model | Sensitivity to Separation | Recommended Diagnostic Step |

|---|---|---|---|

| DESeq2 (Wald test) | Negative Binomial GLM | High | Check for all-zero counts in design matrix subgroups. |

| edgeR (QL F-test) | Quasi-Likelihood GLM | High | Examine glmQLFit object for extreme dispersion estimates. |

| MaAsLin2 (default) | Various GLM/LMM | Moderate-High | Review model warnings for "perfect separation". |

| Firth's Logistic Regression | Penalized Logistic GLM | Low (Solution) | Use this as a diagnostic and solution tool. |

| ANCOM-BC2 | Linear Model with BC | Low for composition, but check covariates. | Ensure design matrix is full rank. |

Experimental Protocols

Protocol 1: Diagnostic Check for Zero-Inflated Covariates

- Data Preparation: From your phyloseq or similar object, extract the count matrix for a single microbial feature (e.g., an ASV) and your metadata of interest (e.g., disease state: `Healthy` vs. `Diseased`).

- Binarization: Convert the microbial count vector to a presence/absence (1/0) vector. Any count > 0 becomes 1.

- Contingency Table Analysis: For each categorical covariate, create a cross-tabulation similar to Table 1. Visually inspect for any zero-cell counts.

- Continuous Predictor Check: For continuous covariates (e.g., pH), split the data into quartiles and create a binned contingency table, or plot the covariate against the binary outcome using a jittered scatter plot.

- Documentation: Record any variables showing potential separation for further action.

Protocol 2: Implementing Firth's Bias-Reduced Logistic Regression as Diagnostic & Solution

- Installation: In R, install and load the `logistf` package.

- Model Fitting: Fit the same model that failed with standard `glm`, for example `firth_model <- logistf(outcome ~ taxon_abundance + covariates, data = your_data)`.

- Diagnostic: The function will complete without separation errors. Examine the summary (`summary(firth_model)`). Large but finite coefficients with low p-values indicate the variable was likely causing separation in the standard GLM.

- Solution: Use the p-values and confidence intervals from this model for inference on the problematic covariate, or use it as a benchmark for Bayesian models.

Mandatory Visualization

Diagnostic Workflow for Perfect Separation

Logical Chain from Zero Inflation to Model Failure

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Diagnosing and Solving Separation

| Item/Category | Specific Tool/Function | Purpose in Addressing Separation |
|---|---|---|
| Diagnostic Software | R: `table()`, `xtabs()` | Creates contingency tables to identify zero cells. |
| Diagnostic Software | R: `glm()` with `family=binomial` | Generates primary warnings of "fitted probabilities 0/1". |
| Solution Software | R: `logistf` package | Implements Firth's penalized-likelihood logistic regression. |
| Solution Software | R: `brms` or `rstanarm` packages | Enforces weakly informative priors in Bayesian GLMs. |
| Solution Software | R: `elrm` or `logistf` | Performs exact or approximate conditional likelihood tests. |
| Robust DA Framework | ANCOM-BC2 R package | Differential abundance method less sensitive to compositionality, though covariates still need to be checked. |
| Zero-Inflated Modeling | `glmmTMB` R package | Fits zero-inflated mixed models, can incorporate Firth-type penalties. |

Beyond the Error: Statistical Solutions for Reliable Differential Abundance Testing

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when implementing Firth's penalized-likelihood logistic regression to resolve perfect separation in microbiome differential abundance analysis.

Frequently Asked Questions (FAQs)

Q1: My model runs, but I get the warning "fitted probabilities numerically 0 or 1 occurred" even with brglm2 or logistf. Isn't Firth's correction supposed to fix this?

A: Yes, Firth's correction prevents coefficient divergence to ±∞. However, this warning indicates that on the probability scale, some predictions are still extremely close to 0 or 1, often due to quasi-complete separation. This is a numerical precision message, not a sign of model failure. The coefficients are valid and finite. You can typically proceed with inference.

Q2: How do I interpret p-values from logistf versus standard confidence intervals from brglm2?

A: The two packages use different default methods for inference, crucial for reporting.

Table 1: Default Inference Methods in logistf vs. brglm2

| Package | Default Confidence Interval Method | Default P-value Calculation | Note for Microbiome Data |
|---|---|---|---|
| logistf | Profile penalized likelihood | Likelihood ratio test based on penalized likelihood | Considered gold standard; more computationally intensive. |
| brglm2 | Standard asymptotic Wald-type | Wald test using penalized coefficients & standard errors | Faster; may be less accurate with very small sample sizes (n < 50). |

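Recommendation: For final reporting, use the profile penalized-likelihood confidence intervals from logistf. Note that calling confint() on a brglm2 fit returns Wald-type intervals, so treat those as approximate when samples are small or separation is present.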

Q3: I have a complex study design with random effects (e.g., patient ID). Can I use Firth's correction?

A: Standard Firth's correction is for fixed-effects models. For generalized linear mixed models (GLMMs) with separation:

- Option 1: Use the brglm2 package with brglm_fit in a fixed-effects model that includes the random effect as a fixed factor (if levels are few).
- Option 2: For true GLMMs, consider the logistf package's logistf function for fixed effects only, or explore the penalized package or Bayesian priors (e.g., blme package).

Q4: My microbiome abundance table contains many zeros. How should I preprocess data before Firth's logistic regression?

A: Follow this experimental protocol for robust analysis:

- Filtering: Remove taxa with prevalence < 10% across all samples.
- Transformation: Apply a centered log-ratio (CLR) transformation to compositional data OR use a presence/absence (binary) outcome.
- Dichotomization: For binary analysis, define a binary outcome (e.g., taxon presence vs. absence) or a high (>median) vs. low abundance threshold.
- Modeling: Apply Firth's logistic regression (logistf or brglm2) to assess the association between a covariate (e.g., disease state) and the binary taxon outcome.
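The filtering and dichotomization steps can be sketched in a few lines. This is a minimal, dependency-free illustration: the count table, the taxon names, and the helper names prevalence and filter_and_binarize are hypothetical, and the 10% threshold simply mirrors the protocol above.

```python
def prevalence(counts):
    """Fraction of samples in which a taxon has a non-zero count."""
    return sum(1 for c in counts if c > 0) / len(counts)

def filter_and_binarize(table, min_prevalence=0.10):
    """Drop taxa below the prevalence threshold, then code presence as 1/0."""
    kept = {}
    for taxon, counts in table.items():
        if prevalence(counts) >= min_prevalence:
            kept[taxon] = [1 if c > 0 else 0 for c in counts]
    return kept

# Hypothetical count table: taxon -> counts across 10 samples.
counts_by_taxon = {
    "Taxon_A": [0, 0, 0, 0, 0, 0, 0, 0, 0, 0],   # never observed, removed
    "Taxon_B": [5, 0, 0, 0, 0, 0, 0, 0, 0, 0],   # exactly 10% prevalence, kept
    "Taxon_C": [12, 3, 0, 7, 0, 0, 1, 0, 9, 4],  # 60% prevalence, kept
}
binary = filter_and_binarize(counts_by_taxon, min_prevalence=0.10)
for taxon, presence in binary.items():
    print(taxon, presence)
```

Each retained binary vector can then serve as the outcome in a per-taxon Firth regression.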

Table 2: Key Research Reagent Solutions for Computational Analysis

| Item/Software | Function in Analysis | Example/Note |
|---|---|---|
| R Statistical Environment | Core platform for statistical computing and graphics. | Version 4.3.0 or higher. |
| logistf R Package | Implements Firth's bias-reduced logistic regression with profile-likelihood inference. | Primary tool for final, report-ready models. |
| brglm2 R Package | Provides a unified framework for bias reduction in GLMs, including Firth. | Excellent for exploration and integration with tidyverse. |
| microbiome R Package | Provides tools for filtering, transformation, and analysis of microbiome data. | Used for CLR transformation and prevalence filtering. |
| phyloseq R Package | Handles and organizes microbiome data (OTU table, taxonomy, sample data). | Used for initial data ingestion and management. |

Q5: How do I perform a systematic differential abundance analysis across all taxa in my microbiome dataset using Firth's method?

A: Implement the following experimental workflow protocol:

Protocol: Microbiome-Wide DA Analysis with Firth's Correction

- Data Import: Load your OTU/ASV table and metadata into a phyloseq object.
- Preprocessing: Filter low-prevalence taxa (e.g., <10%). Optionally, agglomerate at a specific taxonomic rank (e.g., Genus).
- Binary Transformation: For each taxon i, create a binary vector Y_i where 1 indicates abundance > threshold (e.g., geometric mean > 0), and 0 otherwise.
- Model Fitting Loop: For each taxon i:
  - a. Construct model formula: Y_i ~ Primary_Covariate + Covariate1 + Covariate2.
  - b. Fit model using logistf(formula, data = metadata).
  - c. Extract coefficient, profile likelihood CI, and p-value for Primary_Covariate.
- Multiple Testing Correction: Apply False Discovery Rate (FDR) correction (e.g., Benjamini-Hochberg) to all obtained p-values.
- Significance Reporting: Identify taxa with FDR-adjusted p-value < 0.05 and report their odds ratios and confidence intervals.
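The multiple testing step can be sketched as follows. This is a plain-Python version of the Benjamini-Hochberg adjustment, equivalent in intent to R's p.adjust(p, method = "BH"); the p-values and taxon names are made up for illustration.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical per-taxon p-values from the model fitting loop.
raw_p = {"Taxon_A": 0.001, "Taxon_B": 0.02, "Taxon_C": 0.03, "Taxon_D": 0.6}
adj = dict(zip(raw_p, benjamini_hochberg(list(raw_p.values()))))
significant = [taxon for taxon, q in adj.items() if q < 0.05]
print(adj)
print("FDR < 0.05:", significant)
```

Adjusting once across all taxa, rather than within subsets, keeps the reported FDR consistent with the number of tests actually performed.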

Title: Workflow for Microbiome-Wide Firth Regression Analysis

Title: Logical Path from Problem to Solution with Firth's Correction

Troubleshooting Guides & FAQs

General Concept & Model Specification Issues

Q1: What exactly is a "weakly informative prior," and how do I choose one for microbiome count data?

A: A weakly informative prior regularizes estimates by pulling them slightly toward a reasonable default, without being as influential as a strongly informative prior. For microbiome differential abundance using tools like brms (Stan) or bayesglm (arm package), common choices are:

- For coefficients (beta): Normal(0, sigma) with sigma scaled to the data (e.g., set_prior("normal(0, 2.5)", class = "b")). This assumes effect sizes are unlikely to exceed 5-10 on the log-odds scale.
- For intercepts: Student-t distributions with low degrees of freedom (e.g., student_t(3, 0, 2.5)) for robustness.
- For overdispersion parameters: Exponential(1) is a common default for hierarchical standard deviations.
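The effect of such a prior under separation can be illustrated without a sampler by computing the posterior mode (MAP estimate) of a two-parameter logistic model. This is only a sketch: it uses Newton-Raphson instead of the full posterior sampling brms performs, it places a normal(0, 10) prior on the intercept for simplicity (an assumption, not the Student-t suggested above), and the function name map_logistic and the data are hypothetical.

```python
import math

def map_logistic(x, y, slope_sd=2.5, intercept_sd=10.0, max_iter=200, tol=1e-10):
    """MAP estimate for logit P(y=1) = b0 + b1*x with independent normal priors.

    Newton-Raphson on the log-posterior. Priors: b0 ~ normal(0, intercept_sd),
    b1 ~ normal(0, slope_sd). Returns (b0, b1).
    """
    b0 = b1 = 0.0
    for _ in range(max_iter):
        # Gradient and negative Hessian start with the prior contributions.
        g0, g1 = -b0 / intercept_sd**2, -b1 / slope_sd**2
        h00, h01, h11 = 1.0 / intercept_sd**2, 0.0, 1.0 / slope_sd**2
        for xi, yi in zip(x, y):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))
            w = p * (1.0 - p)
            g0 += yi - p
            g1 += (yi - p) * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        step0 = (h11 * g0 - h01 * g1) / det
        step1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + step0, b1 + step1
        if abs(step0) + abs(step1) < tol:
            break
    return b0, b1

# Hypothetical separated data: taxon present in all 10 cases (x=1),
# and in 3 of 10 controls (x=0).
x = [1] * 10 + [0] * 10
y = [1] * 10 + [1] * 3 + [0] * 7

_, slope_regularized = map_logistic(x, y, slope_sd=2.5)
_, slope_very_weak = map_logistic(x, y, slope_sd=100.0)
print(f"normal(0, 2.5) prior: {slope_regularized:.2f}")
print(f"normal(0, 100) prior: {slope_very_weak:.2f}")
```

The tighter prior keeps the group coefficient in a plausible range, whereas the very weak prior lets it drift toward the diverging maximum-likelihood solution.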

Q2: My Stan model is running very slowly or hitting maximum treedepth. How can I improve sampling efficiency?

A: This is common with high-dimensional microbiome data.

- Reparameterize: Use non-centered parameterizations for hierarchical effects.
- Standardize Predictors: Center and scale continuous covariates.
- Reduce Dimensionality: Use aggressive filtering on low-prevalence ASVs/OTUs before modeling.
- Simplify: Start with a subset of taxa or samples to debug.
- Check Priors: Very weak priors (e.g., normal(0, 100)) can create pathological geometries; tighten them.

Q3: I am getting "divergent transitions" in Stan. What does this mean, and how do I fix it?

A: Divergent transitions indicate the Hamiltonian Monte Carlo sampler is struggling with areas of high curvature in the posterior, often due to very small or large parameter values, which is a risk in perfect separation scenarios.

- Primary Fix: Increase the adapt_delta parameter (e.g., to 0.95 or 0.99; the Stan default is 0.8). This makes the sampler take smaller, more accurate steps.
- Secondary Actions: Use more strongly regularizing (but still weakly informative) priors. Re-examine your model for separation (see Q5).

Implementation & Code-Specific Issues

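Q4: How do I implement a Zero-Inflated Negative Binomial (ZINB) model in brms/Stan for microbiome counts, and what priors to set?

A: The ZINB model handles both excess zeros and overdispersion. In brms this corresponds to family = zero_inflated_negbinomial(), with one formula for the count mean and a separate zi formula for the zero-inflation probability, wrapped in bf(). Weakly informative priors such as normal(0, 2.5) can be placed on the coefficients of both parts with set_prior(), using dpar = "zi" for the zero-inflation coefficients.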

Q5: I suspect perfect separation in my logistic/Binomial model for presence/absence. How can I diagnose and address it with Bayesian methods?

A:

- Diagnosis: Look for extremely large maximum likelihood estimates (MLEs) and huge standard errors if you fit a frequentist model. In Stan, you may see rhat > 1.1, very low effective sample sizes (n_eff), or chains that get "stuck."
- Bayesian Solution: The primary remedy is to specify a regularizing prior.
  - In bayesglm (arm package, R): Set prior.df = 7 and prior.scale = 2.5 in the bayesglm call, which places a Student-t prior on the coefficients.
  - In brms/Stan directly: Explicitly set a normal(0, 2.5) or cauchy(0, 2.5) prior on the problematic coefficient. This will shrink the implausibly large coefficient to a more reasonable value, providing a stable, probabilistic estimate.

Interpretation & Validation

Q6: How do I interpret the output of a Bayesian model with weakly informative priors, specifically the credible intervals? A: The 95% Credible Interval (CrI) contains the true parameter value with 95% probability, given the data and the prior. With weakly informative priors, the interval is dominated by the data. You report the posterior median or mean along with the CrI (e.g., "The log-fold change for Taxon_A was 1.85 [95% CrI: 0.92, 2.91]"). If the CrI does not span zero, it indicates evidence for an effect.

Q7: How do I perform model comparison (e.g., Negative Binomial vs. ZINB) in a Bayesian framework?

A: Use cross-validation to assess out-of-sample prediction accuracy.

- In brms: Use the loo() or add_criterion(model, "loo") function to compute the Pareto-smoothed importance sampling Leave-One-Out (PSIS-LOO) information criterion. A higher LOO-ELPD (expected log predictive density) indicates a better predicting model.
- Caution: Do not use DIC. The loo package also provides diagnostic warnings for models with problematic observations.

Experimental Protocols

Protocol 1: Bayesian Differential Abundance Analysis using brms (Stan backend)

Objective: To identify taxa whose abundances are associated with a clinical condition while accounting for overdispersion, zeros, and covariates, using regularizing priors to prevent overfitting.

Data Preprocessing:

- Filter ASV/OTU table: Remove taxa with < 10 total counts or present in < 10% of samples.
- Convert counts to relative abundances or use raw counts with an appropriate model (e.g., Negative Binomial).
- Standardize continuous covariates (mean=0, sd=1).

Model Specification (Negative Binomial):

- Formula: count ~ group + age + (1 | patient_id)
- Family: negbinomial() for overdispersed counts.
- Priors: weakly informative, e.g., normal(0, 2.5) on coefficients (see Q1 above).

Model Fitting: Fit the model with brm() using the formula, family, and priors above (e.g., chains = 4, cores = 4).

Diagnostics & Interpretation:

- Check traceplots (plot(fit)), rhat (< 1.01), and effective sample sizes (neff_ratio(fit)).
- Extract summary: summary(fit)
- Plot posterior distributions: mcmc_plot(fit, type = "areas", variable = "^b_group", regex = TRUE)

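Protocol 2: Addressing Separation with bayesglm

Objective: To fit a logistic regression model for taxon presence/absence in the presence of quasi-complete separation.

- Prepare Data: Create a binary outcome vector (1=presence, 0=absence) for a specific taxon.
- Model Fitting with bayesglm: Fit the model with arm::bayesglm() using family = binomial and the prior settings from Q5.
- Summarize Results: Inspect coefficients and standard errors with summary() or arm::display().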

Data Presentation

Table 1: Comparison of Prior Distributions for Logistic Regression Coefficients

| Prior Type | Stan/brms Syntax | Scale Parameter | Use Case & Rationale |
|---|---|---|---|
| Very Weak | normal(0, 10) | 10 | Minimal regularization. Often too weak for separation. |
| Weakly Informative (Recommended) | normal(0, 2.5) | 2.5 | Default coefficient scale in rstanarm (brms defaults to flat priors on coefficients, so set it explicitly). Shrinks log-odds to plausible range (-5, 5). |
| Regularizing Cauchy | cauchy(0, 1) | 1 | Heavy tails allow occasional large effects but provide strong shrinkage near zero. |
| Hierarchical Shrinkage | normal(0, sigma); sigma ~ exponential(1) | Varies | Partially pools coefficients, adapting shrinkage level from data. |

Table 2: Common Sampling Problems and Solutions in Stan

| Problem Symptom | Likely Cause | Corrective Actions |
|---|---|---|
| High rhat (>1.1), low n_eff | Inefficient sampling, model misspecification | Increase iter/warmup, reparameterize, simplify model. |
| Divergent transitions | High posterior curvature | Increase adapt_delta (e.g., to 0.99), improve priors. |
| Maximum treedepth warnings | Regions difficult to traverse | Increase max_treedepth (e.g., to 15), check for very large/small values. |
| Low Estimated Bayesian Fraction of Missing Information (E-BFMI) | Poor exploration of the energy distribution, often from heavy posterior tails | Reparameterize model, standardize predictors. |

Visualizations

Title: Bayesian Microbiome Analysis Workflow with Priors

Title: How Weakly Informative Priors Solve Separation

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Bayesian Microbiome Analysis

| Item/Category | Specific Tool/Package | Function & Rationale |
|---|---|---|
| Probabilistic Programming | Stan (cmdstanr, rstan), brms (R), pymc3 (Python) | Core engines for specifying Bayesian models and performing Hamiltonian Monte Carlo (HMC) sampling. |
| R Interface & Wrapper | brms (R package) | High-level formula interface for Stan, drastically simplifies model specification for common GLMMs. |
| Prior Specification | brms::set_prior, prior.* arguments in bayesglm | Functions and arguments to define weakly informative priors (Normal, Cauchy, etc.) on model parameters. |
| Diagnostic & Visualization | bayesplot, shinystan, rstan::check_hmc_diagnostics | Plot traceplots, posterior distributions, and diagnose sampling issues like divergences. |
| Model Comparison | loo package (PSIS-LOO) | Evaluates and compares models based on expected out-of-sample prediction accuracy. |
| Data Wrangling | phyloseq (R), qiime2 (Python/R), tidyverse | Curates, filters, and transforms microbiome sequencing data into formats suitable for modeling. |
| High-Performance Computing | RStudio Server, Linux cluster, Stan's parallel sampling (chains=4, cores=4) | Enables computationally intensive HMC sampling for large models or datasets. |

Troubleshooting Guides & FAQs

Q1: My model fails to converge or returns extreme coefficient estimates (e.g., ± 10^9). What does this mean and how can I fix it?

A: This is a classic symptom of perfect (or complete) separation in your dataset. It occurs when a predictor variable or combination of predictors perfectly predicts a binary outcome (e.g., presence/absence). In Zero-Inflated and Hurdle models, this often happens when a taxon is absent in all samples of one experimental group but present in at least one sample of another group.

- Diagnosis: Check your model summary for unrealistically large coefficients and huge standard errors.
- Solution: Apply Firth's bias-reduced logistic regression via the logistf or brglm2 R packages to the zero-inflation/hurdle component. This penalized likelihood method prevents coefficients from tending to infinity.
  - Protocol: Replace glm(..., family="binomial") with logistf(...) in your model specification for the zero-part.

Q2: How do I choose between a Zero-Inflated Negative Binomial (ZINB) and a Hurdle model for my microbiome count data? A: The choice hinges on your biological hypothesis about the source of zeros.

- ZINB Model: Assumes two latent groups: an "always zero" group that contributes only structural (excess) zeros, and a "not always zero" group where counts, including some sampling zeros, come from a Negative Binomial distribution. Use if you believe zeros arise from two distinct sources, such as genuine absence or failed detection on the one hand and undersampling of a present taxon on the other.

- Hurdle Model: A two-part model that explicitly separates the presence/absence process (logistic regression) from the abundance process (zero-truncated count model like Negative Binomial). Use if you treat all zeros as "structural" and model positive counts separately.

Table 1: Model Selection Guide

| Feature | Zero-Inflated Negative Binomial (ZINB) | Hurdle Model (Negative Binomial) |

|---|---|---|

| Source of Zeros | Two types: structural & sampling | Single type: all structural |

| Philosophy | Latent class: "always zero" vs. "could be count" | Conditional two-part process |

| Zero Process | Models probability of being an "excess zero" | Models probability of crossing the "hurdle" (non-zero) |

| Count Process | Negative Binomial (including zeros from the "count" group) | Zero-Truncated Negative Binomial (only positive counts) |

| Key Question | "Are excess zeros present?" | "Are the processes for presence and abundance different?" |
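The difference between the two zero processes in the table can be made concrete by writing out both probability mass functions. This is a sketch under assumed parameter values (pi, p_zero, mu, and size below are arbitrary), using the mean/dispersion parameterization of the Negative Binomial; the function names are hypothetical.

```python
import math

def nb_pmf(k, mu, size):
    """Negative binomial pmf with mean mu and dispersion parameter size."""
    log_p = (math.lgamma(k + size) - math.lgamma(size) - math.lgamma(k + 1)
             + size * math.log(size / (size + mu))
             + k * math.log(mu / (size + mu)))
    return math.exp(log_p)

def zinb_pmf(k, pi, mu, size):
    """ZINB: point mass at zero (weight pi) mixed with an ordinary NB count."""
    base = (1.0 - pi) * nb_pmf(k, mu, size)
    return pi + base if k == 0 else base

def hurdle_nb_pmf(k, p_zero, mu, size):
    """Hurdle NB: zero with probability p_zero, otherwise zero-truncated NB."""
    if k == 0:
        return p_zero
    return (1.0 - p_zero) * nb_pmf(k, mu, size) / (1.0 - nb_pmf(0, mu, size))

mu, size = 5.0, 0.8
# ZINB zeros come from two sources: the excess-zero class and the NB itself.
zinb_zero = zinb_pmf(0, pi=0.3, mu=mu, size=size)
# Hurdle zeros come from a single process.
hurdle_zero = hurdle_nb_pmf(0, p_zero=0.3, mu=mu, size=size)
print(f"P(0) under ZINB: {zinb_zero:.3f}, under hurdle: {hurdle_zero:.3f}")
```

With the same nominal zero parameter of 0.3, the ZINB assigns more total probability to zero because the count component adds sampling zeros on top of the excess zeros.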

Q3: What are the best practices for model validation and checking fit for these types of models?

A: Always compare against simpler models and use diagnostic plots.

- Protocol for Model Comparison:
  - Fit a standard Negative Binomial (NB) model, a Hurdle model, and a ZINB model.
  - Compare them using AIC/BIC. A lower value (difference >2) suggests better fit.
  - Perform a Vuong test (in the pscl R package) to formally compare ZINB vs. NB or Hurdle models.
- Protocol for Residual Checks:
  - Use randomized quantile residuals (from the DHARMa R package), which are correctly distributed for complex models if the model is specified correctly.
  - Plot these residuals against fitted values and compare to simulated data. P-values from goodness-of-fit tests should be non-significant.

Q4: Which R packages are most robust for implementing these models with microbiome data, considering common issues like separation? A: Current recommended packages address separation and offer flexibility.

Table 2: Key R Package Solutions

| Package | Core Function | Advantage for Microbiome/Addressing Separation |
|---|---|---|
| glmmTMB | glmmTMB() | Supports ZINB & Hurdle; fast; allows complex random effects. |
| pscl | zeroinfl() | Classic package for ZI models; good for initial learning. |
| brglm2 | brglm_fit() | Enables Firth-type bias reduction in glm() fits (method = "brglm_fit"); use it for a separately fitted presence/absence component to handle separation in the zero part. |
| countreg | zerotrunc() | Useful for building custom Hurdle model components. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Controlled Microbiome Experiments

| Item | Function & Rationale |

|---|---|

| Mock Microbial Community Standards (e.g., ZymoBIOMICS) | Contains known, sequenced strains in defined ratios. Critical for distinguishing technical zeros (failed detection) from biological zeros during bioinformatics pipeline validation. |

| Process Control Spike-Ins (e.g., External RNA Controls Consortium - ERCC RNA spikes) | Added to samples pre-extraction. Used to quantify technical noise, batch effects, and detection limits, informing the statistical threshold for "true absence." |

| Inhibitor-Removal DNA Extraction Kits (e.g., MoBio PowerSoil) | Consistent, high-yield extraction is key. Inhibitors cause PCR dropout, a major source of technical zeros. These kits standardize recovery. |

| Unique Molecular Identifiers (UMIs) | Barcodes ligated to amplicons pre-PCR. Corrects for amplification bias and chimera formation, providing more accurate absolute abundance estimates for the count model component. |

| Blocking Primers for Host DNA Depletion | In host-associated studies (e.g., tissue, stool), these suppress host DNA amplification, increasing microbial sequencing depth and reducing false zeros for low-abundance taxa. |

Experimental Protocols

Protocol 1: Incorporating Firth's Correction in a ZINB Model using glmmTMB and brglm2

Objective: Fit a ZINB model while preventing coefficient divergence due to perfect separation in the zero-inflation component.

- Install and load packages: install.packages(c("glmmTMB", "brglm2"))
- Specify the model with a custom family:

Protocol 2: Performing the Vuong Test for Model Comparison

Objective: Statistically compare whether a ZINB model fits significantly better than a standard NB model.

- Fit the competing models: a ZINB model with pscl::zeroinfl(..., dist = "negbin") and a standard NB model with MASS::glm.nb().
- Execute the Vuong test with pscl::vuong(), passing the ZINB model first and the NB model second.
- Interpretation: A significant positive test statistic (p < 0.05) favors the first model passed to vuong() (here the ZINB model). A significant negative statistic favors the second (the NB model). Non-significant means they are not statistically distinguishable.
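The logic behind the test statistic can be sketched from per-observation log-likelihoods. This is the basic, unadjusted form of the statistic with a two-sided normal p-value; packaged implementations may add AIC/BIC-style corrections and report one-sided p-values, so numbers will not necessarily match. The log-likelihood values and the function name vuong_statistic are hypothetical.

```python
import math

def vuong_statistic(loglik_1, loglik_2):
    """Unadjusted Vuong z-statistic from per-observation log-likelihoods.

    Positive values favor model 1, negative values favor model 2.
    Returns (z, two-sided p-value from the standard normal).
    """
    n = len(loglik_1)
    m = [a - b for a, b in zip(loglik_1, loglik_2)]
    mean_m = sum(m) / n
    sd_m = math.sqrt(sum((v - mean_m) ** 2 for v in m) / (n - 1))
    z = math.sqrt(n) * mean_m / sd_m
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    return z, p_two_sided

# Hypothetical pointwise log-likelihoods for a ZINB and an NB fit.
ll_zinb = [-1.0, -2.0, -1.5, -0.5, -1.2, -0.8]
ll_nb = [-1.5, -2.1, -2.5, -0.9, -1.1, -1.6]

z, p = vuong_statistic(ll_zinb, ll_nb)
z_swapped, _ = vuong_statistic(ll_nb, ll_zinb)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")
```

Swapping the argument order flips the sign of the statistic, which is why the interpretation depends on which model is passed first.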

Visualizations

Title: Model Selection & Separation Troubleshooting Workflow

Title: Sources of Zeros in Microbiome Data

Troubleshooting Guides & FAQs

Q1: When running ANCOM-BC, I encounter the error: "Error in solve.default(est$inv_hessian) : system is computationally singular." What causes this and how can I resolve it?

A: This error indicates perfect separation or multicollinearity in your design matrix, often due to a metadata variable that completely predicts a taxon's presence/absence. This is a core challenge this article addresses.

- Solution: First, check your metadata for near-zero variance predictors using nearZeroVar() from the caret R package. Remove or combine such categories. Second, use model.matrix() to inspect your design matrix for linear dependencies. Simplify your model by removing collinear variables. Consider using ancombc2() with the group argument for a less parameterized model.
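The design matrix check amounts to comparing the matrix rank with its number of columns. The sketch below does this with plain Gaussian elimination; in R the same check is typically qr(model.matrix(...))$rank. The two small design matrices (intercept, disease indicator, batch indicator) and the function name matrix_rank are made up for illustration.

```python
def matrix_rank(rows, tol=1e-9):
    """Rank of a matrix (list of rows) via Gaussian elimination with pivoting."""
    a = [list(map(float, r)) for r in rows]
    n_rows, n_cols = len(a), len(a[0])
    rank = 0
    for col in range(n_cols):
        # Pick the row with the largest entry in this column as the pivot.
        pivot = max(range(rank, n_rows), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < tol:
            continue  # no usable pivot: this column depends on earlier ones
        a[rank], a[pivot] = a[pivot], a[rank]
        for r in range(rank + 1, n_rows):
            factor = a[r][col] / a[rank][col]
            for c in range(col, n_cols):
                a[r][c] -= factor * a[rank][c]
        rank += 1
        if rank == n_rows:
            break
    return rank

# Columns: intercept, disease, batch. Here batch is identical to disease.
confounded = [[1, 1, 1], [1, 1, 1], [1, 0, 0], [1, 0, 0]]
# Same columns, but batch varies within each disease group.
balanced = [[1, 1, 0], [1, 1, 1], [1, 0, 0], [1, 0, 1]]

for name, design in [("confounded", confounded), ("balanced", balanced)]:
    rank = matrix_rank(design)
    status = "rank-deficient" if rank < len(design[0]) else "full rank"
    print(f"{name}: rank {rank} of {len(design[0])} columns ({status})")
```

A rank below the column count means at least one covariate is a linear combination of the others and should be dropped or merged before fitting.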

Q2: The metagenomeSeq fitZig() model fails to converge or yields extremely large coefficients/standard errors for some taxa. What does this signify?

A: This is a classic symptom of perfect separation, where a taxon is only present in one experimental group. The ZIG model's logit component for presence-absence becomes unstable.

- Solution: Implement zigControl() to increase the maximum iterations (maxit) to 100. More fundamentally, pre-filter taxa with very low prevalence (e.g., present in < 10% of samples in any group) using filterData(..., present = X). This removes the taxa causing separation issues, consistent with this article's focus on robust method application.

Q3: ALDEx2 outputs seem highly variable between runs on the same data. Is this normal, and how can I ensure stable results?

A: Yes, some variability is expected due to the Monte Carlo sampling of the Dirichlet distribution. Excessive variability, however, can be exacerbated by sparse, separable data.

- Solution: Increase the number of Monte Carlo instances (e.g., mc.samples=512, above the default of 128) for more stable estimates. Use set.seed() before aldex() for reproducibility. ALDEx2 already handles zeros through its Dirichlet Monte Carlo sampling; in addition, calling aldex.clr(..., denom="iqlr") instead of the default denom="all" reduces sensitivity to separation artifacts.

Q4: How do I choose the appropriate tool when I suspect perfect separation in my dataset?

A: The choice depends on your data's characteristics and the question.

- For high-resolution, log-ratio based testing: Use ALDEx2 with denom="iqlr". It is robust to separation but provides compositional, not absolute, differences.
- For absolute abundance estimation and differential testing: Use ANCOM-BC. It directly addresses bias from sampling fraction and can flag structural zeros (taxa absent from an entire group).
- For modeling excess zeros with a mixed model: Use metagenomeSeq's ZIG. It is powerful but requires careful filtering to avoid separation-induced instability, as discussed above.

Table 1: Comparative Performance of Tools on Simulated Data with Perfect Separation

| Tool | Control of False Positive Rate (FPR) | Sensitivity to Separation | Recommended Pre-processing Step |

|---|---|---|---|

| ANCOM-BC | High (≤0.05) | Moderate-High | Check design matrix rank |

| metagenomeSeq ZIG | Moderate (can be >0.1) | Very High | Prevalence filtering (e.g., >10%) |

| ALDEx2 | High (≤0.05) | Low | Use IQLR denominator |

Table 2: Key Parameters for Stabilizing Models

| Tool | Critical Parameter | Default Value | Recommended Value for Separation Issues |
|---|---|---|---|
| ANCOM-BC | group (in v2) | NULL | Use for multi-group comparisons |
| metagenomeSeq | present in filterData() | 1 | 5-10 (minimum sample count) |
| ALDEx2 | denom | "all" | "iqlr" or "zero" |
| ALDEx2 | mc.samples | 128 | 512 or higher |

Experimental Protocols

Protocol 1: Diagnosing Perfect Separation with ANCOM-BC

- Load Data: Prepare a phyloseq object (ps) and a sample metadata dataframe.
- Check Design: Run model.matrix(~ primary_condition + covariate, data = metadata). Check output for columns with all zeros or that are linear combinations of others.
- Run ANCOM-BC v2: out <- ancombc2(data = ps, assay_name = "counts", tax_level = "Genus", fix_formula = "primary_condition + covariate", rand_formula = NULL, group = "primary_condition", zero_cut = 0.90)
- Diagnose: Examine out$res$diff_abn. If results are NA or errors occur, simplify the fix_formula.

Protocol 2: Robust Analysis with ALDEx2 for Compositional Effects

- Convert Data: Create input matrix x (features x samples) and a vector conds of group labels, one per sample.
- Generate CLR Instances: x.clr <- aldex.clr(x, conds, mc.samples=512, denom="iqlr", verbose=TRUE)
- Run Statistical Test: x.test <- aldex.ttest(x.clr, paired.test=FALSE)
- Effect Size Calculation: x.effect <- aldex.effect(x.clr, include.sample.summary=FALSE)
- Interpret: Combine the x.test and x.effect data frames. Use effect together with we.ep (expected Welch's t-test p-value) or we.eBH (its Benjamini-Hochberg-corrected counterpart) for inference.

Visualizations

Title: Tool Selection Workflow for Separation Issues

Title: ALDEx2 Internal Workflow for Robustness

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools

| Item | Function | Example/Note |

|---|---|---|

| R/Bioconductor | Core computational environment for statistical analysis. | Versions 4.3.0+; essential for all featured packages. |

| phyloseq Object | Standardized data container for microbiome analysis in R. | Holds OTU table, taxonomy, sample data, and phylogenetic tree. |

| ANCOM-BC v2.0+ | R package for bias-corrected differential abundance analysis. | Key functions: ancombc2(). Addresses sampling fraction bias. |

| metagenomeSeq | R package for zero-inflated Gaussian model fitting. | Key functions: fitZig(), MRfulltable(). Requires careful filtering. |

| ALDEx2 v1.30+ | R package for compositional differential abundance analysis. | Key functions: aldex.clr(), aldex.ttest(). Uses a prior. |

| caret R Package | Provides helper function to detect problematic metadata variables. | nearZeroVar() identifies predictors causing separation. |

| High-Performance Computing (HPC) Cluster | Enables large Monte Carlo simulations and big data processing. | Critical for ALDEx2 (mc.samples > 512) and large datasets. |

Troubleshooting Guides & FAQs

Q1: My DESeq2 analysis on microbiome count data fails with the error: "GLM fit did not converge. Use nbinomWaldTest with betaPrior=FALSE". What does this mean and how do I fix it?

A: This error often indicates a problem of perfect separation or quasi-complete separation in your design matrix, typically when a covariate perfectly predicts the presence/absence of a microbial taxon. This is common in case-control studies where a microbe is only present in one group.

- Solution: Use DESeq2's nbinomWaldTest function with betaPrior=FALSE as suggested. Alternatively, apply Firth's bias-reduced logistic regression via the logistf R package on presence/absence data, or use a zero-inflated model.
- R Code Example:

Q2: I suspect perfect separation is inflating my log2 fold changes in MaAsLin 2 or similar tools. How can I diagnose this? A: You can diagnose by checking for all-zero or all-non-zero counts in one experimental group.

- Python Code Example (using pandas & NumPy):
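A minimal sketch of this check follows. It uses plain Python lists rather than pandas/NumPy so that it runs without dependencies; the same logic maps directly onto a grouped DataFrame. The function name separation_flags, the taxon names, and the counts are hypothetical.

```python
def separation_flags(counts, groups):
    """Flag groups in which a taxon is all-zero or all-non-zero."""
    flags = {}
    for g in sorted(set(groups)):
        values = [c for c, grp in zip(counts, groups) if grp == g]
        present = sum(1 for v in values if v > 0)
        if present == 0:
            flags[g] = "all zero"
        elif present == len(values):
            flags[g] = "all non-zero"
    return flags

groups = ["case"] * 4 + ["control"] * 4

# Hypothetical counts per taxon, in the same sample order as `groups`.
taxa = {
    "Taxon_A": [0, 0, 0, 0, 12, 3, 0, 7],   # absent in every case
    "Taxon_B": [4, 0, 9, 1, 0, 2, 0, 5],    # mixed in both groups
    "Taxon_C": [8, 2, 5, 1, 0, 0, 0, 0],    # present in every case, absent in controls
}

report = {taxon: separation_flags(counts, groups) for taxon, counts in taxa.items()}
flagged = [taxon for taxon, flags in report.items() if flags]
for taxon in flagged:
    print(taxon, report[taxon])
```

Taxa with any flag are candidates for a penalized or Bayesian model instead of a standard GLM.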

Q3: What are the best-practice R/Python methods to address perfect separation in differential abundance testing?

A: Current best practices involve penalization or Bayesian shrinkage.

- R: glmmTMB with Beta-binomial family. Handles overdispersion and can be more stable.
- Python: statsmodels has no built-in Firth correction, so either call R's logistf from Python (e.g., via rpy2) or implement the penalty yourself.

Table 1: Comparison of Methods for Handling Perfect Separation in Microbiome DA

| Method | Software/Package | Key Principle | Advantage | Limitation |
|---|---|---|---|---|
| Firth's Penalized LR | logistf (R), brglm2 (R) | Penalization of likelihood to remove bias | Eliminates infinite coefficients, reliable p-values | Slower on very large datasets. |
| Bayesian GLM | brms (R), PyMC3 (Python) | Incorporates prior information to regularize estimates | Natural uncertainty quantification, flexible | Computationally intensive, requires prior specification. |
| Wald test w/ betaPrior=FALSE | DESeq2 (R) | Disables prior on coefficients | Simple fix within DESeq2 workflow | May not fully solve inference problems. |
| Zero-Inflated Models | glmmTMB (R), pscl (R) | Models zero counts from two processes | Separates structural zeros from sampling zeros | Complex interpretation, convergence issues. |

Experimental Protocol: Addressing Separation in a Case-Control Study

Title: Protocol for Differential Abundance Analysis with Perfect Separation Checks

1. Data Preprocessing & Quality Control:

- Input: Raw ASV/OTU count table, sample metadata.

- Filtering: Remove taxa with less than 10 total reads or present in <10% of samples.

- Normalization: Apply a variance-stabilizing transformation (e.g., DESeq2::varianceStabilizingTransformation) or centered log-ratio (CLR) transformation.

2. Separation Diagnostic Step:

- For each taxon and each experimental group, test if counts are all zero or all non-zero using the R/Python code from FAQ #2.

- Flag taxa exhibiting perfect separation.

3. Model Fitting with Correction:

- For non-separated taxa: Proceed with standard negative binomial models (e.g., DESeq2, edgeR).
- For separated taxa: Apply a penalized method.
  - R Implementation: Use the logistf package on presence/absence data.
  - Workflow: For taxon i, model Presence/Absence_i ~ Group + Covariates.

4. Result Synthesis:

- Combine p-values and effect estimates from both standard and penalized analyses.

- Apply multiple testing correction (e.g., Benjamini-Hochberg FDR) across all tests.

Visualizations

Title: DA Workflow with Separation Check

Title: Separation Problems & Solutions

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Computational DA Analysis

| Item | Function in Analysis | Example/Note |
|---|---|---|
| R DESeq2 Package | Primary tool for negative binomial-based DA testing. | Use betaPrior=FALSE and cooksCutoff adjustments for stability. |
| R logistf / brglm2 | Implements Firth's bias-reduced logistic regression for separated data. | Essential for presence/absence modeling of zero-inflated taxa. |
| R phyloseq / Python qiime2 | Data container and ecosystem for handling microbiome data structures. | Enforces tidy organization of counts, taxonomy, and sample data. |
| Python SciPy / statsmodels | Core libraries for statistical testing and model fitting in Python. | Used for implementing custom permutation or conditional tests. |
| Bayesian MCMC Library (e.g., brms, PyMC3) | Fits models with explicit priors that regularize extreme estimates. | Useful for sophisticated hierarchical models incorporating prior knowledge. |
| High-Performance Computing (HPC) Cluster | Provides resources for computationally intensive bootstrapping or Bayesian fits. | Necessary for large meta-analyses or strain-level datasets. |

Preventing False Discoveries: A Practical Guide to Model Optimization and Diagnostics

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: My differential abundance analysis (e.g., with DESeq2 or MaAsLin2) fails due to "perfect separation" errors. What does this mean and which preprocessing step should I adjust first?

A: Perfect separation occurs when a microbe is present (non-zero) exclusively in one experimental group (e.g., only in cases, never in controls). The likelihood then has no finite maximum for the group coefficient, so the fit diverges or reports extreme estimates. Your first adjustment should be prevalence filtering. Filter out taxa with very low prevalence (e.g., present in less than 10-20% of samples in any group) before analysis. This removes the most likely candidates causing separation.

Q2: Does rarefaction help prevent separation, and when should I use it versus CLR?

A: Rarefaction can reduce the risk by removing low-count samples and shrinking many low counts to zero, but it does not eliminate it. CLR transformation (after adding a pseudocount) avoids separation in the modeling step because the transformed values are continuous and no longer contain zeros, although the result then depends on how the zeros were replaced. Use rarefaction if your goal is to address uneven sequencing depth for alpha/beta diversity analyses. Use CLR if you plan to use compositional-aware methods (e.g., for linear models like MaAsLin2 with a CLR transform) and wish to retain all samples.

Q3: After CLR transformation with a pseudocount, my results seem highly sensitive to the pseudocount value. How do I choose it?

A: This is a known issue. A fixed pseudocount is a simple additive form of zero replacement. Best practice is to use a model-based replacement, such as the Bayesian-multiplicative replacement in the zCompositions::cmultRepl() R function, instead of a fixed, arbitrary pseudocount (e.g., 1). If you must use a fixed value, conduct a sensitivity analysis across a range (e.g., 0.5, 1, minNonZero/2) and report the stability of your key findings.
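The sensitivity analysis can be sketched as follows: apply the CLR transform to one sample under several pseudocounts and compare the transformed value of a zero-count taxon. The sample counts are invented for illustration, and the three pseudocounts mirror the range suggested above (0.5, 1, and half the smallest non-zero count).

```python
import math

def clr(counts, pseudocount):
    """Centered log-ratio transform of one sample after adding a pseudocount."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]

# Hypothetical sample: the first taxon was not observed.
sample = [0, 10, 100, 1000]
min_nonzero = min(c for c in sample if c > 0)
pseudocounts = [0.5, 1.0, min_nonzero / 2]

# CLR value of the zero-count taxon under each pseudocount.
zero_taxon_clr = {pc: clr(sample, pc)[0] for pc in pseudocounts}
for pc, value in zero_taxon_clr.items():
    print(f"pseudocount {pc}: CLR of zero taxon = {value:.3f}")
```

The CLR value of the unobserved taxon shifts substantially with the pseudocount while abundant taxa barely move, so conclusions that hinge on zero-heavy taxa deserve the stability check described above.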

Q4: I've applied aggressive filtering, but separation errors persist. What are my options?

A: Consider these steps:

- Aggregate taxa at a higher taxonomic rank (e.g., Genus instead of ASV/OTU).
- Switch your model: Use a zero-inflated or hurdle model (e.g., glmmTMB, MAST) explicitly designed for sparse data with two processes: presence/absence and abundance.
- Use a separation-penalized model: Employ methods like Firth's bias-reduced logistic regression (available in the logistf R package) or brglm2, which provide finite coefficient estimates even under separation.

Q5: How do I decide on the prevalence and abundance filtering thresholds?

A: There is no universal standard. Thresholds should be informed by your data and experimental design. A common strategy is:

- Prevalence: Filter taxa present in < X% of samples, where X is often 10% for large cohorts or 20% for smaller studies (< 50 samples/group).

- Abundance (Total Count): Filter taxa with < Y total reads across all samples (Y often ranges from 10 to 50).

See the comparative table below for guidance.
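The two thresholds can be applied together as in the sketch below. It is written in plain Python purely to make the rule explicit; the function `filter_taxa`, its parameters, and the toy table are hypothetical, and the per-group rule shown (keep a taxon if it reaches the prevalence threshold in at least one group) is one possible reading of "per group" filtering.

```python
def filter_taxa(counts, groups, min_prevalence=0.10, min_total=10):
    """Keep taxa that pass a total-count floor and a per-group prevalence floor.

    A taxon is kept if its total count is >= min_total and it is present
    (count > 0) in at least min_prevalence of the samples of at least one group.
    """
    group_names = sorted(set(groups))
    kept = {}
    for taxon, values in counts.items():
        if sum(values) < min_total:
            continue  # fails the abundance (total count) filter
        prevalences = []
        for g in group_names:
            in_group = [v for v, label in zip(values, groups) if label == g]
            prevalences.append(sum(v > 0 for v in in_group) / len(in_group))
        if max(prevalences) >= min_prevalence:
            kept[taxon] = values
    return kept


# Hypothetical toy data: 10 controls followed by 10 cases
groups = ["control"] * 10 + ["case"] * 10
counts = {
    "common": [5, 3, 0, 8, 2, 4, 0, 6, 1, 7] + [4, 0, 6, 1, 7, 2, 9, 0, 3, 5],
    "rare": [0] * 9 + [1] + [0] * 10,                        # total count of 1
    "sporadic": [0] * 10 + [0, 0, 30, 0, 0, 0, 0, 0, 0, 0],  # one sample only
    "one_sided": [0] * 10 + [4, 6, 0, 5, 3, 0, 7, 2, 0, 8],  # cases only
}

kept = filter_taxa(counts, groups, min_prevalence=0.2, min_total=10)
print(list(kept))  # ['common', 'one_sided']
```

Note that "one_sided" survives this rule even though it is absent from all controls, so filtering of this kind removes sporadic taxa but does not by itself remove every separated feature.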

Table 1: Impact of Preprocessing Methods on Separation Risk & Data Properties

| Method | Primary Purpose | Effect on Separation Risk | Key Parameter Choices | Best Suited For |
|---|---|---|---|---|
| Rarefaction | Normalize sequencing depth | Reduces (by introducing zeros) | Rarefaction depth (use minimum reasonable sample depth) | Alpha/Beta diversity metrics; between-sample comparisons when depth varies widely. |
| Prevalence Filtering | Remove sporadically observed taxa | Significantly Reduces | Prevalence threshold (e.g., 10%), apply per group. | First-line defense against separation; all downstream analyses. |
| Abundance Filtering | Remove low-count noise | Moderately Reduces | Minimum total count (e.g., 10 reads) or mean relative abundance. | Reducing multiple testing burden and noise. |
| CLR + Pseudocount | Compositional data transformation | Eliminates (zeros are handled) | Pseudocount value or imputation method. | Compositional-aware differential abundance (ANCOM-BC, MaAsLin2, linear models). |
| Taxonomic Aggregation | Reduce feature dimensionality | Reduces (by pooling counts) | Taxonomic rank (e.g., Genus, Family). | Simplifying models when ASV/OTU-level separation is problematic. |

Table 2: Recommended Troubleshooting Protocol for Separation Errors

| Step | Action | Tool/Function Example | Expected Outcome |
|---|---|---|---|
| 1 | Apply prevalence filtering (per group). | phyloseq::filter_taxa() or microViz::tax_filter() | Removal of taxa exclusive to one group. |
| 2 | Apply mild abundance filtering. | phyloseq::filter_taxa() | Removal of ultra-low count noise. |
| 3 | If error persists, aggregate data. | phyloseq::tax_glom() | Fewer features, lower sparsity. |
| 4 | If error persists, switch analysis method. | Use ANCOM-BC, MaAsLin2 (with CLR), or logistf | Successful model fitting with stable estimates. |

Experimental Protocols

Protocol 1: Standardized Preprocessing Pipeline to Mitigate Separation

- Input: Raw ASV/OTU count table (phyloseq object or similar).

- Step 1 - Initial Filtering:

  - Remove samples with total reads < 1000 (or a study-specific minimum).

  - Remove taxa with total reads < 10 across all samples.

- Step 2 - Prevalence Filtering (Critical for Separation):

  - For each experimental group (e.g., Control, Treatment), calculate the prevalence of each taxon.

  - Retain only taxa present in at least 15% of samples in any one group. (Implement via microViz::tax_filter(min_prevalence = 0.15), run on each group's samples, because the function computes prevalence over whatever samples it is given.)

- Step 3 - Choose Path:

  - Path A (for Diversity): Perform rarefaction to an even depth (e.g., the minimum reasonable sample depth; see Table 1). Proceed to alpha/beta diversity.

  - Path B (for Differential Abundance):

    a. Apply Centered Log-Ratio (CLR) transformation after Bayesian-multiplicative replacement of zeros (zCompositions::cmultRepl() followed by log(x / g(x)), where g(x) is the geometric mean of the sample).

    b. Alternatively, use a pseudocount of half the minimum non-zero count (minNonZero/2) before CLR, and document sensitivity.

- Step 4 - Analysis: Use compositionally robust tools like ANCOM-BC, MaAsLin2 (specifying the CLR-transformed data), or ALDEx2.
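Path B of the protocol above (zero replacement followed by log(x / g(x))) can be illustrated with a small sketch. It uses a simple, non-Bayesian multiplicative replacement with a fixed `delta`, which is an assumption made for brevity and is not the algorithm implemented by zCompositions::cmultRepl(); the function names and the sample are hypothetical.

```python
import math


def multiplicative_replacement(counts, delta=0.5):
    """Replace zeros in one sample and return proportions that sum to 1.

    Zeros become delta / total; non-zero proportions are scaled down by the
    total mass given to the zeros, so their ratios to each other are preserved.
    """
    total = sum(counts)
    zero_prop = delta / total
    n_zeros = sum(1 for c in counts if c == 0)
    shrink = 1.0 - n_zeros * zero_prop
    return [zero_prop if c == 0 else (c / total) * shrink for c in counts]


def clr(proportions):
    """Centered log-ratio: log(x / g(x)), with g(x) the geometric mean."""
    logs = [math.log(p) for p in proportions]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]


# Hypothetical sample with two zero counts
sample = [0, 120, 30, 0, 850]
props = multiplicative_replacement(sample)
clr_values = clr(props)
print([round(v, 3) for v in clr_values])
```

Because the replacement is multiplicative, the ratio between the observed taxa (120 to 30 here) is unchanged, which is the property that makes this family of methods preferable to adding a constant to every count.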

Protocol 2: Applying Firth's Penalized Regression for Separated Features

- Input: A specific taxon count vector (after moderate filtering) and metadata.

- Model Setup: The goal is to model Taxon_Presence_Absence ~ Group + Covariates.

- Procedure:

  - In R, install the logistf package.

  - Use the function: fit <- logistf(Taxon_Binary ~ Group + Age + Sex, data = your_metadata), where Taxon_Binary is the 0/1 presence/absence indicator for the taxon, stored as a column of your_metadata.

  - Examine the summary summary(fit) for finite coefficients and p-values.

- Interpretation: The coefficients are log-odds ratios from a bias-reduced logistic regression, providing valid inference even when the taxon is perfectly associated with the group.
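To show why the penalized estimate stays finite, here is a minimal sketch of Firth's modified-score iteration for the simplest case: an intercept plus one binary group indicator, with no covariates. It is written in plain Python for illustration only and is not the logistf implementation, which handles general designs and also provides inference on the coefficients. The function name and the toy data are hypothetical.

```python
import math


def firth_logistic_binary(y, g, tol=1e-10, max_iter=200):
    """Firth-penalized logistic regression of y on an intercept and a 0/1 predictor g.

    Iterates beta <- beta + I(beta)^-1 U*(beta), where U* is the score with
    Firth's adjustment h_i * (0.5 - p_i) and h_i are the hat-matrix diagonals.
    Returns (intercept, slope).
    """
    b0, b1 = 0.0, 0.0
    for _ in range(max_iter):
        p = [1.0 / (1.0 + math.exp(-(b0 + b1 * gi))) for gi in g]
        w = [pi * (1.0 - pi) for pi in p]
        # Fisher information X'WX for the two-column design [1, g]
        a = sum(w)
        b = sum(wi * gi for wi, gi in zip(w, g))
        d = sum(wi * gi * gi for wi, gi in zip(w, g))
        det = a * d - b * b
        inv00, inv01, inv11 = d / det, -b / det, a / det
        # Hat diagonals h_i = w_i * x_i' (X'WX)^-1 x_i
        h = [wi * (inv00 + 2.0 * inv01 * gi + inv11 * gi * gi) for wi, gi in zip(w, g)]
        # Firth-modified score contributions
        r = [yi - pi + hi * (0.5 - pi) for yi, pi, hi in zip(y, p, h)]
        u0 = sum(r)
        u1 = sum(ri * gi for ri, gi in zip(r, g))
        step0 = inv00 * u0 + inv01 * u1
        step1 = inv01 * u0 + inv11 * u1
        b0, b1 = b0 + step0, b1 + step1
        if max(abs(step0), abs(step1)) < tol:
            break
    return b0, b1


# Hypothetical separated taxon: present in 6 of 10 controls, 0 of 10 cases
g = [0] * 10 + [1] * 10
y = [1] * 6 + [0] * 4 + [0] * 10

intercept, slope = firth_logistic_binary(y, g)
print(f"intercept = {intercept:.4f}, group log-odds ratio = {slope:.4f}")
```

For this two-group design the penalized estimate coincides with adding 0.5 to each cell of the 2x2 presence-by-group table, so the group coefficient is a finite negative number where ordinary maximum likelihood would diverge to minus infinity.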

Visualizations

Decision Workflow for Mitigating Separation

Causes & Solutions for Separation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages

| Tool/Package | Primary Function | Role in Mitigating Separation |
|---|---|---|
| phyloseq (R) | Data structure & basic preprocessing. | Enables easy filtering (prevalence/abundance) and taxonomic aggregation. |
| microViz (R) | Extended microbiome visualization & analysis. | Provides tax_filter() for streamlined prevalence filtering, which can be applied to each group's samples. |
| zCompositions (R) | Imputation for compositional data. | Implements cmultRepl() for Bayesian-multiplicative zero replacement prior to CLR, a robust alternative to pseudocounts. |
| ANCOM-BC (R) | Differential abundance testing. | Uses a linear model with bias correction on log-ratios, inherently handling compositionality and reducing separation risk. |
| MaAsLin2 (R) | Multivariate association modeling. | Allows specification of CLR-transformed data, avoiding models on raw counts prone to separation. |
| logistf / brglm2 (R) | Firth's penalized regression. | Directly fits logistic models for binary presence/absence outcomes, providing finite estimates under separation. |
| QIIME 2 | Pipeline for microbiome analysis. | Offers feature-table filter-features for prevalence/abundance filtering and compositional plugin options. |

Covariate Adjustment and Confounder Control to Reduce Extreme Patterns

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: General Concepts and Application

Q1: What is the primary purpose of covariate adjustment in microbiome differential abundance analysis, and how does it relate to extreme patterns?

A: Covariate adjustment is a statistical method used to control for the influence of extraneous variables (confounders) that may distort the observed relationship between an exposure (e.g., treatment) and an outcome (microbial abundance). Unadjusted confounders can create spurious associations or mask true signals, leading to extreme and unreliable effect size estimates, including perfect separation events where a taxon appears to be exclusively present or absent in one group.

Q2: What common confounders should I consider in human microbiome studies?

A: Key confounders vary by study design but often include:

- Demographics: Age, Sex, BMI, Genetic background.

- Technical Factors: Sequencing depth (library size), batch, DNA extraction kit, sequencing run.

- Clinical & Lifestyle: Diet, medication (especially antibiotics/proton pump inhibitors), smoking status, disease severity sub-scores, sample collection method.

- Geographic/Ecological: Site of collection, geography, season.

Q3: My model is failing due to perfect separation after adding several covariates. What should I do?

A: This indicates numerical instability, often due to high-dimensional, sparse data combined with many predictors: each added covariate splits the samples into smaller strata, which makes it more likely that some combination of predictors perfectly predicts the outcome.

- Troubleshooting Steps:

  - Pre-filtering: Remove taxa with extremely low prevalence (e.g., present in <10% of samples) before analysis to reduce sparse rows.

  - Regularization: Use penalized methods (e.g., glmnet with a lasso or ridge penalty) that shrink coefficients and handle collinearity.

  - Confounder Aggregation: Use dimension reduction (PCA) on potential confounders and adjust for the top principal components.

  - Prior Modification: For Bayesian models (e.g., brms), use more informative, regularizing priors (e.g., Cauchy, Horseshoe) to stabilize estimation.

  - Model Simplification: Re-evaluate if all covariates are necessary; use domain knowledge or change-in-estimate criteria for selection.

FAQ: Technical Implementation & Software

Q4: How do I implement covariate adjustment in common differential abundance tools?

A: See the protocol table below.

Table 1: Implementation Protocols for Covariate Adjustment

| Software/Package | Key Function | Covariate Adjustment Syntax Example | Note on Extreme Patterns |
|---|---|---|---|
| DESeq2 | DESeq() | design = ~ Batch + Age + Treatment | Shrinks dispersion estimates and flags count outliers via Cook's distance to mitigate extreme counts; the regularized log transformation (rlog) is intended for visualization rather than testing. Requires raw counts. |
| edgeR | glmQLFit() | design <- model.matrix(~Batch + Age + Treatment) | Uses quasi-likelihood F-tests via glmQLFTest(), which are more stable than LRT with many covariates. |
| MaAsLin2 | Maaslin2() | fixed_effects = c("Treatment", "Age"), random_effects = c("Subject") | Supports fixed and random effects, normalization, and transformation. Outputs warn on singular fits. |
| ANCOM-BC | ancombc() | formula = "Treatment + Age" | Specifically addresses sampling fraction bias and uses a bias correction term. |
| MMINP | MMINP.train() | meta = metadata[, c("Age", "BMI")] | A novel method designed for metagenomic data; incorporates feature network. |
| limma | lmFit() | design <- model.matrix(~0 + Group + Age) | Use with voom (for counts) or on CLR-transformed data. Very stable linear modeling. |

Q5: I am using a compositional data approach (ALR/CLR). How do I adjust for confounders?

A: After performing a Centered Log-Ratio (CLR) transformation using a tool like compositions or robCompositions, you can use standard linear models (e.g., limma, lm) with the transformed abundances as the response variable.

- Protocol:

  - Impute Zeros: Use a multiplicative replacement (zCompositions::cmultRepl) or geometric Bayesian method.

  - CLR Transform: clr_values <- compositions::clr(imputed_counts).

  - Linear Model: Fit lm(clr_values[, taxon] ~ Treatment + Age + BMI, data=meta).

  - Multivariate Control: For global testing, use PERMANOVA (vegan::adonis2) with the formula clr_dist ~ Treatment + Age + BMI, where clr_dist is a distance matrix computed from the CLR values.
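The effect of adding a covariate to the linear model on CLR values can be seen in a small worked example. The sketch below fits ordinary least squares through the normal equations in plain Python; it stands in for lm() only to show the arithmetic. The data are hypothetical and built without noise so that the true coefficients are known, and the helper names are assumptions of this example.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(design, response):
    """Least-squares coefficients from the normal equations X'X beta = X'y."""
    k = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(design, response)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Hypothetical data: treated subjects are older, so age confounds treatment
treatment = [0, 0, 0, 0, 1, 1, 1, 1]
age = [30, 35, 40, 45, 50, 55, 60, 65]
# CLR abundance of one taxon, constructed as 0.5 + 1.0*treatment + 0.02*age
clr_taxon = [0.5 + 1.0 * t + 0.02 * a for t, a in zip(treatment, age)]

unadjusted = ols([[1, t] for t in treatment], clr_taxon)
adjusted = ols([[1, t, a] for t, a in zip(treatment, age)], clr_taxon)

print(f"unadjusted treatment effect: {unadjusted[1]:.3f}")
print(f"age-adjusted treatment effect: {adjusted[1]:.3f}")
```

In this constructed example the unadjusted model attributes part of the age effect to treatment, while the adjusted model recovers the treatment coefficient used to build the data.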

Experimental Protocol: A Standardized Workflow for Confounder-Adjusted Analysis

Title: Integrated Workflow for Confounder Control in Microbiome DA.

Detailed Methodology:

- Step 1 - Pre-processing & Filtering:

  - Input: Raw ASV/OTU count table and metadata.

  - Remove taxa with prevalence < 10% across all samples.

  - For compositional methods, perform careful zero imputation.

- Step 2 - Confounder Identification:

  - Univariate Screening: Test association of each potential covariate with the primary exposure (Treatment/Group) using Chi-squared, t-test, or regression. Retain those with p < 0.2.

  - DAG Construction: Use domain knowledge to draw a Directed Acyclic Graph (DAG) with tools like dagitty to identify a minimal sufficient adjustment set.

- Step 3 - Model Fitting with Adjustment:

  - Select appropriate tool from Table 1 (e.g., DESeq2 for raw counts).

  - Construct design matrix incorporating the adjustment set from Step 2.

  - Run the model. If convergence warnings occur, apply Troubleshooting Steps from Q3.

- Step 4 - Sensitivity Analysis:

  - E-value Calculation: Assess robustness to unmeasured confounding by calculating E-values for significant taxa.

  - Model Comparison: Compare effect sizes and significance of the primary exposure between adjusted and unadjusted models. Large shifts indicate strong confounding.

- Step 5 - Visualization & Reporting:

  - Plot normalized abundances by group and covariate (e.g., via DESeq2::plotCounts with intgroup=c("Treatment", "Age")).

  - Report both unadjusted and adjusted results in summary tables.
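The two checks in the sensitivity analysis step of this workflow reduce to short formulas. The sketch below, in plain Python, uses the standard E-value expression for a risk ratio, RR + sqrt(RR * (RR - 1)), and a simple percent change between unadjusted and adjusted estimates. It assumes the taxon's effect has already been expressed as, or approximately converted to, a risk ratio; that conversion is not shown, and the function names and inputs are hypothetical.

```python
import math


def e_value(rr):
    """E-value for a risk ratio: RR + sqrt(RR * (RR - 1)), after inverting RR < 1."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    if rr < 1:
        rr = 1.0 / rr  # protective effects are inverted first
    return rr + math.sqrt(rr * (rr - 1.0))


def percent_change(unadjusted, adjusted):
    """Relative change in the exposure estimate after adjustment, in percent."""
    return 100.0 * (adjusted - unadjusted) / unadjusted


# Hypothetical inputs: a risk ratio of 2.0 and an estimate that moves from 1.4 to 1.0
ev = e_value(2.0)
shift = percent_change(1.4, 1.0)
print(f"E-value: {ev:.3f}")                     # E-value: 3.414
print(f"change after adjustment: {shift:.1f}%")  # change after adjustment: -28.6%
```

A larger E-value means a stronger unmeasured confounder would be needed to explain the association away, and a large percent change between the unadjusted and adjusted estimates points to confounding by the measured covariates.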

Visualization: Confounder Control Workflow & Logic

Figure: Workflow for Confounder Adjustment and Error Resolution

Figure: DAG for Microbiome Study Confounding

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Controlled Microbiome DA Experiments

| Item/Category | Function & Relevance to Confounder Control |
|---|---|
| Standardized DNA Extraction Kit | Minimizes technical batch variation, a major confounder. Use the same kit for all samples. |
| Internal Spike-in Controls | Added pre-extraction (e.g., known quantities of foreign cells/DNA) to differentiate technical from biological variation and correct for efficiency biases. |