The CLR Transformation Guide: Mastering Compositional Data Analysis for Microbiome Research & Drug Discovery

This comprehensive guide details the Center Log-Ratio (CLR) transformation for analyzing microbiome sequencing data, which is inherently compositional.

The CLR Transformation Guide: Mastering Compositional Data Analysis for Microbiome Research & Drug Discovery

Abstract

This comprehensive guide details the Center Log-Ratio (CLR) transformation for analyzing microbiome sequencing data, which is inherently compositional. It covers foundational concepts of compositional data analysis (CoDA), step-by-step methodological implementation, common pitfalls and optimization strategies, and comparative validation against other normalization methods. Targeted at researchers, scientists, and drug development professionals, the article provides the practical knowledge needed to apply CLR transformation correctly, ensuring statistically sound and biologically interpretable insights in studies of the human microbiome and its role in health, disease, and therapeutic intervention.

Why Compositional Data Demands CLR: Foundational Principles for Microbiome Analysis

Within the broader thesis advocating for the centered log-ratio (CLR) transformation as a foundational step in robust microbiome data analysis, understanding the nature of compositional data is the primary hurdle. Microbiome sequencing data (e.g., from 16S rRNA gene amplicon or shotgun metagenomics studies) is inherently compositional. The total number of reads per sample (library size) is an artifact of sequencing depth, not a measure of absolute microbial abundance. Consequently, data is typically normalized to relative abundances (proportions), where each sample sums to 1 (or 100%). This is the 'Constant Sum' Constraint. This property induces spurious correlations and distorts distance metrics, making standard statistical methods invalid. The CLR transformation, defined as the logarithm of the ratio of each component to the geometric mean of all components, is a critical step to break this constraint and move data into a Euclidean space amenable to standard analysis, provided zeros are appropriately handled.

Table 1: Simulated Example of Spurious Correlation Induced by Closure (Sum=1)

| Taxon | True Absolute Abundance (Sample A) | True Absolute Abundance (Sample B) | Relative Abundance (Sample A) | Relative Abundance (Sample B) |

|---|---|---|---|---|

| Taxon 1 | 1000 | 1000 | 0.50 | 0.20 |

| Taxon 2 | 500 | 2000 | 0.25 | 0.40 |

| Taxon 3 | 300 | 1500 | 0.15 | 0.30 |

| Taxon 4 | 200 | 500 | 0.10 | 0.10 |

| Total Count | 2000 | 5000 | 1.00 | 1.00 |

Interpretation: In absolute terms, Taxon 1 is unchanged between samples. However, because the total count increased in Sample B (a technical artifact), Taxon 1's relative abundance decreases from 0.50 to 0.20. This creates an artificial negative correlation between Taxon 1 and other taxa, purely due to the closure operation.

Table 2: Key Properties of Raw, Relative, and CLR-Transformed Data

| Property | Raw Count Data | Relative Abundance (Closed) | CLR-Transformed Data |

|---|---|---|---|

| Sum Constraint | No (Variable Total) | Yes (Constant Sum=1) | No (Sum=0) |

| Sample Space | Non-negative Integers | Simplex (0,1] | Real Euclidean Space |

| Covariance Structure | Unconstrained | Distorted (Negative Bias) | Interpretable (Aitchison) |

| Appropriate Stats | Count Models (e.g., Neg. Binomial) | Limited (Compositional Methods) | Standard Euclidean Stats* |

*After zero imputation (e.g., using a multiplicative replacement strategy).

Experimental Protocols

Protocol 1: Standard 16S rRNA Gene Amplicon Data Pre-processing Pipeline Leading to CLR Transformation

Objective: To process raw sequencing reads into a CLR-transformed feature table for downstream statistical analysis.

Materials: See "The Scientist's Toolkit" below. Procedure:

- Demultiplexing & Quality Control: Use

demuxplugin in QIIME 2 to assign reads to samples based on barcodes. Denoise sequences with DADA2 (viaq2-dada2) to correct errors, infer exact amplicon sequence variants (ASVs), and remove chimeras. - Feature Table Construction: Generate an ASV table (BIOM format) containing counts per sample.

- Taxonomic Assignment: Classify ASVs against a reference database (e.g., SILVA, Greengenes) using a classifier like

q2-feature-classifier. - Normalization & Filtering:

a. Filter out ASVs present in less than 10% of samples or with low prevalence.

b. Addressing Zeros: Apply a multiplicative replacement (e.g., using

zCompositionsR packagecmultRepl()function) to impute zeros prior to CLR. This adds a small pseudo-count proportional to the abundance of nonzero components. - CLR Transformation: Compute the geometric mean G(x) of all D components in a sample: G(x) = (x₁ * x₂ * ... * x_D)^(1/D). Then, apply CLR: clr(x) = [ln(x₁/G(x)), ln(x₂/G(x)), ..., ln(x_D/G(x))]. This can be performed using the

mixOmics::clr()function in R orskbio.stats.composition.clrin Python. - Output: A transformed matrix where rows are samples and columns are CLR-transformed ASV abundances, ready for PCA, regression, or correlation networks.

Protocol 2: Validating Compositional Effects via a Spike-in Experiment

Objective: To empirically demonstrate the necessity of CLR transformation using controlled spike-in communities.

Materials: Defined microbial community standards (e.g., ZymoBIOMICS Microbial Community Standard), DNA spike-ins. Procedure:

- Experimental Design: Create a series of samples with a background microbiome community. Spike in a known, varying absolute abundance of a control organism (or synthetic DNA sequences) not present in the background.

- Sequencing: Process all samples simultaneously in a single sequencing run to minimize batch effects.

- Data Analysis: a. Generate relative abundance tables. b. Observe the relative abundance of the spike-in taxon. Despite its known increasing absolute abundance, its relative abundance may appear non-monotonic or decrease due to the constant sum constraint from variations in the background community. c. Apply CLR transformation to the entire dataset (including background and spike-in). d. Correlate the CLR-transformed values of the spike-in taxon with its known log-transformed absolute concentration. The CLR values should show a strong linear relationship, validating its utility for recovering meaningful biological signal.

Visualizations

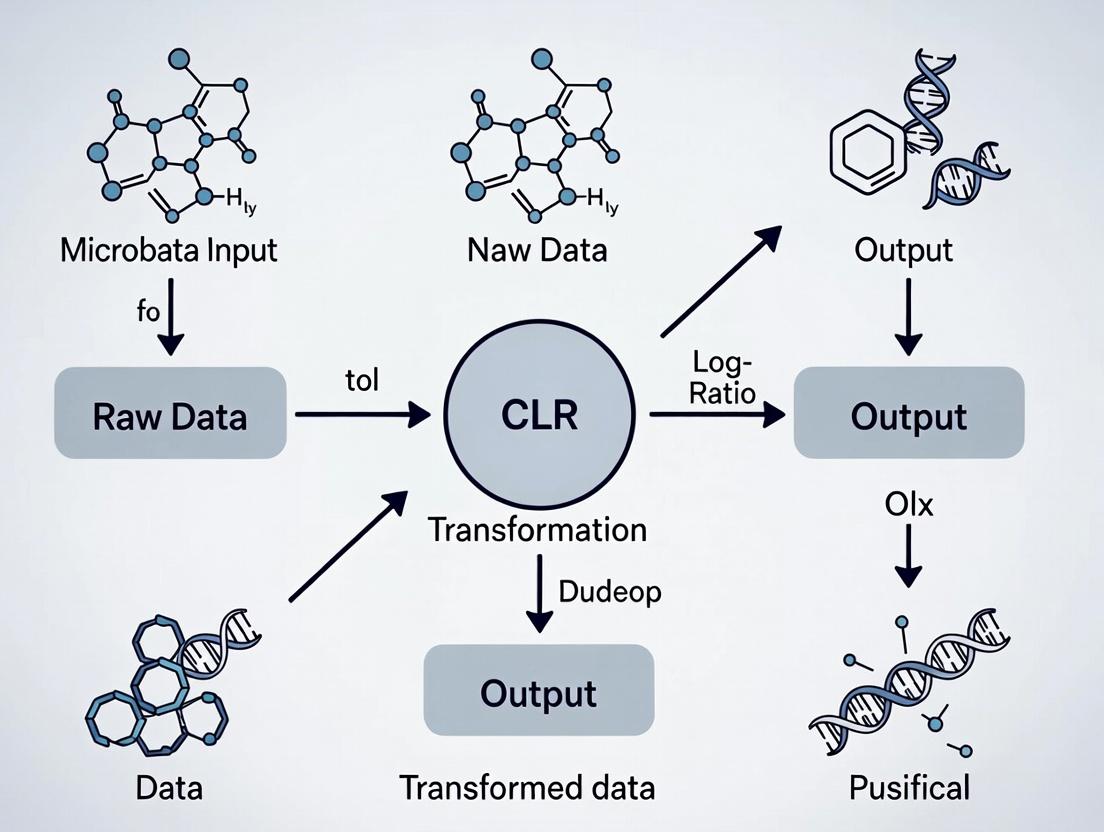

Title: Microbiome Data Analysis Workflow with CLR Transformation

Title: Mathematical Relationship: Simplex to Euclidean Space via CLR

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Compositional Microbiome Analysis

| Item | Function / Relevance |

|---|---|

| ZymoBIOMICS Microbial Community Standard | Defined mock community of known composition. Critical for benchmarking pre-processing pipelines and validating the detection of compositional effects. |

| PowerSoil Pro DNA Extraction Kit | Robust, standardized kit for microbial cell lysis and DNA isolation from complex samples. Reproducibility is key for comparative studies. |

| QIIME 2 (BioBakery) Software Platform | Open-source, reproducible pipeline for microbiome analysis from raw reads to initial visualizations. Essential for standardized pre-processing. |

zCompositions R Package |

Provides functions for dealing with zeros in compositional data, including the cmultRepl function for multiplicative replacement, a prerequisite for CLR. |

CoDaSeq / robCompositions R Packages |

Offer a suite of tools for compositional data analysis, including CLR transformation, outlier detection, and additional imputation methods. |

mixOmics R Package |

Contains optimized clr() function and provides integrative multivariate analysis methods (sPLS-DA) designed for or compatible with CLR-transformed data. |

| Silva or Greengenes Database | Curated 16S rRNA gene reference databases for taxonomic assignment of sequence variants. Accuracy here influences downstream biological interpretation. |

| Phylogenetic Tree (e.g., from SATé) | Used for phylogenetic-aware diversity metrics (UniFrac). CLR-transformed data can also be incorporated into phylogenetic models. |

Compositional data are vectors of positive components representing parts of a whole, carrying only relative information. This is the defining characteristic of microbiome sequencing data, where total read counts (library size) are arbitrary and non-informative. The Aitchison geometry provides the mathematical framework for analyzing such data, based on principles of scale invariance, sub-compositional coherence, and permutation invariance.

The Aitchison Geometry: Principles & Operations

Key operations within the simplex sample space (S^D) are defined below.

Table 1: Core Principles of Aitchison Geometry

| Principle | Mathematical Definition | Implication for Microbiome Data |

|---|---|---|

| Scale Invariance | C(x) = C(αx) for any α>0 | Normalization (e.g., rarefaction) does not change the composition. |

| Sub-compositional Coherence | Analysis of a subset of parts is consistent with the full composition. | Results for a phylogenetic subgroup are consistent with the full community analysis. |

| Permutation Invariance | Analysis is independent of the order of components. | Taxon order in the OTU/ASV table does not affect results. |

Table 2: Aitchison Geometry Operations

| Operation | Formula | Purpose |

|---|---|---|

| Perturbation (⊕) | x ⊕ y = C(x₁y₁, ..., xDyD) | Simplex analogue of addition. Represents a change in composition. |

| Powering (α ⊗ x) | α ⊗ x = C(x₁^α, ..., x_D^α) | Simplex analogue of scalar multiplication. |

| Inner Product | ⟨x, y⟩A = (1/(2D)) ∑{i,j} ln(xi/xj) ln(yi/yj) | Defines distances and angles in the simplex. |

| Aitchison Norm | ‖x‖A = √⟨x, x⟩A | Measure of the magnitude of a composition. |

| Aitchison Distance | dA(x, y) = ‖x ⊖ y‖A = √∑{i,j} [ln(xi/xj) - ln(yi/y_j)]² | Distance between two compositions. |

The Centered Log-Ratio (CLR) Transformation

The CLR transformation is a cornerstone isometric map from the D-dimensional simplex to (D-1)-dimensional real space, central to the thesis context. It is defined as:

clr(x) = [ln(x₁ / g(x)), ln(x₂ / g(x)), ..., ln(x_D / g(x))]

where g(x) = (∏_{i=1}^D x_i)^(1/D) is the geometric mean of all components.

Table 3: Properties of the CLR Transformation

| Property | Description | Relevance to Microbiome Analysis |

|---|---|---|

| Isometry | Preserves Aitchison distances as Euclidean distances. | Enables use of standard Euclidean-based statistical methods (PCA, regression). |

| Singular Covariance | The clr-coordinates sum to zero, leading to a singular covariance matrix. | Requires special handling for multivariate procedures (e.g., use of pseudoinverse). |

| Interpretability | Each coordinate is the log-ratio of a part to the geometric mean of all parts. | Features represent the relative abundance of a taxon compared to the average taxon. |

Protocol: CLR Transformation for Microbiome Data

Objective: To transform raw count or relative abundance data into isometric, real-valued coordinates for downstream analysis.

Input: D-dimensional composition (e.g., ASV/OTU counts after quality control).

Reagents & Software: R (v4.3+), packages compositions, zCompositions, or SpiecEasi.

Procedure:

- Data Preprocessing: Filter low-abundance taxa (e.g., prevalence <10% across samples). Handle zeros using a multiplicative replacement method (e.g.,

cmultReplfromzCompositions) with a small delta (δ=0.65). - Normalization: Convert filtered counts to relative abundances (closed compositions) by dividing each sample vector by its total count.

- Geometric Mean Calculation: For each sample, compute the geometric mean

g(x)of all D components (post-zero handling). - CLR Computation: For each component

iin a sample, computeclr_i = log(x_i / g(x)). - Output: A matrix of size

(n_samples x D)clr-transformed values. Note: This matrix is rank-deficient (columns sum to 0).

Application Notes & Case Protocol: Microbial Dysbiosis Detection

Experimental Workflow

Diagram Title: CLR-Based Microbiome Analysis Workflow

Research Reagent Solutions & Essential Materials

Table 4: Key Reagents & Tools for CoDA Microbiome Analysis

| Item | Function / Description | Example Product / Package |

|---|---|---|

| Zero-Replacement Reagent | Replaces count zeros to allow log-transform, preserving compositional properties. | zCompositions::cmultRepl (R) |

| CLR Transformation Module | Computes isometric log-ratio coordinates from closed compositions. | compositions::clr (R), skbio.stats.composition.clr (Python) |

| CoDA-Capable Stats Package | Performs PCA, regression, and testing on compositional data. | robCompositions (R), prophetic (Python) |

| Compositional Data Simulator | Validates pipelines with known ground-truth compositional effects. | compositions::rDirichlet.acomp (R) |

| High-Contrast Color Palette | Ensures clear visualization of clr-PCA biplots and balances. | ColorBrewer Set2 or Tableau10 |

Detailed Protocol: Identifying Differential Taxa via CLR

Objective: To identify taxa whose relative abundance differs significantly between two groups (e.g., Healthy vs. Disease). Experimental Design: Case-Control study, 16S rRNA gene sequencing.

Procedure:

- Apply CLR Transformation: Follow Protocol 3.1 to obtain clr-matrix

Z(n x D). - Dimensionality Reduction: Perform PCA on the covariance matrix of

Z. Plot PC1 vs. PC2 to visualize sample separation. - Univariate Testing: For each clr-transformed taxon

j, perform a two-sample t-test (or Mann-Whitney) between groups. The clr-value is interpreted as the log-ratio of taxonj's abundance to the geometric mean of all taxa. - Multiple Testing Correction: Apply Benjamini-Hochberg FDR correction to p-values from all D tests.

- Interpretation: A significant taxon with a positive mean clr difference in Group A indicates that taxon is more abundant relative to the microbial average in Group A than in Group B.

Table 5: Example Results from CLR-Based Differential Analysis

| Taxon (ASV ID) | Mean clr (Healthy) | Mean clr (Disease) | clr Difference | p-value (FDR adj.) | Interpretation |

|---|---|---|---|---|---|

| Bacteroides ASV1 | 2.15 | 0.98 | -1.17 | 0.003 | Depleted in Disease |

| Ruminococcus ASV5 | -0.45 | 1.89 | +2.34 | <0.001 | Enriched in Disease |

| Faecalibacterium ASV12 | 1.67 | 1.72 | +0.05 | 0.82 | Not Significant |

Critical Considerations & Limitations

- Zero Handling: Critical pre-processing step. Choice of method (multiplicative, Bayesian) influences results.

- High-Dimensionality: D >> n leads to poorly estimated geometric mean and covariance. Consider alternative log-ratios (e.g., ILR) or regularization.

- Confounding: Variation in the geometric mean can dominate the first CLR component, often correlating with technical factors like sequencing depth.

- Not for Absolute Change: CLR analyzes relative changes. Integrating microbial load (qPCR) is needed for absolute quantification.

What is CLR Transformation? Definition, Mathematical Formulation, and Core Properties.

Definition

The Centered Log-Ratio (CLR) transformation is a compositional data analysis technique used to transform constrained, relative data (like microbiome read counts or OTU tables) into a Euclidean space suitable for standard statistical analysis. It addresses the unit-sum constraint (e.g., all samples sum to 1 or a fixed total) by taking the logarithm of the ratio between each component and the geometric mean of all components within a sample. This transformation is a cornerstone in the analysis of high-throughput sequencing data within the broader thesis on developing robust analytical pipelines for microbiome-host interaction studies in drug development.

Mathematical Formulation

For a composition vector x = (x₁, x₂, ..., x_D) with D components (e.g., microbial taxa), the CLR transformation is defined as:

CLR(x) = [ ln(x₁ / g(x)), ln(x₂ / g(x)), ..., ln(x_D / g(x)) ]

where g(x) is the geometric mean of the composition: g(x) = (∏{i=1}^D xi)^{1/D}

This ensures that the transformed values sum to zero: ∑{i=1}^D CLR(xi) = 0.

A pseudocount (typically 1) is added to all components to handle zeros before transformation: xi' = xi + 1.

Core Properties

- Scale Invariance: Results are independent of the total sequencing depth (library size).

- Sub-compositional Coherence: Analysis of a subset of taxa is consistent with the analysis of the full composition.

- Isometry: Approximates preservation of Aitchison distances in the transformed Euclidean space.

- Zero-Sum Constraint: Transformed values are centered, introducing linear dependency (covariance matrix is singular).

Application Notes and Protocols

Note 1: Data Pre-processing for CLR

CLR transformation is applied to count data after filtering and normalization. A critical step is zero handling via pseudocount addition or imputation.

Protocol: Standard Pre-CLR Workflow

- Filtering: Remove taxa with prevalence < 10% across all samples.

- Normalization (Optional): Convert raw counts to relative abundances (divide by total reads per sample).

- Zero Management: Add a uniform pseudocount of 1 to all abundance values.

- Transformation: Apply the CLR formula using computational tools (e.g.,

clr()function in thecompositionsR package orskbio.stats.composition.clrin Python). - Downstream Analysis: Use transformed data for PCA, regression, or network analysis.

Note 2: Comparative Analysis of Transformations

For microbiome data, CLR is compared against other common transformations.

Table 1: Comparison of Common Transformations for Microbiome Data

| Transformation | Formula (Per Component i) | Handles Zeros | Preserves Euclidean Geometry | Use Case |

|---|---|---|---|---|

| Raw Proportion | ( pi = xi / T ) | No | No | Basic visualization |

| Log10 | ( \log{10}(xi + 1) ) | Yes (pseudocount) | Poor | Simple normalization |

| CLR | ( \ln(x_i / g(\mathbf{x})) ) | Requires imputation | Yes (Aitchison) | PCA, covariance networks |

| ALR | ( \ln(xi / xD) ) | Requires imputation | No (non-isometric) | Regression with reference taxon |

Note 3: Experimental Protocol for Differential Abundance Testing with CLR

This protocol is designed for a case-control study within drug efficacy research.

Title: CLR-based Linear Model for Differential Abundance Objective: Identify taxa whose abundances are associated with a treatment condition. Reagents & Materials:

- Input: Filtered OTU/ASV count table.

- Software: R (v4.3+) with packages

compositions,limma, orMaaslin2. Procedure:

- Apply CLR: Transform the entire filtered count table using the standard protocol above.

- Model Fitting: For each taxon j, fit a linear model:

CLR_j ~ Treatment + Covariate1 + Covariate2. - Hypothesis Testing: Perform t-tests or F-tests on the 'Treatment' coefficient using empirical Bayes moderation (

limma) to improve variance estimates. - Multiple Testing Correction: Apply the Benjamini-Hochberg procedure to control the False Discovery Rate (FDR) at 5%.

- Interpretation: Significantly associated taxa with |log2 fold change| > 1 and FDR < 0.05 are reported as differentially abundant.

Visualizations

CLR Transformation Data Processing Workflow

Core Mathematical Properties of CLR Transformation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for CLR-Based Microbiome Analysis

| Item | Function / Role | Example Product/Software |

|---|---|---|

| Pseudocount Reagent | Adds a small constant to handle zero counts, enabling log-transformation. | Uniform addition of 1. Imputation: zCompositions R package. |

| Geometric Mean Calculator | Computes the central tendency measure used as the CLR divisor. | gm_mean() function in R; scipy.stats.mstats.gmean in Python. |

| CLR Transformation Software | Applies the transformation to entire data matrices efficiently. | R: compositions::clr(). Python: skbio.stats.composition.clr. |

| Compositional Covariance Estimator | Calculates robust covariance matrices from CLR data for network inference. | SpiecEasi package with mbMethod="clr". |

| Compositional PCA Tool | Performs principal component analysis on CLR-transformed data. | prcomp function in R on CLR matrix; sklearn.decomposition.PCA. |

| Differential Abundance Suite | Fits linear models to CLR data for association testing. | Maaslin2, limma with voom/limma-trend on CLR output. |

Within the broader thesis on Centered Log-Ratio (CLR) transformation for microbiome data analysis, a fundamental obstacle is the nature of raw amplicon sequence variant (ASV) or operational taxonomic unit (OTU) count data. Direct analysis of these raw reads is compromised by three interrelated issues: Sparsity, the challenge of insufficient Sequencing Depth, and the resulting propensity for False Correlations. This document details these problems, provides protocols for their diagnosis, and introduces preprocessing steps essential for robust downstream analysis, including CLR transformation.

Table 1: Quantitative Manifestations of Raw Read Problems in Typical Microbiome Studies

| Problem | Typical Metric | Range in Typical 16S rRNA Studies | Impact on Downstream Analysis | ||

|---|---|---|---|---|---|

| Sparsity | Percentage of Zero Counts | 50-90% of the sample-by-feature matrix | Inflates beta-diversity distances; violates assumptions of many statistical models. | ||

| Insufficient Depth | Total Reads per Sample | 10,000 - 100,000 reads (highly variable) | Fails to capture rare taxa; leads to undersampling bias. | ||

| False Correlations | Spurious Correlation Coefficient | Can exceed | ±0.7 | in simulation | Misleads network inference, biomarker discovery, and mechanistic hypotheses. |

Table 2: Causes and Consequences of False Correlations

| Primary Cause | Mechanism | Resulting Artifact |

|---|---|---|

| Compositionality | Sum constraint (e.g., library size) creates negative dependence between features. | A decrease in one taxon's proportion artificially inflates the proportions of others. |

| Sparsity-Induced | Co-occurrence of zeros (due to undersampling, not biology) is misinterpreted as positive association. | Non-coexisting taxa appear correlated. |

| Depth Heterogeneity | Large variation in library sizes across samples distorts covariance structure. | Correlations reflect sampling effort rather than biological relationships. |

Diagnostic Protocols

Protocol 3.1: Assessing Sparsity and Sequencing Depth

Objective: To quantify data sparsity and depth variation within a dataset prior to analysis. Materials:

- Sample-by-feature count table (e.g., ASV table).

- Statistical software (R, Python).

Procedure:

- Calculate Library Size: For each sample i, compute total reads:

LibSize_i = sum(counts_i). - Calculate Sparsity: For the entire dataset, compute:

Sparsity = (Number of Zero Entries) / (Total Entries in Table) * 100. - Visualize Distribution: Generate histograms for (a) library sizes per sample and (b) read counts per non-zero feature.

- Set Depth Threshold: Apply a minimum read threshold (e.g., 10,000 reads) for sample inclusion. Justify based on rarefaction curves (see Protocol 3.2).

Research Reagent Solutions:

| Item | Function in Diagnosis |

|---|---|

| QIIME 2 (q2-core) | Pipeline for importing and summarizing feature tables. |

| R phyloseq package | sample_sums() and taxa_sums() functions for rapid calculation. |

| ggplot2 R package | Creation of publication-quality histograms and density plots. |

Protocol 3.2: Generating Rarefaction Curves

Objective: To determine if sequencing depth was sufficient to capture community diversity. Procedure:

- Subsampling: Using a tool like

vegan::rarecurvein R, repeatedly subsample without replacement at increasing sequencing depths (e.g., 100, 1000, 5000... reads). - Calculate Richness: At each depth, compute the number of observed features.

- Plot & Interpret: Plot subsampled richness against sequencing depth. A plateau indicates sufficient depth; a continued rise suggests undersampling.

Preprocessing Workflow for CLR Transformation

CLR transformation (clr(x) = log(x / g(x)), where g(x) is the geometric mean) is a cornerstone of the presented thesis. However, it cannot be applied directly to raw reads containing zeros. The following workflow mitigates sparsity and depth issues to enable valid CLR application.

Title: Preprocessing Workflow to Enable CLR Transformation

Protocol 4.1: Prevalence-Based Filtering

Objective: Remove spurious features that exacerbate sparsity. Procedure: Remove any feature (ASV/OTU) not present in at least 10% of all samples (or a sample subset, e.g., per treatment group). This reduces the number of uninformative zeros.

Protocol 4.2: Addressing Depth Variation (Two Pathways)

Objective: To negate the effect of variable sequencing depth on covariance structure. Option A: Rarefaction

- Use

q2-corepluginrarefyor R packagevegan::rrarefy. - Choose a rarefaction depth that balances data retention (minimal sample loss) and diversity capture (from rarefaction curves).

- Drawback: Discards valid data.

Option B: Cumulative Sum Scaling (CSS) Normalization

- Use

metagenomeSeq::cumNormfunction in R. - This method scales counts by a percentile (e.g., median) of counts distributed across features, preserving all data while reducing technical variation.

Research Reagent Solutions:

| Item | Function in Preprocessing |

|---|---|

| QIIME 2 q2-core plugin | For rarefaction (rarefy) and prevalence filtering (feature-table filter-features). |

| R metagenomeSeq package | For CSS normalization (cumNorm, MRcounts). |

| DESeq2 R package | For median-of-ratios normalization (an alternative for RNA-seq, adaptable for microbiome). |

Protocol 4.3: Zero Imputation for Compositional Methods

Objective: To replace zeros with sensible non-zero values, allowing log-ratio analysis.

Procedure using R package zCompositions:

- Install and load the

zCompositionspackage. - Apply the Bayesian-multiplicative replacement method:

cmultRepl(t_count_table, method="CZM", label=0). - This function replaces zeros with estimates based on the correlation structure of the data, preserving the compositional nature.

Validation Protocol: Detecting False Correlations

Protocol 5.1: Simulation to Benchmark Correlation Methods

Objective: To compare the false positive rate of correlation methods on compositional data. Procedure:

- Simulate Data: Use the

SPIEC-EASI::makeGraphandSPIEC-EASI::sparsifyfunctions in R to generate a known, sparse ground-truth microbial network with no correlations. - Generate Counts: Use

SPIEC-EASI::makeMockDatato produce realistic, compositional count data from the network. - Calculate Correlations: Compute pairwise correlations on (a) raw counts, (b) normalized counts, and (c) CLR-transformed data.

- Quantify Error: Calculate the false discovery rate (FDR) for each method by comparing inferred correlations to the known (null) network.

Title: Validation of Correlation Methods via Simulation

Key Reagent: SPIEC-EASI R package (Tools for generating synthetic microbiome data and inferring networks, essential for method benchmarking).

Key Assumptions and Prerequisites for Applying CLR to Microbiome Datasets

The centered log-ratio (CLR) transformation is a cornerstone of compositional data analysis (CoDA) for microbiome sequencing data, such as 16S rRNA gene amplicon or shotgun metagenomic surveys. Its application is not universal and rests on specific mathematical and biological assumptions. Within the broader thesis on CLR for microbiome research, this protocol outlines the critical pre-application checks and methodologies.

Core Prerequisite: Recognizing Compositionality Microbiome sequence count data is fundamentally compositional. The total number of sequences per sample (library size) is arbitrary and constrained, carrying no biological information. Thus, inferences can only be made about relative abundances. CLR is designed to operate within this simplex sample space.

Key Assumptions: Validation Checklist

Applying CLR requires verifying the following assumptions. Failure to do so can lead to spurious correlations and erroneous statistical conclusions.

Table 1: Key Assumptions for CLR Application

| Assumption Category | Specific Requirement | Diagnostic Check | Acceptable Outcome | ||

|---|---|---|---|---|---|

| Data Structure | Absence of True Zeros | Examine count table for zeros. | Zeros are only due to undersampling (i.e., "count zeros"), not structural absence. | ||

| Data Structure | High-Dimensionality | Assess feature (e.g., ASV/OTU) count. | Features (p) >> Samples (n). CLR is most stable when p is large. | ||

| Distributional | Log-Normality Underlying | Use goodness-of-fit tests on non-zero reads. | After imputation, the underlying (unobserved) absolute abundances are log-normal. | ||

| Experimental | Library Size Independence | Correlate library size with first PC of raw counts. | Correlation is negligible (e.g., | r | < 0.1). |

| Biological | Relevant Signal in Covariance | Perform prior variance analysis (e.g., via ANCOM-BC). | A substantial proportion of features show differential abundance across groups. |

Pre-Processing Protocol: From Raw Counts to CLR Input

This detailed protocol must be executed prior to the CLR transformation itself.

Protocol 3.1: Zero Handling and Pseudo-Count Addition

Objective: To address count zeros, which are undefined in log-space, without introducing severe bias.

Materials: Raw Amplicon Sequence Variant (ASV) count table (samples x features).

Procedure:

1. Filtering: Remove features with a prevalence (percentage of non-zero samples) below 5-10%. This eliminates rare, spurious taxa that amplify noise.

2. Pseudo-Count Addition: Add a uniform pseudo-count of 1 to all counts in the matrix. Critical Note: This is a minimal, non-informative imputation. For more sophisticated handling, implement Bayesian multiplicative replacement (e.g., using the zCompositions R package) which models zeros as missing values below a detection limit.

3. Validation: Post-addition, confirm no zeros remain in the dataset slated for CLR transformation.

Protocol 3.2: Library Size and Compositional Effect Diagnostic Objective: To verify that the variation in library size does not dominate the biological signal. Procedure: 1. Calculate total reads (library size) per sample. 2. Perform a Principal Component Analysis (PCA) on the raw, filtered count matrix (without CLR). 3. Calculate the Pearson correlation between sample library sizes and their scores along the first principal component (PC1). 4. Interpretation: A strong correlation (|r| > 0.3) suggests library size is a major source of variance, violating the core compositional principle. In such cases, consider more aggressive filtering or investigate technical batch effects before proceeding.

Core CLR Transformation Protocol

Protocol 4.1: Mathematical Implementation

Objective: To correctly compute the CLR-transformed features.

Input: Pre-processed count matrix X with n samples and p features, containing only positive values.

Formula:

For a sample vector x = [x₁, x₂, ..., xₚ], the CLR transformation is:

clr(x) = [log(x₁ / g(x)), log(x₂ / g(x)), ..., log(xₚ / g(x))]

where g(x) = (∏ᵢ xᵢ)^(1/p) is the geometric mean of the sample vector.

Software Steps:

1. In R, use the clr() function from the compositions or mixOmics package.

2. In Python, use the clr() function from the skbio.stats.composition module.

Output: An n x p matrix of real-valued, centered log-ratios. Each feature's values are centered around zero by the sample-specific geometric mean.

Visualization of Workflows and Relationships

Title: CLR Application Pre-Processing and Validation Workflow

Title: Mathematical Relationship of CLR Transformation for Three Taxa

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagent Solutions for CLR-Based Microbiome Analysis

| Item/Category | Function & Rationale | Example Product/Software |

|---|---|---|

| High-Fidelity PCR Mix | For initial 16S rRNA gene amplification. Minimizes PCR drift bias, which can distort composition prior to sequencing. | Q5 High-Fidelity DNA Polymerase (NEB). |

| Standardized Mock Community | Essential positive control. Validates sequencing run and bioinformatic pipeline, confirming that observed zeros are technical. | ZymoBIOMICS Microbial Community Standard. |

| DNA Extraction Kit with Bead Beating | Standardized cell lysis. Critical for unbiased representation of Gram-positive and Gram-negative bacteria in the final composition. | DNeasy PowerSoil Pro Kit (Qiagen). |

| Bioinformatic Pipeline (QIIME 2 / DADA2) | Processes raw sequences into Amplicon Sequence Variant (ASV) count tables. Accurate denoising reduces artificial inflation of rare taxa. | QIIME 2 (2024.5 release), DADA2 R package. |

| CoDA Software Library | Performs the CLR transformation and related compositional methods (e.g., robust variant, proportionality). | compositions (R), scikit-bio (Python). |

| SparCC or PRO | Algorithm for inferring microbial association networks from CLR-transformed data, addressing the compositionality-induced bias in correlation. | SparCC (Python), propr (R). |

Step-by-Step CLR Implementation: A Practical Pipeline for Microbiome Data

Within the broader thesis on the Centered Log-Ratio (CLR) transformation for microbiome data analysis, pre-processing is the critical, non-negotiable foundation. The CLR transformation, defined as CLR(x) = ln[x_i / g(x)], where g(x) is the geometric mean of the feature vector, is highly sensitive to zeros and compositionality. Therefore, rigorous pre-processing protocols for filtering, zero handling, and library size normalization are essential to ensure the resulting compositional representations are biologically valid and statistically sound for downstream analyses in drug development and biomarker discovery.

Key Pre-processing Steps: Protocols & Application Notes

Library Size Normalization (Total Sum Scaling)

Protocol: Total Sum Scaling (TSS) to counts per million (CPM).

- Input: Raw count matrix (features × samples).

- Calculation: For each sample j, divide the count of every feature i by the sample's total library size and multiply by a scaling factor (e.g., 1,000,000).

CPM_ij = (X_ij / Σ_i X_ij) * 1,000,000 - Output: Relative abundance matrix. This mitigates differences in sequencing depth but does not address compositionality.

Table 1: Effect of Library Size Normalization on a Simulated Dataset

| Sample ID | Raw Count (Feature A) | Total Library Size | CPM (Feature A) |

|---|---|---|---|

| S1 | 150 | 50,000 | 3,000 |

| S2 | 150 | 150,000 | 1,000 |

| S3 | 300 | 100,000 | 3,000 |

Filtering of Low-Abundance Features

Protocol: Prevalence and Minimum Abundance Filtering.

- Define Thresholds: Set a minimum abundance (e.g., >10 counts) and a minimum prevalence (e.g., in >10% of samples).

- Apply Filter: For each feature, calculate the number of samples where it exceeds the minimum abundance. Retain only features where this count meets or exceeds the prevalence threshold.

- Rationale: Removes spurious noise, reduces dimensionality, and minimizes false positives. Critical Note: Filtering must be performed before zero-handling steps to avoid imputing or modifying zeros from features deemed irrelevant.

Zero Handling: Pseudocounts vs. CZM

Zeros are non-informative and prevent geometric mean calculation for CLR. Two primary strategies are employed.

Protocol A: Pseudocount Addition

- Method: Add a uniform, small positive value to all entries in the count matrix.

X_adj = X + α, where α is typically 1 or the minimum positive count observed. - Impact: Simplifies CLR computation but is arbitrary. It disproportionately affects low-abundance features and can distort the covariance structure.

Protocol B: Count Zero Multiplicative (CZM) Imputation

- Method: Replace zeros with a probability-based, feature-specific value that respects the compositional nature of the data.

- Algorithm (Simplified): a. For each sample, estimate the probability that a zero is a "count zero" (due to undersampling). b. Impute zeros multiplicatively using the remaining positive counts and a Bayesian approach. c. This preserves the relative proportions of the non-zero parts.

- Rationale: More statistically rigorous than a pseudocount for compositional data, as it attempts to model the zero as a missing value due to sampling depth.

Table 2: Comparison of Zero-Handling Methods for CLR Transformation

| Method | Core Principle | Advantage | Disadvantage | Suitability for CLR |

|---|---|---|---|---|

| Pseudocount | Add constant (e.g., 1) to all counts. | Simple, fast, guaranteed non-zero. | Arbitrary, biases low counts, distors variance. | Low - introduces compositional bias. |

| CZM Imputation | Probabilistic, multiplicative replacement. | Respects compositionality, models sampling zeros. | Computationally intensive, requires careful parameterization. | High - designed for compositional data. |

Integrated Experimental Workflow for CLR Pre-processing

Title: Integrated Pre-processing Workflow for CLR Transformation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Microbiome Data Pre-processing

| Tool/Software | Function | Key Application in Protocol |

|---|---|---|

| R with phyloseq | Bioconductor object for organizing microbiome data. | Container for OTU table, taxonomy, and sample metadata; enables integrated filtering. |

| R with microbiome package | Specialized toolbox for microbiome analysis. | Provides functions for prevalence filtering, compositionality tools, and CZM implementation. |

| R with zCompositions | R package for handling zeros in compositional data. | Primary tool for CZM imputation (cmultRepl function). |

| QIIME 2 (qiime2.org) | End-to-end microbiome analysis platform. | Offers plugins for filtering (feature-table filter-features) and downstream analysis. |

| Python with skbio & SciKit-bio | Python libraries for bioinformatics and machine learning. | Enables scripting of custom pre-processing pipelines and integration with ML workflows. |

| Jupyter / RStudio | Interactive development environments. | Essential for exploratory data analysis, protocol scripting, and visualization. |

Within microbiome data analysis, the Compositional Data Analysis (CoDA) paradigm is essential due to the non-informative total sum constraint of sequencing data. The Centered Log-Ratio (CLR) transformation is a cornerstone technique for moving data from the simplex to real Euclidean space, enabling the application of standard statistical methods. This application note, situated within a broader thesis on robust CLR application for drug development biomarker discovery, details the precise calculation and critical importance of the geometric mean as the CLR's denominator.

The CLR transformation for a (D)-component compositional vector (\mathbf{x} = [x1, x2, ..., xD]) is defined as: [ CLR(\mathbf{x}) = \left[ \ln\frac{x1}{g(\mathbf{x})}, \ln\frac{x_2}{g(\mathbf{x})}, ..., \ln\frac{D}{g(\mathbf{x})} \right] ] where (g(\mathbf{x})) is the geometric mean of all (D) components. This transformation centers the log-transformed components around zero, ensuring isometric (distance-preserving) properties, but its validity is wholly dependent on a correctly and robustly calculated (g(\mathbf{x})).

Theoretical Foundation & Quantitative Data

The geometric mean, as opposed to the arithmetic mean, is the appropriate measure of central tendency for multiplicative processes and ratio-based data, such as microbial abundances.

Table 1: Comparison of Mean Types for Compositional Data

| Mean Type | Formula | Sensitivity to Zeros | Appropriate Data Space |

|---|---|---|---|

| Arithmetic | (\frac{1}{D}\sum{i=1}^{D} xi) | Low (zero values reduce sum) | Real Euclidean, additive |

| Geometric | (\left(\prod{i=1}^{D} xi\right)^{1/D}) | High (any zero makes product zero) | Simplex, multiplicative |

| Modified Geometric* | (\left(\prod{i=1}^{D} (xi + c)\right)^{1/D}) | Mitigated by pseudo-count (c) | Aitchison geometry (with care) |

*Required for sparse microbiome data containing true zeros.

Table 2: Impact of Geometric Mean Calculation on CLR Output (Simulated Data)

| Taxon | Raw Abundance (Sample A) | CLR (Correct GM) | CLR (GM w/ Pseudo-count=1) | CLR Error |

|---|---|---|---|---|

| Taxon_1 | 10 | 1.05 | 0.85 | -0.20 |

| Taxon_2 | 5 | 0.21 | 0.01 | -0.20 |

| Taxon_3 | 0 | -3.91* | -1.56 | +2.35 |

| Taxon_4 | 120 | 2.65 | 2.45 | -0.20 |

| Geometric Mean | 0.0 | 2.87 | 4.17 | N/A |

*Derived from a pseudo-count applied prior to GM calculation for the whole sample. This table illustrates the systemic shift and distortion introduced when a pseudo-count alters the denominator.

Experimental Protocols

Protocol 3.1: Standard Geometric Mean Calculation for Non-Zero Compositions

Purpose: To compute the CLR denominator for a compositional sample with no zero values.

- Input: A vector of (D) positive, non-zero abundance values ((x1, x2, ..., x_D)).

- Log-Transform: Calculate the natural logarithm of each component: (li = \ln(xi)).

- Arithmetic Mean of Logs: Compute ( \bar{l} = \frac{1}{D} \sum{i=1}^{D} li ).

- Exponentiate: The geometric mean (g(\mathbf{x}) = e^{\bar{l}}).

- CLR Calculation: For each component (i), (CLRi = \ln(xi) - \bar{l}). Note: This is mathematically equivalent to (g(\mathbf{x}) = (\prod x_i)^{1/D}) but avoids numerical overflow.

Protocol 3.2: Modified Geometric Mean with Pseudo-Count for Sparse Data

Purpose: To compute a stable CLR denominator for sparse microbiome data containing zeros.

- Input: A vector of (D) non-negative abundance values, some potentially zero.

- Pseudo-Count Selection: Choose an appropriate positive value (c). Common strategies include:

- A global minimum non-zero value across the dataset (e.g., 1 for integer counts).

- A proportion of the minimum non-zero value per sample (e.g., 0.65).

- The cmultRepl method from the

zCompositionsR package.

- Replacement: Create a modified vector (\mathbf{x'} = (x1 + c, x2 + c, ..., x_D + c)).

- Geometric Mean Calculation: Apply Protocol 3.1 to the modified vector (\mathbf{x'}) to obtain (g(\mathbf{x'})).

- CLR Calculation: For each component (i), (CLRi = \ln(xi + c) - \ln(g(\mathbf{x'}))). Critical Consideration: The choice of (c) introduces bias and affects downstream analysis. This must be documented and justified within the research thesis.

Protocol 3.3: Evaluation of Geometric Mean Stability Across Sample Groups

Purpose: To assess the robustness of the CLR denominator in a case-control study.

- Grouping: Partition samples into pre-defined groups (e.g., Healthy vs. Disease).

- Calculate: Compute the geometric mean for each individual sample using Protocol 3.1 or 3.2.

- Statistical Test: Perform a non-parametric Mann-Whitney U test between the log-transformed geometric means of the two groups.

- Interpretation: A significant p-value (e.g., < 0.05) indicates a systematic difference in the central tendency of the microbial biomass between groups, violating the assumption of a common baseline and complicating inter-group CLR comparisons. This may necessitate a more sophisticated normalization (e.g., between-sample CLR, also known as CLR-b).

Mandatory Visualizations

Diagram Title: CLR Transformation Workflow with Geometric Mean.

Diagram Title: Role of GM as the Centering Point for CLR.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for CLR & Geometric Mean Analysis

| Item/Category | Example/Product | Function in Analysis |

|---|---|---|

| CoDA Software Package | compositions (R), scikit-bio (Python) |

Provides built-in, optimized functions for clr() and geometric mean calculation, ensuring mathematical correctness. |

| Zero-Handling Library | zCompositions (R package) |

Implements advanced pseudo-count and multiplicative replacement methods for dealing with zeros prior to GM calculation. |

| High-Precision Math Library | GNU MPFR (via Rmpfr or mpmath) |

Prevents numerical underflow/overflow when calculating the GM of very large or small numbers across many taxa. |

| Data Visualization Suite | ggplot2 (R), matplotlib/seaborn (Python) |

Creates essential diagnostic plots (e.g., boxplots of sample GMs, scatterplots of CLR values) to assess transformation performance. |

| Statistical Testing Framework | statsmodels (Python), stats (R) |

Enables Protocol 3.3 to test for significant differences in geometric means across experimental groups, a key validity check. |

In the broader thesis on Centered Log-Ratio (CLR) transformation for microbiome data analysis, this document serves as a practical guide for implementing this essential preprocessing step. CLR transformation addresses the compositional nature of microbiome sequencing data, allowing for the application of standard statistical methods by moving from the simplex to real Euclidean space. Its correct application is critical for meaningful downstream analysis in drug development and biomarker discovery.

Core Mathematical Principles

The CLR transformation is defined for a composition x = (x₁, x₂, ..., xₖ) as:

clr(x) = [ln(x₁ / g(x)), ln(x₂ / g(x)), ..., ln(xₖ / g(x))]

where g(x) is the geometric mean of all components. This creates a transformation with a zero-sum constraint.

Research Reagent Solutions & Essential Materials

| Item/Category | Function in Microbiome CLR Analysis |

|---|---|

| 16S rRNA Gene Sequencing Kit (e.g., Illumina 16S Metagenomic) | Provides the raw count data from microbial communities for transformation. |

| QIIME2 or mothur Pipeline | Processes raw sequences into Amplicon Sequence Variant (ASV) or Operational Taxonomic Unit (OTU) tables. |

R compositions Package |

Implements coherent compositional data analysis, including robust CLR. |

R microbiome Package |

Provides microbiome-specific utilities and wrappers for transformation. |

Python scikit-bio Library |

Offers skbio.stats.composition.clr for compositional data analysis. |

Zero-Replacement Tool (e.g., zCompositions R package) |

Handles zeros, which are undefined in log-ratios, prior to CLR transformation. |

| High-Performance Computing (HPC) Cluster | Enables transformation of large-scale microbiome datasets (e.g., >10,000 samples). |

Detailed Experimental Protocols

Protocol 4.1: Data Preprocessing Prior to CLR

- Input: An ASV/OTU table (Samples x Taxa) of raw read counts.

- Filtering: Remove taxa present in fewer than 10% of samples or with fewer than 10 total reads (thresholds adjustable per study).

- Zero Handling:

- Apply a multiplicative replacement method using the

cmultReplfunction from thezCompositionsR package (for count data). - OR apply a Bayesian-multiplicative replacement using the

bayesmultReplfunction for more robust handling. - Pseudocode:

table_no_zeros <- zCompositions::cmultRepl(count_table, method="CZM", output="p-counts")

- Apply a multiplicative replacement method using the

- Normalization: Convert the zero-handled table to relative abundances (sum to 1 per sample) or use the output from

cmultRepldirectly. - Output: A compositionally coherent table ready for CLR transformation.

Protocol 4.2: Performing CLR Transformation in R

Method A: Using the compositions Package (Theoretically Cohesive)

Method B: Using the microbiome Package (Microbiome-Optimized)

Protocol 4.3: Performing CLR Transformation in Python

Protocol 4.4: Validation of CLR Transformation

- Zero-Sum Check: Calculate the sum of each sample's CLR-transformed features. The result should be approximately zero (within floating-point precision error).

- R:

all(colSums(clr_matrix) < 1e-10) - Python:

np.allclose(df_clr.sum(axis=1), 0, atol=1e-10)

- R:

- Dimensionality Assessment: Perform Principal Component Analysis (PCA) on the CLR matrix. The first principal component should explain a substantial proportion of variance, as CLR transforms to a

D-1dimensional space. - Downstream Analysis: Use the validated CLR matrix in multivariate analyses (e.g., PERMANOVA, DESeq2 for differential abundance).

Table 1: Impact of CLR Transformation on a Simulated Microbiome Dataset (n=100 samples, D=50 taxa)

| Metric | Raw Count Data | Relative Abundance | CLR-Transformed Data |

|---|---|---|---|

| Data Space | Counts (ℕ⁵⁰) | Simplex (S⁵⁰) | Real Euclidean (ℝ⁴⁹) |

| Mean Correlation between Features | -0.12 | -0.38 | 0.02 |

| Avg. Euclidean Distance between Samples | 1.2e5 ± 4500 | 0.81 ± 0.05 | 12.7 ± 1.2 |

| Variance Explained by PC1 (%) | 45.2% | 62.5% | 34.1% |

| Result of Zero-Sum Check | N/A | N/A | TRUE (sum < 1e-12) |

Visual Workflows and Pathways

Diagram 1: Standard CLR Transformation Protocol Workflow

Diagram 2: Logical Rationale for CLR in Microbiome Analysis

This Application Note, framed within a broader thesis on Centered Log-Ratio (CLR) transformation for microbiome data analysis, details the interpretation of CLR-transformed abundance values. In microbiome and metabolomics research, raw compositional data (e.g., 16S rRNA sequencing counts, metabolite intensities) are subject to a constant-sum constraint, making standard statistical analyses invalid. The CLR transformation, a cornerstone of Compositional Data Analysis (CoDA), addresses this by transforming data into a log-ratio space, enabling the application of Euclidean geometry and standard multivariate methods. This document provides researchers, scientists, and drug development professionals with protocols and interpretive frameworks for accurately analyzing and deriving biological meaning from CLR-transformed data.

Core Concepts & Mathematical Definition

The CLR Transformation converts a composition vector x = (x₁, x₂, ..., x_D) of D components (e.g., microbial taxa) with positive parts to a vector of log-ratios relative to the geometric mean of all components.

[ clr(\mathbf{x}) = \left[ \ln\frac{x1}{g(\mathbf{x})}, \ln\frac{x2}{g(\mathbf{x})}, ..., \ln\frac{xD}{g(\mathbf{x})} \right] ] where the geometric mean ( g(\mathbf{x}) = \sqrt[D]{x1 \cdot x2 \cdots xD} ).

This transformation results in values that are centered (sum to zero), are scale-invariant, and exist in a D-1 dimensional real space (the Aitchison simplex). The interpretation of a single CLR value for a feature (e.g., a bacterial taxon) is its log-abundance relative to the average abundance across all features in the sample.

Data Presentation: Interpreting CLR Values

Table 1: Interpretation Guide for CLR-Transformed Abundance Values

| CLR Value | Interpretation | Relative Abundance Context |

|---|---|---|

| 0 | The feature's abundance is exactly equal to the geometric mean abundance of all features in the sample. | Baseline (Average) |

| Positive (e.g., +2.0) | The feature is more abundant than the geometric mean. A value of +2.0 means the feature is exp(2.0) ≈ 7.4 times more abundant than the geometric mean. | Relatively Enriched |

| Negative (e.g., -3.0) | The feature is less abundant than the geometric mean. A value of -3.0 means the feature is exp(-3.0) ≈ 0.05 times (or 1/20th) the geometric mean. | Relatively Depleted |

| Magnitude Difference (e.g., Δ = 4.0) | The difference in CLR values between two conditions for the same feature. exp(4.0) ≈ 54.6 indicates a ~55-fold relative change in abundance between conditions. | Fold-Change in Relative Space |

Table 2: Example CLR Values from a Simulated Gut Microbiome Dataset

| Taxon | Sample A (Healthy) CLR | Sample B (Disease) CLR | Δ (B - A) | exp(Δ) | Interpretive Summary |

|---|---|---|---|---|---|

| Bacteroides | 3.21 | 1.85 | -1.36 | 0.26 | ~4-fold relative depletion in Disease. |

| Faecalibacterium | 2.15 | 0.98 | -1.17 | 0.31 | ~3-fold relative depletion in Disease. |

| Escherichia | -4.50 | -2.10 | +2.40 | 11.02 | ~11-fold relative enrichment in Disease. |

| Akkermansia | -1.22 | -3.50 | -2.28 | 0.10 | ~10-fold relative depletion in Disease. |

Note: CLR values are only directly comparable within the same sample or across samples transformed together, as the geometric mean is sample-specific.

Experimental Protocols

Protocol 4.1: Standard CLR Transformation for Microbiome Abundance Tables

Objective: To transform a count or proportion table (OTU/ASV table) into CLR-transformed abundances suitable for downstream statistical analysis.

Materials:

- Raw count table (features x samples).

- Computational environment (R/Python).

Procedure:

- Preprocessing & Filtering:

- Remove features present in fewer than 10% of samples or with a total count below a chosen threshold (e.g., 10 reads).

- Do NOT rarefy. Use methods robust to sequencing depth.

- Handling Zeros (Critical Step):

- Apply a multiplicative replacement strategy (e.g., the

cmultReplfunction in R'szCompositionspackage ormultiplicative_replacementin Python'sscikit-bio). - This method replaces zeros with small, non-zero values based on the multiplicative structure of the data, preserving the covariance structure for CoDA.

- Apply a multiplicative replacement strategy (e.g., the

- Transformation:

- Calculate the geometric mean for each sample across all features.

- For each feature in each sample, compute the natural logarithm of the proportion relative to the sample's geometric mean.

- R code:

clr_table <- compositions::clr(count_table + 1)(pseudocount; less ideal) or usemicrobiome::transform(abund_table, "clr")after zero-handling. - Python code:

from skbio.stats.composition import clr; clr_table = clr(abund_table)

- Output: A matrix of CLR-transformed abundances (dimensions: samples x features).

Protocol 4.2: Differential Abundance Analysis Using CLR-Transformed Data

Objective: To identify features whose relative abundance differs significantly between two or more experimental conditions using CLR-transformed data.

Materials:

- CLR-transformed abundance table (from Protocol 4.1).

- Sample metadata with grouping variables.

Procedure:

- Statistical Modeling:

- For simple two-group comparisons, use a linear model (e.g., t-test on CLR values).

- For complex designs, use linear models (e.g.,

limmain R) or linear mixed-effects models (e.g.,lme4in R) with CLR values as the response variable. - Important: The CLR transformation legitimizes the use of these Euclidean-based models.

- Effect Size Calculation:

- The model coefficient for a group contrast (e.g., Disease vs. Healthy) is the estimated difference in mean CLR value (Δ).

- Interpretation: exp(Δ) is the fold-difference in relative abundance between the conditions. An exp(Δ) of 2 means the feature is, on average, twice as abundant relative to the geometric mean in the first group compared to the second.

- Multiple Testing Correction:

- Apply Benjamini-Hochberg False Discovery Rate (FDR) correction to p-values across all tested features.

- Output: A list of differentially abundant features with Δ (CLR difference), exp(Δ) (fold-change), p-value, and q-value (FDR-adjusted p-value).

Mandatory Visualizations

Title: Workflow for CLR Transformation of Microbiome Data

Title: Step-by-Step CLR Calculation Example

The Scientist's Toolkit

Table 3: Research Reagent & Computational Solutions for CLR-Based Analysis

| Item / Resource | Function in CLR Analysis | Notes & Recommendations |

|---|---|---|

zCompositions R Package |

Implements Bayesian-multiplicative zero imputation (cmultRepl). |

Essential for proper zero handling before CLR. Preferable to simple pseudocounts. |

compositions R Package |

Core package for CoDA. Contains the clr() function and related tools. |

Provides a robust suite for all compositional transformations. |

scikit-bio Python Library |

Provides the clr() function and multiplicative_replacement in Python. |

Key Python resource for implementing CoDA workflows. |

microbiome R Package |

Wrapper function transform() for easy CLR transformation of phyloseq objects. |

Streamlines workflow within the popular phyloseq ecosystem. |

limma R Package |

Performs differential analysis on CLR-transformed data using linear models. | Ideal for complex experimental designs with multiple factors. |

MetagenomeSeq R Package |

Uses a zero-inflated Gaussian model on CLR-like log2 transformed data (fitFeatureModel). |

An alternative model-based approach that handles zeros internally. |

| SILVA / Greengenes Databases | Provide taxonomic classification for 16S rRNA sequences. | Required for annotating features before biological interpretation of CLR results. |

ggplot2 / ComplexHeatmap |

Visualization of CLR results (boxplots, heatmaps of CLR-transformed abundances). | CLR values are suitable for creating intuitive, quantitative visualizations. |

Application Notes

Differential Abundance Analysis (ANCOM-BC)

ANCOM-BC (Analysis of Compositions of Microbiomes with Bias Correction) is a robust statistical method for identifying differentially abundant taxa across groups. It accounts for the compositionality of microbiome data and corrects for bias induced by sample-specific sampling fractions.

Key Principles:

- Log-ratio Transformation: Operates on a chosen log-ratio (e.g., CLR) to address compositionality.

- Bias Correction: Estimates and corrects for sample-specific sampling efficiency biases.

- Linear Modeling: Fits a linear regression model to the bias-corrected abundances.

- False Discovery Rate (FDR): Controls for multiple hypothesis testing.

Recent Comparative Performance Data (Simulation Studies, 2023-2024):

| Method | Control of False Discovery Rate (FDR) | Power (Sensitivity) | Handles Zero Inflation | Adjusts for Covariates | Reference |

|---|---|---|---|---|---|

| ANCOM-BC | Strong (≤0.05) | High (>0.85) | Yes | Yes | [Lin & Peddada, 2020] |

| ALDEx2 | Moderate | Moderate | Yes | Yes | [Fernandes et al., 2014] |

| DESeq2 (modified) | Variable | High | Yes | Yes | [McMurdie & Holmes, 2014] |

| LEfSe | Weak | High | No | Limited | [Segata et al., 2011] |

| MaAsLin2 | Strong | Moderate-High | Yes | Yes | [Mallick et al., 2021] |

Microbial Correlation Networks

Microbial correlation networks infer co-occurrence or co-exclusion relationships between microbial taxa, providing insights into community structure and potential ecological interactions.

Key Considerations:

- Association Measure: Choice of correlation metric (e.g., SparCC, Proportionality, robust correlations on CLR-transformed data) to mitigate compositionality.

- Pseudo-count Addition: A critical step before CLR transformation to handle zeros. Recent benchmarks (2024) suggest values like 1/2 the minimum non-zero count or Bayesian-multiplicative replacements outperform simple addition of 1.

- Sparsity & Inference: Methods like SPIEC-EASI or gCoda apply graphical model inference to distinguish direct from indirect associations.

Performance Metrics of Network Inference Methods (Benchmark on Mock Communities):

| Method | Core Algorithm | Precision (1-FDR) | Recall | Runtime | Recommended for |

|---|---|---|---|---|---|

| SPIEC-EASI (MB) | Neighborhood Selection | 0.89 | 0.71 | Medium | Large-scale networks |

| SPIEC-EASI (GLasso) | Graphical Lasso | 0.85 | 0.75 | Slow | Dense networks |

| gCoda | Compositional Graphical Lasso | 0.91 | 0.68 | Fast | Moderate-sized datasets |

| SparCC | Iterative Correlation | 0.80 | 0.65 | Fast | Exploratory analysis |

| Propr (ρp) | Proportionality | 0.95 | 0.60 | Very Fast | Close associations |

Machine Learning for Microbiome Data

Supervised ML models are used for classification (e.g., disease state) or regression (e.g., predicting metabolite levels) using microbial features.

State-of-the-Art Workflow (Post-CLR Transformation):

- Feature Engineering: CLR-transformed abundances are the primary features. Phylogenetic or functional hierarchies can be used to create aggregated features.

- Model Selection: Regularized models (LASSO, Elastic Net) are favored for their inherent feature selection. Random Forests and gradient-boosting machines (XGBoost) are also common.

- Validation: Strict nested cross-validation is mandatory to avoid overfitting. External validation on a hold-out cohort is the gold standard.

Comparative Model Performance on IBD Classification (Meta-analysis, 2023):

| Model | Mean AUC (95% CI) | Key Top Features Identified | Feature Selection | Interpretability |

|---|---|---|---|---|

| LASSO Logistic Regression | 0.88 (0.85-0.91) | 15-20 Genera (e.g., Faecalibacterium, Escherichia) | Intrinsic | High (coefficients) |

| Random Forest | 0.90 (0.87-0.93) | 50+ OTUs, incl. rare taxa | Importance Scores | Medium |

| XGBoost | 0.91 (0.89-0.94) | Complex interactions | Gain-based | Medium-Low |

| SVM (Linear Kernel) | 0.86 (0.83-0.89) | Similar to LASSO | External filter | Low |

| MLP (Neural Net) | 0.89 (0.86-0.92) | Distributed representation | None | Very Low |

Experimental Protocols

Protocol: Differential Abundance Analysis with ANCOM-BC

Objective: To identify taxa whose absolute abundances are significantly different between two or more study groups (e.g., Control vs. Treatment).

Materials: CLR-transformed OTU/ASV table (samples x taxa), sample metadata table.

Software: R (≥4.0.0) with ANCOMBC package.

Procedure:

- Data Preparation:

Run ANCOM-BC:

Interpret Results:

Protocol: Constructing a Co-occurrence Network with SPIEC-EASI

Objective: To infer a sparse, undirected network of direct microbial associations from CLR-transformed abundance data.

Materials: CLR-transformed OTU/ASV table. A high-performance computing environment is recommended for large datasets.

Software: R with SpiecEasi and igraph packages.

Procedure:

- Data Input & Preprocessing:

Network Inference (using the Meinshausen-Bühlmann method):

Network Extraction & Analysis:

Protocol: Building a Predictive Classifier with Regularized Regression

Objective: To develop a model that predicts a binary outcome (e.g., disease status) from CLR-transformed microbial features.

Materials: CLR-transformed feature table, corresponding response vector. A pre-defined train/test split or cross-validation scheme.

Software: R with glmnet and caret packages.

Procedure:

- Setup for Nested Cross-Validation:

Train Elastic Net Model with Tuning:

Evaluate & Interpret Final Model:

Visualizations

Diagram Title: ANCOM-BC Analysis Workflow

Diagram Title: Supervised Machine Learning Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Analysis | Example/Note |

|---|---|---|

| R/Python Environment | Core computational platform for statistical and ML analyses. | R 4.3+ with tidyverse, ANCOMBC, SpiecEasi, glmnet. Python 3.10+ with scikit-learn, pandas, networkx. |

| CLR-Transformed Data Table | The primary analytical input, mitigating compositionality. | A samples (rows) x taxa/features (columns) matrix. Should be checked for and handled for zeros prior to CLR. |

| Stable Pseudo-count | Added to raw counts to enable log-transformation. | Recommended: ½ minimum non-zero count per sample or Bayesian-multiplicative replacement (e.g., zCompositions package). |

| High-Quality Metadata | Covariates and experimental factors for modeling and correction. | Must be meticulously curated. Includes clinical variables, batch, sequencing depth. |

| Reference Databases | For taxonomic and functional annotation of features. | SILVA, GTDB for 16S rRNA; UniRef, KEGG for shotgun metagenomics. |

| Computational Resources | For intensive tasks like network inference or large-scale ML. | Multi-core CPU, ≥16GB RAM for moderate datasets. HPC cluster for large-scale SPIEC-EASI or deep learning. |

| Visualization Tools | For generating publication-quality figures. | R: ggplot2, igraph, pheatmap. Python: matplotlib, seaborn, Cytoscape (for networks). |

Solving Common CLR Challenges: Troubleshooting, Pitfalls, and Best Practices

The centered log-ratio (CLR) transformation is a cornerstone of modern compositional data analysis for microbiome sequencing (e.g., 16S rRNA, shotgun metagenomics). A fundamental incompatibility arises because CLR requires non-zero values, while microbiome abundance tables contain an overwhelming number of zero-count features from undersampling, biological absence, or technical non-detects. This article, situated within a broader thesis on robust CLR application, critically compares prevalent zero-handling methods, providing explicit protocols and analytical guidance.

Critical Comparison of Zero-Handling Methods

Table 1: Comparison of Primary Zero-Handling Methods for CLR Transformation

| Method | Core Principle | Key Parameters & Variants | Advantages | Major Disadvantages | Suitability for CLR |

|---|---|---|---|---|---|

| Multiplicative Replacement | All zeros replaced with a proportion δ of a chosen baseline (e.g., minimum non-zero count). |

δ typically between 0.5-0.66. Baseline: global min, feature-wise min, or a constant. |

Simple, preserves data structure. Fast. | Introduces artificial "pseudo-counts." Distorts covariance structure. Sensitivity to δ choice. |

Directly enables CLR. May induce bias in downstream stats. |

| Bayesian Multiplicative Replacement (BM-R) | Models counts with Dirichlet or Multinomial distribution, replacing zeros with posterior estimates. | Dirichlet prior strength (alpha). Implemented in zCompositions R package. |

More statistically principled than simple multiplicative. Reduces distortion. | Computationally heavier. Prior choice influences results. | Good. Provides non-zero comp. for CLR. |

| Pseudocount Addition | Adds a fixed constant C to all counts in the matrix. |

C commonly 0.5, 1, or a minimal value (e.g., 1e-10). |

Extremely simple to implement. | Arbitrary. Over-inflates low counts. Severely distorts compositional properties. | Enables CLR but is not recommended for microbiome data. |

| Probability of Being Zero (PBZ) / Model-Based | Uses a statistical model (e.g., hurdle model) to estimate if a zero is biological or technical. | Implemented in tools like mbImpute or SparseDOSSA. |

Attempts to distinguish technical zeros. Can impute more realistic values. | Complex. Model-dependent. Risk of over-imputation. | Can provide a cleaned matrix for CLR if imputed values >0. |

| Simple Subtraction | Replace zeros with a small, fixed non-zero value (e.g., 0.001). | Value must be less than the smallest observed count. | Simple. | Highly arbitrary. Can dominate the composition of low-biomass samples. | Enables CLR but often produces poor, unstable results. |

Table 2: Quantitative Impact on Simulated Microbiome Data (Example)

| Method (Parameters) | Mean Relative Error of Covariance* | Mean Aitchison Distance from Ground Truth* | Runtime (sec, 100x500 matrix) |

|---|---|---|---|

| Ground Truth (No Zeros) | 0.00 | 0.00 | N/A |

| Multiplicative (δ=0.65) | 0.42 | 1.85 | <0.1 |

| BM-R (alpha=0.5) | 0.28 | 1.21 | 3.5 |

| Pseudocount (C=1) | 0.87 | 3.94 | <0.1 |

| PBZ Imputation | 0.31 | 1.45 | 62.0 |

*Lower values are better. Simulated data with 70% zeros.

Detailed Experimental Protocols

Protocol 3.1: Standardized Pipeline for Comparing Zero-Handling Methods

Objective: To evaluate the impact of different zero-handling methods on downstream CLR-based analyses (e.g., differential abundance, beta-diversity).

Materials: High-performance computing environment, R/Python with necessary packages.

Procedure:

- Data Input: Load a count matrix

X(samples x features) and metadata. - Method Application: Create copies of

Xand process each with a different zero-handling method.- Multiplicative Replacement (R):

- Multiplicative Replacement (R):

CLR Transformation: Apply CLR to each processed matrix.

Downstream Analysis:

- Beta-diversity: Calculate Euclidean distance on CLR matrices (equivalent to Aitchison distance). Perform PERMANOVA.

- Differential Abundance: Apply linear models (e.g., MaAsLin2,

limma) on CLR-transformed data.

- Benchmarking: Compare outputs against a validated benchmark (e.g., mock community data, simulation ground truth) using metrics from Table 2.

Protocol 3.2: Optimization of Delta (δ) for Multiplicative Replacement

Objective: Empirically determine an optimal δ value for a specific dataset.

Procedure:

- Define a search grid for

δ(e.g.,seq(0.5, 1, by=0.05)). - For each

δ:- Apply multiplicative replacement.

- Perform CLR and PCA.

- Calculate the Procrustes correlation between this PCA and a reference PCA derived from a high-depth, rarefied subset of data with minimal zeros.

- Plot Procrustes correlation vs.

δ. Theδyielding the highest correlation suggests optimal preservation of the geometric data structure. - Validate by checking the stability of key differential taxa identifications across a range of

δvalues near the optimum.

Visualizations

Title: Zero-Handling Workflow for CLR Analysis

Title: Core Method Comparison Table

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Zero-Handling & CLR Analysis

| Item / Solution | Function in Research | Example / Specification |

|---|---|---|

R Package: zCompositions |

Implements multiplicative, BM-R, and other zero replacement methods. Primary tool for protocol. | v1.5.0+. Function: cmultRepl(). |

R Package: compositions / robCompositions |

Provides the clr() function and robust compositional data analysis tools. |

Essential for the transformation step after zero handling. |

Python Library: scikit-bio |

Python implementation of CLR and other compositional metrics. | skbio.stats.composition.clr |

| Benchmark Dataset: Mock Community | Ground truth data with known compositions to validate method performance. | e.g., ATCC MSA-1003, BEI Mock Communities. |

Simulation Framework: SPARSim / SparseDOSSA |

Generates synthetic microbiome data with controlled zero structures for method testing. | Critical for controlled experiments in thesis research. |

| High-Performance Computing (HPC) Access | Necessary for running intensive model-based imputation (PBZ) or large-scale benchmarking. | Cloud (AWS, GCP) or institutional cluster. |

Managing High-Dimensionality and Low Sample Size (the 'p >> n' problem)

The analysis of microbiome data, characterized by sequencing count tables with thousands of microbial taxa (features, p) across far fewer samples (observations, n), epitomizes the 'p >> n' problem. This high-dimensionality, low-sample-size regime invalidates standard statistical inferences, leading to model overfitting, unreliable feature selection, and poor generalizability. Within the broader thesis on Centerd Log-Ratio (CLR) transformation for microbiome analysis, addressing 'p >> n' is paramount. The CLR transformation, by addressing compositionality, itself operates in the high-dimensional space. Therefore, subsequent analytical steps must incorporate robust dimensionality reduction, regularization, and validation protocols specifically designed for this challenging data structure to draw biologically meaningful conclusions applicable to drug development and translational research.

Table 1: Common Consequences of the 'p >> n' Problem in Microbiome Data Analysis

| Challenge | Manifestation | Typical Impact (Quantitative Example) |

|---|---|---|

| Curse of Dimensionality | Distance concentration; all pairwise distances become similar. | In a 16S rRNA study with p=10,000 ASVs and n=50, sample dissimilarity measures lose discriminative power. |

| Model Overfitting | Perfect in-sample prediction with zero out-of-sample accuracy. | A classifier may achieve 100% training accuracy but perform at ~50% (random) on an independent test set. |

| High-False Discovery Rate | Inflated Type I errors in differential abundance testing. | Without correction, with 10,000 hypothesis tests at α=0.05, 500 false positives are expected by chance alone. |

| Rank Deficiency | Covariance matrix is singular, preventing inversion. | The p x p covariance matrix has rank at most n-1 (e.g., 49), making multivariate methods like standard LDA impossible. |

| Feature Selection Instability | Selected features vary drastically between subsamples. | Top 20 "important" taxa identified from bootstrap samples may show less than 30% overlap. |

Table 2: Comparative Efficacy of Common 'p >> n' Mitigation Strategies in Microbiome Context

| Strategy | Key Mechanism | Advantages | Limitations (Post-CLR Application) |

|---|---|---|---|

| Regularized Regression (LASSO/Elastic Net) | L1/L2 penalty to shrink coefficients, perform automatic feature selection. | Produces sparse, interpretable models; handles collinearity. | Choice of penalty (λ) is critical; features selected can be sensitive to data perturbations. |

| Dimensionality Reduction (PCA on CLR) | Projects data onto orthogonal axes of maximal variance. | Reduces noise, facilitates visualization. | Principal components may not be biologically interpretable or relevant to the outcome. |

| Distance-Based Methods (PERMANOVA) | Uses a permutation test on a distance matrix (e.g., Aitchison). | Non-parametric; makes few distributional assumptions. | Provides only global significance, not feature-specific inferences. |

| Tree-/Network-Based Methods (Random Forests) | Aggregates predictions from many decorrelated trees. | Handles non-linearities; provides feature importance measures. | Prone to overfitting if not carefully tuned; can be computationally intensive. |

| Bayesian Graphical Models | Incorporates prior distributions to regularize estimates. | Quantifies uncertainty naturally; robust to small n. | Computationally complex; requires careful prior specification. |

Experimental Protocols for Managing 'p >> n'

Protocol 3.1: Regularized Regression Pipeline for Biomarker Discovery (Post-CLR)

Objective: To identify a stable, minimal set of microbial taxa predictive of a host phenotype (e.g., disease state) from CLR-transformed data.

- Input Data: CLR-transformed abundance matrix

Z(n x p) and response vectory(e.g., case/control). - Pre-screening (Optional): Apply a univariate filter (e.g., Wilcoxon rank-sum test) to reduce p to a more manageable size (e.g., 500-1000 top taxa) while maintaining a liberal alpha (e.g., 0.10).

- Data Splitting: Partition data into independent Training (70%), Validation (15%), and Hold-out Test (15%) sets. Ensure stratification by

yif classes are imbalanced. - Hyperparameter Tuning on Training Set:

- For Elastic Net (glmnet), perform 10-fold cross-validation over a grid of λ (penalty) and α (mixing parameter: 0=L2, 1=L1).

- The optimal (λ, α) pair is that which minimizes the cross-validated mean squared error (for continuous

y) or deviance (for binaryy).

- Model Training: Fit the final Elastic Net model on the entire training set using the optimal hyperparameters.

- Feature Selection: Extract the non-zero coefficients from the fitted model. These taxa constitute the candidate biomarker panel.

- Validation & Stability Assessment:

- Predict on the Validation Set to assess preliminary performance (AUC-ROC, accuracy).

- Perform stability analysis using 100 bootstrap resamples of the training set. Report the frequency with which each taxon is selected.

- Final Evaluation: Assess the final model's performance on the untouched Hold-out Test Set to report unbiased estimates of generalizability.

Protocol 3.2: Stability Selection Framework for Robust Feature Identification

Objective: To control false discoveries and increase the reproducibility of selected features in high-dimensional settings.

- Base Selector: Choose a feature selection method prone to high variance in 'p >> n' (e.g., LASSO, univariate testing).

- Subsampling: Generate B (e.g., 100) random subsamples of the data, each containing 50% of the samples.

- Selection: Apply the base selector to each subsample, recording which features are selected.

- Stability Score Calculation: For each feature j, compute its selection probability: Π̂_j = (Number of subsamples where j is selected) / B.

- Thresholding: Define a stable feature set as {j: Π̂j ≥ πthr}, where π_thr is a user-defined threshold (e.g., 0.6 - 0.8). This controls the expected number of false discoveries.

Protocol 3.3: Proper Validation and Error Estimation in 'p >> n'