The Complete MOTHUR SOP Guide: From Raw Sequences to Publication-Ready Microbiome Analysis


Abstract

This article provides a comprehensive, step-by-step Standard Operating Procedure (SOP) for analyzing 16S rRNA gene sequencing data using the MOTHUR software platform. Tailored for researchers, scientists, and drug development professionals, it covers the complete workflow from foundational concepts and raw sequence processing to advanced statistical analysis, common troubleshooting, and validation of results. The guide synthesizes current best practices to ensure rigorous, reproducible, and interpretable microbiome data analysis for biomedical and clinical research applications.

MOTHUR Microbiome 101: Core Concepts, Workflow Design, and Experimental Setup

Why MOTHUR? Understanding Its Niche in the Microbiome Analysis Ecosystem

Application Notes

MOTHUR is an open-source, platform-independent bioinformatics suite that bundles an entire microbial community analysis pipeline into a single program. It was developed to provide a standardized, reproducible, and comprehensive method for analyzing 16S rRNA gene sequence data. Although MOTHUR originated in the Sanger and 454 pyrosequencing eras, it also supports high-throughput platforms such as Illumina, and its niche is defined by fine-grained control over how sequence data are processed and curated. While newer pipelines (e.g., QIIME 2, DADA2) have gained popularity for large-scale Illumina datasets, MOTHUR remains a strong choice for researchers who need control over each quality-filtering and curation step, who are analyzing legacy data, or who want to follow established, peer-reviewed Standard Operating Procedures (SOPs).

The core philosophy of MOTHUR is to provide a complete toolkit, enabling users to go from raw sequences to publication-ready statistical analyses within a single environment. It is exceptionally well-documented, and the original MOTHUR publication and the SOPs built on it have been cited many thousands of times, making it a cornerstone of reproducible microbiome research.

Key Quantitative Comparisons of Analysis Platforms

Table 1: Comparison of Major Microbiome Analysis Platforms

| Feature | MOTHUR | QIIME 2 | DADA2 |

|---|---|---|---|

| Primary Niche | In-depth curation, legacy data, established SOPs | Modular, extensible pipeline for diverse data | Fast, accurate amplicon sequence variant (ASV) inference |

| Core Algorithm | Distance-based OTU clustering (OptiClust by default; average neighbor and others available) | Deblur / DADA2 (via plugins) for ASVs | Divisive amplicon denoising algorithm for ASVs |

| Typical Input | Sanger, 454, Illumina (fastq, fasta, qual) | Demultiplexed Illumina fastq (primarily) | Demultiplexed Illumina fastq |

| Speed | Slower, highly meticulous | Moderate to Fast (depends on plugins) | Very Fast |

| Reproducibility | High (single-environment scripts) | Very High (provenance tracking) | High (R script) |

| Learning Curve | Steep (command-line) | Moderate (CLI & GUI options) | Moderate (R-based) |

| Key Strength | Granular control, comprehensive error checking | Ecosystem of plugins, interactive visuals | Accurate ASVs without clustering |

Protocols

Protocol 1: MOTHUR Standard Operating Procedure for Illumina MiSeq Data (Abridged)

This protocol follows the Schloss lab SOP, designed to process paired-end Illumina MiSeq data to generate high-quality Operational Taxonomic Units (OTUs).

I. Research Reagent Solutions & Essential Materials

Table 2: Essential Toolkit for MOTHUR Analysis

| Item | Function |

|---|---|

| Demultiplexed FASTQ Files | Raw sequence data with barcodes/linker sequences removed. |

| Silva or Greengenes Reference Alignment | Curated database for aligning sequences to determine phylogeny and filter out non-16S regions. |

| RDP Reference Taxonomy File | Training set for classifying sequences into taxonomic groups (phylum, class, order, etc.). |

| MOTHUR-Compatible Primer Sequences | File containing the DNA sequences of the primers used for amplification, for trimming. |

| Group File | A simple text file mapping each sequence name to its sample of origin. |

| Metadata File | A matrix describing the experimental variables (e.g., treatment, pH, host health state). |

II. Detailed Methodology

- Make Contigs: Combine forward and reverse reads into contiguous sequences.

- Screen Sequences: Filter sequences based on length, ambiguous bases, and homopolymers.

- Alignment: Align filtered sequences to a reference alignment (e.g., SILVA v132).

- Filter Alignment: Remove columns that are all gaps to reduce computational load.

- Pre-Cluster: Denoise sequences by merging nearly identical sequences (diffs=2).

- Chimera Removal: Identify and remove chimeric sequences using UCHIME.

- Taxonomic Classification: Classify sequences using the Wang method against the RDP training set.

- Remove Non-Target Sequences: Remove sequences classified as Mitochondria, Chloroplast, Archaea, or unknown.

- Cluster into OTUs: Cluster sequences into OTUs at 97% similarity using the average neighbor algorithm.

- Generate Shared File: Create a sample-by-OTU table for downstream analysis.

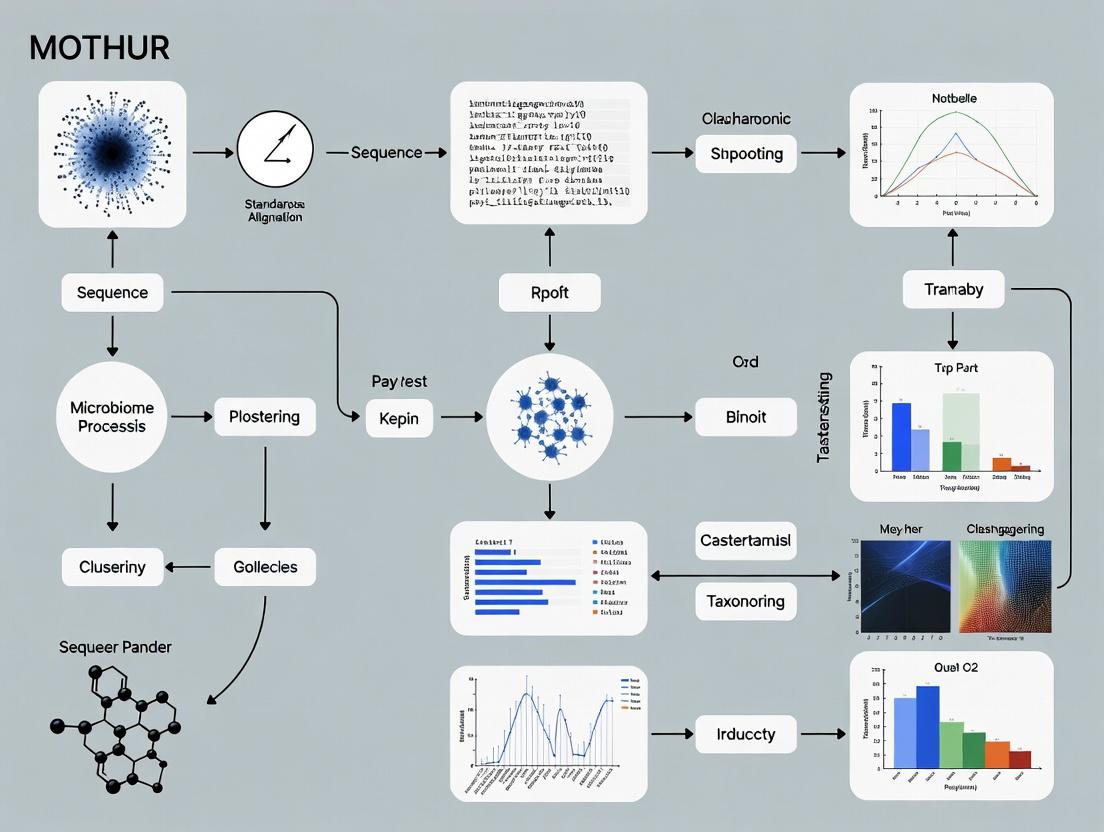

III. Visualization of Workflow

MOTHUR SOP Core Workflow

MOTHUR's Position in the Tool Ecosystem

The MOTHUR pipeline provides a reproducible framework for processing raw sequence data into biologically interpretable results. Developed to address the need for consistency in microbial ecology, it underpins the Standard Operating Procedure (SOP) published by the Schloss lab, enabling the robust comparative studies that researchers and drug development professionals rely on.

The standard MOTHUR SOP follows a sequential workflow from raw data to ecological inference.

Diagram Title: MOTHUR SOP Primary Workflow

Key Quantitative Benchmarks & Parameters

The following tables summarize standard metrics and thresholds used in the pipeline.

Table 1: Standard Sequence Quality Control Parameters

| Step | Parameter | Typical Threshold | Purpose |

|---|---|---|---|

| make.contigs | Maximum Homopolymer Length | 8-10 bp | Reduces sequencing errors |

| screen.seqs | Sequence Length | ± 20% of median | Removes overly short/long reads |

| filter.seqs | Terminal Ambiguous Bases | 0 allowed | Ensures alignment quality |

| chimera.uchime | Chimera Detection Threshold | 80-90% confidence | Removes artifactual sequences |

| pre.cluster | Differences Allowed | 1-2 nucleotides | Reduces noise before clustering |

Table 2: Standard Alpha Diversity Metrics in MOTHUR

| Metric | Command | Interpretation | Sensitive To |

|---|---|---|---|

| Observed Richness | summary.single(calc=sobs) | Simple count of OTUs/ASVs | Rarefaction depth |

| Shannon Index | summary.single(calc=shannon) | Community evenness & richness | Mid-abundance species |

| Inverse Simpson | summary.single(calc=invsimpson) | Dominance of common species | High-abundance species |

| Chao1 | summary.single(calc=chao) | Estimated total richness | Rare species |
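To make the four metrics in Table 2 concrete, the sketch below computes them for a single sample from a list of OTU counts. It uses common textbook forms (bias-corrected Chao1, natural-log Shannon, and the unbiased Simpson estimator); these are assumptions for illustration, and MOTHUR's own calculators may differ in detail. The function name `alpha_diversity` is hypothetical.

```python
import math
from collections import Counter


def alpha_diversity(counts):
    """Return sobs, Chao1, Shannon and inverse Simpson for one sample.

    counts: iterable of per-OTU read counts (zeros are ignored).
    Assumes the sample holds at least two reads in total.
    """
    counts = [c for c in counts if c > 0]
    n_total = sum(counts)
    sobs = len(counts)  # observed richness

    # Chao1 (bias-corrected form): uses singletons (f1) and doubletons (f2).
    freq = Counter(counts)
    f1, f2 = freq[1], freq[2]
    chao1 = sobs + f1 * (f1 - 1) / (2 * (f2 + 1))

    # Shannon index with natural logarithm.
    shannon = -sum((c / n_total) * math.log(c / n_total) for c in counts)

    # Simpson's D (unbiased estimator) and its inverse.
    simpson_d = sum(c * (c - 1) for c in counts) / (n_total * (n_total - 1))
    invsimpson = 1 / simpson_d if simpson_d > 0 else float("inf")

    return {"sobs": sobs, "chao1": chao1,
            "shannon": shannon, "invsimpson": invsimpson}


if __name__ == "__main__":
    # Made-up counts for illustration only.
    print(alpha_diversity([10, 5, 1, 1, 2, 0]))
```

Because Chao1 depends only on singletons and doubletons, it is the metric most affected by aggressive filtering of rare sequences.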

Detailed Experimental Protocols

Protocol 4.1: From Raw Reads to Contigs

Objective: Merge paired-end reads and perform initial quality screening.

- Create Contigs: Execute `make.contigs(file=stability.files, processors=8)`. MOTHUR aligns the forward and reverse reads of each pair, builds a contig, and reports any ambiguities.

- Summarize Sequence Lengths: Run `summary.seqs(fasta=current)` to identify the median sequence length.

- Screen Sequences: Apply `screen.seqs(fasta=current, group=current, maxambig=0, minlength=250, maxlength=275)`. This removes sequences with ambiguous bases and those falling outside the expected length range. Set the limits from the `summary.seqs` output (e.g., 250-275 bp for the V4 region).

- Dereplicate Sequences: Execute `unique.seqs(fasta=current)` to collapse identical sequences.
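The screening logic in this protocol can be illustrated outside MOTHUR. The following is a minimal Python sketch, not MOTHUR's implementation: the function names are hypothetical, the parameter names merely mirror the `screen.seqs` options, and counting every non-ACGT character as ambiguous is an assumption.

```python
import re


def max_homopolymer(seq):
    """Length of the longest run of a single repeated character in seq."""
    return max((len(m.group(0)) for m in re.finditer(r"(.)\1*", seq)), default=0)


def screen(seqs, minlength=250, maxlength=275, maxambig=0, maxhomop=8):
    """Keep sequences that pass length, ambiguity and homopolymer filters.

    seqs: dict mapping sequence name -> unaligned sequence string.
    """
    kept = {}
    for name, seq in seqs.items():
        seq = seq.upper()
        if not (minlength <= len(seq) <= maxlength):
            continue  # too short or too long
        ambiguous = sum(1 for base in seq if base not in "ACGT")
        if ambiguous > maxambig:
            continue  # too many ambiguous base calls
        if max_homopolymer(seq) > maxhomop:
            continue  # homopolymer run too long
        kept[name] = seq
    return kept


if __name__ == "__main__":
    # Toy reads with deliberately small thresholds.
    reads = {"ok": "ACGTACGTAC", "short": "ACG",
             "ambig": "ACGTNCGTAC", "homop": "AAAAAAGTAC"}
    print(sorted(screen(reads, minlength=8, maxlength=12, maxhomop=4)))
```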

Protocol 4.2: Alignment, Filtering, and Chimera Removal

Objective: Align sequences to a reference database and remove erroneous sequences.

- Align to Reference: Run `align.seqs(fasta=current, reference=silva.v4.fasta)`. The SILVA reference alignment is commonly used.

- Filter Alignment: Execute `filter.seqs(fasta=current, vertical=T, trump=.)`. This removes gap-only columns and columns in which any sequence has a terminal gap, so that all sequences cover the same alignment region.

- Pre-cluster Sequences: Use `pre.cluster(fasta=current, count=current, diffs=2)` to merge sequences that differ by up to 2 nucleotides, reducing the impact of sequencing error.

- Detect Chimeras: Run `chimera.uchime(fasta=current, count=current, dereplicate=t)`, then `remove.seqs(fasta=current, accnos=current)` to remove the flagged sequences.

- Classify Sequences: Use `classify.seqs(fasta=current, count=current, reference=trainset_v16, taxonomy=trainset_v16.tax, cutoff=80)`.

- Remove Non-Target Sequences: Remove mitochondrial, chloroplast, archaeal, and eukaryotic sequences with `remove.lineage(fasta=current, count=current, taxonomy=current, taxon=Chloroplast-Mitochondria-Archaea-Eukaryota)`.
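The idea behind the pre-clustering step can be sketched in a few lines of Python. This is a simplified greedy illustration, assuming equal-length aligned sequences and a plain mismatch count; it is not MOTHUR's `pre.cluster` implementation, and the function names are hypothetical.

```python
def mismatches(a, b):
    """Number of positions at which two equal-length aligned sequences differ."""
    return sum(x != y for x, y in zip(a, b))


def pre_cluster(counts, diffs=2):
    """Greedily merge rare sequences into abundant ones within `diffs` mismatches.

    counts: dict mapping aligned sequence -> abundance.
    Returns a dict of representative sequence -> merged abundance.
    """
    # Visit sequences from most to least abundant (ties broken alphabetically).
    ordered = sorted(counts, key=lambda s: (-counts[s], s))
    merged = {}
    for seq in ordered:
        for rep in merged:  # representatives are in abundance order
            if mismatches(seq, rep) <= diffs:
                merged[rep] += counts[seq]  # absorb into the abundant sequence
                break
        else:
            merged[seq] = counts[seq]  # no close representative: start a new one
    return merged


if __name__ == "__main__":
    # Made-up toy data.
    toy = {"ACGTACGT": 100, "ACGTACGA": 3, "ACGTTCGA": 1, "TTTTACGT": 10}
    print(pre_cluster(toy, diffs=2))
```

Processing sequences in decreasing abundance reflects the assumption that rare sequences close to an abundant one are more likely to be sequencing errors than real variants.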

Protocol 4.3: OTU/ASV Generation and Analysis

Objective: Cluster sequences into operational taxonomic units (OTUs) or define amplicon sequence variants (ASVs) and generate shared community file.

Diagram Title: OTU vs. ASV Clustering Pathways

- Calculate Distances: Generate a pairwise distance matrix with `dist.seqs(fasta=current, cutoff=0.03)`.

- Cluster into OTUs: For a 97% similarity OTU approach, use `cluster(column=current, count=current, cutoff=0.03)`. For large datasets, `cluster.split(fasta=current, count=current, taxonomy=current, taxlevel=4, cutoff=0.03)` first splits sequences by taxonomy and then clusters each subset. For a phylotype-based method, use `phylotype(taxonomy=current)` instead.

- Generate Shared File: Create the core community file with `make.shared(list=current, count=current, label=0.03)`.

- Assign Consensus Taxonomy: Run `classify.otu(list=current, count=current, taxonomy=current, label=0.03)`.

- Normalize by Subsampling: For comparative analysis, subsample every sample to a common depth with `sub.sample(shared=current, size=10000)`, choosing the lowest reasonable sequencing depth.
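Subsampling to a common depth amounts to drawing reads without replacement from each sample. A minimal Python sketch under that assumption is shown below; the function name `subsample` and the example counts are hypothetical, and samples below the target depth are simply dropped (returned as `None`).

```python
import random


def subsample(otu_counts, size, seed=None):
    """Draw `size` reads without replacement from one sample's OTU counts.

    otu_counts: dict mapping OTU label -> read count.
    Returns a new dict of counts, or None if the sample has fewer than `size` reads.
    """
    if sum(otu_counts.values()) < size:
        return None  # sample too shallow for the chosen depth

    # Expand counts into a pool with one entry per read, then sample from it.
    pool = [otu for otu, n in otu_counts.items() for _ in range(n)]
    rng = random.Random(seed)  # fixed seed makes the draw reproducible
    drawn = rng.sample(pool, size)

    result = {otu: 0 for otu in otu_counts}
    for otu in drawn:
        result[otu] += 1
    return result


if __name__ == "__main__":
    sample = {"Otu001": 600, "Otu002": 300, "Otu003": 100}  # made-up counts
    print(subsample(sample, 500, seed=1))
```

Recording the seed alongside the analysis scripts keeps the subsampled table reproducible.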

Protocol 4.4: Diversity and Statistical Analysis

Objective: Calculate within- and between-sample diversity and perform statistical tests.

- Alpha Diversity: Calculate metrics with `summary.single(shared=current, calc=sobs-chao-shannon-invsimpson, subsample=10000)`.

- Beta Diversity: Generate distance matrices (e.g., Bray-Curtis, ThetaYC) using `dist.shared(shared=current, calc=braycurtis-thetayc)`.

- Ordination: Perform NMDS with `nmds(phylip=current, mindim=2, maxdim=3)`. The resulting axes file can be plotted in R or other graphing software.

- Statistical Testing: Use `amova(phylip=current, design=stability.design)` to test whether groups differ significantly, or `anosim(phylip=current, design=stability.design)` to test whether between-group dissimilarity exceeds within-group dissimilarity.
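The two beta-diversity measures named above can be written out directly. The sketch below uses the standard definitions of Bray-Curtis dissimilarity (on raw counts) and the Yue and Clayton theta dissimilarity (on relative abundances); the function names are hypothetical, and both inputs are assumed to list the same OTUs in the same order.

```python
def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two count vectors."""
    shared = sum(min(x, y) for x, y in zip(a, b))
    return 1 - 2 * shared / (sum(a) + sum(b))


def theta_yc(a, b):
    """Yue & Clayton theta dissimilarity (1 - theta) on relative abundances."""
    total_a, total_b = sum(a), sum(b)
    pa = [x / total_a for x in a]
    pb = [y / total_b for y in b]
    cross = sum(x * y for x, y in zip(pa, pb))
    denom = sum(x * x for x in pa) + sum(y * y for y in pb) - cross
    return 1 - cross / denom


if __name__ == "__main__":
    # Made-up counts for two samples over the same two OTUs.
    s1, s2 = [6, 4], [4, 6]
    print(bray_curtis(s1, s2), theta_yc(s1, s2))
```

Both measures range from 0 (identical communities) to 1 (no shared OTUs), but ThetaYC weights abundant OTUs more heavily because it works with squared relative abundances.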

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key MOTHUR Pipeline Inputs & Resources

| Item | Function/Description | Source/Example |

|---|---|---|

| Raw FASTQ Files | Paired-end sequence data from 16S rRNA gene amplicon sequencing (e.g., Illumina MiSeq). | Experimental output. |

| Reference Alignment Database | Curated multiple sequence alignment for aligning 16S rRNA sequences. | SILVA (v132/v138) or Greengenes (v13.8). |

| Taxonomic Training Set | Classified sequences used to assign taxonomy via the Wang Bayesian classifier. | RDP trainset (v16) aligned to reference. |

| Group File | A 2-column, tab-delimited file linking sequence names to sample IDs. | Created during demultiplexing. |

| Count File | Tracks abundance of each unique sequence after unique.seqs. | Generated by MOTHUR commands. |

| Design File | A 2-column, tab-delimited file specifying group membership for statistical tests. | Manually created based on experimental metadata. |

| MOTHUR Executable | The core software platform for executing all SOP commands. | https://mothur.org |

| High-Performance Computing (HPC) Access | Necessary for computationally intensive steps (alignment, clustering). | Local cluster or cloud computing instance. |

This protocol details the installation of MOTHUR, a comprehensive bioinformatics suite for analyzing microbiome sequence data, within the framework of a standard operating procedure (SOP) for robust and reproducible microbiome research. Proper installation of MOTHUR and its dependencies is the critical first step in establishing a reliable analytical pipeline for drug development and clinical research.

Table 1: Minimum and Recommended System Requirements

| Component | Minimum Requirement | Recommended for Large Datasets |

|---|---|---|

| Operating System | Linux (64-bit), macOS (10.14+), Windows 10/11 (via WSL2) | Linux (Ubuntu 22.04 LTS or CentOS/Rocky 8+) |

| CPU | 64-bit processor (2 cores) | 64-bit processor (8+ cores) |

| RAM | 8 GB | 32 GB or more |

| Storage | 10 GB free space | 100 GB+ free SSD storage |

| Package Manager | apt (Debian/Ubuntu), yum/dnf (RHEL), Homebrew (macOS) | As per OS |

Installation of Essential Dependencies

MOTHUR relies on several third-party tools and libraries. The following protocol ensures a complete environment.

Protocol 2.1: Installing Core Dependencies on Linux (Ubuntu/Debian)

Protocol 2.2: Installing Core Dependencies on macOS (Using Homebrew)

Table 2: Key Dependency Versions for MOTHUR v.1.48.0+

| Dependency | Minimum Version | Purpose in MOTHUR Pipeline |

|---|---|---|

| g++ / GCC | 4.7+ | Compilation of MOTHUR C++ source code. |

| Boost C++ Libraries | 1.54.0+ | Provides essential data structures and algorithms. |

| MAFFT | 7.310+ | Optional external multiple sequence aligner; align.seqs itself uses MOTHUR's built-in aligner. |

| MUSCLE | 3.8.31+ | Alternative aligner for protein-coding gene analysis. |

| BLAST+ | 2.7.0+ | Optional; classify.seqs defaults to the built-in Wang classifier and does not require BLAST+. |

| GNU Scientific Library (GSL) | 1.16+ | Statistical computing for community analyses. |

Installing MOTHUR

Two primary methods are available: compiling from source (recommended for performance and control) or using a pre-compiled executable.

Protocol 3.1: Compiling MOTHUR from Source (Recommended)

Protocol 3.2: Installing Pre-compiled MOTHUR Executable

Verification and Test Dataset Run

Protocol 4.1: Basic Functionality Test

Expected Outcome: The summary.seqs command should execute without errors, displaying a table summarizing the sequences in the test file.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for MOTHUR Environment Setup

| Item | Function/Description | Example/Version |

|---|---|---|

| Ubuntu Server LTS | Stable, secure Linux OS foundation for the analysis server. | Ubuntu 22.04.3 LTS |

| Windows Subsystem for Linux (WSL2) | Allows native Linux environment on Windows 10/11 systems. | WSL2 Kernel 5.15.90.1 |

| Homebrew | Package manager for macOS to simplify dependency installation. | Homebrew 4.1.0 |

| Conda/Bioconda | Alternative package manager for creating isolated bioinformatics environments. | Miniconda3 23.3.1 |

| Reference Databases | Curated sequence databases for alignment, classification, and OTU clustering. | SILVA v138.1, RDP trainset 18 |

| High-Performance Computing (HPC) Scheduler | For managing large-scale MOTHUR jobs on cluster infrastructure. | Slurm 23.02, Sun Grid Engine |

| Version Control System | Tracks changes to MOTHUR scripts and analysis parameters for reproducibility. | Git 2.40.0 |

| Text Editor/IDE | For writing and editing MOTHUR batch files and scripts. | VS Code 1.86, Vim 9.0 |

Visual Workflow: MOTHUR Installation & Verification Pathway

Diagram Title: MOTHUR Installation Decision and Verification Workflow

Diagram Title: MOTHUR Software and Reagent Dependency Map

Experimental Design Principles for Robust MOTHUR Analysis

Effective microbiome analysis with MOTHUR requires rigorous pre-analytical experimental design to mitigate batch effects, control contamination, and ensure statistical power. These principles are foundational to the MOTHUR Standard Operating Procedure (SOP) for generating biologically interpretable data.

Table 1: Key Experimental Design Principles and Quantitative Benchmarks

| Principle | Objective | Recommended Benchmark / Threshold | Rationale |

|---|---|---|---|

| Replication | Ensure statistical power & reproducibility. | Minimum n=5 per treatment group; >10 for complex communities. | Enables detection of modest effect sizes (α=0.05, power=0.8). |

| Negative Controls | Detect reagent & environmental contamination. | Include at least 3 extraction blanks & 3 no-template PCR controls per batch. | Identifies contaminant OTUs for subtraction; thresholds typically at 0.1-1% of sample reads. |

| Positive Controls | Assess protocol efficiency & bias. | Use mock microbial community (e.g., ZymoBIOMICS) with known composition. | Expect >80% taxonomic recovery; Shannon index within 10% of expected. |

| Randomization | Minimize batch effects. | Randomize sample processing order across experimental groups within sequencing batch. | Reduces technical bias correlation with experimental conditions. |

| Sample Size Calculation | Determine adequate sequencing depth. | Target >10,000 reads/sample after quality control; pilot data for rarefaction curve. | Ensures coverage of diversity; plateaus in rarefaction curves indicate sufficiency. |

| Standardized Metadata | Enable covariate analysis. | Document >50 parameters (e.g., pH, temp, host BMI, collection time) using MIMARKS standard. | Critical for downstream PERMANOVA or ANOSIM statistical models in MOTHUR. |

Detailed Protocols

Protocol 2.1: Design and Implementation of Controls

Objective: To integrate essential control samples into the workflow for contamination monitoring and data normalization.

- Negative Controls:

- Extraction Blanks: Process sterile, DNA-free buffer through the entire DNA extraction kit alongside samples.

- PCR Blanks: Include reactions containing all PCR master mix components but no template DNA.

- Analysis: Sequence controls in the same run as experimental samples. In MOTHUR, label the controls as separate groups in the `group` file. After clustering, flag OTUs whose abundance in the controls exceeds 0.5% of the average experimental sample read count and remove them from the shared file. The `remove.groups` command can then drop the control samples themselves before diversity analysis.

- Positive Control (Mock Community):

- Procedure: Aliquot a commercially available mock community (e.g., ZymoBIOMICS D6300). Process identical to samples from extraction through sequencing.

- MOTHUR Analysis:

Protocol 2.2: Power Analysis and Sample Size Determination

Objective: To calculate the required number of biological replicates.

- Pilot Study: Sequence a subset of samples (n=3-4 per group) to estimate variability.

- Calculate Alpha Diversity Power:

- Use pilot data to obtain mean and standard deviation of the Shannon index per group.

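- Apply a two-sample t-test power calculation: $n = 2\,(\mathrm{SD}/\Delta)^2\, f(\alpha, \text{power})$ per group, where $\Delta$ is the desired detectable difference and, under the normal approximation, $f(\alpha, \text{power}) = (z_{1-\alpha/2} + z_{\text{power}})^2$.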

- Calculate Beta Diversity Power: Utilize software like `PERMANOVA.S` in R with pilot distance matrices to estimate power for community-level differences.
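As a worked example of the alpha-diversity sample-size formula, the sketch below evaluates n = 2 (SD/Δ)² (z₁₋α/₂ + z_power)² using the normal approximation to the two-sample t-test. The function name `samples_per_group` and the input values are illustrative assumptions; an exact t-based calculation gives slightly larger numbers for small n.

```python
import math
from statistics import NormalDist


def samples_per_group(sd, delta, alpha=0.05, power=0.8):
    """Replicates per group needed to detect a difference `delta`.

    sd: pooled standard deviation of the metric (e.g., Shannon index).
    delta: smallest difference between group means worth detecting.
    Uses the two-sided normal approximation to the two-sample t-test.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for power = 0.8
    n = 2 * (sd / delta) ** 2 * (z_alpha + z_power) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Hypothetical pilot estimate: SD of 0.5 Shannon units, target difference 0.5.
    print(samples_per_group(sd=0.5, delta=0.5))
```

With SD equal to Δ the calculation gives 16 replicates per group; halving Δ quadruples the requirement, which is why the pilot estimate of variability matters so much.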

Protocol 2.3: Randomized Block Design for Processing

Objective: To prevent confounding of technical processing order with biological conditions.

- Procedure:

- Assign each sample a unique ID.

- Using a random number generator, create a processing order for DNA extraction that interleaves samples from all experimental groups.

- Repeat this randomization independently for the PCR amplification and library preparation steps.

- Record the final processing order as critical metadata.

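- Batch Correction: MOTHUR has no dedicated batch-correction command. If a batch effect is detected via PCoA, test 'batch' as a grouping factor with `amova`, or export the distance matrix and include 'batch' as a term in a PERMANOVA model (e.g., `adonis2` in the R vegan package).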
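The randomization procedure can be scripted so that the processing order is reproducible and easy to store as metadata. The following Python sketch is one possible approach; the function name, step names, and seed are hypothetical choices, and an independent shuffle is drawn for each processing step.

```python
import random


def processing_orders(sample_ids, steps=("extraction", "pcr", "library_prep"), seed=2024):
    """Return an independent random processing order for each laboratory step.

    sample_ids: list of unique sample IDs from all experimental groups.
    seed: fixed seed so the orders can be regenerated and audited later.
    """
    rng = random.Random(seed)
    orders = {}
    for step in steps:
        order = list(sample_ids)  # copy so the input list is left untouched
        rng.shuffle(order)        # fresh shuffle for every step
        orders[step] = order
    return orders


if __name__ == "__main__":
    ids = [f"S{i:02d}" for i in range(1, 13)]  # made-up sample IDs
    for step, order in processing_orders(ids).items():
        print(step, order)
```

Writing the returned orders to the metadata sheet lets processing position be examined later as a potential technical covariate.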

Diagrams

Diagram 1: Experimental Design Workflow for MOTHUR

Diagram 2: Contamination Control and Mitigation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Robust MOTHUR Experimental Design

| Item | Function | Example Product(s) |

|---|---|---|

| Mock Microbial Community | Positive control for DNA extraction, PCR, and sequencing efficiency; quantifies technical bias. | ZymoBIOMICS Microbial Community Standard (D6300); ATCC Mock Microbiome Standards. |

| DNA Extraction Blank | Negative control to identify contaminants originating from extraction kits and reagents. | Kit-specific lysis buffer or nuclease-free water processed identically to samples. |

| PCR Grade Water | Ultra-pure, DNA-free water for PCR master mix preparation to prevent amplicon contamination. | Invitrogen UltraPure DNase/RNase-Free Distilled Water; Qiagen Water, PCR Grade. |

| High-Fidelity DNA Polymerase | Enzyme with proofreading reduces PCR errors and chimeric sequence formation. | Thermo Scientific Phusion High-Fidelity DNA Polymerase; Q5 High-Fidelity DNA Polymerase. |

| Barcoded Primers with Linkers | Allows multiplexing of samples; linkers improve sequencing efficiency on platforms like MiSeq. | Golay barcoded 515F/806R primers for 16S V4 region. |

| Quantification Standard | Accurate library quantification for balanced pooling, preventing read depth bias. | KAPA Library Quantification Kit for Illumina; Qubit dsDNA HS Assay Kit. |

| Standardized Metadata Sheet | Ensures consistent collection of covariates critical for statistical analysis in MOTHUR. | MIMARKS (Minimum Information about a MARKer gene Sequence) checklist. |

Within the MOTHUR Standard Operating Procedure (SOP) for microbiome research, data progresses through a pipeline that transforms raw sequencing reads into interpretable ecological and statistical summaries. This journey is embodied in a series of specialized file formats, each serving a distinct purpose. Understanding these formats—their structure, generation, and application—is critical for researchers, scientists, and drug development professionals to accurately process data, troubleshoot pipelines, and interpret results for therapeutic or diagnostic insights.

Core File Formats in Microbiome Analysis

The table below summarizes the key file formats encountered in the MOTHUR SOP workflow, detailing their content, primary use, and typical origin.

Table 1: Essential File Formats in the MOTHUR Microbiome Pipeline

| File Format | Extension | Primary Content | Role in Workflow | Typical Source |

|---|---|---|---|---|

| FASTQ | .fastq, .fq | Raw sequencing reads with quality scores. | Input of raw data from the sequencer. | Illumina, PacBio, Ion Torrent sequencers. |

| FASTA | .fasta, .fa | Biological sequences (DNA/RNA/AA) without quality scores. | Contains curated sequences post-quality control and alignment. | Generated by trimming, filtering, and aligning FASTQ files. |

| Count | .count_table | Frequency of each unique sequence per sample. | Tracks sequence redundancy; essential for downstream clustering and OTU/ASV picking. | Generated by mothur command count.seqs. |

| Group | .groups | Assignment of each sequence to its sample of origin. | Maintains sample identity for sequences throughout analysis. | Created during demultiplexing or by mothur command make.group. |

| Taxonomy | .taxonomy | Taxonomic classification for each sequence (e.g., phylum, genus). | Provides biological identity to sequences/OTUs/ASVs. | Output of mothur command classify.seqs, using a reference taxonomy such as RDP, SILVA, or Greengenes. |

| Shared | .shared | Matrix of OTU/ASV counts across all samples. | Primary input for community ecology and statistical analysis. | Generated by mothur command make.shared. |

| List | .list | Assignment of sequences to OTUs at each distance cutoff. | Input for make.shared and classify.otu. | Generated by mothur commands cluster or cluster.split. |
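To show what the shared file looks like in practice, the sketch below parses a small tab-delimited example into a nested dictionary. The layout (columns `label`, `Group`, `numOtus`, followed by one column per OTU) follows the usual MOTHUR shared format; the sample names and counts are made up, and `read_shared` is a hypothetical helper rather than part of MOTHUR.

```python
import csv
import io

# Made-up two-sample shared file with three OTUs at the 0.03 label.
SHARED_TEXT = (
    "label\tGroup\tnumOtus\tOtu001\tOtu002\tOtu003\n"
    "0.03\tF3D0\t3\t499\t351\t12\n"
    "0.03\tF3D1\t3\t407\t0\t88\n"
)


def read_shared(handle):
    """Parse a shared file into {sample: {otu_label: count}}."""
    reader = csv.DictReader(handle, delimiter="\t")
    table = {}
    for row in reader:
        # Every column except the three bookkeeping columns is an OTU count.
        otus = {key: int(value) for key, value in row.items()
                if key not in ("label", "Group", "numOtus")}
        table[row["Group"]] = otus
    return table


if __name__ == "__main__":
    table = read_shared(io.StringIO(SHARED_TEXT))
    for sample, otus in table.items():
        print(sample, sum(otus.values()), otus)
```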

Detailed File Structures & Protocols

From Raw Data: FASTQ to FASTA

Protocol 1: Generating a Processed FASTA File from Paired-end FASTQ Data in MOTHUR

Objective: Convert raw Illumina MiSeq paired-end reads into a high-quality, aligned FASTA file for downstream analysis.

- Make Contigs: Combine forward and reverse reads for each sample into contigs.

`mothur > make.contigs(file=stability.files, processors=8)`

Outputs: `stability.trim.contigs.fasta` and `stability.contigs.groups`.

- Screen Sequences: Remove sequences with ambiguous bases ('N') or unexpected lengths.

`mothur > screen.seqs(fasta=stability.trim.contigs.fasta, group=stability.contigs.groups, maxambig=0, maxlength=275)`

- Dereplicate Sequences: Identify unique sequences and generate a count table.

`mothur > unique.seqs(fasta=stability.trim.contigs.good.fasta)`

`mothur > count.seqs(name=stability.trim.contigs.good.names, group=stability.contigs.good.groups)`

- Align to Reference Database: Align sequences to a curated alignment template (e.g., SILVA v132).

`mothur > align.seqs(fasta=stability.trim.contigs.good.unique.fasta, reference=silva.v4.fasta)`

- Filter Alignment: Remove columns with only gaps to streamline data.

`mothur > filter.seqs(fasta=stability.trim.contigs.good.unique.align, vertical=T, trump=.)`

- Pre-cluster Sequences: Remove slight sequencing errors by merging near-identical sequences.

`mothur > pre.cluster(fasta=stability.trim.contigs.good.unique.filter.fasta, count=stability.trim.contigs.good.count_table, diffs=2)`

Final Output: A filtered, aligned FASTA file (`stability.trim.contigs.good.unique.filter.precluster.fasta`) and its associated count file, ready for chimera removal and clustering.
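For reproducibility, the commands in this protocol are usually stored in a batch file rather than typed interactively. The sketch below writes such a file from Python, using `current` in place of the explicit file names; the batch file name and helper functions are hypothetical, and the command list is a direct transcription of the steps above rather than a validated pipeline.

```python
from pathlib import Path

# Protocol 1 commands, one per line, using mothur's `current` shortcut.
COMMANDS = [
    "make.contigs(file=stability.files, processors=8)",
    "screen.seqs(fasta=current, group=current, maxambig=0, maxlength=275)",
    "unique.seqs(fasta=current)",
    "count.seqs(name=current, group=current)",
    "align.seqs(fasta=current, reference=silva.v4.fasta)",
    "filter.seqs(fasta=current, vertical=T, trump=.)",
    "pre.cluster(fasta=current, count=current, diffs=2)",
]


def render_batch(commands=COMMANDS):
    """Return the batch file contents: one mothur command per line."""
    return "\n".join(commands) + "\n"


def write_batch(path, commands=COMMANDS):
    """Write the batch file to `path` and return the path.

    The file can then be run from a shell with: mothur <path>
    """
    target = Path(path)
    target.write_text(render_batch(commands))
    return target
```

Keeping the batch file under version control alongside the reference file names records exactly which parameters produced a given result.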

Generating Sample-Specific and Taxonomic Files

Protocol 2: Creating Count, Group, Shared, and Taxonomy Files

Objective: Generate the core data matrices and classifications needed for community analysis.

- Remove Chimeras: Identify and remove artefactual sequences.

`mothur > chimera.uchime(fasta=current, count=current, dereplicate=t)`

`mothur > remove.seqs(fasta=current, accnos=current)`

- Assign Taxonomy: Classify sequences using a Bayesian classifier and reference taxonomy.

`mothur > classify.seqs(fasta=current, count=current, reference=trainset_v4_032024.rdp.fasta, taxonomy=trainset_v4_032024.rdp.tax, cutoff=80)`

- Cluster into OTUs: Generate a distance matrix and cluster sequences at a 97% similarity cutoff.

`mothur > dist.seqs(fasta=current, cutoff=0.03)`

`mothur > cluster(column=current, count=current)`

- Generate Shared File: Create the OTU abundance table.

`mothur > make.shared(list=current, count=current, label=0.03)`

- Get Consensus Taxonomy: Determine taxonomy for each OTU.

`mothur > classify.otu(list=current, count=current, taxonomy=current, label=0.03)`

Final Outputs: The key analysis files: `final.shared` (OTU table), `final.0.03.cons.taxonomy` (OTU taxonomy), and the underlying `final.count_table`.

Visualizing the Workflow & Data Relationships

Diagram 1: MOTHUR file format conversion workflow

Diagram 2: Relationship between Shared, Taxonomy, and Count files

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Reagents for MOTHUR SOP Implementation

| Item | Function in Workflow | Example/Supplier |

|---|---|---|

| Silica Gel Membrane Filtration Kits | Environmental DNA extraction from soil, water, or filters. | DNeasy PowerSoil Pro Kit (Qiagen) |

| Bead-Beating Lysis Tubes | Mechanical disruption of tough microbial cell walls in stool or tissue samples. | Garnet beads in 2ml tubes (e.g., OMNI Beat Rupture Tubes) |

| PCR Reagents with High-Fidelity Polymerase | Amplification of 16S rRNA gene regions (e.g., V4) with minimal bias. | Phusion High-Fidelity DNA Polymerase (Thermo Scientific) |

| Dual-Index Barcoded Primers | Multiplexing of hundreds of samples in a single sequencing run. | Nextera XT Index Kit (Illumina) |

| Quantitative Fluorometric Assay | Accurate quantification of DNA library concentration prior to sequencing. | Qubit dsDNA HS Assay Kit (Thermo Fisher) |

| Reference Alignment Database | Template for aligning 16S rRNA sequences. | SILVA SSU Ref NR database (https://www.arb-silva.de/) |

| Curated Taxonomy Training Set | Reference for Bayesian taxonomic classification. | RDP training set (v18) formatted for MOTHUR |

| Positive Control Genomic DNA | Verification of PCR and sequencing pipeline integrity. | ZymoBIOMICS Microbial Community Standard (Zymo Research) |

| Negative Extraction Control (PCR-grade Water) | Detection of reagent or environmental contamination. | Nuclease-free Water (Ambion) |

Step-by-Step MOTHUR SOP: A Practical Walkthrough from Raw Data to OTU Table

This protocol constitutes the foundational Step 1 within the comprehensive MOTHUR Standard Operating Procedure (SOP) for 16S rRNA gene-based microbiome analysis. The objective is to process raw sequencing reads (typically from Illumina platforms) to generate high-quality, aligned sequences ready for downstream analysis (e.g., chimera removal, clustering, classification). The choice of reference database (SILVA or Greengenes) dictates the specific alignment coordinates and taxonomy. This Application Note details the parallel paths for both references.

Research Reagent Solutions & Essential Materials

| Item | Function/Description |

|---|---|

| Raw FASTQ Files | Paired-end (R1 & R2) or single-end sequencing reads from the target hypervariable region (e.g., V4). |

| MOTHUR Software | Open-source, community-supported bioinformatics platform for microbial ecology analysis. |

| SILVA Reference Database | Curated, comprehensive database of aligned rRNA sequences. Provides high-quality alignments and updated taxonomy. Requires specific seed alignment (silva.seed_v138.align) and taxonomy files. |

| Greengenes Reference Database | 16S rRNA gene database aligned to a fixed core. Uses the core_set aligned for a consistent coordinate system (e.g., core_set_aligned.fasta). |

| Primer Sequences | Exact oligonucleotide sequences used for PCR amplification (e.g., 515F/806R for V4 region). Required for precise trimming. |

| Barcodes File | A text file linking sample names to their unique nucleotide barcode sequences. |

| Computing Resources | Multi-core Unix/Linux server or cluster with sufficient RAM (>16GB recommended) for alignment steps. |

| Metric | Typical Threshold/Value | Purpose |

|---|---|---|

| Maximum Ambiguous Bases (maxambig) | 0 | Removes sequences with any ambiguous base calls (N). |

| Maximum Homopolymer Length (maxhomop) | 8 | Filters sequences with homopolymers longer than specified length. |

| Minimum Sequence Length (minlength) | Varies by region (e.g., 250 for V4) | Removes truncated reads. |

| Maximum Sequence Length (maxlength) | Varies by region (e.g., 275 for V4) | Removes abnormally long reads. |

| Quality Score Window (qwindowaverage) | 35 | Minimum average quality score over a sliding window. |

| Alignment to Reference | >80% similarity to seed alignment | Retains sequences that align properly to the reference. |

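| Start/End Position for Alignment | Full-length SILVA reference: start=13862, end=23444; V4-trimmed SILVA reference (e.g., silva.v4.fasta): start=1968, end=11550. Greengenes coordinates differ and should be read from the summary.seqs output. | Trims alignment to consistent region for comparison. |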

Detailed Experimental Protocol

Part A: Initial Quality Control & Trimming

This section is common to both SILVA and Greengenes pathways.

- Create Stability Files: Combine your forward reads, reverse reads, and barcode files into a MOTHUR-compatible `stability.files` file.

- Merge Paired-End Reads: Merge forward and reverse reads into contigs. Output: `stability.trim.contigs.fasta` and a summary file.

- Screen Sequences: Apply initial quality filters based on length, ambiguous bases, and homopolymers.

- Remove Duplicate Sequences: Identify and group identical sequences to speed up downstream processing.

- Generate Count Table: Create a table linking sequence names to their abundance in each sample.

Part B: Alignment & Trimming (SILVA Pathway)

- Align to SILVA Seed: Align unique sequences to the SILVA reference alignment.

- Screen for Alignment Quality: Keep sequences that align within the expected region.

- Filter Alignment: Remove columns containing only gaps or terminal gaps to streamline data.

- Remove Redundancy: Identify unique sequences post-alignment.

Output: stability.trim.contigs.good.unique.good.filter.unique.fasta and an updated count table. This is the input for Step 2 (Pre-clustering).

Part C: Alignment & Trimming (Greengenes Pathway)

- Align to Greengenes Core: Align unique sequences to the Greengenes core reference alignment.

- Screen for Alignment Quality: Keep sequences that align within the expected region.

- Filter Alignment: Remove columns containing only gaps or terminal gaps.

- Remove Redundancy: Identify unique sequences post-alignment. Output: Filtered unique alignment and updated count table for Step 2.

Visualized Workflows

Title: MOTHUR Step 1 Workflow: QC to Alignment

Title: Sequence Alignment to Reference Database Logic

Application Notes

Within the MOTHUR Standard Operating Procedure (SOP) for microbiome analysis, Step 2 is a critical quality control and refinement phase. It follows initial sequence processing and precedes final clustering and taxonomic classification. The primary objectives are to reduce dataset complexity, identify and remove PCR-generated artificial sequences (chimeras), and correct for sequencing errors to generate accurate biological sequences (Zero-radius Operational Taxonomic Units, zOTUs). This step directly impacts downstream diversity metrics and ecological interpretations, making it essential for robust research in drug development and microbial ecology.

Table 1: Comparative Overview of Chimera Detection Algorithms

| Algorithm | Mode | Reference Database Required? | Primary Advantage | Typical Removal Rate in 16S Studies |

|---|---|---|---|---|

| UCHIME | de novo | No | Effective without reference | 5–20% |

| UCHIME | Reference | Yes (e.g., SILVA, RDP) | High accuracy with good reference | 10–30% |

| VSEARCH | de novo | No | Faster, open-source alternative to UCHIME | 5–20% |

| VSEARCH | Reference | Yes | High sensitivity | 10–30% |

Table 2: Impact of Pre-Clustering on Dataset Size

| Pre-Clustering Difference Threshold | Approximate Sequence Reduction | Computational Time | Risk of Merging Rare Biological Variants |

|---|---|---|---|

| 1 difference (0.2% divergence) | 10-25% | Low | Low |

| 2 differences (0.4% divergence) | 20-40% | Medium | Medium |

| 4 differences (0.8% divergence) | 30-60% | High | High |

Experimental Protocols

Protocol 1: Pre-Clustering in MOTHUR

Purpose: To reduce dataset size by merging nearly identical sequences, mitigating the impact of sequencing errors prior to chimera detection.

- Input: Quality-filtered FASTA file (final.fasta) and corresponding name or count file.

- Command: Execute the pre.cluster command using the diffs option to allow a specified number of differences (typically 1 or 2).

- Output: A new FASTA file (final.precluster.fasta) and associated files where sequences within diffs of a more abundant sequence are merged.
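The merging rule described in this protocol can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not MOTHUR's pre.cluster code: sequences are assumed to be aligned to equal length, abundances are pooled across samples, and every mismatched column counts as one difference.

```python
def count_diffs(a, b):
    """Number of mismatched columns between two equal-length aligned sequences."""
    return sum(1 for x, y in zip(a, b) if x != y)

def precluster(abundance, diffs=2):
    """Merge rare sequences into more abundant ones within `diffs` mismatches.

    abundance: dict mapping aligned sequence -> total read count.
    Returns a dict of surviving sequences with their merged counts.
    """
    # Visit sequences from most to least abundant (ties broken alphabetically)
    ordered = sorted(abundance, key=lambda s: (-abundance[s], s))
    merged, absorbed = {}, set()
    for i, seed in enumerate(ordered):
        if seed in absorbed:
            continue  # already merged into a more abundant sequence
        merged[seed] = abundance[seed]
        for other in ordered[i + 1:]:
            if other not in absorbed and count_diffs(seed, other) <= diffs:
                merged[seed] += abundance[other]
                absorbed.add(other)
    return merged

# Hypothetical abundances for illustration
abundance = {
    "AAAAAAAAAA": 100,
    "AAAAAAAAAT": 5,   # 1 difference from the dominant sequence
    "AAAAAAAATT": 3,   # 2 differences from the dominant sequence
    "CCCCCCCCCC": 2,
}
print(precluster(abundance, diffs=1))
print(precluster(abundance, diffs=2))
```

Comparing the two calls shows the trade-off from Table 2: a larger diffs value shrinks the dataset further but is more likely to fold a genuine rare variant into its abundant neighbor.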

Protocol 2: Reference-Based Chimera Detection with VSEARCH/UCHIME

Purpose: To identify and remove chimeric sequences by comparison against a curated reference database.

- Preparation: Download and format a reference database (e.g., SILVA v138) for MOTHUR compatibility.

- Command: Run the chimera.vsearch command in reference mode.

- Removal: Create a chimera-free sequence file using the remove.seqs command.

Protocol 3: Sequence Denoising with unoise3 (DADA2-like method in MOTHUR)

Purpose: To correct sequencing errors and infer biological sequences (zOTUs).

- Input: Chimera-checked, pre-clustered files.

- Command: Use the cluster.split command with the unoise algorithm. This requires a count table.

- Output: A list file containing zOTUs and a count_table with their abundances across samples. These replace traditional OTUs for downstream analysis.

Visualizations

Title: MOTHUR SOP Step 2 Workflow

Title: Chimera Formation in PCR

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Step 2

| Item | Function / Example | Critical Note |

|---|---|---|

| Curated Reference Database (e.g., SILVA, RDP, Greengenes) | Provides a collection of high-quality, non-chimeric sequences for reference-based chimera checking. | Version alignment (e.g., SILVA 138) with primer region is crucial for accuracy. |

| MOTHUR SOP-Compatible Script | A batch file (.batch) containing the sequential commands for Step 2, ensuring reproducibility. | Must be validated for specific sequencing platform (MiSeq, PacBio) and region (V4, V3-V4). |

| High-Performance Computing (HPC) Resources | Adequate CPU (multi-core) and RAM (>32GB) for memory-intensive steps like pre-clustering and VSEARCH. | De novo chimera detection is computationally more intensive than reference-based. |

| Sequence Count Table | Tracks abundances of unique sequences across samples post-quality filtering. Essential for denoising (unoise3) and maintaining quantitative integrity. | Replaces the older "name file" in modern MOTHUR workflows. |

| Negative Control Samples | Amplicons from template-free PCR or blank extractions. Used to identify potential contaminants that may persist through chimera checking. | Critical for diagnostic and drug development studies to distinguish signal from noise. |

Within the MOTHUR standard operating procedure (SOP) for 16S rRNA gene analysis, Step 3 is a critical decision point: clustering sequences into Operational Taxonomic Units (OTUs). This step directly influences downstream alpha and beta diversity metrics. Researchers must choose between two principal methodologies: distance-based clustering (e.g., average-neighbor, furthest-neighbor) and phylotype-based classification (e.g., genus-level binning). This Application Note details the protocols, comparative performance, and considerations for each method within the MOTHUR framework, aimed at ensuring reproducible and biologically interpretable microbiome research.

Comparative Analysis: Distance-Based vs. Phylotype Methods

The choice between methods involves trade-offs in sensitivity, computational demand, and biological resolution. The following table summarizes key quantitative comparisons based on recent benchmarking studies.

Table 1: Quantitative Comparison of OTU Picking Methods

| Parameter | Distance-Based Clustering (e.g., avg-neighbor) | Phylotype Method (e.g., Genus-level) |

|---|---|---|

| Primary Metric | Pairwise sequence dissimilarity (e.g., 0.03 = 97% similarity) | Taxonomic rank identity (e.g., same genus assignment) |

| Typical Threshold | 0.03 (Species-level) | Genus-level taxonomic assignment |

| Computational Demand | High (All-vs-all distance calculation required) | Low (Relies on pre-classified database) |

| Sensitivity to PCR/Sequencing Errors | Moderate (Can be mitigated with pre-clustering) | High (Errors may lead to misclassification) |

| Handling of Novel Diversity | Groups novel sequences based on similarity | Often bins novel sequences as "unclassified" |

| Common Downstream Metric | OTU-based (e.g., Chao1, Weighted UniFrac) | Taxon-based (e.g., Genus abundance, PCoA on Bray-Curtis) |

| Reported Inflation of Richness Estimates | Lower (when using optimized algorithms) | Higher (due to splitting of polymorphic taxa) |

Experimental Protocols

Protocol 1: Distance-Based OTU Clustering in MOTHUR

This protocol creates OTUs by clustering sequences based on pairwise genetic distances.

Materials & Pre-processing:

- Input Data: A high-quality, aligned sequence file (e.g., stability.trim.contigs.good.align from MOTHUR SOP Steps 1 & 2).

- Distance Matrix: Calculate a pairwise distance matrix for all sequences.

- Clustering Algorithm: Choose a clustering method. Average-neighbor is often recommended for balancing sensitivity and specificity.

- Clustering Command: Cluster sequences into OTUs at a 97% similarity (0.03 distance) threshold.

- OTU Classification: Assign taxonomy to the representative sequence of each OTU using a reference database (e.g., SILVA, RDP).

Output: An OTU shared file (stability.trim.contigs.good.an.unique_list.shared) and a consensus taxonomy file.
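The logic of this protocol (pairwise distances followed by average-neighbor clustering at a 0.03 cutoff) can be sketched in Python for a handful of sequences. This is a naive illustration, not MOTHUR's optimized implementation: the distance function is an assumption (mismatches divided by columns where both sequences have a base), and the all-vs-all approach shown here would not scale to real datasets.

```python
from itertools import combinations

def pairwise_distance(a, b):
    """Fraction of mismatches over columns where neither sequence has a gap."""
    compared = [(x, y) for x, y in zip(a, b) if x not in "-." and y not in "-."]
    if not compared:
        return 1.0
    return sum(x != y for x, y in compared) / len(compared)

def average_neighbor(seqs, cutoff=0.03):
    """Agglomerative average-linkage clustering of aligned sequences.

    seqs: dict mapping name -> aligned sequence.
    Returns a sorted list of OTUs, each a sorted list of sequence names.
    """
    dist = {frozenset(p): pairwise_distance(seqs[p[0]], seqs[p[1]])
            for p in combinations(seqs, 2)}
    clusters = [[name] for name in seqs]

    def linkage(c1, c2):
        pairs = [dist[frozenset((a, b))] for a in c1 for b in c2]
        return sum(pairs) / len(pairs)

    while len(clusters) > 1:
        # Find the closest pair of clusters under average linkage
        i, j = min(combinations(range(len(clusters)), 2),
                   key=lambda ij: linkage(clusters[ij[0]], clusters[ij[1]]))
        if linkage(clusters[i], clusters[j]) > cutoff:
            break  # nothing left within the cutoff
        clusters[i] = clusters[i] + clusters[j]
        del clusters[j]
    return sorted(sorted(c) for c in clusters)

# Hypothetical 100-column aligned sequences
seqs = {
    "s1": "A" * 100,
    "s2": "A" * 98 + "CC",       # 0.02 from s1
    "s3": "A" * 94 + "CCCCCC",   # 0.06 from s1, 0.04 from s2
    "s4": "G" * 100,
}
print(average_neighbor(seqs, cutoff=0.03))
```

Changing the cutoff argument shows directly how the threshold controls whether borderline sequences such as s3 join an existing OTU or form their own.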

Protocol 2: Phylotype (Taxonomic Binning) Classification in MOTHUR

This protocol assigns sequences directly to taxonomic groups, using the genus level as the default OTU equivalent.

Materials & Pre-processing:

- Input Data: The same high-quality, aligned sequence file.

- Taxonomic Classification: Classify each individual sequence against a reference database.

- Binning Sequences: Bin sequences into "phylotypes" based on their genus-level classification.

- Create Shared File: Generate a shared file from the phylotype list.

Output: A phylotype shared file (stability.trim.contigs.good.nr_v132.wang.tx.shared) where columns represent genus-level taxa.
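Conceptually, phylotype binning is a group-by on the genus field of each sequence's taxonomy string. The Python sketch below illustrates that idea; it assumes semicolon-delimited lineages with optional bootstrap values in parentheses and treats the sixth rank as genus, and the function names are hypothetical rather than part of MOTHUR.

```python
from collections import Counter, defaultdict

def genus_of(taxonomy):
    """Extract the genus (assumed to be the 6th rank) from a lineage string."""
    ranks = [r.split("(")[0] for r in taxonomy.strip(";").split(";")]
    return ranks[5] if len(ranks) >= 6 else "unclassified"

def phylotype_table(assignments):
    """Build a sample -> {genus: count} table.

    assignments: iterable of (sample, taxonomy_string), one entry per sequence.
    """
    shared = defaultdict(Counter)
    for sample, taxonomy in assignments:
        shared[sample][genus_of(taxonomy)] += 1
    return {sample: dict(counts) for sample, counts in shared.items()}

# Hypothetical classifications for illustration
blautia = ("Bacteria(100);Firmicutes(100);Clostridia(100);"
           "Clostridiales(100);Lachnospiraceae(100);Blautia(98);")
shallow = "Bacteria(100);Firmicutes(90);"
print(phylotype_table([("S1", blautia), ("S1", blautia), ("S2", shallow)]))
```

The second lineage shows the limitation noted in the comparison table: sequences that cannot be classified to genus fall into an "unclassified" bin regardless of how distinct they are from one another.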

Visualization of Methodological Workflows

Diagram 1: OTU Picking Decision Pathway in MOTHUR SOP

Diagram 2: Taxonomic Classification & Binning Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Computational Tools for OTU Picking

| Item | Function/Description | Example/Provider |

|---|---|---|

| Reference Database | Curated collection of aligned, classified sequences for taxonomic assignment. Critical for both methods. | SILVA, RDP, Greengenes |

| Alignment Template | A full-length 16S rRNA gene alignment used to align query sequences prior to distance calculation or classification. | SILVA SEED, MOTHUR-compatible aligned templates |

| Taxonomy File | Paired with the reference database, provides the taxonomic lineage for each reference sequence. | silva.nr_v132.tax |

| Count Table | Tracks the abundance of each unique sequence across samples, maintained from chimera removal. Essential for accurate abundance calculation. | MOTHUR count.file |

| High-Performance Computing (HPC) Cluster | Necessary for all-vs-all distance matrix calculations on large datasets (>100k sequences). | Local University HPC, Cloud (AWS, GCP) |

| MOTHUR Executable | The core software platform containing all commands for protocol execution. | www.mothur.org |

| Quality Score File (qfile) | Original per-base quality scores (e.g., from SFF or fastq). Used in earlier processing steps that feed into OTU picking. | Output from sequencing platform |

Application Notes

This protocol details the generation of the final analytical outputs within the MOTHUR SOP pipeline for 16S rRNA gene amplicon studies. This step transforms processed sequence data into biologically interpretable formats, integrating taxonomic and phylogenetic information with sample metadata to enable robust statistical analysis. The core outputs are the Operational Taxonomic Unit (OTU) table, the phylogenetic tree, and the integrated metadata file, which together form the foundation for downstream ecological and differential abundance analyses.

Core Outputs and Their Significance

OTU Table: A biological observation matrix (samples x OTUs) containing sequence counts, representing the abundance of each taxon in each sample. It is the primary data structure for calculating diversity metrics and testing hypotheses.

Phylogenetic Tree: A branching diagram inferring the evolutionary relationships among the OTUs. It is essential for phylogenetically-informed diversity metrics (e.g., UniFrac) and provides a framework for evolutionary interpretations of community differences.

Metadata Integration: The process of merging the OTU table with sample-associated experimental and clinical data (e.g., pH, treatment, disease state). This integration is critical for testing specific hypotheses about factors influencing microbiome composition.

Key Quantitative Outputs and Benchmarks

Table 1: Typical Output Metrics from MOTHUR Core Generation Steps

| Output Metric | Typical Range/Value | Interpretation & Impact |

|---|---|---|

| Final OTU Count | 100 - 10,000+ per study | Determines matrix complexity. High counts may indicate over-splitting; low counts may indicate over-aggressive clustering. |

| Total Sequences per Sample (Post-Clustering) | 1,000 - 50,000+ | Defines sampling depth for rarefaction. Low counts (<1,000) may limit detection of rare taxa. |

| Chimera Removal Rate | 1% - 20% | Proportion of pre-clustered sequences identified as chimeric. Rates >20% may indicate PCR artifacts. |

| Non-Bacterial/Archaea Sequences | <1% (for 16S primers) | Efficacy of primer specificity and post-processing filtering. High rates indicate off-target amplification. |

| Metadata Variables Integrated | 5 - 50+ variables | Number of clinical, environmental, or technical covariates linked to each sample for hypothesis testing. |

Experimental Protocol

Protocol: Generation of OTU Table and Phylogenetic Tree in MOTHUR

Objective: To cluster high-quality sequences into OTUs, assign taxonomy, generate a count table, and construct a phylogenetic tree.

Materials & Software:

- MOTHUR v.1.48.0 or later

- Processed sequence file (*.fasta)

- Corresponding name/group files

- Reference alignment database (e.g., SILVA SEED v.138)

- Reference taxonomy database (e.g., RDP training set v.18)

- Computing cluster or high-performance workstation (recommended 16+ GB RAM).

Procedure:

Cluster Sequences into OTUs:

This generates a *.list file and an updated count_table. The cutoff=0.03 parameter defines a 97% similarity threshold for OTU clustering.

Classify OTU Representative Sequences:

The get.oturep command selects the most abundant sequence as the representative for each OTU. classify.seqs assigns taxonomy using a Bayesian classifier.

Remove Non-Target Lineages:

This step removes sequences from chloroplasts, mitochondria, and other non-target domains to focus analysis on the bacterial/archaeal microbiome.

Generate Final OTU Table:

make.shared creates the OTU abundance table. classify.otu assigns consensus taxonomy to each OTU in the table.

Construct Phylogenetic Tree:

Representative sequences are aligned, filtered to conserved columns, and used to generate a distance matrix and a Newick-formatted phylogenetic tree (*.tre file).

Protocol: Metadata Integration and Curation

Objective: To merge sample metadata with the OTU table, ensuring data integrity for statistical analysis.

Procedure:

- Prepare Metadata File: Create a tab-separated text file where the first column header is "sample" and contains sample IDs exactly matching those in the OTU table. Subsequent columns contain metadata (e.g., pH, Treatment, DiseaseState).

- Integrate in MOTHUR: This command internally links metadata to samples. The shared file can be merged with taxonomy for export.

- Export for Downstream Analysis:

This creates a .tsv file that can be directly imported into statistical software (e.g., R, QIIME2). Alternatively, use write.otu and manually merge with metadata in R.
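Because the procedure depends on sample IDs matching exactly, it can help to check this explicitly before any statistics are run. The Python sketch below is one way to do so; the merge_metadata function and the dictionary layout are assumptions for illustration, not a MOTHUR feature.

```python
def merge_metadata(otu_counts, metadata):
    """Join per-sample OTU counts with per-sample metadata.

    otu_counts: dict sample -> {otu_label: count}
    metadata:   dict sample -> {variable: value}
    Raises ValueError if the two sets of sample IDs differ.
    """
    missing = sorted(set(otu_counts) - set(metadata))
    extra = sorted(set(metadata) - set(otu_counts))
    if missing or extra:
        raise ValueError(
            f"sample ID mismatch: no metadata for {missing}; no counts for {extra}"
        )
    # Metadata columns first, then OTU columns, one row per sample
    return {s: {**metadata[s], **otu_counts[s]} for s in otu_counts}

# Hypothetical inputs for illustration
otu_counts = {"S1": {"Otu001": 120, "Otu002": 30}, "S2": {"Otu001": 80, "Otu002": 95}}
metadata = {"S1": {"Treatment": "control", "pH": "6.5"},
            "S2": {"Treatment": "drug", "pH": "7.1"}}
print(merge_metadata(otu_counts, metadata))
```

Failing loudly on a mismatch is a deliberate choice here: silently dropping unmatched samples is a common source of errors that only surface later as unexpected group sizes.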

Diagrams

Title: MOTHUR workflow for generating core microbiome outputs.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Core Output Generation

| Item | Function & Rationale |

|---|---|

| SILVA SEED Database (v.138+) | A curated, aligned reference database for 16S/18S rRNA genes. Used for alignment and provides a consistent taxonomy framework for classification. |

| RDP Reference Files | Training sets for the Naïve Bayesian classifier. Required for the classify.seqs command to assign taxonomic identities to sequences. |

| MOTHUR Executable (v.1.48+) | The core software implementing the SOP. Later versions contain critical bug fixes and improved algorithms for clustering and chimera detection. |

| High-Performance Compute (HPC) Cluster | Clustering and tree-building are computationally intensive. An HPC with MPI support enables parallel processing, reducing runtime from days to hours. |

| Curated Metadata Template | A standardized spreadsheet template ensures consistent metadata collection, minimizing errors during integration. Should include controlled vocabulary for key variables. |

| R/Python Environment with phyloseq/qiime2 | Downstream statistical analysis and visualization of the core outputs (OTU table, tree, metadata) is most efficiently performed in these specialized environments. |

Application Notes

Within the MOTHUR SOP framework for microbiome research, downstream analysis transforms processed sequence data into biological insights. This step quantifies microbial diversity within (alpha) and between (beta) samples, followed by statistical hypothesis testing. These analyses are critical for drug development professionals to identify dysbiosis, assess treatment effects, and discover biomarker taxa.

The following metrics, calculated via the summary.single command, provide complementary views of within-sample richness and evenness.

| Metric | Type | Formula (Conceptual) | Interpretation | Typical Range (16S) |

|---|---|---|---|---|

| Observed Species | Richness | Sobs = Count of OTUs | Simple count of observed OTUs. Sensitive to sequencing depth. | 10s - 1000s |

| Chao1 | Richness | Schao1 = Sobs + F1² / (2 × F2) | Estimates total species richness, correcting for unseen species via singletons (F1) and doubletons (F2). | Higher than Sobs |

| Shannon Index | Diversity | H' = -Σ (pi ln(pi)) | Combines richness and evenness. Increases with more species and more equal abundances. | 1.5 - 7+ |

| Inverse Simpson | Diversity | 1/D, where D = Σ pi² | Weighted towards dominant species. Less sensitive to rare species. | 1 - 100s |

| Good's Coverage | Sequencing Depth | C = 1 - (n1 / N) | Proportion of sequences from non-singleton OTUs. Estimates completeness. | >0.97 acceptable |
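The conceptual formulas in the table can be checked by hand on a small vector of OTU counts. The Python sketch below implements them as written above; it is not MOTHUR's code, and MOTHUR's own calculators may apply bias corrections, so values can differ slightly. The fallback used when there are no doubletons is an assumption added to avoid division by zero.

```python
import math

def alpha_diversity(counts):
    """Compute the table's alpha diversity metrics for one sample.

    counts: list of per-OTU sequence counts (zeros are ignored).
    """
    counts = [c for c in counts if c > 0]
    n = sum(counts)                 # total sequences, N
    sobs = len(counts)              # observed OTUs
    f1 = counts.count(1)            # singletons
    f2 = counts.count(2)            # doubletons
    if f2 > 0:
        chao1 = sobs + f1 ** 2 / (2 * f2)
    else:
        # Assumed fallback (bias-corrected form) when F2 = 0
        chao1 = sobs + f1 * (f1 - 1) / 2
    p = [c / n for c in counts]     # relative abundances
    return {
        "sobs": sobs,
        "chao1": chao1,
        "shannon": -sum(pi * math.log(pi) for pi in p),
        "invsimpson": 1 / sum(pi ** 2 for pi in p),
        "coverage": 1 - f1 / n,
    }

# Hypothetical sample: 6 OTUs, 21 sequences, 2 singletons, 2 doubletons
print(alpha_diversity([10, 5, 2, 2, 1, 1]))
```

For a perfectly even community such as [5, 5, 5, 5], the Shannon index equals ln(4) and the inverse Simpson index equals 4, which is a useful sanity check on any implementation.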

Beta diversity measures dissimilarity between microbial communities. MOTHUR generates distance matrices (e.g., using Bray-Curtis, ThetaYC) which are then visualized and tested.

| Analysis | Primary MOTHUR Command | Key Outputs | Common Statistical Test (in MOTHUR/R) |

|---|---|---|---|

| Principal Coordinates Analysis (PCoA) | pcoa | Eigenvalues, eigenvectors (coordinates) | Visual clustering assessment; PERMANOVA (anosim, adonis in R) |

| Non-Metric Multidimensional Scaling (NMDS) | nmds | Stress value, NMDS coordinates | Lower stress (<0.2) indicates better representation; PERMANOVA |

| Cluster Analysis | cluster | Tree file (e.g., .phylip.tre) | Similarity Profile test (simprof), AMOVA (amova) |
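For readers who want to see what the Bray-Curtis and ThetaYC distances mentioned above measure, the following Python sketch computes both for a pair of samples. It follows the standard textbook definitions and is an illustration only; the function names are hypothetical, and the Bray-Curtis example assumes counts have already been subsampled to a common depth.

```python
def bray_curtis(a, b):
    """Bray-Curtis dissimilarity between two vectors of OTU counts."""
    shared = sum(min(x, y) for x, y in zip(a, b))
    return 1 - 2 * shared / (sum(a) + sum(b))

def theta_yc(a, b):
    """Yue and Clayton theta dissimilarity, computed on relative abundances."""
    p = [x / sum(a) for x in a]
    q = [y / sum(b) for y in b]
    pq = sum(x * y for x, y in zip(p, q))
    theta = pq / (sum(x * x for x in p) + sum(y * y for y in q) - pq)
    return 1 - theta

# Hypothetical samples with counts for the same two OTUs
identical = ([5, 5], [5, 5])
disjoint = ([10, 0], [0, 10])
shifted = ([6, 4], [4, 6])
for name, (a, b) in [("identical", identical), ("disjoint", disjoint), ("shifted", shifted)]:
    print(name, round(bray_curtis(a, b), 3), round(theta_yc(a, b), 3))
```

Both measures run from 0 (identical communities) to 1 (no shared OTUs), but they weight abundant and rare OTUs differently, which is why ordinations built from each can look different.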

Experimental Protocols

Protocol: Comprehensive Alpha and Beta Diversity Analysis Pipeline

Objective: To calculate, visualize, and statistically compare microbial diversity across sample groups.

Input: A shared file (e.g., final.an.shared) and a consensus taxonomy file (e.g., final.an.cons.taxonomy).

Procedure:

- Calculate Distance Matrix:

Generates: final.an.braycurtis.square.dist

- Alpha Diversity Calculation:

Uses subsampling to normalize effort. Output: .groups.ave-std.summary

- Beta Diversity Ordination (PCoA & NMDS):

Check NMDS stress. Iterate with nmds(..., maxdim=3) if stress >0.2. Outputs: .pcoa.axes, .nmds.axes.

- Visualization & Statistical Testing (in R):

- Import axes and metadata into R.

- Plot PCoA/NMDS using ggplot2.

- Perform PERMANOVA using vegan::adonis2 on the distance matrix to test for group differences.

Protocol: Statistical Comparison of Alpha Diversity

Objective: Test if alpha diversity differs significantly between pre-defined sample groups (e.g., Treatment vs. Control).

Procedure:

- Extract the shannon column from the .groups.ave-std.summary file.

- In statistical software (R, GraphPad Prism), perform:

- Normality Test: Shapiro-Wilk test on each group.

- Parametric Test: If data are normal and homoscedastic, use ANOVA (for >2 groups) or t-test (for 2 groups).

- Non-Parametric Test: Otherwise, use Kruskal-Wallis (>2 groups) or Wilcoxon rank-sum (2 groups).

Visualizations

Title: MOTHUR Downstream Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in MOTHUR Downstream Analysis |

|---|---|

| MOTHUR Software (mothur) | Core platform for executing all commands for distance calculation, alpha/beta diversity, and ordination. |

| R Statistical Environment with vegan package | Essential for advanced statistical testing (PERMANOVA, SIMPER) and high-quality publication-grade visualizations of ordination results. |

| GraphPad Prism / SPSS | Alternative software for performing standard statistical tests on exported alpha diversity indices (t-test, ANOVA). |

| Subsampled / Rarefied OTU Table | Input file normalized to an even sequencing depth per sample, crucial for fair alpha/beta diversity comparisons. |

| Bray-Curtis Dissimilarity Matrix | A beta diversity distance matrix quantifying compositional differences between all sample pairs; the primary input for ordination and PERMANOVA. |

| Sample Metadata File (.txt/.csv) | Tabular data linking sample IDs to experimental groups (e.g., treatment, timepoint, phenotype) for statistical grouping and plot coloring. |

Solving Common MOTHUR Pitfalls: Error Messages, Parameter Tuning, and Best Practices

Decoding Common MOTHUR Error Messages and How to Fix Them

Within the MOTHUR Standard Operating Procedure (SOP) for microbiome data research, error messages are a critical feedback mechanism. This document provides Application Notes and Protocols for diagnosing and resolving frequent errors, ensuring analytical reproducibility and pipeline integrity for researchers, scientists, and drug development professionals.

Common Errors, Causes, and Solutions

Table 1: Summary of common MOTHUR errors, their primary causes, and recommended fixes.

| Error Message / Symptom | Likely Cause | Solution Protocol |

|---|---|---|

| ERROR: ...unable to open file... or file not found | Incorrect file path, missing file, or permission issue. | Protocol 1.1: Verify the file exists at the specified location using system("ls /path/to/file") within MOTHUR. Ensure no typos. Use absolute paths. Check read permissions. |

| ERROR: Your sequence...is not the same length as... | Sequence length mismatch within an alignment or group file. | Protocol 1.2: Use summary.seqs(fasta=your.fasta) to check sequence length distribution. Re-run align.seqs() with consistent parameters or screen sequences with screen.seqs() to enforce length homogeneity. |

| ERROR: The names in your ...file do not match... | Mismatch in sequence names between files (e.g., fasta, group, names). | Protocol 1.3: Use count.groups(group=your.groupfile) and summary.seqs(fasta=your.fasta, name=your.names) to audit files. Correct using make.shared() with consistent name= and group= inputs, or regenerate problematic files from raw data. |

| ERROR: ...matrix is not square | Distance matrix file is corrupted or incorrectly formatted. | Protocol 1.4: Recalculate the matrix. Ensure the phylip file is not opened/edited in a plain text editor. Re-run dist.seqs() or cluster() commands with verified input files. |

| WARNING: ...could not find a group for sequence... | A sequence in the fasta or name file has no corresponding entry in the group or count file. | Protocol 1.5: This is often non-fatal. MOTHUR will exclude the orphan sequence. To resolve, ensure your group= or count= file is generated from the same set of sequences using make.group() or count.seqs(). |

| segmentation fault (core dumped) | Memory/Resource issue, binary incompatibility, or severe data corruption. | Protocol 1.6: 1) Check system memory. 2) Simplify dataset (e.g., subsample). 3) Re-install MOTHUR from source or update to latest version. 4) Check for special characters in file names or sequence labels. |

| ERROR: ...not a candidate for removing... in chimera.uchime | Incorrect input format for the uchime command. | Protocol 1.7: The fasta= input must be the name of an aligned fasta file. Ensure alignment has been performed prior to chimera checking. Use chimera.uchime(fasta=silva.v4.align, template=silva.gold.align) format. |

Detailed Experimental Protocols

Protocol 1.2: Resolving Sequence Length Mismatch Errors

- Diagnose: Run summary.seqs(fasta=stability.trim.contigs.good.fasta).

- Identify Outliers: Note the minimum and maximum sequence lengths. A large spread indicates an issue.

- Screen Sequences: Execute screen.seqs(fasta=stability.trim.contigs.good.fasta, group=stability.contigs.good.groups, maxambig=0, maxlength=275, minlength=230).

- Re-align: Re-run align.seqs() using the screened fasta file.

- Verify: Re-run summary.seqs() on the aligned file to confirm uniform length.

Protocol 1.3: Fixing Name Mismatches Between Files

- Audit: Generate a count table from raw data: make.contigs(file=stability.files) followed by count.seqs(name=stability.names, group=stability.groups).

- Use Count Table: In all downstream commands, use the count=stability.trim.contigs.good.count_table file instead of a group file.

- Consistent Inputs: For make.shared(), use: make.shared(count=stability.trim.contigs.good.count_table, label=0.03).

- Principle: The count table is the authoritative source for sequence-group relationships and minimizes mismatch errors.
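When a name mismatch is suspected, it is often quicker to list the offending names than to regenerate every file. The Python sketch below compares the sequence names in a FASTA file with those in a tab-delimited count table. It is a diagnostic aid under stated assumptions, not a MOTHUR command: it assumes the count table has a single header row, that the first column holds sequence names, and that any lines beginning with "#" can be skipped.

```python
def audit_names(fasta_text, count_table_text):
    """Return (names missing from the count table, names missing from the fasta)."""
    fasta_names = {
        line[1:].split()[0]
        for line in fasta_text.splitlines()
        if line.startswith(">")
    }
    rows = [
        line.split("\t")
        for line in count_table_text.splitlines()
        if line.strip() and not line.startswith("#")
    ]
    table_names = {row[0] for row in rows[1:]}  # rows[0] is the header
    return sorted(fasta_names - table_names), sorted(table_names - fasta_names)

# Hypothetical file contents for illustration
fasta_text = ">seq1\nACGT\n>seq2\nACGT\n>seq3\nTTTT\n"
count_table_text = (
    "Representative_Sequence\ttotal\tS1\tS2\n"
    "seq1\t3\t2\t1\n"
    "seq4\t1\t0\t1\n"
)
not_in_table, not_in_fasta = audit_names(fasta_text, count_table_text)
print("In fasta but not count table:", not_in_table)
print("In count table but not fasta:", not_in_fasta)
```

In practice the two text arguments would be read from the files in question; names appearing on only one side usually point to a file produced at a different pipeline step.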

MOTHUR Error Diagnosis Workflow Diagram

Diagram Title: MOTHUR Error Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and computational tools for MOTHUR SOP troubleshooting.

| Item | Function in MOTHUR SOP Troubleshooting |

|---|---|

| Reference Alignment Database (e.g., SILVA, RDP, Greengenes) | Provides the template for align.seqs(). Mismatched version between database and taxonomy files is a common source of downstream errors. |

| High-Quality Metadata File | A correctly formatted text file linking samples to group names. Essential for generating correct group or count files. |

| Count Table File (*.count_table) | Superior to a simple group file as it tracks redundancy. The central file for resolving name mismatch errors (Protocol 1.3). |

| Sequence Summary Output (summary.seqs()) | Diagnostic tool for identifying sequence length outliers and total number of sequences, critical for Protocol 1.2. |

| Subsampled Dataset | A smaller, representative fasta file used to test pipeline steps and isolate memory/resource errors (Protocol 1.6). |

| System Monitoring Tool (e.g., top, htop) | Monitors memory (RAM) and CPU usage during MOTHUR execution to diagnose segmentation fault causes. |

| Version-Controlled Scripts | Records all exact commands and parameters used, enabling reproducible error diagnosis and correction. |

MOTHUR File Dependency Pathway Diagram

Diagram Title: MOTHUR SOP Core File Dependencies

Conclusion: Systematic diagnosis using these protocols, within the framework of the MOTHUR SOP, minimizes analytical downtime. Adherence to the file dependency pathway and consistent use of a count table are paramount for robust microbiome analysis in drug development and research.

Within the Mothur Standard Operating Procedure (SOP) for microbiome analysis, the optimization of bioinformatic preprocessing parameters is critical for generating accurate, reproducible, and biologically meaningful results. This Application Note details the experimental protocols for determining optimal settings for three foundational parameters: trim length for sequence quality control, cluster cutoff for Operational Taxonomic Unit (OTU) or Amplicon Sequence Variant (ASV) formation, and alignment thresholds for positioning sequences within a reference alignment. Proper calibration of these parameters directly influences downstream diversity metrics, statistical power, and the fidelity of ecological inferences, which are paramount for researchers and drug development professionals investigating microbiome-disease relationships or therapeutic interventions.

Parameter Definitions & Impact Analysis

Trim Length

Definition: The position within sequencing reads after which all subsequent bases are removed. This is determined by evaluating per-base sequence quality.

Impact: Over-trimming sacrifices valid data and reduces read overlap for paired-end sequences, while under-trimming retains low-quality bases that introduce errors and inflate diversity estimates.

Key Consideration: For paired-end reads, the final trim length must ensure a minimum overlap (e.g., ≥20 bp) for reliable merging.
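The overlap constraint is simple arithmetic: the expected overlap is the sum of the two trimmed read lengths minus the amplicon length. The Python sketch below applies that rule to a list of candidate trim lengths. The amplicon lengths used are hypothetical example values, and the function names are illustrative only.

```python
def expected_overlap(forward_len, reverse_len, amplicon_len):
    """Bases shared by the two reads once they are laid over the amplicon."""
    return forward_len + reverse_len - amplicon_len

def trims_allowing_merge(candidate_trims, amplicon_len, min_overlap=20):
    """Keep trim lengths (applied to both reads) that leave enough overlap."""
    return [
        t for t in candidate_trims
        if expected_overlap(t, t, amplicon_len) >= min_overlap
    ]

# Hypothetical example: a short amplicon with 2x250 reads overlaps almost fully
print(expected_overlap(250, 250, 253))

# Hypothetical example: a longer amplicon leaves little room for trimming
print(trims_allowing_merge([200, 225, 240, 250, 275], amplicon_len=460))
```

For long amplicons the calculation shows why aggressive quality trimming can make merging impossible even when the remaining bases are of high quality.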

Cluster Cutoff

Definition: The similarity threshold (e.g., 0.03 for 97% similarity) used to group sequences into OTUs or for denoising to form ASVs.

Impact: A stricter cutoff (e.g., 0.01) increases apparent diversity and may separate ecologically similar variants, while a more relaxed cutoff (e.g., 0.05) decreases diversity and may combine distinct taxa.

Key Consideration: The choice between OTU (with a defined cutoff like 0.03) and ASV methods (which implicitly use a cutoff of ~0) represents a fundamental methodological decision influencing resolution and reproducibility.

Alignment Thresholds

Definition: Parameters governing how sequences are aligned to a reference database (e.g., SILVA) and which alignments are retained. This includes the ksize for k-mer word matching and the minimum search score or similarity required to keep an aligned sequence.

Impact: Poor alignment leads to misplacement of sequences, inaccurate phylogenetic tree construction, and erroneous taxonomic assignment. Chimera detection is highly sensitive to alignment parameters.

Key Consideration: Alignment must balance specificity (avoiding misalignment) with sensitivity (allowing for novel but valid diversity).

Table 1: Typical Parameter Ranges and Defaults in Mothur SOP

| Parameter | Typical Test Range | Common Default in 16S rRNA SOP | Primary Impact Metric |

|---|---|---|---|

| Trim Length | 200-350 bp (V4 region) | Quality Score (Q) ≥ 20, length ≥ 250 bp | Number of sequences retained, Error rate |

| Cluster Cutoff (for OTUs) | 0.01 - 0.05 | 0.03 (97% similarity) | Number of OTUs, Alpha Diversity (Shannon, Chao1) |

| Alignment Minimum Similarity | 80-95% | 80% (minscore=80, applied in screen.seqs using the align.seqs report) | Percentage of sequences aligned, Chimera detection rate |

| k-mer Size for Alignment | 8, 9, 10 | 8 (ksize=8, the align.seqs default) | Alignment speed and accuracy |

Table 2: Example Optimization Outcomes from a Mock Community Study

| Parameter Set (Trim/Cluster/Align Score) | Sequences Retained (%) | OTUs Generated | Known Species Recovered | Observed/Expected Chao1 Ratio |

|---|---|---|---|---|

| 250 bp / 0.03 / 80 | 95% | 105 | 18/20 | 1.05 |

| 275 bp / 0.03 / 80 | 88% | 98 | 18/20 | 1.02 |

| 250 bp / 0.01 / 80 | 95% | 310 | 20/20 | 1.55 |

| 250 bp / 0.03 / 90 | 92% | 101 | 17/20 | 1.10 |

Detailed Experimental Protocols

Protocol 4.1: Systematic Optimization of Trim Length

Objective: To determine the optimal trim position that maximizes retained high-quality sequence data.

Materials: Fastq files from a representative subset (≥ 10,000 reads) of your study.

Procedure:

- Generate Quality Summary: Run mothur > summary.seqs(fasta=current) on the raw fasta file to view initial length distribution.

- Create Quality Profiles: Use mothur > fastq.info() or external tools like FastQC to visualize per-base quality scores.

- Iterative Trimming & Evaluation:

a. Start at a conservative length (e.g., 200 bp for 2x250bp V4 reads) and run the trimming step at that length.

b. Incrementally increase the minlength parameter (e.g., 225, 250, 275 bp).

c. After each trim, re-run summary.seqs and record: i) total sequences retained, ii) mean sequence length.

d. For paired-end data, merge reads after trimming: make.contigs(ffastq=forward.trim.fastq, rfastq=reverse.trim.fastq). Record the percentage of successfully merged reads.

- Decision Point: Plot sequences retained (%) vs. trim length. Choose the longest trim length before a sharp drop in retained/merged reads (the "elbow" of the curve).

Protocol 4.2: Empirical Determination of Cluster Cutoff

Objective: To select a clustering cutoff that best reflects the known biology of a control sample.

Materials: Processed sequences from a well-characterized mock microbial community.

Procedure:

- Process Mock Community: Following the Mothur SOP, process the mock community sequences through chimera removal (`chimera.vsearch`) and pre-clustering (`pre.cluster`).
- Iterative Clustering:
  a. Cluster sequences at a range of cutoffs (e.g., 0.01, 0.02, 0.03, 0.04, 0.05) using the `cluster.split` or `dist.seqs`/`cluster` method.
  b. For each cutoff, generate a shared file and calculate alpha diversity (e.g., `summary.single(calc=chao-shannon)`).
- Evaluate Against Ground Truth:
  a. Classify the OTU/ASV representatives (`classify.seqs`) and compare to the known mock community composition.
  b. Calculate performance metrics: i) Number of expected species detected, ii) Ratio of observed to expected OTUs/ASVs, iii) False positive rate (unexpected taxa).
- Decision Point: The optimal cutoff minimizes false positives while maximizing recovery of expected species. The 0.03 cutoff is often optimal for genus-level analysis but may vary with study aims.
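The three performance metrics can be computed from the classified taxa with a few set operations. This is a minimal sketch under stated assumptions: the function name `evaluate_against_mock` is hypothetical, the taxon names are placeholders rather than a real mock community, and the false positive rate is defined here as the fraction of observed taxa that are not in the expected list.

```python
def evaluate_against_mock(observed_taxa, expected_taxa, n_otus):
    """Compare classified taxa from one cutoff against the known mock composition."""
    observed = set(observed_taxa)
    expected = set(expected_taxa)
    detected = observed & expected      # expected taxa that were recovered
    unexpected = observed - expected    # taxa that should not be present
    return {
        "expected_detected": len(detected),
        "expected_total": len(expected),
        # Ratio of OTUs generated to the number of expected members.
        "otu_ratio": n_otus / len(expected),
        # Assumed definition: share of observed taxa that are unexpected.
        "false_positive_rate": len(unexpected) / len(observed) if observed else 0.0,
    }


# Placeholder taxa; substitute the composition sheet of your own mock standard.
expected = ["Bacillus", "Listeria", "Escherichia", "Salmonella"]
observed = ["Bacillus", "Listeria", "Escherichia", "Ralstonia"]
print(evaluate_against_mock(observed, expected, n_otus=6))
```

Running this once per cutoff gives a small table from which the cutoff with the fewest false positives and the most recovered members can be read off.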

Protocol 4.3: Calibration of Alignment Parameters

Objective: To establish alignment thresholds that ensure accurate placement without excessive data loss.

Materials: A high-quality, non-redundant reference alignment (e.g., SILVA SEED) and a subset of study sequences.

Procedure:

- Align with Defaults: Perform an initial alignment: `align.seqs(fasta=study.fasta, reference=silva.seed.align, ksize=9, minscore=80)`.
- Vary `minscore`: Repeat alignment varying `minscore` (e.g., 70, 80, 85, 90). For each run, record the percentage of sequences that align (`summary.seqs`).
- Vary `ksize`: With the optimal `minscore`, test different `ksize` values (8, 9, 10). Monitor alignment time and the "search" column in `summary.seqs` (sequences needing full dynamic programming).
- Assess Chimera Detection: Run `chimera.vsearch` on each resulting alignment. The rate of chimeras detected should be consistent (typically 5-30% for noisy data) and not spike anomalously with parameter changes.
- Decision Point: Choose the highest `minscore` that aligns >95% of your sequences and the `ksize` that provides a reasonable balance of speed (higher ksize) and sensitivity (lower ksize).
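The first half of the decision point (the highest `minscore` that still aligns more than 95% of sequences) reduces to a simple filter over the recorded results. A minimal sketch follows; the function name `choose_minscore` and the percentages are hypothetical and only illustrate the selection rule.

```python
def choose_minscore(aligned_pct_by_minscore, min_aligned=95.0):
    """Return the highest minscore whose aligned percentage exceeds min_aligned.

    Returns None when no tested value meets the target.
    """
    passing = [score for score, pct in aligned_pct_by_minscore.items()
               if pct > min_aligned]
    return max(passing) if passing else None


# Hypothetical percentages of sequences aligned at each tested minscore.
aligned_pct = {70: 99.2, 80: 98.5, 85: 96.4, 90: 92.0}
print(choose_minscore(aligned_pct))
```

The `ksize` choice is left out on purpose, since it trades run time against sensitivity and needs timing data from your own hardware.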

Visualizations

Diagram 1: Mothur SOP Workflow with Key Optimization Points

Diagram 2: Parameter Optimization Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for Parameter Optimization

| Item | Function in Optimization | Example/Note |

|---|---|---|

| Mock Microbial Community (DNA) | Gold standard for validating cluster cutoff and overall pipeline accuracy. | ZymoBIOMICS Microbial Community Standard, ATCC MSA-1003. |

| High-Quality Reference Alignment | Essential baseline for aligning sequences and tuning alignment parameters. | SILVA SSU NR SEED alignment (mothur-compatible). |

| Mothur Software Package | Primary platform for executing all protocols and analyses. | Version 1.48.0 or later. |

| FastQC | Provides initial visualization of per-base sequence quality to inform trim length. | Used prior to or in conjunction with Mothur. |

| Computational Cluster/HPC Access | Necessary for running iterative parameter tests on large datasets in a feasible time. | Local SLURM cluster or cloud computing (AWS, GCP). |

| R with vegan & phyloseq | For post-Mothur visualization of results (alpha/beta diversity) to assess parameter impact. | Critical for making final optimization decisions. |

| Custom Perl/Python Scripts | To automate the iterative running of Mothur batches and collate summary statistics. | Enables systematic high-throughput parameter testing. |

Within the MOTHUR standard operating procedure for microbiome research, analyzing amplicon sequences from large-scale studies (e.g., Earth Microbiome Project, human gut meta-studies) can involve datasets that reach the terabyte scale. Efficient memory and compute management is therefore a prerequisite for deriving robust ecological inferences, such as alpha/beta diversity metrics and taxonomic classification, which form the core of downstream hypothesis testing in therapeutic development.

Core Principles & Quantitative Benchmarks

Table 1: Memory Footprint of Common MOTHUR Operations on a 10 Million Read Dataset (~1.5 GB FASTQ)

| Operation | Approx. RAM Use (GB) | Approx. Disk I/O (GB) | Key Efficiency Factor |

|---|---|---|---|

| make.contigs (paired-end) | 4-6 | 10 | Number of processors, batch size |

| screen.seqs/filter.seqs | 2-3 | 5 | Use of maxambig, maxhomop parameters |

| align.seqs (vs. SILVA) | 8-12 (high) | 15+ | Reference database pre-loading, flip=t |

| pre.cluster | 6-8 | 8 | diffs parameter setting |

| chimera.uchime | 5-7 | 7 | Dereplication step prior |

| classify.seqs (Bayesian) | 4-6 | 10 | Training set size, probs=f for speed |

| cluster.split (distance matrix) | 15-25+ (very high) | 30+ | Critical: Use cluster=f method over classic |

Detailed Experimental Protocols

Protocol 3.1: Memory-Efficient Sequence Processing Workflow

Aim: To process raw MiSeq reads into chimera-checked sequences with minimal RAM overhead.

- Batch Processing with `make.contigs`:
  - Split sample file list (`stability.files`) into batches of 50-100 samples.
  - Run: `mothur "#make.contigs(file=subset_files, processors=8, batch=100)"`
  - Rationale: Prevents loading all raw data simultaneously.
- Streamlined Filtering & Alignment:
  - Concatenate batch outputs, then run: `screen.seqs(fasta=current, group=current, maxambig=0, maxlength=275)`
  - Align using the kmer-based search shortcut: `align.seqs(fasta=current, reference=silva.v4.align, flip=t, ksize=9, processors=12)`
  - Filter alignment: `filter.seqs(fasta=current, vertical=T, trump=., processors=12)`
- Dereplication Before Chimera Checking:
  - Dereplicate: `unique.seqs(fasta=current)`
  - Run pre-clustering to reduce noise: `pre.cluster(fasta=current, count=current, diffs=2)`
  - Crucial step: Run UCHIME on dereplicated data: `chimera.uchime(fasta=current, count=current, dereplicate=t, processors=12)`
  - Remove chimeras: `remove.seqs(accnos=current, fasta=current, count=current)`
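Splitting the sample file list into batches is easy to script. The sketch below assumes the usual mothur `file` layout of one sample per line (sample name, forward fastq, reverse fastq); the helper names `split_file_list` and `write_batches` and the `batch_NNN.files` naming scheme are hypothetical.

```python
from pathlib import Path


def split_file_list(lines, batch_size=100):
    """Group non-empty lines of a mothur file list into batches of batch_size."""
    rows = [line for line in lines if line.strip()]
    return [rows[i:i + batch_size] for i in range(0, len(rows), batch_size)]


def write_batches(file_list_path, out_dir, batch_size=100):
    """Write each batch to out_dir/batch_NNN.files and return the new paths."""
    lines = Path(file_list_path).read_text().splitlines()
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    paths = []
    for n, batch in enumerate(split_file_list(lines, batch_size), start=1):
        path = out_dir / f"batch_{n:03d}.files"
        path.write_text("\n".join(batch) + "\n")
        paths.append(path)
    return paths


# In-memory demonstration with 250 made-up samples (no files are touched).
demo_lines = [f"S{i}\tS{i}_R1.fastq\tS{i}_R2.fastq" for i in range(1, 251)]
batches = split_file_list(demo_lines, batch_size=100)
print([len(b) for b in batches])
```

Each resulting file can then be passed to `make.contigs(file=...)` in its own job, for example as one element of a scheduler job array.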

Protocol 3.2: Computational Efficiency for OTU Clustering & Taxonomy

Aim: To generate OTU tables and taxonomy assignments for large datasets without generating massive distance matrices in RAM.

- `cluster.split` Method (Recommended):
  - Generate a rough taxonomic classification: `classify.seqs(fasta=current, count=current, reference=trainset_v138, taxonomy=trainset_v138.tax, probs=f, processors=12)`
  - Use taxonomy to split input for parallel clustering: `cluster.split(fasta=current, count=current, taxonomy=current, splitmethod=classify, taxlevel=4, cutoff=0.03, processors=24)`
  - Rationale: Avoids all-vs-all distance calculation; splits data into phylogenetic groups.
- Alternative `cluster` with `cluster.fast` algorithm:
  - If split method is unsuitable, use: `dist.seqs(fasta=current, cutoff=0.03, processors=24)` followed by `cluster(column=current, count=current, method=average, cutoff=0.03)`
  - Warning: `dist.seqs` on >1M sequences requires significant temporary disk space (~2TB for 1M seqs).
- Final Classification & Downstream:
  - Get OTU taxonomy: `classify.otu(list=current, count=current, taxonomy=current, label=0.03)`
  - For efficiency, subsample for beta-diversity: `sub.sample(shared=current, label=0.03, size=10000)`
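The benefit of splitting by taxonomy can be seen from the number of pairwise comparisons alone, since n sequences give n(n-1)/2 pairs. The following sketch works through that arithmetic; the group sizes are hypothetical and real splits at `taxlevel=4` are usually far less even.

```python
def n_pairs(n):
    """Number of unordered sequence pairs among n sequences: n(n-1)/2."""
    return n * (n - 1) // 2


total_seqs = 1_000_000
# Hypothetical sizes of the taxonomic groups produced by the split.
group_sizes = [400_000, 300_000, 200_000, 100_000]

all_vs_all = n_pairs(total_seqs)
within_groups = sum(n_pairs(size) for size in group_sizes)

print(f"all-vs-all pairs:   {all_vs_all:,}")
print(f"within-group pairs: {within_groups:,}")
print(f"fraction of work:   {within_groups / all_vs_all:.2f}")
```

With these made-up sizes the split needs roughly 30% of the comparisons, and each group can be processed independently, which is what lets the work spread across processors.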

Visualization of Workflows

Diagram Title: MOTHUR Efficient vs. Memory-Intensive Workflow Paths

Diagram Title: Data Flow & Memory Hierarchy in Large MOTHUR Runs

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Resources for MOTHUR on Large Datasets

| Item | Function/Utility | Key Consideration for Large Data |

|---|---|---|

| High-Speed Network-Attached Storage (NAS) | Central repository for raw FASTQ files and shared reference databases. | Minimizes data transfer time; use RAID 6 for redundancy. |

| Local NVMe SSD (≥2TB) | Temporary "scratch" space for I/O-intensive operations (distance matrices, alignment). | Prevents network latency; critical for dist.seqs. |

| Server-Grade CPU (≥24 cores) | Parallel processing for processors= parameter in MOTHUR commands. | Enables cluster.split and parallelized align.seqs. |

| RAM (≥128 GB recommended) | Holds reference databases, sequence objects, and distance matrix chunks in memory. | Prevents disk swapping; essential for align.seqs with full SILVA. |

| Job Scheduler (e.g., SLURM, SGE) | Manages cluster resources, queues long jobs, and handles job arrays for batch processing. | Essential for reproducible, scalable HPC use. |

| MOTHUR count file | Tracks sequence counts per sample after each step, rather than duplicating sequences. | Drastically reduces memory vs. using name files. |

| Trimmed Reference Alignment (e.g., SILVA V4 region) | Subset of full-length SILVA aligned to your target amplicon region (e.g., V4). | Reduces align.seqs RAM use by >60%. |

| Custom Perl/Python Wrapper Scripts | Automates batch splitting, job submission, and log file parsing. | Reduces manual error and improves reproducibility. |

Addressing Contamination and Batch Effects in Your MOTHUR Analysis

Contamination from laboratory reagents and batch effects introduced during sample processing are critical confounders in microbiome studies. Within the MOTHUR Standard Operating Procedure (SOP) framework, proactive identification and statistical correction of these issues are mandatory for generating biologically valid conclusions. Failure to address them can lead to spurious associations and irreproducible results, especially in translational drug development research.

Table 1: Common Sources of Contamination and Batch Effects

| Source Type | Specific Example | Typical Impact on Data | Recommended Mitigation in MOTHUR |

|---|---|---|---|

| Reagent Contamination | DNA extraction kits, PCR master mix | Introduction of non-biological sequences (e.g., Pseudomonas, Bradyrhizobium) | Use of negative control samples; post-sequencing subtraction. |

| Batch Effects | Different PCR runs, sequencing lanes, extraction dates | Increased within-batch similarity vs. between-batch similarity, overshadowing biological signal | Randomization of samples across batches; inclusion of positive controls. |

| Primer/Lot Variability | Different lots of 16S rRNA gene primers | Variation in amplification efficiency and bias | Standardization of reagent lots; use of standardized mock communities. |

| Bioinformatic Processing | Different sequence processing dates or parameters | Clustering artifacts, OTU inflation/deflation | Use of standardized MOTHUR SOP scripts; version control for code. |

Table 2: Statistical Metrics for Identifying Batch Effects

| Metric | Calculation (Conceptual) | Threshold for Concern | MOTHUR/Associated Tool |

|---|---|---|---|

| PERMANOVA R² (Batch) | Variance explained by 'Batch' factor in distance matrix | R² > 0.05-0.1 with a significant p-value | dist.shared -> amova, or pcoa -> PERMANOVA in R |

| PCoA Visualization | Ordination plot colored by batch | Clear visual clustering by batch, not treatment | pcoa command, visualized in R/PRIMER-e |

| Median Coefficient of Variation (for Controls) | CV of abundances in replicate positive controls | High CV across batches indicates technical noise | Analysis of shared file from mock community samples |
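The control-based metric in the last row can be computed directly from the mock community rows of the shared file. This is a minimal sketch, assuming abundances have already been extracted into one list per OTU (one value per batch); the function name `median_cv` and the counts are hypothetical.

```python
from statistics import mean, median, stdev


def median_cv(abundance_by_otu):
    """Median coefficient of variation (sample SD / mean) across OTUs.

    abundance_by_otu maps an OTU label to its abundances in replicate positive
    controls, one value per batch. OTUs with a zero mean or fewer than two
    replicates are skipped.
    """
    cvs = []
    for values in abundance_by_otu.values():
        if len(values) > 1 and mean(values) > 0:
            cvs.append(stdev(values) / mean(values))
    return median(cvs) if cvs else None


# Hypothetical counts of three mock-community OTUs across three batches.
mock_counts = {
    "Otu001": [100, 110, 90],
    "Otu002": [50, 50, 50],
    "Otu003": [10, 30, 20],
}
print(median_cv(mock_counts))
```

Counts should be rarefied or converted to relative abundances first, otherwise differences in sequencing depth between batches inflate the CV.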

Detailed Experimental Protocols

Protocol 1: Implementing Control Samples in the MOTHUR Workflow

- Sample Design: Include at least three types of controls per processing batch:
  - Negative Extraction Controls: Sterile water or buffer taken through DNA extraction.
  - Positive Control: A standardized mock microbial community (e.g., ZymoBIOMICS) with known composition.
  - Blank PCR Control: Water in place of template DNA during amplification.
- Sequencing & Processing: Sequence controls alongside experimental samples on the same lane. Process all files together through the MOTHUR SOP (e.g., `make.contigs`, `screen.seqs`, `align.seqs`, `filter.seqs`, `unique.seqs`, `pre.cluster`, `chimera.uchime`, `classify.seqs`).
- Analysis: In MOTHUR, generate a `shared` file (`make.shared`). Export the OTU table and taxonomy for controls.
  - For Negative Controls: Identify OTUs present. These are candidate contaminant sequences. Consider using the `decontam` R package (frequency or prevalence method) to statistically identify and remove contaminants from all samples.
  - For Positive Controls: Calculate alpha-diversity (e.g.,